- The paper introduces a residual learning framework that learns differences between physical trajectories to improve the accuracy of neural PDE solvers when training data are limited.

- It uses auxiliary trajectory sampling and interpolation to enrich training inputs and reduce data bias.

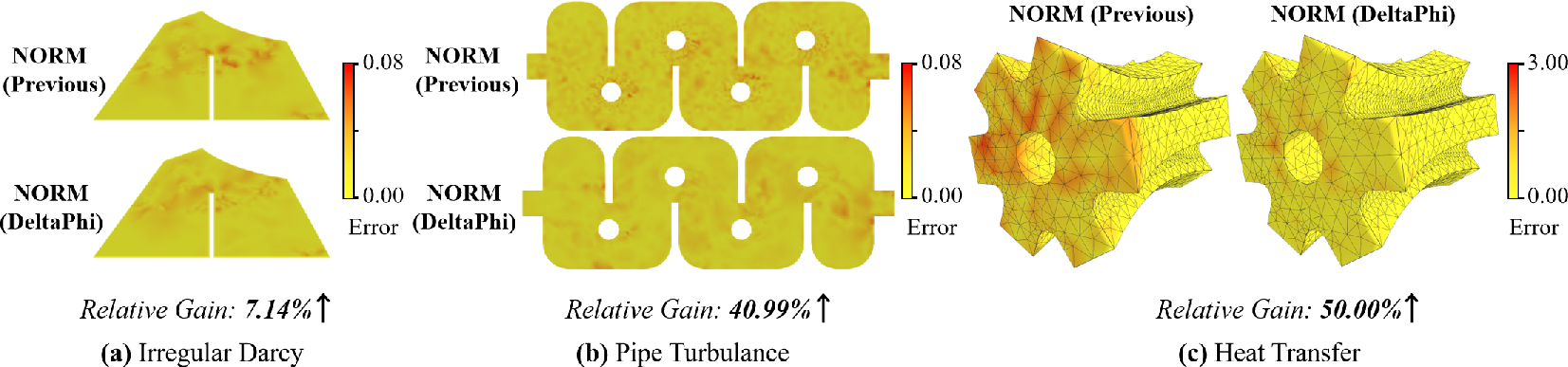

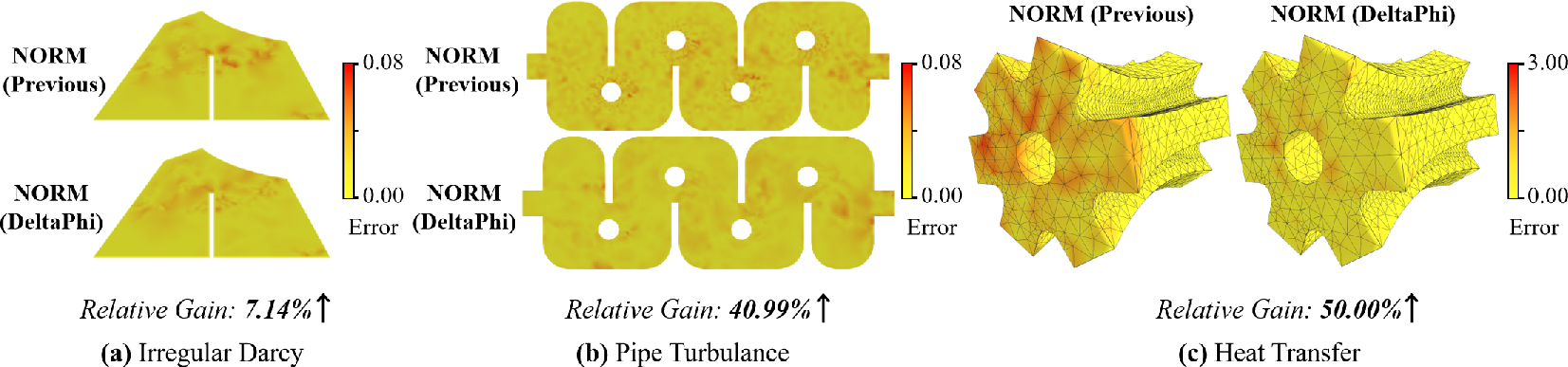

- Experimental results indicate significant error reduction and robust zero-shot resolution generalization across irregular and regular domains.

DeltaPhi: Learning Physical Trajectory Residual for PDE Solving

Introduction

The paper introduces DeltaPhi, a Physical Trajectory Residual Learning method for solving PDEs with neural operators. Although neural operator networks can in theory approximate any operator mapping, in practice they generalize poorly, particularly when training data are limited and low-resolution. DeltaPhi addresses this by replacing direct operator mapping with residual operator mapping: instead of predicting a trajectory outright, it learns the residual between the target trajectory and a similar auxiliary trajectory from the training set. This reduces data bias and increases the diversity of training labels, which in turn improves generalization.

Methodology

Direct Learning vs. Physical Residual Learning

Traditional direct learning of an operator mapping $\mathcal{G}$ involves learning from input-output function fields, $\mathcal{G}: \mathcal{A} \to \mathcal{U}$, where $\mathcal{A}$ and $\mathcal{U}$ are Banach spaces. Physical Trajectory Residual Learning reformulates this by introducing a residual operator mapping $\mathcal{G}^{\Delta}$ that maps pairs of input functions to function residuals:

$$\mathcal{G}^{\Delta}: \mathcal{A}^2 \to \Delta\mathcal{U}$$

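This mapping learns from the differences between trajectories. With $N$ training trajectories there are up to $N(N-1)$ ordered pairs $(i, k)$, each yielding a residual label $u_i - u_k$, so the residual training set is richer and less biased than the $N$ direct labels. At inference, the solution for a query input $a$ is recovered by adding the predicted residual to the known solution of its auxiliary trajectory: $u \approx u_k + \mathcal{G}^{\Delta}(a, a_k)$.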
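To make the reformulation concrete, here is a minimal NumPy sketch (an illustration, not the authors' code) that turns a handful of toy trajectories into residual training pairs and then reconstructs a solution from a residual. The function `build_residual_pairs` is hypothetical, and `np.cumsum` merely stands in for a PDE solver. The sketch enumerates every ordered pair $i \ne k$; a practical training loop would more likely sample one auxiliary trajectory per sample.

```python
import numpy as np

def build_residual_pairs(a, u):
    """Pair every trajectory i with every other trajectory k (i != k).

    a, u: arrays of shape (N, n_points) holding input functions and solutions.
    Returns stacked inputs (a_i, a_k), residual labels u_i - u_k, and the indices.
    """
    n = a.shape[0]
    pairs = np.array([(i, k) for i in range(n) for k in range(n) if i != k])
    idx_i, idx_k = pairs[:, 0], pairs[:, 1]
    inputs = np.stack([a[idx_i], a[idx_k]], axis=1)  # (N*(N-1), 2, n_points)
    labels = u[idx_i] - u[idx_k]                     # (N*(N-1), n_points)
    return inputs, labels, idx_i, idx_k

# Toy data: 4 trajectories on an 8-point grid; cumsum is a stand-in "solver".
rng = np.random.default_rng(0)
a = rng.normal(size=(4, 8))
u = np.cumsum(a, axis=1)
inputs, labels, idx_i, idx_k = build_residual_pairs(a, u)
print(inputs.shape, labels.shape)  # (12, 2, 8) (12, 8)

# Inference-style reconstruction: auxiliary solution + residual gives u_i back.
# The exact label plays the role of a perfect network prediction here.
recovered = u[idx_k] + labels
```

With $N = 4$, the four direct labels become twelve residual labels, which is the label-diversity argument in miniature.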

Auxiliary Trajectory Sampling and Integration

DeltaPhi uses auxiliary trajectory sampling to select similar trajectories from the training set, which are then integrated with inputs during training and inference. This involves:

- Retrieving auxiliary trajectories using similarity functions (e.g., cosine similarity).

- Interpolating auxiliary trajectories to match input resolution when needed.

- Feeding additional auxiliary information, such as past states or similarity scores, to the network as extra inputs, which can reduce the complexity of the mapping to be learned.

Together, these steps are designed to let the residual learning process make full use of the diversity in the available data.
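The retrieval step can be sketched as a cosine-similarity lookup over the training inputs. This is a minimal sketch under stated assumptions: the function name `retrieve_auxiliary`, the `top_k` parameter, and the choice to exclude the query's own index during training are illustrative, and the paper's sampling strategy may differ (for example, by sampling randomly among the top candidates). The returned scores are the kind of similarity values that could be passed to the network as an extra input.

```python
import numpy as np

def retrieve_auxiliary(query, bank, exclude=None, top_k=1):
    """Return indices and scores of the top_k most cosine-similar bank entries.

    query: (n_points,) input function; bank: (N, n_points) training inputs.
    exclude: optional index to skip (e.g. the query's own index during training).
    """
    q = query / np.linalg.norm(query)
    b = bank / np.linalg.norm(bank, axis=1, keepdims=True)
    scores = b @ q                      # cosine similarity to every bank entry
    if exclude is not None:
        scores[exclude] = -np.inf       # never pair a sample with itself
    order = np.argsort(-scores)[:top_k]
    return order, scores[order]

# Toy bank of three input functions sampled at three points.
bank = np.array([[1.0, 0.0, 0.0],
                 [0.9, 0.1, 0.0],
                 [0.0, 1.0, 0.0]])
idx, sim = retrieve_auxiliary(bank[0], bank, exclude=0)
print(idx)  # [1]
```

Cosine similarity ignores overall amplitude, which may or may not be desirable for a given PDE; other similarity functions can be dropped in without changing the surrounding pipeline.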

Residual Operator Optimization

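The parameterized residual surrogate model $\mathcal{G}^{\Delta}_{\theta}$ is optimized to minimize the discrepancy between predicted and true function residuals using the loss

$$\ell = \sum_{i=1}^{N} \mathcal{L}\big(\mathcal{G}^{\Delta}_{\theta}(a_i, a_{k_i}),\; u_i - u_{k_i}\big),$$

where $k_i$ is the index of the auxiliary trajectory sampled for the $i$-th training sample and $\mathcal{L}$ is the per-sample loss.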
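The loss can be written directly in NumPy. This is a minimal sketch that assumes $\mathcal{L}$ is a relative $L^2$ error, a common choice in operator learning but an assumption here; the helper names and the fixed auxiliary assignment `k` are hypothetical. The model is any callable taking batches $(a_i, a_{k_i})$ and returning predicted residuals.

```python
import numpy as np

def relative_l2(pred, target, eps=1e-12):
    """Per-sample relative L2 error (assumed choice for the loss L)."""
    num = np.linalg.norm(pred - target, axis=-1)
    den = np.linalg.norm(target, axis=-1) + eps
    return num / den

def residual_loss(model, a, u, k):
    """ell = sum_i L(G(a_i, a_{k_i}), u_i - u_{k_i}).

    a, u: (N, n_points) inputs and solutions; k: (N,) auxiliary index per sample.
    """
    pred = model(a, a[k])
    target = u - u[k]
    return relative_l2(pred, target).sum()

# Toy linear "PDE" u = 2a, so the exact residual operator is 2 * (a_i - a_k).
rng = np.random.default_rng(1)
a = rng.normal(size=(5, 16))
u = 2.0 * a
k = np.array([1, 2, 3, 4, 0])  # hypothetical assignment with k_i != i

def exact_model(ai, ak):
    return 2.0 * (ai - ak)

def zero_model(ai, ak):
    return np.zeros_like(ai)

loss_exact = residual_loss(exact_model, a, u, k)
loss_zero = residual_loss(zero_model, a, u, k)
```

A perfect residual model drives the loss to zero, while predicting no residual at all costs a relative error of about 1 per sample.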

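As with residual connections in deep networks, learning a residual rather than the full mapping is intended to ease gradient-based optimization. Because the method changes only the network inputs and the regression target, DeltaPhi can be trained on top of existing neural operator architectures without modifying them, keeping the training setup consistent across different operator networks.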
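The backbone-agnostic point can be illustrated with a thin wrapper around an arbitrary operator network. In this hypothetical sketch, `ResidualOperator` and the channel layout (query input $a_i$, auxiliary input $a_k$, and auxiliary solution $u_k$ stacked as channels) are assumptions for illustration, not the paper's exact input design; `toy_backbone` stands in for a trained network.

```python
import numpy as np
from typing import Callable

# Any operator network mapping (B, C, n_points) -> (B, n_points).
Backbone = Callable[[np.ndarray], np.ndarray]

class ResidualOperator:
    """Wrap an arbitrary backbone so that it predicts u_i - u_k."""

    def __init__(self, backbone: Backbone):
        self.backbone = backbone

    def residual(self, a_i, a_k, u_k):
        x = np.stack([a_i, a_k, u_k], axis=1)  # (B, 3, n_points) channel stack
        return self.backbone(x)

    def predict(self, a_i, a_k, u_k):
        # Final prediction = known auxiliary solution + learned residual.
        return u_k + self.residual(a_i, a_k, u_k)

def toy_backbone(x):
    # Stand-in for a trained network; exact for the toy operator u = 2a.
    return 2.0 * (x[:, 0] - x[:, 1])

rng = np.random.default_rng(2)
a_i = rng.normal(size=(3, 10))
a_k = rng.normal(size=(3, 10))
u_k = 2.0 * a_k
op = ResidualOperator(toy_backbone)
u_pred = op.predict(a_i, a_k, u_k)
```

Swapping in a different backbone only requires that it accept the stacked channels, which is what lets the same training procedure carry across architectures.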

Experimental Results

Irregular and Regular Domain Evaluations

The method shows significant improvements over existing approaches on a range of PDE problems, including irregular-domain problems (e.g., Pipe Turbulence and Blood Flow) and regular-domain problems (e.g., Darcy Flow and Navier-Stokes). With limited training data, DeltaPhi achieves higher predictive accuracy, and the accompanying figures show notable reductions in prediction error across models and domains.

Resolution Generalization

DeltaPhi performs better than traditional operator learning frameworks on zero-shot resolution generalization, where a model trained at one resolution is evaluated at other, unseen resolutions (Figure 1 shows this across different resolutions). By maintaining high accuracy when trained on low-resolution data and tested at higher resolutions, DeltaPhi addresses a key limitation of existing neural operator methods.
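One ingredient that supports resolution transfer in this setup is interpolating stored auxiliary trajectories onto the query's grid. The sketch below assumes a 1-D uniform grid on $[0, 1]$ and linear interpolation via `np.interp`; the helper `upsample_1d` is hypothetical, and the paper's interpolation scheme, especially on irregular meshes, may differ.

```python
import numpy as np

def upsample_1d(values, n_target):
    """Linearly interpolate samples on a uniform [0, 1] grid to n_target points."""
    x_src = np.linspace(0.0, 1.0, values.shape[-1])
    x_tgt = np.linspace(0.0, 1.0, n_target)
    return np.interp(x_tgt, x_src, values)

# Auxiliary solution stored at the (low) training resolution.
x_lo = np.linspace(0.0, 1.0, 16)
u_aux_lo = np.sin(np.pi * x_lo)

# Query arrives at a higher, unseen resolution (zero-shot).
x_hi = np.linspace(0.0, 1.0, 64)
u_aux_hi = upsample_1d(u_aux_lo, 64)
print(u_aux_hi.shape)  # (64,)

# The interpolated auxiliary solution stays close to the true high-res field.
max_err = np.max(np.abs(u_aux_hi - np.sin(np.pi * x_hi)))
```

Once the auxiliary solution lives on the query grid, the residual network only has to predict the difference at that resolution rather than the full field.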

Figure 2: Prediction error visualization on irregular domain problems.

Statistical Robustness

Statistical analysis on the test sets further supports the method's robustness: the residual formulation mitigates training bias and increases training label diversity, as reflected in the distributions of the residual labels. These results reinforce DeltaPhi's ability to generalize from limited and biased data.

Conclusion

DeltaPhi introduces a residual learning framework for PDE solving that improves generalization by exploiting auxiliary trajectories. Future work may apply it to chaotic systems and combine it with more complex model architectures to handle the resulting increase in learning complexity. Such extensions would broaden DeltaPhi's practical use across diverse scientific settings.