- The paper introduces innovative generative AI workflows that streamline 2D character animation and reduce manual labor in educational cartoon production.

- It fine-tunes diffusion models with DreamBooth and conditions them with ControlNet to reduce inconsistencies in character proportions and line weights.

- The research achieves significant efficiency gains by completing approximately 50 shots in eight weeks, suggesting a paradigm shift in digital animation production.

Generative AI for 2D Character Animation

Applying generative AI to 2D character animation brings technology and artistry together. This research introduces several workflows designed to make educational cartoons more efficient to produce. Educational media has traditionally had far less high-quality animated content than entertainment media. Using generative AI tools, the researchers aim to reduce manual animation effort while maintaining high aesthetic standards.

Animation Workflows and Fine-Tuning

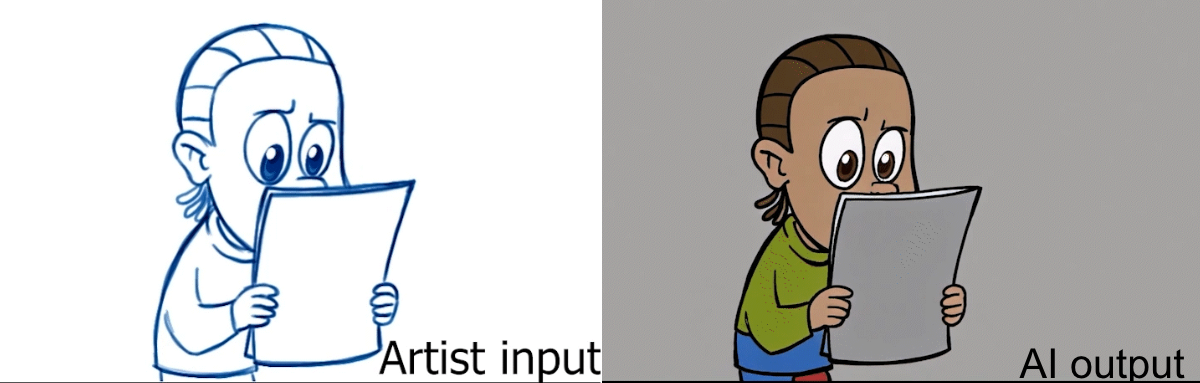

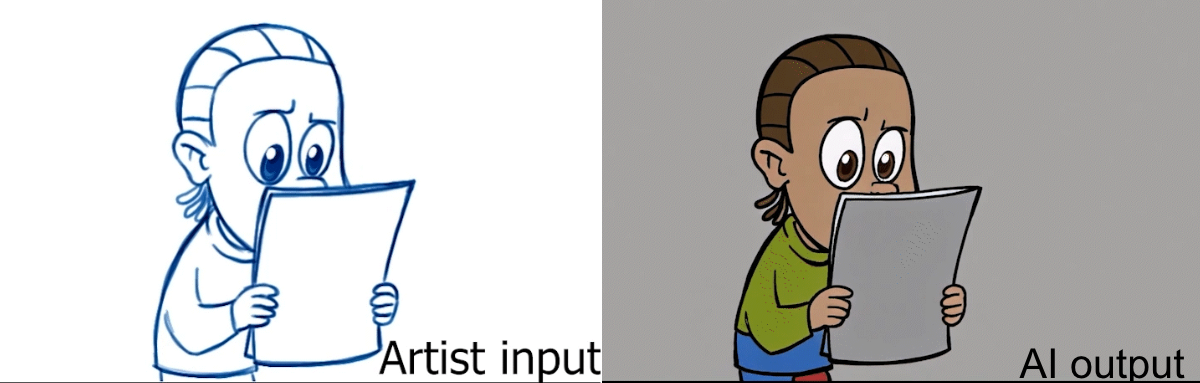

Previous research focused on automating story simulation and visual design. This work instead concentrates on accelerating character animation itself. The authors used DreamBooth to fine-tune diffusion models on specific character styles. The fine-tuned models still produced inconsistent proportions and line weights, particularly for non-human characters. The authors mitigated these inconsistencies by conditioning generation on artist drawings and by using ControlNet, especially its depth conditioning, to align the output with the desired poses.
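For reference, the original DreamBooth paper fine-tunes a text-to-image diffusion model on a handful of subject images paired with a prompt containing a rare identifier token. It optionally adds a class-specific prior-preservation term so the model does not forget what the broader class looks like. The summary does not say whether the authors used the prior term or how they weighted it. The objective below is the published form, not a confirmed detail of this project:

$$
\mathbb{E}_{\mathbf{x},\mathbf{c},\boldsymbol{\epsilon},\boldsymbol{\epsilon}',t}\Big[\, w_t \big\lVert \hat{\mathbf{x}}_\theta(\alpha_t \mathbf{x} + \sigma_t \boldsymbol{\epsilon}, \mathbf{c}) - \mathbf{x} \big\rVert_2^2 + \lambda\, w_{t'} \big\lVert \hat{\mathbf{x}}_\theta(\alpha_{t'} \mathbf{x}_{\mathrm{pr}} + \sigma_{t'} \boldsymbol{\epsilon}', \mathbf{c}_{\mathrm{pr}}) - \mathbf{x}_{\mathrm{pr}} \big\rVert_2^2 \,\Big]
$$

The symbols in this objective are defined as follows:

- $\mathbf{x}$ is a reference drawing of the character.
- $\mathbf{c}$ is the conditioning vector for a prompt containing the identifier token, for example a hypothetical "a [V] character".
- $\hat{\mathbf{x}}_\theta$ is the denoiser's reconstruction.
- $\alpha_t$, $\sigma_t$, and $w_t$ are the noise-schedule coefficients and loss weight at timestep $t$.
- $\mathbf{x}_{\mathrm{pr}}$ is an image sampled from the frozen base model with the class prompt $\mathbf{c}_{\mathrm{pr}}$.
- $\lambda$ weights the prior-preservation term.

Dropping the second term leaves ordinary fine-tuning on the character images.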

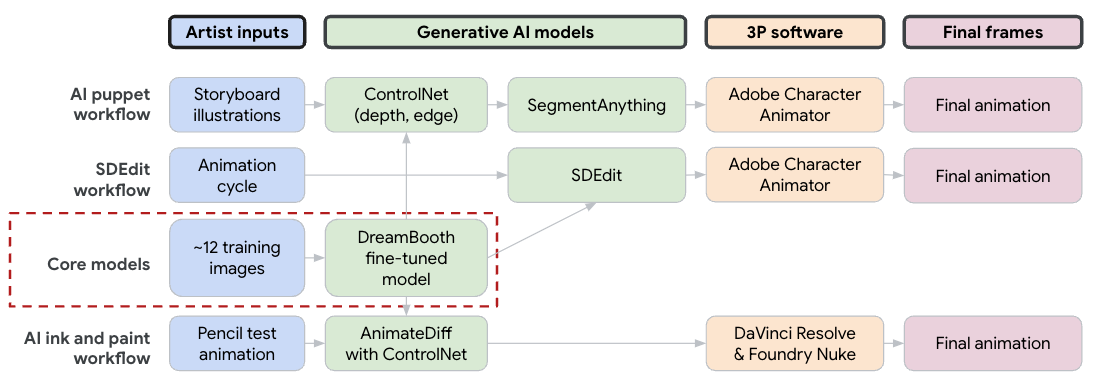

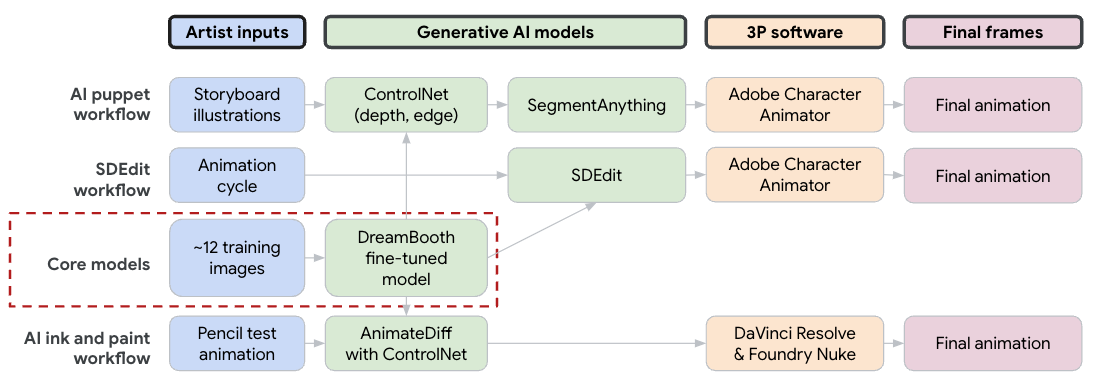

Figure 1: Schematic diagram showing three of our generative AI animation workflows.

A range of workflows was employed, with notable emphasis on three specific strategies:

- AI Puppets: This approach uses ControlNet to generate an initial hero frame from a sketch, which is then segmented and animated using Adobe Character Animator for pose and lip-sync animations.

- AI Ink and Paint: This process takes a pencil sketch for each frame and uses AnimateDiff with ControlNet to produce consistent "ink and paint" output. Additional color-correction and noise-reduction steps were needed to ensure line fidelity.

- SDEdit for FIREFLY Character: This workflow fed frames from a smooth animation cycle into SDXL models via SDEdit. The resulting randomness and variability accentuate the character's erratic persona.
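SDEdit partially noises an input image to an intermediate diffusion timestep and then runs the reverse (denoising) process from that point. The output therefore keeps the input's coarse structure while the model re-synthesizes the detail. The sketch below shows only the noising half, in the discrete DDPM formulation, assuming a linear beta schedule from 1e-4 to 0.02 over 1,000 steps (the original DDPM values). The schedule, the `strength` parameter, and the function names are illustrative assumptions, not the authors' SDXL settings. Image-to-image pipelines such as those in diffusers expose a comparable `strength` argument. The denoising half would be carried out by the diffusion model and is not shown.

```python
import math
import random


def linear_alpha_bars(num_steps=1000, beta_start=1e-4, beta_end=0.02):
    """Cumulative products alpha_bar_t for a DDPM-style linear beta schedule (assumed)."""
    alpha_bars = []
    prod = 1.0
    for i in range(num_steps):
        beta = beta_start + (beta_end - beta_start) * i / (num_steps - 1)
        prod *= 1.0 - beta
        alpha_bars.append(prod)
    return alpha_bars


def sdedit_noise(frame, strength, alpha_bars, rng=None):
    """Perturb a frame to the SDEdit starting point x_{t0}.

    frame: flat list of pixel values scaled to [-1, 1].
    strength: fraction of the schedule to jump into (0 keeps the frame,
    1 is almost pure noise). Returns (noised_frame, t0).
    """
    if not 0.0 <= strength <= 1.0:
        raise ValueError("strength must be in [0, 1]")
    t0 = int(round(strength * len(alpha_bars)))
    if t0 == 0:
        return list(frame), 0
    a = alpha_bars[t0 - 1]
    if rng is None:
        rng = random.Random(0)
    # Closed-form forward process: x_t = sqrt(a) * x_0 + sqrt(1 - a) * eps
    noised = [math.sqrt(a) * x + math.sqrt(1.0 - a) * rng.gauss(0.0, 1.0) for x in frame]
    return noised, t0
```

To apply this to a cycle, one would call `sdedit_noise` on each frame with a different seed, for example `random.Random(frame_index)`, before denoising. Fresh noise per frame makes the re-synthesized frames differ slightly from one another. This is one plausible source of the jitter the FIREFLY workflow exploits. Higher `strength` values trade fidelity to the smooth input cycle for more variation.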

Practical and Theoretical Implications

From a practical standpoint, the paper reports substantial efficiency gains. The team completed approximately 50 shots over eight weeks with minimal animator resources, roughly six shots per week. The diffusion models posed initial challenges but showed potential far exceeding expectations. They also reduced the manual work needed for tedious processes such as ink and paint.

Theoretically, the research implies a potential paradigm shift in animation production, where generative AI can shoulder more of the repetitive tasks, freeing artists to focus on creative aspects. The integration of such workflows might spur further advancements and refinements in AI models to better cater to stylistic and consistency demands inherent in animation.

Figure 2: A sample frame of input and output for the character LUTHER from our AI ink and paint workflow.
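The ink-and-paint workflow needed color-correction and noise-reduction passes after AnimateDiff, but the summary does not describe how those passes worked. As one hypothetical illustration, flat cel colors can be enforced in two steps. First, snap every pixel to the nearest color in a character's model-sheet palette. Second, relabel isolated speckle pixels. The palette, the function names, and the 8-neighbour speckle rule below are assumptions for illustration, not the authors' method.

```python
from collections import Counter
from typing import List, Tuple

# Hypothetical post-processing for AI ink-and-paint frames (not the authors' code).
Color = Tuple[int, int, int]


def snap_to_palette(image: List[List[Color]], palette: List[Color]) -> List[List[int]]:
    """Label each RGB pixel with the index of its nearest palette color
    (squared Euclidean distance in RGB)."""
    def nearest(px: Color) -> int:
        return min(
            range(len(palette)),
            key=lambda i: sum((a - b) ** 2 for a, b in zip(px, palette[i])),
        )
    return [[nearest(px) for px in row] for row in image]


def remove_speckles(labels: List[List[int]]) -> List[List[int]]:
    """Relabel isolated pixels: a pixel whose 8 neighbours all carry a different
    label takes the most common neighbour label. Connected strokes, even
    1 px wide, keep their label, so thin ink lines survive."""
    h, w = len(labels), len(labels[0])
    out = [row[:] for row in labels]
    for y in range(h):
        for x in range(w):
            neighbours = [
                labels[yy][xx]
                for yy in range(max(0, y - 1), min(h, y + 2))
                for xx in range(max(0, x - 1), min(w, x + 2))
                if (yy, xx) != (y, x)
            ]
            # Reads from the original labels, so all pixels are updated simultaneously.
            if neighbours and labels[y][x] not in neighbours:
                out[y][x] = Counter(neighbours).most_common(1)[0][0]
    return out


def labels_to_rgb(labels: List[List[int]], palette: List[Color]) -> List[List[Color]]:
    """Turn palette indices back into RGB pixels."""
    return [[palette[i] for i in row] for row in labels]
```

A per-frame pass would chain `snap_to_palette`, `remove_speckles`, and `labels_to_rgb`. Hard snapping suits flat-colored styles. It would, however, strip gradients and antialiased line edges, so a production pass might need a softer rule.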

Future Developments in AI Animation

The authors anticipate future improvements by employing base models that are pre-trained or fine-tuned with a larger corpus of cartoon and animation samples. The development of more sophisticated conditioning strategies, which can better manage vital elements like character proportions, line weight, and color adherence, is expected to enhance the fidelity and efficiency of AI-generated animations.

The work also points to a broader application scope beyond educational media. As the technology matures, its principles could find applicability in various genres of animation, potentially democratizing content production and fostering new creative possibilities.

Conclusion

The research presents a comprehensive blueprint for employing generative AI in 2D character animation, highlighting both its efficiencies and challenges. While substantial manual intervention remains necessary to achieve the desired quality, the methodologies outlined suggest a promising avenue for animation workflows. Subsequent advancements in model training and conditioning methods are poised to further refine these processes, heralding a gradual transformation in the domain of digital animation.