Conversational Language Models for Human-in-the-Loop Multi-Robot Coordination

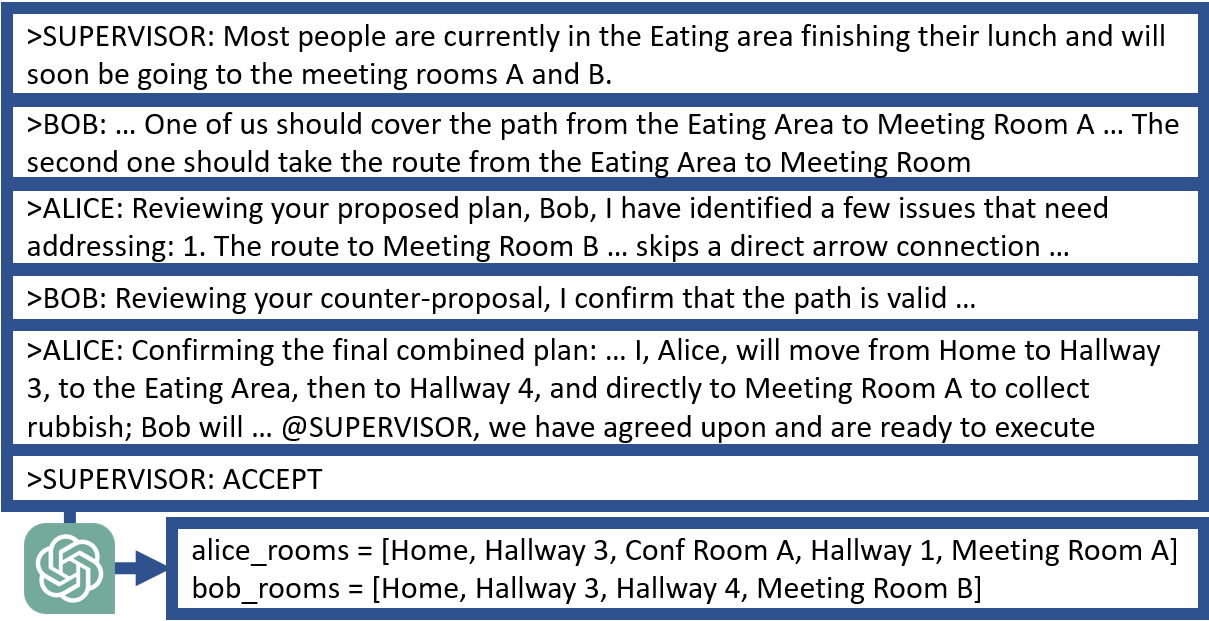

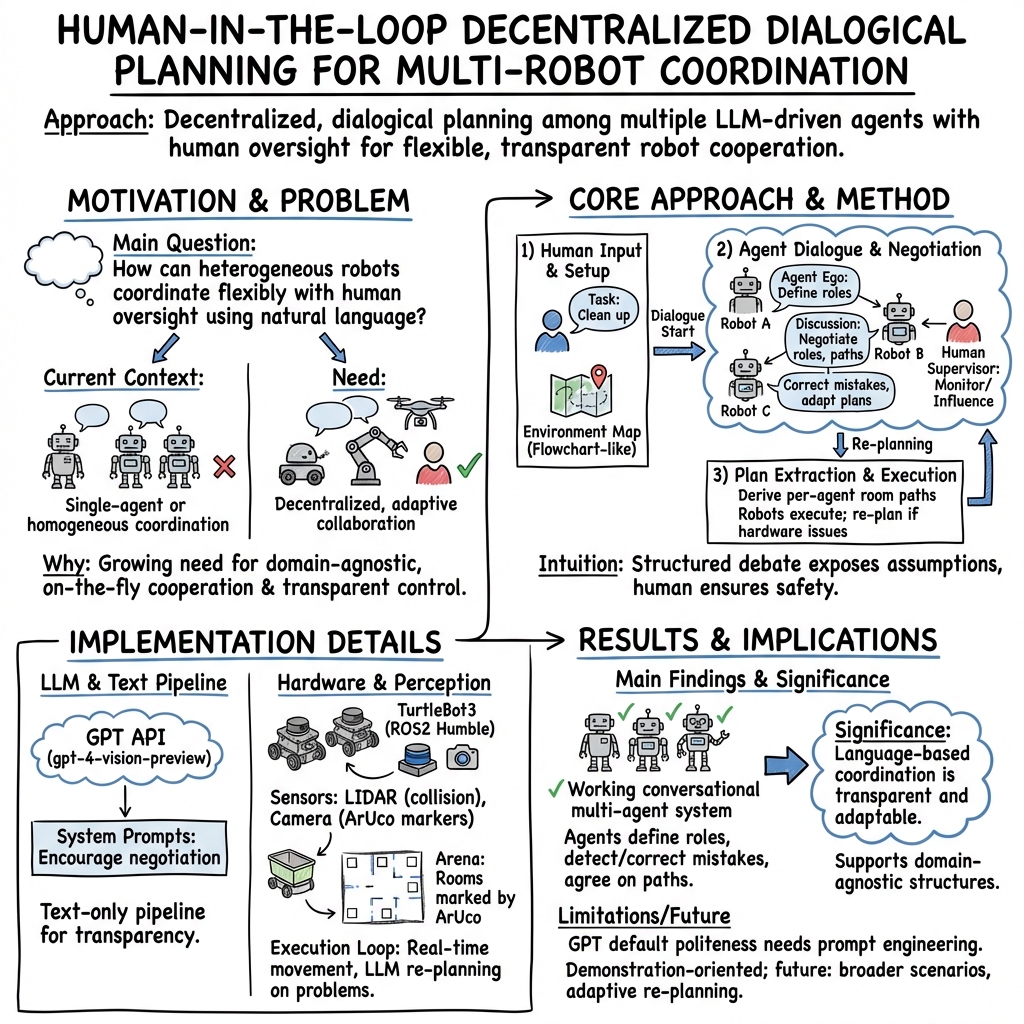

Abstract: With the increasing prevalence and diversity of robots interacting in the real world, there is a need for flexible, on-the-fly planning and cooperation. Large language models (LLMs) are starting to be explored in multimodal setups for communication, coordination, and planning in robotics. Existing approaches generally rely on a single agent that builds the plan, or on multiple homogeneous agents that coordinate on a simple task. We present a decentralised, dialogical approach in which a team of agents with different abilities plans solutions through peer-to-peer and human-robot discussion. We suggest that argument-style dialogues are an effective way to facilitate adaptive use of each agent's abilities within a cooperative team. Two robots discuss how to solve a cleaning problem set by a human, define roles, and agree on the paths each will take. A human advisor can interrupt at any step, and the agents check their plans with the human. The agents then execute the plan in the real world, collecting rubbish from people in each room. Our implementation uses text at every step, which keeps the process transparent and supports effective human-multi-robot interaction.
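The discussion loop described in the abstract can be illustrated with a minimal sketch. This is not the authors' implementation: the LLM turn is replaced by a simple rule-based stand-in, and every name here (`Agent`, `discuss`, the subtask labels, the ability labels, and the convention that any human reply counts as an objection) is a hypothetical chosen for illustration. It only shows the shape of the idea: agents with different abilities take turns in a text transcript, a human can interrupt after any turn, and roles are fixed once every subtask has an accepted owner.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Agent:
    name: str
    abilities: frozenset  # hypothetical ability labels, e.g. "grasp"

    def speak(self, unassigned):
        """Stand-in for an LLM turn: volunteer for the first open subtask
        whose required ability this agent has."""
        for subtask, needed in unassigned.items():
            if needed in self.abilities:
                return subtask, f"{self.name}: I can take '{subtask}' because I can {needed}."
        return None, f"{self.name}: I have nothing to add."


def discuss(agents, subtasks, human: Callable[[str], Optional[str]], max_rounds=5):
    """Round-robin discussion kept entirely as text.

    `subtasks` maps a subtask name to the ability it requires.
    `human` sees each message and returns None (no interruption) or a reply;
    in this sketch any reply is treated as an objection to the proposal.
    """
    transcript = []
    roles = {}
    unassigned = dict(subtasks)
    for _ in range(max_rounds):
        for agent in agents:
            if not unassigned:
                return roles, transcript
            subtask, message = agent.speak(unassigned)
            transcript.append(message)
            reply = human(message)  # the human may interrupt after any turn
            if reply is not None:
                transcript.append(f"Human: {reply}")
                continue  # proposal dropped; the subtask stays open
            if subtask is not None:
                roles[subtask] = agent.name
                del unassigned[subtask]
    return roles, transcript


# Hypothetical two-robot team and cleaning task.
arm_bot = Agent("ArmBot", frozenset({"grasp"}))
rover = Agent("Rover", frozenset({"navigate"}))
task = {"collect rubbish": "grasp", "visit rooms": "navigate"}

roles, transcript = discuss([arm_bot, rover], task, human=lambda message: None)
print("\n".join(transcript))
print(roles)
```

Because the whole exchange is stored as a list of strings, the transcript can be shown to the human as it grows, which is the transparency property the abstract emphasises. A real system would replace `Agent.speak` with a language model call and would need a richer way to interpret human replies than the accept-or-object rule assumed here.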
