- The paper shows that debate protocols enable non-expert judges to achieve up to 88% accuracy in discerning truthful answers.

- It compares the Debate, Interactive Debate, and Consultancy protocols, finding that optimizing debaters for persuasiveness improves judges' ability to identify the truth.

- The study highlights that adversarial debate among LLMs offers a scalable method for self-regulating and aligning AI responses.

Assessing the Efficacy of Debate in Aligning LLMs for Truthful Responses

Introduction

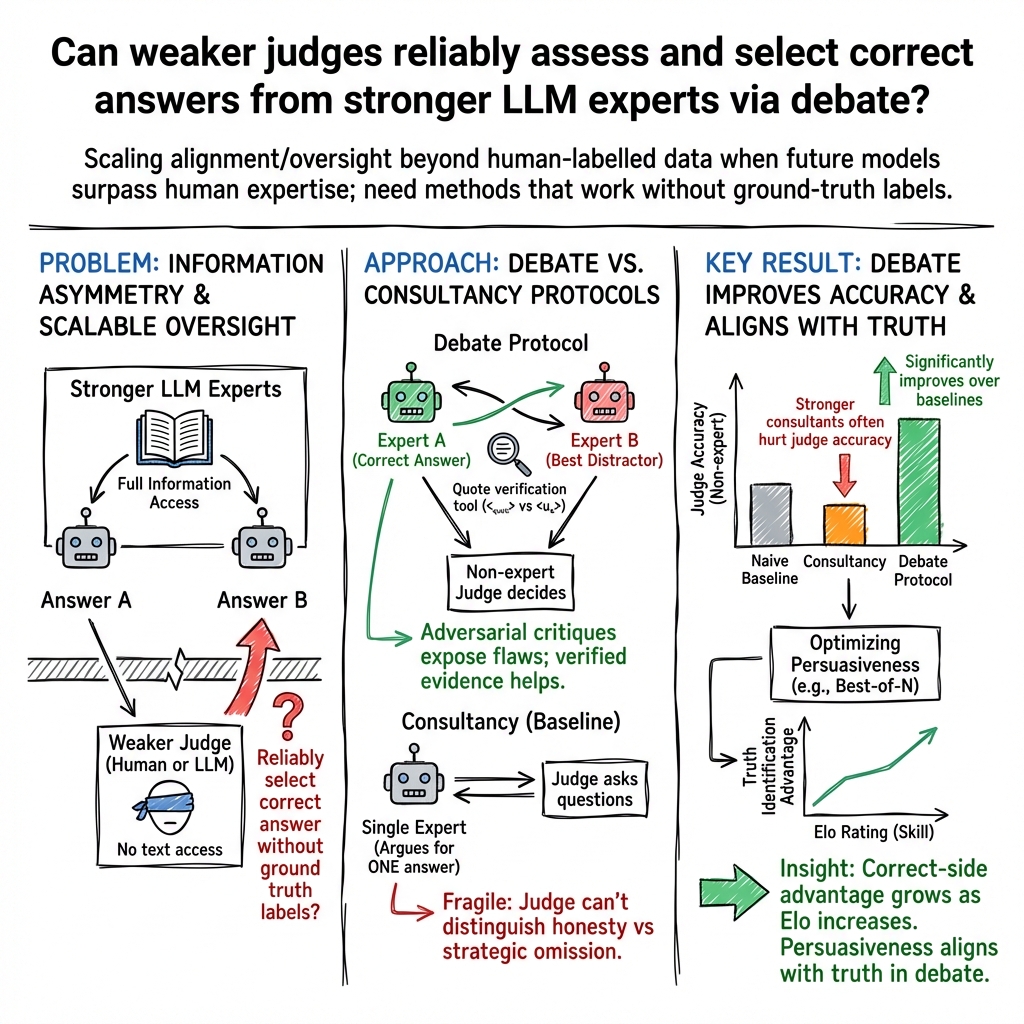

Aligning LLMs with human-desired outcomes presents significant challenges, especially in contexts that lack ground truth. This paper evaluates debate between LLMs as a method for eliciting truthful answers from them. The central question is whether non-expert judges can accurately assess the veracity of answers provided by expert models (debaters) in an information-asymmetric setting. The findings, drawn from experiments involving both human and LLM judges on the QuALITY comprehension task, suggest that debate significantly enhances the ability of non-expert judges to discern truthful responses, outperforming the single-model consultancy baseline.

Methodological Overview

The paper operationalizes debate through three distinct protocols: Debate, Interactive Debate, and Consultancy (serving as a baseline). In Debate and Interactive Debate, two expert models with access to the underlying text argue over the correct answer to a question from the QuALITY dataset, while a judge who cannot see the text assesses the veracity of the arguments presented. The key difference in Interactive Debate is that the judge can ask the debaters for clarification. Consultancy, by contrast, involves a single expert model presenting arguments to persuade the judge. To evaluate the protocols, the paper devises metrics for debater strength and judge accuracy, applied in an unsupervised setting without ground truth labels.
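The control flow of the three protocols can be sketched in a few lines. This is a minimal illustration, not the paper's implementation: the function names, the callable signatures, the round count, and the toy debater and judge are all hypothetical and stand in for LLM or human participants.

```python
from typing import Callable, List, Optional

# Hypothetical interfaces, for illustration only.
# A debater sees the story; the judge and its interjections never do.
Debater = Callable[[str, str, str, List[str]], str]  # (story, question, answer, transcript) -> argument
Judge = Callable[[str, List[str], List[str]], int]   # (question, answers, transcript) -> chosen index
Interjection = Callable[[str, List[str]], str]       # (question, transcript) -> clarifying question


def run_debate(story: str, question: str, answers: List[str],
               debaters: List[Debater], judge: Judge, rounds: int = 3,
               interject: Optional[Interjection] = None) -> int:
    """Debate: each debater defends one answer. Passing `interject`
    turns this into Interactive Debate, where the judge may ask questions."""
    transcript: List[str] = []
    for _ in range(rounds):
        for answer, debater in zip(answers, debaters):
            transcript.append(f"[{answer}] " + debater(story, question, answer, transcript))
        if interject is not None:
            transcript.append("[judge] " + interject(question, transcript))
    return judge(question, answers, transcript)


def run_consultancy(story: str, question: str, answers: List[str], assigned: int,
                    consultant: Debater, judge: Judge, rounds: int = 3,
                    interject: Optional[Interjection] = None) -> int:
    """Consultancy baseline: a single expert argues for its assigned answer."""
    transcript: List[str] = []
    for _ in range(rounds):
        answer = answers[assigned]
        transcript.append(f"[{answer}] " + consultant(story, question, answer, transcript))
        if interject is not None:
            transcript.append("[judge] " + interject(question, transcript))
    return judge(question, answers, transcript)


# Toy stand-ins so the sketch runs end to end.
def quoting_debater(story, question, answer, transcript):
    # Quotes the story only when the story mentions the assigned answer.
    return f'quote: "{story}"' if answer in story else "no quote available"


def quote_counting_judge(question, answers, transcript):
    # Picks the answer whose side produced the most quotes (ties go to the first).
    scores = [sum(1 for t in transcript if t.startswith(f"[{a}]") and "quote:" in t)
              for a in answers]
    return scores.index(max(scores))


if __name__ == "__main__":
    story = "The ship landed on Mars."
    answers = ["Mars", "Venus"]
    choice = run_debate(story, "Where did the ship land?", answers,
                        [quoting_debater, quoting_debater], quote_counting_judge)
    print("Judge chose:", answers[choice])
```

The asymmetry is encoded in the signatures: only debaters receive `story`, so the judge must decide from the transcript alone.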

Key Findings

- Efficacy of Debate Protocols: Across both human and LLM judges, debate protocols consistently outperformed the consultancy baseline, with human judges achieving 88% accuracy and LLM judges 76%. Notably, optimizing debaters for persuasiveness enhanced the ability of judges to identify truthful responses.

- Debater Persuasiveness and Truthfulness: The research revealed that debaters arguing for the correct answer have a natural persuasive advantage, which grows as debaters become more persuasive. This supports the hypothesis that telling the truth is inherently easier and more convincing than fabricating responses, in line with the foundational assumptions behind the debate methodology.

- Judge Calibration and Error Analysis: Human judges displayed improved calibration and lower error rates under debate protocols. Errors predominantly stemmed from inadequate arguments by correct debaters or the failure of judges to elicit crucial information in consultancy protocols, highlighting an area for further improvement.
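Judge accuracy and calibration of the kind discussed above can be computed from per-question judge verdicts. The following is a minimal sketch under stated assumptions: each record is the probability the judge assigned to the correct answer, the Brier score is used as one common calibration-sensitive measure (not necessarily the measure used in the paper), and the sample values are made up for illustration rather than taken from the study.

```python
from typing import List


def judge_accuracy(p_correct: List[float]) -> float:
    """Fraction of questions where the judge favoured the correct answer
    (assigned it a probability above 0.5)."""
    return sum(1 for p in p_correct if p > 0.5) / len(p_correct)


def brier_score(p_correct: List[float]) -> float:
    """Mean squared gap between the judge's confidence in the correct
    answer and 1.0. Lower is better; 0.25 matches always saying 50/50."""
    return sum((1.0 - p) ** 2 for p in p_correct) / len(p_correct)


if __name__ == "__main__":
    # Hypothetical judge confidences in the correct answer, one per question.
    confidences = [0.9, 0.8, 0.3, 0.6]
    print("accuracy:", judge_accuracy(confidences))
    print("brier:", brier_score(confidences))
```

Reporting both numbers separates two failure modes: a judge can pick the right answer often while still being over- or under-confident.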

Theoretical and Practical Implications

From a theoretical standpoint, this work supports the principle that it is easier to critique or evaluate information than to generate it, especially in contexts where ground truth is absent. Practically, the findings argue for integrating debate methodologies into the oversight of increasingly sophisticated LLMs. Such approaches hold potential for scalable oversight, enabling models to self-regulate through adversarial critique and debate, ostensibly without the direct intervention of human expertise.

Future Directions

The paper suggests avenues for future research, particularly in enhancing debater performance and exploring the applicability of debate protocols across varied settings beyond reading comprehension tasks. The development of more advanced debater models and refinements in judge assessment strategies represents a promising frontier for realizing scalable, self-improving AI systems capable of aligning with human values.

The investigation into debate as a means of aligning LLMs with truthful outputs points to a promising pathway towards scalable, autonomous oversight of AI systems. By harnessing the adversarial nature of debate, it is possible to raise the accuracy of non-expert judges in identifying truthful responses, even in the absence of explicit truth labels. This research underscores the potential of debate as an effective tool in the continued advancement and ethical deployment of AI technologies.