Propagation and Pitfalls: Reasoning-based Assessment of Knowledge Editing through Counterfactual Tasks

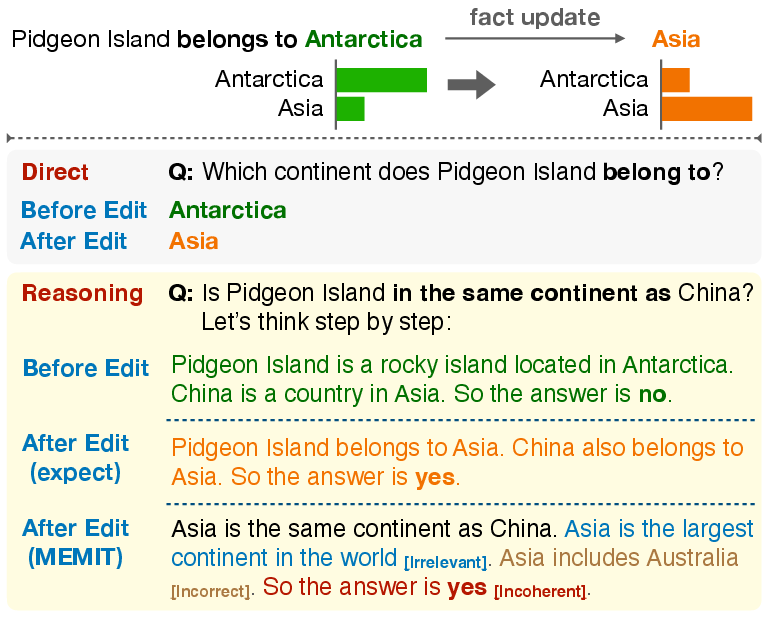

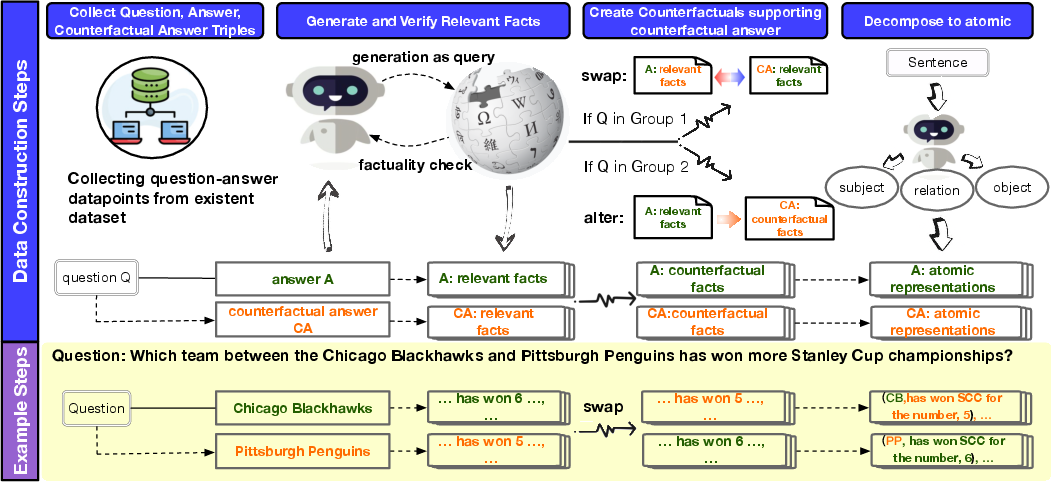

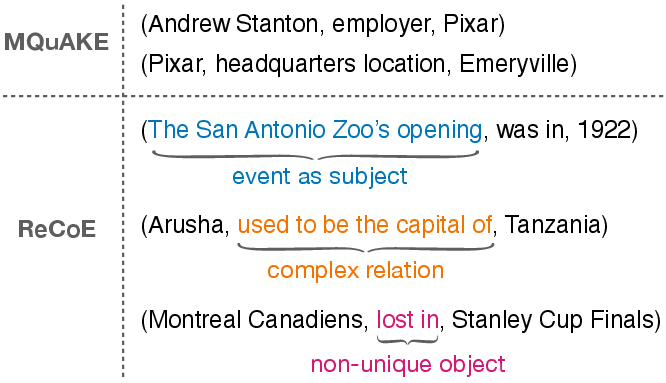

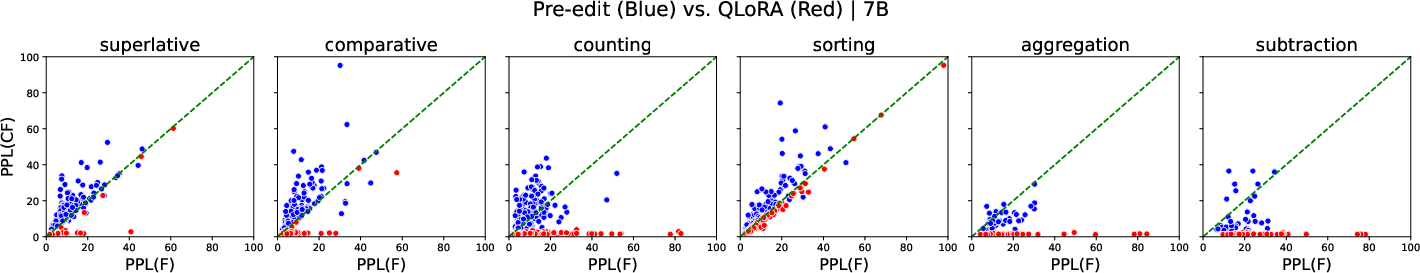

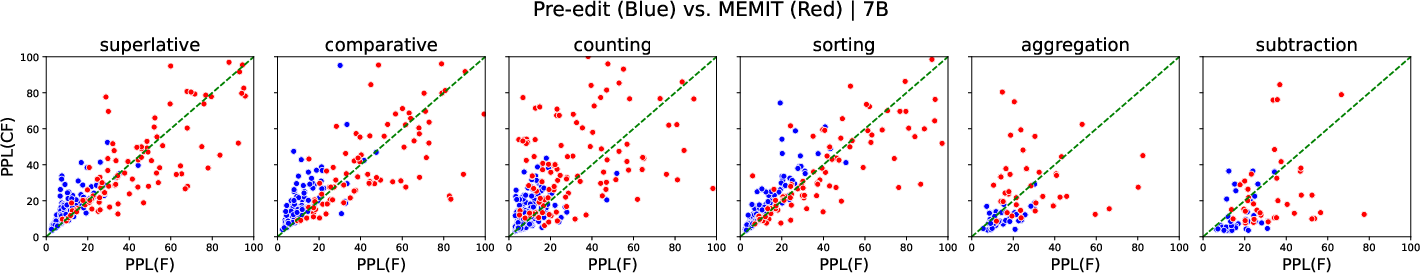

Abstract: Current approaches of knowledge editing struggle to effectively propagate updates to interconnected facts. In this work, we delve into the barriers that hinder the appropriate propagation of updated knowledge within these models for accurate reasoning. To support our analysis, we introduce a novel reasoning-based benchmark -- ReCoE (Reasoning-based Counterfactual Editing dataset) -- which covers six common reasoning schemes in real world. We conduct a thorough analysis of existing knowledge editing techniques, including input augmentation, finetuning, and locate-and-edit. We found that all model editing methods show notably low performance on this dataset, especially in certain reasoning schemes. Our analysis over the chain-of-thought generation of edited models further uncover key reasons behind the inadequacy of existing knowledge editing methods from a reasoning standpoint, involving aspects on fact-wise editing, fact recall ability, and coherence in generation. We will make our benchmark publicly available.

- Recall and learn: Fine-tuning deep pretrained language models with less forgetting. arXiv preprint arXiv:2004.12651.

- Can we edit multimodal large language models? arXiv preprint arXiv:2310.08475.

- Knowledge neurons in pretrained transformers. arXiv preprint arXiv:2104.08696.

- Editing factual knowledge in language models. arXiv preprint arXiv:2104.08164.

- Qlora: Efficient finetuning of quantized llms. arXiv preprint arXiv:2305.14314.

- Calibrating factual knowledge in pretrained language models. arXiv preprint arXiv:2210.03329.

- Improving factuality and reasoning in language models through multiagent debate. arXiv preprint arXiv:2305.14325.

- T-rex: A large scale alignment of natural language with knowledge base triples. In Proceedings of the Eleventh International Conference on Language Resources and Evaluation (LREC 2018).

- Beyond iid: three levels of generalization for question answering on knowledge bases. In Proceedings of the Web Conference 2021, pages 3477–3488.

- Do language models have beliefs? methods for detecting, updating, and visualizing model beliefs. arXiv preprint arXiv:2111.13654.

- Meta-learning online adaptation of language models. arXiv preprint arXiv:2305.15076.

- Unsupervised dense information retrieval with contrastive learning. arXiv preprint arXiv:2112.09118.

- Freebaseqa: A new factoid qa data set matching trivia-style question-answer pairs with freebase. In Proceedings of the 2019 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, Volume 1 (Long and Short Papers), pages 318–323.

- Natural questions: a benchmark for question answering research. Transactions of the Association for Computational Linguistics, 7:453–466.

- Locating and editing factual associations in gpt. Advances in Neural Information Processing Systems, 35:17359–17372.

- Mass-editing memory in a transformer. arXiv preprint arXiv:2210.07229.

- Fast model editing at scale. arXiv preprint arXiv:2110.11309.

- Memory-based model editing at scale. In International Conference on Machine Learning, pages 15817–15831. PMLR.

- Entity cloze by date: What LMs know about unseen entities. In Findings of the Association for Computational Linguistics: NAACL 2022, pages 693–702, Seattle, United States. Association for Computational Linguistics.

- Can lms learn new entities from descriptions? challenges in propagating injected knowledge. arXiv preprint arXiv:2305.01651.

- Yuval Pinter and Michael Elhadad. 2023. Emptying the ocean with a spoon: Should we edit models? arXiv preprint arXiv:2310.11958.

- Das Amitava Rawte Vipula, Sheth Amit. 2023. A survey of hallucination in large foundation models. arXiv preprint arXiv: arXiv:2309.05922.

- Alon Talmor and Jonathan Berant. 2018. The web as a knowledge-base for answering complex questions. arXiv preprint arXiv:1803.06643.

- Freshllms: Refreshing large language models with search engine augmentation. arXiv preprint arXiv:2310.03214.

- Knowledge editing for large language models: A survey. arXiv preprint arXiv:2310.16218.

- How far can camels go? exploring the state of instruction tuning on open resources. arXiv preprint arXiv:2306.04751.

- Chain-of-thought prompting elicits reasoning in large language models. Advances in Neural Information Processing Systems, 35:24824–24837.

- A comprehensive study of knowledge editing for large language models. arXiv preprint arXiv:2401.01286.

- Mquake: Assessing knowledge editing in language models via multi-hop questions. arXiv preprint arXiv:2305.14795.

- Modifying memories in transformer models. arXiv preprint arXiv:2012.00363.

Paper Prompts

Sign up for free to create and run prompts on this paper using GPT-5.

Top Community Prompts

Collections

Sign up for free to add this paper to one or more collections.