Progress and Prospects in 3D Generative AI: A Technical Overview including 3D human

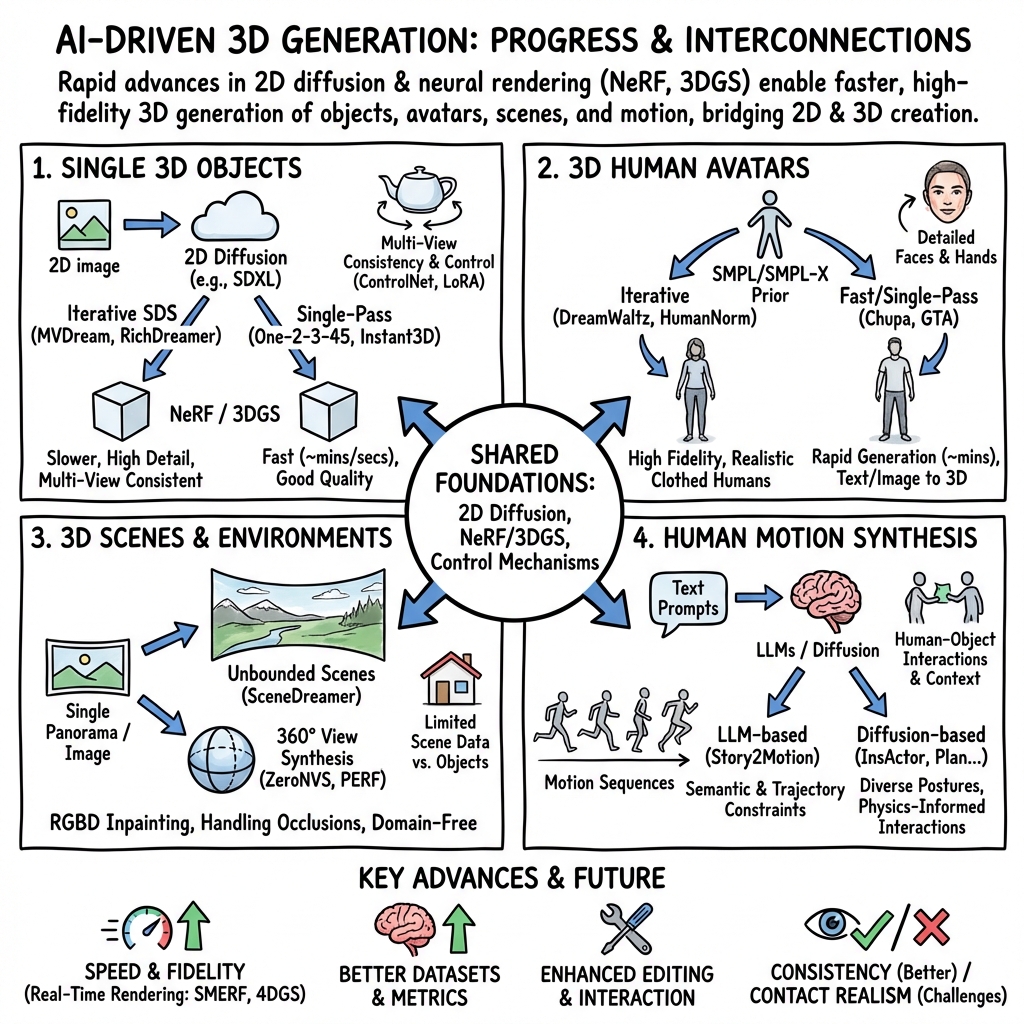

Abstract: While AI-generated text and 2D images continue to expand their territory, 3D generation has gradually emerged as a trend that cannot be ignored. Since 2023, a large number of research papers have appeared in the domain of 3D generation. This growth encompasses not just the creation of 3D objects, but also the rapid development of 3D character and motion generation. Several key factors contribute to this progress. The enhanced fidelity of Stable Diffusion, coupled with control methods that ensure multi-view consistency and realistic human models like SMPL-X, contributes synergistically to the production of 3D models with remarkable consistency and near-realistic appearance. Advances in neural-network-based 3D storage and rendering models, such as Neural Radiance Fields (NeRF) and 3D Gaussian Splatting (3DGS), have improved the efficiency and realism of neural rendered models. Furthermore, the multimodal capabilities of LLMs have enabled language inputs to be translated into human motion outputs. This paper aims to provide a comprehensive overview and summary of the relevant papers, most of them published during the latter half of 2023. It begins by discussing AI-generated 3D object models, followed by generated 3D human models and, finally, generated 3D human motions, and concludes with a summary and a vision for the future.

