Anti-Aliased Neural Implicit Surfaces with Encoding Level of Detail

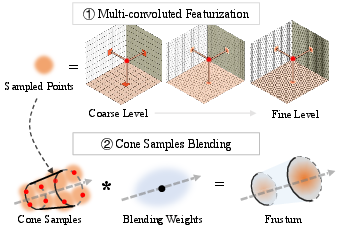

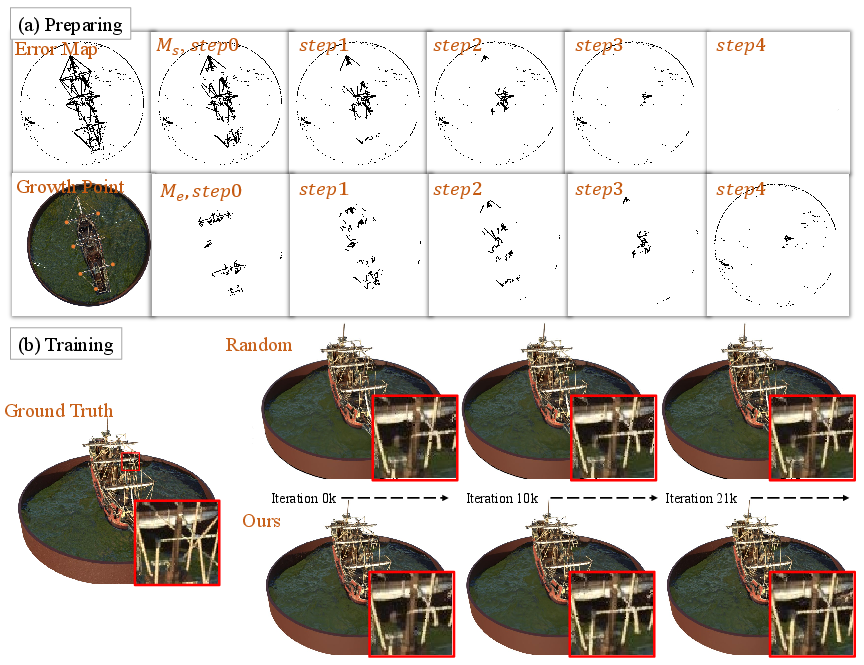

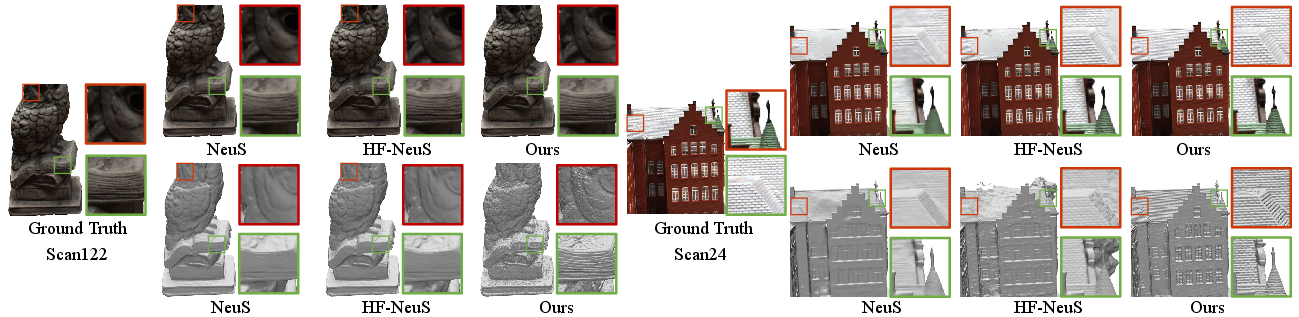

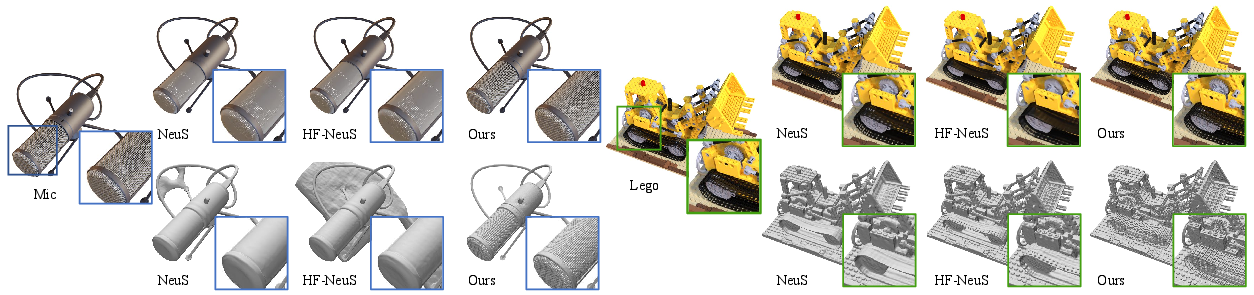

Abstract: We present LoD-NeuS, an efficient neural representation for recovering high-frequency geometric detail and rendering anti-aliased novel views. Drawing inspiration from voxel-based representations with level of detail (LoD), we introduce a multi-scale tri-plane-based scene representation that captures the LoD of both the signed distance function (SDF) and the space radiance. Our representation aggregates space features from a multi-convolved featurization within a conical frustum along each ray and optimizes the LoD feature volume through differentiable rendering. Additionally, we propose an error-guided sampling strategy to guide the growth of the SDF during optimization. Both qualitative and quantitative evaluations demonstrate that our method achieves superior surface reconstruction and photorealistic view synthesis compared to state-of-the-art approaches.
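To make the idea of a multi-scale tri-plane representation more concrete, the following is a minimal NumPy sketch of how a feature vector for one 3D point might be looked up across several plane resolutions. It is an illustration under stated assumptions, not the authors' implementation: the function names (`bilinear_sample`, `query_multiscale_triplane`, `make_levels`), the choice to sum the three plane features per level, the concatenation across levels, and the random initialization are all hypothetical. It also does not cover the conical-frustum aggregation or the error-guided sampling mentioned in the abstract.

```python
import numpy as np


def bilinear_sample(plane, uv):
    """Bilinearly interpolate a (H, W, C) feature plane at uv in [0, 1]^2.

    uv[0] indexes columns and uv[1] indexes rows (an assumed convention).
    """
    h, w, _ = plane.shape
    u, v = np.clip(uv, 0.0, 1.0)
    # Map normalized coordinates to continuous pixel indices.
    x = u * (w - 1)
    y = v * (h - 1)
    x0, y0 = int(np.floor(x)), int(np.floor(y))
    x1, y1 = min(x0 + 1, w - 1), min(y0 + 1, h - 1)
    tx, ty = x - x0, y - y0
    top = (1 - tx) * plane[y0, x0] + tx * plane[y0, x1]
    bottom = (1 - tx) * plane[y1, x0] + tx * plane[y1, x1]
    return (1 - ty) * top + ty * bottom


def query_multiscale_triplane(levels, point):
    """Return concatenated tri-plane features for a point in [0, 1]^3.

    levels: list of dicts with keys "xy", "xz", "yz", each a (R, R, C) array.
    Per level, the three plane features are summed (an assumption; other
    tri-plane methods concatenate or multiply them instead).
    """
    x, y, z = point
    feats = []
    for planes in levels:
        f = (bilinear_sample(planes["xy"], (x, y))
             + bilinear_sample(planes["xz"], (x, z))
             + bilinear_sample(planes["yz"], (y, z)))
        feats.append(f)
    return np.concatenate(feats)


def make_levels(resolutions, channels, seed=0):
    """Create randomly initialized tri-planes, one set per resolution."""
    rng = np.random.default_rng(seed)
    return [
        {k: rng.normal(size=(r, r, channels)) for k in ("xy", "xz", "yz")}
        for r in resolutions
    ]
```

For example, `query_multiscale_triplane(make_levels([4, 8], 3), (0.5, 0.25, 1.0))` returns a vector of length 6: three channels from each of the two levels. In a full pipeline, a vector like this would typically be fed to small networks that predict the SDF value and radiance, with the planes optimized through differentiable rendering.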

