Computational Reproducibility in Computational Social Science

Abstract: Replication crises have shaken the scientific landscape during the last decade. As potential solutions, open science practices have been heavily discussed and implemented with varying success across disciplines. We argue that computational-x disciplines, such as computational social science, are also susceptible to the symptoms of these crises, but in terms of reproducibility. We expand the binary definition of reproducibility into a tier system that allows increasing levels of reproducibility based on external verifiability, in order to counteract the practice of open-washing. We provide solutions to barriers in computational social science that hinder researchers from obtaining the highest level of reproducibility, including the use of alternate data sources and considering reproducibility proactively.

- Replicability, robustness, and reproducibility in psychological science. Annual Review of Psychology, 73(1):719–748, 2022. ISSN 1545-2085. doi:10.1146/annurev-psych-020821-114157. URL http://dx.doi.org/10.1146/annurev-psych-020821-114157.

- Open Science Collaboration. Estimating the reproducibility of psychological science. Science, 349(6251), 2015. ISSN 1095-9203. doi:10.1126/science.aac4716. URL http://dx.doi.org/10.1126/science.aac4716.

- Alexander Wuttke. Why too many political science findings cannot be trusted and what we can do about it: A review of meta-scientific research and a call for academic reform. Politische Vierteljahresschrift, 60(1):1–19, 2018. ISSN 1862-2860. doi:10.1007/s11615-018-0131-7. URL http://dx.doi.org/10.1007/s11615-018-0131-7.

- Psychology, science, and knowledge construction: Broadening perspectives from the replication crisis. Annual Review of Psychology, 69(1):487–510, 2018. doi:10.1146/annurev-psych-122216-011845. URL https://doi.org/10.1146/annurev-psych-122216-011845.

- A manifesto for reproducible science. Nature Human Behaviour, 1(1):1–9, 2017. ISSN 2397-3374. doi:10.1038/s41562-016-0021.

- Open Science now: A systematic literature review for an integrated definition. Journal of Business Research, 88:428–436, 2018. ISSN 0148-2963. doi:10.1016/j.jbusres.2017.12.043.

- Computational social science. Science, 323(5915):721–723, 2009. doi:10.1126/science.1167742. URL https://www.science.org/doi/abs/10.1126/science.1167742.

- Lorena A Barba. Terminologies for reproducible research. arXiv preprint arXiv:1802.03311, 2018.

- The Turing Way Community. The Turing Way: A handbook for reproducible, ethical and collaborative research, 2022.

- The End of the Rehydration Era - The Problem of Sharing Harmful Twitter Research Data. ICWSM, Jun 2023. doi:10.36190/2023.56. URL https://doi.org/10.36190/2023.56.

- Don Grady. The golden age of data: media analytics in study & practice. Routledge, 2019.

- Deen Freelon. Computational research in the post-api age. Political Communication, 35(4):665–668, 2018. ISSN 1091-7675. doi:10.1080/10584609.2018.1477506. URL http://dx.doi.org/10.1080/10584609.2018.1477506.

- Rebekah Tromble. Where Have All the Data Gone? A Critical Reflection on Academic Digital Research in the Post-API Age. Social Media + Society, 7(1):2056305121988929, 2021. ISSN 2056-3051. doi:10.1177/2056305121988929.

- Is the sample good enough? comparing data from twitter’s streaming api with twitter’s firehose. In Proceedings of the International AAAI Conference on Web and Social Media, volume 7, pages 400–408, 2013.

- Social media apis: A quiet threat to the advancement of science, 2023. URL psyarxiv.com/ps32z.

- Botometer 101: Social bot practicum for computational social scientists. Journal of Computational Social Science, pages 1–18, 2022.

- The false positive problem of automatic bot detection in social science research. PLOS ONE, 15(10):e0241045, 2020. ISSN 1932-6203. doi:10.1371/journal.pone.0241045. URL http://dx.doi.org/10.1371/journal.pone.0241045.

- How is ChatGPT’s behavior changing over time? arXiv preprint arXiv:2307.09009, 2023.

- Digital trace data collection for social media effects research: APIs, data donation, and (screen) tracking. Communication Methods and Measures, page 1–18, 2023. ISSN 1931-2466. doi:10.1080/19312458.2023.2181319. URL http://dx.doi.org/10.1080/19312458.2023.2181319.

- vcr: Record ’HTTP’ Calls to Disk, 2023. URL https://CRAN.R-project.org/package=vcr. R package version 1.2.2.

- The Availability of Research Data Declines Rapidly with Article Age. Current Biology, 24(1):94–97, 2014. ISSN 0960-9822. doi:10.1016/j.cub.2013.11.014.

- Data sharing practices and data availability upon request differ across scientific disciplines. Scientific Data, 8(1), 2021. ISSN 2052-4463. doi:10.1038/s41597-021-00981-0. URL http://dx.doi.org/10.1038/s41597-021-00981-0.

- Mandated data archiving greatly improves access to research data. The FASEB Journal, 27(4):1304–1308, 2013. ISSN 0892-6638, 1530-6860. doi:10.1096/fj.12-218164.

- Lisa M. Federer. Long-term availability of data associated with articles in PLOS ONE. PLOS ONE, 17(8):e0272845, 2022. ISSN 1932-6203. doi:10.1371/journal.pone.0272845.

- Stanford alpaca: An instruction-following llama model. https://github.com/tatsu-lab/stanford_alpaca, 2023.

- Opening up ChatGPT: Tracking openness, transparency, and accountability in instruction-tuned text generators. Proceedings of the 5th International Conference on Conversational User Interfaces, Jul 2023. doi:10.1145/3571884.3604316. URL http://dx.doi.org/10.1145/3571884.3604316.

- Misclassification in automated content analysis causes bias in regression. can we fix it? yes we can! arXiv preprint arXiv:2307.06483, 2023.

- Who is doing computational social science? trends in big data research. 2016. URL https://repository.essex.ac.uk/17679/1/compsocsci.pdf.

- A survey of researchers’ code sharing and code reuse practices, and assessment of interactive notebook prototypes. PeerJ, 10:e13933, 2022. ISSN 2167-8359. doi:10.7717/peerj.13933.

- Mary Elizabeth Sutherland. Computational social science heralds the age of interdisciplinary science. https://socialsciences.nature.com/posts/54262-computational-social-science-heralds-the-age-of-interdisciplinary-science/, 2018. [Online; accessed 05-May-2023].

- How do scientists develop and use scientific software? 2009 ICSE Workshop on Software Engineering for Computational Science and Engineering, 2009. doi:10.1109/secse.2009.5069155. URL http://dx.doi.org/10.1109/SECSE.2009.5069155.

- Best Practices for Scientific Computing. PLOS Biology, 12(1):e1001745, 2014. ISSN 1545-7885. doi:10.1371/journal.pbio.1001745.

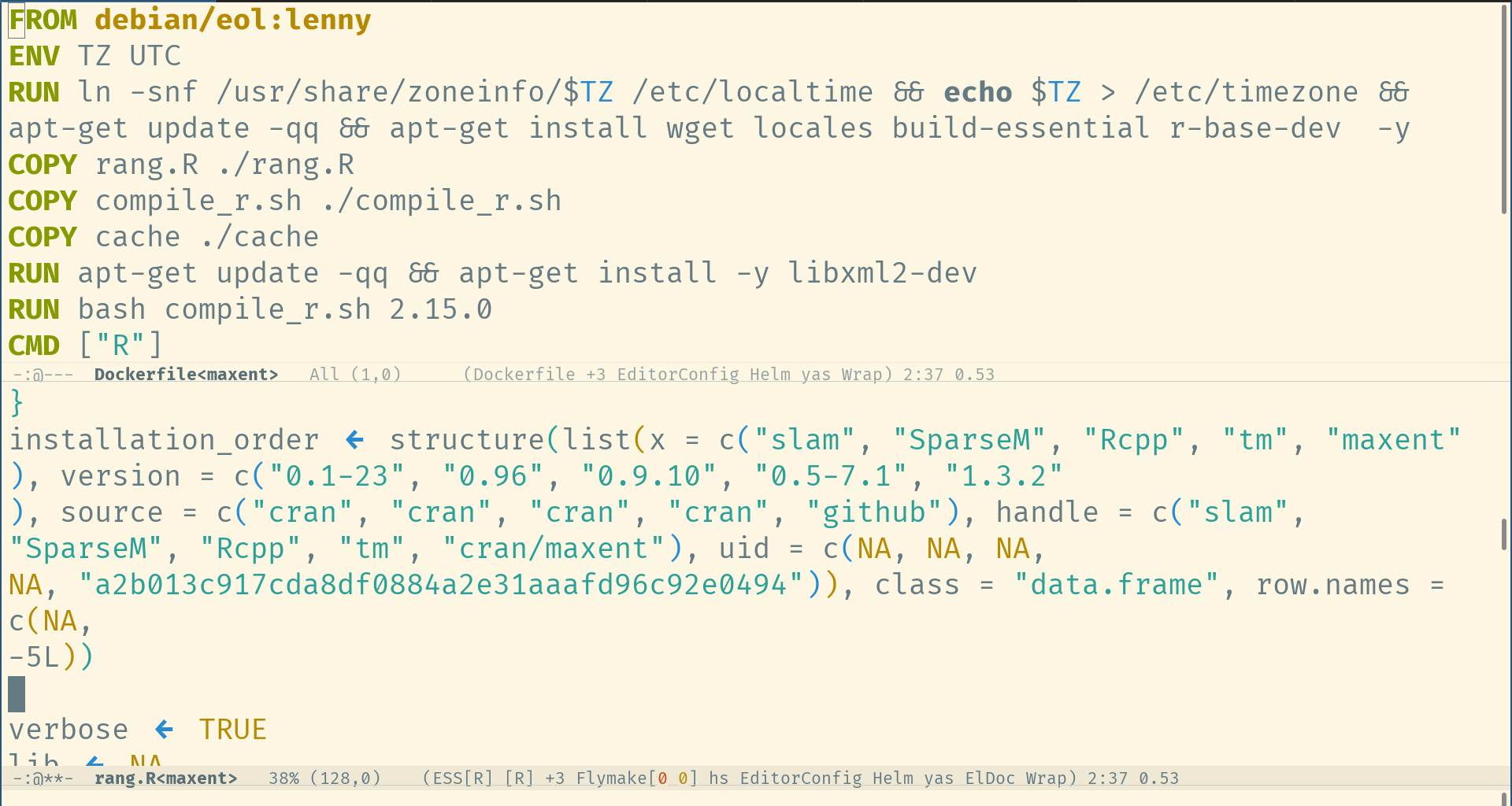

- rang: Reconstructing reproducible r computational environments. PLOS ONE, 18(6):e0286761, 2023. ISSN 1932-6203. doi:10.1371/journal.pone.0286761. URL http://dx.doi.org/10.1371/journal.pone.0286761.

- quanteda: An R package for the quantitative analysis of textual data. Journal of Open Source Software, 3(30):774, 2018. ISSN 2475-9066. doi:10.21105/joss.00774. URL http://dx.doi.org/10.21105/joss.00774.

- A large-scale study on research code quality and execution. Scientific Data, 9(1), 2022. ISSN 2052-4463. doi:10.1038/s41597-022-01143-6. URL http://dx.doi.org/10.1038/s41597-022-01143-6.

- Greg Wilson. Software carpentry: Getting scientists to write better code by making them more productive. Computing in Science & Engineering, 2006. Summarizes the what and why of Version 3 of the course.

- D. E. Knuth. Literate Programming. The Computer Journal, 27(2):97–111, 1984. ISSN 0010-4620. doi:10.1093/comjnl/27.2.97. URL https://doi.org/10.1093/comjnl/27.2.97.

- A multi-language computing environment for literate programming and reproducible research. Journal of Statistical Software, 46(3), 2012. ISSN 1548-7660. doi:10.18637/jss.v046.i03. URL http://dx.doi.org/10.18637/jss.v046.i03.

- Digits: Two Reports on New Units of Scholarly Publication. The Journal of Electronic Publishing, 22(1), 2020. ISSN 1080-2711. doi:10.3998/3336451.0022.105.

- Binder 2.0-reproducible, interactive, sharable environments for science at scale. In Proceedings of the 17th python in science conference, pages 113–120. F. Akici, D. Lippa, D. Niederhut, and M. Pacer, eds., 2018.

- Design choices for productive, secure, data-intensive research at scale in the cloud. arXiv preprint arXiv:1908.08737, 2019.

- Paving the way for data-centric, open science: An example from the social sciences. Journal of Librarianship and Scholarly Communication, 3(2), 2015.
