- The paper reviews reproducibility efforts in NLP, organizing studies into same-condition, varied-condition, and multi-test categories.

- The paper finds that only 14.03% of reproduction scores exactly matched the originals, while 59.2% were worse, which shows how sensitive results are to experimental details.

- The paper underscores the urgent need for standardized terminologies and detailed protocols to improve reproducibility in diverse NLP settings.

A Systematic Review of Reproducibility Research in Natural Language Processing

Introduction

The paper "A Systematic Review of Reproducibility Research in Natural Language Processing" presents a comprehensive survey of the ongoing efforts to improve reproducibility in the NLP domain, addressing the so-called reproducibility crisis. The authors review a wide range of existing research and initiatives focused on reproducibility, emphasizing the variance in terminologies and conceptual frameworks, and propose categories for organizing reproducibility research in NLP.

Overview of Reproducibility Challenges

Reproducibility in NLP means obtaining the same results, to a stated degree of precision, under specified conditions, yet the community faces significant challenges in achieving it. The paper notes that 70% of scientists have failed to reproduce another researcher's results, and over 50% have struggled to reproduce their own. These issues are compounded by the lack of consensus on definitions of reproducibility, replicability, and related terms, as well as the practical difficulty of matching experimental conditions such as algorithm details, parameter settings, and initial conditions.

The authors highlight an alarming divergence in the field regarding the understanding of what constitutes reproducibility. Various organizations and researchers offer differing definitions, leading to confusion and inconsistency in reproducibility studies. This diversity in interpretation underscores the need for a unified understanding or framework.

Terminology and Frameworks

The paper addresses these terminological issues by examining the definitions given by the ACM and in the International Vocabulary of Metrology (VIM). In these definitions, reproducibility means obtaining similar outcomes under varied conditions, while repeatability means obtaining consistent results under the same conditions. Within the NLP literature, however, the terms are often used inconsistently.

The authors propose a common-ground terminology to navigate these challenges by distinguishing between three broad categories of reproducibility research: reproduction under same conditions, under varied conditions, and multi-test studies.

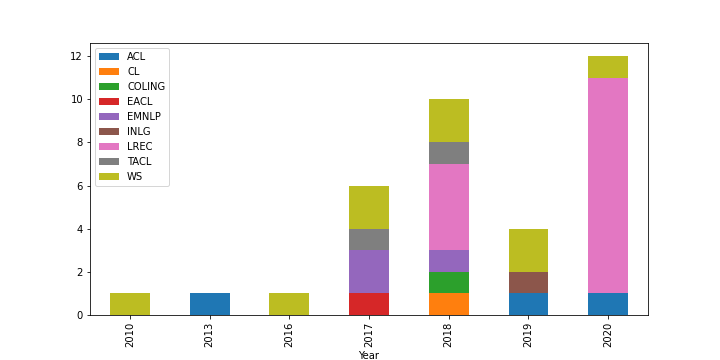

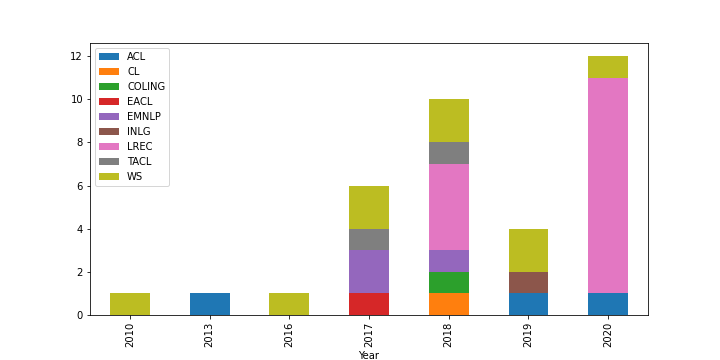

Figure 1: 35 papers from ACL Anthology search, by year and venue.

Reproduction Under Same Conditions

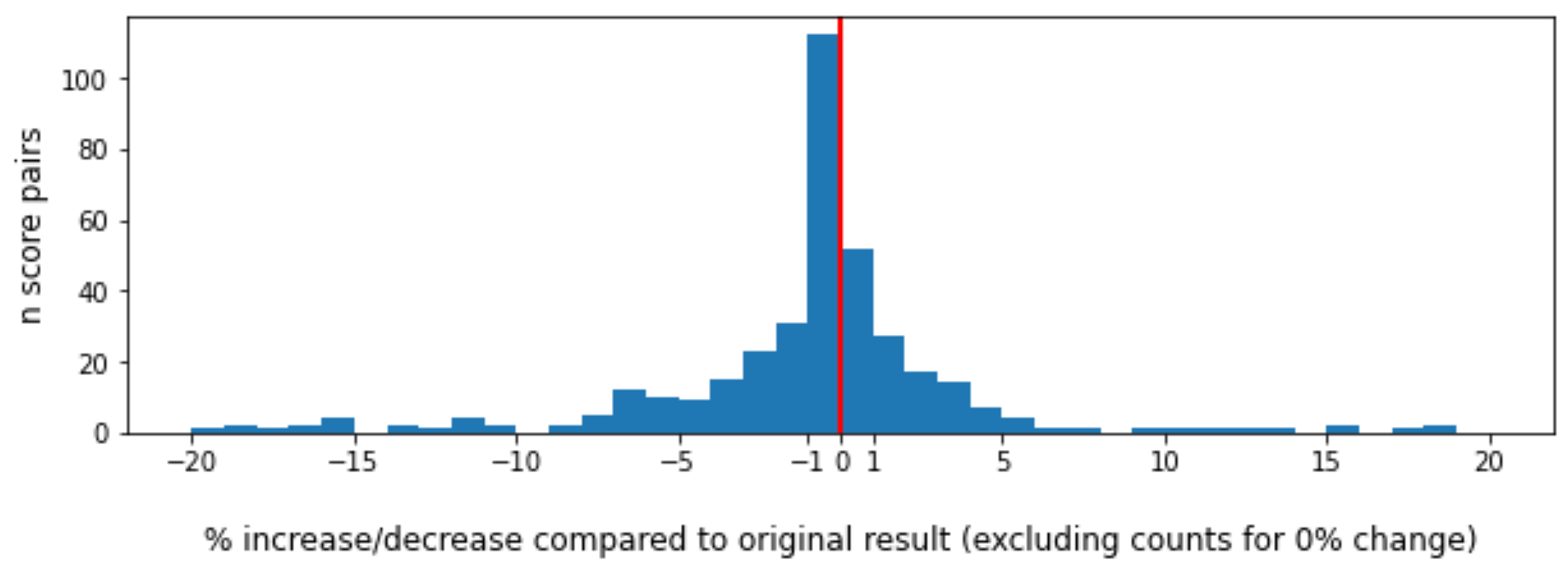

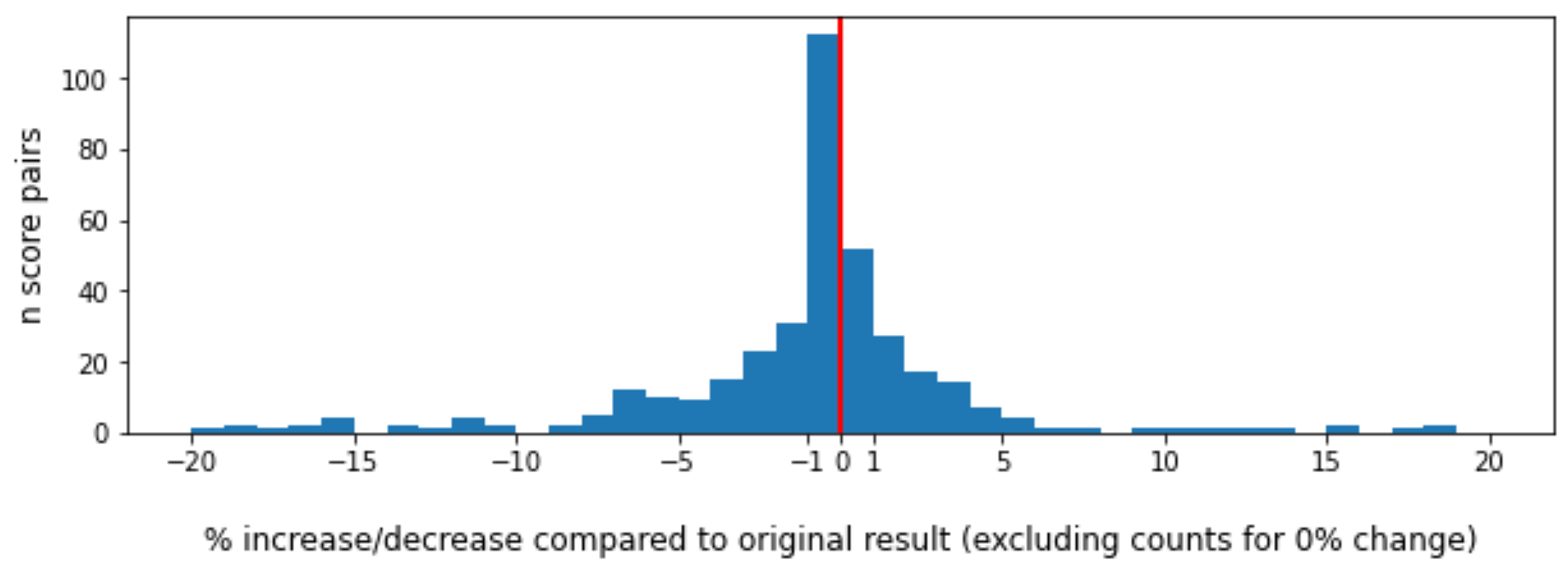

Significant effort in NLP has been directed towards reproduction under same conditions, where the aim is to exactly recreate an experimental setup and validate previous results. This endeavor is complicated by variances in hidden parameters and implementation details that often lead to results differing from those originally reported. Out of 513 examined reproduction cases, only 14.03% of reproduction scores were identical to the originals, with a notable 59.2% exhibiting worse results. This discrepancy signals a critical need for detailed sharing of experimental conditions and emphasizes how slight variations can lead to significant performance changes.
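The same/better/worse breakdown reported above can be illustrated with a minimal sketch. The function names, the `higher_is_better` flag, and the sample scores are assumptions made for illustration; they are not the paper's code or data, and the paper's exact matching criteria may differ.

```python
from collections import Counter


def percentage_difference(original: float, reproduction: float) -> float:
    """Signed change of the reproduction score relative to the original, in percent."""
    if original == 0:
        raise ValueError("original score must be non-zero")
    return (reproduction - original) / abs(original) * 100.0


def classify(original: float, reproduction: float, higher_is_better: bool = True) -> str:
    """Label one original/reproduction score pair as 'same', 'better', or 'worse'."""
    if reproduction == original:
        return "same"
    improved = reproduction > original
    if not higher_is_better:
        # For error-style metrics (assumed), a lower score is an improvement.
        improved = not improved
    return "better" if improved else "worse"


def outcome_shares(pairs: list[tuple[float, float]]) -> dict[str, float]:
    """Percentage of score pairs that fall into each outcome category."""
    if not pairs:
        raise ValueError("need at least one score pair")
    counts = Counter(classify(orig, repro) for orig, repro in pairs)
    return {label: 100.0 * counts[label] / len(pairs)
            for label in ("same", "better", "worse")}


# Hypothetical (original, reproduction) score pairs, not taken from the paper.
example_pairs = [(80.0, 80.0), (80.0, 78.0), (80.0, 82.0), (50.0, 45.0)]
shares = outcome_shares(example_pairs)
```

Note that "worse" only has a meaning once the direction of the metric is fixed, which is why the sketch makes it an explicit parameter.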

Reproduction Under Varied Conditions

Research under varied conditions tests the robustness and generalizability of conclusions by deliberately altering one or more aspects of the experimental setup. Although fewer in number, these studies are crucial for checking how well NLP results carry over to different settings. For instance, strong results for an LLM on a standard dataset may not hold when applied to less-resourced languages or smaller domains, revealing limitations that can go unnoticed in same-condition studies.

Figure 2: Histogram of percentage differences between original and reproduction scores (bin width = 1; clipped to range -20..20).
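A histogram like the one described in this caption could be built roughly as follows. This is a sketch under two assumptions: "clipped" is read as folding out-of-range values into the edge bins (the authors may instead have dropped them), and the input differences are made-up values rather than data from the paper.

```python
import math
from collections import Counter


def clipped_histogram(diffs, lo=-20.0, hi=20.0, width=1.0):
    """Count percentage differences per bin, keyed by each bin's left edge.

    Values outside [lo, hi] are clipped to the range first (an assumption),
    so they are counted in the first or last bin.
    """
    n_bins = int((hi - lo) / width)
    counts = Counter()
    for d in diffs:
        clipped = min(max(d, lo), hi)
        # A value exactly at `hi` belongs to the last bin, not a new one.
        idx = min(int(math.floor((clipped - lo) / width)), n_bins - 1)
        counts[lo + idx * width] += 1
    return dict(sorted(counts.items()))


# Hypothetical percentage differences (reproduction vs. original).
hist = clipped_histogram([-35.0, -2.5, 0.0, 0.4, 3.2, 50.0])
```

With a bin width of 1, each bin covers one percentage point, so an exact match falls into the bin starting at 0.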

Multi-test and Multi-lab Studies

Multi-test and multi-lab studies take a broader view by engaging multiple teams in coordinated reproducibility efforts. Because each team works under its own operational conditions, agreement across teams is stronger evidence than a single reproduction. The REPROLANG shared task exemplifies this approach, promoting reproducibility through structured collaboration, although it remains difficult to derive consistent criteria for judging success across different studies.

Conclusion

This systematic review uncovers essential insights into the complexity of achieving reproducibility in NLP, underscoring substantial divergences in methodologies and terminologies. The high variability in reproduction results highlights the urgent need for refined protocols and standardized practices. As reproducibility research continues to expand, these findings lay a foundation for developing coherent frameworks that can enhance the rigor and credibility of future NLP research efforts.

Addressing these challenges matters in practice, because it determines whether reported NLP results can be trusted to hold across varied applications. Future progress in NLP reproducibility will likely depend on continued efforts to standardize definitions, expand multi-test studies, and embrace a culture of transparency and collaboration.