- The paper demonstrates that prompt-induced personality traits in GPT-3.5 systematically affect cooperation rates in iterated Prisoner's Dilemma games.

- It employs a controlled experimental design with varied social prompts to assess differences across altruistic, cooperative, competitive, selfish, and mixed-motivation conditions.

- Findings highlight GPT-3.5's potential to emulate human social behavior while revealing a limited ability to adapt to a partner's strategy in multi-agent scenarios.

The Machine Psychology of Cooperation: Can GPT Models Operationalise Prompts for Altruism, Cooperation, Competitiveness, and Selfishness in Economic Games?

Introduction

This paper explores whether GPT-3.5 can operationalize social behaviors (altruism, cooperation, competitiveness, and selfishness) in economic games, using the iterated Prisoner's Dilemma (IPD) as the primary testbed. The investigation assesses how well large language models (LLMs) translate natural language prompts into social decision-making that aligns with human social norms and value systems. The research aims to expand our understanding of AI alignment in complex, multi-agent environments and to contribute to the broader discourse on AI safety and the sociotechnical implications of deploying AI systems in society.

Methodology

The authors employed a within-subject experimental design in which GPT-3.5 agents were created through varied natural language prompts. These LLM-driven agents played a series of IPD games against simulated partners, each following one of four fixed strategies: unconditional cooperation, unconditional defection, tit-for-tat starting with cooperation, and tit-for-tat starting with defection.
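The four partner strategies can be sketched in a few lines of Python. This is an illustrative sketch rather than the authors' code: the payoff values are the conventional Prisoner's Dilemma numbers (T=5, R=3, P=1, S=0), the default of six rounds is arbitrary, and all function names are hypothetical.

```python
from typing import Callable, Dict, List, Tuple

C, D = "C", "D"  # cooperate, defect

# Assumed payoffs (agent, partner); conventional values, not taken from the paper.
PAYOFFS: Dict[Tuple[str, str], Tuple[int, int]] = {
    (C, C): (3, 3),
    (C, D): (0, 5),
    (D, C): (5, 0),
    (D, D): (1, 1),
}

# A strategy maps the opponent's past moves to the next move.
Strategy = Callable[[List[str]], str]


def always_cooperate(opponent_moves: List[str]) -> str:
    return C


def always_defect(opponent_moves: List[str]) -> str:
    return D


def tit_for_tat_c(opponent_moves: List[str]) -> str:
    # Open with cooperation, then copy the opponent's previous move.
    return opponent_moves[-1] if opponent_moves else C


def tit_for_tat_d(opponent_moves: List[str]) -> str:
    # Open with defection, then copy the opponent's previous move.
    return opponent_moves[-1] if opponent_moves else D


def play(agent: Strategy, partner: Strategy, rounds: int = 6):
    """Play an iterated game; return both move lists and both total scores."""
    agent_moves: List[str] = []
    partner_moves: List[str] = []
    agent_score = partner_score = 0
    for _ in range(rounds):
        # Both sides choose simultaneously, seeing only earlier rounds.
        a = agent(partner_moves)
        p = partner(agent_moves)
        pa, pp = PAYOFFS[(a, p)]
        agent_score += pa
        partner_score += pp
        agent_moves.append(a)
        partner_moves.append(p)
    return agent_moves, partner_moves, agent_score, partner_score
```

In the study the `agent` role is filled by a prompted GPT-3.5 instance; here any function with the same signature can stand in, which makes it easy to check the partner logic in isolation.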

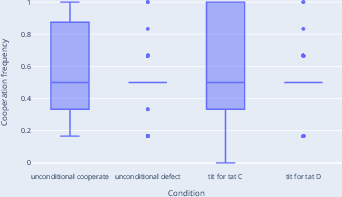

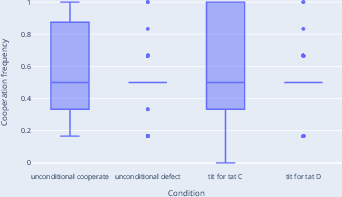

Figure 1: The control group was used as a baseline to compare behavior across various social condition settings.

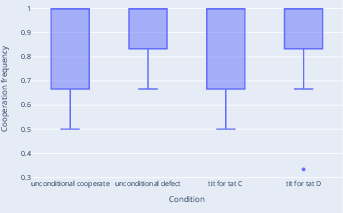

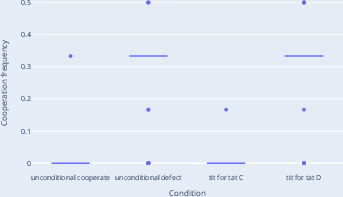

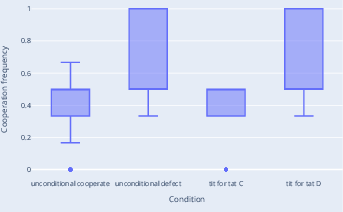

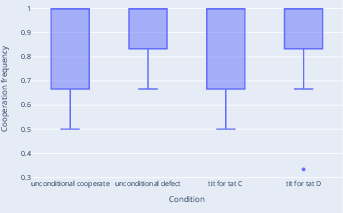

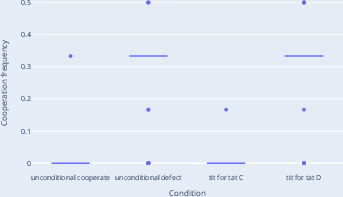

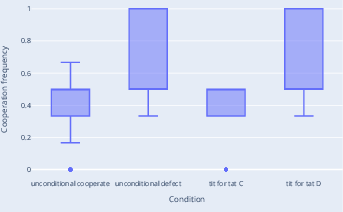

Each LLM-driven agent was instantiated with one of five personality-altering prompt types: competitive, cooperative, altruistic, selfish, or mixed-motivation. The protocol was designed to observe how cooperative behavior changes across these social conditions and conversational contexts. Data were collected through OpenAI's chat completion API, with the temperature set to 0.2 to limit randomness in the responses.
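As a rough illustration of how a persona condition and the temperature setting might be combined into a single request, the sketch below builds a chat-style payload without sending it. The persona texts are hypothetical placeholders (the paper's actual prompts are not reproduced here), and the model identifier `gpt-3.5-turbo` and the helper name `build_request` are assumptions.

```python
from typing import Dict, List

# Hypothetical persona texts, one per condition; placeholders only.
PERSONA_PROMPTS: Dict[str, str] = {
    "competitive": "You want to outperform your partner.",
    "cooperative": "You value working together with your partner.",
    "altruistic": "You care about your partner's outcome more than your own.",
    "selfish": "You care only about your own outcome.",
    "mixed": "You care about your own outcome and about fairness.",
}

MODEL = "gpt-3.5-turbo"  # assumed model identifier
TEMPERATURE = 0.2        # value reported in the text


def build_request(condition: str, transcript: List[Dict[str, str]]) -> Dict[str, object]:
    """Assemble a chat completion payload for one agent turn (not sent)."""
    if condition not in PERSONA_PROMPTS:
        raise ValueError(f"unknown condition: {condition}")
    # The persona goes in the system message; the game so far follows it.
    messages = [{"role": "system", "content": PERSONA_PROMPTS[condition]}]
    messages.extend(transcript)
    return {"model": MODEL, "temperature": TEMPERATURE, "messages": messages}
```

The returned dictionary uses the `model`, `messages`, and `temperature` fields of a chat completion request, so it could be passed to whichever client is in use. A low temperature makes repeated runs of the same condition more comparable, at the cost of less behavioral variety.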

Results

Cooperation rates varied with the prompt condition. Agents instantiated with altruistic prompts cooperated more often, while the competitive and selfish groups defected more frequently, regardless of their partner's strategy. This outcome supports hypotheses H1, H2, and H3, which posit that prompt-induced personality traits reliably influence agent behavior. By contrast, the hypotheses concerning adaptation to the partner's strategy received inconsistent support, which points to limitations in GPT-3.5's ability to translate dynamic social concepts into appropriately conditioned behavior.
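The comparison across conditions rests on a simple statistic: the share of cooperative moves per prompt condition. A minimal sketch of that calculation follows; the record format and the function name are assumptions, and any data passed to it here would be made up for illustration rather than taken from the paper.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def cooperation_rates(records: Iterable[Tuple[str, str]]) -> Dict[str, float]:
    """Return the fraction of 'C' moves for each condition.

    Each record is (condition, move), where move is 'C' or 'D'.
    """
    counts = defaultdict(lambda: [0, 0])  # condition -> [cooperations, total]
    for condition, move in records:
        counts[condition][0] += 1 if move == "C" else 0
        counts[condition][1] += 1
    return {cond: coop / total for cond, (coop, total) in counts.items()}
```

Grouping the same records by partner strategy as well as by condition would give the finer breakdown needed to examine the adaptability hypotheses.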

Discussion

The study indicates that LLMs like GPT-3.5 can capture abstract social concepts from prompts and translate them into behavior patterns that partly mirror those of human players. The findings underscore how strongly initial conversational scaffolding and prompt engineering shape emergent behavior. However, the agents' limited adaptability leaves room for improvement in more complex multi-agent interactions. In particular, while agents exhibit generalized behavior in response to prompts, they lack the versatility to apply the conditional reciprocity or strategic depth typical of human players in IPD scenarios.

Conclusion

The research substantiates the potential of LLMs to navigate social dilemmas through prompt-induced personas but also highlights pronounced limitations in adapting behavior to a partner. Future work should examine more sophisticated prompt configurations and newer models (e.g., GPT-4) to improve alignment with human values. Extending the experiments to other economic games and interaction dynamics would also help refine the conceptual modeling of agent behavior in LLMs. Such efforts are important for developing AI systems that can be integrated ethically into societal settings.