- The paper introduces a Volterra reservoir kernel that universally approximates causal, fading memory filters using state-space dynamics.

- It employs RKHS and the Representer Theorem to convert nonlinear dependencies into linear regression tasks for dynamic systems.

- Empirical evaluations on a financial task, learning the time-varying conditional covariances of asset returns, show it outperforming standard static and sequential kernels.

Reservoir Kernels and Volterra Series: An Analytical Exploration

Introduction

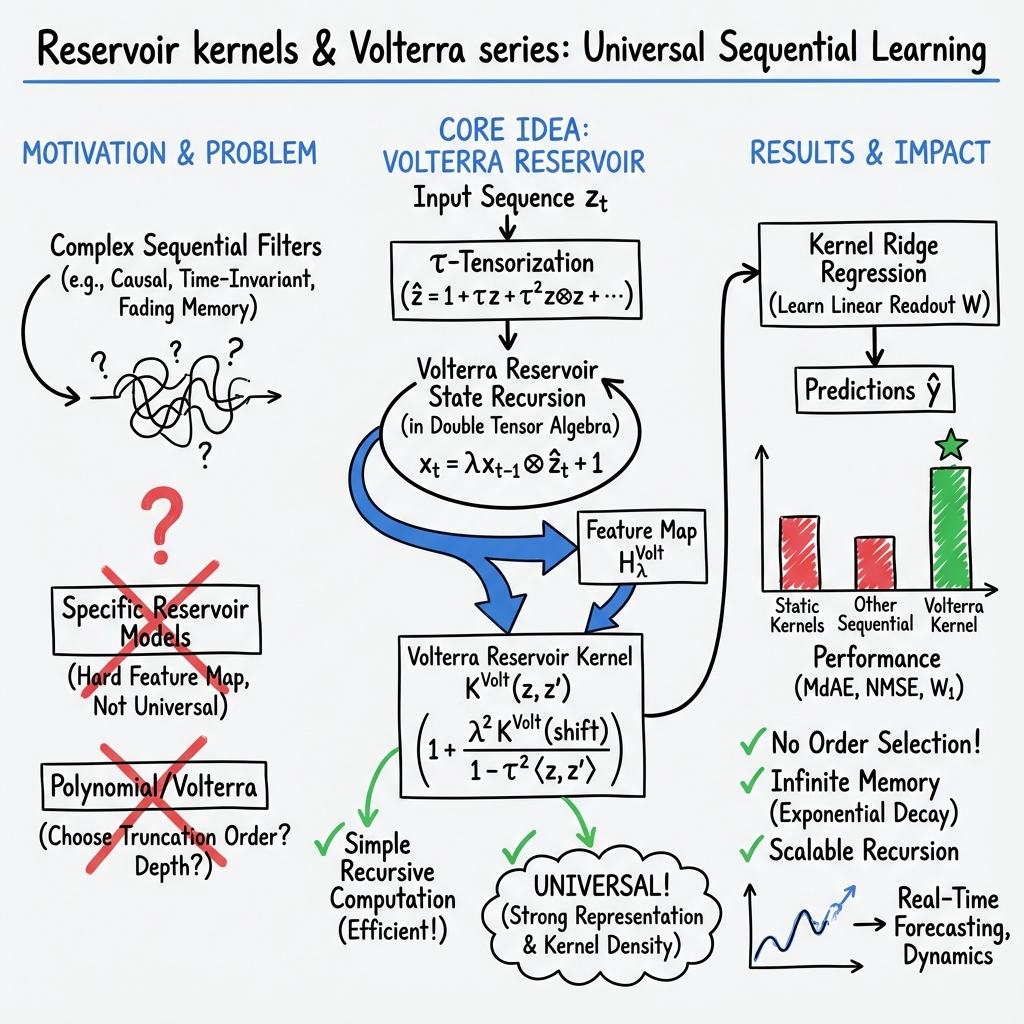

The paper "Reservoir Kernels and Volterra Series" (2212.14641) constructs a universal kernel whose sections approximate any causal and time-invariant filter in the fading memory category. The kernel is built from the reservoir functional associated with a state-space representation of the Volterra series expansion, which is available for any analytic fading memory filter. The work advances the understanding and application of kernel methods for dynamic systems, with financial modeling as its showcase application.

Key Contributions

Reservoir Computing and Kernel Methods

The central focus of the paper is the Volterra reservoir kernel, constructed from the state-space representation of the Volterra series expansion. The authors combine the properties of reproducing kernel Hilbert spaces (RKHS) with reservoir computing (RC), which approximates nonlinear input/output systems using randomly generated state-space dynamics. By the classical Representer Theorem, learning a nonlinear input/output dependence then reduces to a linear regression problem in the kernel's feature space.
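To make the reduction to linear regression concrete, here is a minimal sketch of kernel ridge regression. By the Representer Theorem, the regularized least-squares minimizer over an RKHS has the form f(x) = sum_i alpha_i K(x, x_i), so fitting amounts to solving a linear system in the Gram matrix. The Gaussian kernel, the toy target sin(x), and the names `rbf_gram`, `fit_kernel_ridge`, `predict`, `gamma`, and `reg` are illustrative assumptions and are not taken from the paper; in the paper's setting the Volterra reservoir kernel would take the place of `rbf_gram`.

```python
import numpy as np

def rbf_gram(A, B, gamma=1.0):
    """Gaussian kernel Gram matrix between rows of A (n, d) and B (m, d).
    Stand-in kernel for illustration only."""
    sq_dist = ((A[:, None, :] - B[None, :, :]) ** 2).sum(axis=-1)
    return np.exp(-gamma * sq_dist)

def fit_kernel_ridge(K, y, reg=1e-3):
    """Solve (K + reg * I) alpha = y for the representer coefficients."""
    return np.linalg.solve(K + reg * np.eye(K.shape[0]), y)

def predict(K_new_train, alpha):
    """Evaluate f(x) = sum_i alpha_i K(x, x_i) for each new point."""
    return K_new_train @ alpha

# Toy data: a nonlinear target learned through a purely linear solve.
rng = np.random.default_rng(0)
X = rng.uniform(-3.0, 3.0, size=(200, 1))
y = np.sin(X[:, 0])

alpha = fit_kernel_ridge(rbf_gram(X, X), y)

X_new = np.linspace(-2.0, 2.0, 50)[:, None]
y_hat = predict(rbf_gram(X_new, X), alpha)
max_err = np.abs(y_hat - np.sin(X_new[:, 0])).max()
```

The nonlinearity lives entirely in the kernel; the only quantity that is fitted is the coefficient vector `alpha`, obtained from one linear system.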

Construction of the Volterra Reservoir Kernel

The Volterra reservoir kernel addresses two primary challenges associated with reservoir kernels: computing the state functionals efficiently and ensuring universality. The kernel map is evaluated through explicit recursions, so it can be computed directly on specific data sets in dynamic learning problems. Typical examples of such problems are time series forecasting and path continuation of dynamical systems.
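As an illustration of what such a recursion can look like, the sketch below assumes the kernel satisfies K_t = 1 + lam^2 * K_{t-1} / (1 - tau^2 * <z_t, z'_t>), started from the constant K = 1 / (1 - lam^2), with hyperparameters constrained by tau * M < 1 and lam^2 < 1 - (tau * M)^2 for inputs bounded in norm by M. This functional form, the initialization, the constraints, and the names `volterra_gram`, `lam`, and `tau` are assumptions made for illustration and should be checked against the paper before use.

```python
import numpy as np

def volterra_gram(Z, Zp, lam=0.5, tau=0.5):
    """Gram matrix between two batches of input sequences.

    Z has shape (n, T, d) and Zp has shape (m, T, d). The recursion below
    is an ASSUMED form of the kernel recursion, not a verified transcription.
    """
    Z = np.asarray(Z, dtype=float)
    Zp = np.asarray(Zp, dtype=float)
    if Z.shape[1:] != Zp.shape[1:]:
        raise ValueError("sequences must share length T and dimension d")

    # Largest input norm over both batches; used to check the assumed constraints.
    M = max(np.linalg.norm(Z, axis=-1).max(), np.linalg.norm(Zp, axis=-1).max())
    if tau * M >= 1.0 or lam ** 2 >= 1.0 - (tau * M) ** 2:
        raise ValueError("need tau*M < 1 and lam^2 < 1 - (tau*M)^2")

    # Assumed initialization: fixed point of the recursion for zero input.
    K = np.full((Z.shape[0], Zp.shape[0]), 1.0 / (1.0 - lam ** 2))
    for t in range(Z.shape[1]):
        inner = Z[:, t, :] @ Zp[:, t, :].T            # <z_t, z'_t> for all pairs
        K = 1.0 + lam ** 2 * K / (1.0 - tau ** 2 * inner)
    return K

# Five two-dimensional input sequences of length 20.
rng = np.random.default_rng(0)
Z = rng.uniform(-0.5, 0.5, size=(5, 20, 2))
G = volterra_gram(Z, Z)
```

Each time step costs one matrix product over all sequence pairs, so the Gram matrix is obtained without ever forming the infinite-dimensional feature map. The resulting `G` can be passed to any Gram-matrix-based estimator.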

Theoretical and Practical Implications

The paper establishes that the Volterra reservoir kernel is universal: its infinite-dimensional feature map does not depend on the specific fading memory filter being realized. Only the linear readout functional adapts to the target, which makes the approach applicable across different filters. Universality here means that the kernel sections can approximate any filter in the fading memory category to arbitrary accuracy, which broadens potential applications in dynamic learning scenarios.

Empirical Evaluation

The empirical performance of the Volterra reservoir kernel is validated through a multidimensional learning task involving the conditional covariances of financial asset returns. The results demonstrate superior performance and scalability when benchmarked against standard static and sequential kernels. These findings affirm the kernel's efficacy, particularly in financial econometrics, where the modeling of covariances is pivotal.

Conclusion

This research develops a Volterra reservoir kernel that approximates causal, time-invariant filters within the fading memory category, providing a practical tool for dynamic system modeling. Because the kernel is computed through explicit recursions, it can be applied efficiently to concrete data sets. The paper highlights the kernel's relevance to forecasting and to modeling complex dynamics, and points to generalized dynamic regression frameworks as a direction for future work.

In summary, the Volterra reservoir kernel combines an explicit construction with universality, making it a notable contribution at the interface of kernel methods and reservoir computing, with potential applications in signal processing and related areas of machine learning.