- The paper introduces a formal definition of domain generalization, distinguishing it from related fields while detailing its diverse methodologies.

- The paper reviews techniques including domain alignment, meta-learning, data augmentation, and self-supervised learning to tackle unseen domain shifts.

- The paper highlights evaluation challenges and outlines future directions for dynamic architectures and robust out-of-distribution performance.

Domain Generalization: A Survey

Introduction to Domain Generalization

Domain Generalization (DG) addresses the challenge of enabling models to generalize to unseen, out-of-distribution (OOD) data. This matters because most machine learning models assume that training and test data are independent and identically distributed (i.i.d.), an assumption that real-world domain shifts often violate. DG aims to overcome these shifts by training on source domain data alone, with no access to target domain data.

The paper provides a comprehensive review of DG, systematically examining methodologies and application areas such as computer vision, speech recognition, and reinforcement learning. A formal definition is presented, distinguishing DG from related fields like domain adaptation and transfer learning. The survey covers existing strategies including domain alignment, meta-learning, data augmentation, ensemble learning, and self-supervised learning, highlighting the breadth of approaches developed over the past decade.

Methodologies in Domain Generalization

Domain Alignment

Domain alignment methods form a substantial part of DG research. They seek to minimize the differences among source domains in order to learn domain-invariant representations. Techniques include matching statistical moments, minimizing the KL divergence or the Maximum Mean Discrepancy (MMD) between domain distributions, and adversarial learning. Domain alignment typically requires domain labels and aligns either the marginal distribution P(X) or the class-conditional distribution P(X∣Y).
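As a rough illustration of one alignment criterion named above, the following sketch computes a biased estimate of the squared MMD between feature samples from two domains, using a Gaussian kernel. The kernel choice, the `bandwidth` value, and the synthetic 2-D features produced by `sample_domain` are assumptions made for illustration only; the survey does not prescribe them.

```python
import math
import random


def rbf_kernel(a, b, bandwidth=1.0):
    """Gaussian (RBF) kernel between two feature vectors."""
    sq_dist = sum((ai - bi) ** 2 for ai, bi in zip(a, b))
    return math.exp(-sq_dist / (2.0 * bandwidth ** 2))


def mmd_squared(xs, ys, bandwidth=1.0):
    """Biased estimate of squared MMD between two samples of feature vectors."""
    def mean_kernel(us, vs):
        total = sum(rbf_kernel(u, v, bandwidth) for u in us for v in vs)
        return total / (len(us) * len(vs))

    return mean_kernel(xs, xs) + mean_kernel(ys, ys) - 2.0 * mean_kernel(xs, ys)


def sample_domain(rng, shift, n=100):
    """Hypothetical 2-D features for one source domain, centered at `shift`."""
    return [[rng.gauss(shift, 1.0), rng.gauss(shift, 1.0)] for _ in range(n)]


rng = random.Random(0)
domain_a = sample_domain(rng, shift=0.0)
domain_b = sample_domain(rng, shift=0.0)  # same distribution as domain_a
domain_c = sample_domain(rng, shift=3.0)  # shifted distribution

mmd_same = mmd_squared(domain_a, domain_b)
mmd_shifted = mmd_squared(domain_a, domain_c)
print(f"same distribution: {mmd_same:.4f}, shifted: {mmd_shifted:.4f}")
```

In an alignment method, a quantity of this kind would typically be added to the training loss so that features from different source domains are pushed toward a common distribution. Here it is only evaluated, not optimized.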

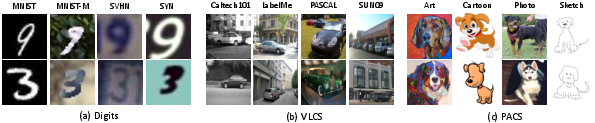

Figure 1: Example images from three domain generalization benchmarks manifesting different types of domain shift.

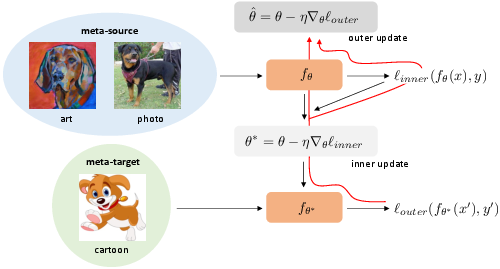

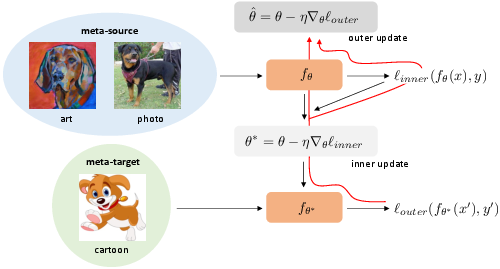

Meta-Learning

Meta-learning approaches in DG expose models to domain shift during training so that they cope better with unseen shifts at test time. The source domains are divided into meta-source and meta-target domains, which simulates domain shift within each training step. Methods in this category require domain labels and train models with bi-level optimization.

Figure 2: A commonly used meta-learning paradigm in domain generalization.
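The meta-source/meta-target split and the bi-level update can be sketched on a toy problem. The example below is a minimal sketch, assuming a scalar linear model `y_hat = w * x` with squared error, for which the gradient through the inner step can be written in closed form. The objective follows the general shape of gradient-based meta-learning objectives such as MLDG (source loss plus `beta` times the meta-target loss after one inner step). The domain names, learning rates, and data are hypothetical.

```python
import random


def mse_grad(w, data):
    """Gradient of mean squared error for the scalar model y_hat = w * x."""
    return sum(2.0 * (w * x - y) * x for x, y in data) / len(data)


def mse_hessian(data):
    """Second derivative of the same loss with respect to w (constant in w)."""
    return sum(2.0 * x * x for x, _ in data) / len(data)


def meta_step(w, domains, rng, inner_lr=0.05, outer_lr=0.05, beta=1.0):
    """One episode: split domains, take an inner step, update on both losses."""
    names = list(domains)
    rng.shuffle(names)
    meta_target = domains[names[0]]  # held out to simulate domain shift
    meta_source = [pt for name in names[1:] for pt in domains[name]]

    # Inner level: one virtual gradient step on the meta-source domains.
    g_src = mse_grad(w, meta_source)
    w_inner = w - inner_lr * g_src

    # Outer level: meta-target gradient, backpropagated through the inner step.
    chain = 1.0 - inner_lr * mse_hessian(meta_source)
    g_tgt = mse_grad(w_inner, meta_target) * chain

    return w - outer_lr * (g_src + beta * g_tgt)


def make_domain(rng, lo, n=50):
    """Hypothetical domain: inputs drawn from [lo, lo + 1], shared slope 2.0."""
    xs = [rng.uniform(lo, lo + 1.0) for _ in range(n)]
    return [(x, 2.0 * x + rng.gauss(0.0, 0.05)) for x in xs]


rng = random.Random(0)
domains = {"d0": make_domain(rng, 0.0),
           "d1": make_domain(rng, 1.0),
           "d2": make_domain(rng, 2.0)}

w = 0.0
for _ in range(200):
    w = meta_step(w, domains, rng)
print(f"learned slope: {w:.3f}")
```

With a neural network the `chain` factor would be obtained by automatic differentiation rather than a closed-form Hessian, and many implementations drop it entirely as a first-order approximation.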

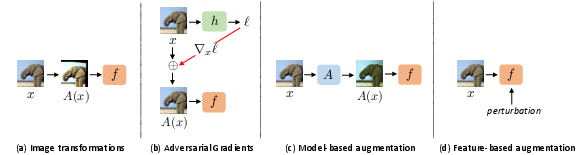

Data Augmentation

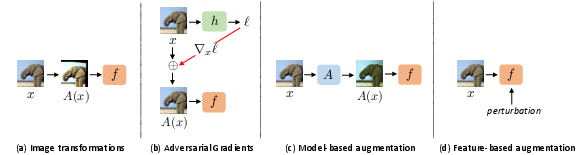

Data augmentation techniques in DG simulate domain shift, extending the diversity and robustness of the model to OOD data. Methods range from traditional image transformations to adversarial gradient-based perturbations and learnable data synthesis networks. Some approaches use feature-level augmentation and style transfer models to enrich the training domain diversity.

Figure 3: Categories of data augmentation methods based on transformation formulation.
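Feature-level augmentation can be illustrated by mixing the feature statistics of two instances, loosely following statistics-mixing methods such as MixStyle. This is a minimal sketch under simplifying assumptions: each instance is represented by a flat list of activations from a single channel, and the function name `mix_style`, the Beta(0.1, 0.1) mixing weight, and the synthetic activations are illustrative choices rather than details taken from the survey.

```python
import random
import statistics


def mix_style(feat_a, feat_b, lam, eps=1e-6):
    """Re-style feat_a with a blend of its own and feat_b's mean and std."""
    mu_a = statistics.fmean(feat_a)
    mu_b = statistics.fmean(feat_b)
    sigma_a = statistics.pstdev(feat_a) + eps
    sigma_b = statistics.pstdev(feat_b) + eps

    # Interpolate the "style" statistics of the two instances.
    mu_mix = lam * mu_a + (1.0 - lam) * mu_b
    sigma_mix = lam * sigma_a + (1.0 - lam) * sigma_b

    # Normalize feat_a, then apply the mixed statistics.
    return [sigma_mix * (v - mu_a) / sigma_a + mu_mix for v in feat_a]


rng = random.Random(0)
feat_a = [rng.gauss(0.0, 1.0) for _ in range(64)]  # hypothetical activations, domain A
feat_b = [rng.gauss(5.0, 3.0) for _ in range(64)]  # hypothetical activations, domain B

lam = rng.betavariate(0.1, 0.1)  # mixing weight in [0, 1]
augmented = mix_style(feat_a, feat_b, lam)
print(f"lam={lam:.3f}, new mean={statistics.fmean(augmented):.3f}")
```

The normalized content of `feat_a` is kept while its statistics move toward those of another domain, which is one way to synthesize features from a novel-looking domain without generating new images.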

Self-Supervised Learning

Self-supervised learning leverages free labels generated from the data itself to learn robust features that generalize well across domains. Pretext tasks such as solving Jigsaw puzzles or predicting image rotations help models learn regularities that transfer across domains, without requiring domain labels.

Figure 4: Common pretext tasks used for self-supervised learning in domain generalization.
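The rotation-prediction pretext task shows how such free labels arise: each image is rotated by a random multiple of 90 degrees, and the number of quarter turns becomes the classification target. The sketch below assumes images are plain 2-D grids (lists of rows); the helper names are hypothetical.

```python
import random


def rotate_90(image):
    """Rotate a 2-D grid (list of rows) clockwise by 90 degrees."""
    return [list(row) for row in zip(*image[::-1])]


def rotate(image, k):
    """Apply k clockwise quarter turns and return a new grid."""
    for _ in range(k % 4):
        image = rotate_90(image)
    return [list(row) for row in image]


def make_rotation_batch(images, rng):
    """Pair each image with a random rotation; the rotation index is the label."""
    batch = []
    for image in images:
        k = rng.randrange(4)  # 0, 1, 2 or 3 quarter turns
        batch.append((rotate(image, k), k))
    return batch


rng = random.Random(0)
images = [[[1, 2], [3, 4]], [[5, 6], [7, 8]]]
batch = make_rotation_batch(images, rng)
for rotated, label in batch:
    print(label, rotated)
```

A model trained to predict `label` from `rotated` needs no human annotation and no domain labels, which is why pretext tasks of this kind can be added alongside the main classification loss.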

Evaluation and Implications

The paper discusses evaluation protocols for DG, which commonly follow the leave-one-domain-out rule (train on all but one domain, test on the held-out domain) and report metrics such as average and worst-case performance. Model selection strategy also affects reported results and should be considered carefully in any evaluation. The paper ultimately argues that DG remains a challenging problem, warranting further exploration of dynamic architectures, causal representation learning, and novel training paradigms.
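The leave-one-domain-out protocol can be sketched as a loop over held-out domains. The example below is a minimal sketch with a toy one-dimensional threshold classifier standing in for a real model; the domain names, the data, and the `train_threshold` and `accuracy` helpers are hypothetical.

```python
from statistics import fmean


def leave_one_domain_out(domains, train_fn, eval_fn):
    """Train on all domains but one, test on the held-out one, for each domain."""
    scores = {}
    for held_out in domains:
        train_data = [example
                      for name, data in domains.items() if name != held_out
                      for example in data]
        model = train_fn(train_data)
        scores[held_out] = eval_fn(model, domains[held_out])
    return scores


def train_threshold(data):
    """Toy model: threshold halfway between the two class means."""
    pos = [x for x, y in data if y == 1]
    neg = [x for x, y in data if y == 0]
    return (fmean(pos) + fmean(neg)) / 2.0


def accuracy(threshold, data):
    """Fraction of examples where (x > threshold) matches the label."""
    hits = [1.0 if (x > threshold) == (y == 1) else 0.0 for x, y in data]
    return fmean(hits)


# Hypothetical domains: same task, inputs shifted by a domain-specific offset.
domains = {
    "photo":   [(0.0, 0), (1.0, 0), (3.0, 1), (4.0, 1)],
    "sketch":  [(1.0, 0), (2.0, 0), (4.0, 1), (5.0, 1)],
    "cartoon": [(3.0, 0), (4.0, 0), (6.0, 1), (7.0, 1)],
}

scores = leave_one_domain_out(domains, train_threshold, accuracy)
average_acc = fmean(scores.values())
worst_case_acc = min(scores.values())
print(scores, average_acc, worst_case_acc)
```

Reporting both numbers is informative because a high average can hide a domain on which the model performs poorly.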

Conclusion

Domain Generalization is an evolving field with significant implications for AI applications requiring robust model performance across varying environments. The survey underscores the diversity of existing methodologies while emphasizing the need for continued research into efficient DG strategies. Future directions include exploration of adaptive model architectures, semi-supervised learning, and integration of transfer learning techniques. The interdisciplinary nature of DG presents substantial opportunities for advancing AI's real-world applicability.