- The paper introduces NeuTex, which disentangles geometry and appearance using a novel 3D-to-2D texture mapping and cycle consistency loss.

- The method uses several MLP networks, including an inverse mapping network, and applies positional encoding to selected networks to balance rendering fidelity against editability.

- NeuTex demonstrates high-fidelity view synthesis on DTU scenes while enabling intuitive surface texture edits and integration with reflectance fields.

NeuTex: Neural Texture Mapping for Volumetric Neural Rendering

Abstract and Introduction

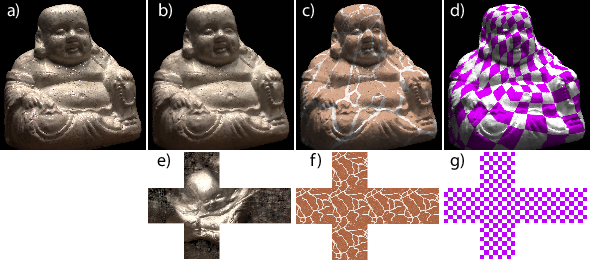

The paper "NeuTex: Neural Texture Mapping for Volumetric Neural Rendering" addresses a limitation of existing volumetric neural scene representations: geometry and appearance are entangled in a single encoding. It presents NeuTex, a method that separates geometry, represented as a 3D volume, from appearance, represented as a 2D neural texture map. The separation is achieved with a 3D-to-2D texture mapping network that is constrained by an inverse mapping network and a novel cycle consistency loss. Because appearance lives in a 2D texture, surface appearance can be edited directly on the texture map.

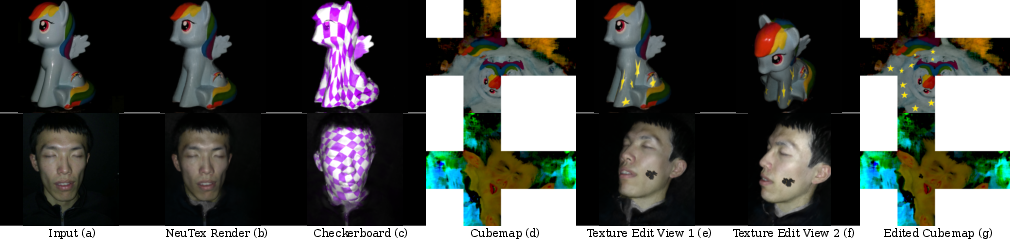

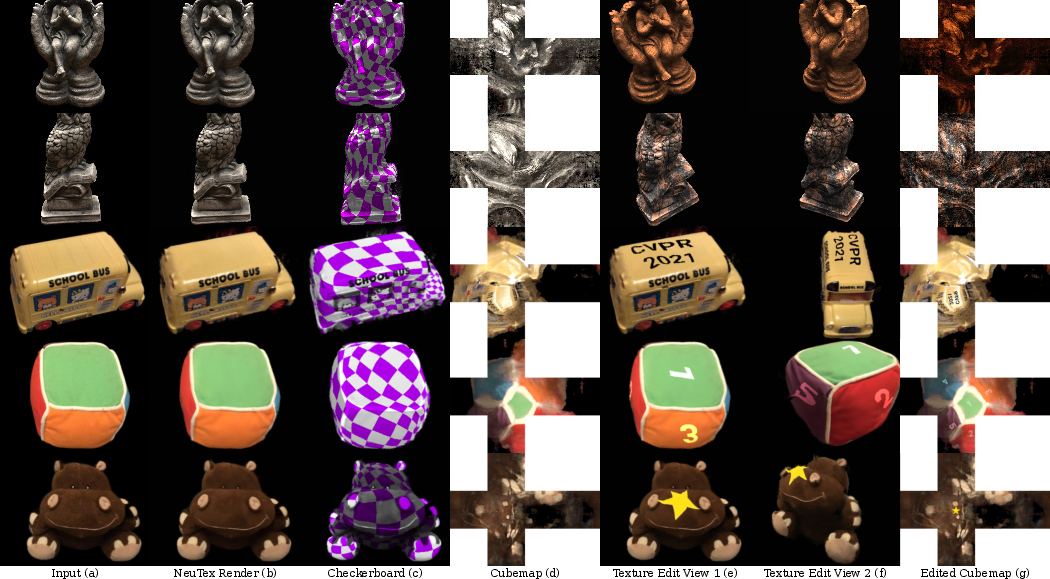

Figure 1: NeuTex is a neural scene representation that simultaneously represents appearance as a 2D texture and geometry as a 3D volume.

Previous neural rendering methods, such as NeRF and Deep Reflectance Volumes, build high-quality scene representations through differentiable volume rendering. However, they encode geometry and appearance together in a "black-box" form, so surface appearance is not readily accessible for editing. Traditional mesh-based methods, although widely used, struggle to produce realistic images without extensive additional processing. NeuTex builds on volumetric neural rendering to provide photo-realistic rendering together with texture-based appearance editing.

Neural Texture Mapping and Methodology

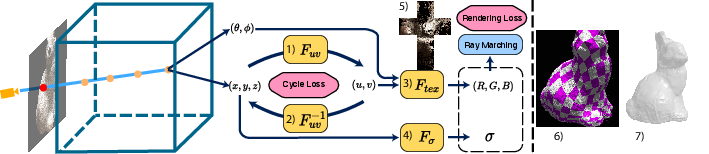

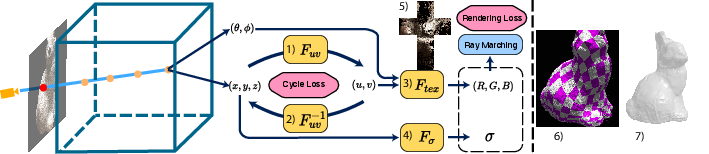

NeuTex moves the scene's appearance into a texture space: a texture mapping function maps each 3D scene point to 2D UV coordinates, and appearance is then looked up at those coordinates. The architecture consists of four networks: geometry, texture mapping, texture rendering, and inverse texture mapping. The cycle consistency loss requires that a point mapped to UV space and back through the inverse network returns close to its original 3D position, which pushes the model toward a meaningful, surface-like texture mapping that supports intuitive editing. NeuTex learns this mapping end-to-end, supervised by rendering and mask losses.
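The cycle consistency idea can be illustrated with a small sketch. It is not the paper's implementation: the closed-form spherical maps `f_uv` and `f_uv_inv` stand in for the learned mapping networks, and weighting each sample by a per-point weight (for example, its volume-rendering weight) is an assumption made here for illustration.

```python
import math

def f_uv(x):
    """Hypothetical forward map: 3D point -> (u, v) in [0, 1]^2 via spherical angles."""
    r = math.sqrt(sum(c * c for c in x)) or 1.0
    u = (math.atan2(x[1], x[0]) + math.pi) / (2.0 * math.pi)
    v = math.acos(max(-1.0, min(1.0, x[2] / r))) / math.pi
    return (u, v)

def f_uv_inv(uv):
    """Hypothetical inverse map: (u, v) -> point on the unit sphere."""
    u, v = uv
    theta = u * 2.0 * math.pi - math.pi
    phi = v * math.pi
    return (math.sin(phi) * math.cos(theta),
            math.sin(phi) * math.sin(theta),
            math.cos(phi))

def cycle_loss(points, weights):
    """Weighted squared distance between each point and its round trip x -> uv -> x'."""
    total = 0.0
    for x, w in zip(points, weights):
        x_rec = f_uv_inv(f_uv(x))
        total += w * sum((a - b) ** 2 for a, b in zip(x_rec, x))
    return total

# Points on the toy surface (unit sphere) round-trip almost exactly;
# a point off the surface does not, and is penalized in proportion to its weight.
on_surface = cycle_loss([(1.0, 0.0, 0.0), (0.0, 0.0, 1.0)], [1.0, 1.0])
off_surface = cycle_loss([(0.0, 0.0, 2.0)], [0.5])
```

In this toy setting the loss is near zero only for points that lie on the surface the inverse map can reach, which is the property that ties the UV parameterization to the scene surface.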

Figure 2: Overview of NeuTex’s disentangled structure using multiple MLPs for volumetric rendering.
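As a rough sketch of how the disentangled networks could fit together, the example below composites one ray using the standard volume-rendering quadrature. Density comes from a geometry function, and color comes from a texture function evaluated at the UV coordinates of each sample. The functions `f_geo`, `f_uv`, and `f_tex` are toy closed-form stand-ins for the learned MLPs, and all constants are assumptions chosen for illustration.

```python
import math

def f_geo(x):
    """Toy geometry function: constant density inside the unit sphere, zero outside."""
    r = math.sqrt(sum(c * c for c in x))
    return 10.0 if r <= 1.0 else 0.0

def f_uv(x):
    """Toy texture mapping: 3D point -> spherical (u, v) in [0, 1]^2."""
    r = math.sqrt(sum(c * c for c in x)) or 1.0
    u = (math.atan2(x[1], x[0]) + math.pi) / (2.0 * math.pi)
    v = math.acos(max(-1.0, min(1.0, x[2] / r))) / math.pi
    return (u, v)

def f_tex(uv, view_dir):
    """Toy texture function: color depends only on (u, v); view_dir is ignored here."""
    u, v = uv
    return (u, v, 0.5)

def render_ray(origin, direction, near=0.0, far=4.0, n_samples=64):
    """Composite color along one ray; returns (rgb, per-sample weights)."""
    delta = (far - near) / n_samples
    transmittance = 1.0
    color = [0.0, 0.0, 0.0]
    weights = []
    for i in range(n_samples):
        t = near + (i + 0.5) * delta  # midpoint of the i-th interval
        x = tuple(o + t * d for o, d in zip(origin, direction))
        alpha = 1.0 - math.exp(-f_geo(x) * delta)  # opacity of this interval
        w = transmittance * alpha
        c = f_tex(f_uv(x), direction)  # appearance is looked up in UV space
        for k in range(3):
            color[k] += w * c[k]
        weights.append(w)
        transmittance *= 1.0 - alpha
    return tuple(color), weights

rgb, w = render_ray((0.0, 0.0, -3.0), (0.0, 0.0, 1.0))
```

Because color is a function of UV coordinates rather than of the 3D position directly, swapping `f_tex` changes the appearance without touching `f_geo`. The summed weights also give a per-ray opacity, which is the kind of quantity a mask loss could supervise.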

Implementation Details

Each subcomponent is implemented as an MLP. Positional encoding is applied to the inputs of F_{geo} and F_{tex} so that these networks can represent fine, high-frequency detail, while the mapping networks receive unencoded inputs so that the learned mappings stay smooth. Training proceeds in stages: the inverse mapping network is first initialized using the point cloud produced by COLMAP, the full model is then refined with the cycle loss, and texture details are fine-tuned at the end.
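For readers unfamiliar with positional encoding, the following minimal sketch shows the NeRF-style formulation, in which each input coordinate is expanded into sines and cosines at geometrically increasing frequencies. The function name and the choice of `num_freqs` are illustrative assumptions; the summary does not state how many frequencies NeuTex uses.

```python
import math

def positional_encoding(p, num_freqs):
    """Encode each coordinate with sin/cos at frequencies 2^k * pi, k = 0..num_freqs-1."""
    out = []
    for coord in p:
        for k in range(num_freqs):
            freq = (2.0 ** k) * math.pi
            out.append(math.sin(freq * coord))
            out.append(math.cos(freq * coord))
    return out

# A 3D point with 4 frequencies becomes a 3 * 4 * 2 = 24-dimensional feature.
features = positional_encoding((0.1, 0.2, 0.3), num_freqs=4)
```

Feeding such features to an MLP makes it easier to fit sharp detail. Omitting them, as described above for the mapping networks, biases an MLP toward smooth outputs.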

Experimental Results

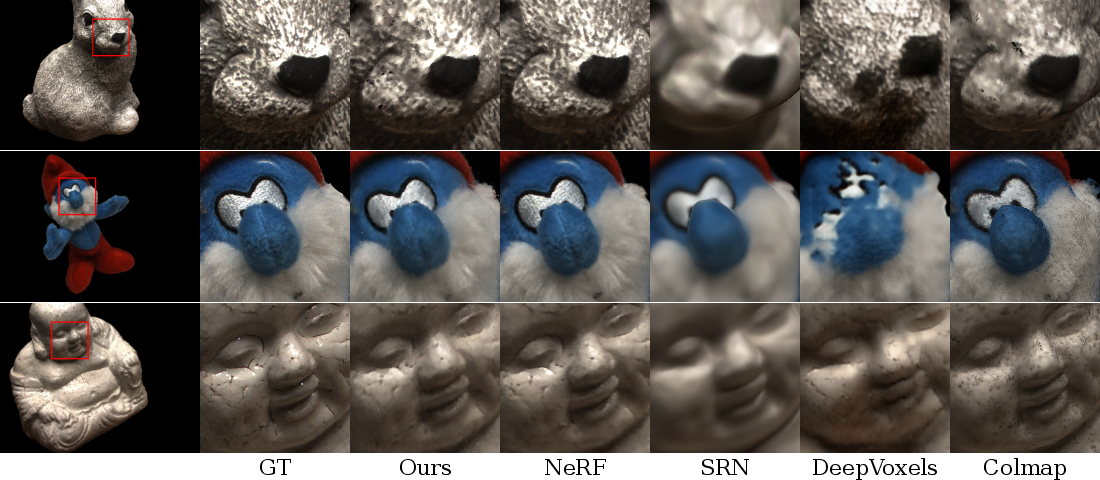

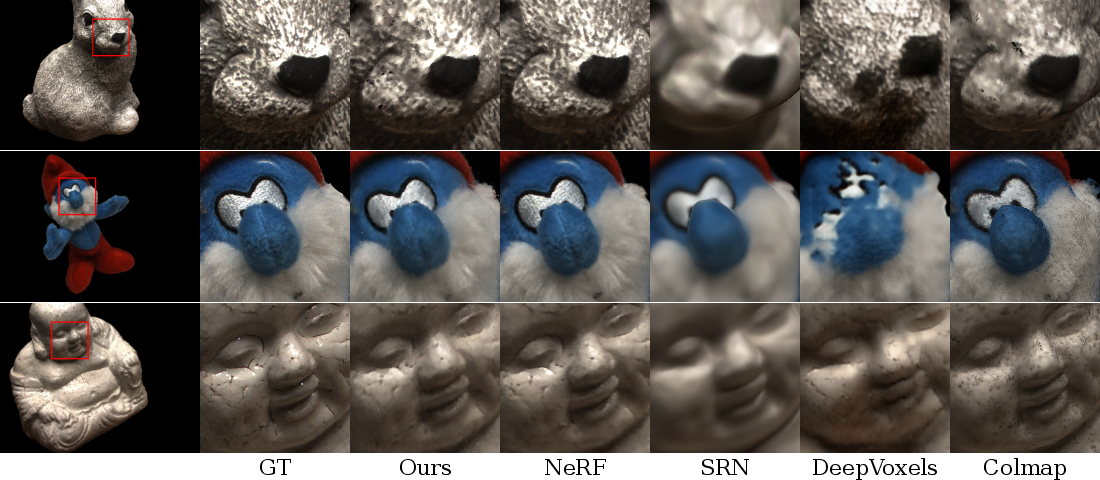

NeuTex's view synthesis was evaluated on DTU scenes. Its rendering quality is on par with NeRF, and it renders more realistically than earlier neural rendering methods while also supporting texture editing. NeuTex recovers a meaningful texture mapping, which is difficult for a network based on a single MLP, and supports surface appearance edits through direct manipulation of the texture.

Figure 3: Comparison of methods on DTU scenes, showcasing NeuTex's editing capability while maintaining visual fidelity.

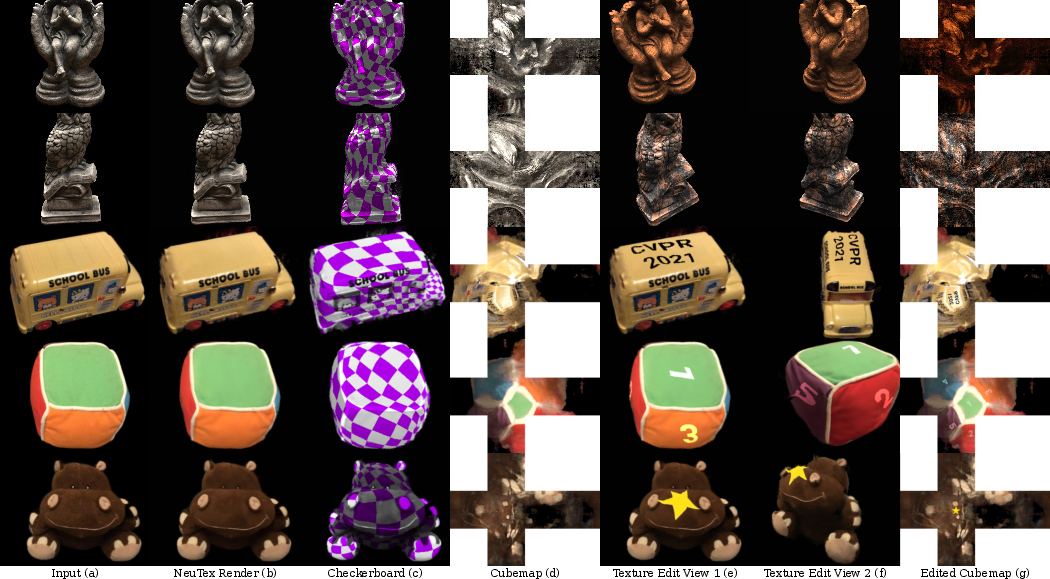

Texture Mapping and Appearance Editing

Texture mapping gives NeuTex direct control over scene appearance. Appearance that is otherwise locked inside a volumetric encoding can be edited on the surface texture instead. The paper demonstrates this by changing an object's material appearance and by adding patterns and logos, with the edited scenes still rendering realistically.
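The editing workflow can be pictured with a simplified sketch. It assumes the texture has been discretized into a small RGB grid, which is an assumption made here for illustration since NeuTex represents its texture with a network. The helper names `make_texture`, `paste_patch`, and `sample_nearest` are hypothetical.

```python
def make_texture(height, width, color):
    """Create a height x width texture filled with one RGB color."""
    return [[color for _ in range(width)] for _ in range(height)]

def paste_patch(texture, patch, top, left, opacity=1.0):
    """Return a copy of the texture with the patch alpha-blended in at (top, left)."""
    edited = [row[:] for row in texture]
    for i, patch_row in enumerate(patch):
        for j, new in enumerate(patch_row):
            r, c = top + i, left + j
            if 0 <= r < len(edited) and 0 <= c < len(edited[0]):
                old = edited[r][c]
                edited[r][c] = tuple(
                    (1.0 - opacity) * o + opacity * n for o, n in zip(old, new)
                )
    return edited

def sample_nearest(texture, uv):
    """Look up the texel nearest to (u, v), with u and v in [0, 1]."""
    u, v = uv
    h, w = len(texture), len(texture[0])
    col = min(w - 1, max(0, int(u * w)))
    row = min(h - 1, max(0, int(v * h)))
    return texture[row][col]

base = make_texture(8, 8, (1.0, 1.0, 1.0))              # plain white texture
logo = [[(1.0, 0.0, 0.0)] * 2 for _ in range(2)]        # 2 x 2 red patch
edited = paste_patch(base, logo, top=2, left=4)
```

Any 3D point whose UV coordinates fall inside the pasted region now picks up the new color at render time, while the geometry is untouched.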

Figure 4: Examples of texture editing applied to various objects, demonstrating NeuTex's broad appearance manipulation capabilities.

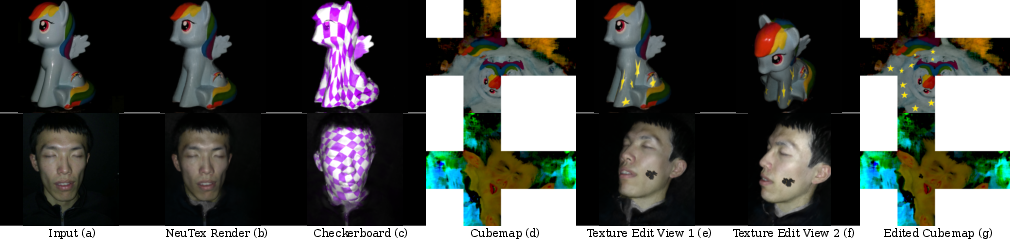

Reflectance Fields Extension

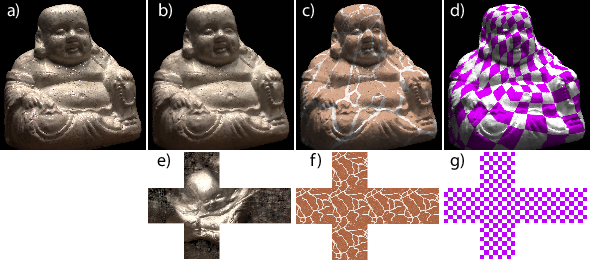

NeuTex can also be combined with reflectance field frameworks, which extends it beyond editing fixed appearance. The paper validates this with Neural Reflectance Fields: NeuTex preserved surface details while allowing BRDF edits in the learned texture space. This makes it possible to adjust reflectance properties, not only color, in neural rendering tasks.

Figure 5: NeuTex applied to reflectance fields, showing significant flexibility in editing albedo properties.

Conclusion

NeuTex advances volumetric rendering by separating geometry from appearance and placing appearance in a 2D texture space. This addresses a practical limitation of current neural rendering methods, namely that appearance is hard to edit, while keeping rendering quality high. The result is a representation that delivers high-fidelity images and fits more naturally into texture-based 3D design workflows.