- The paper's main contribution is FedAMP, an attentive message-passing mechanism that personalizes models for non-IID data.

- Clients collaborate adaptively. Clients whose models are more similar collaborate more strongly, which mitigates the effect of data heterogeneity.

- Empirical results on FMNIST, EMNIST, and CIFAR100 show improved performance over global federated learning methods and over existing personalized approaches.

Personalized Cross-Silo Federated Learning on Non-IID Data: A Summary

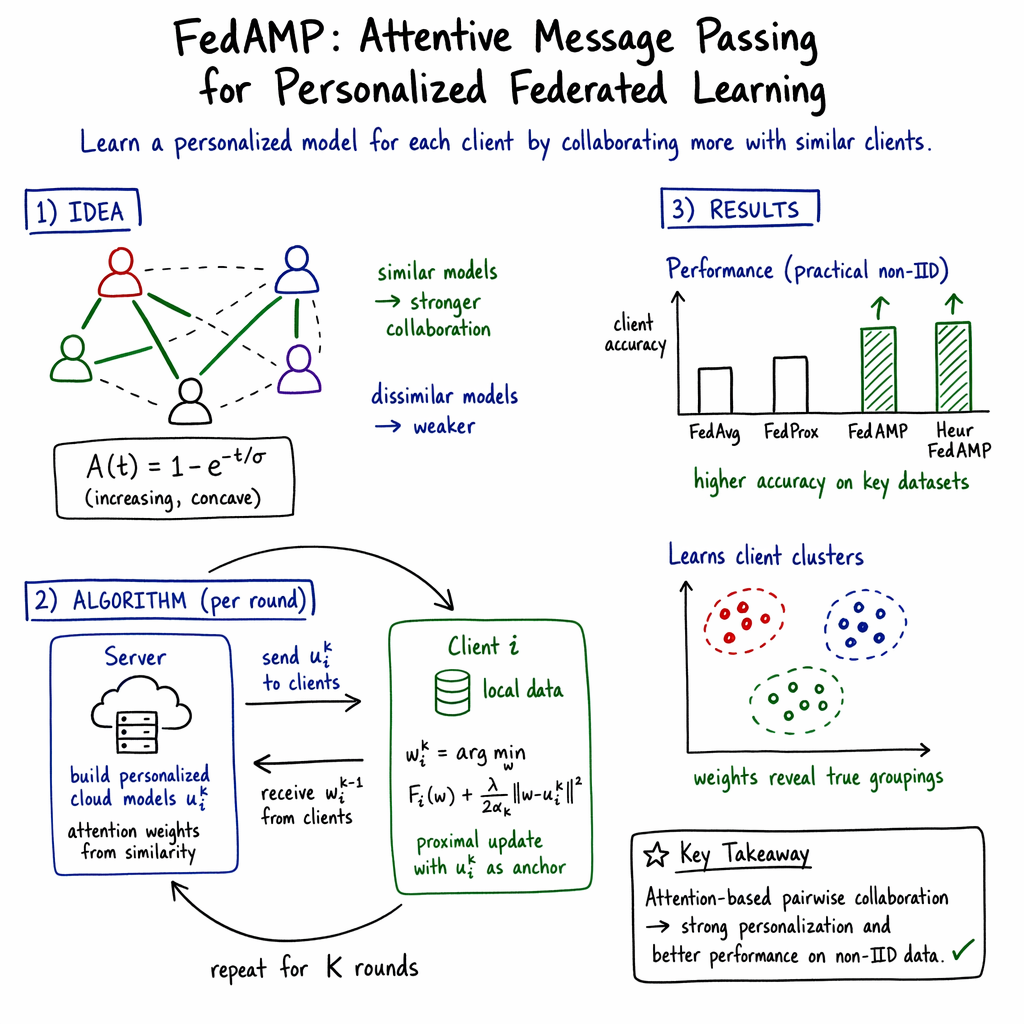

This paper addresses the challenge of personalized federated learning on non-IID data and proposes a method named Federated Attentive Message Passing (FedAMP). The core difficulty in this setting is that data distributions differ across clients, so no single model performs adequately for all participants. FedAMP adaptively weights pairwise collaboration between clients, so that clients with similar data distributions collaborate more closely. This improves learning performance while raw data never leave each client.

Main Contributions

The paper's key contribution is FedAMP, an attentive message-passing approach. Each client keeps its own personalized model, and collaboration is concentrated among clients with similar data. Traditional federated learning trains one global model on all clients' data. When data are non-IID, that single model can fit no client well, which makes it inefficient or even ineffective. FedAMP does not rely on a single shared model, and so it avoids this pitfall.
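One compact way to read "a personalized model per client instead of one global model" is as a single joint objective over all client models. The notation below is ours. The exponential choice of $A$ is an illustrative assumption. Check both against the paper before relying on them. $F_i$ is client $i$'s local empirical loss, $\lambda > 0$ sets the strength of collaboration, and $A$ is an increasing, concave attention-inducing function with $A(0) = 0$:

```latex
\min_{W = (w_1,\dots,w_m)} \; \mathcal{G}(W)
  = \sum_{i=1}^{m} F_i(w_i)
  + \lambda \sum_{1 \le i < j \le m} A\!\left(\lVert w_i - w_j \rVert^2\right),
\qquad \text{e.g. } A(x) = 1 - e^{-x/\sigma}.
```

Because $A$ is concave, its slope $A'$ is largest near zero. The penalty therefore pulls clients with nearby models together strongly and barely affects clients whose models are far apart. This is how the objective lets similar clients share information without forcing dissimilar clients into one model.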

The mechanism works by iteratively exchanging model parameters through the server. In each round, the server combines all clients' models into a personalized aggregate for each client. It weights each pair of clients with an attention-inducing function of the similarity between their models. Each client then refines its own model on local data while staying close to its aggregate. The paper proves that this scheme converges for both convex and non-convex objectives. The non-convex result covers the deep neural network setting.
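The round described above can be sketched in NumPy under explicit assumptions. We assume $A(x) = 1 - e^{-x/\sigma}$ and the weights `xi[i, j] = alpha * A'(||w_i - w_j||^2)` for `j != i`, with the self-weight `xi[i, i]` taking whatever is left so that each row sums to 1. Any constant factor from differentiating the squared distance is folded into `alpha`. The client step approximately minimizes the local loss plus a proximal term `lam / (2 * alpha) * ||w - u_i||^2`. All function names and the toy quadratic losses are hypothetical. This is our reading of the scheme, not the authors' code.

```python
import numpy as np


def attention_derivative(sq_dist, sigma):
    """A'(x) for the ASSUMED attention-inducing function A(x) = 1 - exp(-x / sigma)."""
    return np.exp(-sq_dist / sigma) / sigma


def server_message_passing(W, alpha, sigma):
    """Hypothetical server step: one personalized aggregate u_i per client.

    Rows of W are flattened client models. xi[i, j] = alpha * A'(||w_i - w_j||^2)
    for j != i and xi[i, i] = 1 - sum_{j != i} xi[i, j], so each u_i is a convex
    combination of all client models as long as alpha is small enough.
    """
    diffs = W[:, None, :] - W[None, :, :]   # (m, m, d) pairwise differences
    sq_dist = np.sum(diffs ** 2, axis=-1)   # (m, m) squared Euclidean distances
    xi = alpha * attention_derivative(sq_dist, sigma)
    np.fill_diagonal(xi, 0.0)               # keep only off-diagonal weights for now
    self_weight = 1.0 - xi.sum(axis=1)
    if np.any(self_weight < 0):
        raise ValueError("alpha too large: a self-weight would be negative")
    np.fill_diagonal(xi, self_weight)       # each row now sums to 1
    return xi @ W, xi


def client_update(u_i, local_grad, lam, alpha, lr=0.1, steps=50):
    """Hypothetical client step: gradient descent on F_i(w) + lam / (2 * alpha) * ||w - u_i||^2, starting at u_i."""
    w = u_i.copy()
    for _ in range(steps):
        w = w - lr * (local_grad(w) + (lam / alpha) * (w - u_i))
    return w


# Toy run: clients 0 and 1 hold similar models, client 2 is far away.
W = np.array([[0.0, 0.0], [0.1, 0.0], [5.0, 5.0]])
U, xi = server_message_passing(W, alpha=0.5, sigma=1.0)
# Hypothetical local losses F_i(w) = 0.5 * ||w - W[i]||^2, so grad F_i(w) = w - W[i].
new_W = np.array([
    client_update(U[i], lambda w, c=W[i]: w - c, lam=0.5, alpha=0.5)
    for i in range(len(W))
])
```

In the toy run, client 0 places roughly half its weight on client 1 and essentially none on the distant client 2. Client 2's aggregate is effectively its own model. Computing all pairwise distances costs O(m² d) per round, which is manageable for the relatively small number of participants typical of cross-silo settings.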

Results and Implications

In the paper's experiments on FMNIST, EMNIST, and CIFAR100, FedAMP outperforms existing personalized federated learning methods. This reflects its ability to adjust collaborations dynamically based on model-parameter similarity, which improves each client's model without pooling raw data.

The paper also introduces a heuristic variant, HeurFedAMP, aimed at deep neural networks. Instead of Euclidean distance, it uses cosine similarity between model parameters to compute the collaboration weights. This improves performance in the high-dimensional parameter spaces typical of deep learning models.
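A cosine-similarity weighting of this kind can be sketched as follows, under our assumptions. Each client keeps a fixed self-weight `self_weight` (a hyperparameter). The remaining `1 - self_weight` is split over the other clients by a softmax of `sigma * cos(w_i, w_j)`. The function name and parameter names are hypothetical. We believe this matches the spirit of HeurFedAMP, but the exact formula should be checked against the paper.

```python
import numpy as np


def heur_weights(W, self_weight, sigma):
    """Hypothetical cosine-similarity collaboration weights (our reading of HeurFedAMP).

    Rows of W are flattened client models; assumes at least two clients.
    Row i: xi[i, i] = self_weight, and 1 - self_weight is split over j != i
    by a softmax of sigma * cos(w_i, w_j).
    """
    norms = np.linalg.norm(W, axis=1, keepdims=True)
    if np.any(norms == 0):
        raise ValueError("cosine similarity is undefined for an all-zero model")
    unit = W / norms
    logits = sigma * (unit @ unit.T)              # (m, m) scaled cosine similarities
    np.fill_diagonal(logits, -np.inf)             # a client does not compete with itself
    logits -= logits.max(axis=1, keepdims=True)   # stabilize exp; diagonal stays -inf
    expd = np.exp(logits)                         # exp(-inf) == 0 on the diagonal
    xi = (1.0 - self_weight) * expd / expd.sum(axis=1, keepdims=True)
    np.fill_diagonal(xi, self_weight)
    return xi


# Clients 0 and 1 point in nearly the same direction; client 2 points the opposite way.
W = np.array([[1.0, 0.0], [0.9, 0.1], [-1.0, 0.0]])
xi = heur_weights(W, self_weight=0.5, sigma=5.0)
U = xi @ W  # personalized aggregates, one row per client
```

Cosine similarity depends only on the direction of the parameter vectors, not their magnitude. A plausible motivation for this choice is that raw Euclidean distances between very large parameter vectors are hard to calibrate with a single `sigma`.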

Broader Impact and Future Directions

This research has implications for both the theory and the practice of federated learning. FedAMP manages collaboration in non-IID settings, so it offers a practical approach for domains ranging from mobile networks to healthcare systems, where data privacy and heterogeneity are paramount concerns. Because the server weighs every pair of clients, its cost grows quadratically with the number of clients. This suits the cross-silo regime of relatively few participants best.

Looking forward, adapting FedAMP to other model architectures and combining it with other privacy-preserving techniques could open new avenues in federated and distributed learning. Additional benchmarks and real-world deployments would help validate and refine the framework. They would also clarify how broadly it applies to collaborative learning without central data aggregation.