- The paper shows that a ReLU layer is injective if and only if its weight matrix satisfies the Directed Spanning Set (DSS) condition for every input direction.

- It establishes that an expansivity factor of 2 (twice as many outputs as inputs) is the minimum needed for a ReLU layer to be injective and that suitably constructed weights achieve it, while i.i.d. Gaussian weights require a larger factor (greater than 3.4).

- The study connects theoretical injectivity conditions to improved generative model inference and universal function approximation in multilayer architectures.

Globally Injective ReLU Networks

The paper "Globally Injective ReLU Networks" studies when ReLU neural networks, both fully-connected and convolutional, are injective. Injectivity is a crucial property in applications such as generative models and inverse problems, where an output must be mapped back to a unique input. The work gives conditions under which individual layers are injective, establishes lower bounds on expansivity (the ratio of output to input dimension), and connects these findings to practical improvements in generative model inference.

Layerwise Injectivity

Directed Spanning Set

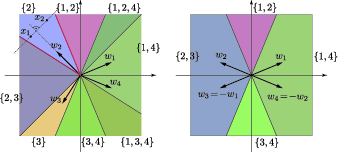

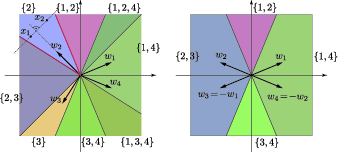

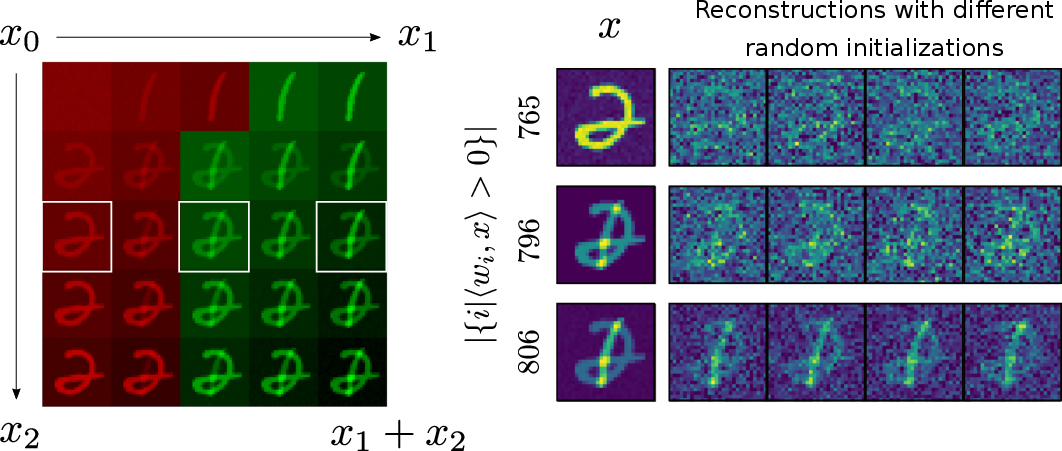

The paper introduces the Directed Spanning Set (DSS) to characterize when a ReLU layer is injective. For a given direction x in the input space, the rows of the weight matrix that have a non-negative inner product with x are the ones that remain active under the ReLU. The weight matrix has a DSS with respect to x if those rows span the input space. A ReLU layer is injective exactly when this holds for every input direction.
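The DSS condition can be probed numerically. The following is a minimal sketch, not the paper's procedure: the helper names `violates_dss` and `find_dss_violation` are hypothetical, and the search only samples random directions, so it can refute injectivity but cannot certify it.

```python
import numpy as np

def violates_dss(W, x):
    """True if the rows w_i of W with <w_i, x> >= 0 fail to span R^n."""
    n = W.shape[1]
    active = W[W @ x >= 0]              # rows that stay active in direction x
    if active.shape[0] < n:             # too few rows to span R^n
        return True
    return np.linalg.matrix_rank(active) < n

def find_dss_violation(W, num_directions=2000, seed=0):
    """Sample directions x (and -x); return one that violates DSS, else None."""
    rng = np.random.default_rng(seed)
    n = W.shape[1]
    for _ in range(num_directions):
        x = rng.standard_normal(n)
        for direction in (x, -x):
            if violates_dss(W, direction):
                return direction
    return None

# ReLU(x) with identity weights is not injective: every input with all
# negative coordinates maps to zero, and the search finds such a direction.
W_identity = np.eye(2)
bad_direction = find_dss_violation(W_identity)

# Stacking the identity and its negation keeps one of +e_i, -e_i active
# for every coordinate, so no sampled direction violates the condition.
W_symmetric = np.vstack([np.eye(2), -np.eye(2)])
no_violation = find_dss_violation(W_symmetric)
```

Here `bad_direction` is a concrete witness of non-injectivity, while `no_violation` being `None` only means that none of the sampled directions failed the check.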

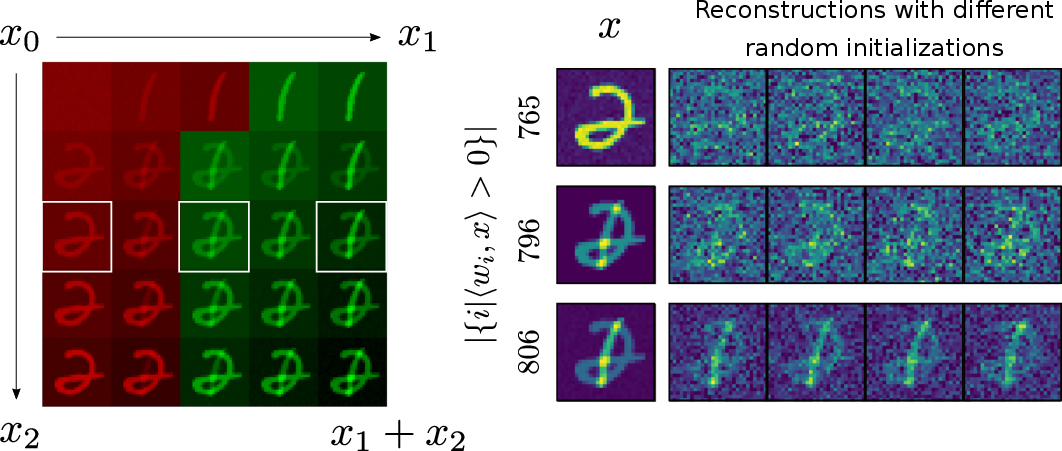

Figure 1: Illustration of a non-injective configuration caused by the absence of a DSS.

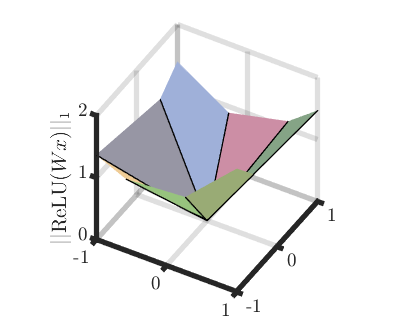

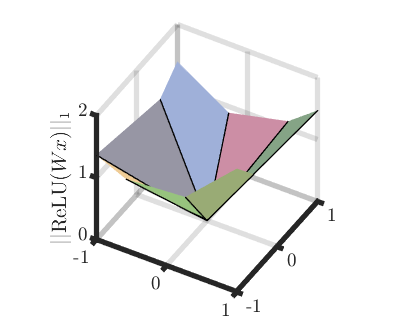

Minimal Expansivity for Injective Layers

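A key result is that an expansivity factor of 2, meaning an output dimension at least twice the input dimension, is necessary for a ReLU layer to be injective. It is also sufficient in the sense that suitably constructed weight matrices achieve injectivity at exactly this factor. This gives a practical lower bound for designing injective architectures. Layers with i.i.d. Gaussian weights need more expansion, as discussed under Empirical Validation.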
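One standard way to see that a factor of 2 can suffice is to stack an invertible matrix B on top of its negation. The sketch below assumes this construction (the specific matrix `B` and the helper names are illustrative, not taken from the paper) and uses the identity relu(z) - relu(-z) = z to recover the input exactly.

```python
import numpy as np

def relu(z):
    return np.maximum(z, 0.0)

n = 3
# Illustrative invertible matrix (determinant 7); any invertible B works.
B = np.array([[2.0, 1.0, 0.0],
              [0.0, 1.0, 1.0],
              [1.0, 0.0, 3.0]])
W = np.vstack([B, -B])                  # shape (2n, n): expansivity exactly 2

def layer(x):
    return relu(W @ x)

def invert_layer(y):
    # y[:n] = relu(Bx) and y[n:] = relu(-Bx), so their difference is Bx.
    return np.linalg.solve(B, y[:n] - y[n:])

x = np.array([0.5, -1.25, 2.0])
x_recovered = invert_layer(layer(x))
```

Because every input can be reconstructed from its output, the layer is injective even though half of its pre-activations are zeroed out for any given input.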

Figure 2: Visualization of weight matrix mappings in an injective vs. non-injective scenario.

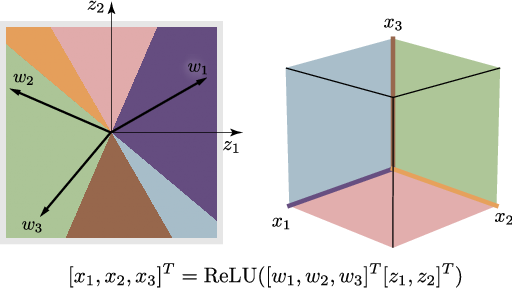

Multilayer Networks and Universal Function Approximation

Injectivity Across Layers

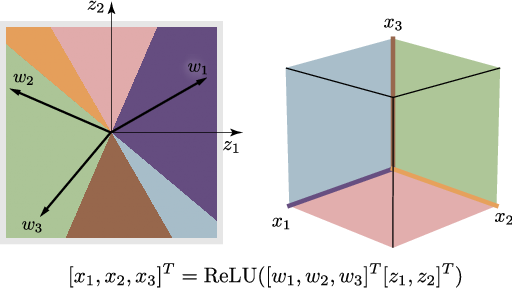

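Because a composition of injective maps is injective, a network whose layers are all injective is injective end to end. The converse does not hold: a network can be injective even when some individual layers are not, since each layer only needs to be injective on the range of the layers before it. The paper also proves a universal approximation theorem for injective networks: any continuous function can be approximated by an injective ReLU network, provided the output dimension is about twice the input dimension. Together these results clarify how injectivity can be obtained and preserved across layers.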
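A toy example helps separate layerwise from end-to-end injectivity. In the sketch below (an illustrative construction, not one from the paper), the second layer is not injective on all of R^2, yet the two-layer network is injective because the first layer only ever outputs non-negative vectors, on which the second layer acts as the identity.

```python
import numpy as np

def relu(z):
    return np.maximum(z, 0.0)

W1 = np.array([[1.0], [-1.0]])   # R^1 -> R^2, injective: x -> (max(x,0), max(-x,0))
W2 = np.eye(2)                   # R^2 -> R^2, not injective on all of R^2

def network(x):
    return relu(W2 @ relu(W1 @ x))

# Layer 2 on its own collapses inputs that differ only in a negative coordinate.
a = np.array([-1.0, 2.0])
b = np.array([-5.0, 2.0])
layer2_collides = np.array_equal(relu(W2 @ a), relu(W2 @ b))

# End to end, the input is still recoverable: x = y[0] - y[1].
def invert_network(y):
    return y[:1] - y[1:]

xs = np.linspace(-3.0, 3.0, 13)
recovered = np.array([invert_network(network(np.array([x])))[0] for x in xs])
```

The collision in `layer2_collides` never matters for the network, because vectors with a negative coordinate are outside the range of the first layer.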

Practical Implementation: Generative Inference

In practice, making a generative model injective makes it easier to invert, for example when recovering the latent code behind an output of a generative adversarial network (GAN). The study shows that imposing injectivity constraints can improve inference without sacrificing expressivity.

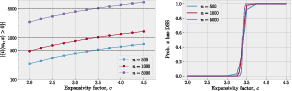

Empirical Validation

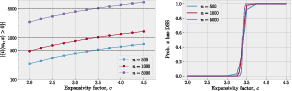

For layers with i.i.d. Gaussian weights, an expansivity factor of 2 is not enough: the paper finds that injectivity requires an expansivity greater than 3.4. Together with the layerwise results, this gives a sharp characterization of layerwise and end-to-end injectivity and challenges previous work that suggested lower factors would guarantee injectivity. The numerical experiments confirm the theoretical predictions and also highlight the benefits for generative model inference.

Figure 3: Empirical probability of injectivity as a function of expansivity for various dimensions.
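A rough way to explore how expansivity affects Gaussian layers is a Monte Carlo check of the spanning condition on sampled directions. The sketch below is a heuristic under stated assumptions, not the paper's experimental protocol: `passes_sampled_dss` and `estimate_pass_rate` are hypothetical helpers, and because random directions can miss a violation, the reported rate can only overestimate the true probability of injectivity.

```python
import numpy as np

def passes_sampled_dss(W, directions):
    """True if, for every sampled x and -x, the rows of W with
    non-negative inner product span R^n (no violation detected)."""
    n = W.shape[1]
    for x in directions:
        for d in (x, -x):
            active = W[W @ d >= 0]
            if active.shape[0] < n or np.linalg.matrix_rank(active) < n:
                return False
    return True

def estimate_pass_rate(n, ratio, trials=20, num_directions=100, seed=0):
    """Fraction of Gaussian (m x n) matrices, m = ratio * n, with no
    detected violation. An upper-bound heuristic, not a certificate."""
    rng = np.random.default_rng(seed)
    m = int(round(ratio * n))
    directions = rng.standard_normal((num_directions, n))
    passed = 0
    for _ in range(trials):
        W = rng.standard_normal((m, n))
        passed += passes_sampled_dss(W, directions)
    return passed / trials

# Expansivity ratios to compare for input dimension n = 4.
rates = {ratio: estimate_pass_rate(n=4, ratio=ratio)
         for ratio in (1.5, 3.0, 6.0, 20.0)}
```

Below a factor of 2 the check always fails, since the active rows for x and for -x split fewer than 2n rows between them and one side cannot span the input space. At intermediate ratios the rate depends on the dimension and on how many directions are sampled, so it should be read as a qualitative indicator only.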

Conclusion

Overall, the paper provides a comprehensive theoretical treatment of injectivity in ReLU networks, proposing practical conditions for ensuring injectivity and illustrating the role of expansivity and layer design. This lays the groundwork for more robust applications in generative modeling and inverse problems where injectivity is critical for mapping back from the range to the domain.

Figure 4: Mapping illustration demonstrating ReLU layer injectivity on latent spaces.