- The paper shows that log-domain smoothing acts as geometry-adaptive regularization, keeping generated samples close to the data manifold.

- It derives theoretical bounds in terms of the kernel's variance normal to the manifold, the manifold's reach, and the intrinsic dimension, showing when log-domain smoothing remains geometry-adaptive.

- Empirical results confirm that geometry-adaptive smoothing outperforms traditional KDE in preserving manifold structure in high-dimensional settings.

Geometry-Adaptive Log-Domain Smoothing in Diffusion Models

This paper investigates how diffusion models generalize, focusing on the manifold hypothesis and the role of log-domain smoothing via score matching. The authors provide theoretical and empirical evidence that smoothing the score function (equivalently, smoothing in the log-density domain) induces geometry-adaptive regularization, which promotes sample generation along data manifolds. The work further shows that the choice and structure of the smoothing kernel control the geometry of the interpolating manifold, offering a principled way to bias generative models toward desired geometric structures.

Theoretical Foundations: Score Matching and Log-Domain Smoothing

Diffusion models generate samples by reversing a stochastic noising process, which requires an accurate approximation of the score function $\nabla \log p_t(x)$. In practice, score matching is performed on the empirical data distribution, so its exact minimizer reproduces the training data. The empirical success of these models nonetheless suggests that implicit regularization, in particular smoothing of the score function, enables generalization beyond memorization.
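To make the memorization point concrete, here is a minimal sketch of the exact empirical score. It assumes a variance-exploding noising process $x_t = x_0 + \sigma \varepsilon$ (an assumption made for illustration, not a detail taken from the paper), so that the noised empirical distribution is a mixture of Gaussians centred on the training points. The function name `empirical_score` and the toy data are hypothetical.

```python
import numpy as np

def empirical_score(x, data, sigma):
    """Score of the mixture (1/N) sum_i N(.; x_i, sigma^2 I) at one point x.

    Assumes variance-exploding noise; this is an illustrative choice.
    """
    diffs = data - x                                   # (N, D): x_i - x
    logits = -np.sum(diffs**2, axis=1) / (2.0 * sigma**2)
    logits -= logits.max()                             # stabilise the softmax
    w = np.exp(logits)
    w /= w.sum()                                       # posterior weights over training points
    return (w @ diffs) / sigma**2

# Three toy training points on a line in R^2 (hypothetical data)
data = np.array([[0.0, 0.0], [1.0, 0.0], [2.0, 0.0]])
x = np.array([0.9, 0.3])
sigma = 0.05

# One denoising step: x + sigma^2 * score is the posterior mean of the clean point
denoised = x + sigma**2 * empirical_score(x, data, sigma)
print(denoised)
```

At this small noise level the posterior weights collapse onto the nearest training point, so `denoised` lands on `(1, 0)`: the unregularized score only ever returns training data.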

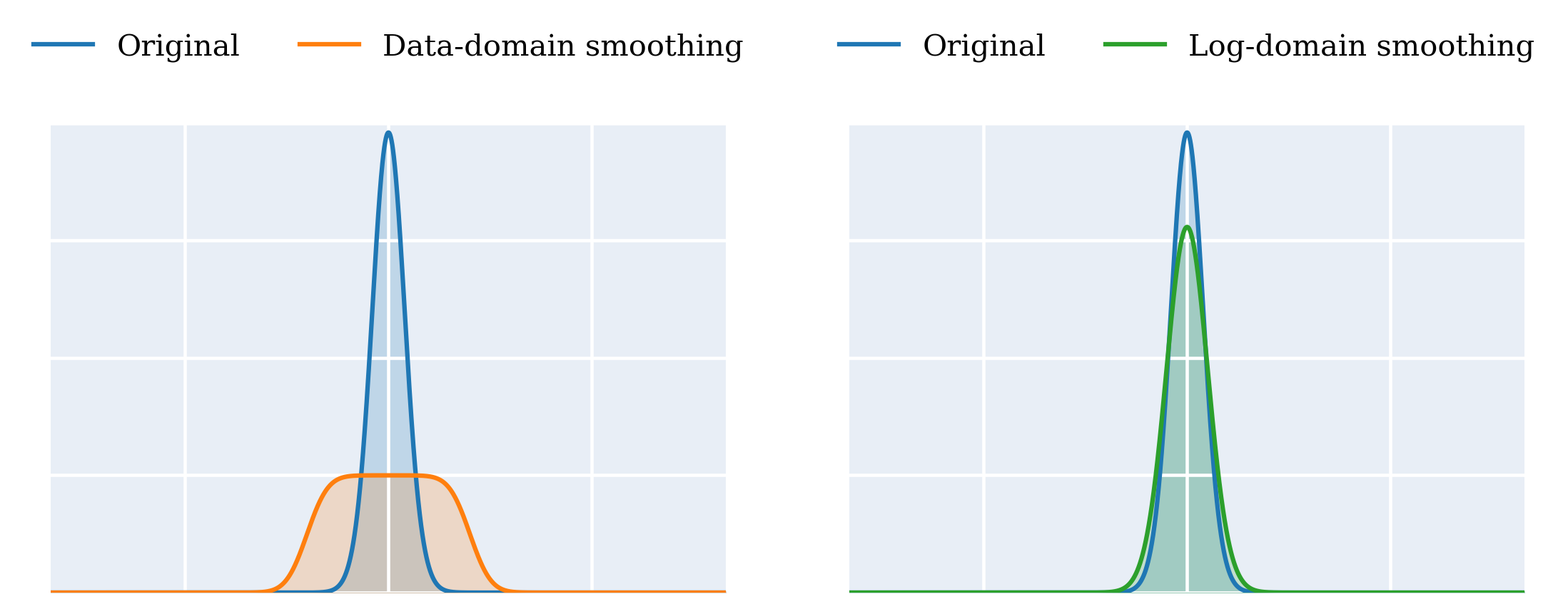

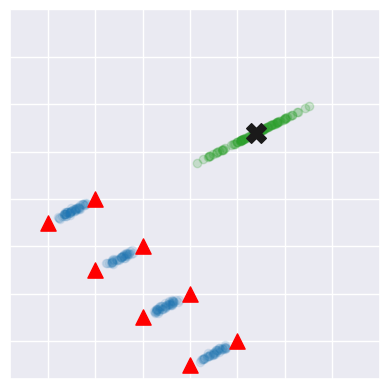

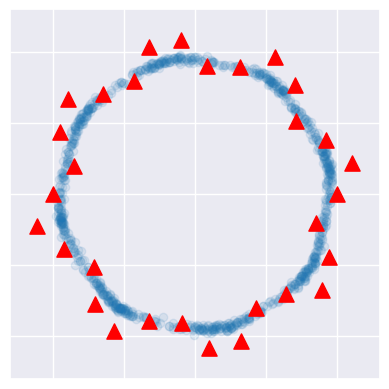

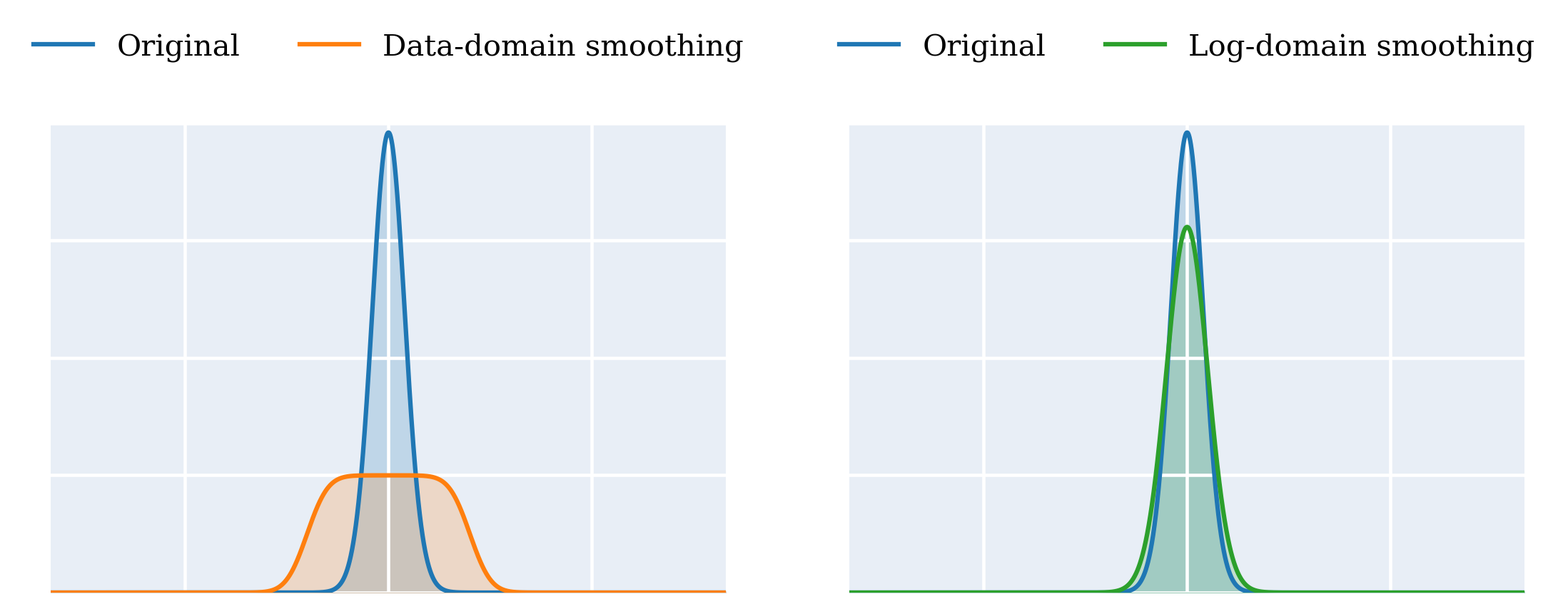

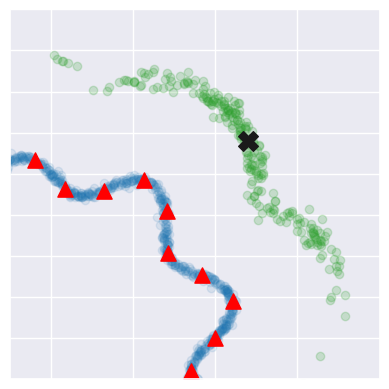

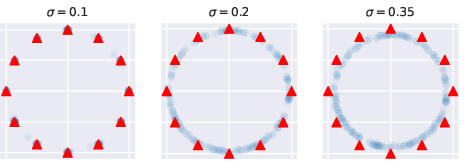

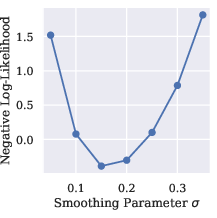

The authors formalize score smoothing as convolution of the empirical score function with a kernel $k$, yielding $s_k(t,x) = \int \nabla \log \hat{p}_t(y)\, k_x(dy)$. Under mild locality assumptions, this is equivalent to smoothing the log-density: $s_k(t,x) = \nabla (k * \log \hat{p}_t)(x)$. This log-domain smoothing is shown to be fundamentally geometry-adaptive, in contrast to classical density-domain smoothing (e.g., KDE), which disperses probability mass off the data manifold.
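The score-smoothing integral can be approximated by Monte Carlo. The sketch below is an illustration under stated assumptions, not the authors' implementation: it assumes variance-exploding Gaussian noise, a translation-invariant isotropic Gaussian kernel, and training points on the line $\{y = 0\}$ in $\mathbb{R}^2$. The names `empirical_score` and `smoothed_score` are hypothetical.

```python
import numpy as np

def empirical_score(xs, data, sigma):
    """Scores of (1/N) sum_i N(.; x_i, sigma^2 I) at each row of xs."""
    diffs = data[None, :, :] - xs[:, None, :]          # (M, N, D)
    logits = -np.sum(diffs**2, axis=2) / (2.0 * sigma**2)
    logits -= logits.max(axis=1, keepdims=True)        # stabilise the softmax
    w = np.exp(logits)
    w /= w.sum(axis=1, keepdims=True)
    return np.einsum("mn,mnd->md", w, diffs) / sigma**2

def smoothed_score(x, data, sigma, kernel_std, n_samples=2000, seed=0):
    """Monte Carlo estimate of the kernel-averaged empirical score at x."""
    rng = np.random.default_rng(seed)
    eps = rng.normal(scale=kernel_std, size=(n_samples, x.shape[0]))
    eps = np.concatenate([eps, -eps])                  # antithetic pairs: sample mean of eps is zero
    return empirical_score(x + eps, data, sigma).mean(axis=0)

# Training points on the line {y = 0} in R^2 (hypothetical data)
data = np.stack([np.linspace(-1.0, 1.0, 5), np.zeros(5)], axis=1)
x = np.array([0.1, 0.2])
sigma = 0.1

raw = empirical_score(x[None, :], data, sigma)[0]
smooth = smoothed_score(x, data, sigma, kernel_std=0.3)
print("raw score:     ", raw)
print("smoothed score:", smooth)
```

In this toy setting the second (normal) component of the smoothed score matches the raw one, so the pull back toward the line is untouched. The first (tangential) component, which otherwise drags samples toward the nearest training point, is largely averaged away.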

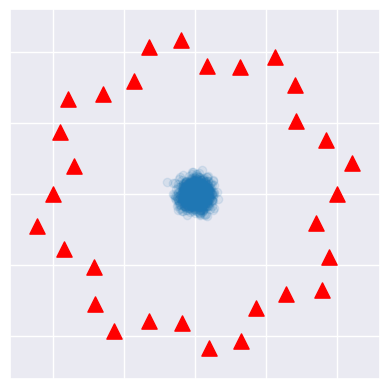

Figure 1: Density smoothing generates samples off-manifold, whereas score smoothing generates samples that retain manifold structure.

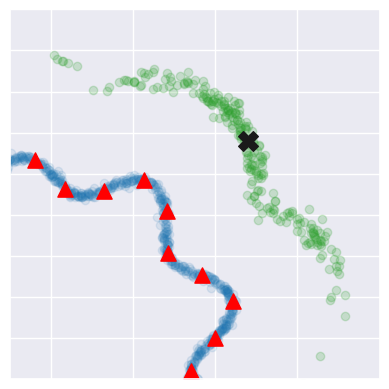

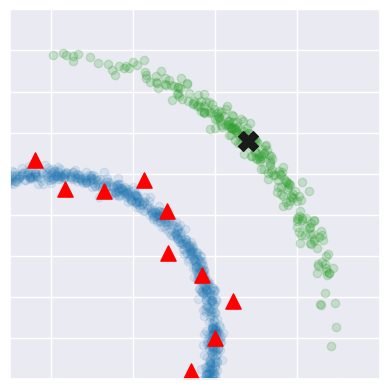

Geometry-Adaptivity: Linear and Curved Manifolds

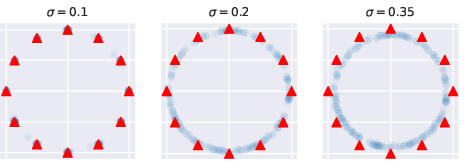

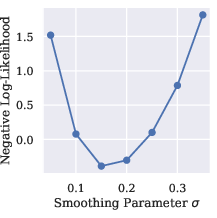

The paper analyzes log-domain smoothing in both linear and curved manifold settings. For data supported on an affine subspace, log-domain smoothing with a generic kernel is shown to be exactly equivalent to smoothing with a geometry-adapted kernel that spreads mass only tangentially to the manifold. For curved manifolds, the authors use the reach $\tau$ to control curvature and prove that log-domain smoothing approximates manifold-adapted smoothing, with the closeness quantified by Rényi divergence. The bounds depend on the kernel's variance normal to the manifold ($K$), the reach ($\tau$), and the intrinsic dimension ($d^*$), and establish that geometry-adaptivity is achieved when $K$ is small relative to $\tau$.
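The affine case can be checked by hand. This sketch is not the paper's proof and rests on three assumptions: isotropic Gaussian noise of variance $\sigma_t^2$, a translation-invariant kernel $k$ with zero mean and finite second moment, and coordinates $x = (u, v)$ in which $u$ is tangential and $v$ is normal to the subspace, so every training point has the form $(u_i, 0)$. The log-density then separates:

$$
\log \hat{p}_t(u, v) = -\frac{\|v\|^2}{2\sigma_t^2} + \log \sum_{i=1}^{N} \exp\!\left(-\frac{\|u - u_i\|^2}{2\sigma_t^2}\right) + \text{const}.
$$

Averaging over a kernel perturbation $(\varepsilon_u, \varepsilon_v) \sim k$ gives, for the normal term,

$$
\mathbb{E}\left[-\frac{\|v + \varepsilon_v\|^2}{2\sigma_t^2}\right] = -\frac{\|v\|^2}{2\sigma_t^2} - \frac{\mathbb{E}\|\varepsilon_v\|^2}{2\sigma_t^2},
$$

because the cross term $v \cdot \mathbb{E}[\varepsilon_v]$ vanishes. The second term does not depend on $x$, so the normal component of the smoothed score is still $-v / \sigma_t^2$. Only the tangential term is changed, and it is smoothed by the $u$-marginal of $k$. Under these assumptions, the generic kernel therefore acts like one that spreads mass only along the subspace.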

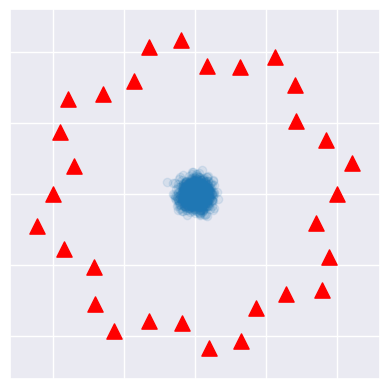

Figure 2: The choice of smoothing kernel influences the manifold on which generated samples lie.

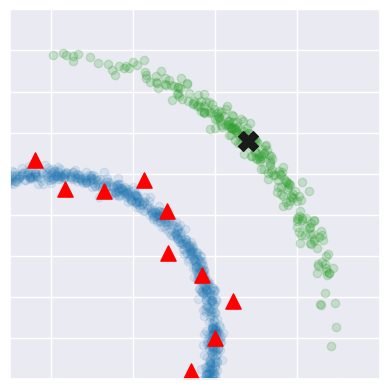

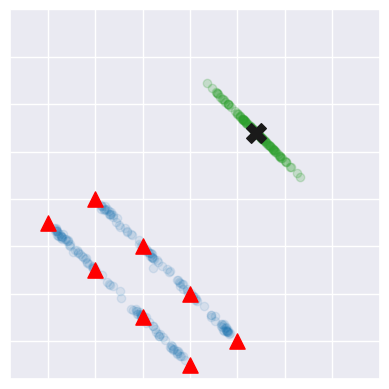

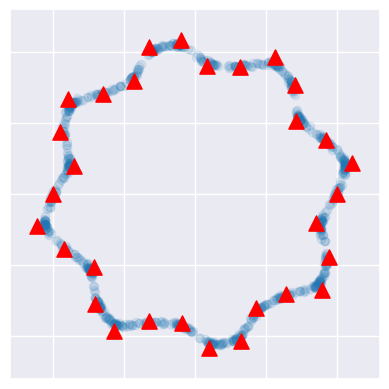

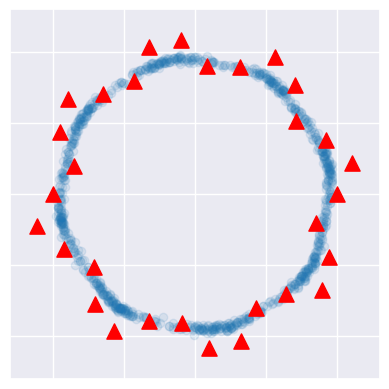

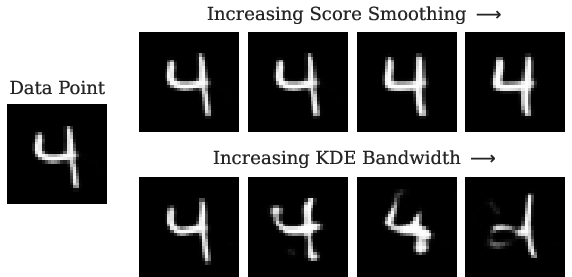

Figure 3: Score smoothing can promote generalisation along curved manifolds, but too much smoothing can distort the desired structure.

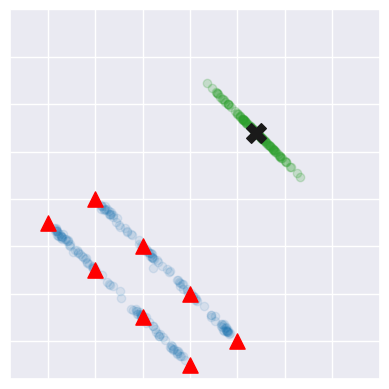

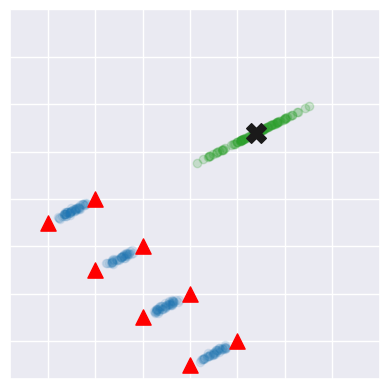

Geometric Bias and Manifold Selection

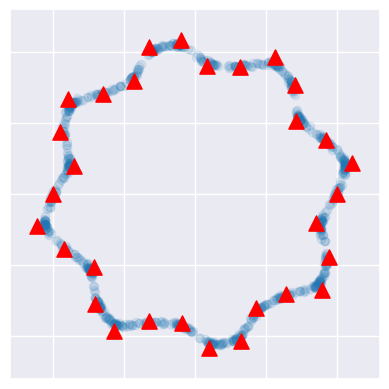

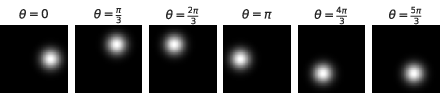

The authors challenge the traditional manifold hypothesis, arguing that in high-dimensional, data-sparse regimes, there are many plausible interpolating manifolds. The smoothing kernel's structure and scale induce a geometric bias, determining which manifold the diffusion model generalizes along. By tailoring the kernel to align with specific geometric structures, practitioners can control the dimension, curvature, and connectedness of the generated samples' manifold.

Figure 4: Different smoothing kernels can isolate alternative manifolds, given the same training data.

Empirical Validation: High-Dimensional and Image Manifolds

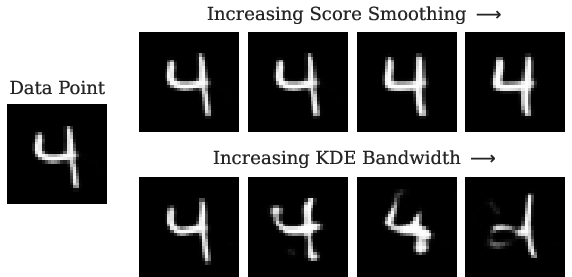

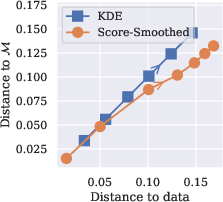

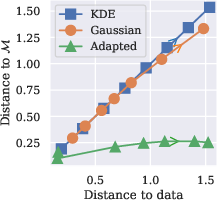

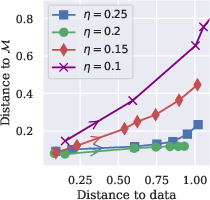

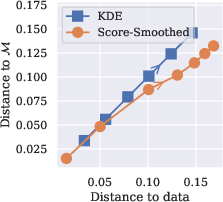

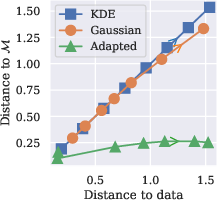

Experiments in an MNIST latent space and on synthetic image manifolds show that score-smoothed diffusion models preserve manifold structure even as smoothing increases, whereas KDE samples rapidly drift off the manifold and yield poor reconstructions. Quantitative metrics (e.g., $L^2$ distance to the manifold, FID) confirm that log-domain smoothing enables generation of novel samples close to the underlying geometry, while density-domain smoothing fails to generalize.
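The KDE half of this comparison is easy to reproduce on a toy problem. The sketch below does not reproduce the paper's MNIST or image experiments. It assumes a unit circle embedded in $\mathbb{R}^{10}$ as the manifold and an isotropic Gaussian KDE, and it measures the mean $L^2$ distance of KDE samples to the circle as the bandwidth grows. All function names and parameter values are hypothetical.

```python
import numpy as np

def sample_gaussian_kde(data, bandwidth, n_samples, rng):
    """Draw from an isotropic Gaussian KDE: pick a training point, add noise."""
    idx = rng.integers(0, len(data), size=n_samples)
    noise = rng.normal(scale=bandwidth, size=(n_samples, data.shape[1]))
    return data[idx] + noise

def distance_to_circle(points):
    """L2 distance to the unit circle lying in the first two coordinates."""
    radial = np.linalg.norm(points[:, :2], axis=1) - 1.0
    normal = np.linalg.norm(points[:, 2:], axis=1)
    return np.sqrt(radial**2 + normal**2)

rng = np.random.default_rng(0)
dim = 10                                               # ambient dimension (toy choice)
angles = rng.uniform(0.0, 2.0 * np.pi, size=20)
data = np.zeros((20, dim))
data[:, 0], data[:, 1] = np.cos(angles), np.sin(angles)

bandwidths = [0.05, 0.1, 0.2, 0.4]
mean_dists = np.array([
    distance_to_circle(sample_gaussian_kde(data, h, 5000, rng)).mean()
    for h in bandwidths
])
for h, d in zip(bandwidths, mean_dists):
    print(f"bandwidth={h:.2f}  mean distance to manifold={d:.3f}")
```

Because the Gaussian kernel spreads mass equally in all ten ambient directions, the mean distance to the circle grows with the bandwidth. This is the off-manifold drift that the paper attributes to density-domain smoothing.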

Figure 5: As smoothing level increases, generations from the score-smoothed diffusion model remain in the manifold structure. In contrast, samples from KDE quickly deviate from the manifold as the kernel scale increases, leading to poor reconstructions.

Figure 6: Visualisation of traversing the synthetic image manifold.

Figure 7: Comparing $L^2$ distance to the training data and to the manifold $\mathcal{M}$ for different smoothing strategies.

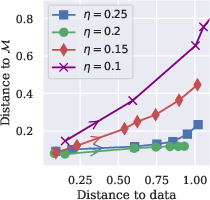

Trade-offs and Generalization Error

Theoretical and empirical results reveal a trade-off in generalization error governed by the scale of log-domain smoothing, the kernel's alignment with the manifold, and the manifold's curvature. Excessive smoothing can distort the desired structure, while insufficient smoothing leads to memorization. The optimal smoothing parameter balances these effects, promoting generalization along the manifold without sacrificing sample quality.

Implications and Future Directions

This work provides a principled framework for understanding and controlling the geometric bias in diffusion models via log-domain smoothing. The results have direct implications for the design of generative models in data-sparse, high-dimensional settings, where explicit control over the interpolating manifold is desirable. The analysis suggests that architectural choices (e.g., convolutional layers or attention mechanisms) may implicitly induce manifold-adaptive smoothing, a hypothesis that warrants further investigation.

Potential future directions include:

- Extending the framework to location-dependent kernels and highly curved manifolds.

- Deriving formal generalization error bounds for log-domain smoothing.

- Investigating the interaction between neural architecture inductive biases and geometry-adaptive smoothing.

- Applying these insights to privacy and memorization analyses in generative models.

Conclusion

The paper establishes that log-domain smoothing in diffusion models is inherently geometry-adaptive, enabling generalization along data manifolds and providing a mechanism for controlling the geometric bias of generative models. Theoretical results are supported by empirical evidence in both low- and high-dimensional settings. This framework offers a foundation for principled design and analysis of diffusion models, with broad implications for generative modeling, manifold learning, and inductive bias engineering.