- The paper introduces ACAI, a multimodal generative AI tool that uses structured prompting to reduce cognitive load and align outputs with user goals.

- It employs a three-panel interface that merges textual and visual inputs to accurately capture creative intent in advertising and branding.

- By combining interactive style selection with iterative processing, ACAI offers a practical approach to co-creative design for novice users.

Expanding the Generative AI Design Space through Structured Prompting and Multimodal Interfaces

Introduction

The paper "Expanding the Generative AI Design Space through Structured Prompting and Multimodal Interfaces" explores the limitations of current generative AI systems, particularly for novice users such as small business owners (SBOs) who want to use these technologies for advertising and branding. The authors identify persistent issues with text-based prompting, which frequently imposes a cognitive burden and produces generic outputs misaligned with user expectations. To address these issues, the paper introduces ACAI (AI Co-Creation for Advertising and Inspiration), a multimodal generative AI tool designed to improve user experience and output alignment.

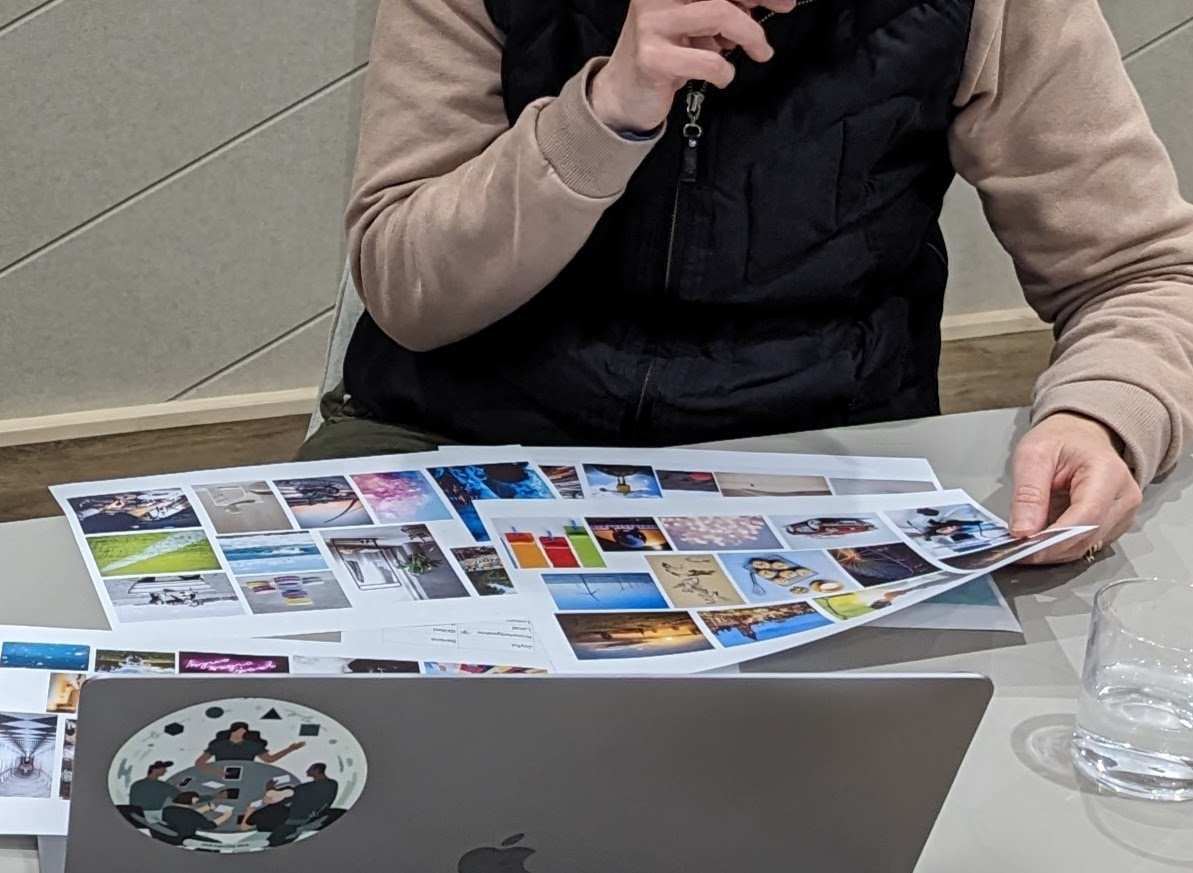

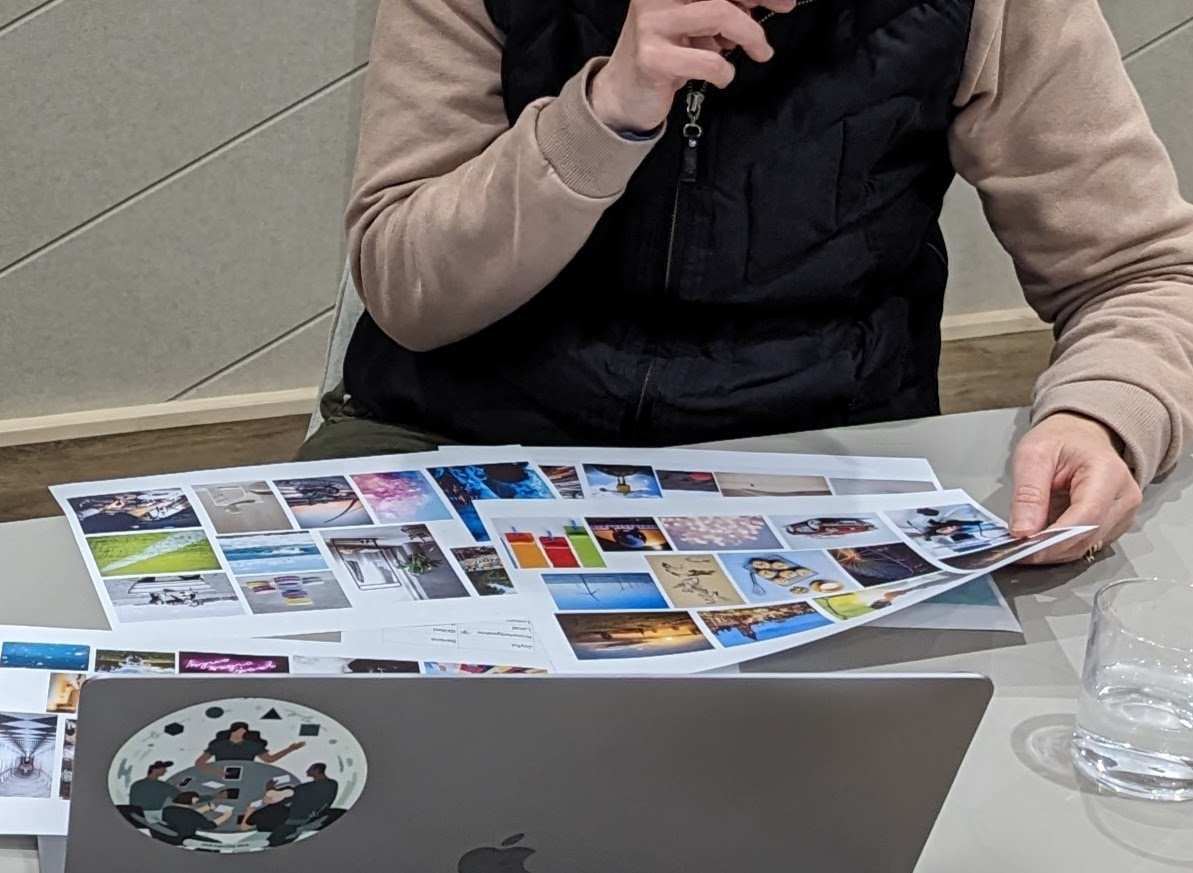

Figure 1: Formative Study: 6 UK SBOs identify visual elements they would like to incorporate into their branding.

The authors conducted a formative study involving semi-structured interviews with six SBOs in Manchester, UK. This study highlighted the cognitive effort required by SBOs to formulate effective prompts and the dissatisfaction with AI-generated outputs that were generic and not grounded in the brand tone or audience needs. SBOs also expressed concern about losing creative control, signaling a need for AI systems that enable co-creation and preserve user agency.

Design Requirements and ACAI Architecture

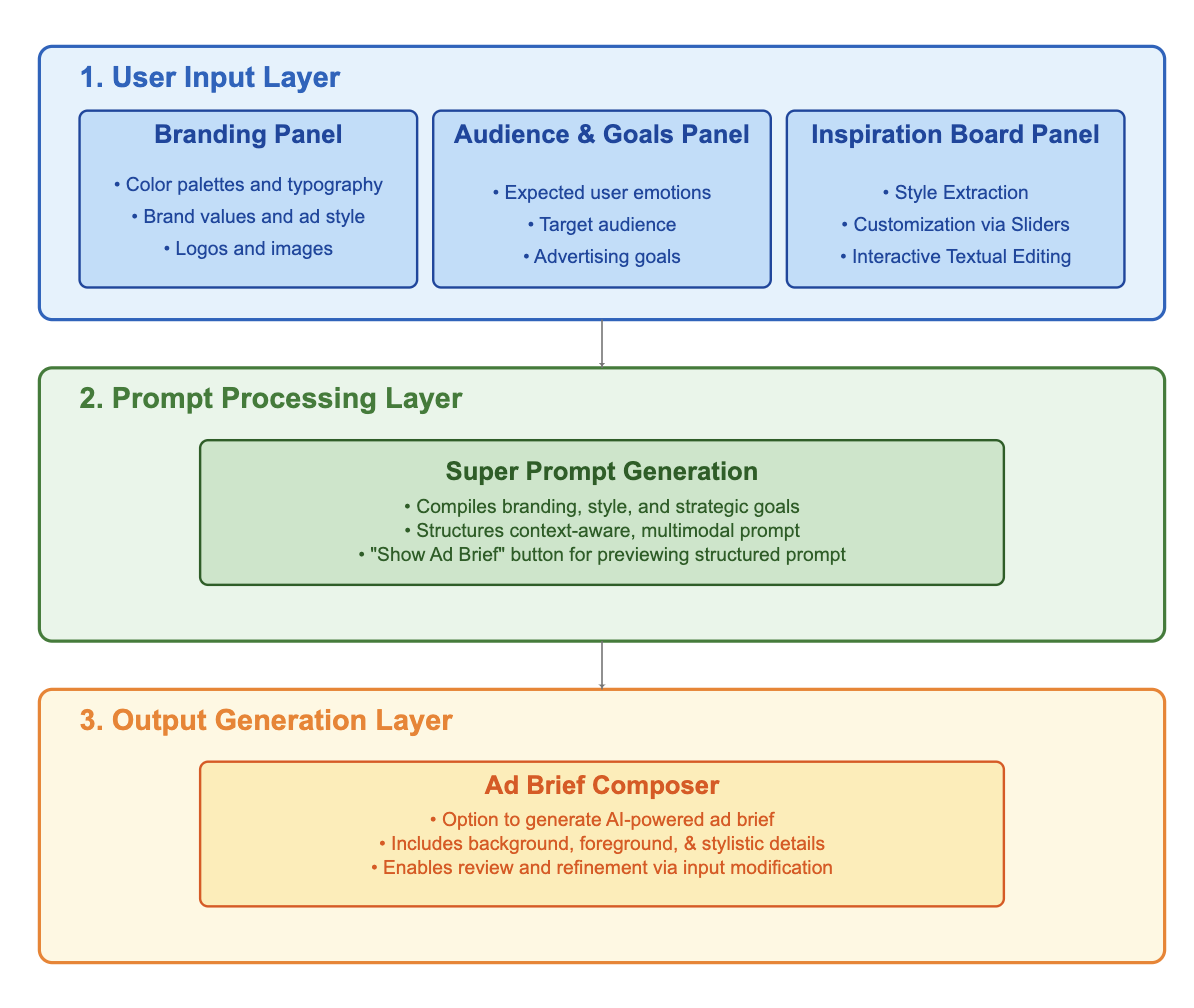

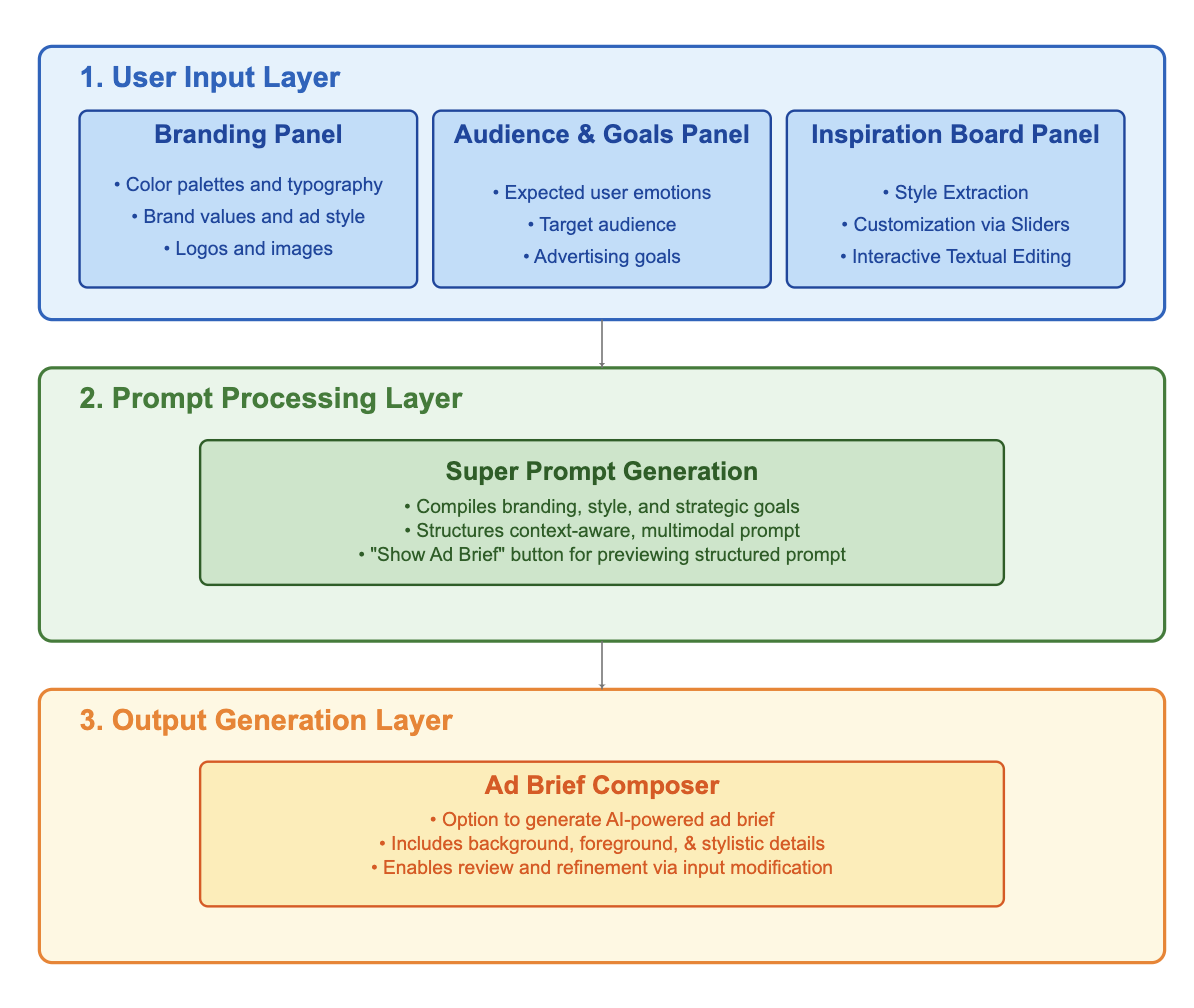

From this study, the authors derived design requirements that emphasize multimodal inputs and interactive style selection as ways to express creative intent. ACAI was developed around a structured interface with three main panels:

- Branding Asset Panel

- Audience Goals Panel

- Inspiration Board Panel

These panels allow users to specify color palettes, typography preferences, visual elements, and campaign objectives, which are then processed into a "super prompt" using a Multimodal LLM (MLLM). This structured prompt ensures alignment with user-defined goals.
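The summary does not give the actual prompt format, so the following is only a minimal sketch of how inputs from the three panels might be flattened into one structured prompt string before being sent to an MLLM. Every class, field, and function name here (`BrandingAssets`, `AudienceGoals`, `InspirationBoard`, `build_super_prompt`) is hypothetical, as is the assignment of fields to panels and the `target_audience` field; no model is called.

```python
from dataclasses import dataclass, field


@dataclass
class BrandingAssets:
    # Hypothetical contents of the Branding Asset Panel.
    color_palette: list[str]
    typography: str


@dataclass
class AudienceGoals:
    # Hypothetical contents of the Audience Goals Panel.
    target_audience: str
    campaign_objective: str


@dataclass
class InspirationBoard:
    # Hypothetical contents of the Inspiration Board Panel.
    visual_elements: list[str] = field(default_factory=list)


def build_super_prompt(
    assets: BrandingAssets, goals: AudienceGoals, board: InspirationBoard
) -> str:
    """Combine panel inputs into one labeled, line-per-field prompt."""
    sections = [
        ("Brand colors", ", ".join(assets.color_palette)),
        ("Typography", assets.typography),
        ("Target audience", goals.target_audience),
        ("Campaign objective", goals.campaign_objective),
        ("Visual elements", ", ".join(board.visual_elements)),
    ]
    # Skip any field the user left empty so the prompt stays compact.
    return "\n".join(f"{label}: {value}" for label, value in sections if value)


if __name__ == "__main__":
    prompt = build_super_prompt(
        BrandingAssets(["#0A7E8C", "#F4D35E"], "rounded sans-serif"),
        AudienceGoals("local families", "promote weekend brunch"),
        InspirationBoard(["hand-drawn icons"]),
    )
    print(prompt)
```

Keeping each field on its own labeled line is one design choice among many; the point is only that structured panel inputs replace a free-form text prompt written by the user.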

Figure 2: ACAI Architecture

Implementation Details

ACAI's architecture supports co-creation by synthesizing user inputs into multimodal prompts. The system processes these inputs through three layers: User Input Layer, Prompt Processing Layer, and Output Generation Layer. The system's core innovation lies in its interface, which combines textual and visual inputs, supporting novice users in effectively capturing and expressing their branding vision through structured steps.
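The three-layer flow described above can be illustrated with a small sketch. It assumes each layer is a plain function and replaces the real model with a stub; the function names (`user_input_layer`, `prompt_processing_layer`, `output_generation_layer`, `run_pipeline`, `echo_model`) and the `key=value` prompt format are illustrative assumptions, not details taken from the paper.

```python
from typing import Callable


def user_input_layer(raw: dict[str, str]) -> dict[str, str]:
    """Collect panel fields, trimming whitespace and dropping empty ones."""
    return {key: value.strip() for key, value in raw.items() if value and value.strip()}


def prompt_processing_layer(fields: dict[str, str]) -> str:
    """Turn the cleaned fields into a single structured prompt string."""
    return "; ".join(f"{key}={value}" for key, value in sorted(fields.items()))


def output_generation_layer(prompt: str, generate: Callable[[str], str]) -> str:
    """Pass the prompt to a generator; a real system would call an MLLM here."""
    return generate(prompt)


def run_pipeline(raw: dict[str, str], generate: Callable[[str], str]) -> str:
    """Chain the three layers in order."""
    fields = user_input_layer(raw)
    prompt = prompt_processing_layer(fields)
    return output_generation_layer(prompt, generate)


def echo_model(prompt: str) -> str:
    # Stand-in for a model call, so the sketch runs without network access.
    return f"[draft ad for: {prompt}]"


if __name__ == "__main__":
    print(run_pipeline({"tone": " playful ", "goal": "", "palette": "teal"}, echo_model))
```

Passing the generator in as a callable keeps the prompt-building logic testable without a model, and makes it straightforward to rerun the pipeline after the user adjusts a panel.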

Built on Gemini 1.5 Pro, ACAI offers interactive style selection, supports user agency, and facilitates iterative design, with the aim of reducing the friction associated with conventional text-based prompting.

Discussion

The ACAI tool demonstrates a shift from traditional text-based interactions to multimodal interfaces that cater specifically to novice users like SBOs. By structuring user inputs, ACAI enhances semantic alignment and engagement, thus addressing cognitive and usability barriers identified during interviews.

The system is poised to inform future developments in AI interface design by emphasizing inclusivity and task alignment, addressing common promptability challenges encountered by non-expert users.

Conclusion and Future Work

By foregrounding user-defined context, ACAI enhances alignment and promptability in creative workflows for SBOs. The study and tool contribute to co-creative tooling within HCI, presenting an inclusive approach that may be adapted to other domains requiring contextual grounding. Future work could expand ACAI's functionality and examine how well it applies across more diverse user backgrounds and brand-aligned creative tasks.

Overall, the paper delivers a structured approach to generative AI interactions, offering a strong foundation for enhancing novice user interfaces in generative design domains. Future research could focus on usability studies and interface optimizations for greater adaptability and efficiency.