- The paper reverse engineers modern NVIDIA GPU cores and shows that data dependencies are managed largely by the compiler, through control bits encoded in each instruction.

- It details the register file organization, which complements the banked register file with a register file cache to mitigate port conflicts and reduce energy consumption.

- The study also characterizes the memory pipeline in detail. Incorporating these findings into a simulator yields lower simulation error and closer agreement with real GPU performance.

Analyzing Modern NVIDIA GPU Cores

The paper "Analyzing Modern NVIDIA GPU cores" (2503.20481) examines three parts of recent NVIDIA GPU cores: the issue logic, the register file architecture, and the memory pipeline. By reverse engineering these components, the authors derive more accurate models for microarchitectural simulation, improving the precision of academic studies that still rely on outdated models of GPU cores.

Issue Logic and Dependency Management

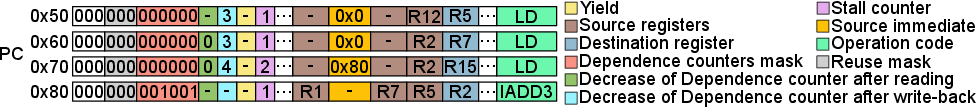

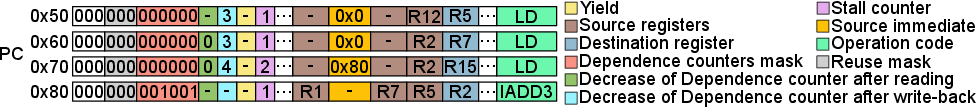

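The paper reveals that modern NVIDIA GPUs handle data dependencies through control bits set by the compiler rather than through hardware scoreboarding. Three control fields decide when an instruction is ready to issue: the Stall Counter, which tells the hardware how many cycles to wait before issuing the warp's next instruction and thereby covers dependencies on fixed-latency instructions; the Yield bit, which tells the scheduler whether to switch to another warp instead of issuing from the same one; and the Dependence Counters, which track dependencies on variable-latency instructions such as memory loads. This hardware-software co-design lets the compiler encode dependency information in the instruction stream, so correct execution does not require costly hardware dependency-checking logic (Figure 1).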

Figure 1: Example of using Dependence counters to handle dependencies.
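To make the division of labor between compiler and hardware concrete, the following Python sketch models a single warp under stated assumptions: six dependence counters per warp, a producer's counter decremented when its result is written back, and a consumer that names the counters it must wait on through a bitmask. The `Instr` fields (including `wait_mask`), the latencies, and the `simulate` helper are hypothetical illustrations, not the paper's instruction encoding. The Yield bit only matters when several warps compete, so it is not modeled here.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple

NUM_COUNTERS = 6  # assumption: six dependence counters per warp


@dataclass
class Instr:
    name: str
    stall: int = 1                     # Stall Counter: cycles before the warp's next instruction may issue
    inc_counter: Optional[int] = None  # counter incremented at issue (variable-latency producers only)
    latency: int = 0                   # cycles until the producer writes back and decrements its counter
    wait_mask: int = 0                 # bit i set => counter i must be zero before this instruction issues


def simulate(program: List[Instr]) -> Dict[str, int]:
    """Return the issue cycle of each instruction of a single warp."""
    counters = [0] * NUM_COUNTERS
    pending: List[Tuple[int, int]] = []  # (writeback cycle, counter index)
    issued: Dict[str, int] = {}
    cycle, next_allowed, pc = 0, 0, 0
    while pc < len(program):
        # Producers that write back by this cycle decrement their dependence counter.
        for done in [p for p in pending if p[0] <= cycle]:
            counters[done[1]] -= 1
            pending.remove(done)
        ins = program[pc]
        counters_clear = all(
            counters[i] == 0 for i in range(NUM_COUNTERS) if (ins.wait_mask >> i) & 1
        )
        if cycle >= next_allowed and counters_clear:
            issued[ins.name] = cycle
            if ins.inc_counter is not None:
                counters[ins.inc_counter] += 1
                pending.append((cycle + ins.latency, ins.inc_counter))
            next_allowed = cycle + ins.stall  # wait encoded by the compiler, not detected by hardware
            pc += 1
        cycle += 1
    return issued


program = [
    Instr("LDG", stall=1, inc_counter=0, latency=20),  # load into R2; latency unknown at compile time
    Instr("IADD", stall=4),                            # independent; assumed fixed 4-cycle latency
    Instr("FADD", wait_mask=0b1),                      # reads R2 and the IADD result
]
print(simulate(program))  # {'LDG': 0, 'IADD': 1, 'FADD': 20}
```

The independent IADD issues right behind the load, while FADD is held first by the IADD's stall count and then by counter 0 until the load writes back at cycle 20. In this sketch no logic ever compares register names; every wait comes from fields the compiler filled in.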

Register File and Cache Design

The register file in modern NVIDIA GPUs is a regular register file split into banks, complemented by a Register File Cache (RFC) that alleviates bank port conflicts and reduces energy consumption. The compiler manages the RFC through reuse bits: a set reuse bit marks a source operand whose value should be kept in the RFC, so that a following instruction reading the same register can obtain it without accessing the banks. This organization matters most for performance when demand for register file bandwidth is high.
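The effect of reuse bits can be illustrated with a small Python sketch. It assumes two banks selected by register number modulo 2, one read port per bank per cycle, and an RFC with one entry per operand slot that keeps a value only for the immediately following instruction when the reuse bit is set. These parameters and the `bank_read_cycles` helper are hypothetical simplifications, not the measured hardware configuration.

```python
from collections import Counter
from typing import List, Sequence, Tuple

NUM_BANKS = 2  # assumption: register r lives in bank r % NUM_BANKS

# Each instruction: (source registers by operand slot, reuse bit per slot)
Program = List[Tuple[Sequence[int], Sequence[bool]]]


def bank_read_cycles(program: Program) -> int:
    """Count cycles spent reading source operands from the banks (one read per bank per cycle)."""
    rfc = {}  # operand slot -> register cached for the next instruction
    total = 0
    for srcs, reuse in program:
        reads_per_bank = Counter()
        next_rfc = {}
        for slot, reg in enumerate(srcs):
            if rfc.get(slot) != reg:  # RFC miss: the operand must be read from its bank
                reads_per_bank[reg % NUM_BANKS] += 1
            if reuse[slot]:           # compiler asked to keep this value in the RFC
                next_rfc[slot] = reg
        rfc = next_rfc
        # Reads to the same bank serialize; different banks proceed in parallel.
        total += max(reads_per_bank.values(), default=0)
    return total


# FFMA R1, R2, R4, R6 ; FFMA R3, R2, R8, R10  (all even registers, so all in bank 0)
no_reuse = [((2, 4, 6), (False, False, False)), ((2, 8, 10), (False, False, False))]
with_reuse = [((2, 4, 6), (True, False, False)), ((2, 8, 10), (False, False, False))]
print(bank_read_cycles(no_reuse), bank_read_cycles(with_reuse))  # 6 5
```

Because all three sources of each FFMA fall in the same bank, their reads serialize; setting the reuse bit on R2 lets the second FFMA take that operand from the RFC and saves one bank read.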

Memory Pipeline Characteristics

A detailed analysis of the memory pipeline indicates that memory requests pass through a queue local to each sub-core before reaching stages shared by all sub-cores of the streaming multiprocessor. The observed behavior suggests that each sub-core can buffer up to four consecutive memory instructions, while the shared stages arbitrate among the sub-cores for the available bandwidth. Precise measurements of latencies and memory access patterns support simulation models that closely match real hardware performance.
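A toy Python model shows why the per-sub-core buffer matters. It uses the four-entry local queue reported above and assumes, purely for illustration, that the shared stage accepts one request every `drain_interval` cycles and picks sub-cores round-robin. The function name, the drain rate, and the arbitration policy are hypothetical.

```python
from collections import deque
from typing import List, Optional

LOCAL_QUEUE_CAPACITY = 4  # per sub-core, as reported in the text


def simulate_mem_issue(requests_per_subcore: List[int], drain_interval: int = 2) -> List[Optional[int]]:
    """Return the cycle at which each sub-core issued its last memory instruction."""
    num_subcores = len(requests_per_subcore)
    queues = [deque() for _ in range(num_subcores)]  # local per-sub-core queues
    remaining = list(requests_per_subcore)
    last_issue: List[Optional[int]] = [None] * num_subcores
    rr, cycle = 0, 0  # round-robin pointer of the shared stage, current cycle
    while any(remaining) or any(queues):
        # Shared stage (assumed): accepts one request every drain_interval cycles.
        if cycle % drain_interval == 0:
            for k in range(num_subcores):
                sc = (rr + k) % num_subcores
                if queues[sc]:
                    queues[sc].popleft()
                    rr = (sc + 1) % num_subcores
                    break
        # Each sub-core issues at most one memory instruction per cycle if its queue has room.
        for sc in range(num_subcores):
            if remaining[sc] and len(queues[sc]) < LOCAL_QUEUE_CAPACITY:
                queues[sc].append(cycle)
                remaining[sc] -= 1
                last_issue[sc] = cycle
        cycle += 1
    return last_issue


print(simulate_mem_issue([4, 0, 0, 0]))  # [3, None, None, None]
print(simulate_mem_issue([8, 0, 0, 0]))  # [8, None, None, None]
```

Under these assumptions a single sub-core issues four memory instructions back to back without stalling; the eighth issues at cycle 8 rather than 7 because the full local queue blocks issue for one cycle while the slower shared stage drains it.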

Implementation of a New GPU Microarchitecture Model

The authors incorporate these findings into the Accel-sim simulator framework. Among the changes is an L0 instruction cache paired with a stream buffer that prefetches instructions, which reproduces the real fetch and issue behavior more faithfully, together with the compiler-guided dependency management through control bits described above.
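The following Python sketch illustrates the classic sequential stream-buffer idea applied to an L0 instruction cache: on a miss, the next few sequential lines are prefetched, and a later fetch that matches the head of the buffer is served from it instead of going to the next cache level. The capacity, full associativity, LRU replacement, stream depth, and the `L0ICacheWithStreamBuffer` class itself are assumptions chosen for illustration, not the parameters of the authors' Accel-sim model.

```python
from collections import OrderedDict, deque


class L0ICacheWithStreamBuffer:
    """Toy L0 instruction cache (fully associative, LRU) with a sequential stream buffer."""

    def __init__(self, num_lines: int = 4, stream_depth: int = 4):
        self.lines = OrderedDict()  # cached line addresses in LRU order
        self.num_lines = num_lines
        self.stream = deque()       # line addresses prefetched in sequential order
        self.stream_depth = stream_depth
        self.misses = 0

    def _insert(self, line: int) -> None:
        self.lines[line] = None
        self.lines.move_to_end(line)
        if len(self.lines) > self.num_lines:
            self.lines.popitem(last=False)  # evict the least recently used line

    def fetch(self, line: int) -> str:
        if line in self.lines:
            self.lines.move_to_end(line)
            return "hit"
        if self.stream and self.stream[0] == line:
            # Head of the stream buffer matches: move it into the cache and prefetch one more line.
            self.stream.popleft()
            self._insert(line)
            self.stream.append(self.stream[-1] + 1 if self.stream else line + 1)
            return "stream_hit"
        # Miss in both: fetch from the next level and restart the stream after this line.
        self.misses += 1
        self._insert(line)
        self.stream = deque(range(line + 1, line + 1 + self.stream_depth))
        return "miss"


cache = L0ICacheWithStreamBuffer(stream_depth=4)
print([cache.fetch(line) for line in range(6)])
# ['miss', 'stream_hit', 'stream_hit', 'stream_hit', 'stream_hit', 'stream_hit']
```

In this sketch straight-line code misses only once, whereas the same cache without a stream buffer (`stream_depth=0`) misses on every new line.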

Validation shows that the new model substantially lowers the mean absolute percentage error (MAPE) relative to previous simulator models, meaning it reproduces real GPU behavior more faithfully across a variety of workloads. The model also ports to other NVIDIA architectures such as Turing, which further supports its robustness.
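For reference, MAPE is the mean, over all workloads, of |simulated - measured| / measured, expressed as a percentage. A minimal Python version is sketched below; the `mape` name is illustrative, and the cycle counts in the usage line are made-up values, not results from the paper.

```python
from typing import Sequence


def mape(simulated: Sequence[float], measured: Sequence[float]) -> float:
    """Mean absolute percentage error of simulated vs. measured values, in percent."""
    if len(simulated) != len(measured) or not measured:
        raise ValueError("need two non-empty sequences of equal length")
    return 100.0 * sum(abs(s - m) / m for s, m in zip(simulated, measured)) / len(measured)


# Hypothetical cycle counts for three workloads: simulator vs. real GPU.
print(mape([1100, 1800, 4000], [1000, 2000, 4000]))
```

Here two workloads are off by 10% and one is exact, giving a MAPE of about 6.67%. Because each error is normalized by the measured value, short and long workloads weigh equally in the average.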

Conclusion

By uncovering how modern NVIDIA GPU cores implement their issue logic and compiler-managed dependencies, organize their register file, and process memory instructions, the study improves the precision of microarchitectural models. These findings give academic researchers a more accurate basis for simulation and also have practical implications for designing next-generation GPUs with higher efficiency and lower hardware complexity.