- The paper introduces a novel SSRepL-ADHD framework that integrates self-supervised and transfer learning with LSTM and GRU for enhanced EEG-based ADHD detection.

- It employs SMOTE and advanced preprocessing to mitigate class imbalance and accurately extract temporal features from a 19-channel EEG dataset.

- The SSRepL-ADHD model outperforms RF and lightweight DNN benchmarks with an accuracy of 81.11%, which the authors present as evidence of clinical applicability.

Adaptive Self-Supervised Representation Learning for ADHD Detection via EEG Attention Tasks

Introduction

The paper "SSRepL-ADHD: Adaptive Complex Representation Learning Framework for ADHD Detection from Visual Attention Tasks" (2502.00376) presents a hybrid framework that combines Self-Supervised Representation Learning (SSRepL) and Transfer Learning (TL) with LSTM and GRU modules to classify ADHD from EEG recordings gathered while children performed visual attention tasks. The approach targets robust characterization of neurodevelopmental phenotypes and addresses two problems common to clinical EEG datasets: feature extraction and class imbalance.

Dataset Characteristics and Preprocessing

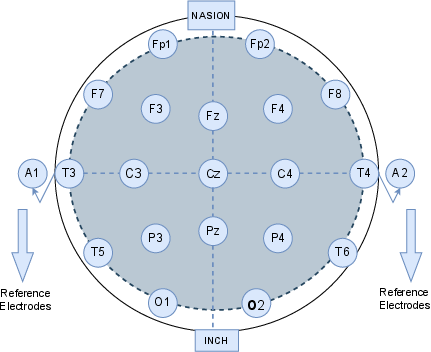

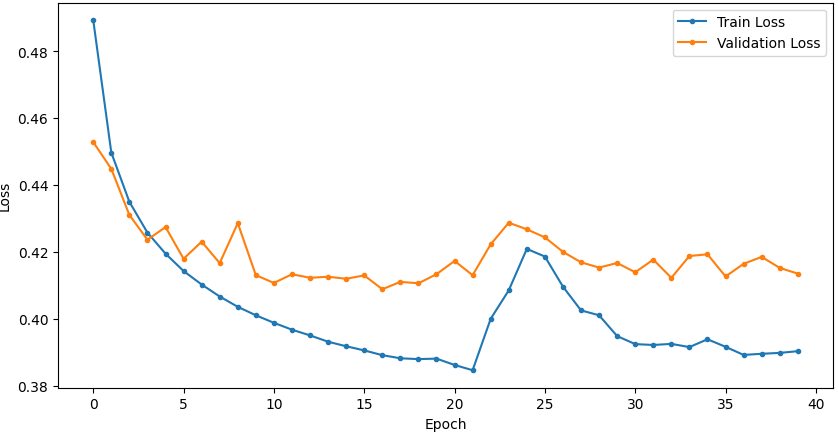

The EEG dataset, comprising recordings from 120 children (60 diagnosed with ADHD and 60 healthy controls), was recorded with 19 electrodes placed according to the international 10-20 standard (see Figure 1). The task-based protocol involved rapid visual disambiguation, exploiting known ADHD-specific deficits in sustained attention and stimulus response. Preprocessing combined categorical label encoding, normalization, and data balancing with SMOTE, with the aim of reducing bias and preparing the data for deep sequence modeling.

Figure 1: Placement schema for the 19-channel EEG using the 10-20 system targeting pediatric neurodevelopmental assessment.
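The preprocessing steps named above (label encoding, normalization, SMOTE) can be sketched in a few lines. This is a minimal standard-library illustration, not the authors' pipeline: the function names `encode_labels`, `zscore_columns`, and `smote_sketch`, the label strings, and the choice of z-score normalization are all assumptions made for the example. A real pipeline would more likely use a library implementation of SMOTE.

```python
import math
import random

def encode_labels(labels, mapping=None):
    """Map categorical labels to integers (label strings are hypothetical)."""
    mapping = mapping or {"Control": 0, "ADHD": 1}
    return [mapping[label] for label in labels]

def zscore_columns(rows):
    """Standardize each feature column to zero mean and unit variance."""
    n = len(rows)
    cols = list(zip(*rows))
    means = [sum(c) / n for c in cols]
    # Fall back to 1.0 for constant columns to avoid division by zero.
    stds = [math.sqrt(sum((v - m) ** 2 for v in c) / n) or 1.0
            for c, m in zip(cols, means)]
    return [[(v - m) / s for v, m, s in zip(row, means, stds)] for row in rows]

def smote_sketch(minority, n_new, k=3, seed=0):
    """Create n_new synthetic minority samples by interpolating between
    a random minority sample and one of its k nearest minority neighbours."""
    if len(minority) < 2:
        raise ValueError("need at least two minority samples to interpolate")
    rng = random.Random(seed)
    synthetic = []
    for _ in range(n_new):
        i = rng.randrange(len(minority))
        base = minority[i]
        others = [p for j, p in enumerate(minority) if j != i]
        neighbours = sorted(others, key=lambda p: math.dist(p, base))[:k]
        neighbour = rng.choice(neighbours)
        gap = rng.random()  # position along the segment base -> neighbour
        synthetic.append([b + gap * (q - b) for b, q in zip(base, neighbour)])
    return synthetic
```

Each synthetic point lies on the line segment between two real minority samples, so it stays inside the region the minority class already occupies. Oversampling is usually applied to the training split only, so that no synthetic points derived from test samples leak into evaluation.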

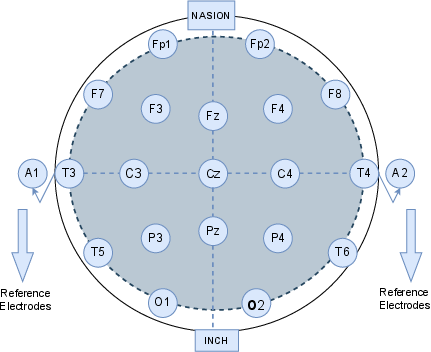

Model Architecture: SSRepL-ADHD

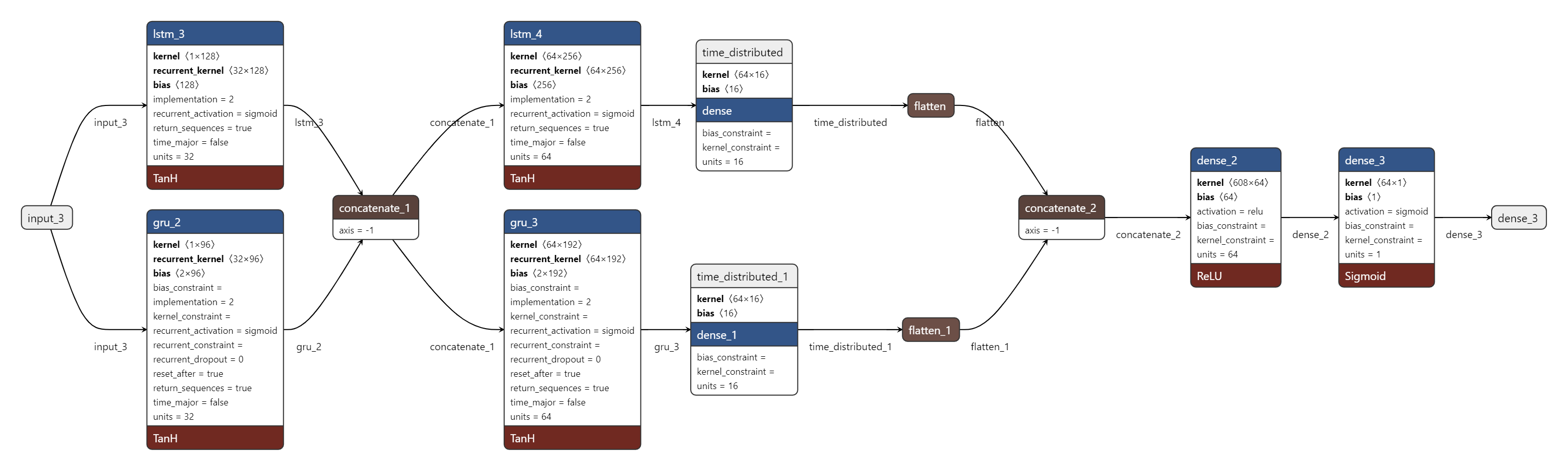

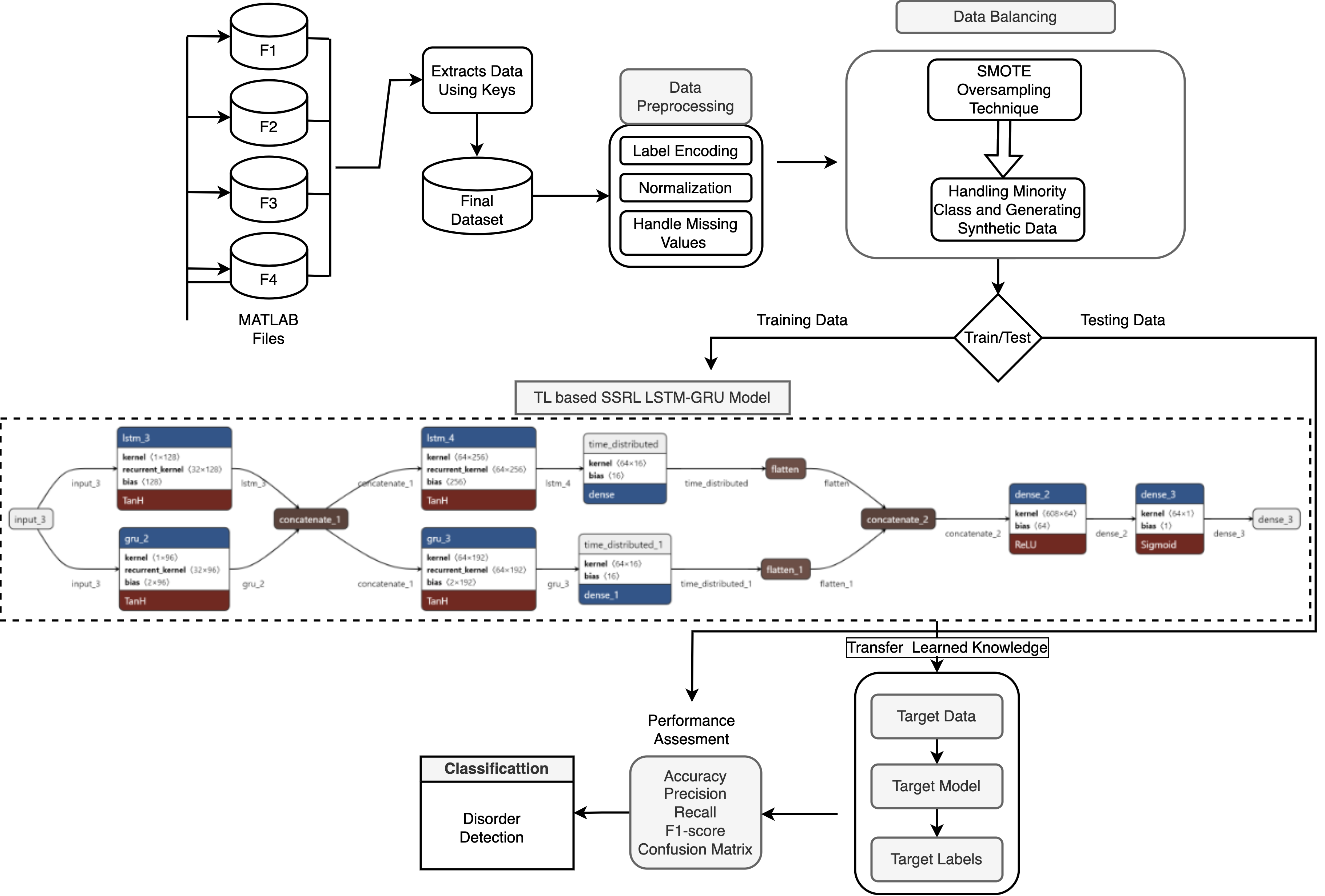

SSRepL-ADHD combines self-supervised representation learning with transfer learning around a composite LSTM-GRU stack. The architecture (Figure 2) is arranged to isolate latent temporal dynamics: it begins with convolutional sequence abstraction through LSTM/GRU layers, followed by concatenation, a fully connected stabilization layer, and flattening. These representations then pass through additional dense layers, and the network is trained with the Adam optimizer using binary cross-entropy as the loss function. The model is designed both for discrimination (ADHD vs. control) and for feature reuse in downstream tasks.

Figure 2: Sequential block diagram of the SSRepL-ADHD model, integrating self-supervised pretraining, feature fusion, and transfer learning in a deep RNN context.
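Two pieces of the architecture description are easy to make concrete: the concatenation of the LSTM and GRU branch outputs, and the binary cross-entropy loss used for training. The sketch below uses only the standard library. The helper names (`fuse`, `sigmoid`, `binary_cross_entropy`) and the clipping constant `eps` are illustrative assumptions, and the code does not reproduce the authors' network or its layer sizes.

```python
import math

def fuse(lstm_features, gru_features):
    """Concatenate the two branch feature vectors into one representation."""
    return list(lstm_features) + list(gru_features)

def sigmoid(z):
    """Squash a real-valued logit into a probability in (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def binary_cross_entropy(y_true, y_prob, eps=1e-12):
    """Mean binary cross-entropy for 0/1 labels and predicted probabilities."""
    total = 0.0
    for y, p in zip(y_true, y_prob):
        p = min(max(p, eps), 1.0 - eps)  # clip to keep log() finite
        total += -(y * math.log(p) + (1 - y) * math.log(1.0 - p))
    return total / len(y_true)
```

For an uninformative classifier that always predicts 0.5, the loss equals ln 2 (about 0.693), which gives a useful reference point when reading a loss curve.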

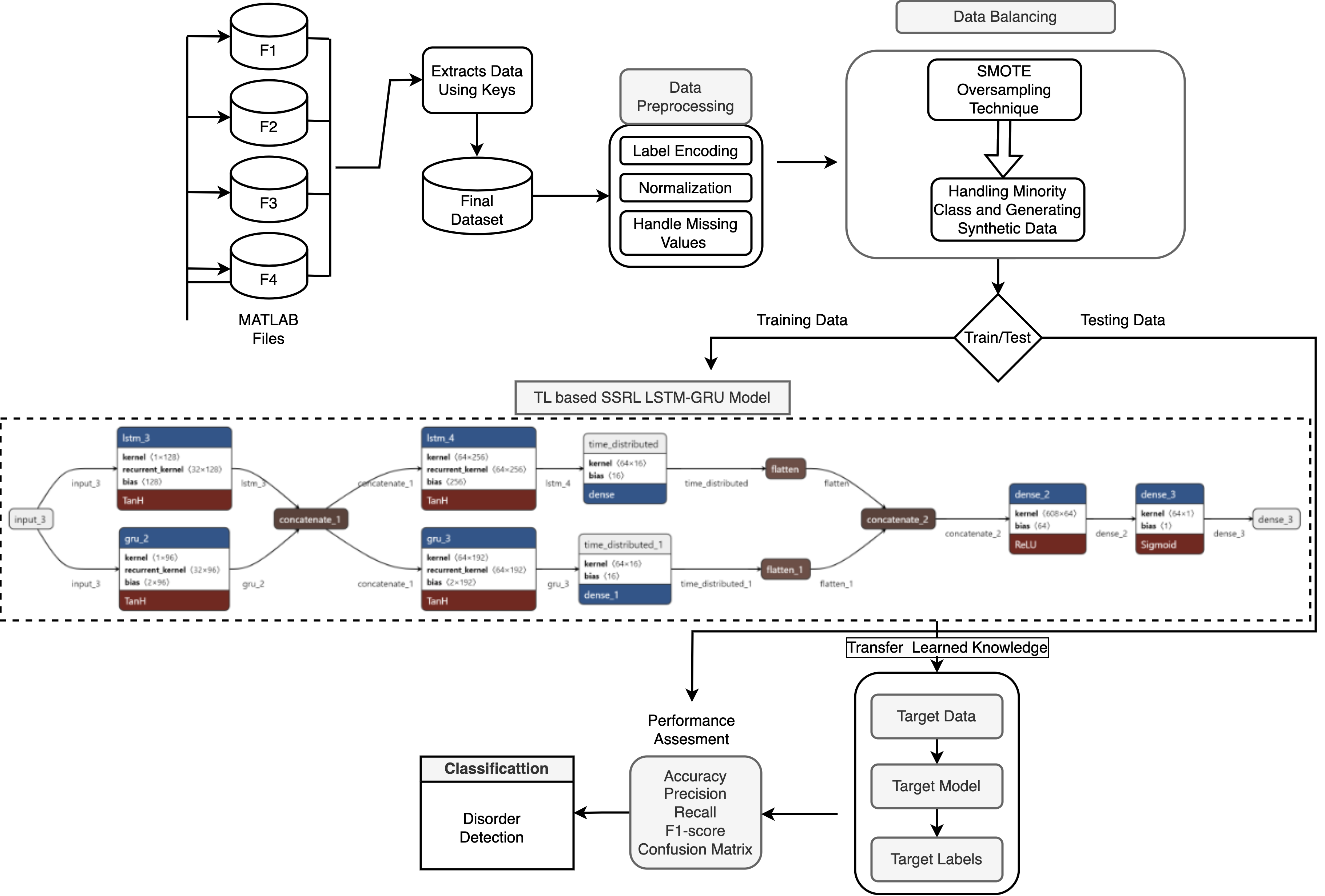

Figure 3 gives a high-level view of the knowledge transfer workflow for EEG-based disease detection, showing how its modular design supports adaptation across tasks.

Figure 3: Overview of the transfer learning-enabled methodology for ADHD detection applied to visual attention EEG signals.

Experimental Evaluation and Numerical Outcomes

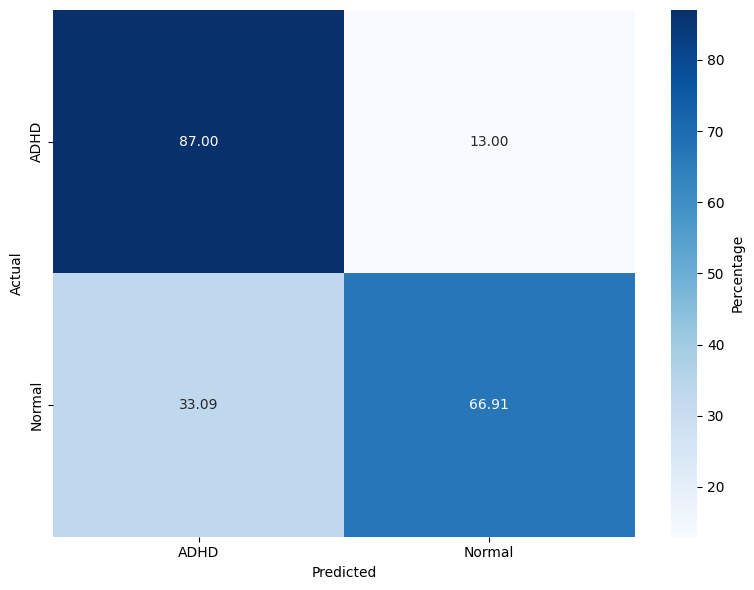

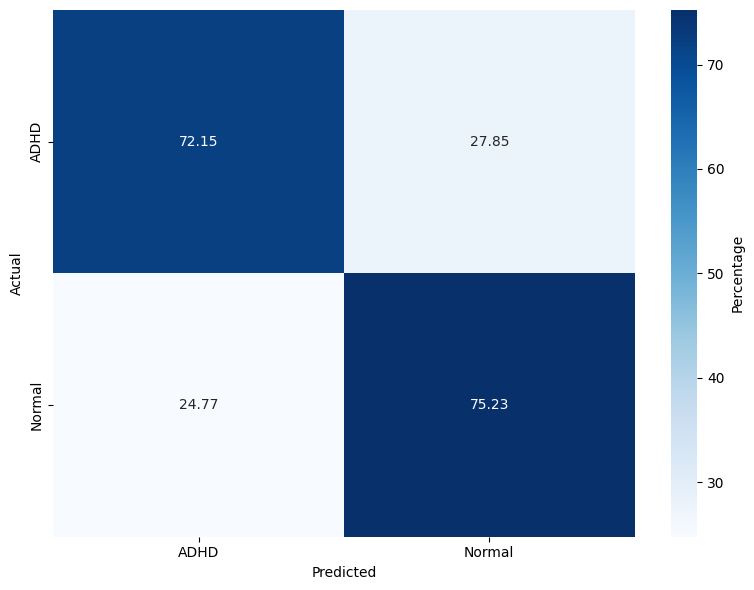

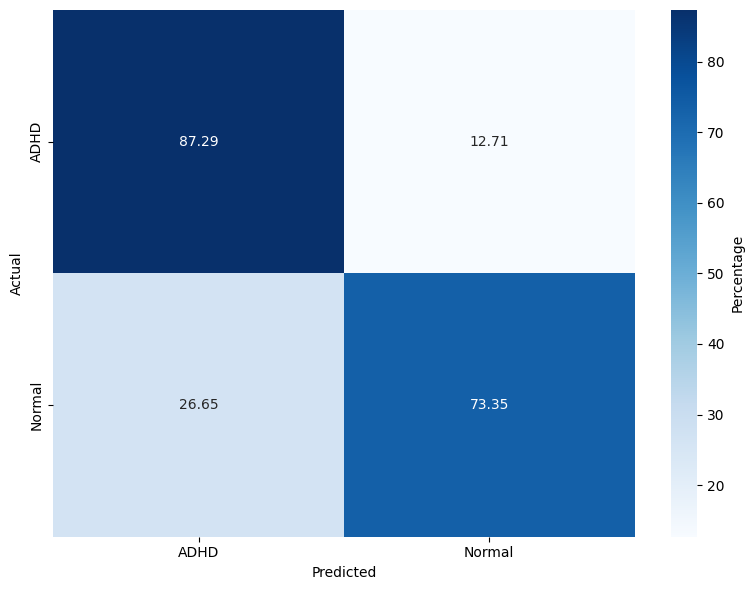

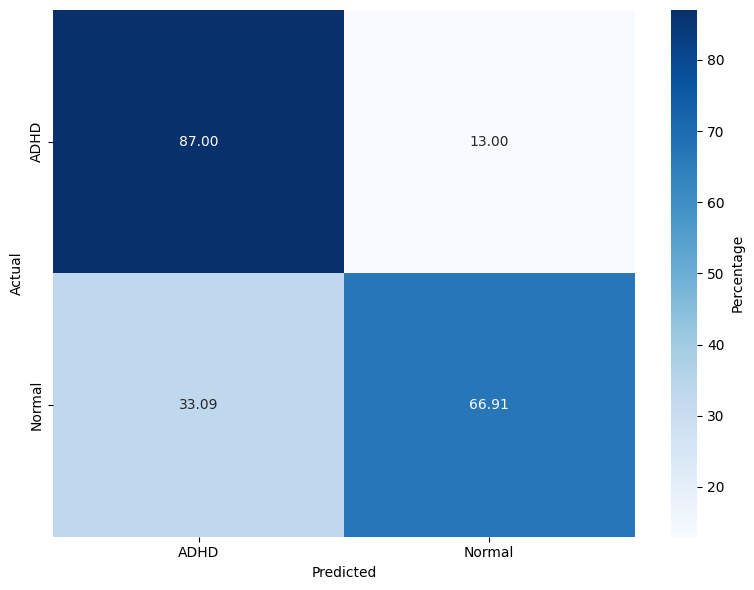

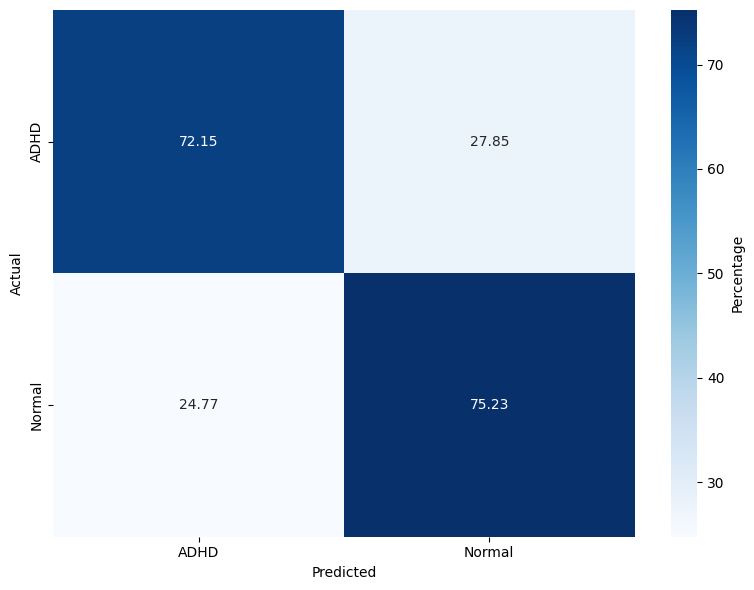

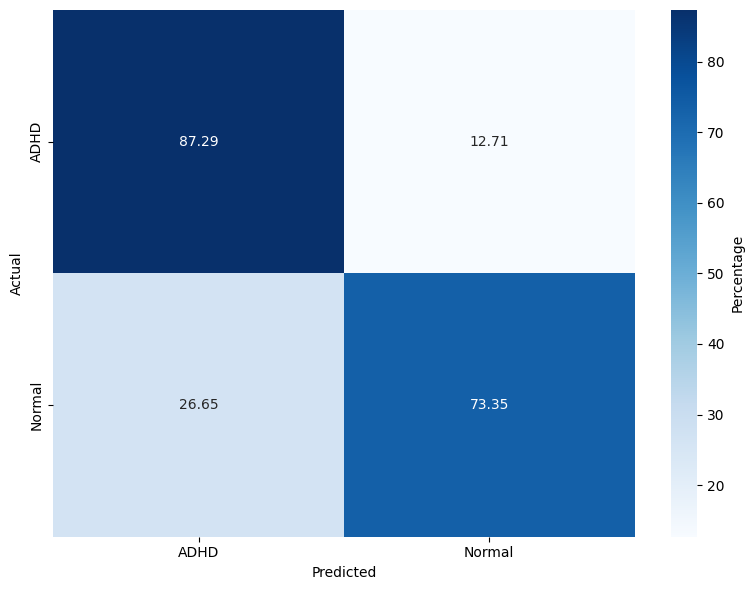

The evaluation covers three models: RF, a lightweight DNN (LSSRepL-DNN), and SSRepL-ADHD. SSRepL-ADHD reports the highest accuracy (81.11%), ahead of both RF (78.01%) and the lightweight DNN (73.67%). The confusion matrices (Figure 4) show that SSRepL-ADHD achieves an 87.29% true positive rate for ADHD and classifies 73.35% of controls correctly, indicating stronger sensitivity than specificity under class imbalance constraints.

Figure 4: Confusion matrices for Random Forest, DNN, and SSRepL-ADHD models, highlighting classification performance across ADHD/control classes.
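The per-class rates quoted above follow directly from confusion-matrix counts. The sketch below shows the arithmetic. The function names are hypothetical, and the paper's raw counts are not reproduced here, so any counts passed in are the reader's own.

```python
def binary_metrics(tp, fn, tn, fp):
    """Derive summary metrics from confusion-matrix counts (ADHD = positive)."""
    sensitivity = tp / (tp + fn)   # true positive rate for ADHD
    specificity = tn / (tn + fp)   # fraction of controls classified correctly
    return {
        "sensitivity": sensitivity,
        "specificity": specificity,
        "accuracy": (tp + tn) / (tp + fn + tn + fp),
        "balanced_accuracy": (sensitivity + specificity) / 2,
    }

def accuracy_from_rates(sensitivity, specificity, positive_fraction):
    """Overall accuracy as a prevalence-weighted mix of the per-class rates."""
    return positive_fraction * sensitivity + (1 - positive_fraction) * specificity
```

Because overall accuracy is a weighted average of sensitivity and specificity, it depends on the class mix of the evaluation set. Reporting balanced accuracy alongside it makes results easier to compare across datasets with different ADHD/control proportions.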

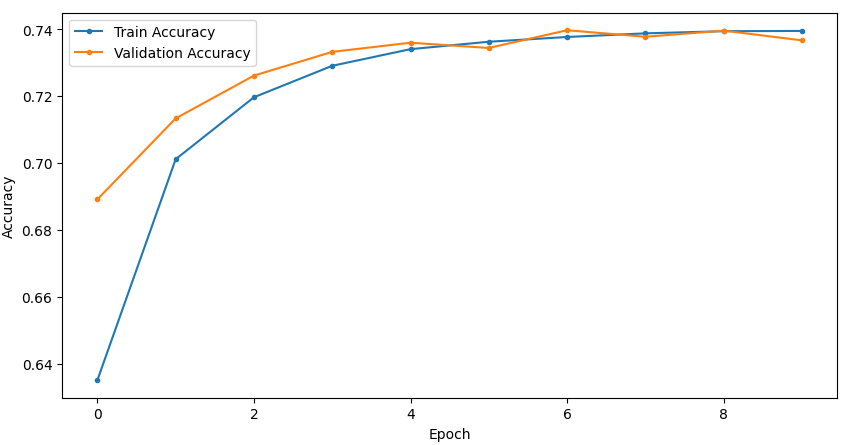

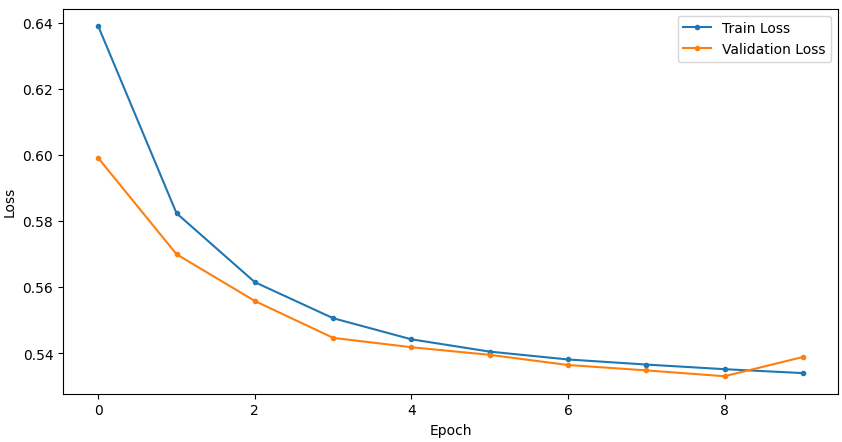

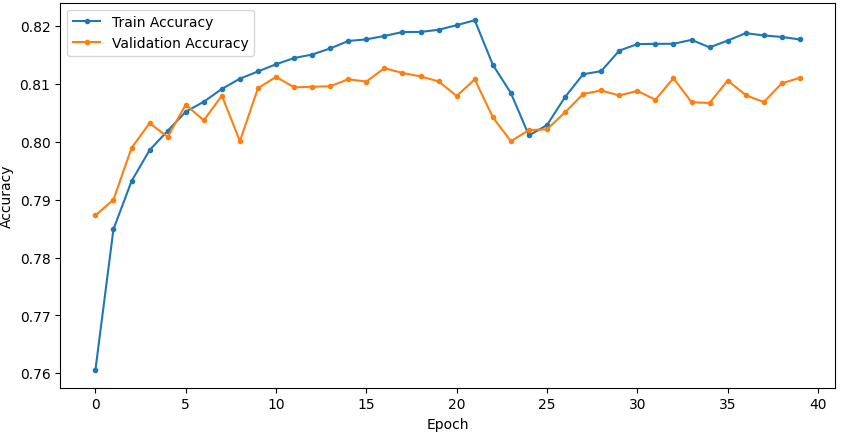

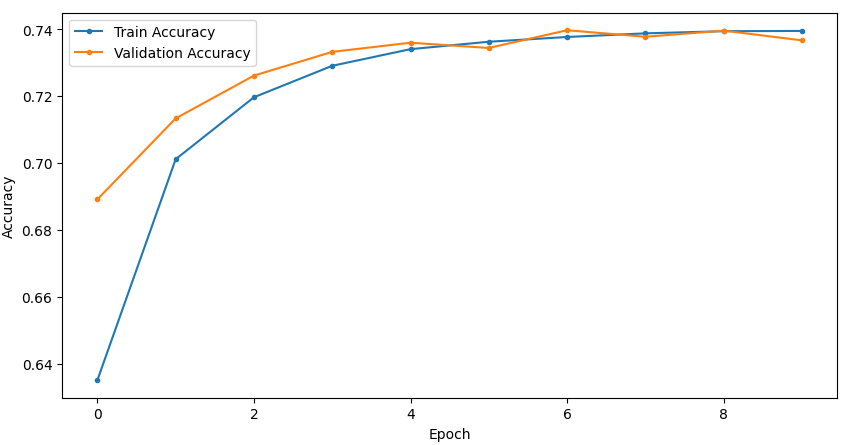

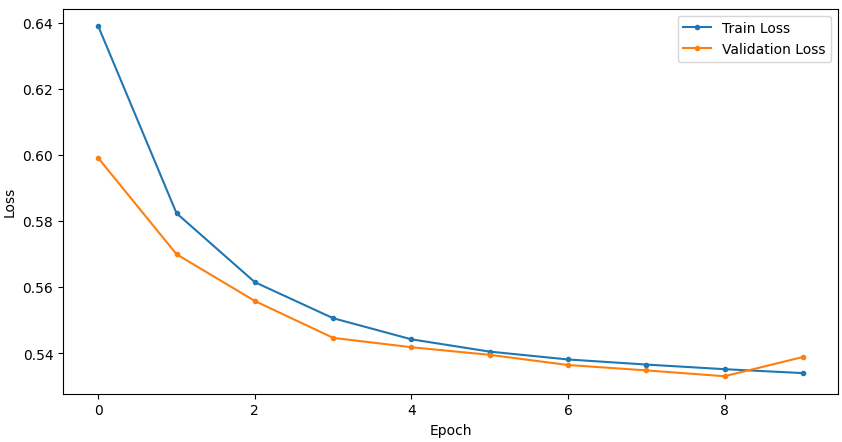

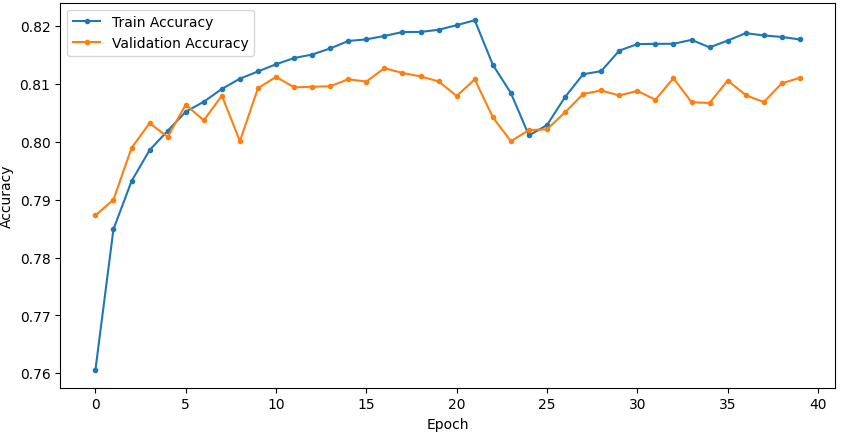

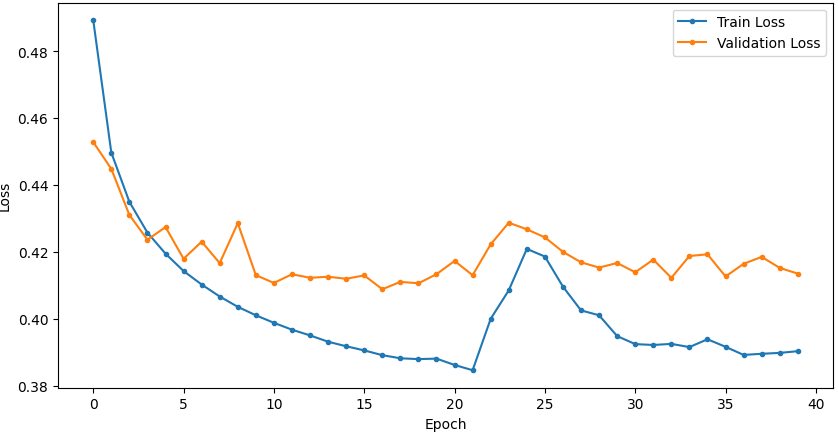

The training curves (Figure 5) show steady improvement in training and validation accuracy and loss for SSRepL-ADHD, with minimal overfitting even after extensive training (40 epochs, ~23 hours), which suggests stable generalization.

Figure 5: Training and validation curves for accuracy and loss in DNN and SSRepL-ADHD models, illustrating enhanced convergence and stably learned representations in the proposed architecture.
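One simple way to read curves like these is to track the gap between training and validation metrics per epoch: a gap that keeps widening is a common sign of overfitting. The helpers below are an illustrative sketch with hypothetical names, and the numbers in the usage lines are made up for the example, not taken from the paper.

```python
def generalization_gap(train_metric, val_metric):
    """Per-epoch difference between a training and a validation metric."""
    return [t - v for t, v in zip(train_metric, val_metric)]

def best_epoch(val_loss):
    """1-based index of the epoch with the lowest validation loss."""
    return min(range(len(val_loss)), key=val_loss.__getitem__) + 1

# Made-up accuracy history for three epochs (not the paper's values).
gaps = generalization_gap([0.60, 0.70, 0.80], [0.58, 0.66, 0.74])
stop_at = best_epoch([0.90, 0.50, 0.60])
```

In this toy history the gap grows each epoch while the validation loss bottoms out at the second epoch, which is the pattern that early stopping is meant to catch.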

Implications and Prospects

From a practical standpoint, the SSRepL-ADHD framework lays groundwork for deployable EEG-based clinical decision support in ADHD diagnosis, particularly where annotated EEG resources are limited and transfer learning with robust representation layers becomes essential. Theoretically, the results support the utility of self-supervised learning for neurodevelopmental disorder (NDD) phenotyping and motivate the design of generalizable EEG feature extractors transferable across related NDDs. Another key observation is that even with complex, high-dimensional, and imbalanced clinical time series, properly regularized RNN architectures with self-supervised pretraining can outperform classical ensemble methods.

Methodological advances in preprocessing, especially the integration of SMOTE for minority class balancing, are critical for achieving these results. The framework is extensible to other neurological disorders (e.g., epilepsy, autism) where task-specific EEG dynamics can be captured and transferred.

Future research directions include improving interpretability of temporal feature attributions in the LSTM-GRU layers, integrating multi-modal neuroimaging data, and deploying hierarchical transfer learning pipelines for subtype classification and comorbidity detection.

Conclusion

The study describes a deep learning approach for detecting ADHD in children from EEG data recorded during attention tasks, emphasizing the advantages of self-supervised and transfer-learned temporal representations. Reporting an accuracy of 81.11%, the SSRepL-ADHD model surpasses both classical and shallow neural baselines, offering robust, transferable EEG-based features for clinical neurodevelopmental classification. Remaining challenges concern dataset imbalance and optimal feature selection, but the experimental evidence argues strongly for the SSRepL-ADHD methodology as the current state of the art in EEG-driven ADHD detection, with promising generalization to broader NDD contexts.