FC-KAN: Function Combinations in Kolmogorov-Arnold Networks

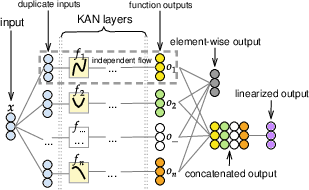

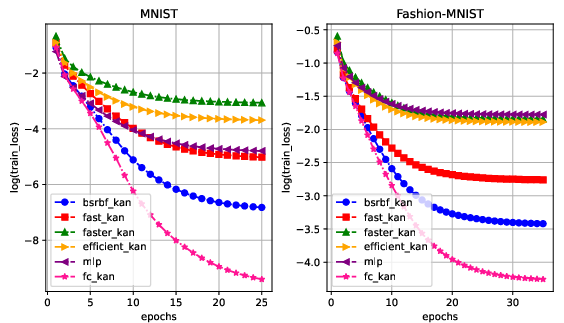

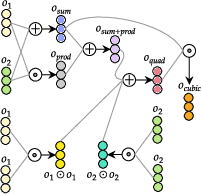

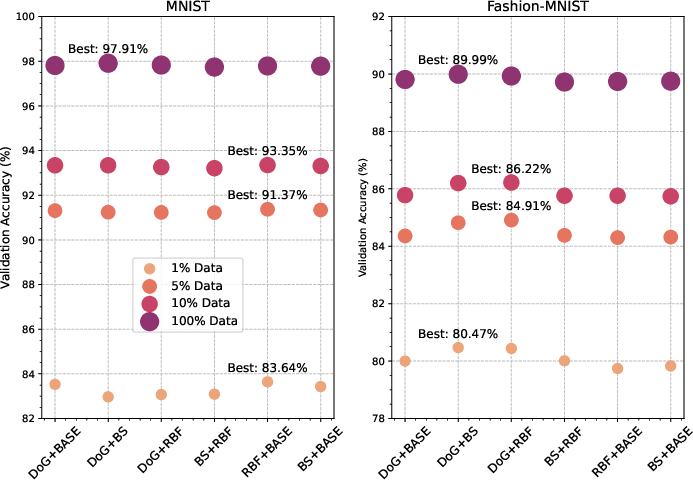

Abstract: We introduce FC-KAN, a Kolmogorov-Arnold Network (KAN) that combines popular mathematical functions, such as B-splines, wavelets, and radial basis functions, on low-dimensional data through element-wise operations. We explore several methods for combining the outputs of these functions: sum, element-wise product, sum plus element-wise product, representations of quadratic and cubic functions, concatenation, a linear transformation of the concatenated output, and others. In our experiments, we compare FC-KAN with a multi-layer perceptron (MLP) and with existing KANs (BSRBF-KAN, EfficientKAN, FastKAN, and FasterKAN) on the MNIST and Fashion-MNIST datasets. Two FC-KAN variants, one combining outputs from B-splines and Difference of Gaussians (DoG) and one combining outputs from B-splines and linear transformations, in the form of a quadratic function, outperformed the other models overall when averaged over 5 independent training runs. We expect that function combinations, as used in FC-KAN, can inform the design of future KANs. Our repository is publicly available at: https://github.com/hoangthangta/FC_KAN.
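The combination methods named in the abstract can be illustrated with a minimal sketch. It is not taken from the FC-KAN repository: the function name `combine`, the method keys, and in particular the exact quadratic form (`a + b + a*b + a^2 + b^2`) are assumptions made for illustration, and plain Python lists stand in for the output tensors of two function branches. The cubic form and the linear transformation of the concatenated output are left out because the abstract does not specify them.

```python
def combine(a, b, method="sum"):
    """Combine two equal-length branch outputs (hypothetical helper).

    `a` and `b` stand in for the outputs of two function branches,
    for example a B-spline branch and a wavelet branch.
    """
    if len(a) != len(b):
        raise ValueError("branch outputs must have the same length")

    # Concatenation is the only method here that changes the output size.
    if method == "concat":
        return list(a) + list(b)

    # Element-wise combinations; the quadratic form is an assumed example.
    ops = {
        "sum": lambda p, q: p + q,
        "product": lambda p, q: p * q,
        "sum_product": lambda p, q: p + q + p * q,
        "quadratic": lambda p, q: p + q + p * q + p * p + q * q,
    }
    if method not in ops:
        raise ValueError(f"unknown combination method: {method}")
    return [ops[method](p, q) for p, q in zip(a, b)]


if __name__ == "__main__":
    branch_a = [1.0, 2.0]  # placeholder for one branch's output
    branch_b = [3.0, 4.0]  # placeholder for the other branch's output
    for name in ("sum", "product", "sum_product", "quadratic", "concat"):
        print(name, combine(branch_a, branch_b, name))
```

All element-wise methods keep the output dimension unchanged, whereas concatenation doubles it, which is presumably why the abstract lists a linear transformation of the concatenated output as a separate option.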
