Data Valuation with Gradient Similarity

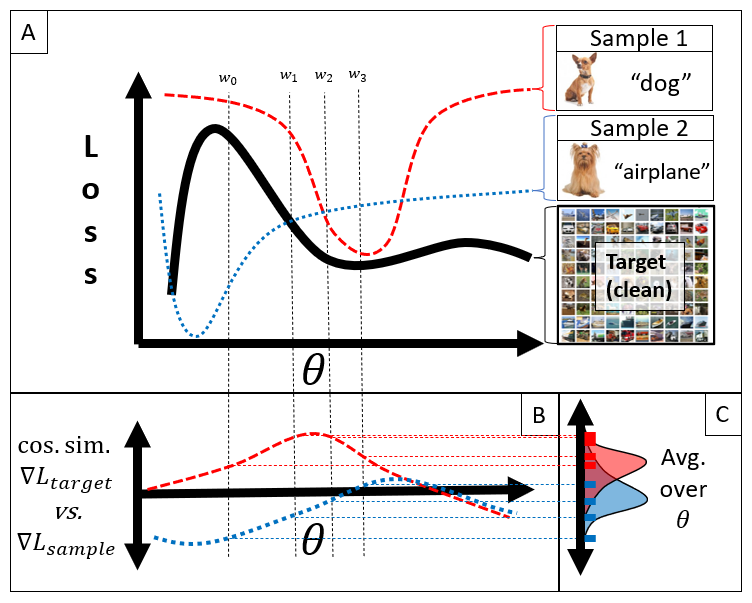

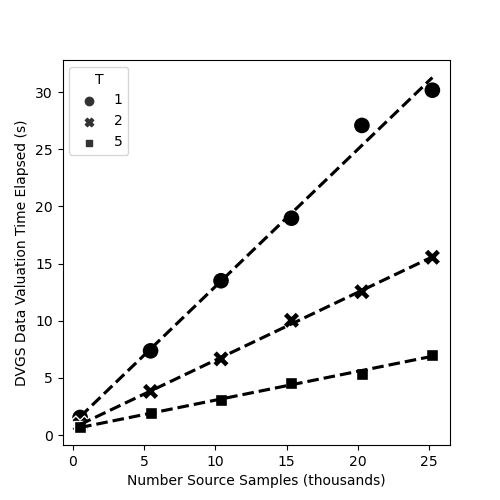

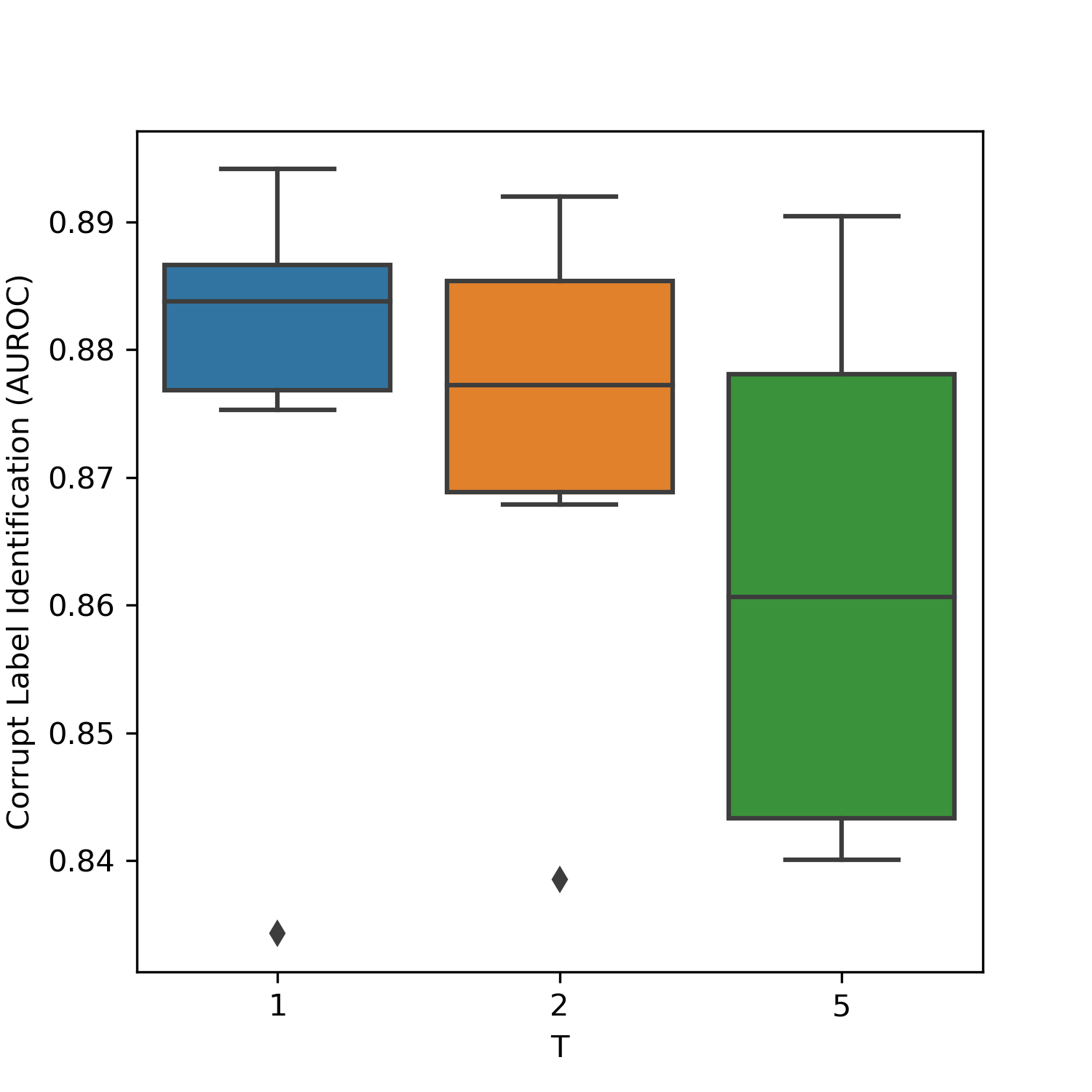

Abstract: High-quality data is crucial for accurate machine learning and actionable analytics; however, mislabeled or noisy data is a common problem in many domains. Distinguishing low- from high-quality data can be challenging, often requiring expert knowledge and considerable manual intervention. Data valuation algorithms are a class of methods that seek to quantify the value of each sample in a dataset based on its contribution or importance to a given predictive task. These data values have shown an impressive ability to identify mislabeled observations, and filtering out low-value data can boost machine learning performance. In this work, we present a simple alternative to existing methods, termed Data Valuation with Gradient Similarity (DVGS). This approach can be easily applied to any gradient descent learning algorithm, scales well to large datasets, and performs comparably to or better than baseline valuation methods on tasks such as corrupted label discovery and noise quantification. We evaluate DVGS on tabular, image, and RNA expression datasets to show its effectiveness across domains. Our approach can rapidly and accurately identify low-quality data, which can reduce the need for expert knowledge and manual intervention in data cleaning tasks.
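
The abstract does not spell out the algorithm, so the following is only a minimal sketch of how a gradient-similarity data value could be computed. It assumes a logistic regression model, a small trusted target set, and a noisy source set: each source sample is scored by the cosine similarity between its own loss gradient and the mean target gradient, averaged over a few gradient descent steps taken on the target set. Every name here (`gradient_similarity_values`, `make_data`, the learning rate, the synthetic data) is a hypothetical choice for illustration and not the authors' implementation.

```python
import math
import random

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def grad_logloss(w, x, y):
    """Gradient of the log loss for one sample under logistic regression."""
    err = sigmoid(sum(wi * xi for wi, xi in zip(w, x))) - y
    return [err * xi for xi in x]

def cosine(a, b):
    """Cosine similarity, defined as 0 when either vector is all zeros."""
    na = math.sqrt(sum(v * v for v in a))
    nb = math.sqrt(sum(v * v for v in b))
    if na == 0.0 or nb == 0.0:
        return 0.0
    return sum(p * q for p, q in zip(a, b)) / (na * nb)

def gradient_similarity_values(source, target, steps=20, lr=0.5):
    """Average cosine similarity between each source-sample gradient and the
    mean target gradient, tracked while descending on the target set.
    (Assumed setup; the abstract does not give these details.)"""
    w = [0.0] * len(target[0][0])
    totals = [0.0] * len(source)
    for _ in range(steps):
        target_grads = [grad_logloss(w, x, y) for x, y in target]
        g_target = [sum(col) / len(target_grads) for col in zip(*target_grads)]
        for i, (x, y) in enumerate(source):
            totals[i] += cosine(grad_logloss(w, x, y), g_target)
        # Plain gradient descent step on the target set.
        w = [wi - lr * gi for wi, gi in zip(w, g_target)]
    return [t / steps for t in totals]

def make_data(n, rng):
    """Synthetic two-class data: one informative feature plus a bias term."""
    data = []
    for _ in range(n):
        y = rng.randint(0, 1)
        center = 2.0 if y == 1 else -2.0
        data.append(([rng.gauss(center, 0.5), 1.0], y))
    return data

rng = random.Random(0)
target = make_data(40, rng)
source = make_data(40, rng)

# Corrupt the labels of the first 10 source samples.
flipped = set(range(10))
source = [(x, 1 - y) if i in flipped else (x, y)
          for i, (x, y) in enumerate(source)]

values = gradient_similarity_values(source, target)
flipped_mean = sum(values[i] for i in flipped) / len(flipped)
clean_mean = sum(v for i, v in enumerate(values) if i not in flipped) / (
    len(values) - len(flipped))
print("mean value, flipped labels:", round(flipped_mean, 3))
print("mean value, clean labels:  ", round(clean_mean, 3))
```

In this toy setting, samples with flipped labels push the model in roughly the opposite direction to the target set, so they should receive lower values than clean samples. Ranking source samples by value and inspecting the lowest ones is one way such scores could be used for corrupted label discovery.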
