Detecting Scams Using Large Language Models

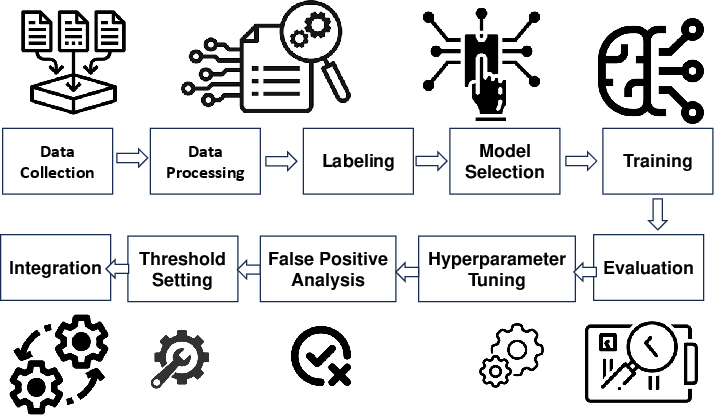

Abstract: Large language models (LLMs) have gained prominence in various applications, including security. This paper explores the utility of LLMs in scam detection, a critical aspect of cybersecurity. Departing from their more traditional applications, we propose using LLMs to identify scams such as phishing, advance fee fraud, and romance scams. We present notable security applications of LLMs and discuss the unique challenges posed by scams. Specifically, we outline the key steps involved in building an effective scam detector using LLMs: data collection, preprocessing, model selection, training, and integration into target systems. Additionally, we conduct a preliminary evaluation using GPT-3.5 and GPT-4 on a duplicated email, highlighting their proficiency in identifying common signs of phishing or scam emails. The results show that the models are effective at recognizing suspicious elements, but a comprehensive assessment across various language tasks is still needed. The paper concludes by underlining the importance of ongoing refinement and collaboration with cybersecurity experts to adapt to evolving threats.
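As a rough illustration of how the preprocessing and integration steps named in the abstract might fit together, the following minimal Python sketch builds a classification prompt for an email and parses a model's reply. It is not the paper's implementation: the prompt wording, the `SCAM`/`LEGITIMATE` answer format, and the helper names (`preprocess`, `build_prompt`, `parse_verdict`, `detect_scam`, `fake_model`) are all assumptions made for illustration, and `fake_model` is a keyword stub standing in for a real call to a model such as GPT-3.5 or GPT-4.

```python
from typing import Callable

# Scam categories named in the abstract.
SCAM_TYPES = ("phishing", "advance fee fraud", "romance scam")


def preprocess(email_text: str) -> str:
    """Collapse runs of whitespace so the prompt stays compact."""
    return " ".join(email_text.split())


def build_prompt(email_text: str) -> str:
    """Build a classification prompt (the wording is a hypothetical example)."""
    return (
        "You are a security assistant. Decide whether the email below is a scam "
        f"(for example: {', '.join(SCAM_TYPES)}).\n"
        "Answer with SCAM or LEGITIMATE on the first line, "
        "then list any suspicious elements.\n\n"
        f"Email:\n{preprocess(email_text)}"
    )


def parse_verdict(response: str) -> bool:
    """Return True if the first line of the model's reply starts with SCAM."""
    lines = response.strip().splitlines()
    first_line = lines[0].strip().upper() if lines else ""
    return first_line.startswith("SCAM")


def detect_scam(email_text: str, ask_model: Callable[[str], str]) -> bool:
    """Run one email through the model and return the parsed verdict.

    `ask_model` is any function that takes a prompt and returns the model's
    text reply; a real deployment would wrap an LLM API call here.
    """
    return parse_verdict(ask_model(build_prompt(email_text)))


def fake_model(prompt: str) -> str:
    """Stand-in for a real LLM call (assumption: a single keyword heuristic)."""
    if "verify your account" in prompt.lower():
        return "SCAM\n- Urgent request to verify account credentials"
    return "LEGITIMATE"
```

Passing the model as a plain callable keeps the detector independent of any particular provider, so the same code could be pointed at different models when comparing them on the same emails.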

