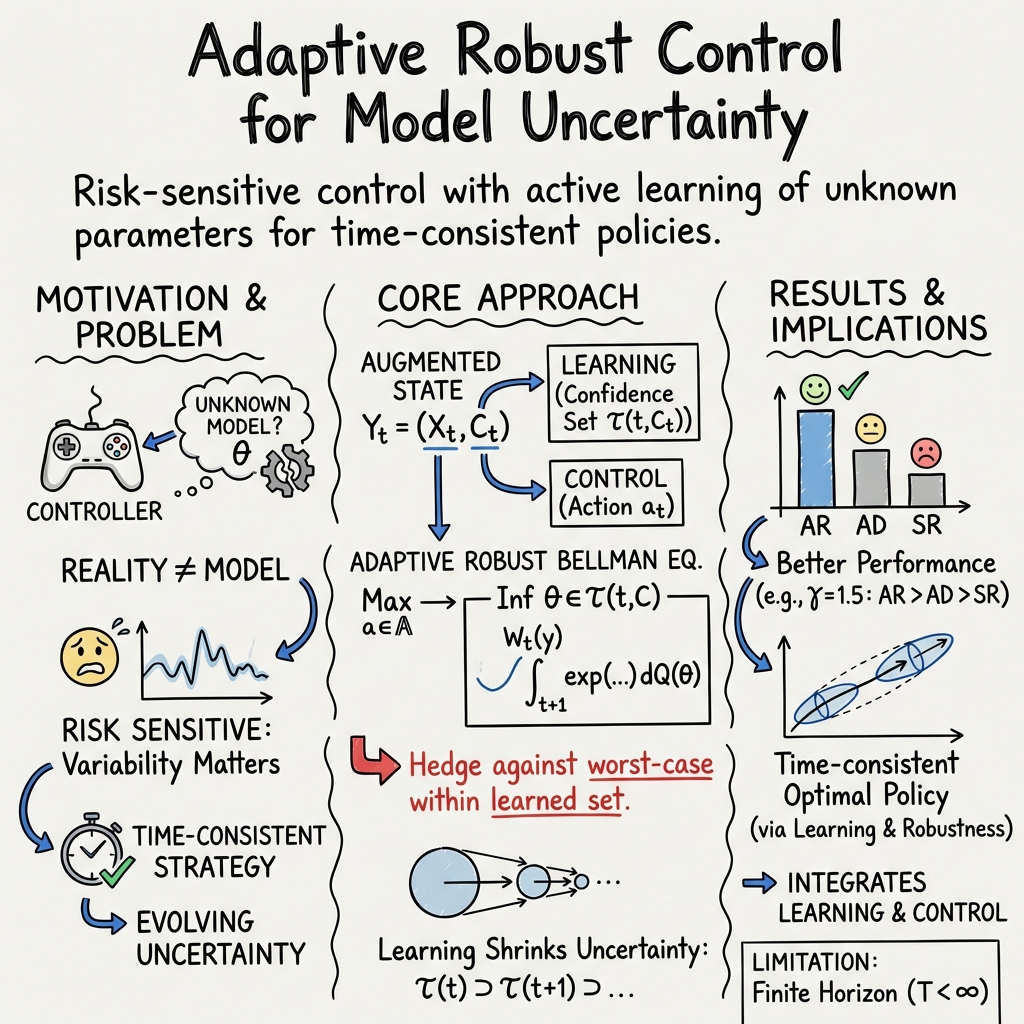

- The paper presents an adaptive robust framework to address finite-horizon risk-sensitive Markov decision problems by incorporating recursive confidence region estimates for model uncertainty.

- It employs machine learning techniques, such as Gaussian Process surrogates and regression Monte Carlo, for value function approximation in high-dimensional spaces.

- Numerical experiments demonstrate that the adaptive robust strategy outperforms both adaptive and strong robust methods across varying risk-sensitivity levels.

Risk-Sensitive Markov Decision Problems Under Model Uncertainty

Introduction

The paper "Risk-sensitive Markov decision problems under model uncertainty: finite time horizon case" (2104.06915) addresses a class of discrete-time Markovian control problems with a focus on risk sensitivity and model uncertainty. The authors propose a methodology leveraging adaptive robust control merged with machine learning techniques to efficiently solve these problems over a finite time horizon.

The contribution is set against a backdrop of significant prior work on model uncertainty in stochastic control. The adaptive robust framework extends existing robust and adaptive control approaches, incorporating recursive confidence regions and machine learning to optimize under a risk-sensitive criterion. The work differs from earlier ones in three respects: it allows intermediate rewards rather than only a terminal reward, it uses a discounted risk-sensitive criterion, and it derives the corresponding Bellman equations.

The research formulates the problem of risk-sensitive Markovian control with model uncertainty through a discounted cost criterion. The problem setup includes a measurable space (Ω,F), a finite time horizon T, and a parameter space Θ reflecting model uncertainty. The state process, steered by an admissible control process, evolves according to stochastic dynamics whose form is known but whose parameter θ ∈ Θ is not.

The paper defines a discounted, risk-sensitive optimization criterion based on an entropic risk measure, where the risk-sensitivity factor γ quantifies the decision-maker's aversion to, or preference for, risk. The core challenge is to solve this control problem without knowing the true model parameter θ∗, which calls for an adaptive robust approach that chooses strategies based on recursive confidence regions for the parameter estimates.
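To make the entropic criterion concrete, the following is a minimal sketch that estimates (1/γ) ln E[exp(γ R)] from samples of a discounted reward R. The function names, the sample-based estimator, and the sign convention (R is treated as a reward, so γ < 0 penalizes variability) are assumptions for illustration; the paper's exact criterion may be normalized differently.

```python
import math


def discounted_reward(rewards, beta):
    """Discounted sum of per-step rewards r_0, r_1, ... with factor beta."""
    return sum(beta ** t * r for t, r in enumerate(rewards))


def entropic_risk(samples, gamma):
    """Sample estimate of (1/gamma) * ln E[exp(gamma * R)].

    gamma == 0 is treated as the risk-neutral limit (plain mean).
    """
    if gamma == 0.0:
        return sum(samples) / len(samples)
    scaled = [gamma * r for r in samples]
    shift = max(scaled)  # log-sum-exp shift to avoid overflow
    mean_exp = sum(math.exp(s - shift) for s in scaled) / len(scaled)
    return (shift + math.log(mean_exp)) / gamma


# Two equally likely reward paths (made-up numbers, not from the paper).
paths = [[1.0, 1.0, 1.0], [0.0, 0.0, 0.0]]
totals = [discounted_reward(p, beta=0.5) for p in paths]
risk_averse = entropic_risk(totals, gamma=-1.0)   # falls below the mean
risk_seeking = entropic_risk(totals, gamma=1.0)   # rises above the mean
```

For small |γ| the estimate behaves like the mean plus (γ/2) times the variance, which is why the sign of γ separates risk-averse from risk-seeking behavior under this convention.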

Adaptive Robust Control Approach

Building upon the adaptive robust paradigm from prior works, this study uses a novel set of recursive Bellman equations to tackle the control problem in question. The control process adaptation involves learning about the model's unknown parameters via recursive confidence region constructions. The methodology allows controllers to derive optimal policies through dynamic programming, explicitly accommodating model uncertainty.
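The recursive flavor of the confidence regions can be sketched with a deliberately simple case: a scalar unknown mean θ observed through Gaussian noise with known standard deviation. The class name `RecursiveInterval`, the known-σ assumption, and the normal-quantile interval are all illustrative choices, not the construction used in the paper.

```python
import math
from dataclasses import dataclass


@dataclass
class RecursiveInterval:
    sigma: float          # known noise standard deviation (assumed)
    kappa: float = 1.96   # normal quantile for roughly 95% coverage
    n: int = 0            # number of observations seen so far
    mean: float = 0.0     # running point estimate of theta

    def update(self, z):
        """Fold one new observation into the estimate and return the region."""
        self.n += 1
        self.mean += (z - self.mean) / self.n  # recursive sample mean
        return self.region()

    def region(self):
        """Current confidence interval; the whole line before any data."""
        if self.n == 0:
            return (-math.inf, math.inf)
        half = self.kappa * self.sigma / math.sqrt(self.n)
        return (self.mean - half, self.mean + half)


# Feed three made-up observations; the interval narrows with each one.
ci = RecursiveInterval(sigma=1.0)
regions = [ci.update(z) for z in [1.0, 3.0, 2.0]]
```

The point of the recursion is that each update needs only the previous estimate and the new observation, so the region can be carried along as part of the state in the dynamic programming recursion.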

A notable feature is that the method avoids grid-based treatment of high-dimensional state spaces and relies instead on Gaussian Process (GP) surrogates and regression Monte Carlo. Confidence regions are built from parameter estimates that are updated recursively as new observations arrive, so the set of models the controller guards against is refined over time.
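As a rough picture of what a GP surrogate does here, the sketch below fits a GP posterior mean to values observed at scattered design points and then evaluates it at a new state. It uses NumPy only, a squared-exponential kernel with fixed hyperparameters, and a quadratic stand-in for the value function; all of these are assumptions for illustration. In regression Monte Carlo the targets would be simulated continuation values rather than a known formula.

```python
import numpy as np


def rbf_kernel(a, b, length_scale=1.0):
    """Squared-exponential kernel between point sets a (n, d) and b (m, d)."""
    sq = (np.sum(a ** 2, axis=1)[:, None]
          + np.sum(b ** 2, axis=1)[None, :]
          - 2.0 * a @ b.T)
    return np.exp(-0.5 * np.maximum(sq, 0.0) / length_scale ** 2)


def fit_gp_mean(x_train, y_train, noise=1e-4, length_scale=1.0):
    """Return a function that evaluates the GP posterior mean at new points."""
    k = rbf_kernel(x_train, x_train, length_scale)
    k += noise * np.eye(len(x_train))          # observation noise / jitter
    chol = np.linalg.cholesky(k)
    alpha = np.linalg.solve(chol.T, np.linalg.solve(chol, y_train))

    def predict(x_new):
        return rbf_kernel(x_new, x_train, length_scale) @ alpha

    return predict


# Scattered design points in a two-dimensional state space (no grid).
rng = np.random.default_rng(0)
design = rng.uniform(-1.0, 1.0, size=(25, 2))
targets = -np.sum(design ** 2, axis=1)         # stand-in value function
surrogate = fit_gp_mean(design, targets)
estimate = surrogate(np.array([[0.2, -0.3]]))  # value at an unseen state
```

Because the surrogate can be queried at any state, the backward recursion never has to tabulate the value function on a grid, which is the motivation given for this design.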

Numerical Example and Machine Learning Implementation

The paper implements the proposed methodology in a linear-quadratic control setting, parameterizing a two-dimensional controlled process under model uncertainty. The solution involves discretizing the state space in a regression Monte Carlo framework and employing GP surrogates for value function approximation.

A computational experiment contrasts the adaptive robust (AR), adaptive (AD), and strong robust (SR) control strategies across several risk-sensitivity levels. The results indicate that AR outperforms both AD and SR across the tested values of γ, which suggests an advantage when the model is uncertain. These findings support the practical utility of the proposed adaptive robust framework.
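The difference between the three strategies can be illustrated with a one-step toy problem: AD optimizes against the point estimate only, SR guards against every parameter in Θ, and AR guards against the current confidence region. Everything in the sketch (the scalar dynamics x' = x + a + θ + ε, the three-point noise, the action grid, and all numbers) is a contrived assumption and does not reproduce the paper's experiment.

```python
import math

NOISE = [-1.0, 0.0, 1.0]                      # crude three-point noise (assumed)
ACTIONS = [i / 10 for i in range(-15, 16)]    # candidate controls


def entropic(values, gamma):
    """(1/gamma) * ln of the mean of exp(gamma * value), computed stably."""
    scaled = [gamma * v for v in values]
    shift = max(scaled)
    mean_exp = sum(math.exp(s - shift) for s in scaled) / len(scaled)
    return (shift + math.log(mean_exp)) / gamma


def value(x, a, theta, gamma):
    """Risk-sensitive one-step value with reward -(x')^2, x' = x + a + theta + eps."""
    return entropic([-(x + a + theta + eps) ** 2 for eps in NOISE], gamma)


def best_action(x, thetas, gamma):
    """Maximize over actions the worst case over the candidate parameters."""
    return max(ACTIONS, key=lambda a: min(value(x, a, th, gamma) for th in thetas))


def grid(lo, hi, n=21):
    """Evenly spaced candidate parameters on [lo, hi]."""
    return [lo + (hi - lo) * k / (n - 1) for k in range(n)]


x, gamma = 0.0, -0.5
theta_hat, half_width = 0.9, 0.3              # point estimate and region radius
big_theta = (-1.0, 1.0)                       # full parameter space
region = (max(big_theta[0], theta_hat - half_width),
          min(big_theta[1], theta_hat + half_width))

a_ad = best_action(x, [theta_hat], gamma)         # adaptive: trust the estimate
a_sr = best_action(x, grid(*big_theta), gamma)    # strong robust: all of Theta
a_ar = best_action(x, grid(*region), gamma)       # adaptive robust: region only
```

In this toy the three rules choose different controls because they hedge against different parameter sets. Which of them does best against the true parameter depends entirely on the numbers chosen, so the sketch shows the construction rather than a performance ranking.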

Conclusion

The study introduces a comprehensive adaptive robust approach for risk-sensitive Markov decision processes under model uncertainty, presenting a viable alternative to traditional control methods. Through rigorous theoretical foundations and a performant computational architecture integrating machine learning, the approach offers scalable solutions to high-dimensional control problems. Future research might extend these ideas to infinite horizon settings or explore more intricate nonlinear dynamics within the presented framework.