- The paper introduces a decomposition approach for indoor lighting prediction that breaks the task into geometry estimation, scene completion, and LDR-to-HDR conversion.

- It leverages differentiable warping and GAN-based panorama completion to preserve high-frequency lighting details and ensure end-to-end trainability.

- Quantitative evaluations demonstrate improved accuracy over prior methods that predict illumination directly with a single network, benefiting applications in AR, VR, and robotics.

"Neural Illumination: Lighting Prediction for Indoor Environments" (1906.07370)

Overview

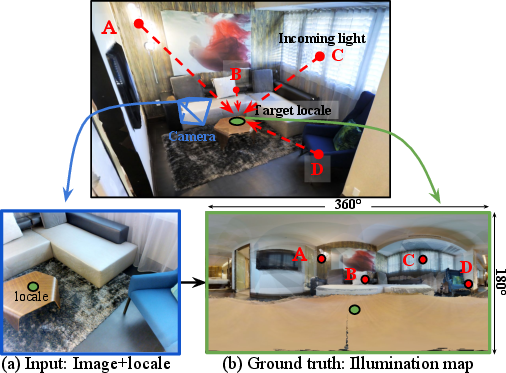

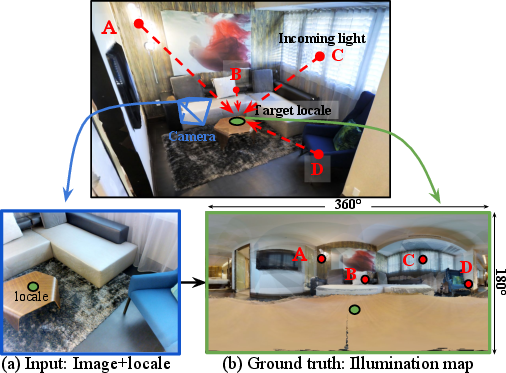

The paper "Neural Illumination: Lighting Prediction for Indoor Environments" estimates illumination in indoor scenes from a single LDR (low dynamic range) image: given a selected pixel, it predicts the HDR (high dynamic range) illumination map at the corresponding 3D point in the observed scene. Previous methods use a single neural network to predict illumination directly, and they often fail to capture high-frequency lighting details because the mapping from image to illumination depends on complex 3D geometry. The proposed method, termed "Neural Illumination," decomposes the prediction task into three more manageable sub-tasks: geometry estimation, scene completion, and LDR-to-HDR conversion. All stages are fully differentiable, and the pipeline is trained with supervision both on the individual sub-tasks and end-to-end.

Methodology

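The core innovation in this work is the decomposition of the illumination prediction problem, which prior work treated as a single monolithic regression. The pipeline consists of the three learned sub-tasks plus a differentiable warping step that connects geometry estimation to panorama completion: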

- Geometry Estimation: A neural network estimates the 3D scene structure from a single LDR image, generating a "plane equation" for each pixel and subsequently inferring depths.

- Differentiable Warping: Uses the estimated geometry to warp the input image into a spherical (panoramic) projection centered on the location of interest. This step maps the visible part of the scene into the coordinate frame of the illumination map.

- LDR Panorama Completion: Employs a generative adversarial network to predict missing or unobserved lighting in the warped panorama, thus completing the visual information from the scene.

- LDR-to-HDR Conversion: Transforms the completed LDR panorama into an HDR intensity map, recovering the light intensities that tone mapping and clipping remove from LDR images.
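The geometry behind the first two modules can be sketched as follows. This is a minimal illustration, not the paper's implementation: it assumes a pinhole camera (x right, y down, z forward), a per-pixel plane parameterized as `normal . X = offset`, and an equirectangular panorama layout. The function names (`pixel_ray`, `point_from_plane`, `to_panorama`) and the intrinsics are hypothetical. It covers only where a pixel lands in the panorama; the paper's warping additionally transfers colors in a differentiable way, which is not shown here.

```python
import math

def pixel_ray(u, v, fx, fy, cx, cy):
    """Back-project pixel (u, v) to a camera-space ray with z = 1 (pinhole model)."""
    return ((u - cx) / fx, (v - cy) / fy, 1.0)

def point_from_plane(ray, normal, offset):
    """Intersect the ray t * ray with the plane normal . X = offset."""
    denom = sum(n * r for n, r in zip(normal, ray))
    if abs(denom) < 1e-8:
        raise ValueError("ray is parallel to the predicted plane")
    t = offset / denom
    return tuple(t * r for r in ray)  # because ray z = 1, the z value is the depth

def to_panorama(point, center, width, height):
    """Equirectangular (col, row) of `point` as seen from `center`."""
    dx, dy, dz = (p - c for p, c in zip(point, center))
    norm = math.sqrt(dx * dx + dy * dy + dz * dz)
    if norm == 0.0:
        raise ValueError("point coincides with the panorama center")
    azimuth = math.atan2(dx, dz)  # 0 means straight ahead of the camera
    elevation = math.asin(max(-1.0, min(1.0, -dy / norm)))  # y points down
    col = (azimuth / (2.0 * math.pi) + 0.5) * width
    row = (0.5 - elevation / math.pi) * height
    return col, row

# A wall 2 m in front of the camera, seen through the central pixel.
ray = pixel_ray(320, 240, 500.0, 500.0, 320.0, 240.0)
wall_point = point_from_plane(ray, (0.0, 0.0, 1.0), 2.0)
col, row = to_panorama(wall_point, (0.0, 0.0, 0.0), 512, 256)
```

Changing `center` to the 3D point of a different selected pixel moves every scene point to a different panorama position, which is how the estimated geometry makes the predicted illumination spatially varying.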

Figure 1: Given a single LDR image and a selected 2D pixel, Neural Illumination infers a panoramic HDR illumination map.

Training and Dataset

The paper introduces a new benchmark dataset of input LDR images paired with output HDR illumination maps. The dataset is distinct in its use of HDR and depth information from Matterport3D. Ground-truth illumination maps are synthesized by warping and blending the RGB-D inputs, which yields realistic maps at diverse indoor locations.

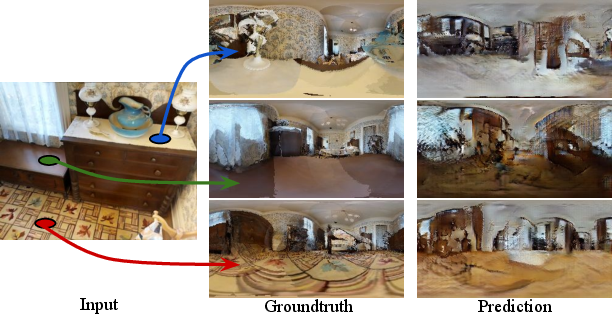

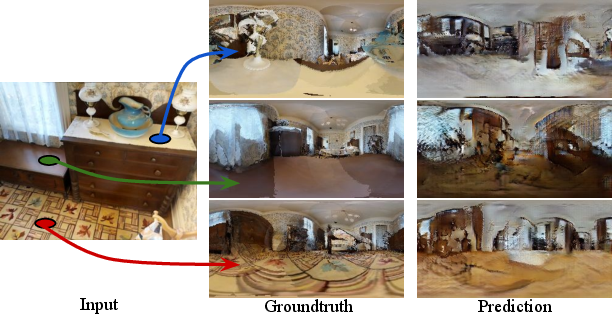

Figure 2: Spatially varying illumination generation capabilities enabled through 3D geometry.

Results and Evaluation

The effectiveness of Neural Illumination is supported by quantitative and qualitative evaluations against existing methods, particularly those using end-to-end networks without task decomposition [Gardner et al., 2017]. The new approach demonstrates superior performance in capturing high-frequency lighting details (e.g., small, intense light sources) and can estimate spatially varying illumination that depends on the selected pixel's location in the image.

The evaluation metrics include pixel-wise ℓ2 error on both logarithmic and raw HDR intensities, and diffuse convolution error, which compares the maps after a cosine-weighted (diffuse) convolution. Together they measure both the accuracy of the predicted map and how faithfully it would light a virtual object.
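These metrics can be sketched in a few lines. The code below is an illustration under stated assumptions, not the paper's evaluation code: panoramas are flattened row-major lists of scalar intensities in an equirectangular layout, the log error uses `log(1 + x)` to avoid taking the log of zero, and the choice of surface normals and of mean squared error as the aggregate are assumptions. All function names are hypothetical.

```python
import math

def l2_error(pred, target, use_log=False):
    """Mean squared error over flattened HDR intensities, optionally in log space."""
    f = math.log1p if use_log else (lambda x: x)
    return sum((f(p) - f(t)) ** 2 for p, t in zip(pred, target)) / len(target)

def pixel_dirs(width, height):
    """Unit direction and solid angle of each equirectangular pixel, row-major."""
    out = []
    for r in range(height):
        polar = (r + 0.5) / height * math.pi  # 0 = straight up, pi = straight down
        for c in range(width):
            az = (c + 0.5) / width * 2.0 * math.pi
            d = (math.sin(polar) * math.cos(az),
                 math.cos(polar),
                 math.sin(polar) * math.sin(az))
            solid = math.sin(polar) * (math.pi / height) * (2.0 * math.pi / width)
            out.append((d, solid))
    return out

def diffuse_irradiance(pano, dirs, normal):
    """Cosine-weighted integral of radiance over the hemisphere around `normal`."""
    total = 0.0
    for value, (d, solid) in zip(pano, dirs):
        cos_term = sum(a * b for a, b in zip(d, normal))
        if cos_term > 0.0:
            total += value * cos_term * solid
    return total

def diffuse_error(pred, target, dirs, normals):
    """Mean squared difference in diffuse irradiance over a set of surface normals."""
    diffs = [(diffuse_irradiance(pred, dirs, n)
              - diffuse_irradiance(target, dirs, n)) ** 2 for n in normals]
    return sum(diffs) / len(diffs)

# Sanity check: uniform radiance 1 gives irradiance close to pi for any normal.
dirs = pixel_dirs(64, 32)
uniform = [1.0] * len(dirs)
irradiance_up = diffuse_irradiance(uniform, dirs, (0.0, 1.0, 0.0))
```

The log-space error keeps a few very bright pixels from dominating the score, while the raw-intensity and diffuse errors are sensitive to exactly those pixels, since they dominate how a virtual object is lit.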

Figure 3: Qualitative results demonstrating superior high-frequency lighting detail capture.

Implications and Future Work

This research matters for AR/VR, robotics, and other fields that need realistic estimates of environmental lighting. The proposed method makes virtual renderings more realistic by providing more accurate lighting, and it shows that decomposing the problem can make illumination prediction easier to train and better at generalizing.

Looking forward, further improvements could be pursued by integrating explicit modeling of surface materials and exploring more advanced 3D geometric representations to improve out-of-view illumination estimations.

Conclusion

"Neural Illumination" introduces a decomposed approach to illumination prediction that leverages task decomposition and detailed geometry estimation to produce high-quality lighting maps. This method sets a promising precedent for future research in environmental perception and light interaction within AI-driven applications. Its modular design suggests possible extensions to more complex scenes and dynamic lighting conditions.