- The paper introduces methods for predicting unreported information retrieval (IR) metrics from reported ones using linear regression models.

- The study finds strong correlations among metrics like MAP, R-Prec, nDCG, and RBP, allowing one metric to serve as a proxy for another.

- The approach can reduce evaluation costs by using low-cost measures to reliably predict high-cost, deeply judged metrics.

Introduction

The paper explores how to assess the effectiveness of Information Retrieval (IR) systems by examining the relationships among evaluation metrics. Because different studies report different subsets of the many available metrics, the authors aim to predict unreported metrics from reported ones, enabling more comprehensive comparisons across studies. They also study whether high-cost measures can be predicted from low-cost alternatives, reducing evaluation expenses without sacrificing accuracy.

Correlation of Evaluation Metrics

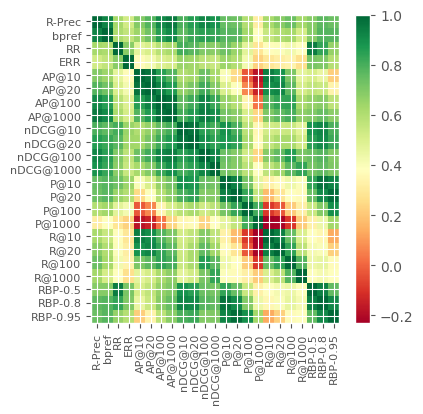

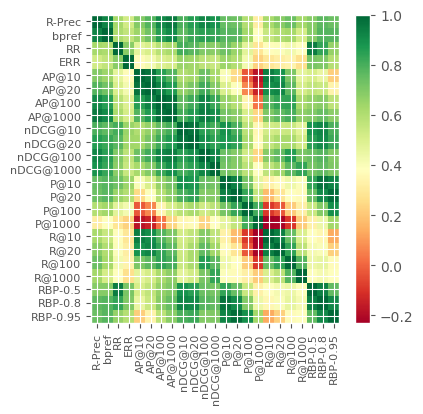

The study examines the correlations among 23 IR metrics computed on several TREC test collections, including MAP, R-Prec, nDCG, and several variants of RBP (rank-biased precision). Using Pearson correlation, the authors find strong correlations among MAP, R-Prec, and nDCG. RR (reciprocal rank) and RBP(p=0.5) are also strongly aligned, as are nDCG@20 and RBP(p=0.8).

Figure 1: Pearson Correlation between Metrics.

The correlation analysis shows which metrics can serve as reliable proxies for others, which can guide the choice of metrics to report. This matters when a study reports only a limited set of metrics and readers need to compare it with work that reported different ones.
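To make the correlation step concrete, here is a minimal sketch, assuming binary relevance vectors for the top-ranked documents of a few hypothetical systems. The helpers `rr`, `rbp`, and `metric_correlation` are illustrative names, not code from the paper. Each system's score is averaged over topics, and the Pearson correlation across systems is taken with NumPy's `np.corrcoef`; the paper's exact aggregation over collections may differ.

```python
import numpy as np

def rr(rels):
    """Reciprocal rank: 1 / rank of the first relevant document, or 0 if none."""
    for rank, rel in enumerate(rels, start=1):
        if rel:
            return 1.0 / rank
    return 0.0

def rbp(rels, p):
    """Rank-biased precision with persistence p and binary relevance.

    RBP = (1 - p) * sum_i rel_i * p^(i - 1), ranks i starting at 1.
    """
    return (1.0 - p) * sum(rel * p ** i for i, rel in enumerate(rels))

def metric_correlation(runs, metric_a, metric_b):
    """Pearson correlation, across systems, of topic-averaged scores.

    runs: one entry per system; each entry is a list of 0/1 relevance
    vectors, one per topic.
    """
    a = [np.mean([metric_a(t) for t in topics]) for topics in runs]
    b = [np.mean([metric_b(t) for t in topics]) for topics in runs]
    return np.corrcoef(a, b)[0, 1]

# Hypothetical toy runs: 4 systems x 2 topics, relevance of the top 5 documents.
runs = [
    [[1, 0, 0, 1, 0], [0, 1, 0, 0, 0]],
    [[0, 0, 1, 0, 0], [0, 0, 0, 0, 1]],
    [[1, 1, 0, 0, 0], [1, 0, 1, 0, 0]],
    [[0, 1, 1, 1, 0], [0, 0, 1, 0, 0]],
]

r = metric_correlation(runs, rr, lambda rels: rbp(rels, p=0.5))
print(f"Pearson r between RR and RBP(p=0.5): {r:.3f}")
```

With p=0.5, RBP puts half of its weight on the first rank, which is one intuitive reason it tracks RR closely; a real analysis would use many systems and topics rather than this toy set.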

Prediction of Evaluation Metrics

The authors use linear regression models to predict the score of a target metric from a combination of one to three other metrics. Metrics such as MAP, P@10, RBP(p=0.5), and RBP(p=0.8) can be predicted with high accuracy even from so few predictors, which offers a way to estimate metrics that a study did not report with acceptable reliability.
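The regression setup can be sketched as ordinary least squares over every subset of one to three predictor metrics. This is a minimal illustration, assuming synthetic stand-in data rather than TREC runs: the metric names are only labels, the target is a made-up linear combination plus noise, and held-out R² is used here as an illustrative accuracy measure that may differ from the paper's evaluation. The helpers `fit_linear`, `predict`, and `r_squared` are hypothetical names built on `np.linalg.lstsq`.

```python
from itertools import combinations

import numpy as np

def fit_linear(X, y):
    """Ordinary least squares with an intercept; returns [w_1, ..., w_k, b]."""
    A = np.column_stack([X, np.ones(len(X))])  # append a column of ones for the intercept
    coef, *_ = np.linalg.lstsq(A, y, rcond=None)
    return coef

def predict(coef, X):
    """Apply fitted weights and intercept to new predictor rows."""
    return np.column_stack([X, np.ones(len(X))]) @ coef

def r_squared(y_true, y_pred):
    """Coefficient of determination on held-out data."""
    ss_res = np.sum((y_true - y_pred) ** 2)
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)
    return 1.0 - ss_res / ss_tot

# Synthetic stand-in data, NOT results from the paper: 200 hypothetical runs,
# three "reported" metrics as predictors and one "unreported" target metric.
rng = np.random.default_rng(0)
names = ["P@10", "R-Prec", "nDCG"]
X = rng.uniform(0.0, 0.6, size=(200, 3))
y = 0.2 * X[:, 0] + 0.5 * X[:, 1] + 0.3 * X[:, 2] + rng.normal(0.0, 0.01, size=200)

X_train, X_test = X[:150], X[150:]
y_train, y_test = y[:150], y[150:]

# Fit one model per combination of one to three predictor metrics.
scores = {}
for k in (1, 2, 3):
    for cols in combinations(range(len(names)), k):
        idx = list(cols)
        coef = fit_linear(X_train[:, idx], y_train)
        key = tuple(names[c] for c in cols)
        scores[key] = r_squared(y_test, predict(coef, X_test[:, idx]))

for key, score in sorted(scores.items(), key=lambda kv: -kv[1]):
    print(f"{' + '.join(key):<22} held-out R^2 = {score:.3f}")
```

Holding out part of the data matters here: in-sample fit always improves as predictors are added, so only out-of-sample accuracy indicates whether a small predictor set is enough.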

Predicting High-Cost Measures from Low-Cost Measures

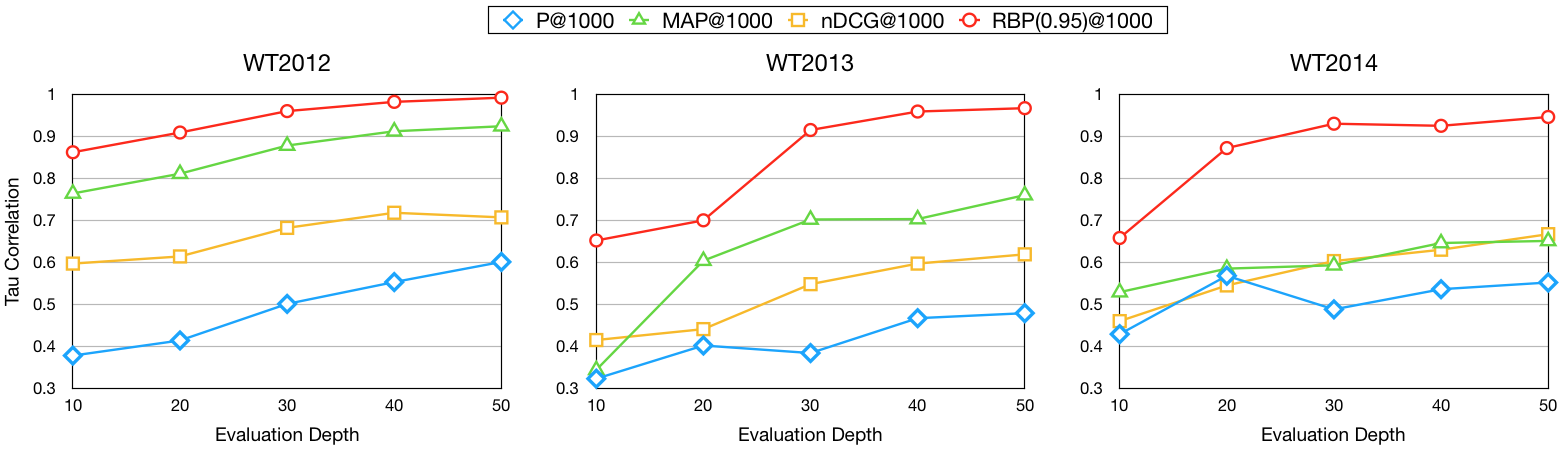

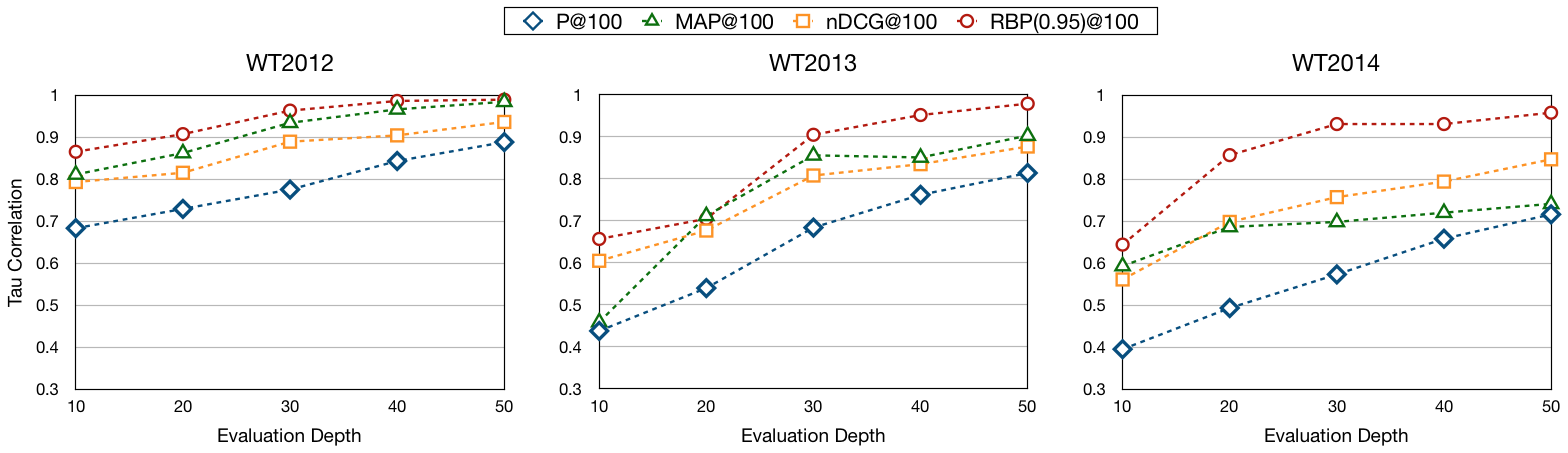

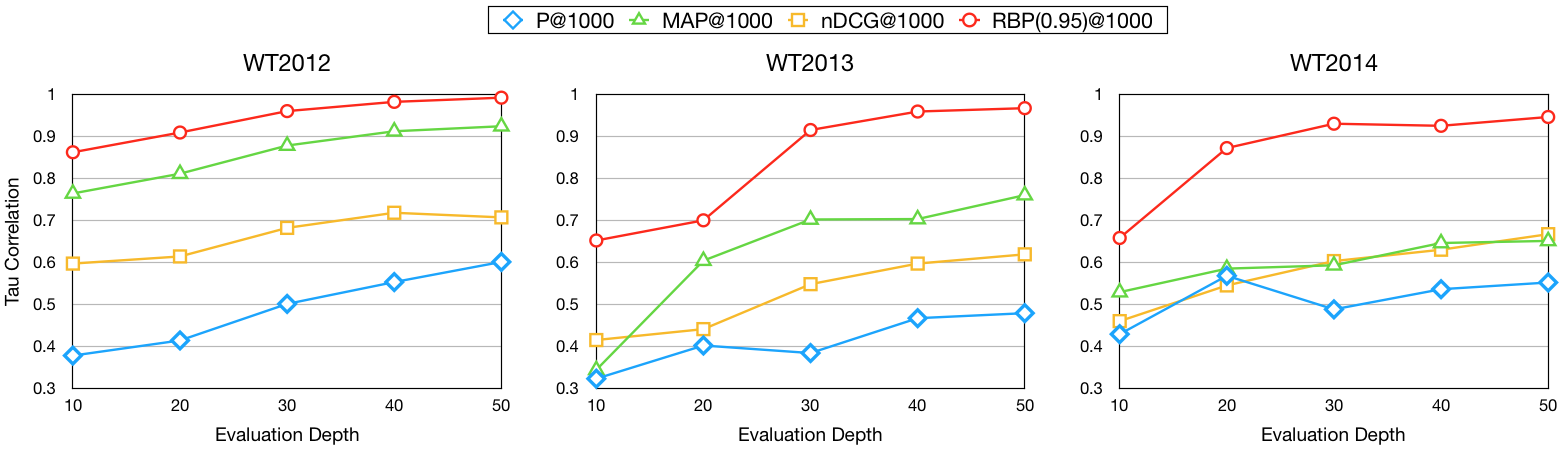

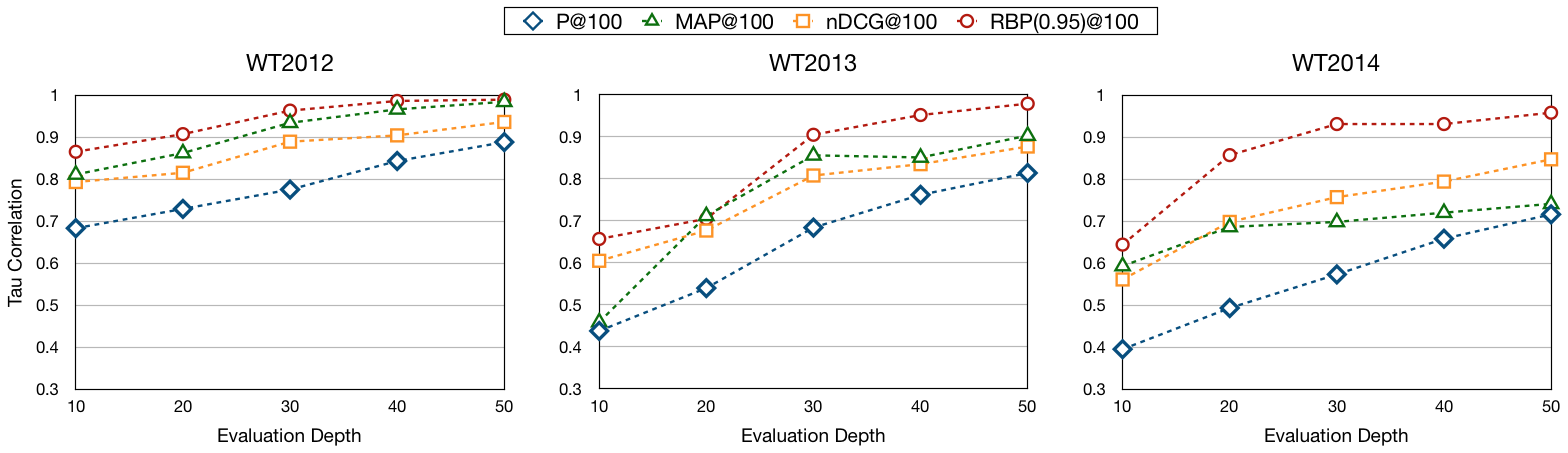

The paper also proposes predicting high-cost measures from low-cost ones. This is particularly useful for deep evaluation measures, whose cost grows with the number of documents that must be judged. The study shows that RBP computed with a judgment depth of 1000 can be predicted with high accuracy from measures computed with a judgment depth of only 30.

Figure 2: Judgment Depth of High-Cost Measures is 1000.

In practice, this strategy could reduce evaluation costs, letting researchers rely on less resource-intensive measures while still assessing IR systems comprehensively.
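One way to see why shallow judgments carry so much information about RBP is its residual: with judgments only to depth d, documents below d can add at most p^d to the score. The sketch below illustrates this standard RBP property on a hypothetical 1000-document ranking; it is not the authors' regression method, and the helper names `rbp_at_depth` and `rbp_residual` are illustrative. A regression model like the one the authors describe would take depth-30 scores such as `RBP@30` as its inputs.

```python
def rbp_at_depth(rels, p, depth):
    """RBP using judgments only down to `depth`; documents below it count as non-relevant."""
    return (1.0 - p) * sum(rel * p ** i for i, rel in enumerate(rels[:depth]))

def rbp_residual(p, depth):
    """Upper bound on the RBP mass that documents below `depth` could still add."""
    return p ** depth

# Hypothetical 1000-document ranking: positions 1, 8, 15, ... are relevant.
rels = [1 if i % 7 == 0 else 0 for i in range(1000)]

results = {}
for p in (0.5, 0.8, 0.95):
    shallow = rbp_at_depth(rels, p, depth=30)
    deep = rbp_at_depth(rels, p, depth=1000)
    results[p] = (shallow, deep)
    print(f"p={p}: RBP@30={shallow:.4f}  RBP@1000={deep:.4f}  "
          f"residual@30={rbp_residual(p, 30):.4f}")
```

For p=0.8 the residual at depth 30 is about 0.0012, so depth-30 judgments nearly pin down the deep score; for more patient settings such as p=0.95 it exceeds 0.2, which is where a learned prediction has more work to do.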

Conclusion

The paper highlights how predicting IR evaluation metrics can broaden comparative analysis in information retrieval research. By showing that high-cost, deeply judged metrics can be predicted accurately from their low-cost counterparts, the study opens avenues for efficient yet comprehensive system assessment. Future directions may include more sophisticated prediction models or larger datasets covering a broader range of IR evaluation metrics.