Reversible Duplex Transformer Layer

- The Reversible Duplex Transformer Layer is a reversible neural architecture that enables exact bidirectional sequence mapping using a palindromic stack and additive coupling.

- It reuses a single set of parameters to perform both forward and inverse tasks, reducing parameter interference while enhancing training efficiency.

- Empirical results show state-of-the-art non-autoregressive performance with significant speed improvements in machine translation and speech-to-speech applications.

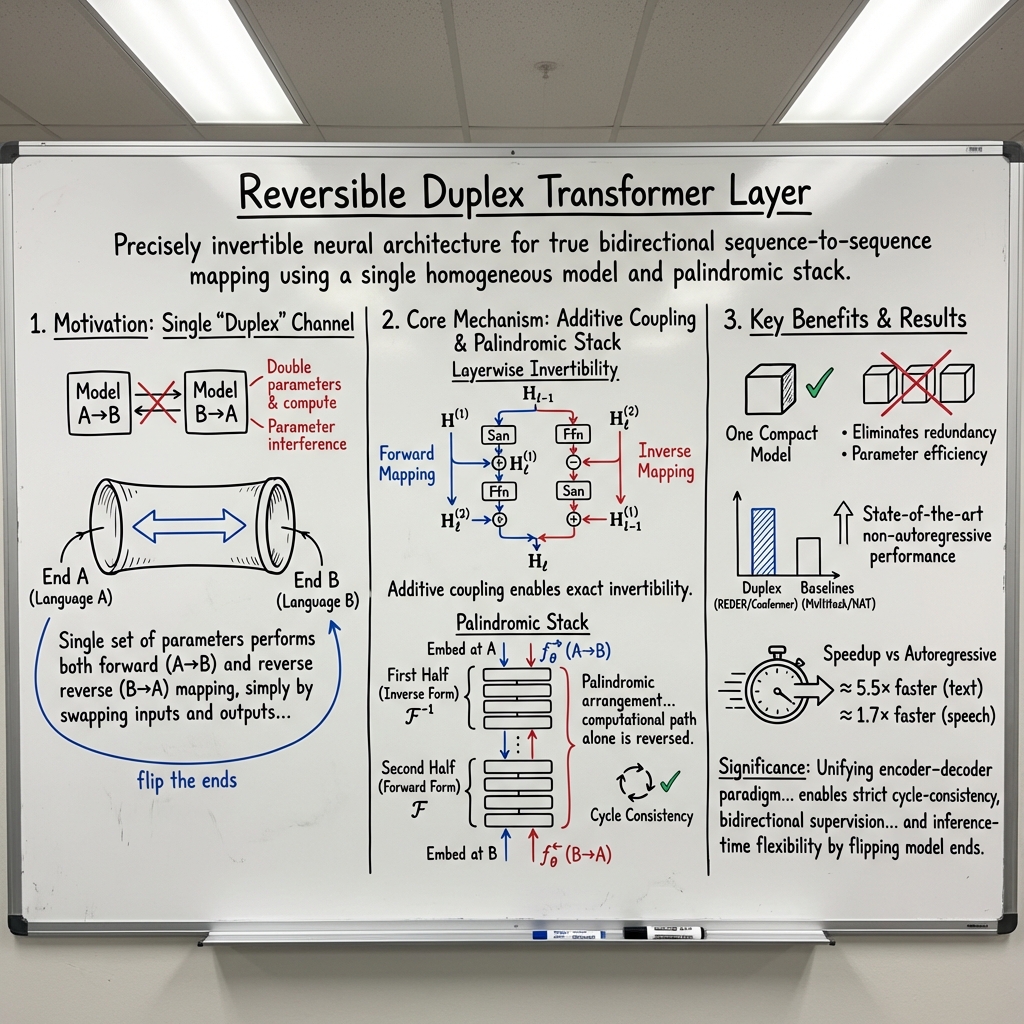

A Reversible Duplex Transformer Layer is an exactly invertible neural architecture that enables true bidirectional sequence-to-sequence mapping (most notably reversible machine translation and speech-to-speech translation) using a single homogeneous model and a palindromic stack of reversible layers. Each layer employs an additive coupling structure for exact invertibility, so the model can swap task direction (“flip the ends”) with no parameter changes while using supervision from both directions during training. This framework, exemplified by the REDER (Reversible Duplex Transformer) and Reversible Duplex Conformer designs, addresses the parameter interference seen in multitask or shared-encoder models and achieves state-of-the-art non-autoregressive performance with substantial speedups over autoregressive baselines (Zheng et al., 2021; Wu, 2023).

1. Core Design and Motivation

The Reversible Duplex Transformer Layer is motivated by the bidirectional nature of sequence-to-sequence (seq2seq) tasks. Traditional approaches to tasks such as machine translation or speech translation typically require either:

- Two separate models (one per direction), doubling parameters and compute, or

- A multitask/shared model, which suffers from “parameter interference” as encoder representations struggle to specialize for distinct source–target language pairs.

The reversible duplex approach treats the network as a duplex channel: one end specializes for each language (or modality), and the stack is designed to be precisely invertible in the continuous representation space. A single set of parameters performs both forward (A→B) and reverse (B→A) mapping, simply by swapping inputs and outputs and inverting the computational path (Zheng et al., 2021). This architecture is extended to speech modality tasks through the Reversible Duplex Conformer with diffusion models (Wu, 2023).

2. Formal Definition and Layerwise Invertibility

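Each Reversible Duplex Transformer Layer splits its input $H_{l-1}$ along the feature dimension into two halves:

$$H_{l-1} = \left[H_{l-1}^{(1)};\, H_{l-1}^{(2)}\right]$$

The reversible layer uses additive coupling of multi-head self-attention (San) and feed-forward networks (Ffn), written here in the standard additive-coupling form:

Forward Mapping

$$H_l^{(1)} = H_{l-1}^{(1)} + \mathrm{San}\left(H_{l-1}^{(2)}\right), \qquad H_l^{(2)} = H_{l-1}^{(2)} + \mathrm{Ffn}\left(H_l^{(1)}\right)$$

Inverse Mapping

$$H_{l-1}^{(2)} = H_l^{(2)} - \mathrm{Ffn}\left(H_l^{(1)}\right), \qquad H_{l-1}^{(1)} = H_l^{(1)} - \mathrm{San}\left(H_{l-1}^{(2)}\right)$$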

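All layers share parameters for both directions. The architecture's invertibility depends on the bijective design of these residual couplings and on the absence of information-destroying operations (such as a softmax or cross-attention) applied outside the coupled submodules (Zheng et al., 2021). The submodules themselves need not be invertible: each update to one half is computed from the other half, so it can be recomputed and subtracted during inversion. Self-attention is replaced with relative attention, and the stack is encoder-only (non-autoregressive), which makes the two directions operationally symmetric.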
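The invertibility argument can be checked numerically. The following is a minimal sketch, not the REDER implementation: `san_stub` and `ffn_stub` are hypothetical stand-ins for self-attention and the feed-forward network, chosen only to show that the coupled functions may themselves be non-invertible.

```python
import math

def san_stub(x):
    # Hypothetical stand-in for self-attention: any deterministic function works.
    mean = sum(x) / len(x)
    return [math.tanh(v - mean) for v in x]

def ffn_stub(x):
    # Hypothetical stand-in for the feed-forward network (ReLU-like, not invertible).
    return [max(0.0, 0.5 * v + 0.1) for v in x]

def add(a, b):
    return [u + v for u, v in zip(a, b)]

def sub(a, b):
    return [u - v for u, v in zip(a, b)]

def coupling_forward(h):
    # Split the feature vector into two halves and update them one at a time.
    half = len(h) // 2
    h1, h2 = h[:half], h[half:]
    y1 = add(h1, san_stub(h2))   # first half updated from the second half
    y2 = add(h2, ffn_stub(y1))   # second half updated from the new first half
    return y1 + y2               # list concatenation

def coupling_inverse(y):
    # Undo the two updates in reverse order by recomputing and subtracting them.
    half = len(y) // 2
    y1, y2 = y[:half], y[half:]
    h2 = sub(y2, ffn_stub(y1))
    h1 = sub(y1, san_stub(h2))
    return h1 + h2

x = [0.3, -1.2, 0.7, 2.0]
y = coupling_forward(x)
x_rec = coupling_inverse(y)
```

In floating point, `x_rec` matches `x` up to rounding error rather than bit for bit, which is why comparisons should use a tolerance.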

In the Reversible Duplex Conformer for speech, the layer likewise splits its input into two halves, then sequentially applies a macaron FFN, multi-head self-attention (MHSA), a convolution module (CNN), and another FFN, each coupling only one half at a time to preserve invertibility.

Its inverse subtracts the same modules in reverse order, guaranteeing layer-wise reversibility (Wu, 2023).
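A four-step coupling of this kind can be sketched generically. The assignment of modules to halves in `STEPS` and the toy module bodies below are assumptions made for illustration; the source only states the module order and that each step updates one half.

```python
import math

# Hypothetical toy modules standing in for the real Conformer submodules.
def macaron_ffn(x):
    return [0.5 * math.tanh(v) for v in x]

def mhsa(x):
    mean = sum(x) / len(x)
    return [v - mean for v in x]

def conv(x):
    # Circular 3-tap average as a stand-in for the convolution module.
    n = len(x)
    return [(x[i - 1] + x[i] + x[(i + 1) % n]) / 3.0 for i in range(n)]

def ffn(x):
    return [max(0.0, v) for v in x]

# Each step: (index of the half being updated, module applied to the other half).
STEPS = [(0, macaron_ffn), (1, mhsa), (0, conv), (1, ffn)]

def conformer_forward(halves):
    h = [list(halves[0]), list(halves[1])]
    for target, module in STEPS:
        update = module(h[1 - target])
        h[target] = [a + b for a, b in zip(h[target], update)]
    return h

def conformer_inverse(halves):
    h = [list(halves[0]), list(halves[1])]
    # Same modules, reverse order, subtraction instead of addition.
    for target, module in reversed(STEPS):
        update = module(h[1 - target])
        h[target] = [a - b for a, b in zip(h[target], update)]
    return h

x = [[0.3, -1.2, 0.5], [0.7, 2.0, -0.4]]
y = conformer_forward(x)
x_rec = conformer_inverse(y)
```

Because every step leaves the half it reads from untouched, the inverse can recompute exactly the same update and remove it.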

3. Palindromic Stack and Duplex Operation

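A central property is the palindromic arrangement: for even-depth stacks ($L$ layers, with layer $l$ denoted $\mathcal{F}_l$), one defines “forward” and “reverse” computation as compositions of the first half of layers in inverse form and the second half in forward form, or vice versa:

$$f_\theta^{\rightarrow} = \mathcal{F}_L \circ \cdots \circ \mathcal{F}_{L/2+1} \circ \mathcal{F}_{L/2}^{-1} \circ \cdots \circ \mathcal{F}_1^{-1}$$

$$f_\theta^{\leftarrow} = \mathcal{F}_1 \circ \cdots \circ \mathcal{F}_{L/2} \circ \mathcal{F}_{L/2+1}^{-1} \circ \cdots \circ \mathcal{F}_L^{-1}$$

so that $f_\theta^{\leftarrow} = \left(f_\theta^{\rightarrow}\right)^{-1}$.

Bidirectionality is realized by “flipping the ends”: for A→B, embed the sequence at end A and apply $f_\theta^{\rightarrow}$; for B→A, embed at B and apply $f_\theta^{\leftarrow}$. All operations and parameters are reused; the computational path alone is reversed (Zheng et al., 2021).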

This symmetric design facilitates bidirectional supervision and supports simultaneous use of both translation directions in a single compact model, with stringent cycle consistency.
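The palindromic composition can be illustrated with a toy stack. This is a sketch under stated assumptions: `make_layer` and its scalar parameters `a` and `b` are hypothetical, and each layer is a simple additive coupling rather than a real Transformer block.

```python
import math

def add(u, v):
    return [p + q for p, q in zip(u, v)]

def sub(u, v):
    return [p - q for p, q in zip(u, v)]

def make_layer(a, b):
    # Hypothetical reversible layer with its own parameters (a, b).
    def f(x):
        return [math.tanh(a * v) for v in x]

    def g(x):
        return [max(0.0, b * v) for v in x]

    def forward(h):
        h1, h2 = h
        y1 = add(h1, f(h2))
        y2 = add(h2, g(y1))
        return (y1, y2)

    def inverse(y):
        y1, y2 = y
        h2 = sub(y2, g(y1))
        h1 = sub(y1, f(h2))
        return (h1, h2)

    return forward, inverse

# One shared set of layers serves both directions.
layers = [make_layer(0.1 * (i + 1), 0.2 * (i + 1)) for i in range(4)]

def f_forward(h):
    # A -> B: first half of the stack in inverse form, second half in forward form.
    half = len(layers) // 2
    for _, inv in layers[:half]:
        h = inv(h)
    for fwd, _ in layers[half:]:
        h = fwd(h)
    return h

def f_reverse(h):
    # B -> A: walk the same layers from the other end, swapping the forms.
    half = len(layers) // 2
    for _, inv in reversed(layers[half:]):
        h = inv(h)
    for fwd, _ in reversed(layers[:half]):
        h = fwd(h)
    return h

x = ([0.3, -1.2], [0.7, 2.0])
y = f_forward(x)
x_rec = f_reverse(y)
```

The two functions differ only in traversal order and in which form of each layer they call, so `f_reverse` undoes `f_forward` without any additional parameters.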

4. Loss Functions and Bidirectional Training

Beyond standard sequence-level loss (e.g., CTC or MSE for speech, or likelihood for text), duplex models introduce additional regularizers to encourage invertibility and bidirectional consistency (Wu, 2023):

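- Layerwise forward-backward agreement, which encourages the hidden states of the forward pass to agree, layer by layer, with those of the reverse pass.

- Cycle consistency loss, which penalizes the difference between an input and its reconstruction after mapping A→B and then B→A.

- Directional losses (CTC or MSE), applied separately to each translation direction.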

The total loss aggregates these with predetermined weights, favoring models that preserve information under both forward and inverse mappings, thus enforcing strong duplex properties absent from standard seq2seq models.
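How such terms might combine can be sketched as follows. The cosine-distance form of the agreement term, the MSE form of the cycle term, and the weights `w_fba` and `w_cc` are all assumptions made for illustration; the source does not give the exact formulas or weight values.

```python
import math

def cosine_distance(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return 1.0 - dot / (norm_u * norm_v)

def mse(u, v):
    return sum((a - b) ** 2 for a, b in zip(u, v)) / len(u)

def layerwise_agreement(fwd_states, rev_states):
    # Average distance between forward and reverse hidden states at the same depth.
    pairs = list(zip(fwd_states, rev_states))
    return sum(cosine_distance(f, r) for f, r in pairs) / len(pairs)

def total_loss(loss_ab, loss_ba, fwd_states, rev_states, x, x_cycle,
               w_fba=0.1, w_cc=0.1):
    # Directional losses plus weighted regularizers (weights are hypothetical).
    agreement = layerwise_agreement(fwd_states, rev_states)
    cycle = mse(x, x_cycle)
    return loss_ab + loss_ba + w_fba * agreement + w_cc * cycle

# Toy values: one layer agrees perfectly, the other is orthogonal.
fwd_states = [[1.0, 0.0], [0.0, 2.0]]
rev_states = [[1.0, 0.0], [2.0, 0.0]]
x = [1.0, 2.0]
x_cycle = [1.0, 2.5]  # imperfect reconstruction after A -> B -> A
loss = total_loss(2.0, 3.0, fwd_states, rev_states, x, x_cycle)
```

With these toy values the agreement term is 0.5 and the cycle term is 0.125, so the two regularizers add 0.0625 to the directional losses.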

5. Empirical Performance and Comparative Results

Experiments demonstrate the efficacy of reversible duplex layers across both text and speech modalities:

- On WMT’14 English–German, REDER achieves 27.50 BLEU (En→De) and 31.25 BLEU (De→En), matching or exceeding multitask and non-autoregressive baselines by up to +1.3 BLEU, and decoding approximately 5.5× faster than the autoregressive Transformer (Zheng et al., 2021).

- On WMT’16 En–Ro: 33.60/34.03 BLEU, outperforming separate NAT baselines.

- In speech-to-speech translation (Fisher Es→En), the Reversible Duplex Conformer achieves 51.8% ASR-BLEU standalone; with duplex diffusion objectives, this rises to 57.4% ASR-BLEU, a +1.5 absolute gain over the strongest UnitY baseline, and one-pass reversal runs ≈1.7× faster than the two-pass cascaded models (Wu, 2023).

A plausible implication is that the duplex approach nearly closes the gap to strong autoregressive models, while drastically improving latency and model compactness.

6. Architectural Hyperparameters and Implementation Notes

Representative hyperparameters from empirical results are:

| Parameter | Machine translation (Zheng et al., 2021) | Speech (Wu, 2023) |
|---|---|---|
| Stack depth | 12 (6 reverse + 6 forward) | 17 (8 reverse + 9 forward) |
| Model width (d) | 1024 | 1024 |
| FFN inner dimension | 4096 (4×d) | 4096 |
| Attention heads | 16 (d/16 each) | 16 |
| Coupling submodules | San + Ffn | FFN, MHSA, CNN, FFN |
| Dropout | Varied | 0.1 (attention, FFN) |

In all settings, every residual connection couples only one half of the split representation, enabling exact layerwise reversal by subtraction. Relative self-attention is used throughout, and all modules implement layer normalization in "pre-LN" form.
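The derived quantities in the table follow from simple arithmetic, sketched below. `DuplexConfig` is a hypothetical container introduced only for this example and does not correspond to any released code.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class DuplexConfig:
    # Hypothetical config object; field names are illustrative only.
    depth: int
    reverse_layers: int
    d_model: int = 1024
    ffn_dim: int = 4096
    heads: int = 16

    @property
    def forward_layers(self) -> int:
        return self.depth - self.reverse_layers

    @property
    def head_dim(self) -> int:
        # Width of each attention head: d / number of heads.
        return self.d_model // self.heads

    @property
    def ffn_ratio(self) -> int:
        return self.ffn_dim // self.d_model

mt = DuplexConfig(depth=12, reverse_layers=6)
speech = DuplexConfig(depth=17, reverse_layers=8)
```

For both settings this gives 64 dimensions per attention head and an FFN expansion factor of 4.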

Ablation studies confirm that duplex coupling even without diffusion outperforms single-direction Conformer or Transformer baselines, while the addition of duplex diffusion objectives further improves bidirectional performance.

7. Significance and Extensions

The Reversible Duplex Transformer Layer establishes a principled architecture for reversible sequence modeling, unifying the encoder–decoder paradigm and eliminating redundancy inherent to dual-model or multitask systems. These reversible layers enable strict cycle-consistency, bidirectional supervision, parameter efficiency, and inference-time flexibility by flipping model ends. Extensions to Conformer-based architectures indicate modality generalization beyond text, providing a single, efficient, and invertible network for sequence transduction in both directions (Zheng et al., 2021, Wu, 2023).