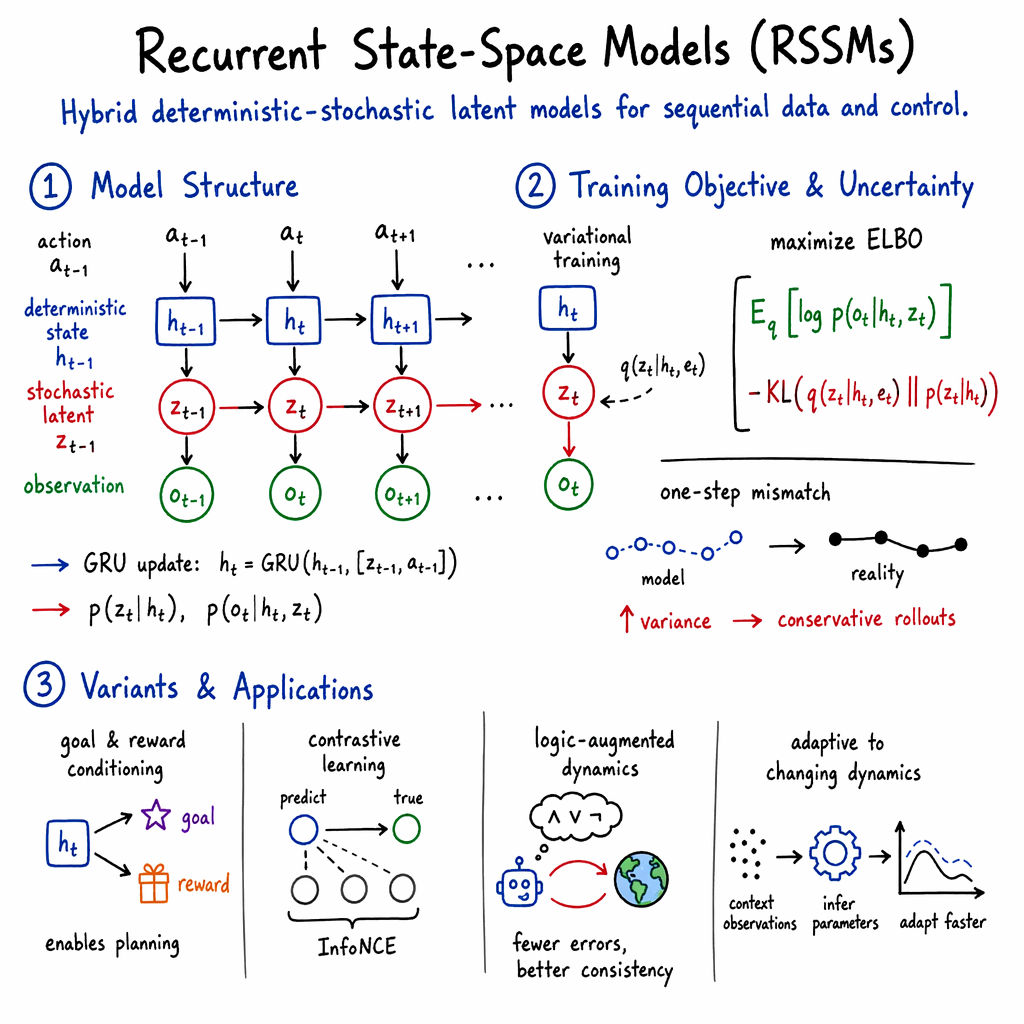

Recurrent State-Space Model (RSSM)

- RSSM is a latent variable model that combines deterministic recurrence (e.g., GRU) and stochastic state transitions to effectively capture complex sequential dynamics.

- It employs variational inference to learn latent representations, facilitating robust planning and control in model-based reinforcement learning and robotic applications.

- Architectural variants introduce contrastive objectives, goal conditioning, and adaptations for missing or occluded data, enhancing RSSM's efficiency and applicability.

A Recurrent State-Space Model (RSSM) is a class of latent variable models designed for sequential data, combining both deterministic and stochastic components to jointly model observation dynamics and uncertainty in controlled dynamical systems. RSSMs have become central to modern model-based reinforcement learning (MBRL), robotic control from high-dimensional observations, and complex sequential prediction in partially observed or nonstationary environments.

1. Formal Structure and Generative Model

RSSMs unify recurrent neural architectures with probabilistic state-space models, parameterizing latent state transitions using a hybrid of deterministic recurrence (typically a GRU or similar) and explicit stochastic latent variables. At each time step $t$, the model maintains:

- a deterministic hidden state $h_t$, encoding long-term memory;

- a stochastic latent $z_t$, often drawn from a simple continuous (e.g., Gaussian) or discrete distribution;

- an exogenous action $a_t$, when modeling controlled systems;

- observed or encoded data derived from the actual observation $o_t$.

The standard generative process factorizes as:

$$p(o_{1:T}, z_{1:T} \mid a_{1:T}) = \prod_{t=1}^{T} p(o_t \mid h_t, z_t)\, p(z_t \mid h_t)$$

with deterministic recurrence

$$h_t = f(h_{t-1}, z_{t-1}, a_{t-1})$$

and typically either continuous (Gaussian) or discrete (categorical) latent variables. The stochastic transition $p(z_t \mid h_t)$ and the emission $p(o_t \mid h_t, z_t)$ are parameterized by neural networks, as is the approximate posterior $q(z_t \mid h_t, o_t)$ for variational inference. This design enables RSSMs to efficiently capture both system memory and complex, multimodal uncertainty (Becker et al., 2022).
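The generative step can be illustrated with a minimal scalar sketch. It assumes a single tanh cell as a stand-in for the GRU and a Gaussian prior whose mean and standard deviation are simple functions of the hidden state. The names `rssm_prior_step`, `rollout`, and the entries of `PARAMS` are hypothetical and chosen only for illustration.

```python
import math
import random

# Hypothetical scalar weights; a real RSSM learns neural-network parameters.
PARAMS = {"w_h": 0.8, "w_z": 0.3, "w_a": 0.5, "w_mu": 1.0, "w_logstd": -0.5}

def rssm_prior_step(h_prev, z_prev, a_prev, params, rng):
    """One generative step: update h deterministically, then sample z from the prior."""
    # Deterministic recurrence h_t = f(h_{t-1}, z_{t-1}, a_{t-1}).
    # A scalar tanh cell stands in for the GRU (assumption, for brevity).
    h = math.tanh(params["w_h"] * h_prev
                  + params["w_z"] * z_prev
                  + params["w_a"] * a_prev)
    # Stochastic prior p(z_t | h_t): Gaussian whose parameters depend on h_t.
    mean = params["w_mu"] * h
    std = math.exp(params["w_logstd"] * h)  # exp keeps the std positive
    z = rng.gauss(mean, std)
    return h, z

def rollout(actions, params, seed=0):
    """Roll the prior forward over a sequence of actions, starting from zeros."""
    rng = random.Random(seed)
    h, z = 0.0, 0.0
    trajectory = []
    for a in actions:
        h, z = rssm_prior_step(h, z, a, params, rng)
        trajectory.append((h, z))
    return trajectory

if __name__ == "__main__":
    for h, z in rollout([0.5, -0.5, 1.0], PARAMS, seed=1):
        print(f"h={h:+.3f}  z={z:+.3f}")
```

Because the hidden state is a deterministic function of the past, only the latent `z` injects randomness into the rollout, which is what lets the recurrence carry memory while the latent carries uncertainty.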

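RSSMs are trained to maximize a variational lower bound (ELBO) on the marginal log-likelihood of the observations, yielding the objective:

$$\mathcal{L} = \sum_{t=1}^{T} \Big( \mathbb{E}_{q(z_t \mid h_t, o_t)}\big[\log p(o_t \mid h_t, z_t)\big] - \mathrm{KL}\big(q(z_t \mid h_t, o_t) \,\|\, p(z_t \mid h_t)\big) \Big)$$

which can be augmented with reconstruction, reward, or contrastive losses depending on application (Li et al., 2024, Srivastava et al., 2021).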
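For the Gaussian case, each per-step term of the bound has a simple closed form. The sketch below assumes diagonal Gaussian prior and posterior and a unit-variance Gaussian decoder. The function names are hypothetical, and the expectation is approximated with a single reconstruction rather than an average over samples.

```python
import math

def kl_diag_gaussians(mu_q, std_q, mu_p, std_p):
    """Closed-form KL(q || p) between diagonal Gaussians given as lists."""
    total = 0.0
    for mq, sq, mp, sp in zip(mu_q, std_q, mu_p, std_p):
        total += (math.log(sp / sq)
                  + (sq ** 2 + (mq - mp) ** 2) / (2.0 * sp ** 2)
                  - 0.5)
    return total

def gaussian_log_prob(x, mean, std=1.0):
    """Log-density of x under an isotropic Gaussian decoder (assumed unit variance)."""
    return sum(-0.5 * ((xi - mi) / std) ** 2
               - math.log(std)
               - 0.5 * math.log(2.0 * math.pi)
               for xi, mi in zip(x, mean))

def elbo_step(obs, recon_mean, mu_q, std_q, mu_p, std_p):
    """Single-sample estimate of one timestep's contribution to the ELBO."""
    reconstruction = gaussian_log_prob(obs, recon_mean)
    kl = kl_diag_gaussians(mu_q, std_q, mu_p, std_p)
    return reconstruction - kl

if __name__ == "__main__":
    # Unit-variance Gaussians whose means differ by 1 have KL = 0.5.
    print(kl_diag_gaussians([0.0], [1.0], [1.0], [1.0]))
```

Summing `elbo_step` over a trajectory and negating it gives a loss to minimize; the KL term is what pulls the posterior toward the learned prior.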

2. Inference, Objectives, and Regularization

RSSMs use amortized variational inference in which the approximate posterior $q(z_t \mid h_t, o_t)$ conditions only on present and past information ("filtering" inference). Because the posterior cannot draw on future observations, any one-step deviation between model-generated trajectories and actual observations must be absorbed by inflating the variance of the stochastic transition, which often leads to systematic overestimation of aleatoric uncertainty (Becker et al., 2022). This overestimation acts as an implicit regularizer that discourages overconfident, spurious rollouts during planning, functionally analogous to epistemic uncertainty in ensemble-based models.

To address posterior collapse and encourage informative latent variables, KL-balancing and auxiliary prediction terms (e.g., reward or inverse dynamics) are frequently introduced (Li et al., 2024, Wang et al., 11 Feb 2025). In contrastive RSSM variants, information-theoretic objectives (e.g., InfoNCE) are used to enforce discriminability between predicted and true observations in the latent space (Srivastava et al., 2021).
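The contrastive objective mentioned above can be sketched as a plain InfoNCE loss over a similarity matrix. This assumes the scores have already been computed, for example as dot products between predicted latents and observation embeddings, and that the matching pair for row `i` sits in column `i`. The function name `info_nce` and the score layout are illustrative assumptions, not the formulation of any cited paper.

```python
import math

def info_nce(scores):
    """Mean InfoNCE loss for a square score matrix with positives on the diagonal.

    scores[i][j] is the similarity between predicted latent i and
    observation embedding j (assumed precomputed).
    """
    n = len(scores)
    loss = 0.0
    for i, row in enumerate(scores):
        m = max(row)  # subtract the row max for numerical stability
        log_denominator = m + math.log(sum(math.exp(s - m) for s in row))
        # -log softmax probability assigned to the true (diagonal) pair
        loss += log_denominator - row[i]
    return loss / n

if __name__ == "__main__":
    uninformative = [[0.0, 0.0, 0.0] for _ in range(3)]
    print(info_nce(uninformative))  # equals log(3) when all scores tie
```

The loss falls toward zero as each predicted latent scores its own observation far above the others in the batch, which is the discriminability property the contrastive variants rely on.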

Some regimes, notably those involving occlusion, missing data, or multi-frequency sensor fusion, expose the limits of the standard, filtering-only RSSM; alternative models such as the Variational Recurrent Kalman Network (VRKN) employ smoothing inference and explicit epistemic uncertainty modeling to improve robustness (Becker et al., 2022).
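In symbols, the two inference schemes differ only in what the posterior over the latent is allowed to condition on. The notation below is a sketch using the standard definitions of filtering and smoothing, with $T$ the sequence length; it is not the exact parameterization of any cited model.

$$
\begin{aligned}
\text{filtering:} \quad & q(z_t \mid o_{\le t},\, a_{<t}) \\
\text{smoothing:} \quad & q(z_t \mid o_{1:T},\, a_{1:T})
\end{aligned}
$$

When observations at step $t$ are missing or occluded, a filtering posterior has nothing beyond the past to condition on, whereas a smoothing posterior can still use later observations to constrain $z_t$.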

3. Architectural Variants and Key Extensions

RSSM architectures display considerable diversity along several axes:

- Latent Variable Types: Early examples adopt continuous Gaussian latents; recent work employs high-dimensional discrete latents (e.g., a group of categorical variables, each with a fixed number of classes, which together form the discrete state, as in DeformNet (Li et al., 2024)).

- Recurrent Core: GRUs with hidden sizes in the 200–300 range are typical, with deterministic recurrence supporting both long-term memory and efficient rollout (Li et al., 2024, Kadi et al., 23 Aug 2025).

- Latent Conditioning: Goal-conditioned RSSMs (GC-RSSMs) inject encoded goal states into both prior and posterior networks, allowing for direct multi-step planning toward target configurations (Kadi et al., 23 Aug 2025).

- Reward Modeling: Explicit reward heads trained alongside the main RSSM permit planning directly in latent space via model-predictive control or cross-entropy optimization (Li et al., 2024, Kadi et al., 23 Aug 2025).

- Contrastive Regularization: In high-dimensional observation settings, contrastive prediction replaces pixel-space reconstruction to encourage the latent to capture task-relevant variations under observational distractors (Srivastava et al., 2021).

- Logic-Augmented Dynamics: The DMWM framework combines RSSM-S1 (standard RSSM) and a logic-integrated System 2 (LINN-S2), incorporating logical constraints into latent rollouts and planning, demonstrably improving trial efficiency and long-term consistency (Wang et al., 11 Feb 2025).

- Hidden Parameter Models: HiP-RSSM augments RSSM with global episode/task parameters, enabling adaptation in settings with changing or locally stationary dynamics (Shaj et al., 2022).
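Two of the variants above, discrete latents and goal conditioning, can be sketched in a few lines. The example assumes the discrete state is a concatenation of one-hot vectors, one per categorical variable, and that goal conditioning amounts to concatenating an encoded goal onto the features fed to the prior and posterior networks. Both are illustrative simplifications, and the function names are hypothetical.

```python
import random

def sample_categorical_latent(probs, rng):
    """Sample one class per categorical variable; return concatenated one-hots.

    probs holds one probability vector per categorical variable.
    """
    z = []
    for p in probs:
        k = rng.choices(range(len(p)), weights=p, k=1)[0]
        z.extend(1.0 if j == k else 0.0 for j in range(len(p)))
    return z

def condition_on_goal(h, z, goal):
    """Build a network input by concatenating state features with an encoded goal."""
    return list(h) + list(z) + list(goal)

if __name__ == "__main__":
    rng = random.Random(0)
    probs = [[0.7, 0.2, 0.1], [0.1, 0.1, 0.8]]  # 2 variables, 3 classes each
    z = sample_categorical_latent(probs, rng)
    print(len(z), sum(z))  # 6 entries, exactly one active class per variable
    print(len(condition_on_goal([0.1, -0.2], z, [1.0, 0.0])))
```

With this layout the size of the discrete state is the number of variables times the number of classes, and exactly one entry per variable is active.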

A representative architectural comparison is shown below:

| Model | Latent type | Recurrence | Conditioning / objective | Reference |
|---|---|---|---|---|
| DeformNet | Discrete | GRU | Goal and reward | (Li et al., 2024) |
| LaGarNet | Gaussian | GRU | Goal at each step | (Kadi et al., 23 Aug 2025) |
| CoRe | Gaussian | GRU | Contrastive, SAC | (Srivastava et al., 2021) |
| DMWM | Gaussian, flexible | GRU/MLP | Logic feedback (S2) | (Wang et al., 11 Feb 2025) |
| HiP-RSSM | Gaussian, global & per-step | Linear-Gaussian | Task context | (Shaj et al., 2022) |

4. Training Regimens and Practical Considerations

RSSMs are optimized either via full-sequence backpropagation-through-time or minibatch subtrajectory training. Standard configurations use the Adam optimizer with gradient-norm clipping, with the learning rate, clipping threshold, and stochastic latent dimension chosen per application (Li et al., 2024, Kadi et al., 23 Aug 2025). Representation learning may be bootstrapped by first training an autoencoder (e.g., PointNet + NeRF for geometric vision) and then freezing it during RSSM training (Li et al., 2024).
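Two of the training ingredients named above, subtrajectory minibatches and gradient-norm clipping, can be sketched without any learning framework. The helper names, the window length, and the flat list of gradients are assumptions made for illustration; a real pipeline would operate on tensors and use its framework's own clipping utility.

```python
import math
import random

def sample_subtrajectories(episodes, length, batch_size, rng):
    """Draw fixed-length windows from episodes that are long enough."""
    eligible = [ep for ep in episodes if len(ep) >= length]
    batch = []
    for _ in range(batch_size):
        ep = rng.choice(eligible)
        start = rng.randrange(len(ep) - length + 1)
        batch.append(ep[start:start + length])
    return batch

def clip_by_global_norm(grads, max_norm):
    """Rescale gradients so their joint L2 norm does not exceed max_norm."""
    norm = math.sqrt(sum(g * g for g in grads))
    scale = min(1.0, max_norm / norm) if norm > 0.0 else 1.0
    return [g * scale for g in grads]

if __name__ == "__main__":
    rng = random.Random(0)
    episodes = [list(range(10)), list(range(100, 130)), list(range(3))]
    for window in sample_subtrajectories(episodes, length=5, batch_size=3, rng=rng):
        print(window)
    print(clip_by_global_norm([3.0, 4.0], max_norm=1.0))
```

Windows are contiguous, so the recurrence can be unrolled over each one; episodes shorter than the window are skipped rather than padded in this sketch.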

Data augmentation, including random rotations, flips, and goal perturbations, is frequently employed to promote robustness, especially for vision-based inputs. In tasks with rapidly changing or task-dependent dynamics, HiP-RSSM uses a context update stage to infer hidden parameters from a subset of observations, enabling fast adaptation without variational inference (Shaj et al., 2022).

RSSMs can be used for online planning by rolling out imagined latent trajectories for candidate action sequences, using costs (e.g., Chamfer distance, SIoU, or reward model predictions) to select optimal controls via sample-efficient methods such as iterative Cross-Entropy Method (iCEM) or actor-critic RL (Li et al., 2024, Wang et al., 11 Feb 2025, Srivastava et al., 2021).
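The planning loop described above can be sketched with the plain Cross-Entropy Method. This is not iCEM, which adds refinements not shown here. The sketch assumes a toy scalar latent whose transition simply adds the action and a quadratic reward around a goal, both placeholders for a learned RSSM transition and reward head. All names and default values are hypothetical.

```python
import random
import statistics

def imagined_return(s0, actions, goal):
    """Score an action sequence by rolling out a toy latent model."""
    s, total = s0, 0.0
    for a in actions:
        s = s + a                   # placeholder for the learned latent transition
        total += -(s - goal) ** 2   # placeholder for the learned reward model
    return total

def cem_plan(s0, goal, horizon=5, pop=64, elites=8, iters=5, seed=0):
    """Plain CEM: sample sequences, keep the best, refit the sampling Gaussian."""
    rng = random.Random(seed)
    mean = [0.0] * horizon
    std = [1.0] * horizon
    for _ in range(iters):
        candidates = [[rng.gauss(m, s) for m, s in zip(mean, std)]
                      for _ in range(pop)]
        candidates.sort(key=lambda acts: imagined_return(s0, acts, goal),
                        reverse=True)
        top = candidates[:elites]
        # Refit per-timestep mean and std to the elite action sequences.
        mean = [statistics.fmean(col) for col in zip(*top)]
        std = [statistics.pstdev(col) + 1e-3 for col in zip(*top)]
    return mean

if __name__ == "__main__":
    plan = cem_plan(s0=0.0, goal=2.0)
    print("first action:", round(plan[0], 3))
    print("planned return:", round(imagined_return(0.0, plan, 2.0), 3))
```

In a model-predictive control loop only the first action of the returned plan would be executed before replanning from the next inferred latent state.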

5. Applications in Sequential Prediction, Control, and Manipulation

RSSMs have enabled significant advances in both simulated and real-world tasks:

- Deformable object manipulation: RSSMs paired with PointNet + NeRF latent encodings allow accurate modeling of complex deformable dynamics in high-DOF robotic systems, supporting goal-conditioned planning and multi-step MPC (Li et al., 2024).

- Garment flattening: Goal-conditioned RSSMs, with per-step goal and reward conditioning, generalize across object categories without mesh supervision, yielding state-of-the-art performance with reduced inductive bias (Kadi et al., 23 Aug 2025).

- Robotic control from pixels: RSSMs with contrastive objectives yield robust control in the presence of significant visual distractions, outperforming bisimulation and other RL baselines (Srivastava et al., 2021).

- Imagination and long-horizon reasoning: Logic-augmented RSSMs (DMWM) reduce error accumulation in multi-step predictions, improving long-horizon planning, trial and data efficiency, and logical consistency in both simulated and physical domains (Wang et al., 11 Feb 2025).

- Adaptive dynamics: HiP-RSSMs enable adaptation to shifting physical parameters or task variations, outperforming standard RSSMs, recurrent networks, and meta-learned architectures in system identification tasks (Shaj et al., 2022).

6. Limitations, Robustness, and Future Directions

Standard RSSMs exhibit suboptimal inference, overestimating aleatoric uncertainty when faced with modeling mismatch or missing data; while this acts as a heuristic regularizer in RL, it distorts true uncertainty profiles (Becker et al., 2022). Kalman-based or smoothing inference variants better handle partial observability and sensor fusion, and further work aims to unify these properties with the flexibility of RSSMs.

Recent developments focus on:

- Extending RSSMs with richer latent spaces, explicit epistemic uncertainty modeling, and logical constraints (Wang et al., 11 Feb 2025).

- Integrating task and goal conditioning at each recurrence step for enhanced generalization (Kadi et al., 23 Aug 2025).

- Using exact inference in the presence of known graphical structure or global context (e.g., HiP-RSSM) (Shaj et al., 2022).

- Improving sample-efficiency and robustness to distributional shift via contrastive or logic-regularized objectives (Srivastava et al., 2021, Wang et al., 11 Feb 2025).

A plausible implication is that future research will converge on hybrid designs incorporating both classical smoothing inference and domain-knowledge constraints, enabling RSSMs to robustly support increasingly complex, real-world sequential decision-making tasks.