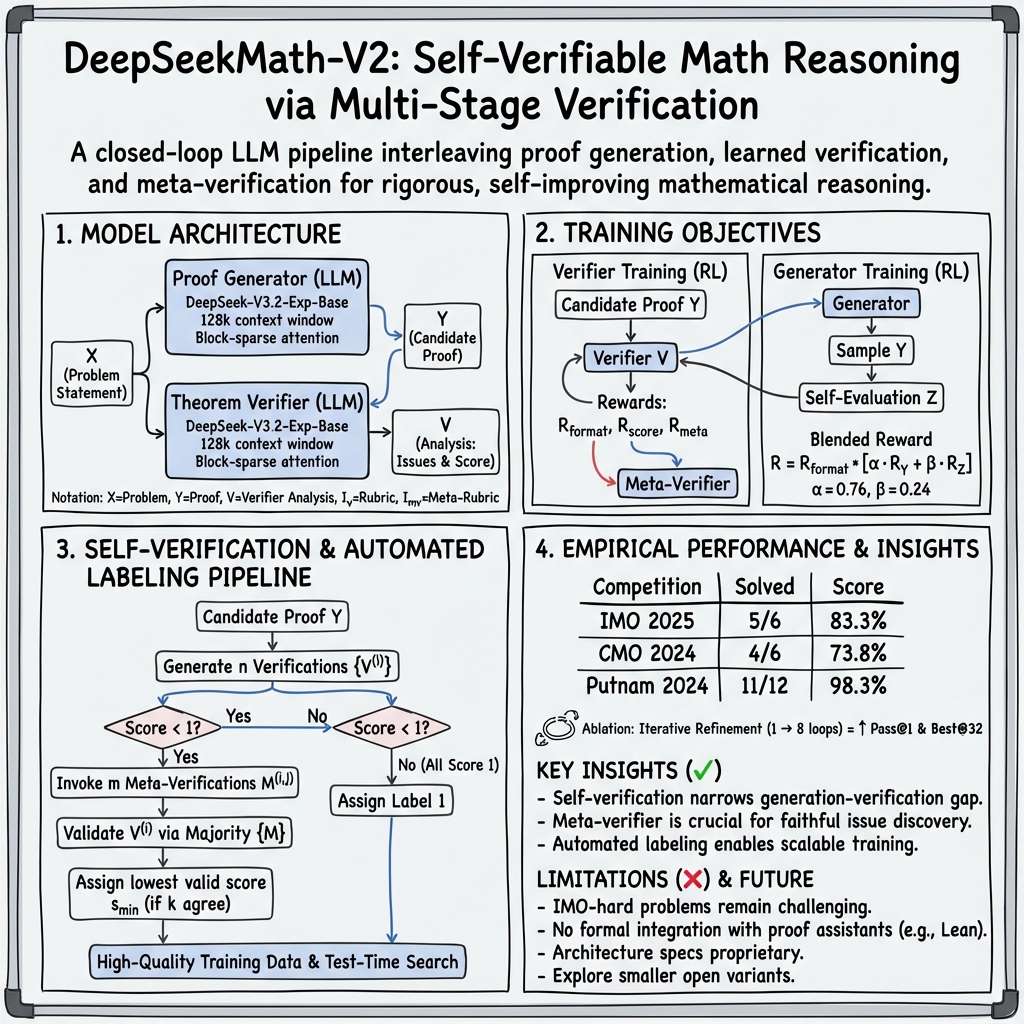

DeepSeekMath-V2: Self-Verified Math Proofs

- DeepSeekMath-V2 is a large language model that achieves self-verifiable mathematical reasoning by interleaving proof generation with learned verification.

- It leverages a deep transformer with a 128,000-token context and block-sparse attention to effectively process long, multi-stage proofs.

- The system sets a new standard on advanced benchmarks, demonstrating exceptional performance in open-ended mathematical problem solving.

DeepSeekMath-V2 is an LLM system targeting self-verifiable mathematical reasoning, with a focus on rigorous step-by-step theorem proving and proof verification. Motivated by the limitations of final-answer-centric reward mechanisms, DeepSeekMath-V2 interleaves the training and deployment of a proof generator and a learned verifier, yielding a self-improving closed-loop pipeline. The system reports strong results on advanced mathematical benchmarks, including IMO 2025, CMO 2024, and Putnam 2024, in the setting of open-ended mathematical problem solving (Shao et al., 27 Nov 2025).

1. Model Architecture

Both the proof generator and theorem verifier in DeepSeekMath-V2 share an identical backbone architecture based on the “DeepSeek-V3.2-Exp-Base” deep transformer model. Key features include a 128,000-token context window employing block-sparse attention, enabling the processing of long mathematical proofs and multi-stage reasoning.

Essential architectural details such as the number of layers, model width, and attention head count remain proprietary, but both generator and verifier operate at a scale consistent with current large autoregressive LLMs, each fine-tuned for its specialized task. The system introduces the following notation:

- $X$: problem statement

- $Y$: candidate proof

- $V$: verifier's analysis (identification of issues and a summary score)

- $I_v$: high-level rubric specifying verification requirements

- $I_{mv}$: meta-verification rubric for verifying the verifier's analysis

The generator and verifier are thus tightly coupled: the generator's proofs are the verifier's inputs, and the verifier's analyses are themselves checked against the meta-verification rubric, during both training and evaluation.

2. Training Objectives and Loss Formulations

2.1 Theorem Verifier

Proof verification is formulated as a reinforcement learning (RL) task with a composite reward function:

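- Format Reward: $R_{\text{format}}(V') \in \{0, 1\}$, an indicator that the verifier's response $V'$ contains both a summary of identified issues and a final proof score.

- Score Reward: $R_{\text{score}}(s', s) = 1 - |s' - s|$, which measures how close the predicted score $s'$ is to the annotated score $s$.

The verifier's RL objective is:

$$\max_{\pi_\varphi} \; \mathbb{E}_{(X, Y, s) \sim \mathcal{D}_v,\; (V', s') \sim \pi_\varphi(\cdot \mid X, Y)} \left[ R_{\text{format}}(V') \cdot R_{\text{score}}(s', s) \right]$$

where $X$ is the problem, $Y$ the candidate proof, and $\mathcal{D}_v$ the annotated verification dataset.

Meta-verification introduces an additional reward $R_{\text{meta}}$ evaluating the faithfulness of the listed issues. The total reward becomes:

$$R_V = R_{\text{format}} \cdot R_{\text{score}} \cdot R_{\text{meta}}$$

A separate meta-verifier is trained with the same structure on meta-verification tasks.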
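The composite verifier reward can be sketched in a few lines of Python. This is a minimal illustration, not the authors' implementation: it assumes the score reward is the proximity $1 - |s' - s|$, that scores take values in {0, 0.5, 1}, and that the format, score, and meta terms combine multiplicatively. The marker strings and function names are hypothetical.

```python
import re

# Hypothetical markers: the text only says the format reward checks for an
# issue summary and a final score; these exact strings are assumptions.
SUMMARY_MARKER = "Issues:"
SCORE_PATTERN = re.compile(r"Final score:\s*(0\.5|0|1)\s*$")


def format_reward(response: str) -> float:
    """Return 1.0 if the response has an issue summary and ends with a parsable score."""
    has_summary = SUMMARY_MARKER in response
    has_score = SCORE_PATTERN.search(response.strip()) is not None
    return 1.0 if (has_summary and has_score) else 0.0


def score_reward(predicted: float, annotated: float) -> float:
    """Proximity reward 1 - |s' - s| (assumed form)."""
    return 1.0 - abs(predicted - annotated)


def verifier_reward(response: str, predicted: float, annotated: float,
                    meta_quality: float = 1.0) -> float:
    """Product of format, score, and meta-verification terms (assumed combination)."""
    return format_reward(response) * score_reward(predicted, annotated) * meta_quality
```

Because the terms are multiplied, a malformed response earns nothing regardless of how accurate its score is, and a correct score backed by unfaithful issues is discounted by the meta term.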

2.2 Proof Generator

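The proof generator is optimized by RL, directly using the learned verifier as its reward model. The generator samples a proof $Y$ and is additionally incentivized to conduct self-evaluation by producing its own analysis $Z$ with self-assigned score $s'$:

$$R = R_{\text{format}}(Y, Z) \cdot \left( \alpha \cdot R_Y + \beta \cdot R_Z \right)$$

where

$$R_Y = s, \qquad R_Z = R_{\text{score}}(s', s) \cdot R_{\text{meta}}(Z)$$

Here $s$ is the score the verifier assigns to $Y$, and $\alpha$ and $\beta$ weight proof quality against self-evaluation quality.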

This blended reward explicitly balances the generator's solution quality and its self-verification fidelity as judged by the verifier and meta-verifier.
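To make the trade-off concrete, the sketch below computes a blended generator reward under stated assumptions: a format gate multiplies a weighted sum of the verifier's proof score and a self-evaluation term, and the self-evaluation term is the closeness of the self-assigned score to the verifier's score, scaled by a meta-verifier rating. The weights `alpha=0.75` and `beta=0.25` are placeholder values chosen for illustration, not values taken from the source.

```python
def generator_reward(
    format_ok: bool,
    verifier_score: float,   # score the verifier assigns to the proof
    self_score: float,       # score the generator assigns to its own proof
    meta_quality: float,     # meta-verifier rating of the self-analysis, in [0, 1]
    alpha: float = 0.75,     # placeholder weight on proof quality (assumption)
    beta: float = 0.25,      # placeholder weight on self-evaluation (assumption)
) -> float:
    """Blend proof quality with self-verification fidelity."""
    if not format_ok:
        return 0.0  # malformed output earns no reward
    proof_term = verifier_score
    # Self-evaluation is rewarded for agreeing with the verifier and for
    # being judged faithful by the meta-verifier.
    self_eval_term = (1.0 - abs(self_score - verifier_score)) * meta_quality
    return alpha * proof_term + beta * self_eval_term
```

Under these placeholder weights, a flawed proof that the generator honestly scores 0 earns 0.25, while the same proof with an overconfident self-score of 1 earns 0, so admitting an error is strictly better than hiding it.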

3. Self-Verification and Automated Labeling Pipeline

A closed-loop self-verification protocol drives both inference and ongoing improvement. To label a candidate proof, the pipeline runs the following steps:

- Generate $n$ independent verifications $V_1, \dots, V_n$ for candidate proof $Y$.

- For each verification $V_i$ with score $< 1$, invoke $m$ meta-verifications $M_{i,1}, \dots, M_{i,m}$.

- Validate $V_i$ if the majority of $M_{i,1}, \dots, M_{i,m}$ confirm its conclusions.

- Assign the lowest valid score $s_{\min}$ across all valid $V_i$ as the label for $Y$, if at least $k$ such verifications agree.

- If no issues are found in any verification, assign label 1; otherwise, defer labeling to human annotators.
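The labeling steps above can be sketched as a single function. This is a simplified reading of the list, not the authors' code: verifier and meta-verifier outputs are passed in as plain data, "majority" is taken to mean a strict majority, and the names `label_proof`, `scores`, `meta_votes`, and `k` are hypothetical.

```python
from typing import Optional, Sequence


def label_proof(
    scores: Sequence[float],
    meta_votes: Sequence[Sequence[bool]],
    k: int,
) -> Optional[float]:
    """Return an automatic label, or None to defer to human annotators.

    scores[i]:     score given by the i-th verification (0, 0.5, or 1)
    meta_votes[i]: meta-verifier votes on verification i (True = confirmed);
                   ignored when scores[i] == 1
    k:             minimum number of valid verifications that must agree
    """
    # A verification that reports issues is valid only if a strict majority
    # of its meta-verifications confirm it.
    valid_scores = [
        s for s, votes in zip(scores, meta_votes)
        if s < 1 and sum(votes) * 2 > len(votes)
    ]
    if valid_scores:
        lowest = min(valid_scores)
        # Accept the lowest valid score only if at least k valid analyses share it.
        return lowest if valid_scores.count(lowest) >= k else None
    # No validated issues: label 1 only if no verification reported any issue.
    if all(s == 1 for s in scores):
        return 1.0
    return None
```

For example, `label_proof([0.5, 0.5, 1], [[True, True, False], [True, True, True], []], k=2)` returns `0.5`, while a proof whose only reported issue fails meta-verification returns `None` and goes to a human.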

This pipeline supports two critical functions:

- Autonomous generation of high-quality training data for the verifier, especially on newly encountered, challenging proofs.

- High-compute search at test time, incorporating repeated candidate generation, multi-threaded verification, and iterative refinement of proofs until consensus is achieved.
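The test-time use of the pipeline can be illustrated with a small refinement loop. This is a sketch under assumptions: `generate`, `verify`, and `refine` are hypothetical callables standing in for model calls, "consensus" is taken to mean that every verification returns the full score of 1, and verifications run sequentially here rather than in parallel.

```python
from typing import Callable, Tuple


def refine_until_consensus(
    problem: str,
    generate: Callable[[str], str],        # hypothetical: problem -> proof
    verify: Callable[[str, str], float],   # hypothetical: (problem, proof) -> score
    refine: Callable[[str, str], str],     # hypothetical: (problem, proof) -> revised proof
    n_verifications: int = 4,
    max_rounds: int = 8,
) -> Tuple[str, bool]:
    """Return (proof, accepted); accepted is True if all verifications scored 1."""
    proof = generate(problem)
    for round_idx in range(max_rounds):
        scores = [verify(problem, proof) for _ in range(n_verifications)]
        if all(s == 1 for s in scores):
            return proof, True
        # Revise only if another verification round remains, so the returned
        # proof is always one that has actually been checked.
        if round_idx + 1 < max_rounds:
            proof = refine(problem, proof)
    return proof, False
```

The compute budget is bounded by `max_rounds * n_verifications` verifier calls per candidate, which makes the cost of the search explicit.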

4. Empirical Performance and Benchmark Results

DeepSeekMath-V2 has been evaluated on both in-house and public competition datasets. The salient results are:

| Competition | Problems | Solved | Score |
|---|---|---|---|
| IMO 2025 | 6 | 5 fully, 0 partial | 83.3% |
| CMO 2024 | 6 | 4 fully, 1 partial | 73.8% |
| Putnam 2024 | 12 | 11 fully, 1 partial | 98.3% |

On a 91-problem CNML-level internal benchmark, DeepSeekMath-V2 outperforms other leading LLMs (GPT-5-Thinking-High, Gemini 2.5-Pro) in algebra, geometry, number theory, combinatorics, and inequalities, with correctness adjudicated by majority over 8 verifier analyses.
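Majority adjudication over a fixed number of verifier analyses can be sketched as follows. The rule shown is an assumption: a proof counts as correct only when a strict majority of analyses assign the full score, so a 4-4 tie among 8 analyses counts as incorrect. The function name `adjudicate` is hypothetical.

```python
from typing import Sequence


def adjudicate(scores: Sequence[float], full_score: float = 1.0) -> bool:
    """Return True if a strict majority of analyses assign the full score."""
    n_full = sum(1 for s in scores if s >= full_score)
    return n_full * 2 > len(scores)
```

With 8 analyses, `adjudicate([1, 1, 1, 1, 1, 0.5, 0.5, 0])` is `True` (5 of 8), while an even split is rejected.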

Ablation studies varying the number of self-verification loops (from 1 to 8) reveal monotonic improvements in Pass@1 and Best@32, indicating the tangible benefit of iterative refinement for proof quality.

Expert grading confirms model performance exceeds gold-medal thresholds and that the Putnam 2024 score (98.3%, 118/120) surpasses all known human efforts for that exam. Formal significance testing is not reported.

5. Key Insights and Theoretical Implications

Empirical findings indicate that self-verification substantially narrows the gap between generation and verification: requiring the generator to audit and repair its own proofs before external evaluation yields stronger final solutions than optimizing for final answers alone. The meta-verifier, tasked with verifying the verifier, proves crucial for keeping issue discovery faithful and free of hallucinated flaws. Automated labeling via scaled multi-stage verification drastically reduces reliance on manual annotation for challenging tasks.

6. Limitations and Open Challenges

Despite these advances, DeepSeekMath-V2 exhibits limitations:

- Top-level IMO-hard and advanced-ProofBench problems remain challenging, suggesting the verifier and generator insufficiently capture intricate logical nuances.

- No formal integration with automated proof assistants such as Lean or Isabelle is provided, meaning the pipeline remains semi-informal; bridging this with formal proof systems is recognized as an important next direction.

- Model architecture specifications (size, depth, width) are not publicly disclosed, limiting reproducibility and systemic analysis.

- Exploration of smaller, open variants is highlighted as a means to democratize access to self-verifiable mathematical reasoning.

7. Broader Significance and Future Directions

DeepSeekMath-V2 demonstrates that LLMs trained within a multi-level verification framework can not only generate plausible mathematical proofs but also learn to self-audit, resolve issues, and deliver solutions with verifiable rigor. This design offers a blueprint for future AI systems aimed at trustworthy, self-checked derivations, and provides an empirical foundation for advances in AI-driven scientific discovery and mathematical research (Shao et al., 27 Nov 2025).