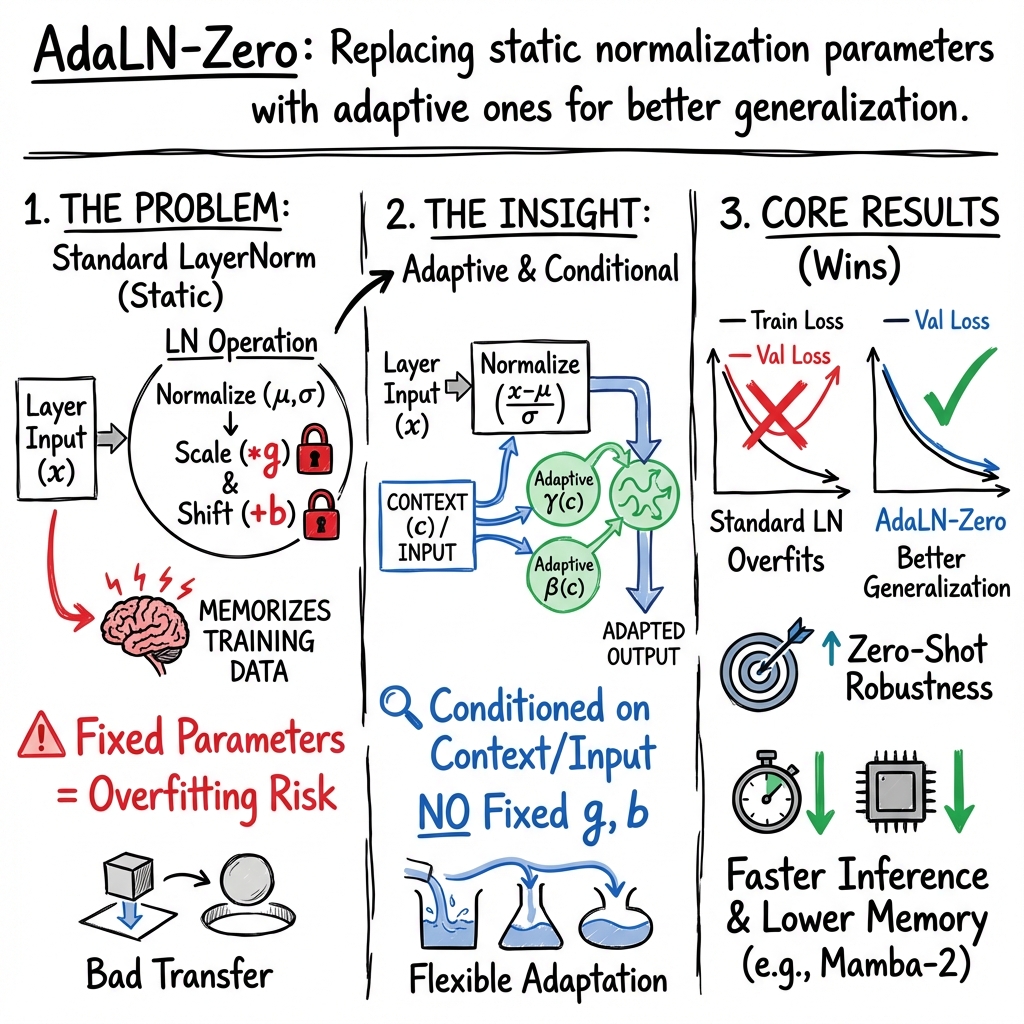

Adaptive LayerNorm Zero Overview

- Adaptive Layer Normalization Zero is a method that eliminates fixed per-neuron affine parameters by using context-conditioned transformations to reduce overfitting.

- It integrates input-conditioned normalization and gradient re-scaling to enhance robustness in zero-shot and few-shot learning scenarios.

- Its architectural deployment in sequence and multimodal models yields superior efficiency with reduced memory footprint and faster inference.

Adaptive Layer Normalization Zero (adaLN-Zero) refers to a family of normalization methods in deep neural networks that eliminate fixed, per-neuron affine transformations (i.e., gain and bias) and instead employ adaptive, input- or context-conditioned transformations during training and inference. This approach has emerged from theoretical and empirical analyses demonstrating the risks of overfitting associated with static affine parameters in standard Layer Normalization, and synthesizes advances in input-conditioned normalization, re-centering and re-scaling of gradients, and efficient architecture integration in modern sequence models.

1. Fundamentals of Layer Normalization and Its Evolution

Layer Normalization (LN), originally proposed to address the limitations of Batch Normalization (BN) for recurrent and sequential architectures, operates by normalizing neuron activations within a layer over a single training instance, rather than across a mini-batch. For a layer with activations $a \in \mathbb{R}^{H}$, LN computes per-instance statistics:

$$\mu = \frac{1}{H}\sum_{i=1}^{H} a_i, \qquad \sigma = \sqrt{\frac{1}{H}\sum_{i=1}^{H} (a_i - \mu)^2}$$

and produces normalized activations using learnable gain $g$ and bias $b$:

$$h = g \odot \frac{a - \mu}{\sigma} + b$$

This formulation, unlike BN, is stable across varying batch sizes and time steps and is especially well-suited for RNNs (Ba et al., 2016). However, the per-neuron fixed affine parameters have been identified as a source of overfitting, particularly in non-i.i.d., transfer, or zero-shot settings (Xu et al., 2019, Mao et al., 2023).
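As a rough illustration of the per-instance computation described above, the following sketch normalizes a single activation vector over its features and then applies a gain and bias. The function name `layer_norm`, the small stabilizer `eps`, and the toy input are assumptions made for this example and do not come from the cited papers.

```python
import math

def layer_norm(a, gain, bias, eps=1e-5):
    """Normalize one activation vector over its features, then apply gain and bias.

    `eps` is a small stabilizer added to the variance (an assumption of this sketch).
    """
    H = len(a)
    mu = sum(a) / H                              # per-instance mean
    var = sum((x - mu) ** 2 for x in a) / H      # per-instance (population) variance
    sigma = math.sqrt(var + eps)
    return [g * (x - mu) / sigma + b for x, g, b in zip(a, gain, bias)]

# Toy input: identity gain and zero bias leave only the normalization step.
a = [1.0, 2.0, 3.0, 4.0]
h = layer_norm(a, gain=[1.0] * 4, bias=[0.0] * 4)
```

Because the statistics come from a single instance, the result does not depend on batch size, which is the property the paragraph above attributes to LN.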

The motivation for adaptive variants, specifically adaLN-Zero, arises from analyses showing that LN's principal benefit comes not merely from the forward normalization but from its effect on the backward gradients: the derivatives of the mean and variance re-center and re-scale the gradients, which regularizes learning dynamics (Xu et al., 2019).
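One way to see the re-centering effect numerically is to push an arbitrary upstream gradient back through the normalization step. The sketch below assumes the standard Jacobian of $y = (x - \mu)/\sigma$, with $\sigma$ the population standard deviation, and checks that the resulting input gradient has zero sum and is orthogonal to $y$. The helper names and toy values are hypothetical.

```python
import math

def normalize(x):
    """Return y = (x - mu) / sigma and sigma, using the population standard deviation."""
    H = len(x)
    mu = sum(x) / H
    sigma = math.sqrt(sum((v - mu) ** 2 for v in x) / H)
    return [(v - mu) / sigma for v in x], sigma

def backward_through_norm(x, grad_y):
    """Gradient w.r.t. x of y = (x - mu) / sigma, given the upstream gradient grad_y."""
    y, sigma = normalize(x)
    H = len(x)
    mean_g = sum(grad_y) / H                               # term from d(mu)/dx
    mean_gy = sum(g * v for g, v in zip(grad_y, y)) / H    # term from d(sigma)/dx
    return [(g - mean_g - v * mean_gy) / sigma for g, v in zip(grad_y, y)]

x = [0.5, -1.0, 2.0, 3.5]
grad_y = [1.0, 2.0, -0.5, 4.0]          # arbitrary upstream gradient
grad_x = backward_through_norm(x, grad_y)
y, _ = normalize(x)

grad_sum = sum(grad_x)                               # ~0: gradient is re-centered
grad_dot_y = sum(g * v for g, v in zip(grad_x, y))   # ~0: component along y removed
```

Both quantities should vanish up to floating-point error regardless of the upstream gradient. The division by $\sigma$ and the removal of the component along $y$ are what this sketch takes "re-scaling" to mean.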

2. Theoretical Foundations and Adaptive Formulations

Empirical and theoretical work has demonstrated that the static bias and gain in classic LN can lead to memorization of training-specific patterns, resulting in diminished generalization. Adaptive Layer Normalization Zero addresses this by eliminating the per-neuron fixed affine parameters (gain $g$ and bias $b$) and instead using transformation functions conditioned on the normalized input itself or on contextual information.

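AdaNorm provides a formalized instantiation: after normalizing the input $x$ with its per-instance mean $\mu$ and standard deviation $\sigma$,

$$y = \frac{x - \mu}{\sigma},$$

an adaptive, element-wise scaling is performed:

$$z = \phi(y) \odot y, \qquad \phi(y) = C(1 - k y),$$

with $C$ a scalar hyperparameter and $k$ a small constant (e.g., $k = 1/10$) chosen such that $|y_i| < 1/k$ with high probability (Xu et al., 2019). Derivatives through $\phi(y)$ are detached, ensuring that forward normalization properties are preserved but the learned affine parameters are replaced with an adaptive, non-fixed transformation.

Such transformations satisfy:

- Differentiability of $\phi$,

- Constraint that the average scaling weight is fixed, i.e., the mean of $\phi(y)$ over the layer equals the constant $C$,

- Boundedness of the average of the output $z$.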
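The forward pass of AdaNorm can be sketched as follows, assuming the scaling function reported by Xu et al. (2019) has the form $\phi(y) = C(1 - ky)$ applied element-wise to the normalized input $y$. The function name `ada_norm`, the defaults `C=1.0` and `k=0.1`, and the stabilizer `eps` are choices made for this illustration. Plain Python has no automatic differentiation, so the detachment of $\phi$ is only noted in a comment.

```python
import math

def ada_norm(x, C=1.0, k=0.1, eps=1e-5):
    """AdaNorm-style forward pass: z = phi(y) * y with phi(y) = C * (1 - k * y).

    C, k and eps are illustrative defaults (assumptions of this sketch).
    """
    H = len(x)
    mu = sum(x) / H
    sigma = math.sqrt(sum((v - mu) ** 2 for v in x) / H + eps)
    y = [(v - mu) / sigma for v in x]
    # In an autodiff framework, phi would be excluded from the gradient
    # computation (a stop-gradient / detach) and treated as a constant.
    phi = [C * (1.0 - k * v) for v in y]
    z = [p * v for p, v in zip(phi, y)]
    return z, phi

x = [1.0, 2.0, 3.0, 4.0]
z, phi = ada_norm(x)
mean_phi = sum(phi) / len(phi)   # equals C because y has zero mean
```

Since $y$ has zero mean, the average of $\phi(y)$ equals $C$, and for $|y_i| < 1/k$ every scaling weight stays positive.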

This mechanism generalizes beyond AdaNorm, allowing for architecture-specific adaptations, such as regression of normalization parameters from auxiliary networks or context encodings.

3. Architectural Integration and Conditional Adaptation

Recent architectures extend the adaLN-Zero paradigm by conditioning normalization parameters on richer, often multimodal, contextual information, as exemplified in models for speech-driven gesture generation (Zhang et al., 2024, Zhang et al., 2024). In these models, Adaptive Layer Normalization modules are incorporated within state-space or diffusion-based architectures, such as Mamba-2, to achieve high expressiveness with improved efficiency.

Given an intermediate representation $h$ and context features $c$ (e.g., derived from audio or fuzzy latent variables), the adaptive normalization is formulated as:

$$\mathrm{AdaLN}(h, c) = \gamma(c) \odot \mathrm{LN}(h) + \beta(c)$$

where $\gamma(c)$ and $\beta(c)$ are per-dimension scaling and shifting parameters produced by regression networks (e.g., MLPs) acting on the context $c$, and $\mathrm{LN}(h)$ is layer normalization without fixed affine parameters. The adaptation can be performed per-token, uniformly across sequence tokens, or in a time-varying manner suitable for sequential tasks.
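A minimal sketch of this context-conditioned normalization is shown below, assuming the scale and shift are each regressed from the context by a single linear layer (a stand-in for the MLPs mentioned above). All names (`adaptive_layer_norm`, `W_gamma`, `b_gamma`, `W_beta`, `b_beta`) and the toy weights are hypothetical and are not taken from the cited models.

```python
import math

def layer_norm_no_affine(h, eps=1e-5):
    """Layer normalization without gain or bias."""
    H = len(h)
    mu = sum(h) / H
    sigma = math.sqrt(sum((v - mu) ** 2 for v in h) / H + eps)
    return [(v - mu) / sigma for v in h]

def linear(W, b, c):
    """Affine map W @ c + b, with W given as a list of rows."""
    return [sum(w * v for w, v in zip(row, c)) + bi for row, bi in zip(W, b)]

def adaptive_layer_norm(h, c, W_gamma, b_gamma, W_beta, b_beta):
    """Scale and shift the normalized h with parameters regressed from context c."""
    gamma = linear(W_gamma, b_gamma, c)   # per-dimension scale
    beta = linear(W_beta, b_beta, c)      # per-dimension shift
    h_hat = layer_norm_no_affine(h)
    return [g * v + s for g, v, s in zip(gamma, h_hat, beta)]

h = [1.0, 2.0, 4.0]                       # one token's features
c = [0.5, -1.0]                           # toy context vector
W_gamma = [[0.2, 0.0], [0.0, 0.1], [0.1, 0.1]]
b_gamma = [1.0, 1.0, 1.0]
W_beta = [[0.0, 0.3], [0.1, 0.0], [0.0, 0.0]]
b_beta = [0.0, 0.0, 0.0]
out = adaptive_layer_norm(h, c, W_gamma, b_gamma, W_beta, b_beta)
```

Calling the function once per token with a shared `c` corresponds to uniform modulation across the sequence, while passing a different `c` per time step gives the time-varying variant.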

This design supports nuanced, context-dependent modulation, e.g., encoding speaker style or emotional tone for gesture generation, and adapts the normalization layer to non-i.i.d. data regimes.

4. Implications for Overfitting, Generalization, and Zero-Shot Learning

AdaLN-Zero mechanisms yield several empirical and theoretical improvements over standard LN:

- Reduction of overfitting: By eliminating static affine parameters, adaLN-Zero (and AdaNorm) avoids memorizing dataset-specific distributional offsets, as indicated by consistently lower validation losses and improved generalization across multiple datasets and modalities (Xu et al., 2019).

- Robustness in zero-/few-shot transfer: In multilingual and zero-shot translation settings, normalization order and adaptability are critical for generalization. Experiments show that adaptive or carefully placed normalization (PostNorm variants) outperforms fixed-parameter or PreNorm configurations by up to 12.3 BLEU in zero-shot directions, primarily by reducing off-target errors and language bias entanglement (Mao et al., 2023).

- Applicability to complex, conditional, or dynamic data: Adaptive normalization layers are especially well-suited for models requiring synchronized modulation across modalities, as in co-speech gesture generation, enabling the network to responsively adjust to input-driven nonstationarities.
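The PreNorm and PostNorm placements compared in the zero-shot translation results differ only in where normalization sits relative to the residual connection. The sketch below uses the usual definitions, $x + f(\mathrm{LN}(x))$ for PreNorm and $\mathrm{LN}(x + f(x))$ for PostNorm. The function `toy_sublayer` is a hypothetical stand-in for an attention or feed-forward sublayer.

```python
import math

def layer_norm(x, eps=1e-5):
    """Layer normalization without affine parameters."""
    H = len(x)
    mu = sum(x) / H
    sigma = math.sqrt(sum((v - mu) ** 2 for v in x) / H + eps)
    return [(v - mu) / sigma for v in x]

def pre_norm_block(x, sublayer):
    """PreNorm: normalize the sublayer input, keep the residual path unnormalized."""
    return [xi + fi for xi, fi in zip(x, sublayer(layer_norm(x)))]

def post_norm_block(x, sublayer):
    """PostNorm: normalize after adding the residual."""
    return layer_norm([xi + fi for xi, fi in zip(x, sublayer(x))])

def toy_sublayer(v):
    """Hypothetical stand-in for attention or a feed-forward network."""
    return [2.0 * t for t in v]

x = [1.0, 2.0, 3.0, 6.0]
pre = pre_norm_block(x, toy_sublayer)
post = post_norm_block(x, toy_sublayer)
```

In the PreNorm block the residual path carries the unnormalized input through unchanged, whereas the PostNorm output is always re-normalized.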

5. Efficiency and Scalability in Modern Architectures

Incorporation of adaLN-Zero mechanisms within efficient architectures such as Mamba-2 yields substantial improvements in computational efficiency:

- Memory footprint: By regressing adaptive parameters from compact context features and reducing over-parameterization, models employing adaLN-Zero can reduce memory usage (e.g., by a factor of 2.4 compared to transformer-based alternatives) (Zhang et al., 2024).

- Inference speed: Mamba-2 frameworks with adaLN-Zero can achieve 2–4× faster inference on sequence generation tasks, demonstrating improved scalability for low-latency and large-scale applications (Zhang et al., 2024, Zhang et al., 2024).

This efficiency is achieved without sacrificing model expressiveness or sample fidelity, as validated by both subjective (e.g., human-likeness and appropriateness) and objective (e.g., Fréchet Gesture Distance, BeatAlign) measures on standard benchmarks.

6. Prospects and Open Directions

The adaLN-Zero framework encompasses a spectrum of designs: from eliminating per-neuron affine parameters (as in LayerNorm-simple), to AdaNorm’s input-adaptive scaling, to context-conditioned normalization (as in multimodal generative models). Several promising directions may be inferred:

- Dynamic control of normalization placement (e.g., blending PreNorm and PostNorm) to mitigate overfitting on supervised directions while enhancing zero-shot transfer (Mao et al., 2023);

- Real-time modulation of normalization in response to distributional shifts, system state, or learned auxiliary metrics (such as off-target rates);

- Further integration of state-space models and diffusion mechanisms with adaptive normalization for both expressiveness and efficiency.

Consistent with its underlying theory, adaLN-Zero approaches can be viewed as part of a broader trend toward normalization layers that not only stabilize training but actively participate in the adaptation and generalization strategies of deep neural networks across diverse application domains.