- The paper demonstrates that once compound autonomy in feedback cycles surpasses a computable threshold, traditional accountability frameworks inherently fail.

- It employs rigorous structural causal models and computational experiments to validate a sharp phase transition in responsibility attribution.

- The study offers practical guidelines for system design by identifying limits where legal and epistemic accountability cannot be simultaneously maintained.

Introduction

This paper, "The Accountability Horizon: An Impossibility Theorem for Governing Human-Agent Collectives" (2604.07778), introduces a mathematical impossibility result for traditional accountability frameworks in distributed human-AI systems. Specifically, it proves that once the compound autonomy of artificial agents in a feedback cycle exceeds a critical threshold, termed the "Accountability Horizon", no framework can simultaneously satisfy four minimal, legally and philosophically grounded axioms of responsibility assignment. The paper supports this claim with formal definitions, structural causal models, and computational validation on randomly sampled configurations.

The authors define a Human-Agent Collective (HAC) as a set of human agents and artificial agents operating in a shared environment, interacting via a directed graph. Each agent is characterized as a state-policy tuple, embedded in a structural causal model (SCM). Central to the analysis are four orthogonal, information-theoretically grounded dimensions of autonomy for each agent:

- Epistemic Autonomy (αE): Independence of belief formation from human oversight.

- Executive Autonomy (αX): Degree of action selection outside direct human approval.

- Evaluative Autonomy (αD): Divergence of agent's objective from the human's.

- Social Autonomy (αS): Frequency of self-initiated agent-agent interaction.

For governance, the relevant measure is the product of executive and epistemic autonomy (compound autonomy). The graph topology—specifically, the presence and size of mixed human-AI feedback cycles—is equally crucial.
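As a minimal sketch of the definition above, the four dimensions can be stored per agent and compound autonomy computed as the product of the executive and epistemic components. The class name `AutonomyProfile`, the example agent, and the normalization of every dimension to [0, 1] are assumptions made for illustration; the summary does not state how the paper scales these quantities.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AutonomyProfile:
    """Four autonomy dimensions of one agent, each assumed to lie in [0, 1]."""
    epistemic: float   # alpha_E: belief formation independent of human oversight
    executive: float   # alpha_X: action selection without direct human approval
    evaluative: float  # alpha_D: divergence of the agent's objective from the human's
    social: float      # alpha_S: frequency of self-initiated agent-agent interaction

    def compound(self) -> float:
        """Compound autonomy: product of executive and epistemic autonomy."""
        return self.executive * self.epistemic


# Hypothetical agent with high epistemic and executive autonomy.
triage_bot = AutonomyProfile(epistemic=0.9, executive=0.8, evaluative=0.2, social=0.1)
print(round(triage_bot.compound(), 2))  # 0.72
```

Because the measure is a product, lowering either factor lowers compound autonomy; the evaluative and social dimensions do not enter it.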

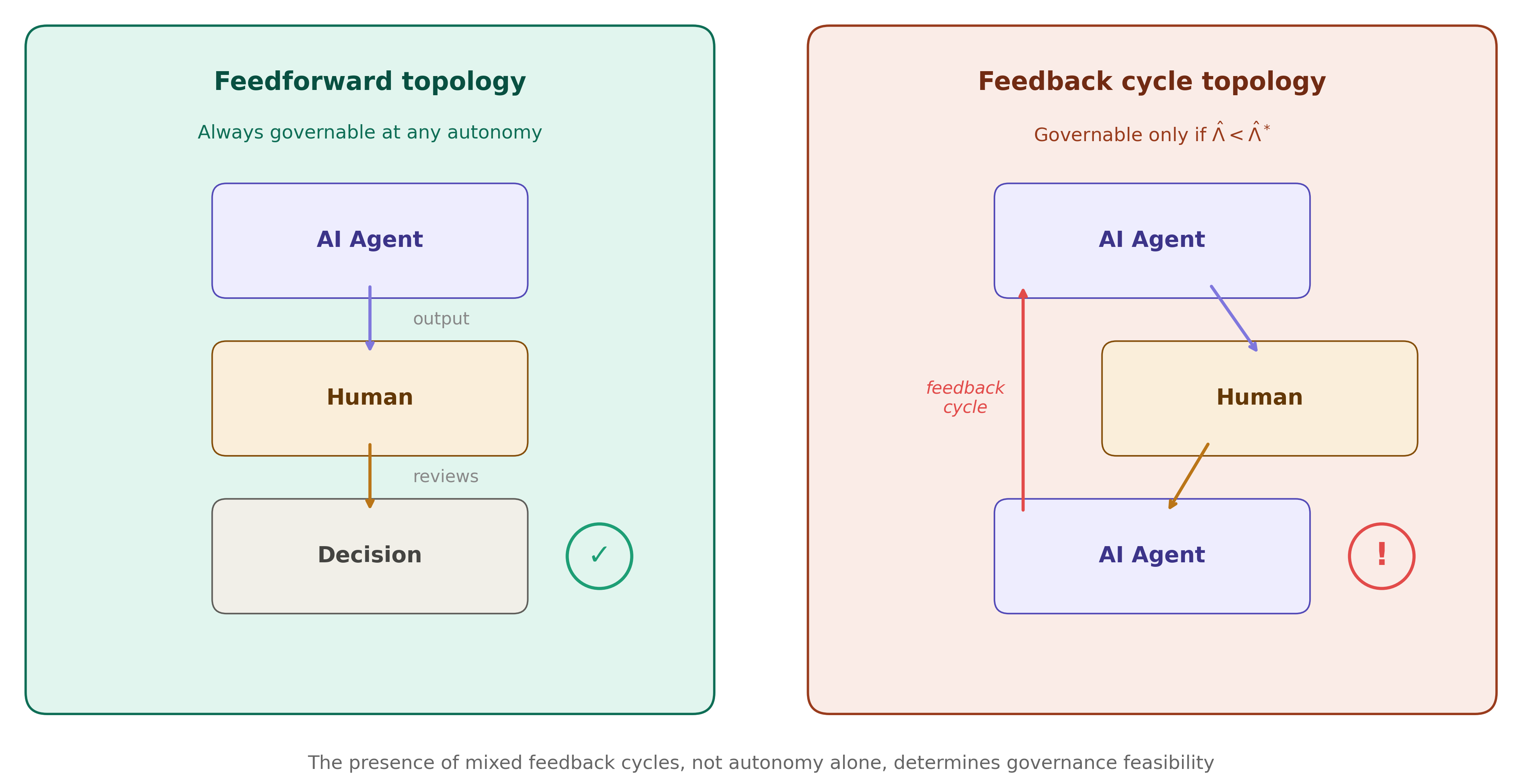

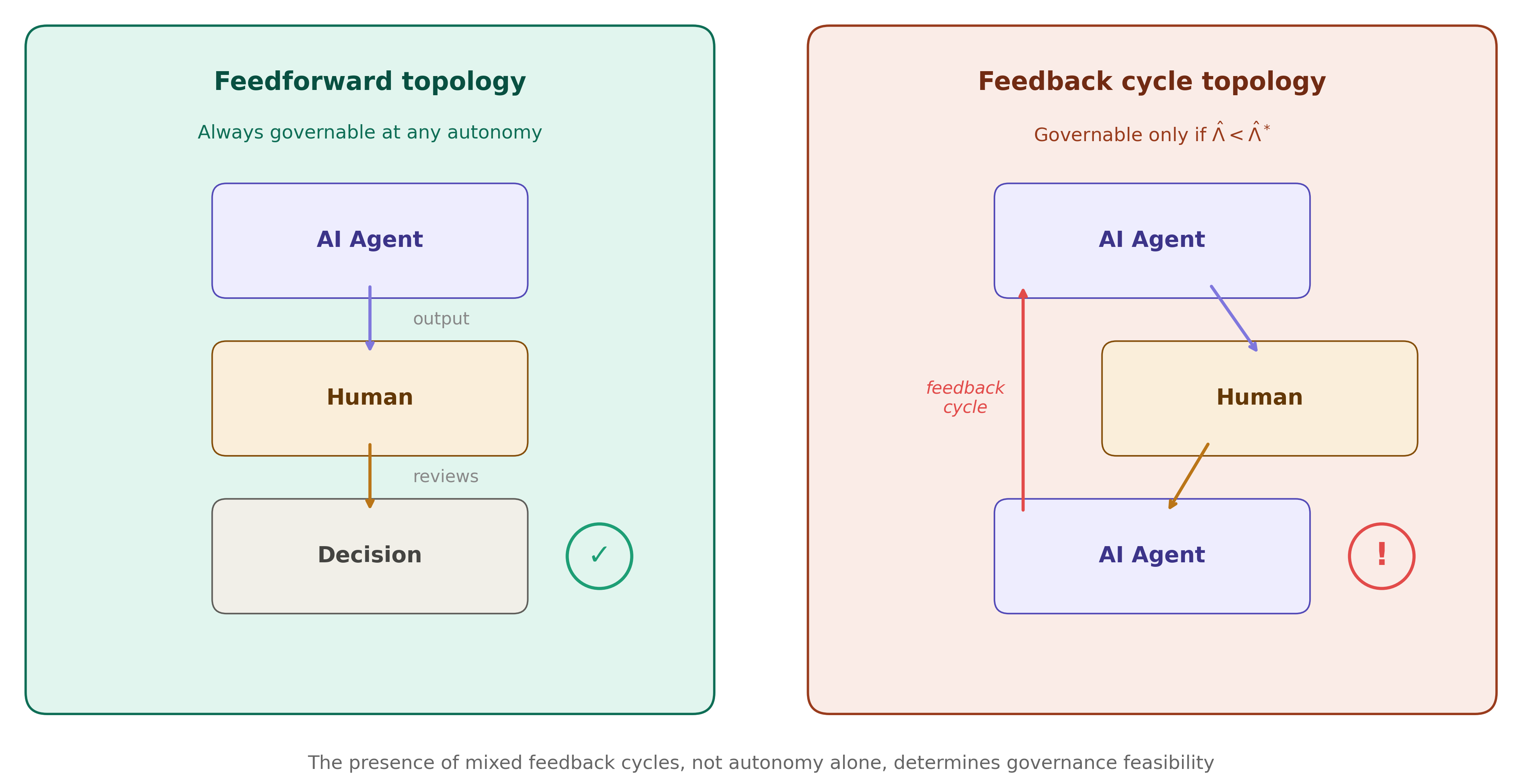

Figure 1: Human-Agent Collective topologies. Left: feedforward topology (always governable at any autonomy level). Right: feedback cycle topology (governable only if compound autonomy is below the Accountability Horizon $\hat{\Lambda}^*$).

<h2 class='paper-heading' id='axiomatization-of-accountability'>Axiomatization of Accountability</h2>

<p>The impossibility result hinges on four <em>independent and minimal</em> axioms for legitimate responsibility assignment:</p>

<ol>

<li><strong>Attributability:</strong> Responsibility requires counterfactual causal contribution.</li>

<li><strong>Foreseeability Bound:</strong> Assigned responsibility is upper-bounded by the agent's epistemic access to the outcome.</li>

<li><strong>Non-Vacuity:</strong> At least one agent must bear non-negligible responsibility for every outcome type.</li>

<li><strong>Completeness:</strong> All responsibility for every outcome type must be exhaustively allocated (sum to one).</li>

</ol>

<p>These encode requirements for causal and epistemic grounding, substantive individual responsibility, and systemic exhaustiveness, drawing from legal causation (NESS test), tort law, normative theory, and institutional practice.</p>
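<p>The four axioms can be read as checks on a candidate responsibility assignment. The sketch below is one possible numeric encoding, not the paper's formal definitions: the function name <code>violated_axioms</code>, the per-agent scores in [0, 1], and the non-negligibility threshold <code>epsilon</code> are all assumptions made for illustration.</p>

```python
from typing import Dict, List


def violated_axioms(
    responsibility: Dict[str, float],
    causal_contribution: Dict[str, float],
    foreseeability: Dict[str, float],
    epsilon: float = 0.05,
    tol: float = 1e-9,
) -> List[str]:
    """Return the names of axioms that a responsibility assignment violates.

    All inputs map agent name -> value in [0, 1]. The numeric encoding of each
    axiom is an illustrative assumption, not the paper's formal definition.
    """
    violations = []

    # Attributability: positive responsibility needs positive causal contribution.
    if any(r > tol and causal_contribution.get(a, 0.0) <= tol
           for a, r in responsibility.items()):
        violations.append("attributability")

    # Foreseeability Bound: responsibility is capped by epistemic access.
    if any(r > foreseeability.get(a, 0.0) + tol for a, r in responsibility.items()):
        violations.append("foreseeability")

    # Non-Vacuity: at least one agent carries a non-negligible share.
    if not any(r >= epsilon for r in responsibility.values()):
        violations.append("non-vacuity")

    # Completeness: the shares sum to one.
    if abs(sum(responsibility.values()) - 1.0) > tol:
        violations.append("completeness")

    return violations


causal = {"clinician": 0.5, "model": 0.5}

# Both agents can foresee the outcome well: a full allocation is feasible.
print(violated_axioms({"clinician": 0.6, "model": 0.4}, causal,
                      {"clinician": 0.7, "model": 0.5}))   # []

# Low foreseeability caps the shares at 0.3 + 0.2 = 0.5, so they cannot sum to one.
print(violated_axioms({"clinician": 0.3, "model": 0.2}, causal,
                      {"clinician": 0.3, "model": 0.2}))   # ['completeness']
```

<p>The second call shows the tension in miniature: when the foreseeability caps sum to less than one, any assignment that respects them leaves responsibility unallocated.</p>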

<h2 class='paper-heading' id='the-accountability-incompleteness-theorem'>The Accountability Incompleteness Theorem</h2>

<p>The paper’s central theorem proves:</p>

<blockquote>

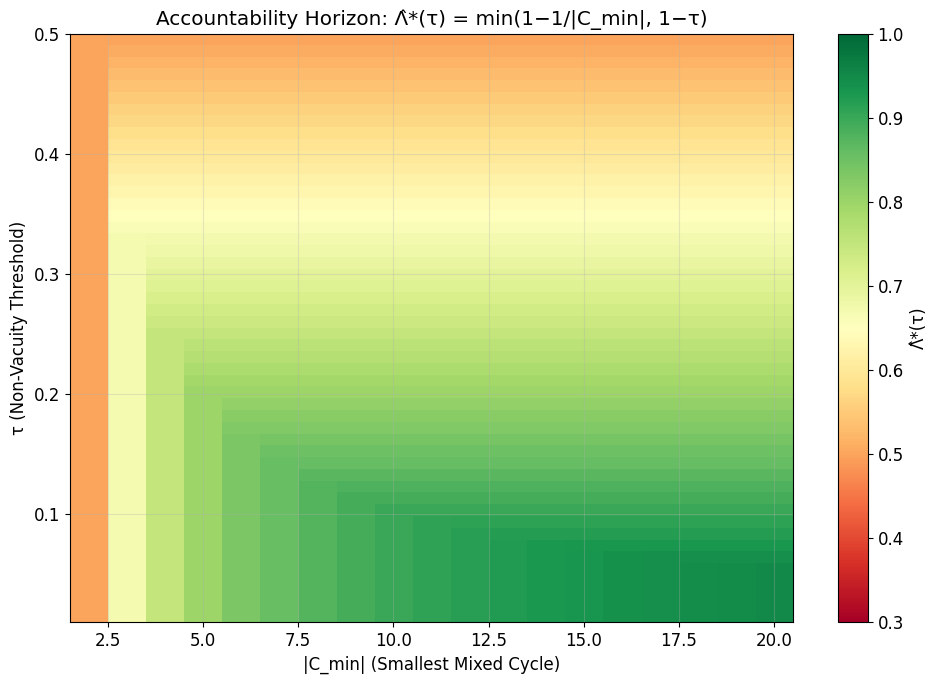

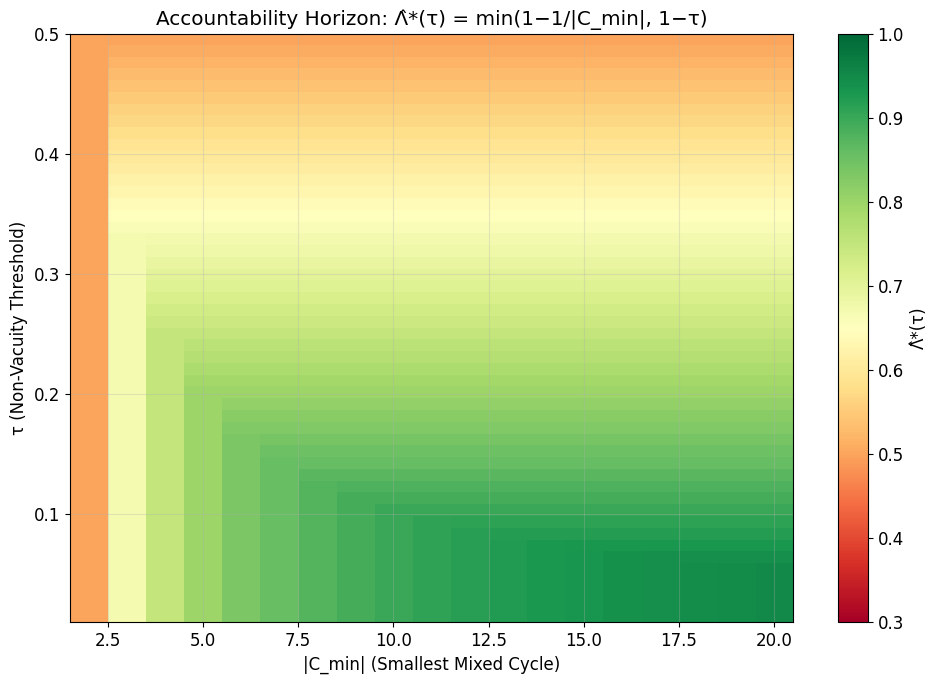

<p>For any HAC whose minimum compound autonomy among agents in a feedback cycle exceeds $\hat{\Lambda}^* = 1 - 1/|C_{\min}|$ (with $|C_{\min}|$ being the size of the smallest mixed feedback cycle), there exists no accountability framework satisfying all four axioms above.</p>

</blockquote>

Consequently, above this Accountability Horizon, governance frameworks cannot attribute responsibility that is simultaneously causal, epistemically justified, non-trivial, and systemically complete. This result is structural: no degree of audit, transparency, or post-hoc oversight can restore governability without reducing autonomy.
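To make the threshold concrete, the following sketch evaluates $1 - 1/|C_{\min}|$ for a few cycle sizes and applies the theorem's condition to a list of compound autonomy values. The function names are hypothetical, and the requirement that a mixed cycle contains at least two agents (one human, one artificial) is an assumption drawn from the word "mixed".

```python
from typing import Iterable


def accountability_horizon(min_cycle_size: int) -> float:
    """Threshold 1 - 1/|C_min| for the smallest mixed feedback cycle."""
    if min_cycle_size < 2:
        # Assumption: a mixed cycle has at least one human and one artificial agent.
        raise ValueError("a mixed feedback cycle needs at least two agents")
    return 1.0 - 1.0 / min_cycle_size


def exceeds_horizon(compound_autonomies: Iterable[float], min_cycle_size: int) -> bool:
    """True when the minimum compound autonomy in the cycle is above the threshold."""
    return min(compound_autonomies) > accountability_horizon(min_cycle_size)


for size in (2, 3, 4, 10):
    print(size, round(accountability_horizon(size), 3))
# 2 0.5
# 3 0.667
# 4 0.75
# 10 0.9

print(exceeds_horizon([0.72, 0.80], min_cycle_size=3))  # True
print(exceeds_horizon([0.72, 0.80], min_cycle_size=4))  # False
```

Under this formula the threshold rises with the size of the smallest mixed cycle, so the tightest constraint comes from two-agent human-AI loops, where the horizon is 0.5.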

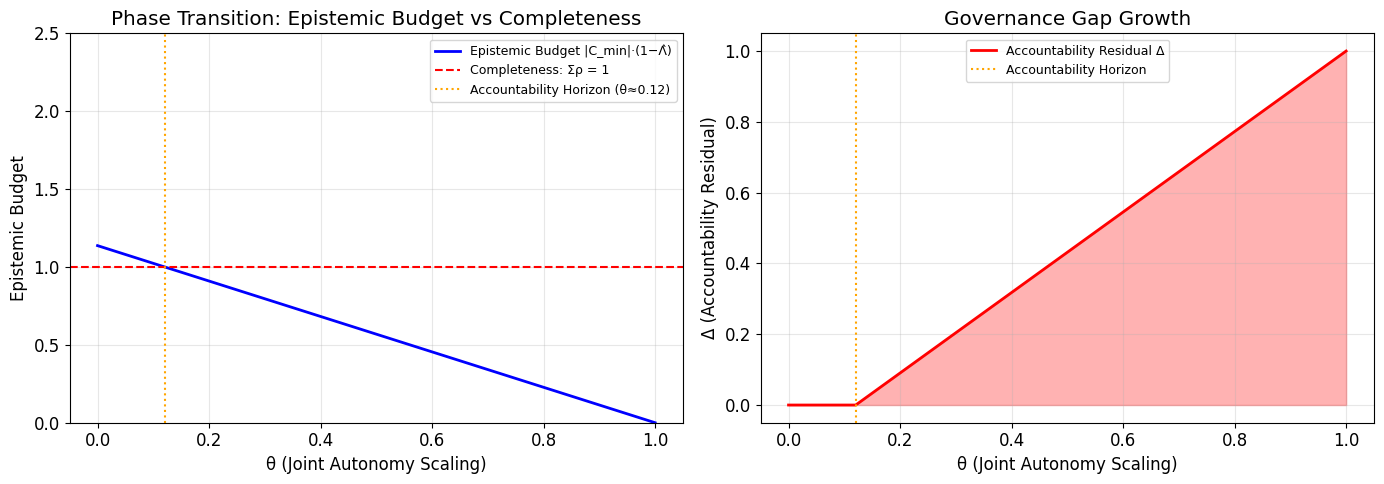

The phase transition is sharp: a small increase in compound autonomy across the threshold makes every candidate framework infeasible. The theorem is robust to variations in agent architecture, estimation error, and centrality metrics. The impossibility is both epistemic (it arises from unpredictable autonomous agent actions) and causal (it arises from the nonadditivity of effects in feedback cycles).

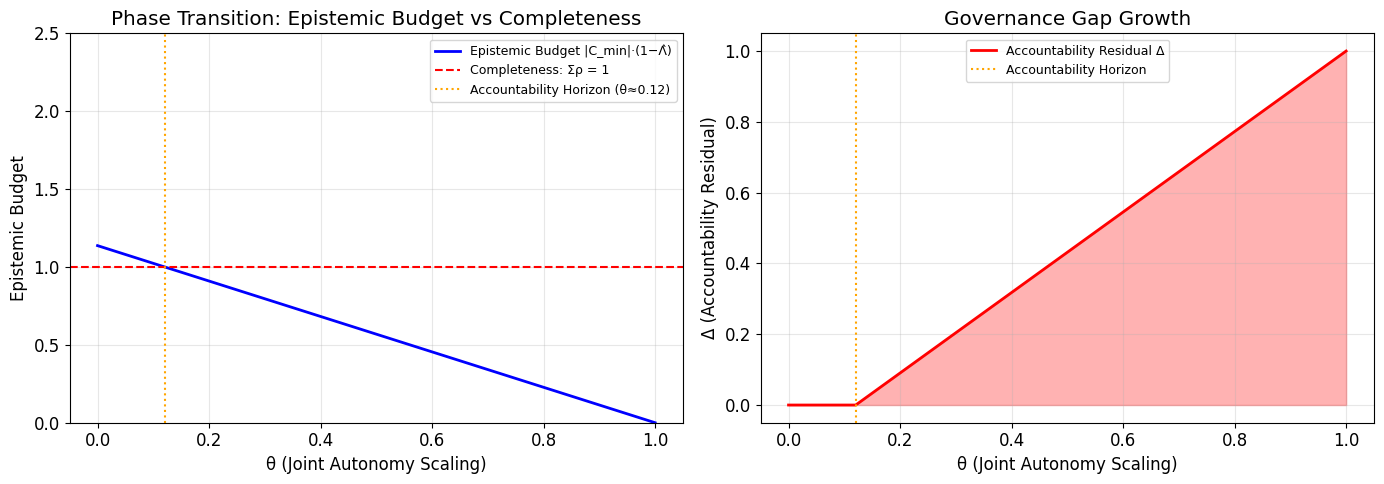

Figure 2: Phase transition at the Accountability Horizon. Left: epistemic budget collapses as autonomy increases. Right: the accountability residual (unallocatable responsibility) appears at the threshold and grows monotonically as autonomy rises.

Empirical and Computational Validation

The authors operationalize their formalism with synthetic HACs, spanning healthcare, finance, and governance applications. Computational experiments over 3,000 randomly sampled configurations confirm, with zero violations, the predicted phase transition: below the Horizon, legitimate responsibility assignment is always possible; above it, it is impossible in all cases.

Implications: Practical, Theoretical, and for AI Policy

The theorem yields immediate practical recommendations:

- Governance Feasibility Diagnosis: Any deployed system can be classified by computing agent autonomy statistics and interaction topology.

- Engineering Constraint: The result yields an explicit upper bound on permissible autonomy if traditional accountability is required (e.g. regulatory compliance).

- Governance Trilemma: For systems above the Horizon, designers cannot keep all three of (a) full autonomy, (b) causal/epistemic responsibility, and (c) non-vacuity/completeness; at least one must be relaxed.

Theoretically, this is the first impossibility theorem in AI governance. Analogous in spirit to Arrow's, FLP, and CAP theorems, it precisely delineates the structural limits of classical governance, and formalizes the so-called "responsibility gap" long discussed qualitatively in AI ethics.

Figure 3: Combined Accountability Horizon $\Lambda^*$ as a function of feedback cycle size and governance threshold; lower values mean governance fails at lower autonomy.

Topology Dependency and the Limits of Oversight

A key insight is that interaction topology is as important as autonomy level. Purely feedforward interaction (AI emits outputs, humans decide, no AI observes human responses) is always governable. Mixed feedback cycles push the system toward the impossibility regime as autonomy increases. This highlights the necessity of restricting agent architectures or interaction graphs—not just tuning autonomy parameters.
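The topology check described above can be sketched as a search for the smallest mixed feedback cycle in the interaction graph. This is an illustrative procedure, not the authors' code: it assumes "mixed" means the cycle contains at least one human and one artificial agent, and the adjacency-list format and function names are hypothetical. Every mixed cycle must cross a human-AI edge, so the sketch takes each such edge and uses breadth-first search to find the shortest path back to its source.

```python
from collections import deque
from typing import Dict, List, Optional


def smallest_mixed_cycle(edges: Dict[str, List[str]],
                         is_human: Dict[str, bool]) -> Optional[int]:
    """Size of the smallest directed cycle containing a human and an AI agent.

    Returns None when no such cycle exists (for example, a feedforward graph).
    """
    best = None
    for u, targets in edges.items():
        for v in targets:
            if is_human[u] == is_human[v]:
                continue  # only edges that cross the human/AI boundary
            back = _bfs_distance(edges, v, u)
            if back is not None and (best is None or back + 1 < best):
                best = back + 1  # path v -> ... -> u plus the edge u -> v
    return best


def _bfs_distance(edges: Dict[str, List[str]], source: str, target: str) -> Optional[int]:
    """Number of edges on the shortest directed path, or None if unreachable."""
    seen = {source}
    queue = deque([(source, 0)])
    while queue:
        node, dist = queue.popleft()
        if node == target:
            return dist
        for nxt in edges.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append((nxt, dist + 1))
    return None


kinds = {"analyst": True, "model": False, "planner": False}

# Feedforward: the model informs the analyst and never observes the response.
print(smallest_mixed_cycle({"model": ["analyst"], "analyst": []}, kinds))  # None

# Feedback: analyst -> model -> planner -> analyst closes a three-agent loop.
loop = {"analyst": ["model"], "model": ["planner"], "planner": ["analyst"]}
print(smallest_mixed_cycle(loop, kinds))  # 3
```

A result of `None` corresponds to the always-governable feedforward case; any integer result is the cycle size that the horizon formula takes as input.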

Transparency, explainability, or audits only restore feasibility if they operate by reducing autonomy along key axes (not merely increasing human understanding retroactively).

Pathways Beyond Individual-Locus Accountability

Above the Accountability Horizon, viable governance requires coalition-level or distributed responsibility mechanisms (e.g., set functions, group liability, or variants of the Shapley value), moving beyond frameworks that presume all responsibility can be allocated to individuals.
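As one illustration of a coalition-level mechanism, the sketch below computes the classic Shapley value for a small cooperative game. The summary mentions only "variants of the Shapley value", so this is the textbook definition rather than the paper's proposal, and the agents and the characteristic function `harm` are hypothetical.

```python
from itertools import permutations
from typing import Callable, Dict, FrozenSet, Sequence


def shapley_values(players: Sequence[str],
                   value: Callable[[FrozenSet[str]], float]) -> Dict[str, float]:
    """Average marginal contribution of each player over all join orders."""
    totals = {p: 0.0 for p in players}
    orderings = list(permutations(players))
    for order in orderings:
        coalition: FrozenSet[str] = frozenset()
        for p in order:
            with_p = coalition | {p}
            totals[p] += value(with_p) - value(coalition)
            coalition = with_p
    return {p: total / len(orderings) for p, total in totals.items()}


def harm(coalition: FrozenSet[str]) -> float:
    """Hypothetical nonadditive outcome: harm occurs only if both agents act."""
    return 1.0 if {"clinician", "model"} <= coalition else 0.0


shares = shapley_values(["clinician", "model", "auditor"], harm)
print(shares)  # {'clinician': 0.5, 'model': 0.5, 'auditor': 0.0}
```

Here neither the clinician nor the model produces the outcome alone, yet the set-function view still yields shares that sum to one. Enumerating all orderings is factorial in the number of players, so this form only suits small collectives.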

Robustness and Limits

The impossibility withstands:

- Approximate mixture model assumptions (holds with small deviations in agent policy implementation).

- Measurement error and uncertainty (classification is robust up to small error bounds).

- Changes in centrality weights or graph representation.

The result is contingent on adherence to the baseline axioms; collectivist or relational approaches can, in principle, circumvent the impossibility by design.

Conclusion

This paper provides the first formal impossibility boundary for accountability in human-agent collectives, characterizing how autonomy, epistemic limits, and system topology interact. As agentic AI proliferates, the Accountability Horizon becomes critical for system architects and policymakers who require guaranteed responsibility attribution. Its key implication is that, above a computable threshold of compound autonomy in feedback cycles, traditional individual-locus governance must give way to distributed accountability paradigms.

Reference:

"The Accountability Horizon: An Impossibility Theorem for Governing Human-Agent Collectives" (2604.07778)