- The paper introduces a two-stage segmentation mechanism using uncertainty estimation to iteratively refine ambiguous cloud regions for improved accuracy.

- The approach integrates a dual-scale CNN–Mamba network that fuses local and global spatial features to capture detailed cloud morphologies.

- Empirical evaluations demonstrate that CloudMamba outperforms conventional models with up to 91.24% mIoU and 95.42% F1 score on benchmark datasets.

CloudMamba: Uncertainty-Guided Dual-Scale Mamba Network for Cloud Detection in Remote Sensing Imagery

Introduction

Cloud detection in remote sensing imagery is critical for numerous geospatial applications, but it remains challenging because thin-cloud regions and cloud boundaries are ambiguous and because complex, fragmented cloud morphologies demand precise delineation. The CloudMamba framework introduces two major innovations: an uncertainty-guided two-stage segmentation mechanism and a dual-scale CNN–Mamba hybrid network. These address, respectively, the need for targeted refinement in ambiguous regions and the challenge of capturing spatial dependencies across multiple scales under linear computational complexity.

CloudMamba Architecture

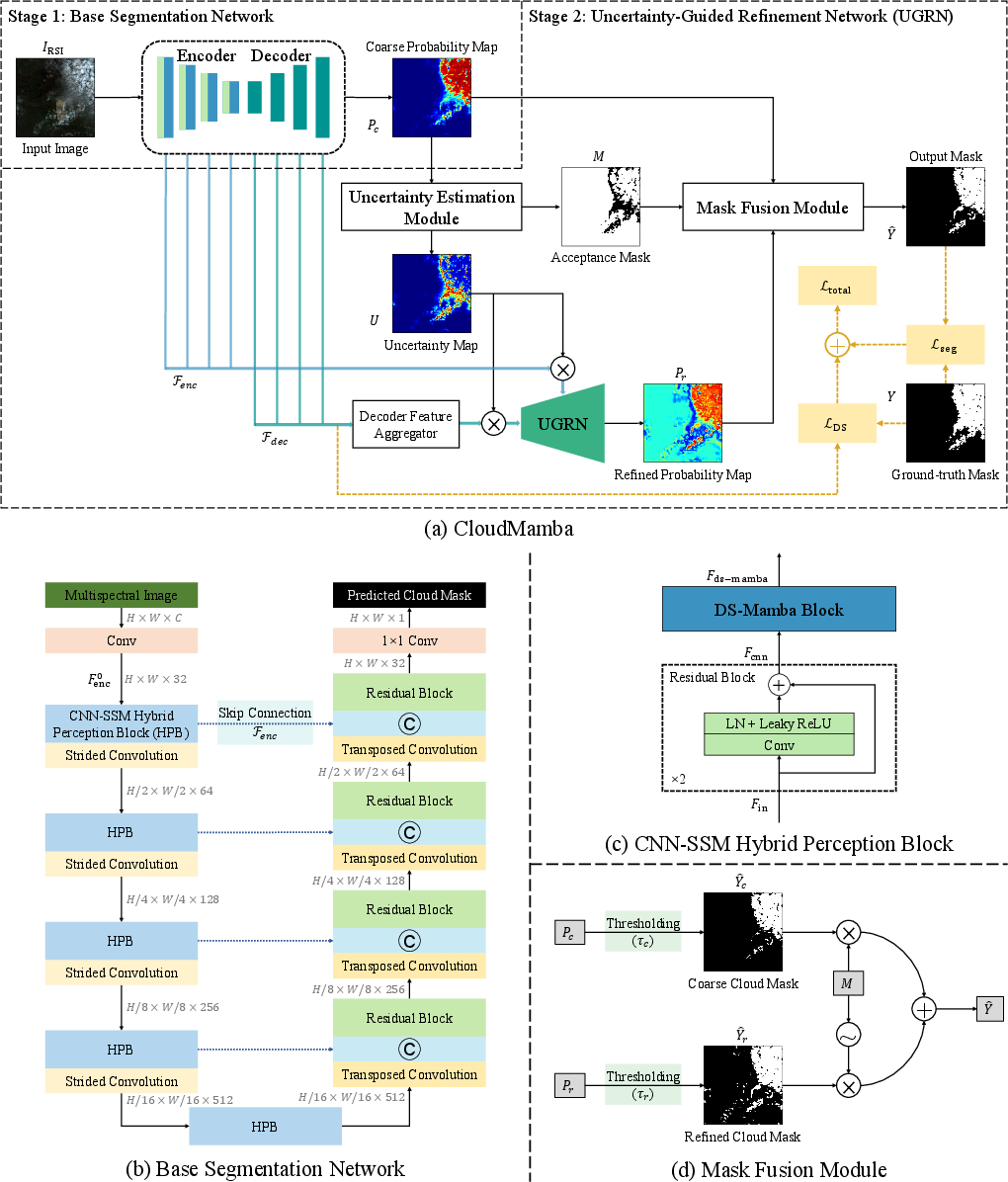

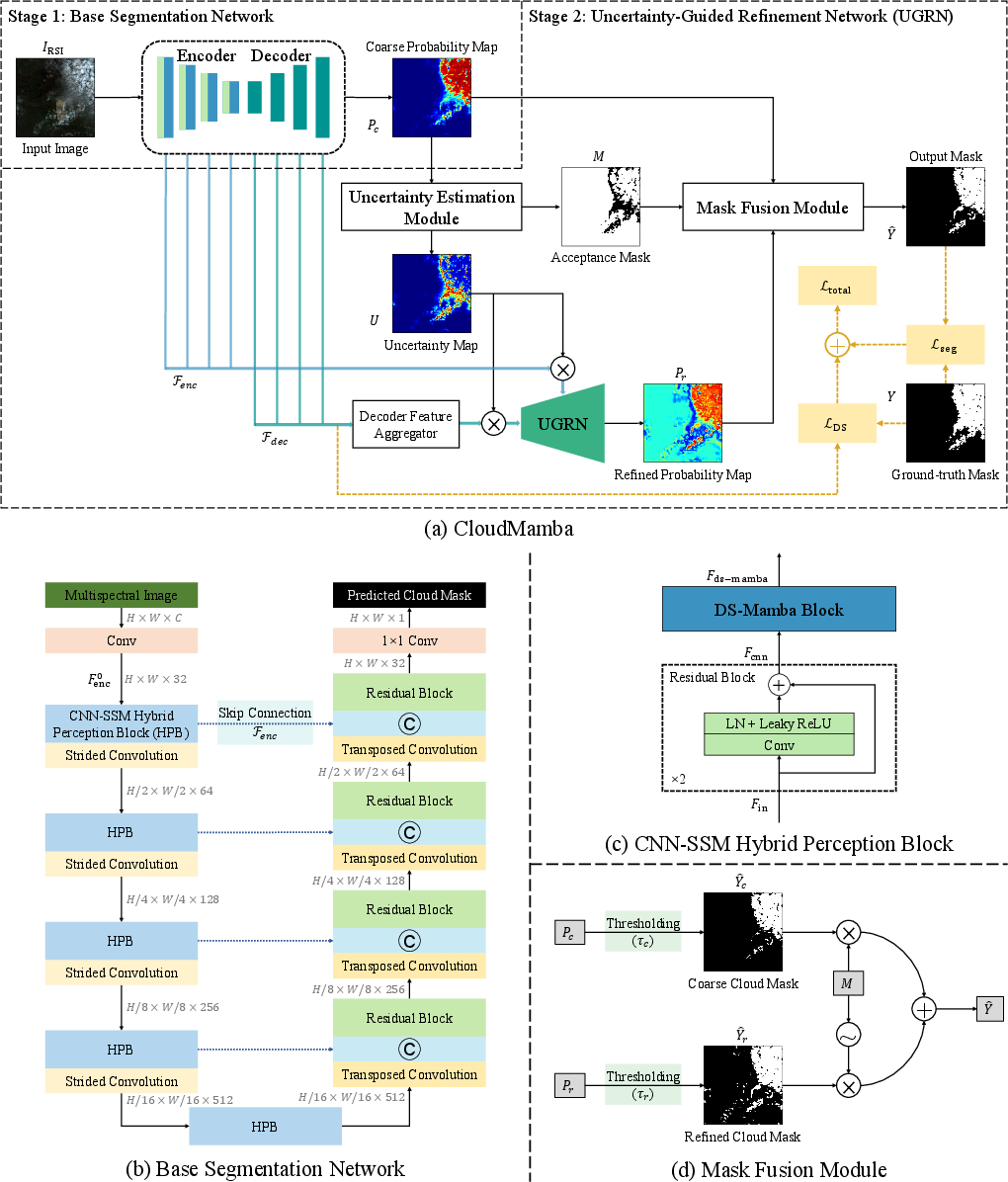

CloudMamba adopts a cascaded two-stage framework comprising a base segmentation network with an encoder-decoder structure and a second-stage Uncertainty-Guided Refinement Network (UGRN). The base network leverages a CNN–State Space Model (SSM) hybrid, integrating local feature extraction with global context modeling. The UGRN focuses computational resources on low-confidence regions identified via uncertainty estimation, enhancing segmentation where ambiguity is highest.

Figure 1: The overall CloudMamba framework, highlighting the encoder-decoder base segmentation and the uncertainty-guided second-stage refiner.

The encoder incorporates Hierarchical Perception Blocks (HPBs) that stack residual CNNs with dual-scale Mamba modules. This hybrid structure is designed to capture both fine-grained boundary details and global cloud morphology. Decoder stages are linked to the encoder through skip connections to preserve high-resolution spatial information.

The key innovation in uncertainty handling is the explicit estimation of per-pixel uncertainty from the coarse probability map. Only pixels whose uncertainty exceeds a predefined threshold are refined in the second stage, so the additional computation is concentrated where the first-stage prediction is least reliable.

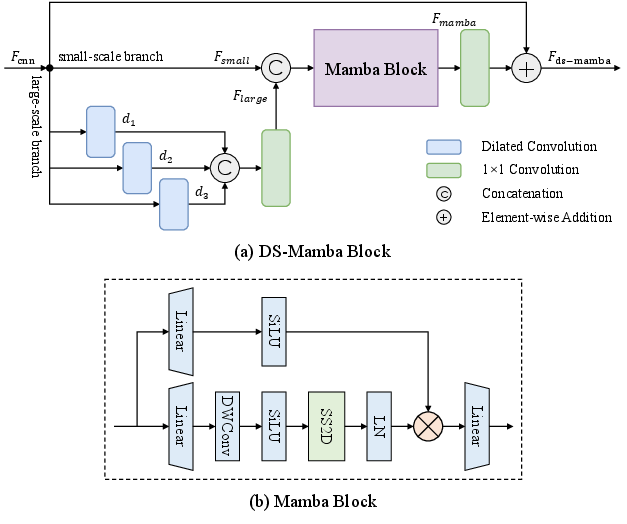

Dual-Scale Mamba Module

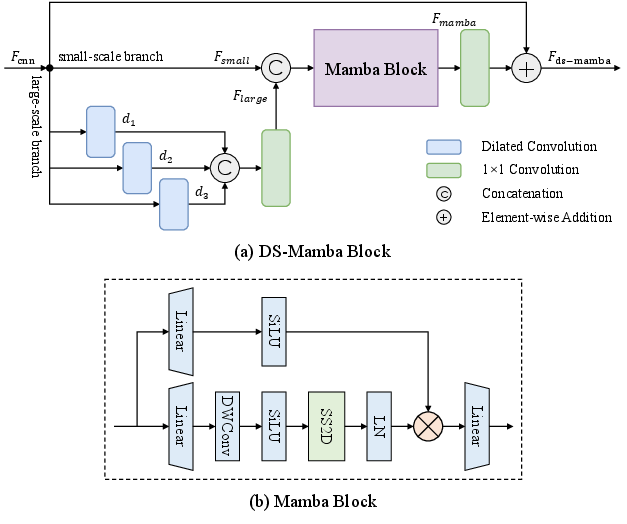

CloudMamba's multi-scale feature extraction is centered on the Dual-Scale Mamba (DS-Mamba) block. Extending SSMs to 2D, the DS-Mamba module comprises two parallel branches: a small-scale branch operating on standard features and a large-scale branch utilizing multiple dilated convolutions to expand receptive fields. Their concatenation is input to a shared Mamba block that fuses local and global context while preserving efficiency.
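The dual-branch dataflow described above can be illustrated with a minimal pure-Python sketch. It is not the authors' implementation: the 3x3 kernel size, the averaging of the dilated outputs, the identity small-scale branch, and the names `dilated_conv3x3` and `dual_scale_features` are all assumptions made for illustration, and the shared Mamba block that would consume the concatenated channels is omitted.

```python
def dilated_conv3x3(fmap, kernel, dilation):
    """Zero-padded 3x3 convolution with the given dilation on a 2D list."""
    h, w = len(fmap), len(fmap[0])
    out = [[0.0] * w for _ in range(h)]
    for i in range(h):
        for j in range(w):
            acc = 0.0
            for ki in range(3):
                for kj in range(3):
                    # Kernel taps are spaced `dilation` pixels apart.
                    y = i + (ki - 1) * dilation
                    x = j + (kj - 1) * dilation
                    if 0 <= y < h and 0 <= x < w:
                        acc += kernel[ki][kj] * fmap[y][x]
            out[i][j] = acc
    return out


def dual_scale_features(fmap, kernel, dilations=(1, 2, 4)):
    """Return [small_scale, large_scale] channels for a shared sequence model."""
    h, w = len(fmap), len(fmap[0])
    # Small-scale branch: features passed through unchanged (assumption).
    small = [row[:] for row in fmap]
    # Large-scale branch: several dilated convolutions, averaged (assumption).
    branches = [dilated_conv3x3(fmap, kernel, d) for d in dilations]
    large = [
        [sum(b[i][j] for b in branches) / len(branches) for j in range(w)]
        for i in range(h)
    ]
    # Channel-wise concatenation of the two branches.
    return [small, large]
```

With a box kernel on a constant map, interior pixels of the large-scale channel keep their value while border pixels shrink, because the dilated taps fall into the zero padding.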

Figure 2: Detailed illustration of the Dual-Scale Mamba (DS-Mamba) block, showing parallel multi-dilation convolution fusion prior to Mamba sequence modeling.

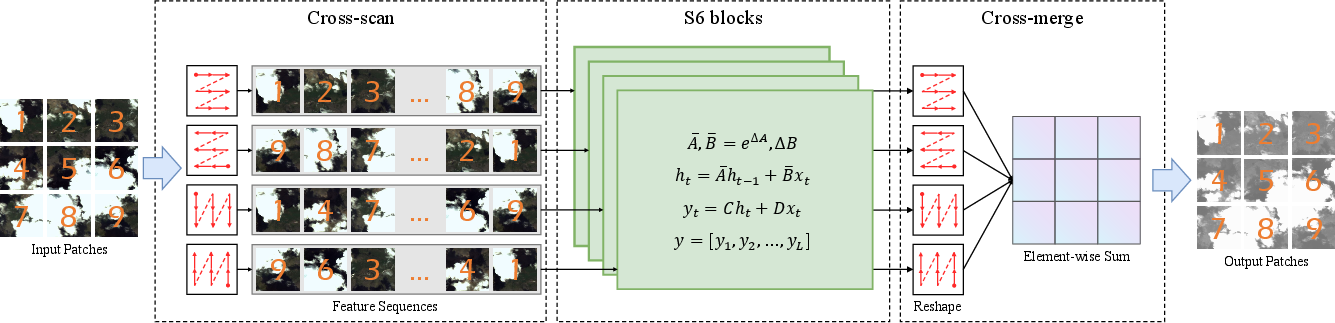

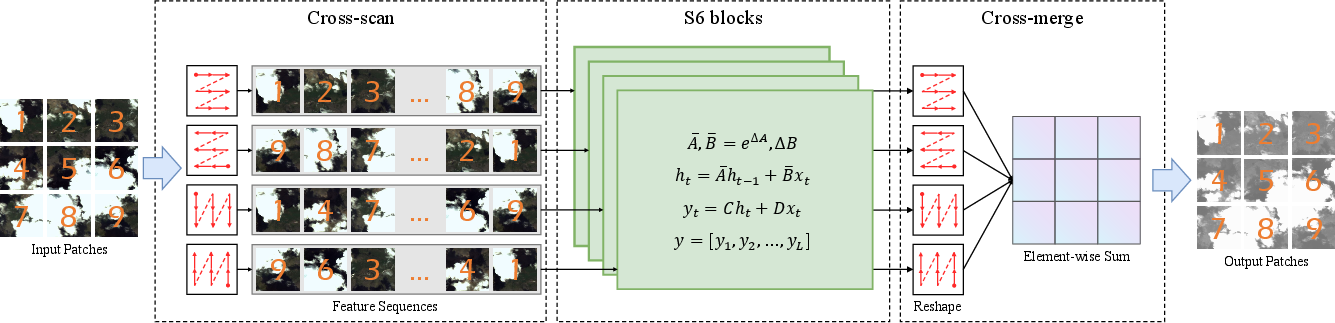

The Mamba core employs a 2D Selective Scan (SS2D) mechanism. Four-directional serializations scan the feature map to ensure comprehensive spatial dependency modeling. The outputs are merged to reconstitute a globally informed 2D feature map.
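The scan-and-merge idea can be sketched as follows. The four routes used here (row-major, column-major, and their reverses) are the ones commonly used in SS2D-style modules and are assumed rather than taken from the paper. The running mean in `cumulative_mean_scan` is only a stand-in for the learned selective scan, and all function names are hypothetical.

```python
def ss2d_scan_orders(h, w):
    """Four index sequences: row-major, its reverse, column-major, its reverse."""
    row_major = [(i, j) for i in range(h) for j in range(w)]
    col_major = [(i, j) for j in range(w) for i in range(h)]
    return [row_major, row_major[::-1], col_major, col_major[::-1]]


def cumulative_mean_scan(seq):
    """Stand-in for the selective scan: a causal running mean over the sequence."""
    out, total = [], 0.0
    for t, value in enumerate(seq, start=1):
        total += value
        out.append(total / t)
    return out


def four_direction_merge(fmap):
    """Scan a 2D map along four routes and average the results per pixel."""
    h, w = len(fmap), len(fmap[0])
    merged = [[0.0] * w for _ in range(h)]
    for order in ss2d_scan_orders(h, w):
        seq = [fmap[i][j] for i, j in order]      # serialize 2D -> 1D
        scanned = cumulative_mean_scan(seq)       # causal 1D sequence model
        for (i, j), value in zip(order, scanned): # scatter back to 2D
            merged[i][j] += value / 4.0
    return merged
```

Each single scan is causal, so a pixel only sees positions earlier in that route. Combining a route with its reverse is what lets every output pixel depend on the whole map.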

Figure 3: The four-directional scanning scheme of the 2D Selective Scan (SS2D) module, crucial for capturing anisotropic dependencies.

Empirically, a progressive dilation configuration ({1,2,4}) for the large-scale branch achieves the best trade-off between context aggregation and spatial precision.
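To see why these rates widen the context progressively, consider the spatial extent covered by a single dilated kernel. The sketch below assumes 3x3 kernels, which the summary does not state, and the helper name `effective_kernel_size` is hypothetical.

```python
def effective_kernel_size(kernel_size, dilation):
    """Spatial extent covered by one dilated kernel along one axis."""
    return kernel_size + (kernel_size - 1) * (dilation - 1)


# Assumed 3x3 kernels with the dilation rates reported in the text.
sizes = {d: effective_kernel_size(3, d) for d in (1, 2, 4)}
print(sizes)  # {1: 3, 2: 5, 4: 9}
```

Under this assumption the three convolutions cover 3, 5, and 9 pixels per axis, so context grows without leaving large gaps between the sampled positions.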

Uncertainty-Guided Two-Stage Segmentation

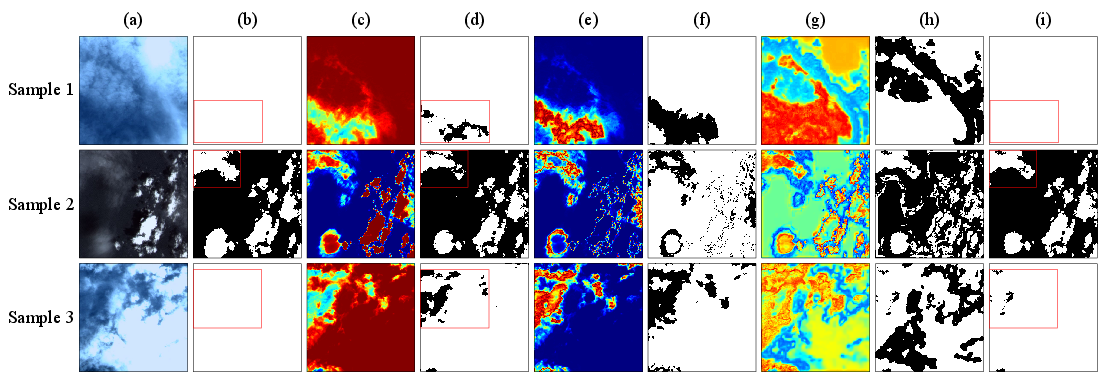

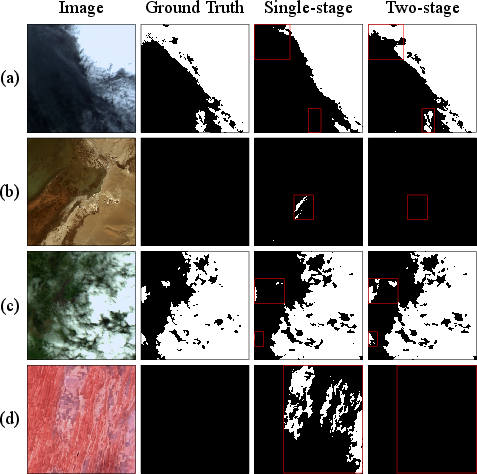

The segmentation strategy in CloudMamba departs from single-pass forward inference. The first stage produces a coarse probability map, from which an uncertainty map is deterministically computed. Pixels whose predicted cloud probability P lies close to the decision boundary (that is, |P − 0.5| is small) have high uncertainty and are flagged for reprocessing.
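A minimal sketch of such a deterministic uncertainty map is shown below. The normalization U = 1 − 2|P − 0.5| and the threshold value of 0.6 are assumptions chosen for illustration; the summary only states that a small |P − 0.5| marks a pixel as uncertain.

```python
def uncertainty_map(prob_map):
    """Map each probability to [0, 1]: 1 at P = 0.5, 0 at P = 0 or P = 1."""
    return [[1.0 - 2.0 * abs(p - 0.5) for p in row] for row in prob_map]


def uncertain_mask(prob_map, threshold=0.6):
    """Flag pixels whose uncertainty exceeds the (hypothetical) threshold."""
    return [[u > threshold for u in row] for row in uncertainty_map(prob_map)]


coarse = [[0.5, 0.95],
          [0.1, 0.4]]
mask = uncertain_mask(coarse)
print(mask)  # [[True, False], [False, True]]
```

Because the map is a fixed function of the coarse probabilities, it adds no learnable parameters and can be computed in a single pass over the first-stage output.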

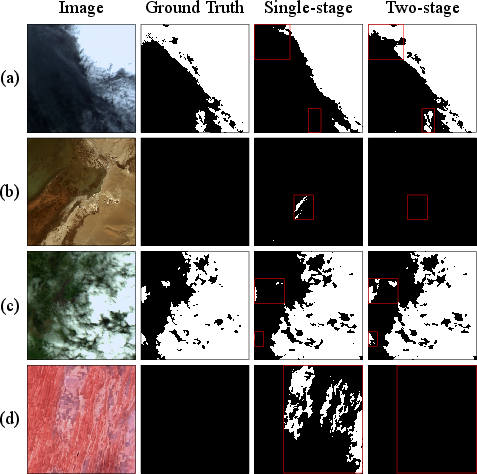

The UGRN reuses encoder and decoder features, modulated by the uncertainty map, ensuring that refinement targets hard regions without redundant global computation. This leads to focused improvements in thin clouds, boundaries, and ambiguous backgrounds—problematic cases for prior architectures.
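One simple way to picture selective refinement is a masked merge of the two stages' outputs, sketched below. This is an illustrative assumption, not the UGRN itself, which modulates reused features rather than blending final probabilities. The function names and the precomputed boolean mask are hypothetical.

```python
def fuse_with_mask(coarse, refined, mask):
    """Take refined probabilities only where the mask marks a pixel as uncertain."""
    return [
        [r if m else c for c, r, m in zip(c_row, r_row, m_row)]
        for c_row, r_row, m_row in zip(coarse, refined, mask)
    ]


def refined_fraction(mask):
    """Share of pixels that the second stage actually changes."""
    flat = [m for row in mask for m in row]
    return sum(flat) / len(flat)


coarse = [[0.90, 0.55], [0.05, 0.45]]
refined = [[0.10, 0.80], [0.70, 0.20]]
mask = [[False, True], [False, True]]
fused = fuse_with_mask(coarse, refined, mask)
print(fused)                   # [[0.9, 0.8], [0.05, 0.2]]
print(refined_fraction(mask))  # 0.5
```

Confident first-stage predictions pass through unchanged, so the second stage cannot degrade regions that were already segmented reliably.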

Figure 4: Visual comparison between single-stage and CloudMamba two-stage segmentation, highlighting rectification of missed thin-cloud regions in the second stage.

Empirical Evaluation

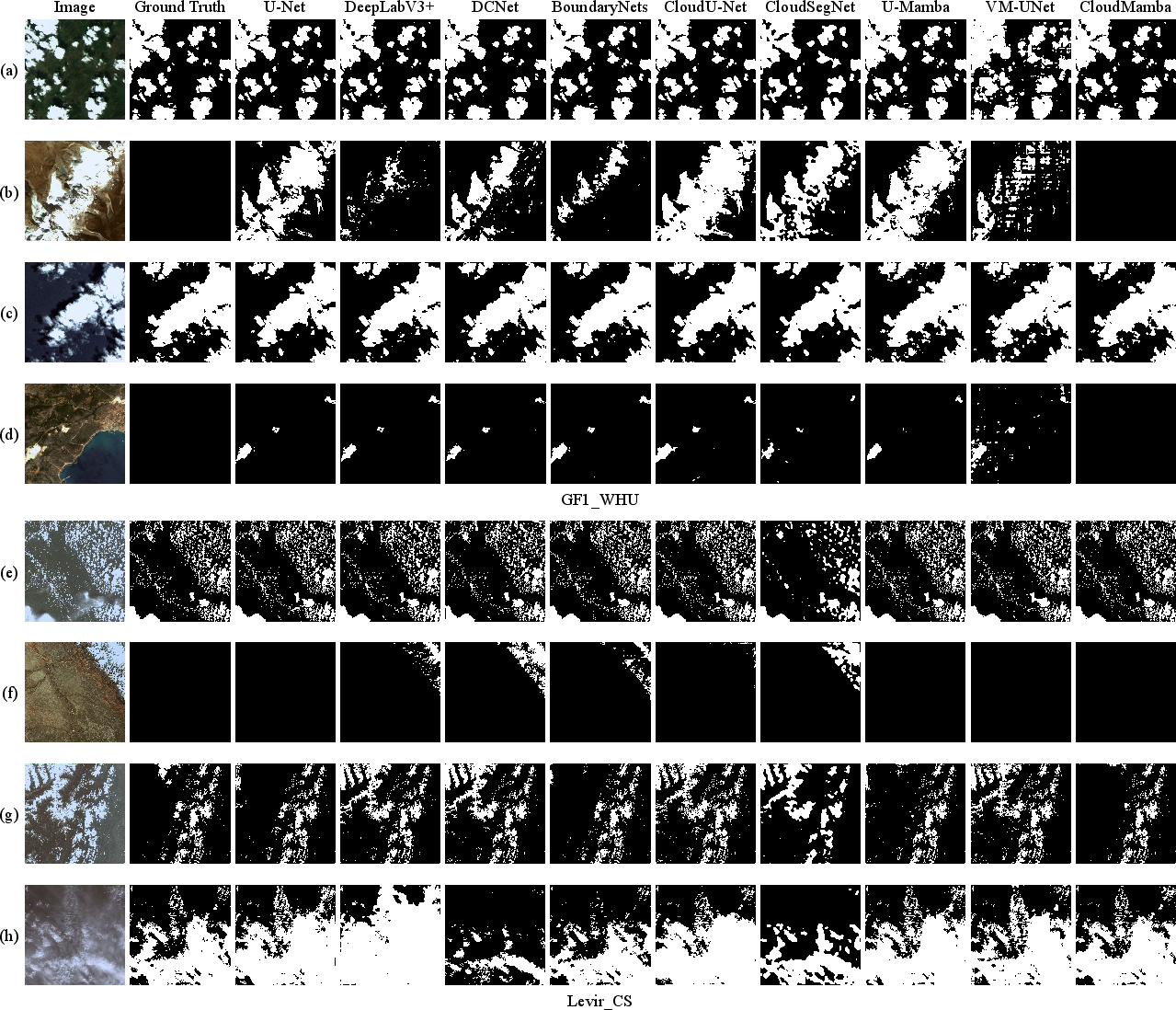

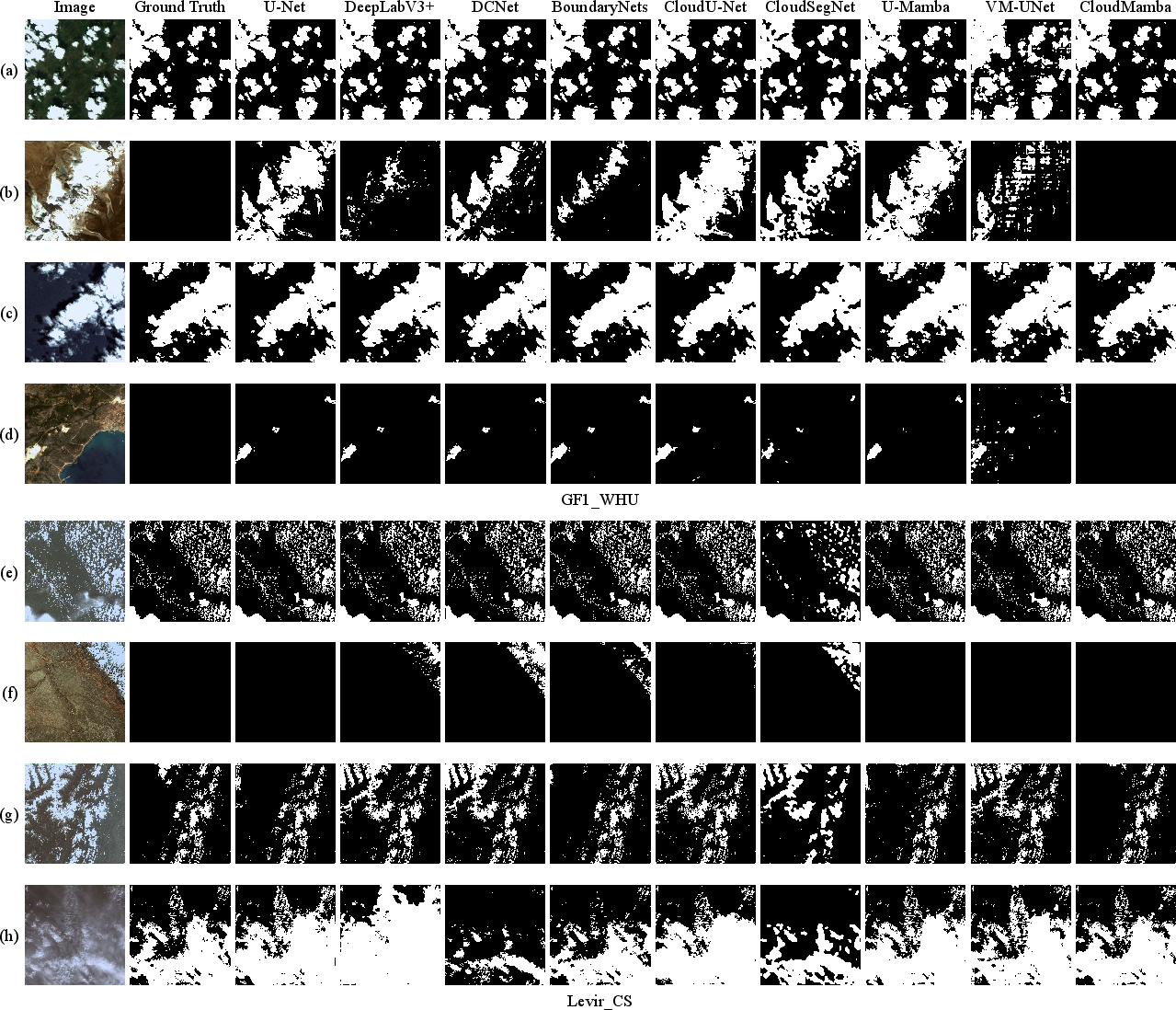

CloudMamba is evaluated on the GF1_WHU and Levir_CS datasets. Across mIoU, F1, and Overall Accuracy (OA), CloudMamba surpasses the classical U-Net and DeepLabV3+ as well as state space model-based variants such as U-Mamba and VM-UNet. On GF1_WHU, CloudMamba achieves mIoU 89.27%, F1 94.33%, and OA 96.78%. On Levir_CS, the scores are mIoU 91.24%, F1 95.42%, and OA 98.31%. The improvements, though numerically modest on some metrics, are consistent across diverse and difficult scenarios.
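For reference, the three reported metrics can be computed from a binary confusion matrix as sketched below. The sketch assumes a two-class (cloud versus clear) setting with mIoU averaged over both classes and F1 computed for the cloud class. The paper's exact evaluation protocol may differ, and the function names are hypothetical.

```python
def confusion_counts(pred, target):
    """Count TP, FP, FN, TN for binary masks given as nested lists of 0/1."""
    tp = fp = fn = tn = 0
    for p_row, t_row in zip(pred, target):
        for p, t in zip(p_row, t_row):
            if p and t:
                tp += 1
            elif p and not t:
                fp += 1
            elif t:
                fn += 1
            else:
                tn += 1
    return tp, fp, fn, tn


def segmentation_metrics(pred, target):
    """Return mIoU (mean over cloud and clear), cloud-class F1, and OA."""
    tp, fp, fn, tn = confusion_counts(pred, target)
    iou_cloud = tp / (tp + fp + fn) if (tp + fp + fn) else 1.0
    iou_clear = tn / (tn + fp + fn) if (tn + fp + fn) else 1.0
    f1 = 2 * tp / (2 * tp + fp + fn) if (2 * tp + fp + fn) else 1.0
    oa = (tp + tn) / (tp + fp + fn + tn)
    return {"mIoU": (iou_cloud + iou_clear) / 2, "F1": f1, "OA": oa}


metrics = segmentation_metrics([[1, 1], [0, 0]], [[1, 0], [0, 0]])
```

In this toy case a single false-positive pixel out of four gives an OA of 0.75 but a cloud IoU of only 0.5, which illustrates why mIoU is typically the most demanding of the three metrics.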

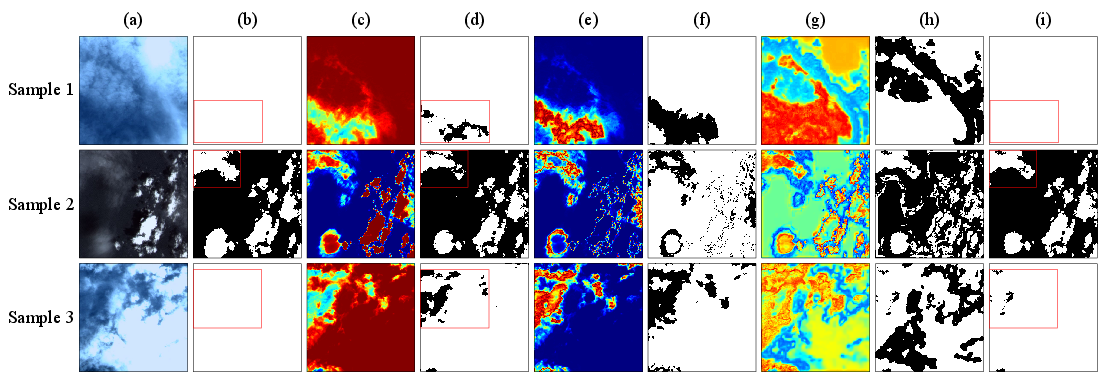

Crucially, evaluation on a hard-sample subset characterized by high model uncertainty shows a 1.6-point improvement in mIoU over the single-stage variant, evidencing the practical effectiveness of the uncertainty-guided mechanism.

Qualitative comparisons substantiate these metrics, with CloudMamba demonstrating superior suppression of false positives over snow and bright ground, and more accurate continuity in fragmented and thin clouds.

Figure 5: Visual comparison of CloudMamba and other methods across complex scenes; CloudMamba outputs show reduced over-segmentation and improved edge fidelity.

Figure 6: Stepwise visualization of CloudMamba intermediate results, showing uncertain regions identified and corrected between stages.

Ablation and Component Analysis

Ablation studies indicate that the dual-scale architecture outperforms both CNN-only and standard single-scale Mamba designs by approximately 1.5–2% on key metrics. The DS-Mamba’s early fusion of different-scale features via a shared Mamba block is shown to yield greater cross-scale interaction than late-fusion variants. The uncertainty-guided second stage further improves F1 and mIoU, especially on ambiguous and boundary-dense samples.

Additionally, analysis of the dilation rates in the DS-Mamba large-scale branch indicates that the multi-scale configuration (d1, d2, d3) = (1, 2, 4) gives the best results, while receptive fields that are either too small or too large degrade fine-grained segmentation.

Theoretical and Practical Implications

CloudMamba's dual-scale SSM approach demonstrates that linear-complexity global modeling can be combined with rich local detail extraction, avoiding the quadratic cost of Transformer-style self-attention. The uncertainty-guided refinement mechanism offers a template for semiautonomous, region-specific segmentation refinement, enabling accuracy improvements without incurring significant computational penalties.
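The scaling argument can be made concrete with back-of-the-envelope arithmetic. The input size (512x512), the feature stride of 4, and the four scan directions are assumptions picked for illustration. The counts ignore channel width and constant factors, so they indicate growth rates rather than measured cost.

```python
def token_count(height, width, stride):
    """Number of spatial positions after downsampling by `stride`."""
    return (height // stride) * (width // stride)


def attention_pairs(n_tokens):
    """Pairwise interactions in full self-attention: quadratic in tokens."""
    return n_tokens * n_tokens


def scan_steps(n_tokens, directions=4):
    """Recurrent steps for directional scans: linear in tokens."""
    return directions * n_tokens


n = token_count(512, 512, 4)
print(n)                   # 16384
print(attention_pairs(n))  # 268435456
print(scan_steps(n))       # 65536
```

Doubling the image side length quadruples the token count, which multiplies the attention term by 16 but the scan term only by 4.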

Practically, these architectural advances enable deployment of robust cloud detection in high-resolution, real-time satellite data pipelines, where both computational efficiency and segmentation accuracy are primary constraints.

Theoretically, the work presents a template for combining deterministic and learnable uncertainty quantification, suggesting avenues for future exploration using Bayesian or evidential uncertainty modeling in vision SSMs. Further research should evaluate generalization under distributional shift and adversarial corruption, and extend the two-stage refinement principle to other pixel-level prediction domains (e.g., change detection or scene parsing).

Conclusion

CloudMamba unites dual-scale state space modeling and uncertainty-guided selective refinement for cloud segmentation in remote sensing. Its architectural choices yield measurable and visual improvements in both conventional and ambiguous cases, and it outperforms the compared methods on both GF1_WHU and Levir_CS. Remaining limitations, such as the additional computation from refinement and the heuristic uncertainty estimate, point to further work on adaptive or Bayesian mechanisms and dynamic computation gating. The demonstrated effectiveness of Mamba-based multi-scale feature integration under linear complexity will likely inform future SSM architectures for a wide range of spatial analysis tasks.