- The paper demonstrates that CNNs achieve high classification accuracy (93.75%) in decoding hand movement motor imagery from EEG topographies.

- The methodology uses rigorous EEG data preprocessing, including mu-band filtering and spatial normalization, followed by a multi-layer CNN architecture.

- The adversarial autoencoder shows potential for semi-supervised learning but is unstable and less accurate (60–68% across training runs), leaving room for further model refinement.

Computer Vision-based Neural Methods for EEG Image Classification: CNN and Adversarial Autoencoders

Experimental Protocol and Data Preparation

This study investigates the application of convolutional neural networks (CNNs) and adversarial autoencoders (AAEs) for the classification of hand movement-related motor imagery based on EEG-derived topographic images. The experimental protocol focused on a controlled cohort of 15 right-handed male subjects. EEG was acquired with a 32-channel setup following the 10-10 system, signal artifacts were removed using ICA, and experimental conditions were carefully controlled. The task consisted of visually cued, real hand movements, divided into left-hand and right-hand conditions.

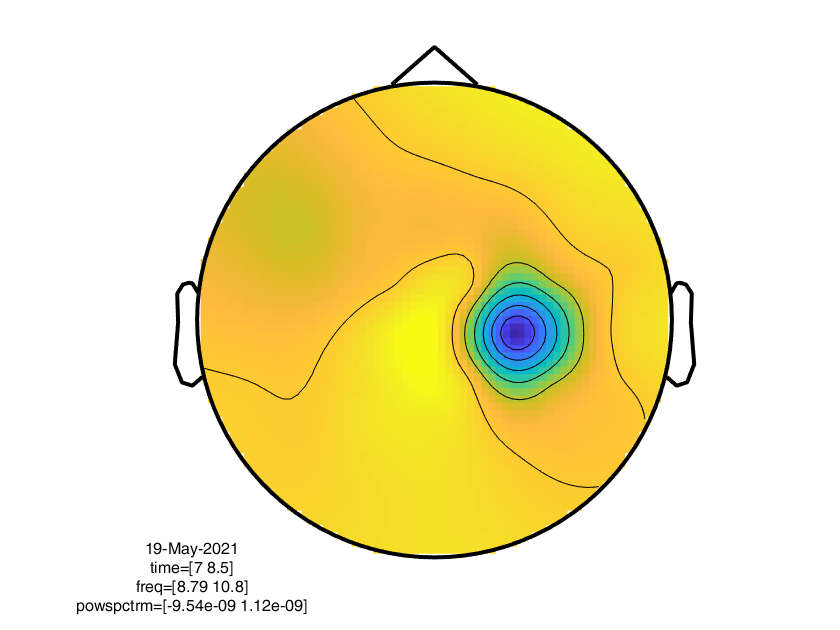

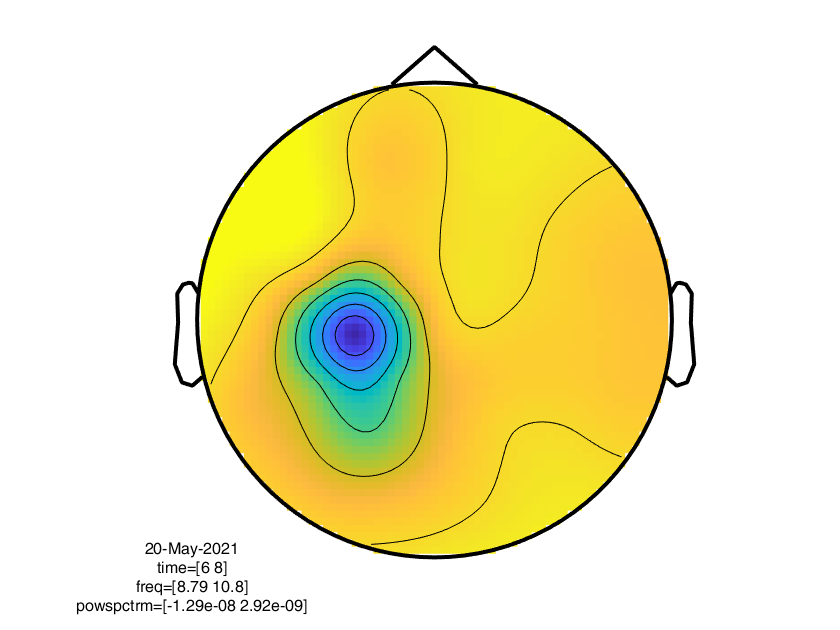

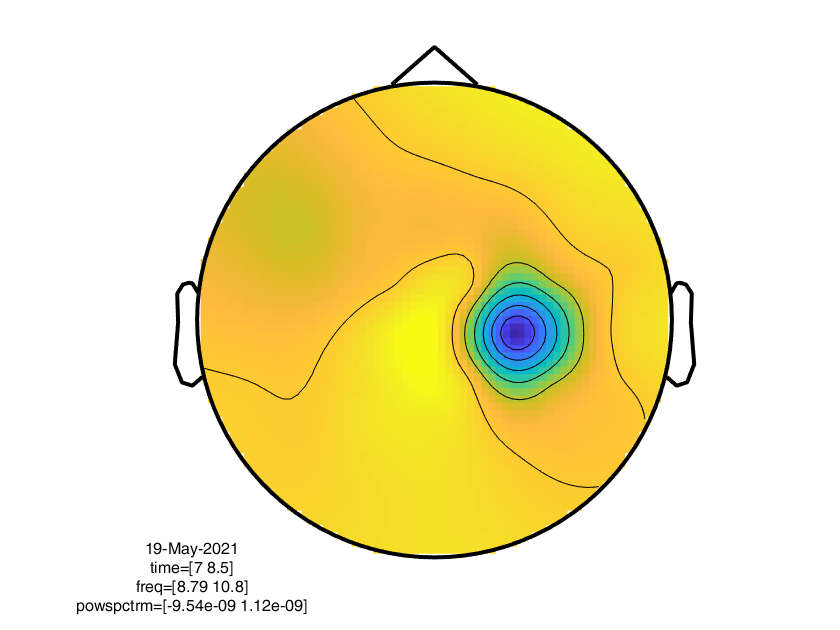

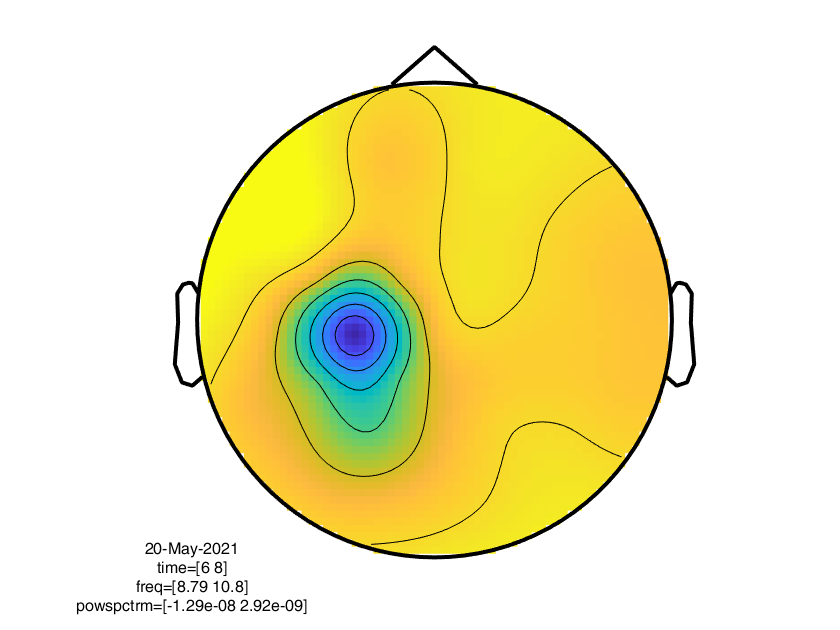

Preprocessing involved filtering to the mu frequency band (9–11 Hz), which is implicated in sensorimotor activity. Four overlapping time windows were taken from each trial to increase the number of samples, using both absolute and relative baseline protocols. The final dataset comprised 939 high-resolution (840×630 px) topograms depicting the spatial distribution of EEG power during movement intention and execution for each hand.

Figure 1: Left hand-related EEG topography in the Mu frequency band.

Figure 2: Right hand-related EEG topography in the Mu frequency band.

These topograms, reflecting distinct motor-cortical activation patterns, fed downstream neural classification models.
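The windowing and mu-band steps described above can be sketched as follows. This is a minimal illustration, not the authors' pipeline: the sampling rate (250 Hz), trial length (625 samples), 50% overlap, and the helper names `overlapping_windows` and `band_power` are all assumptions, and a naive DFT band-power estimate stands in for the band-pass filter, whose design the source does not specify.

```python
import math

def overlapping_windows(n_samples, n_windows=4, overlap=0.5):
    """Return (start, stop) index pairs for equally sized overlapping windows.

    With fractional overlap o, n windows of length w span
    w * (1 + (n - 1) * (1 - o)) samples, so w is derived from the trial length.
    """
    win_len = int(n_samples / (1 + (n_windows - 1) * (1 - overlap)))
    step = int(win_len * (1 - overlap))
    return [(k * step, k * step + win_len) for k in range(n_windows)]

def band_power(signal, fs, f_lo=9.0, f_hi=11.0):
    """Mean power of `signal` over the DFT bins that fall inside [f_lo, f_hi] Hz."""
    n = len(signal)
    powers = []
    for k in range(1, n // 2):
        freq = k * fs / n
        if f_lo <= freq <= f_hi:
            re = sum(x * math.cos(2 * math.pi * k * i / n) for i, x in enumerate(signal))
            im = sum(x * math.sin(2 * math.pi * k * i / n) for i, x in enumerate(signal))
            powers.append((re * re + im * im) / (n * n))
    return sum(powers) / len(powers) if powers else 0.0

fs = 250  # assumed sampling rate in Hz (not stated in the source)
# Synthetic single-channel "trial": a pure 10 Hz oscillation, 2.5 s long.
trial = [math.sin(2 * math.pi * 10 * i / fs) for i in range(625)]

windows = overlapping_windows(len(trial))
mu_powers = [band_power(trial[a:b], fs) for a, b in windows]
```

In a real pipeline, one such power value per channel and window would be interpolated across the scalp to render each topogram; that rendering step is omitted here.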

Topographic images were resized to 84×63 pixels for the CNN input, a size chosen as a trade-off between spatial resolution and computational cost. The architecture consisted of four convolutional layers (kernel size 5×5) with increasing channel counts, each followed by a 2×2 max-pooling layer, and ended in three fully connected layers that reduce to a two-class softmax output. ReLU activation was used throughout except at the output stage.
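To make the layer arithmetic concrete, the sketch below tracks one spatial dimension of the 84×63 input through four blocks of 5×5 convolution followed by 2×2 max-pooling. The source does not state stride or padding, so stride 1, "same" padding of 2 pixels, and floor-rounded pooling are assumptions, and `conv_pool_shape` is a hypothetical helper.

```python
def conv_pool_shape(size, n_blocks=4, kernel=5, padding=2, pool=2):
    """Track one spatial dimension through n_blocks of (stride-1 conv -> max-pool)."""
    sizes = [size]
    for _ in range(n_blocks):
        size = size + 2 * padding - kernel + 1  # stride-1 convolution
        size = size // pool                     # non-overlapping pooling, floor rounding
        if size < 1:
            raise ValueError("feature map collapsed to zero size")
        sizes.append(size)
    return sizes

shapes_84 = conv_pool_shape(84)  # [84, 42, 21, 10, 5]
shapes_63 = conv_pool_shape(63)  # [63, 31, 15, 7, 3]
```

Under these assumptions the final feature map is 5×3 per channel before the fully connected layers. With unpadded convolutions (`padding=0`) the 63-pixel side would shrink to zero in the fourth block, which is why some padding is assumed here.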

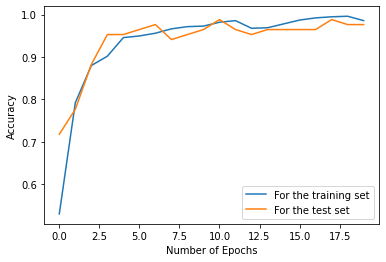

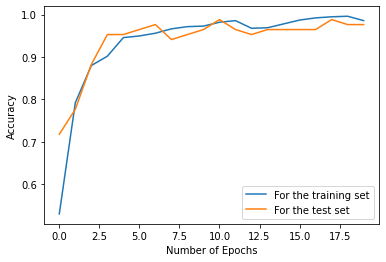

Supervised training with 80% of the data as the training set and early stopping achieved a test accuracy of 93.75% after only 10 epochs. This demonstrates that even with limited data, sufficient nonlinear discriminative features exist in mu-band topographies to support high-fidelity classification of motor state with a conventional CNN.

Figure 3: The CNN achieves over 90% classification accuracy despite a limited dataset size.

This quantitative result establishes a robust supervised learning baseline for EEG-based motor imagery decoding, with significant implications for non-invasive BMI systems.
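The 80% training split and early stopping mentioned above can be illustrated with a short sketch. The source does not say how the remaining 20% was used, how the split was randomized, or which stopping criterion was applied, so the shuffling seed, the `patience` value, and both helper names are assumptions.

```python
import random

def train_test_split(items, train_fraction=0.8, seed=0):
    """Shuffle items reproducibly and split them into train and held-out parts."""
    items = list(items)
    random.Random(seed).shuffle(items)
    n_train = int(len(items) * train_fraction)
    return items[:n_train], items[n_train:]

def early_stopping_epoch(val_losses, patience=3):
    """Return the 1-based epoch at which training stops.

    Training stops once the validation loss has failed to improve on its
    best value for `patience` consecutive epochs.
    """
    best = float("inf")
    stale = 0
    for epoch, loss in enumerate(val_losses, start=1):
        if loss < best:
            best, stale = loss, 0
        else:
            stale += 1
            if stale >= patience:
                return epoch
    return len(val_losses)

# 939 topograms, indexed 0..938, split 80/20.
train_ids, test_ids = train_test_split(range(939))

# Hypothetical validation-loss curve, for illustration only.
stop_epoch = early_stopping_epoch([0.9, 0.7, 0.6, 0.61, 0.62, 0.63, 0.5])
```

With 939 topograms, an 80/20 split yields 751 training and 188 held-out images.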

Semi-supervised Adversarial Autoencoder Model

The adversarial autoencoder combines GAN principles with standard autoencoding. Both the generator and the discriminator are fully convolutional. The AAE is trained to reconstruct input topograms, with the discriminator loss based on pixel-wise L1 distance. The adversarial feedback is intended to make the generator's reconstructions indistinguishable from real topograms.
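For reference, a pixel-wise L1 distance between an image and its reconstruction can be computed as below. This is only a sketch of the distance itself on plain nested lists. How the authors combine it with the adversarial objective is not described in the source, and `pixelwise_l1` is a hypothetical helper name.

```python
def pixelwise_l1(image_a, image_b):
    """Mean absolute difference between two equally sized 2-D images (lists of rows)."""
    if len(image_a) != len(image_b) or any(
        len(row_a) != len(row_b) for row_a, row_b in zip(image_a, image_b)
    ):
        raise ValueError("images must have the same shape")
    total = sum(
        abs(a - b)
        for row_a, row_b in zip(image_a, image_b)
        for a, b in zip(row_a, row_b)
    )
    count = sum(len(row) for row in image_a)
    return total / count

# Toy 2x2 grayscale images with intensities in [0, 1].
original = [[0.0, 0.5], [1.0, 0.25]]
reconstruction = [[0.0, 0.25], [0.5, 0.25]]
error = pixelwise_l1(original, reconstruction)  # (0 + 0.25 + 0.5 + 0) / 4
```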

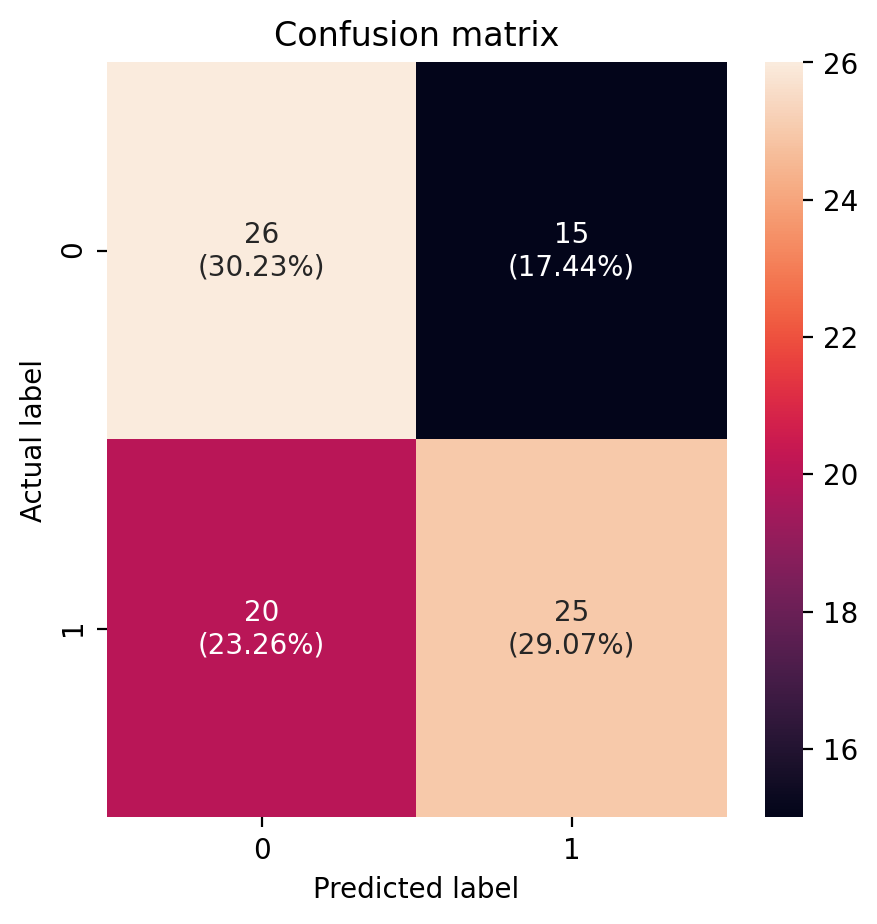

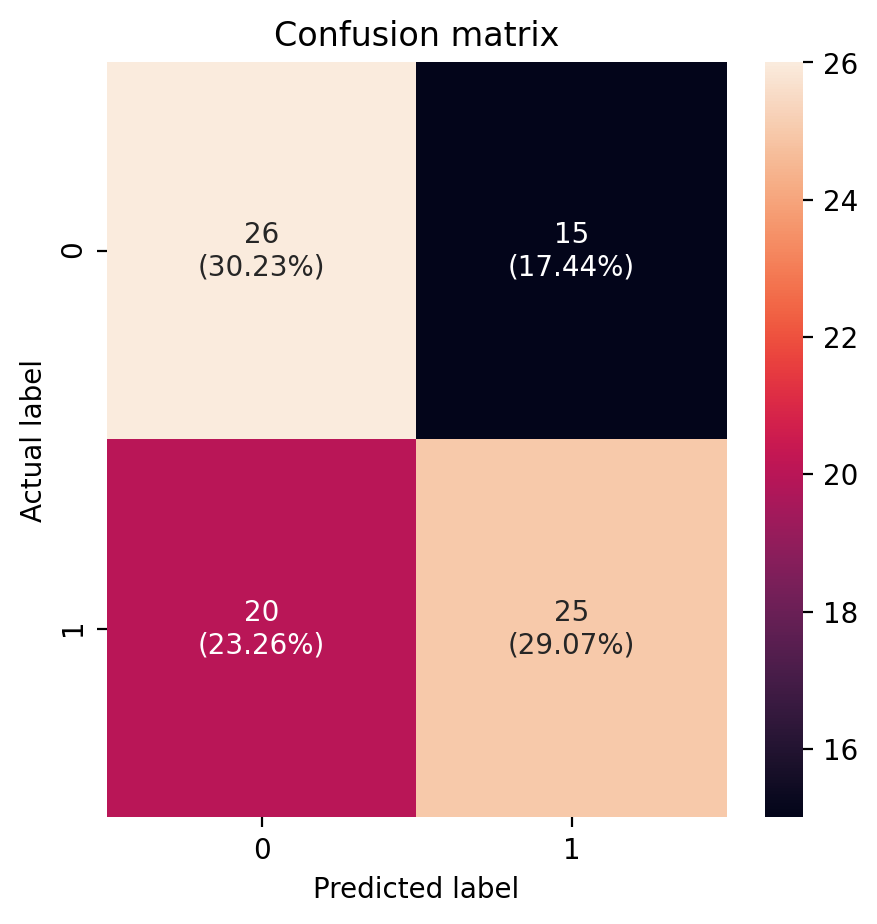

Unlike the CNN, the AAE was evaluated as a semi-supervised classifier, with performance fluctuating between 60% and 68% classification accuracy across training runs. The optimal result reported was 68%, revealing instability and lower peak accuracy compared to the supervised approach. The network was trained for 400 epochs, and its confusion matrix indicates moderate discrimination between left and right hand motor imagery.

Figure 4: Confusion matrix for the AAE, illustrating imperfect but non-random separation of left/right hand movement classes.
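A two-class confusion matrix of this kind, and the accuracy derived from it, can be computed as in the sketch below. The labels are toy values chosen for illustration and are not the paper's predictions; the helper names are hypothetical.

```python
def confusion_matrix(true_labels, predicted_labels, classes=("left", "right")):
    """Rows are true classes, columns are predicted classes."""
    index = {c: i for i, c in enumerate(classes)}
    matrix = [[0] * len(classes) for _ in classes]
    for t, p in zip(true_labels, predicted_labels):
        matrix[index[t]][index[p]] += 1
    return matrix

def accuracy(matrix):
    """Fraction of samples on the diagonal (correctly classified)."""
    correct = sum(matrix[i][i] for i in range(len(matrix)))
    total = sum(sum(row) for row in matrix)
    return correct / total

# Toy labels, for illustration only.
y_true = ["left", "left", "left", "right", "right", "right"]
y_pred = ["left", "left", "right", "right", "right", "left"]

cm = confusion_matrix(y_true, y_pred)
acc = accuracy(cm)
```

For a balanced two-class problem, chance level is 50%, so accuracies in the 60–68% range correspond to a diagonal that dominates only moderately.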

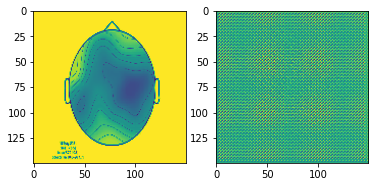

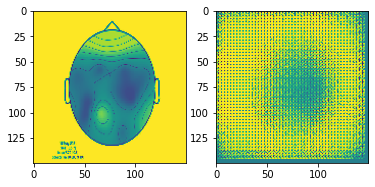

Visualization of the generative process provides insight into the model's feature capture and reconstructive capabilities:

Figure 5: Step 0. Comparison of augmented input and generator output demonstrates early generative behavior.

Figure 6: Step 150. Progression of generator output during training reflects improved but still limited fidelity.

While training instability and limited topogram realism are noted, the unsupervised and semi-supervised regime is promising for scenarios with minimal annotation.

Practical and Theoretical Implications

The strong performance of the CNN confirms the utility of mu-band spatial topographies for discriminating left- from right-hand motor states. This supports the use of computer vision-inspired deep learning for direct neurophysiological decoding, potentially extending to real-time control applications, BCI, and rehabilitation engineering. However, the AAE’s lower and less stable performance highlights ongoing limitations in robust generative modeling on modest datasets with limited class signal, suggesting a need for further refinement in architecture, regularization, or data augmentation.

A theoretical implication is that mu-band spatial patterns encode motor state in a form that a relatively shallow CNN can separate with high accuracy, at least within the boundary of clean, well-preprocessed experimental EEG. In contrast, generative modeling remains susceptible to mode collapse or class confusion when working with limited spatial data and labels.

Future Directions

Future research could address data scarcity via cross-subject transfer learning, synthetic data augmentation, or more sophisticated contrastive and semi-supervised paradigms. Incorporating temporal features (e.g., exploiting non-stationary spectral features), multi-modal fusion, or attention-based architectures may close the gap for unsupervised representation learning. Improvements in generative model training stability, such as newer GAN variants or regularization strategies, are essential for performance under practical constraints.

Conclusion

This study demonstrates that CNNs can efficiently and accurately classify hand movement-related EEG topographic images, achieving high accuracy with minimal data. While adversarial autoencoders are less stable and performant for this task, they illustrate the potential of semi-supervised learning in EEG-based motor decoding. These findings underscore the promise and current limitations of applying advanced computer vision techniques to EEG neuroimaging and motivate further work in semi-supervised and generative paradigms for robust neurotechnology pipelines.