- The paper presents DebugHarness, which emulates human debugging to dynamically identify and repair complex C/C++ vulnerabilities.

- It combines dynamic runtime analysis tools with LLM reasoning, achieving resolution rates of 89.5% to 94.5% on SEC-bench, depending on the LLM backbone.

- Empirical evaluations highlight that integrating interactive state introspection significantly outperforms traditional static debugging methods.

DebugHarness: Human-Like Interactive Debugging for Autonomous Program Repair

Introduction and Problem Context

The DebugHarness framework addresses the persistent bottleneck in automated program repair (APR): the effective localization and resolution of low-level, security-critical vulnerabilities in complex systems software. While LLM-based agents have achieved promising performance on high-level language issues, they have consistently underperformed on C/C++ vulnerabilities that require deep comprehension of dynamic memory behavior, pointer manipulation, and cross-file interactions. This deficiency is primarily due to a static analysis paradigm that omits dynamic runtime context, in stark contrast to human experts who leverage live debugging, memory inspection, and replay to resolve such bugs.

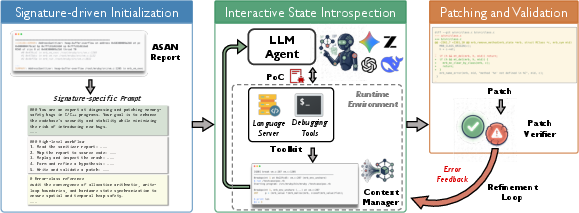

The paper introduces DebugHarness, an autonomous LLM-based harness that fundamentally reconfigures the program repair workflow. Rather than statically analyzing crash reports and code, DebugHarness emulates human debugging: it formulates hypotheses, commands debuggers to inspect memory and execution, draws on dynamic runtime state, and synthesizes patches within a closed-loop validation cycle. The design combines signature-driven investigation, interactive state introspection, and advanced debugging tools (e.g., GDB, rr, pwndbg) to augment LLM reasoning, closing the gap between static and dynamic context. The approach is evaluated on the SEC-bench benchmark, where it shows substantial resolution improvements over state-of-the-art agents.

Motivation: The Need for Emulating Human Debugging

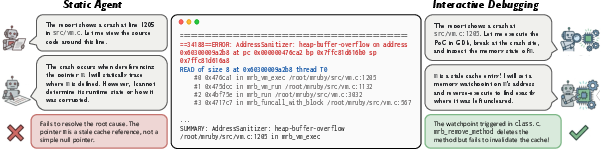

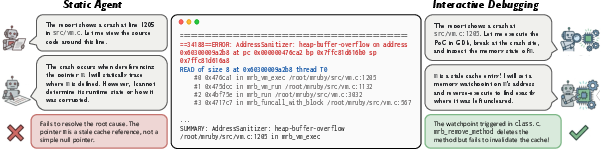

Legacy APR systems and LLM-based repair agents treat bug fixing as a text-generation problem, working primarily from PoC-triggered stack traces and source code. This static approach suffices for shallow, localized defects but cannot follow the long root-cause chains typical of memory-safety bugs. The paper illustrates this with CVE-2022-1286, a heap buffer overflow in mruby:

A static agent, constrained to the allocator site present in the crash report, repeatedly proposes superficial patches or stalls, unable to infer that the actual root cause—a stale method pointer—originates from a cache invalidation bug in a distinct compilation unit.

By contrast, DebugHarness dynamically sets watchpoints, traces pointer lifecycles, and reasons about empirical runtime evidence. It identifies the source of the corruption by reverse-executing to the prior cache manipulation, mimicking an expert's workflow.

Figure 2: Comparison of traditional static agent workflow versus DebugHarness's interactive debugging for CVE-2022-1286; only the latter can trace from crash site to true root cause across files.
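The watchpoint-plus-reverse-execution step described above can be sketched as a short command sequence. This is a minimal, hypothetical helper, not DebugHarness's code: it assumes an `rr replay` session already stopped at the crash site, and the watched expression `cache[idx].m` is an illustrative placeholder, not the actual mruby variable. `watch -l` (watch a memory location) and `reverse-continue` are standard GDB commands that work under rr.

```python
def reverse_watch_script(expr: str) -> list[str]:
    """Return GDB commands that locate the last write to `expr` before the crash.

    Assumes an rr replay session that is already stopped at the crash site.
    """
    return [
        f"print {expr}",       # inspect the suspicious value at the crash site
        f"watch -l {expr}",    # watchpoint on the memory location itself
        "reverse-continue",    # run backwards until that location was last written
        "backtrace",           # show which code performed the write
    ]


if __name__ == "__main__":
    # Hypothetical expression; the commands could be typed into the session
    # or saved to a file and loaded with GDB's `source` command.
    print("\n".join(reverse_watch_script("cache[idx].m")))
```

Watching the location rather than the expression matters here: a stale pointer is often written through a different alias than the one that later crashes.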

DebugHarness Architecture and Workflow

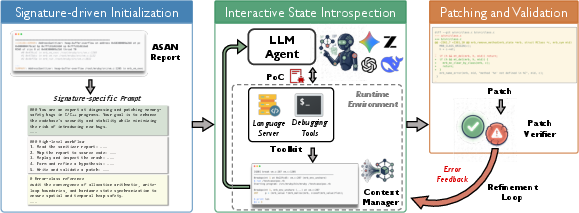

DebugHarness is architected as a client-server harness mediating between the LLM reasoning core and a suite of deterministic and dynamic analysis tools. The architecture orchestrates the following workflow:

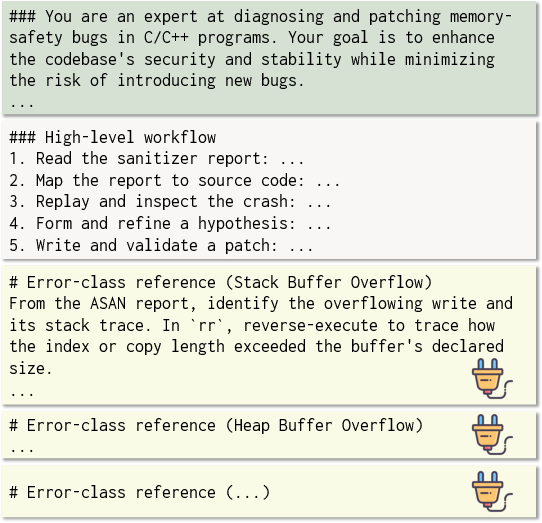

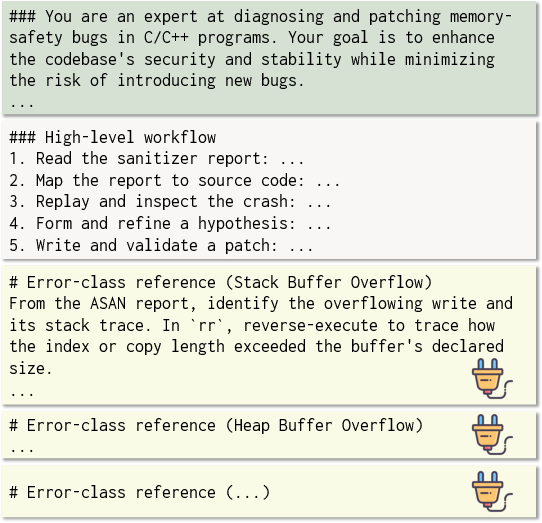

- Signature-Driven Initialization: The crash signature (from ASan, etc.) is parsed, and domain-specific debugging heuristics are injected into the LLM’s context. This constrains early agent actions, preventing premature patch synthesis and steering the agent toward relevant investigative strategies per error class.

Figure 1: Prompt template for signature-driven initialization, with customized error-specific troubleshooting guidelines injected based on the crash signature.
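Signature-driven initialization can be sketched as "classify the sanitizer headline, then inject a matching guideline". The sketch below is an assumption-laden illustration: the regex targets the standard ASan/LSan headline (`==PID==ERROR: AddressSanitizer: <kind> ...`), while `GUIDELINES`, `classify`, and `build_prompt` are hypothetical names, and the guideline texts are abbreviated stand-ins for the paper's templates.

```python
import re

# Matches the headline of an ASan/LSan report, e.g.
# "==123==ERROR: AddressSanitizer: heap-buffer-overflow on address 0x..."
SIGNATURE_RE = re.compile(r"ERROR: (AddressSanitizer|LeakSanitizer): ([\w-]+)")

# Hypothetical, abbreviated guidelines keyed by error class.
GUIDELINES = {
    "heap-buffer-overflow": (
        "Compare the allocation size with the faulting offset; watch the index or "
        "length variable and trace where it was computed."
    ),
    "heap-use-after-free": (
        "Use the 'freed by' stack to find where the object was released, then find "
        "why a reference to it survived."
    ),
    "memory-leak": "Trace ownership of the leaked allocation along every exit path.",
}
DEFAULT_GUIDELINE = "Reproduce the crash under the debugger and inspect the faulting frame."


def classify(report: str) -> str:
    """Map a sanitizer report to an error class such as 'heap-use-after-free'."""
    match = SIGNATURE_RE.search(report)
    if match is None:
        return "unknown"
    tool, kind = match.groups()
    # LSan's headline reads "detected memory leaks", so name the class explicitly.
    return "memory-leak" if tool == "LeakSanitizer" else kind


def build_prompt(report: str) -> str:
    """Prepend an error-specific guideline to the raw report."""
    error_class = classify(report)
    guideline = GUIDELINES.get(error_class, DEFAULT_GUIDELINE)
    return (
        f"Crash class: {error_class}\n"
        f"Guideline: {guideline}\n"
        "Gather runtime evidence with the debugger before proposing a patch.\n\n"
        f"Sanitizer report:\n{report}"
    )
```

The explicit "gather evidence first" line mirrors the stated goal of discouraging premature patch synthesis.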

- Interactive State Introspection: The agent launches debugging sessions, sets breakpoints and watchpoints, and uses GDB for live state inspection, rr for deterministic record-and-replay with reverse execution, and pwndbg for heap analysis. All tool interactions are abstracted through a Model Context Protocol (MCP) layer, which provides reliable, reproducible communication and validates commands before they run. Context summarization scripts condense large, verbose outputs so that they fit within the LLM's context limits.

Figure 4: High-level DebugHarness workflow, showing orchestrated execution phases and integration of static and dynamic tools.
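Two chores of the tool layer, command validation and output summarization, can be illustrated in a few lines. This is a hypothetical sketch, not the paper's MCP implementation: the allowlist contents, the head/tail budget, and the keyword list are all assumptions. `heap` and `bins` are pwndbg commands; the other names are standard GDB commands.

```python
# Hypothetical allowlist of command names forwarded to GDB/rr/pwndbg.
ALLOWED = {
    "break", "watch", "continue", "reverse-continue", "next", "step", "finish",
    "print", "x", "backtrace", "frame", "info", "heap", "bins",
}


def validate(command: str) -> bool:
    """Accept a command only if its name (ignoring /FMT suffixes) is allowlisted."""
    words = command.split()
    if not words:
        return False
    name = words[0].split("/")[0]  # "x/8gx $rsp" -> "x", "print/x v" -> "print"
    return name in ALLOWED


def summarize(output: str, max_lines: int = 40,
              keywords: tuple[str, ...] = ("ERROR", "SUMMARY", "#0 ")) -> str:
    """Keep the head and tail of long output plus salient lines from the middle."""
    if max_lines < 2:
        raise ValueError("max_lines must be at least 2")
    lines = output.splitlines()
    if len(lines) <= max_lines:
        return output
    half = max_lines // 2
    head, middle, tail = lines[:half], lines[half:-half], lines[-half:]
    salient = [ln for ln in middle if any(k in ln for k in keywords)]
    omitted = len(middle) - len(salient)
    return "\n".join(head + salient + [f"... [{omitted} lines omitted] ..."] + tail)
```

An allowlist keeps escape hatches such as GDB's `shell` command out of the agent's reach, and keyword retention preserves sanitizer headlines that a naive truncation would drop.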

- Patching and Closed-Loop Validation: Once enough evidence for a root cause has accumulated, the agent synthesizes a patch. Diff alignment and context correction are applied automatically to compensate for imperfections in the LLM's output. The candidate patch is then validated: the code is recompiled, the PoC is re-executed, and the test suite is run. Failure logs are fed back into the agent's context for further refinement, forming a convergent repair loop.
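The closed-loop cycle above (patch, rebuild, re-run the PoC, run tests, feed failures back) can be sketched as a small driver. Everything here is hypothetical: the callables stand in for the LLM and the build/test tooling, `StepResult` is an invented type, and the default budget of 40 iterations is only an assumption loosely motivated by the diminishing returns reported in Figure 3.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class StepResult:
    ok: bool
    log: str = ""


def repair_loop(propose_patch: Callable[[list[str]], str],
                build: Callable[[str], StepResult],
                run_poc: Callable[[str], StepResult],
                run_tests: Callable[[str], StepResult],
                max_iterations: int = 40) -> Optional[str]:
    """Return the first patch that builds, stops the PoC crash, and passes tests."""
    feedback: list[str] = []
    for _ in range(max_iterations):
        patch = propose_patch(feedback)
        for stage_name, stage in (("build", build), ("poc", run_poc), ("tests", run_tests)):
            result = stage(patch)
            if not result.ok:
                # Failure logs go back into the agent's context for the next attempt.
                feedback.append(f"{stage_name} failed:\n{result.log}")
                break
        else:
            return patch  # every stage passed
    return None


if __name__ == "__main__":
    # Toy stand-ins: the first patch still crashes, the second one works.
    propose = lambda fb: f"patch-v{len(fb)}"
    ok = lambda patch: StepResult(True)
    poc = lambda patch: StepResult(patch != "patch-v0", "heap-buffer-overflow")
    print(repair_loop(propose, ok, poc, ok))  # patch-v1
```

Ordering the stages from cheapest to most expensive means a patch that does not compile never pays for a full test run.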

Empirical Analysis

Effectiveness and LLM Backbone Generality

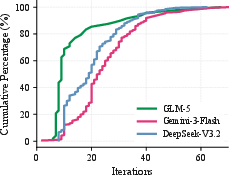

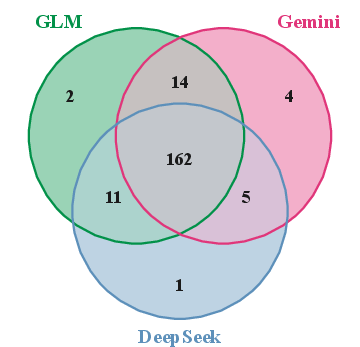

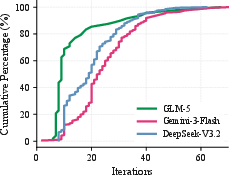

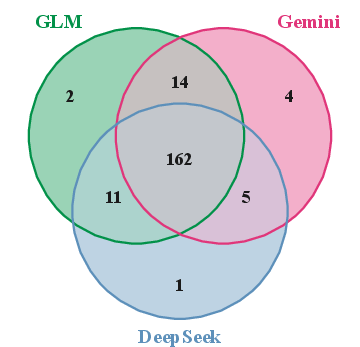

DebugHarness achieves a resolution rate between 89.5% and 94.5% on SEC-bench (200 real C/C++ vulnerabilities across 29 OSS projects), a relative improvement of more than 30% over previous state-of-the-art agents. Specifically, general-purpose agents resolve less than 40% and vulnerability-specific LLM agents reach at most 67.5%, while DebugHarness achieves 89.5% (DeepSeek V3.2), 92.5% (Gemini-3 Flash), and 94.5% (GLM-5), i.e., 22 to 27 percentage points above the strongest prior agent. Cost per resolved vulnerability remains low and competitive across models.
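The gap between absolute and relative gains is easy to blur, so a quick calculation using only the figures quoted above helps. Against the best vulnerability-specific agent (67.5%), the absolute gain is 22 to 27 percentage points, while the relative gain is roughly 33% to 40%.

```python
# Resolution rates (percent) quoted in the summary above.
BEST_PRIOR = 67.5  # best vulnerability-specific agent
DEBUGHARNESS = {"DeepSeek V3.2": 89.5, "Gemini-3 Flash": 92.5, "GLM-5": 94.5}


def gains(rate: float, baseline: float) -> tuple[float, float]:
    """Return (absolute gain in percentage points, relative gain in percent)."""
    return rate - baseline, (rate / baseline - 1.0) * 100.0


for model, rate in DEBUGHARNESS.items():
    points, relative = gains(rate, BEST_PRIOR)
    print(f"{model}: +{points:.1f} points, +{relative:.1f}% relative")
# DeepSeek V3.2: +22.0 points, +32.6% relative
# Gemini-3 Flash: +25.0 points, +37.0% relative
# GLM-5: +27.0 points, +40.0% relative
```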

Figure 3: Cumulative distribution of repair iteration counts—70% of cases are resolved in ≤30 iterations, with clear diminishing returns beyond 40 iterations.

Figure 5: Venn diagram showing successful patch overlap for different LLM backbones—some non-overlapping cases indicate potential for ensembling.
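The ensembling argument behind Figure 5 reduces to set arithmetic: an oracle that could pick whichever backbone succeeded would resolve the union of the per-backbone sets, and each backbone's unique cases are what it contributes beyond the others. The sketch below uses made-up vulnerability IDs purely for illustration; they are not the paper's results. In practice an ensemble would also need a selection signal, such as the closed-loop validation checks, to pick among candidate patches.

```python
def ensemble_coverage(resolved: dict[str, set[str]]) -> dict[str, object]:
    """Summarize overlap between per-backbone sets of resolved vulnerability IDs."""
    if not resolved:
        return {"union": set(), "shared": set(), "unique": {}}
    union = set().union(*resolved.values())
    shared = set.intersection(*resolved.values())
    unique = {
        model: ids - set().union(*(other for name, other in resolved.items() if name != model))
        for model, ids in resolved.items()
    }
    return {"union": union, "shared": shared, "unique": unique}


if __name__ == "__main__":
    # Toy data with hypothetical IDs, not taken from SEC-bench.
    toy = {"backbone-a": {"v1", "v2", "v3"}, "backbone-b": {"v2", "v3", "v4"}, "backbone-c": {"v3", "v5"}}
    summary = ensemble_coverage(toy)
    print(len(summary["union"]), sorted(summary["shared"]))  # 5 ['v3']
```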

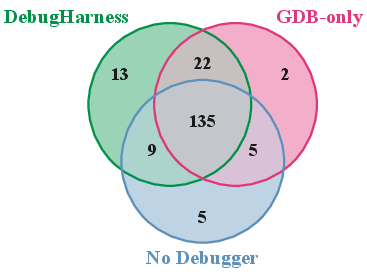

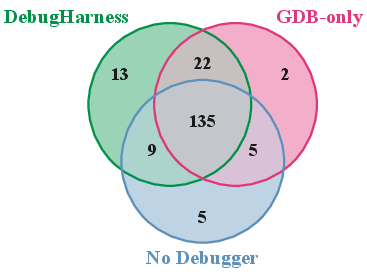

Component Contribution and Ablation Study

Disabling advanced debugging features (rr, pwndbg) reduces the success rate by 7.5–12.5 percentage points. Without any debugger, the agent’s performance decreases to 77.0%, still outperforming static-only baselines, but unable to resolve a difficult subset of deeply dynamic bugs.

Figure 6: Venn diagram showing the overlap in successfully resolved vulnerabilities across full DebugHarness and ablation variants, highlighting the unique value of dynamic introspection for certain cases.

Bug Type Sensitivity

Dynamic introspection particularly benefits temporal bugs (heap use-after-free, leaks) and spatial bugs (heap buffer overflows), supporting the design hypothesis that dynamic, state-aware debugging primitives are strictly necessary for addressing these error classes.

Implementation Considerations

DebugHarness is implemented on top of LangChain, with MCP abstraction for interactive tool execution and context management. Language Server Protocol support provides precise codebase navigation for mapping sanitizer reports and LLM queries to source code. The modular integration supports arbitrary dynamic analysis tools, and context summarization scripts mitigate the cost of unstructured or voluminous outputs.
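Mapping a sanitizer report onto source code via LSP mostly means turning stack frames into document positions. A minimal sketch, assuming symbolized ASan frames with C-style function names and absolute source paths; the function name, the example frame, and its line number are hypothetical. The one non-obvious detail is that LSP positions are zero-based, whereas sanitizer reports are one-based.

```python
import re
from pathlib import Path
from typing import Optional

# One symbolized ASan frame, e.g. "    #0 0x4f5a1b in some_func /src/proj/file.c:120:7".
# Assumes symbol names without spaces (C, not templated C++) and absolute paths.
FRAME_RE = re.compile(r"#(\d+)\s+0x[0-9a-fA-F]+\s+in\s+(\S+)\s+(/\S+?):(\d+)(?::(\d+))?\s*$")


def frame_to_lsp_position(frame: str) -> Optional[dict]:
    """Convert a frame into a dict shaped like LSP's TextDocumentPositionParams."""
    match = FRAME_RE.search(frame)
    if match is None:
        return None  # e.g. unsymbolized frames such as "(/out/prog+0x1234)"
    _index, _func, path, line, col = match.groups()
    return {
        "textDocument": {"uri": Path(path).as_uri()},
        # LSP is zero-based and counts UTF-16 units, so the column is exact
        # only for ASCII source lines.
        "position": {"line": int(line) - 1, "character": int(col or 1) - 1},
    }


if __name__ == "__main__":
    # Hypothetical frame; the line and column are illustrative only.
    print(frame_to_lsp_position("    #0 0x4f5a1b in mrb_vm_exec /src/mruby/src/vm.c:1234:7"))
```

The resulting dict could then be sent as the parameters of a request such as `textDocument/definition` to a C/C++ language server.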

Robustness to compiler optimizations, which may obfuscate debug information, remains an open challenge. In practice, automated adjustment of compilation flags or strategic fallback to static analysis can partially address these deficits.
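Automated adjustment of compilation flags might look like the hypothetical helper below: drop optimization levels and `-g0`, keep everything else (notably `-fsanitize=...`), and append `-O0 -g -fno-omit-frame-pointer`, which are standard GCC/Clang flags for debugger-friendly builds. This is a sketch under those assumptions, not the paper's mechanism. Because optimization can change how undefined behavior manifests, a rebuilt binary may stop reproducing the crash, which is where fallback to static analysis would apply.

```python
def debug_friendly_flags(flags: list[str]) -> list[str]:
    """Rewrite compiler flags for a debuggable build while keeping sanitizers."""
    # Drop every optimization level (-O1, -O2, -O3, -Os, -Ofast, ...) and -g0.
    kept = [f for f in flags if not f.startswith("-O") and f != "-g0"]
    for extra in ("-O0", "-g", "-fno-omit-frame-pointer"):
        if extra not in kept:
            kept.append(extra)
    return kept


if __name__ == "__main__":
    print(debug_friendly_flags(["-O2", "-fsanitize=address", "-g0"]))
    # ['-fsanitize=address', '-O0', '-g', '-fno-omit-frame-pointer']
```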

Implications and Prospective Directions

DebugHarness introduces a shift in the design of autonomous repair agents. By tightly coupling LLM reasoning with dynamic state investigation, it enables agentic workflows previously unattainable with static-only paradigms. The demonstrated resolution rates on challenging SEC-bench vulnerabilities open a path toward adoption in CI pipelines for security-sensitive codebases and motivate extending such agents to logic and concurrency bugs by integrating specialized diagnostics (e.g., Valgrind, strace).

This paradigm generalizes: any software error with latent or temporally-distant root causes benefits from such closed-loop, dynamic introspection. As LLMs improve in their ability to interpret diagnostic output and strategically compose debugging actions, agentic program repair will continue to approach expert-level capability for real-world software maintenance.

Conclusion

DebugHarness (2604.03610) establishes that LLM-powered program repair can transcend static text generation by leveraging systematic, human-like dynamic debugging. Structured initialization, interactive introspection, and empirical validation underpin its superior resolution rates across diverse LLM backbones. The work demonstrates both the necessity and tractability of agents that orchestrate tool-driven workflows, setting a template for future frameworks that will extend dynamic, evidence-based reasoning to even broader classes of software failures and security-critical automation tasks.