- The paper introduces a spatially adaptive frequency modulation method using a learnable grid to filter Fourier features based on local signal characteristics.

- It employs a difference-of-sigmoids filter for dynamic low-, band-, and high-pass filtering, enhancing neural tangent kernel conditioning and optimization.

- Empirical results demonstrate superior performance in 2D image fitting, 3D geometry modeling, and sparse inpainting, with interpretability provided by learned α maps.

Adaptive Local Frequency Filtering for Fourier-Encoded Implicit Neural Representations

Introduction

The paper "Adaptive Local Frequency Filtering for Fourier-Encoded Implicit Neural Representations" (2604.02846) introduces a spatially adaptive frequency modulation technique for implicit neural representations (INRs) that use Fourier feature encodings. Standard Fourier-encoded INRs rely on a global, fixed set of frequencies, which fits poorly when a signal's spectral content varies across space. The proposed approach filters the encoded Fourier components element-wise and per position through a learnable spatial field, α(x), which enables context-sensitive low-, band-, and high-pass filtering. The paper also gives an analytical interpretation via the Neural Tangent Kernel (NTK) framework and reports empirical gains from adaptive frequency filtering on 2D image fitting, 3D SDF-based geometry modeling, and sparse data inpainting.

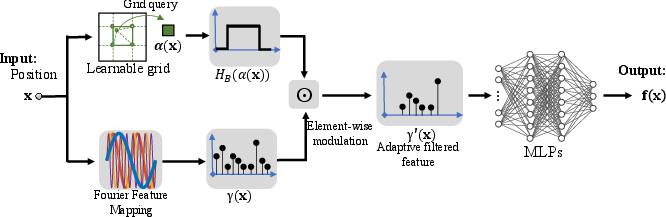

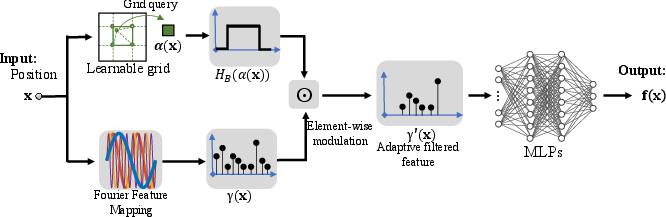

Figure 1: The AL-Filter architecture introduces a learnable grid for the adaptive parameter α(x), modulating the local frequency response of Fourier features prior to MLP-based decoding.

Methodology

Adaptive Local Frequency Filtering

Central to the proposed framework is the adaptive local frequency filter, parametrized by a field α(x) that is stored as a learnable grid and evaluated by multilinear spatial interpolation for smoothness and scalability. The main operation is a channel-wise modulation of the Fourier-encoded feature vector γ(x), yielding

$$\gamma'(x) = H_B(\alpha(x)) \odot \gamma(x)$$

where $H_B(\alpha(x))$ is a difference-of-sigmoids filter whose passband (center and bandwidth) is controlled by α(x) and a scalar hyperparameter $B$, and $\odot$ denotes element-wise multiplication.

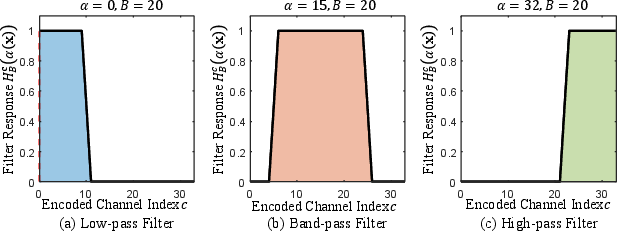

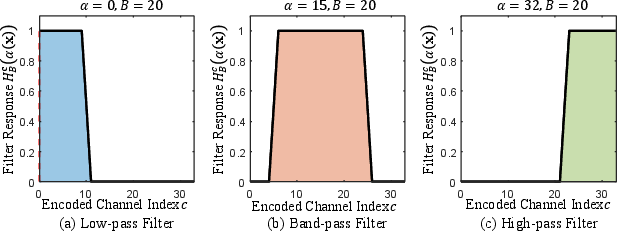

The filter supports subregions of low-, band-, and high-pass behavior by dynamically shifting the modulation mask along the ordered encoded-channel axis. Low α(x) values select low-index (low-frequency) channels, high values select high-frequency components, and intermediate values implement band-passing (Figure 2).

Figure 2: The adaptive filter’s frequency responses for different α(x) values, demonstrating smooth and continuous modulation among low-, band-, and high-pass operations.
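The low-, band-, and high-pass behavior described above can be illustrated with a minimal sketch. This summary does not give the exact parameterization of the filter, so the form below is an assumption: channels are placed at normalized positions in [0, 1], α sets the passband center, `bandwidth` plays the role of B, and `sharpness` is a hypothetical slope parameter introduced only for illustration.

```python
import math

def sigmoid(z: float) -> float:
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def dos_filter(alpha: float, num_channels: int,
               bandwidth: float = 0.4, sharpness: float = 20.0) -> list:
    """Difference-of-sigmoids mask over ordered channels (assumed form).

    Channel k sits at position t_k = k / (num_channels - 1) in [0, 1].
    The mask is sigmoid(s * (t - lo)) - sigmoid(s * (t - hi)), with
    lo = alpha - bandwidth / 2 and hi = alpha + bandwidth / 2.
    """
    lo = alpha - bandwidth / 2.0
    hi = alpha + bandwidth / 2.0
    denom = max(num_channels - 1, 1)  # avoid division by zero for one channel
    mask = []
    for k in range(num_channels):
        t = k / denom
        mask.append(sigmoid(sharpness * (t - lo)) - sigmoid(sharpness * (t - hi)))
    return mask

def apply_filter(mask: list, features: list) -> list:
    """Element-wise modulation of an encoded feature vector by the mask."""
    return [m * f for m, f in zip(mask, features)]

if __name__ == "__main__":
    for alpha in (0.0, 0.5, 1.0):
        print(alpha, [round(m, 3) for m in dos_filter(alpha, 9)])
```

With these assumed settings, α = 0 keeps only the lowest-index channels, α = 1 keeps only the highest, and α = 0.5 produces a band centered on the middle channels, because the window slides along the channel axis and is clipped at either end.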

Implementation and Computational Characteristics

The modulation field α(x) is efficiently represented as a scalar grid, enabling significantly lower memory requirements than previous approaches using high-dimensional feature grids. The filtering operation is directly applied after feature encoding and before the MLP, adding minimal computational and memory overhead while facilitating explicit, interpretable local frequency adaptation.
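To make the scalar-grid representation concrete, the following sketch evaluates a 2D α grid at a continuous position by bilinear interpolation (the 2D case of multilinear interpolation). The function name, the grid layout, and the [0, 1]² coordinate convention are assumptions made for illustration; in training, the grid entries would be learnable parameters, which this lookup-only sketch does not cover.

```python
import math

def bilinear_alpha(grid, x, y):
    """Bilinearly interpolate a scalar grid at (x, y) in [0, 1]^2.

    grid[i][j] holds alpha at row i (y axis) and column j (x axis).
    Coordinates outside [0, 1] are clamped to the grid border.
    """
    rows, cols = len(grid), len(grid[0])
    # Map normalized coordinates to continuous grid indices.
    gx = min(max(x, 0.0), 1.0) * (cols - 1)
    gy = min(max(y, 0.0), 1.0) * (rows - 1)
    j0, i0 = int(math.floor(gx)), int(math.floor(gy))
    j1, i1 = min(j0 + 1, cols - 1), min(i0 + 1, rows - 1)
    tx, ty = gx - j0, gy - i0
    # Interpolate along x on the two bracketing rows, then along y.
    top = (1.0 - tx) * grid[i0][j0] + tx * grid[i0][j1]
    bottom = (1.0 - tx) * grid[i1][j0] + tx * grid[i1][j1]
    return (1.0 - ty) * top + ty * bottom

if __name__ == "__main__":
    alpha_grid = [[0.0, 1.0],
                  [1.0, 2.0]]
    print(bilinear_alpha(alpha_grid, 0.5, 0.5))
```

Because each grid cell stores a single scalar rather than a feature vector, the memory cost grows with the number of cells only, which is consistent with the low overhead described above.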

Neural Tangent Kernel Analysis

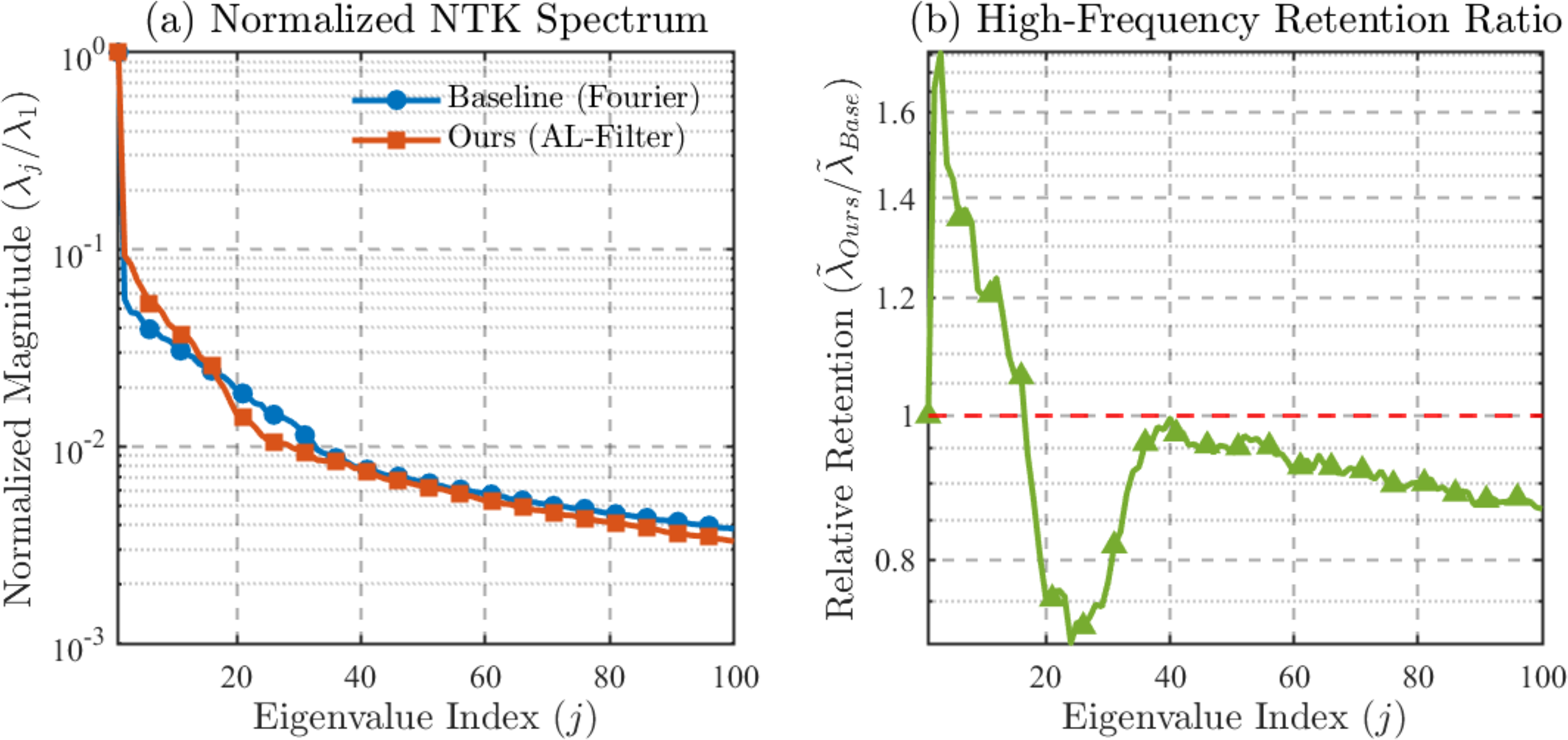

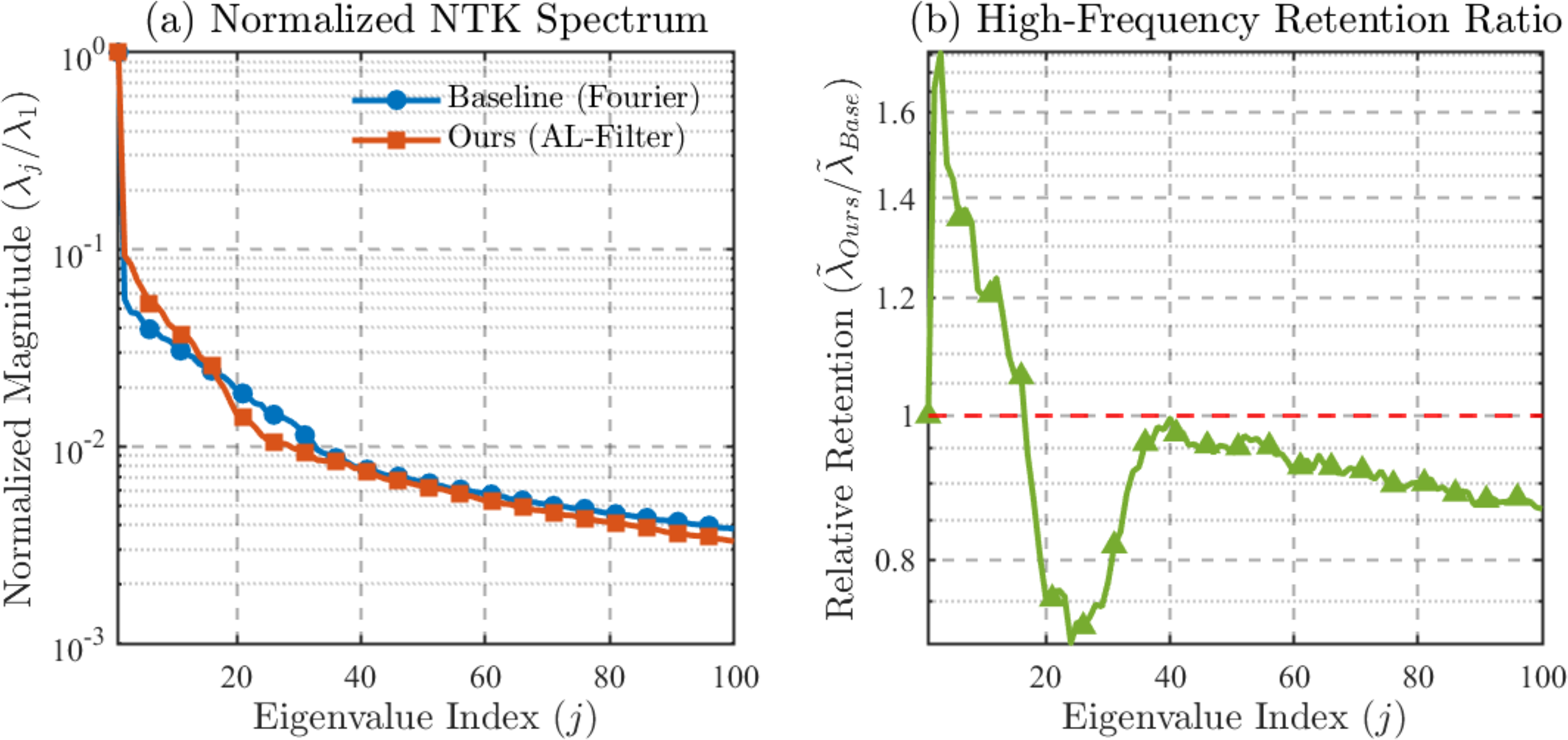

The NTK view further clarifies the effect of the AL-Filter. Locally, the adaptive filter reweights the kernel eigenvalues associated with different dyadic frequency scales:

$$\tilde{\lambda}_k(x) = w_k(\alpha(x))\,\lambda_k$$

where $\lambda_k$ is the baseline Fourier-induced eigenvalue, and $w_k(\alpha(x))$ aggregates the filter response over channels corresponding to scale $k$. Thus, the method spatially modulates the effective kernel eigenspectrum, improving the conditioning for high-frequency component learning, especially in regions demanding complex detail.

Figure 3: Empirical NTK eigenspectra, showing increased retention of intermediate/high-frequency eigenvalues and more favorable spectral balance with the AL-Filter versus fixed-frequency methods.
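The per-scale reweighting can be sketched numerically. The aggregation rule is not specified in this summary, so the code below assumes the weight of a scale is the mean squared filter response over that scale's channels; the function names, the baseline eigenvalues, and the mask values are all made up for illustration.

```python
def scale_weights(mask, channel_scale, num_scales):
    """Aggregate the filter response per scale (assumed: mean of squares).

    mask[c] is the filter response of channel c and channel_scale[c] is
    the index of the dyadic scale that channel c belongs to.
    """
    sums = [0.0] * num_scales
    counts = [0] * num_scales
    for m, s in zip(mask, channel_scale):
        sums[s] += m * m
        counts[s] += 1
    return [sums[s] / counts[s] if counts[s] else 0.0 for s in range(num_scales)]

def reweighted_eigenvalues(baseline, weights):
    """Multiply each baseline per-scale eigenvalue by its aggregated weight."""
    return [lam * w for lam, w in zip(baseline, weights)]

if __name__ == "__main__":
    baseline = [1.0, 0.25, 0.0625]          # toy eigenvalues decaying with scale
    mask = [0.1, 0.1, 0.5, 0.5, 1.0, 1.0]   # a high-pass-like response
    channel_scale = [0, 0, 1, 1, 2, 2]      # two channels per scale
    weights = scale_weights(mask, channel_scale, 3)
    effective = reweighted_eigenvalues(baseline, weights)
    print(max(baseline) / min(baseline), max(effective) / min(effective))
```

In this toy setting the ratio between the largest and smallest eigenvalue shrinks once the high-pass-like mask is applied, which illustrates the kind of spectral rebalancing the analysis attributes to the filter.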

Empirical Results

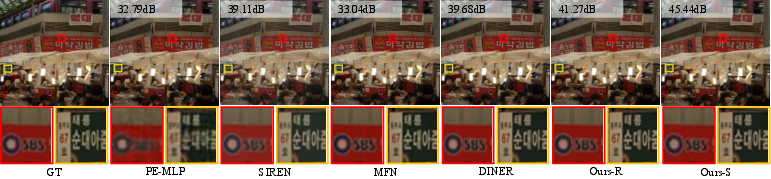

2D Image Fitting

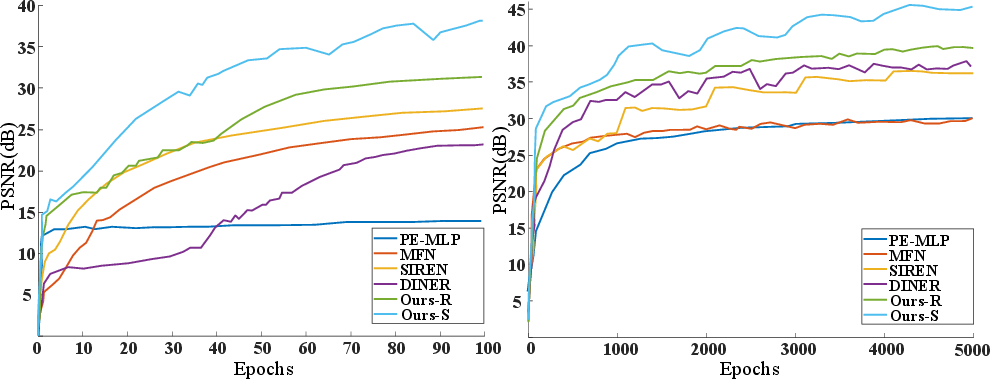

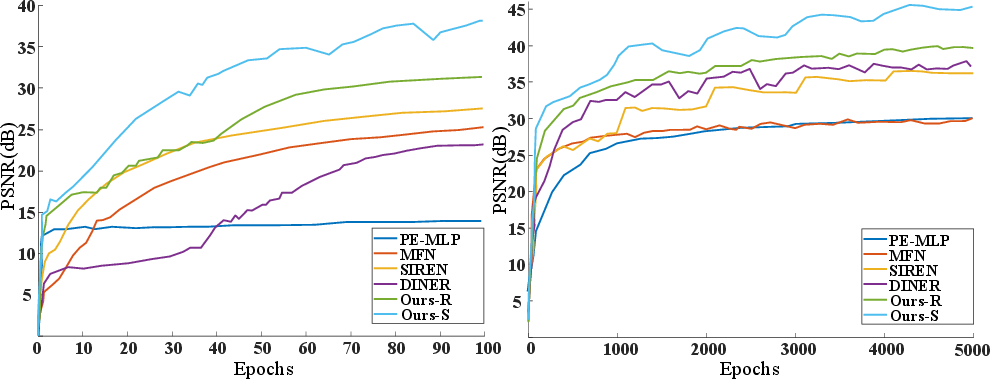

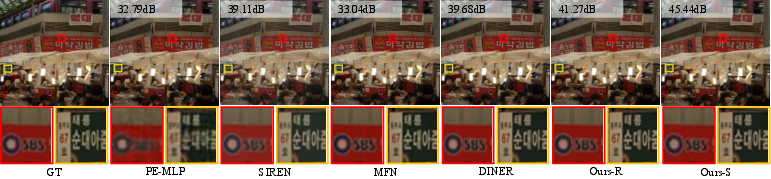

In standard image regression tasks, the adaptive filter accelerates convergence and elevates peak reconstruction accuracy. Quantitatively, the method outperforms fixed-frequency baselines in PSNR, SSIM, and LPIPS. Notably, the AL-Filter enables sharper recovery of text and structure, suppressing over-smoothing seen in traditional encodings.
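For reference, PSNR, the first of the metrics named above, follows the standard definition 10 · log10(MAX² / MSE). The sketch below is a generic helper over flat lists of pixel values in [0, 1]; it is not the paper's evaluation code, and the function name is chosen for illustration.

```python
import math

def psnr(reference, estimate, max_value=1.0):
    """Peak signal-to-noise ratio in dB between two equal-length sequences."""
    if len(reference) != len(estimate) or len(reference) == 0:
        raise ValueError("inputs must be non-empty and of equal length")
    mse = sum((r - e) ** 2 for r, e in zip(reference, estimate)) / len(reference)
    if mse == 0.0:
        return math.inf  # identical signals
    return 10.0 * math.log10(max_value ** 2 / mse)

if __name__ == "__main__":
    print(psnr([0.0, 1.0], [0.1, 0.9]))
```

Higher PSNR means lower mean squared error; SSIM and LPIPS measure structural and perceptual similarity and are not covered by this helper.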

Figure 4: INR model convergence on 2D image fitting; AL-Filter variants achieve higher PSNR both in early and late-stage training.

Figure 5: Visual comparison on image fitting, showing the AL-Filter’s superiority in capturing fine structures and sharp features over classic INRs.

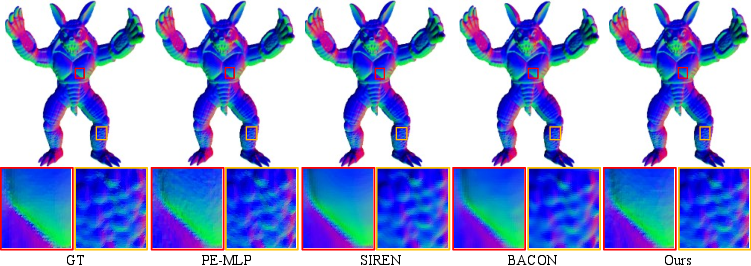

3D Signed Distance Field Representation

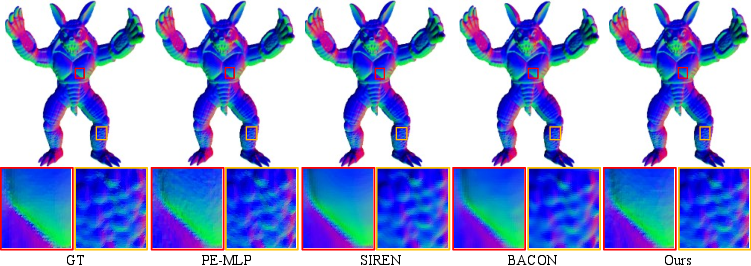

For geometry encoding, especially on Stanford 3D Scanning Repository models, the AL-Filter both lowers Chamfer distance and raises surface IoU across diverse objects. It distinctly maintains geometric detail in high-complexity regions while preserving smoothness elsewhere.

Figure 6: SDF surface reconstructions for Armadillo, illustrating the adaptive filter’s ability to preserve high-frequency detail (legs) without introducing noise in smooth regions.

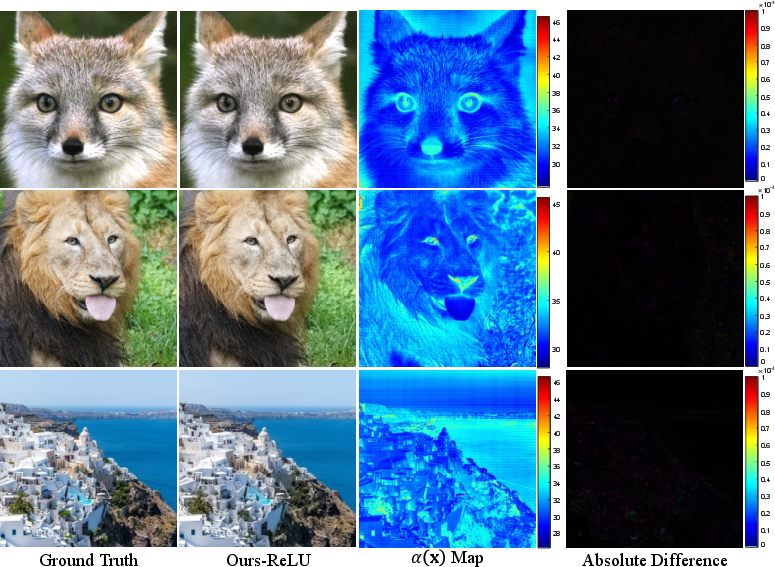

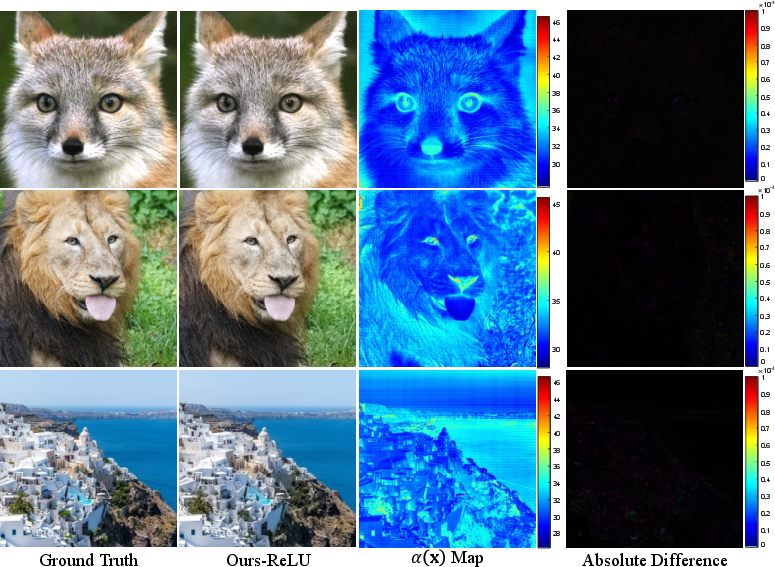

Interpretability and Learned Frequency Maps

Learned α(x) maps directly reflect spatial frequency demand: values are elevated at image edges and in textured areas, and lower in smooth background. This provides direct interpretability and diagnostic insight into local model behavior.

Figure 7: Visualization of the learned α(x) field, highlighting increased frequency selection at edges and structures.

Robustness in Sparse Observation Regimes

In image inpainting with limited observations, the spatial smoothness regularization on the α(x) field allows reliable reconstruction without introducing spurious high frequencies, underscoring the regularizer’s synergy with the AL-Filter.
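One common way to express a spatial smoothness regularizer on a grid is a finite-difference penalty. The exact form used in the paper is not stated in this summary, so the sketch below assumes the mean squared difference between horizontally and vertically adjacent grid cells; the function name is illustrative.

```python
def smoothness_penalty(grid):
    """Mean squared difference between adjacent cells of a 2D scalar grid.

    Assumed form of the regularizer: each horizontal and vertical
    neighbor pair contributes its squared difference once.
    """
    rows, cols = len(grid), len(grid[0])
    total, count = 0.0, 0
    for i in range(rows):
        for j in range(cols):
            if j + 1 < cols:  # right neighbor
                total += (grid[i][j + 1] - grid[i][j]) ** 2
                count += 1
            if i + 1 < rows:  # lower neighbor
                total += (grid[i + 1][j] - grid[i][j]) ** 2
                count += 1
    return total / count if count else 0.0

if __name__ == "__main__":
    print(smoothness_penalty([[0.0, 1.0], [0.0, 1.0]]))
```

Added to the reconstruction loss with a small weight, a penalty of this kind discourages abrupt jumps in the field between observed and unobserved regions, which matches the role the regularizer plays in the sparse setting.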

Theoretical and Practical Implications

Theoretically, adaptive local frequency filtering addresses the longstanding limitation of global, fixed spectral bases in Fourier-encoded INRs, mitigating spectral mismatch in nonstationary settings. The NTK-based analysis connects spatial adaptation with improved data-dependent kernel conditioning, offering a direct route for future kernel-based model selection and optimization.

Practically, this approach introduces minimal overhead and can be seamlessly composed with standard MLP-based INR pipelines. It is especially advantageous in multidomain continuous signal modeling where local spectrum varies sharply, such as real-world textures, highly detailed geometry, and spatially nonuniform measurement phenomena. Interpretability of the learned adaptive field informs debugging and model selection. Limitations include the dependence on grid resolution for α(x) and sensitivity to certain hyperparameters, which suggests future work in adaptive grid and bandwidth parameterization.

Conclusion

Adaptive local frequency filtering via a learnable grid-based field provides explicit, interpretable, and effective spatial frequency selection for Fourier-encoded INRs. The method reshapes and localizes the kernel eigenspectrum, accelerating optimization and improving data fidelity in both dense and sparse settings. This explicit local modulation framework is robust and extensible, motivating further research into dynamic, context-dependent encoding mechanisms for high-performance neural representation learning.