- The paper introduces an agentic system that integrates LLM reasoning with specialized git tools for robust bug-inducing commit detection.

- It employs a ReAct-style iterative loop with domain-specific heuristics and structured context compression to enhance precision and efficiency.

- Quantitative evaluation shows up to a 27.2% F1 improvement and a 300% increase in recall on challenging cross-file cases.

AgentSZZ: LLM-Driven Agentic Identification of Bug-Inducing Commits

Introduction

Identifying bug-inducing commits (BICs) is essential for downstream software engineering (SE) tasks ranging from defect prediction to vulnerability triage. The SZZ algorithm, which relies primarily on git blame, has long been the dominant method for BIC identification, and a range of variants extend its core mechanics. However, existing pipeline-based and LLM-enhanced approaches perform poorly on challenging cases such as cross-file and ghost commits, because git blame is inherently restricted to the lines and files that the fix touches.

AgentSZZ introduces an agent-based paradigm driven by an LLM: an autonomous investigative agent that is guided by domain knowledge and interacts adaptively with a curated set of task-specific tools. The system uses a ReAct-style iterative exploration loop and structured context compression to traverse codebases efficiently and establish causality between bug-fixing commits (BFCs) and the commits that induced them, thereby addressing core SZZ limitations.

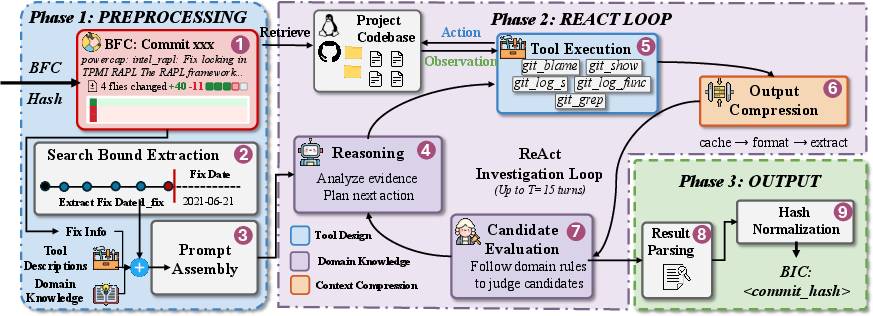

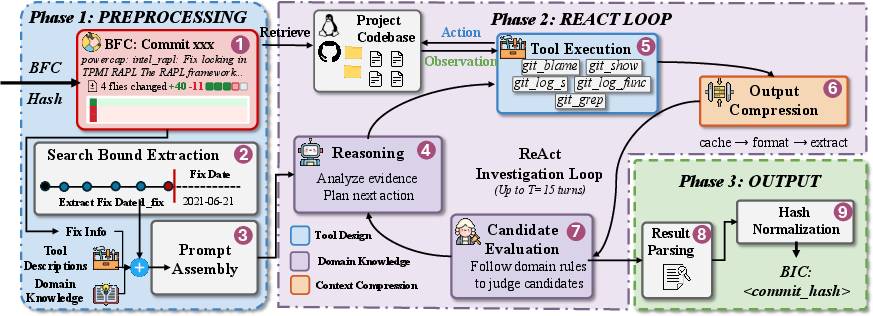

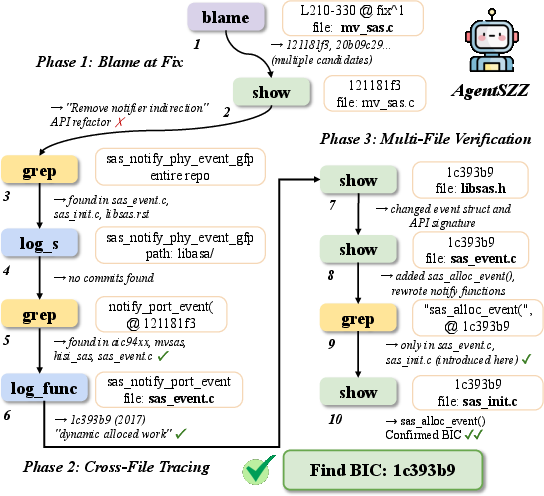

Figure 1: AgentSZZ end-to-end workflow, from preprocessing and context setup, through ReAct-driven investigation using domain-guided tool interactions, to output normalization and BIC selection.

AgentSZZ Architecture and Methodology

Domain-Guided and Tool-Constrained Agentic Exploration

AgentSZZ dispenses with static trace pipelines in favor of interactive, LLM-driven exploration. The agent is provided with a constrained “action space” defined by five carefully designed task-specific tools, each abstracting semantically important git operations (e.g., line-level blame, diff inspection, scoped log search, repo-wide grep):

- git_blame: Fine-grained, line-level attribution of which commit last changed each line.

- git_show: Diff/message inspection.

- git_log_s / git_log_func: History search for structural and code-level origins.

- git_grep: Cross-file reference/usage discovery.

Tool interfaces enforce scoping (line ranges, temporal bounds), which enables targeted queries with a high signal-to-noise ratio and avoids the inefficiency and ambiguity of unconstrained bash-based agents.
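To make the idea of a scoped tool interface concrete, the following is a minimal sketch of how such wrappers could build bounded git command lines. The function names, parameters, and the specific flags chosen are assumptions for illustration; the summary does not describe how AgentSZZ implements its tools. Only standard git options are used (`blame -L`, `log -S`, `log -L:<func>:<file>`, `grep -n -F`), and every history query is bounded by the parent of the fix commit so the agent only sees pre-fix history.

```python
from typing import List, Optional


def git_blame_cmd(fix_rev: str, path: str, start: int, end: int) -> List[str]:
    """Blame only lines start..end of `path` as they were just before the fix."""
    if start < 1 or end < start:
        raise ValueError(f"invalid line range {start},{end}")
    # `fix_rev^` is the first parent of the fix, i.e. the pre-fix file state.
    return ["git", "blame", "-l", "-L", f"{start},{end}", f"{fix_rev}^", "--", path]


def git_show_cmd(rev: str, path: Optional[str] = None) -> List[str]:
    """Show the message and diff of one commit, optionally limited to one path."""
    cmd = ["git", "show", "--stat", "--patch", rev]
    return cmd + ["--", path] if path else cmd


def git_log_s_cmd(needle: str, fix_rev: str, path: Optional[str] = None,
                  max_count: int = 20) -> List[str]:
    """List pre-fix commits that changed the number of occurrences of `needle`."""
    cmd = ["git", "log", "--oneline", f"--max-count={max_count}",
           f"-S{needle}", f"{fix_rev}^"]
    return cmd + ["--", path] if path else cmd


def git_log_func_cmd(func: str, path: str, fix_rev: str,
                     max_count: int = 20) -> List[str]:
    """Trace the pre-fix history of one function via git's -L:<func>:<file>."""
    return ["git", "log", f"--max-count={max_count}",
            f"-L:{func}:{path}", f"{fix_rev}^"]


def git_grep_cmd(needle: str, fix_rev: str) -> List[str]:
    """Search the whole pre-fix tree for a fixed string, with line numbers."""
    return ["git", "grep", "-n", "-F", "-e", needle, f"{fix_rev}^"]
```

Each builder returns an argument list that could be passed to `subprocess.run(cmd, cwd=repo, capture_output=True, text=True)`. Keeping the line range, result cap, and temporal bound in the signature is what prevents the agent from issuing open-ended queries.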

AgentSZZ employs a ReAct-style loop: on each investigation turn, the LLM observes the current context, reasons over intermediate findings, and selects the next tool and its parameters in accordance with the encoded domain knowledge.
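The loop described above can be sketched generically as follows. This is an illustration of the ReAct pattern, not the paper's implementation: the `Step` record, the `finish` action, the turn budget, and the scripted policy that stands in for the LLM are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional, Tuple


@dataclass
class Step:
    thought: str            # the model's reasoning for this turn
    tool: str               # tool name, or "finish" to stop
    args: Dict[str, str]    # tool parameters
    observation: str = ""   # filled in after the tool runs


Policy = Callable[[List[Step]], Step]       # stand-in for the LLM call
Tools = Dict[str, Callable[..., str]]


def react_loop(policy: Policy, tools: Tools,
               max_turns: int = 10) -> Tuple[Optional[str], List[Step]]:
    """Alternate reasoning and tool calls until the policy finishes or the budget ends."""
    history: List[Step] = []
    for _ in range(max_turns):
        step = policy(history)              # reason over everything seen so far
        if step.tool == "finish":
            history.append(step)
            return step.args.get("bic"), history
        handler = tools.get(step.tool)
        if handler is None:
            # Report the error back so the policy can pick another tool.
            step.observation = f"error: unknown tool {step.tool!r}"
        else:
            step.observation = handler(**step.args)
        history.append(step)
    return None, history                    # budget exhausted, no answer


def scripted_policy(history: List[Step]) -> Step:
    """Hypothetical fixed script replacing the LLM, for demonstration only."""
    if not history:
        return Step("Blame the lines the fix changed.", "git_blame", {"path": "a.c"})
    if len(history) == 1:
        return Step("Inspect the blamed commit.", "git_show", {"rev": "deadbeef"})
    return Step("That commit introduced the faulty check.", "finish", {"bic": "deadbeef"})


fake_tools: Tools = {
    "git_blame": lambda path: f"deadbeef {path}:10",
    "git_show": lambda rev: f"commit {rev}: add bounds check",
}
bic, trace = react_loop(scripted_policy, fake_tools)
```

In a real system the policy would be an LLM call that receives the prompt plus the (compressed) history, and the tool handlers would execute scoped git commands.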

Figure 2: AgentSZZ's prompt encodes explicit SE domain knowledge, guiding the agent through best practices and investigation heuristics.

Prompt Engineering and Domain Knowledge Injection

The system’s prompt encodes not only the high-level task specification but also a distilled set of expert investigation heuristics, such as:

- Entry strategies: blame-local patch lines if present, adjacent/surrounding context otherwise.

- Escalation policies: traverse to cross-file/function-level search upon signature refactorings, meta-changes, or semantic disconnects.

- Disambiguation heuristics: counterfactual “would the bug exist if this commit did not land?” criteria.

- Causal verification: rule out candidates that are merely correlated with the defect, as well as commits that expose rather than induce it.

This explicit domain knowledge scaffolds the LLM’s episodic reasoning, providing guardrails for robust BIC localization beyond static blame-based approaches.
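In AgentSZZ these heuristics are expressed in natural language inside the prompt, and the LLM applies them with judgment. Purely as a reading aid, the entry, escalation, and counterfactual rules listed above can be written as a small decision procedure. The `Evidence` fields, step labels, and function names below are hypothetical and are not taken from the paper.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Evidence:
    fix_changes_existing_lines: bool      # the fix deletes or modifies lines
    blamed_commit_is_refactor_only: bool  # e.g. rename, move, signature change


def plan(ev: Evidence) -> List[str]:
    """Order investigation steps according to the entry and escalation rules."""
    # Entry strategy: blame patch lines if present, surrounding context otherwise.
    steps = ["blame_patch_lines" if ev.fix_changes_existing_lines
             else "blame_surrounding_context"]
    # Escalation policy: a refactor-only blame result is a semantic dead end,
    # so widen the search to function history and cross-file references.
    if ev.blamed_commit_is_refactor_only:
        steps += ["git_log_func", "git_grep"]
    # Every candidate must still pass the counterfactual test.
    steps.append("counterfactual_check")
    return steps


def is_inducing(bug_exists_without_commit: bool, commit_only_exposes_bug: bool) -> bool:
    """Counterfactual criterion: accept a candidate only if it causes the bug."""
    return not bug_exists_without_commit and not commit_only_exposes_bug
```

For example, `plan(Evidence(False, True))` yields a context-line blame followed by function-history and grep searches before the counterfactual check.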

Structured Context Compression

Since tool outputs can be voluminous and LLM context windows are finite, AgentSZZ employs a staged compression module. This mechanism deduplicates repeated queries, enforces tool- and parameter-specific truncation, prunes uninformative context, and extracts only structurally significant evidence (commit hashes, diff hunks, file headers). In the ablation study, context compression reduces token usage by more than 30% with no measurable loss in effectiveness.
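A minimal sketch of such a staged compressor is shown below, assuming a simple design: deduplicate by (tool, arguments), drop blank lines, and, when a tool-specific line limit is exceeded, keep only lines that look structurally significant. The class name, the per-tool limits, and the prefix list are assumptions; the paper's actual rules are not given in this summary.

```python
import re
from typing import Dict, List, Tuple

# Lines that begin with a commit hash, as in `git blame` or `git log --oneline`.
HASH_START = re.compile(r"^\^?[0-9a-f]{7,40}\b")
# Commit headers, file headers, hunk headers, and changed lines in a unified diff.
KEEP_PREFIXES = ("commit ", "diff --git", "@@", "+", "-")


class ContextCompressor:
    def __init__(self, max_lines_per_tool: Dict[str, int], default_limit: int = 50):
        self.max_lines = max_lines_per_tool
        self.default_limit = default_limit
        self.seen: Dict[Tuple[str, Tuple[str, ...]], int] = {}

    def compress(self, tool: str, args: Tuple[str, ...], output: str) -> str:
        key = (tool, args)
        # Stage 1: deduplicate repeated queries.
        if key in self.seen:
            return f"[duplicate of query {self.seen[key]}; output omitted]"
        self.seen[key] = len(self.seen) + 1
        # Stage 2: prune uninformative (blank) lines.
        lines: List[str] = [ln for ln in output.splitlines() if ln.strip()]
        limit = self.max_lines.get(tool, self.default_limit)
        if len(lines) <= limit:
            return "\n".join(lines)
        # Stage 3: keep only structural evidence, then apply the tool's limit.
        structural = [ln for ln in lines
                      if ln.startswith(KEEP_PREFIXES) or HASH_START.match(ln)]
        kept = structural[:limit]
        dropped = len(lines) - len(kept)
        return "\n".join(kept + [f"[{dropped} lines truncated]"])
```

The truncation marker tells the model that output was cut, so it can issue a narrower query instead of assuming the evidence is complete.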

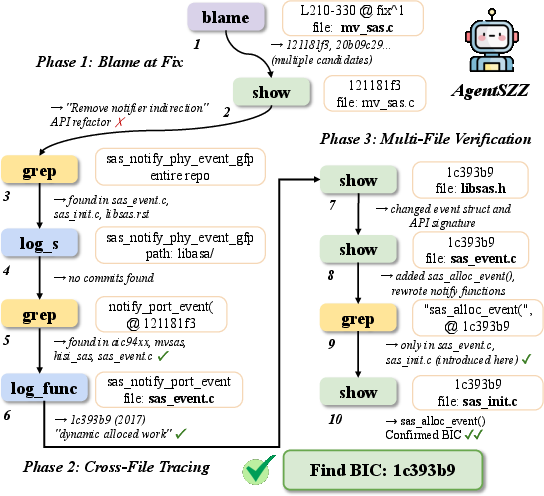

Agentic ReAct Investigation Trajectory

A canonical successful case involves initial blame and show operations to classify the immediate changes, dynamic escalation to cross-file search tools, and causality validation across multi-file histories, as shown below.

Figure 3: A full AgentSZZ investigation trajectory resolving a cross-file bug, where the inducing change predates the fix by years and lies outside the fix-modified file.

Quantitative Evaluation

Comparative Efficacy

Empirical assessment across three developer-annotated datasets (Linux, GitHub, Apache; n=2,095 BFCs) reveals:

- F1 improvement of up to 27.2% over the prior state-of-the-art LLM4SZZ, even with a matched LLM backbone and identical fix context.

- Recall increases of up to 300% for cross-file cases and 60% for ghost commits, both of which traditional blame-centric methods can almost never trace.

- AgentSZZ consistently outperforms all static and agentic baselines (including the bash-based mini-SWE-agent) on precision, recall, and F1.
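For readers less familiar with these metrics, the sketch below computes precision, recall, and F1 from match counts, and shows how a percentage gain is read if it is a relative gain. That reading is an assumption for the F1 figure, since this summary does not say whether it is relative or absolute; a 300% recall increase can only be relative and means the new recall is four times the old one. The numbers in the usage lines are hypothetical and are not results from the paper.

```python
from typing import Tuple


def prf1(tp: int, fp: int, fn: int) -> Tuple[float, float, float]:
    """Precision, recall, and F1 from true positives, false positives, false negatives."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


def relative_gain(new: float, old: float) -> float:
    """Relative improvement of `new` over `old`, e.g. 3.0 means a 300% increase."""
    if old == 0:
        raise ValueError("relative gain is undefined for a zero baseline")
    return (new - old) / old


# Hypothetical illustration only: recall rising from 0.10 to 0.40 is a 300% increase.
example_gain = relative_gain(0.40, 0.10)
# Hypothetical counts: 8 correct BICs, 2 spurious, 2 missed.
example_p, example_r, example_f1 = prf1(tp=8, fp=2, fn=2)
```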

Ablation demonstrates that tool structuring is indispensable (removal leads to near-zero F1), while prompt-based domain scaffolding provides nontrivial recall gains across diverse datasets.

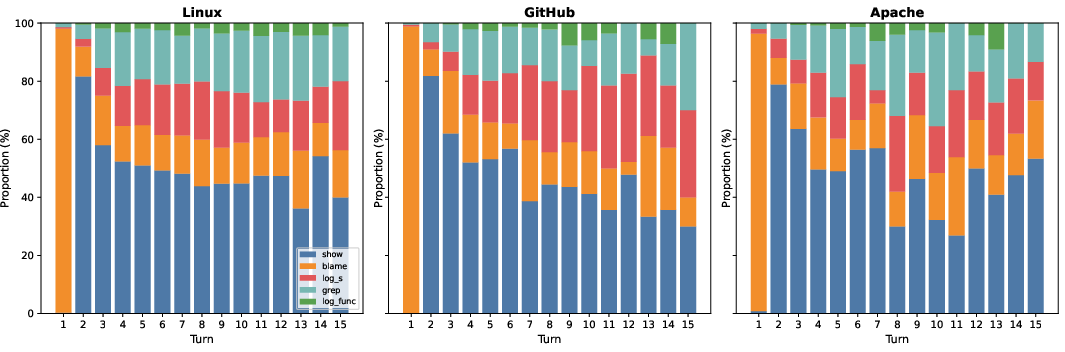

Efficiency

Despite its more dynamic investigation loop, AgentSZZ converges in fewer, denser turns (~5.7 vs 11.2 for LLM4SZZ), maintains competitive runtime, and reduces resource utilization (tokens, API cost) by an order of magnitude relative to unconstrained agentic alternatives.

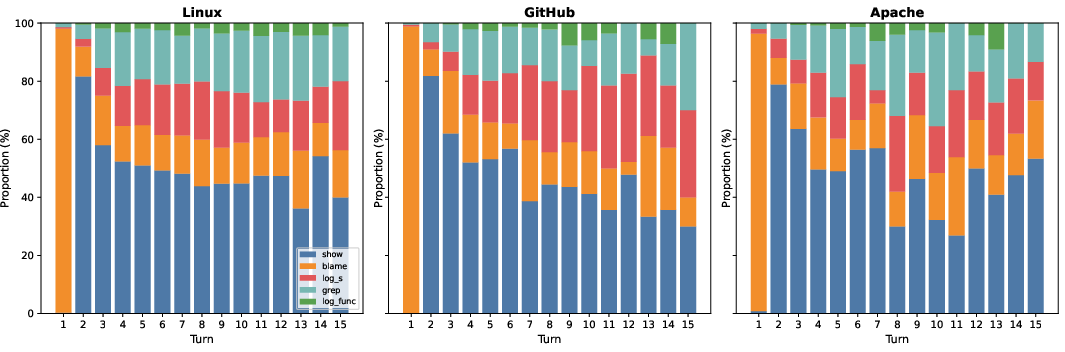

Figure 4: Tool utilization distribution across agentic investigation turns; on harder cases, escalation from blame/show to log_s/grep becomes more frequent in later turns.

Qualitative Insights: Challenging Cases and Error Analysis

AgentSZZ's design specifically mitigates SZZ’s failure cases:

- Cross-file BICs: Adroit use of global search/grep tools and function history tracing allows the agent to “jump” across files, linking symptom and root cause even in heavily refactored codebases.

- Ghost commits: Context-driven heuristics for line selection and identifier-based searches recover inducing commits missed when fix-side patches lack deletions/modifications.
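The ghost-commit situation can be illustrated with a small sketch: when a fix only adds lines, there are no removed lines to blame, so a fallback is to pull identifiers out of the added lines and use them as search terms (for example with `git log -S` or `git grep`). The parsing below is a simplified reading of unified diffs, and the function names, the four-character identifier threshold, and the stopword list are assumptions rather than the paper's heuristics.

```python
import re
from typing import Iterable, List, Tuple

IDENT_RE = re.compile(r"[A-Za-z_][A-Za-z0-9_]{3,}")   # identifiers of 4+ characters
STOPWORDS = frozenset({"return", "else", "while", "const", "static", "void"})


def split_patch(diff_text: str) -> Tuple[List[str], List[str]]:
    """Return (removed, added) lines, ignoring file headers outside hunks."""
    removed: List[str] = []
    added: List[str] = []
    in_hunk = False
    for ln in diff_text.splitlines():
        if ln.startswith("diff --git"):
            in_hunk = False            # the ---/+++ file headers follow
        elif ln.startswith("@@"):
            in_hunk = True
        elif in_hunk and ln.startswith("-"):
            removed.append(ln[1:])
        elif in_hunk and ln.startswith("+"):
            added.append(ln[1:])
    return removed, added


def search_terms(added: Iterable[str]) -> List[str]:
    """Identifiers from added lines, in order of first appearance, minus stopwords."""
    terms: List[str] = []
    for ln in added:
        for ident in IDENT_RE.findall(ln):
            if ident not in STOPWORDS and ident not in terms:
                terms.append(ident)
    return terms


# Hypothetical add-only fix: nothing is removed, so plain blame has no entry point.
EXAMPLE_DIFF = """diff --git a/a.c b/a.c
--- a/a.c
+++ b/a.c
@@ -10,3 +10,5 @@
 int f(struct buf *b) {
+    if (b->len > MAX_LEN)
+        return -1;
     copy(b);
"""
removed_lines, added_lines = split_patch(EXAMPLE_DIFF)
is_add_only = not removed_lines
terms = search_terms(added_lines)
```

Here the only surviving term is `MAX_LEN`, which would then seed an identifier-based history search alongside a blame of the surrounding context lines.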

For the small fraction of residual failures, error analysis shows that the cause is typically not a search or localization failure but a subtle misattribution among several plausible candidates, often due to incomplete symbolic or contextual signals or insufficient causal reasoning.

Implications and Future Directions

Practical Relevance

AgentSZZ shows that agentic, tool-constrained systems infused with domain knowledge can substantially outperform both static SZZ pipelines and “bash-agent” approaches. This finding matters for SE maintenance and security tasks that rely on robust BIC identification, including defect prediction, vulnerability triage, and code forensics.

Theoretical Consequences

Results suggest that, for large-scale SE knowledge integration, pure LLM internalization is insufficient: coupling LLMs with external tool-calling and encoded domain practices achieves state-of-the-art results on tasks previously limited by context locality. The agentic loop supports adaptive exploration, resisting “dead-ends” inherent to hard-coded tracing.

Directions for Advancement

Immediate areas for further research include:

- Finer-grained causal verification: Augmenting textual/structural reasoning with dynamic program analysis or execution-based validation to suppress near-miss misattributions.

- Multi-BIC handling: Enhanced output representations and search policies for fixes induced by several distributed changes.

- Meta-learning of investigation heuristics: Automated distillation of prompts/tool preferences based on large-scale curation of SE practice.

Conclusion

AgentSZZ represents a significant advance in agentic SE automation: through the combination of LLM-driven reasoning, domain-guided tool orchestration, and context management, it overcomes core limitations of blame-based methods. Empirical results demonstrate robust, generalizable gains for practical BIC identification as well as strategic lessons for the design of effective LLM-based software engineering agents.

Reference: "AgentSZZ: Teaching the LLM Agent to Play Detective with Bug-Inducing Commits" (2604.02665).