- The paper presents a novel method that integrates multi-view point tracking to update 3D Gaussian representations, ensuring robust and fast dynamic scene reconstruction.

- It employs an affine transformation via Parallel Weighted Incremental Least Squares and motion regularization to mitigate artifacts common in gradient-based adaptations.

- Its parallel, multi-GPU pipeline scales reconstruction throughput while preserving high visual fidelity, enabling practical applications in robotics, AR/VR, and telepresence.

TrackerSplat: Exploiting Point Tracking for Fast and Robust Dynamic 3D Gaussians Reconstruction

Introduction

Dynamic scene reconstruction with temporally consistent photorealistic rendering is a critical challenge for computer graphics, robotics, and immersive media. The paradigm of 3D Gaussian Splatting (3DGS) has shown substantial promise for static and dynamic scene reconstruction, but previous variants suffer from severe quality degradation when processing fast object motions or operating under large frame gaps, particularly in parallel or multi-GPU settings. The paper "TrackerSplat: Exploiting Point Tracking for Fast and Robust Dynamic 3D Gaussians Reconstruction" (2604.02586) presents a principled method addressing the limitations of gradient-based frame-to-frame adaptation in 3DGS by incorporating multi-view point tracking for direct, data-driven estimation of Gaussian scene element trajectories. TrackerSplat sets a new standard for scalability, temporal coherence, and reconstruction fidelity, especially for high-throughput or interactive applications.

Limitations of Gradient-Based 3DGS Approaches

Conventional 3DGS approaches iteratively refine Gaussian parameters using gradients computed over local neighborhoods of the input images. This local optimization becomes brittle under large scene displacements: because the gradients have limited spatial support, a Gaussian that has moved far from its previous position receives little useful signal, which leads to artifacts such as fading and incorrect recoloring (Figure 1). Multi-GPU parallelism exacerbates these issues, since frames processed simultaneously must each be adapted across a larger inter-frame distance in a single step.

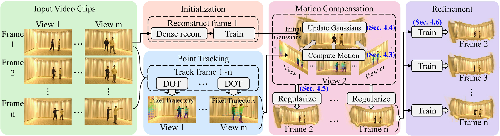

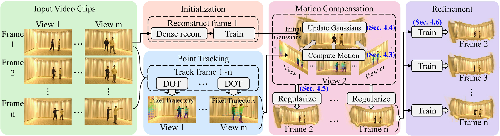

Figure 1: TrackerSplat overview. Multi-view video clips are processed; initialization uses 3DGS for first frames, subsequent frames update Gaussians via point tracking and regularization, then refinement is performed.

The TrackerSplat Methodology

TrackerSplat introduces a multi-stage pipeline that integrates recent advances in dense and sparse point tracking:

- Initialization: The baseline 3DGS is employed to generate initial 3D Gaussian scene elements for the first frame, leveraging robust static scene techniques such as InstantSplat.

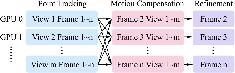

- Point Tracking: For each viewpoint, off-the-shelf dense point trackers (e.g., DOT) extract dense pixel-wise 2D trajectories across sequence frames (Figure 2). These robust pixel-wise correspondences capture large and nonrigid motions inaccessible to local gradients.

Figure 2: DOT point tracking on a multi-view video sequence. Colored trajectories reflect tracked pixels over time.

- Motion Compensation via PWI-LS: TrackerSplat formulates the Gaussian motion update as an affine transformation problem solved with Parallel Weighted Incremental Least Squares (PWI-LS). Each Gaussian’s position, rotation, and scale are adjusted by aggregating the weighted 2D motions of the image-plane pixels it covers. These per-view 2D estimates are then triangulated across views to update the 3D parameters efficiently on the GPU.

- Motion Regularization: To control noise and drift from tracking inaccuracies and partial observations, a robust motion regularization is performed. This involves median filtering across K-nearest neighbors in 3D space and propagating motion to occluded or unreliable Gaussians via neighborhood consensus, while static region detection avoids over-smoothing stationary objects.

- Parameter Refinement: Because point tracking already places the Gaussians close to their correct locations, a short local optimization (1000 iterations) against the input frames is sufficient to refine their color and geometric parameters.

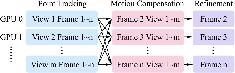

- Parallel Execution: All stages except initialization are designed for high parallelism. Multi-GPU pipelines process multiple frames independently after tracking, using only initial frame synchronization. This design achieves high throughput and scalability unprecedented for temporally consistent dynamic scene reconstruction (Figure 3).

Figure 3: TrackerSplat parallel pipeline. Point tracking, motion compensation, and refinement stages are all parallelizable, yielding significant runtime improvements.
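The motion compensation and regularization steps listed above can be illustrated with a minimal NumPy sketch. It is not the paper's implementation: it fits a single 2D affine map for one Gaussian in one view by accumulating weighted normal equations batch by batch, then median-filters 3D motion vectors over brute-force nearest neighbors. Cross-view triangulation, occlusion handling, and static region detection are omitted. The names `IncrementalAffineFit` and `knn_median_filter`, and the parameters `ridge` and `k`, are hypothetical and chosen only for illustration.

```python
import numpy as np


class IncrementalAffineFit:
    """Weighted least-squares fit of a 2D affine map q ~ A @ [px, py, 1].

    Correspondences are added in batches; only the 3x3 and 3x2 normal-equation
    sums are stored, so each Gaussian can be accumulated independently.
    """

    def __init__(self):
        self.M = np.zeros((3, 3))  # sum of w * x x^T, with x = [px, py, 1]
        self.N = np.zeros((3, 2))  # sum of w * x q^T

    def update(self, p, q, w):
        """Add a batch: p and q are (n, 2) pixel positions, w is (n,) weights."""
        x = np.hstack([p, np.ones((len(p), 1))])
        xw = x * w[:, None]
        self.M += xw.T @ x
        self.N += xw.T @ q

    def solve(self, ridge=1e-9):
        """Return the 2x3 affine matrix; the tiny ridge avoids a singular solve."""
        X = np.linalg.solve(self.M + ridge * np.eye(3), self.N)
        return X.T


def knn_median_filter(positions, motions, k=8):
    """Replace each motion vector with the per-component median over the
    k nearest points in 3D (the point itself normally counts as a neighbor).

    Uses an O(n^2) distance matrix, which is fine for a small illustration.
    """
    d2 = ((positions[:, None, :] - positions[None, :, :]) ** 2).sum(-1)
    idx = np.argsort(d2, axis=1)[:, :k]       # (n, k) neighbor indices
    return np.median(motions[idx], axis=1)    # (n, 3) filtered motions
```

Storing only the accumulated sums is what makes the fit incremental: tracked pixels can arrive in any order or batch size, and the final solve is a fixed-size 3x3 system per Gaussian. The median filter is a simple way to reject isolated outlier motions without averaging them into their neighbors.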

Evaluation

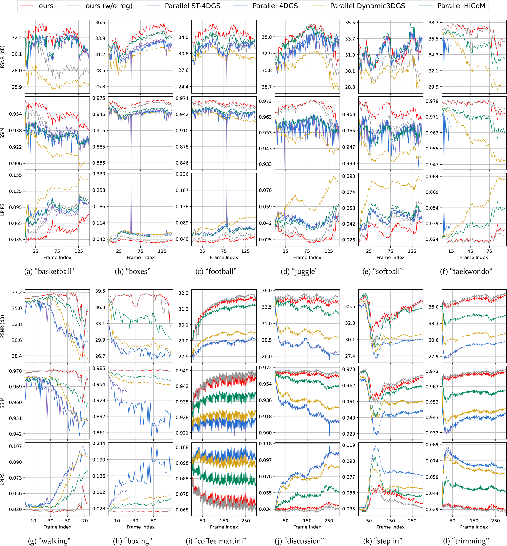

TrackerSplat was evaluated on diverse, large-scale, high-resolution dynamic scene datasets (Meeting Room, N3DV, Dynamic3DGS, st-nerf, RH20T). The focus was on both short-clip (parallelism, no cumulative errors) and long-video (temporal consistency) settings. Visual quality was assessed quantitatively using PSNR, SSIM, and LPIPS metrics, and qualitatively via rendered frames in fast-motion regimes typical of live or interactive content.
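For reference, PSNR, the first of the three metrics above, follows directly from the mean squared error between a rendered frame and its ground-truth image. The snippet below is a minimal sketch assuming both images are float arrays on the same scale with peak value `max_value`. The function name and parameter are illustrative and not taken from the paper's code. SSIM and LPIPS are omitted because they require windowed statistics and a pretrained network, respectively.

```python
import numpy as np


def psnr(rendered, reference, max_value=1.0):
    """Peak signal-to-noise ratio in dB: 10 * log10(max_value^2 / MSE)."""
    rendered = np.asarray(rendered, dtype=np.float64)
    reference = np.asarray(reference, dtype=np.float64)
    mse = np.mean((rendered - reference) ** 2)
    if mse == 0:
        return float("inf")  # identical images
    return float(10.0 * np.log10(max_value ** 2 / mse))
```

Higher PSNR and SSIM indicate better reconstructions, whereas lower LPIPS is better.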

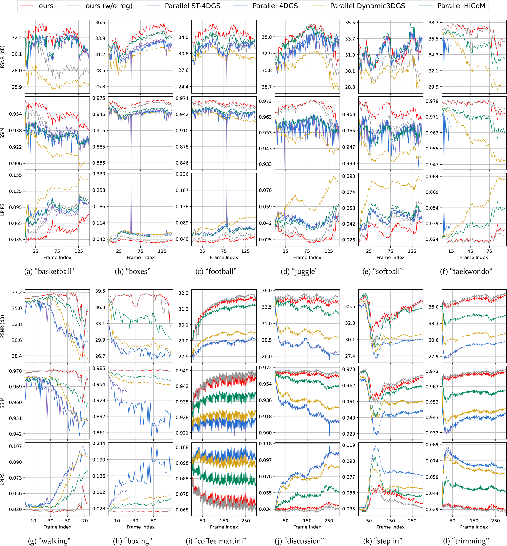

- Short-Clip Results: TrackerSplat consistently outperformed state-of-the-art baselines such as HiCoM, Dynamic3DGS, 4DGS, and ST-4DGS, especially as the degree of frame parallelism increased. Its PSNR, SSIM, and LPIPS scores degraded only minimally as the number of simultaneously processed frames scaled from 1 to 8, while other methods exhibited severe quality drops and artifacts (Figure 4).

Figure 4: PSNR, SSIM, LPIPS curves for long-video sequences at 8-GPU parallelism. TrackerSplat maintains both stability and high visual quality, outperforming all baselines.

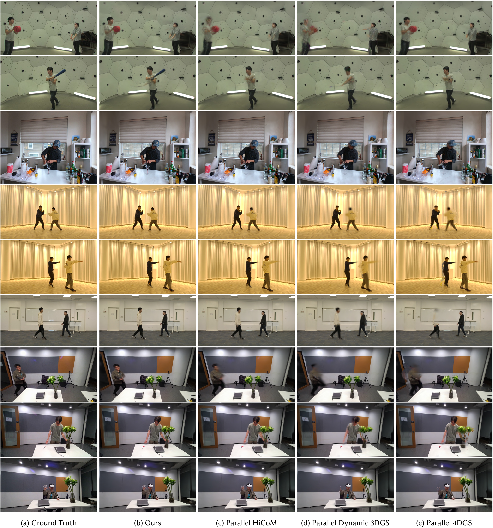

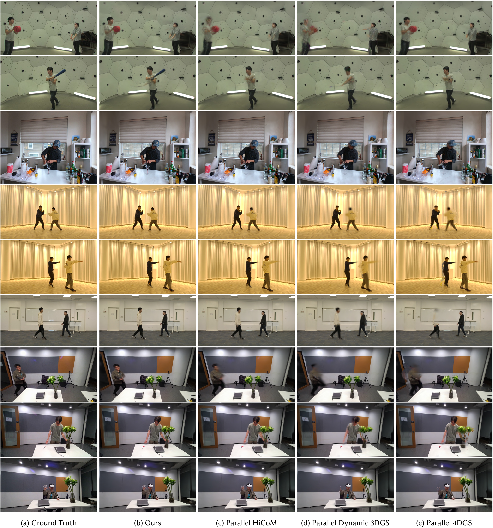

- Long-Video and Qualitative Results: In extended reconstructions, TrackerSplat preserved visual details and temporal consistency with minimal drift or flicker, substantially mitigating the fading and ghosting that all baselines exhibited in high-velocity regions. Figure 5 shows representative frames that illustrate TrackerSplat’s superior handling of large, nonrigid motion and fine details.

Figure 5: Qualitative comparison: TrackerSplat’s renderings (right) show reduced artifacting and sharper detail in dynamic and occluded regions versus baseline methods.

- Throughput: TrackerSplat’s pipeline achieved the highest throughput (lowest seconds per frame) under all tested configurations, except where point tracking overhead dominates because the number of views per frame is very large.

- Ablation: Removing motion regularization showed that it suppresses outliers, at the cost of minor quality tradeoffs in regions that are already well initialized. The ablations support the contribution of each stage in the pipeline.

Limitations

Despite substantial robustness and scalability improvements, the method's performance is ultimately bounded by current tracking methods. Small-scale features (thin objects, hands), severe occlusions, or ambiguous correspondences remain challenging. Temporal jitter from tracking errors and spatiotemporal inconsistencies inherent to the 3DGS model under noisy video can still introduce subtle artifacts. Error propagation from inaccurate initial frames is only partially mitigated by the final refinement phase. Future integration of dynamic Gaussian add/remove methods, error decomposition techniques, and improved correspondence tracking may ameliorate these issues.

Implications and Future Directions

The methodology presented sets a new benchmark for scalable, high-fidelity dynamic 3D reconstruction, providing practical benefits for areas requiring real-time performance, such as robotics, telepresence, and event capture. The direct linkage of 2D visual correspondences and 3D Gaussian scene elements expands the class of dynamic phenomena amenable to photorealistic, temporally coherent modeling. The modular parallel pipeline allows rapid extensions to integrated object manipulation, real-time AR/VR applications, and embodied AI navigation. There is clear potential for future research in tracking-augmented neural rendering, video-based editing, and robust scene understanding pipelines operating at scale.

Conclusion

TrackerSplat demonstrates that integrating multi-view point tracking with 3D Gaussian Splatting fundamentally addresses limitations in prior gradient-based methods for dynamic scene reconstruction. Its robust affine motion estimation, regularization strategies, and highly parallel architecture enable fast, high-quality, temporally stable reconstructions even under challenging large-displacement and high-throughput regimes. This work delivers both a practical tool and a framework for future research at the intersection of dense tracking, neural scene representation, and scalable, real-time 3D vision systems.