- The paper demonstrates that high-quality, richly annotated DR datasets dramatically enhance deep learning model performance and clinical interpretability.

- Deep learning methods, particularly CNN architectures such as EfficientNet and ResNet, excel at detecting and grading DR severity under data constraints.

- The study highlights dataset challenges such as class imbalance, annotation inconsistency, and demographic bias, suggesting the need for standardized protocols.

Managing Diabetic Retinopathy with Deep Learning: A Data-Centric Perspective

Clinical Context and Retinal Pathology

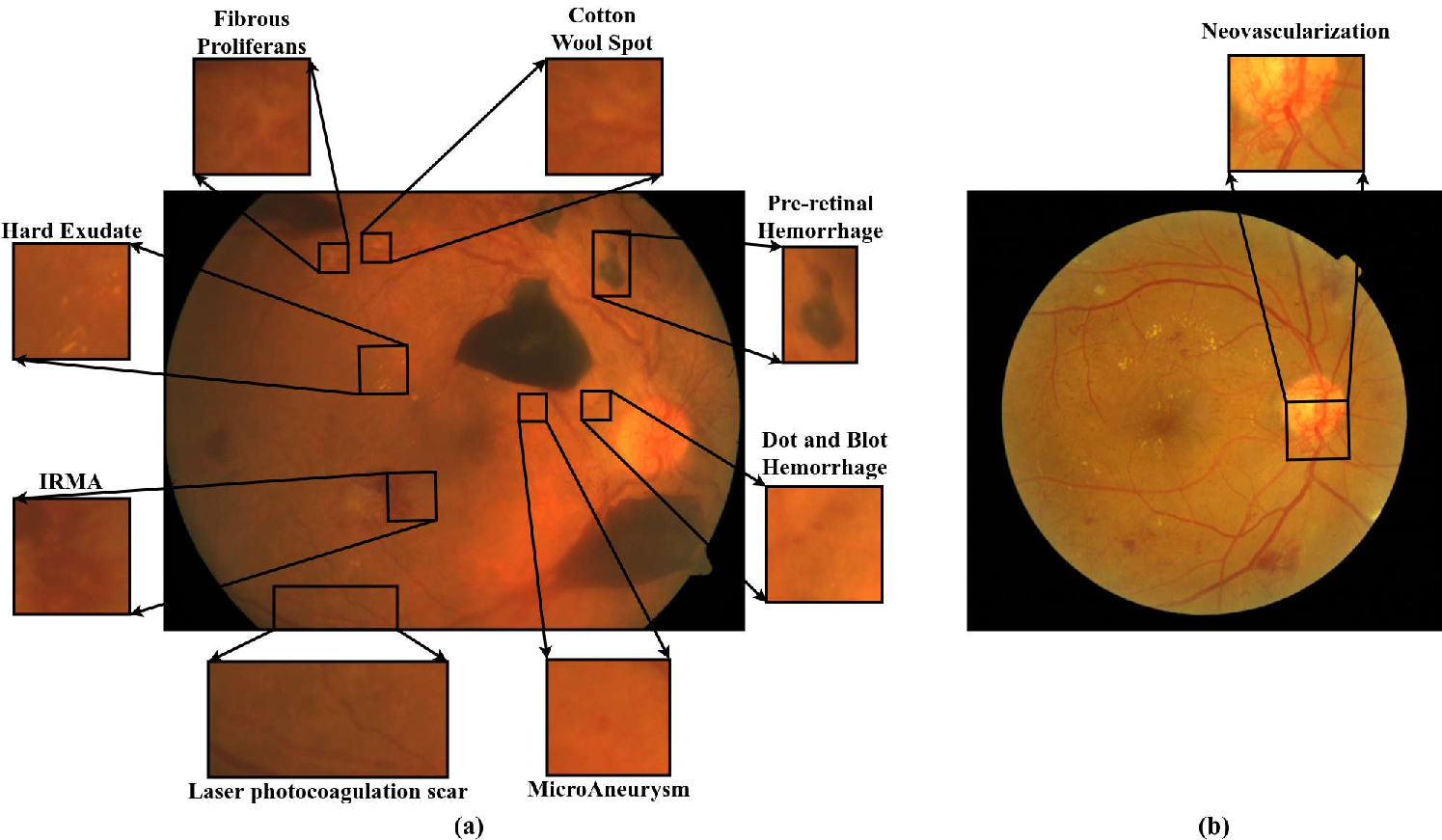

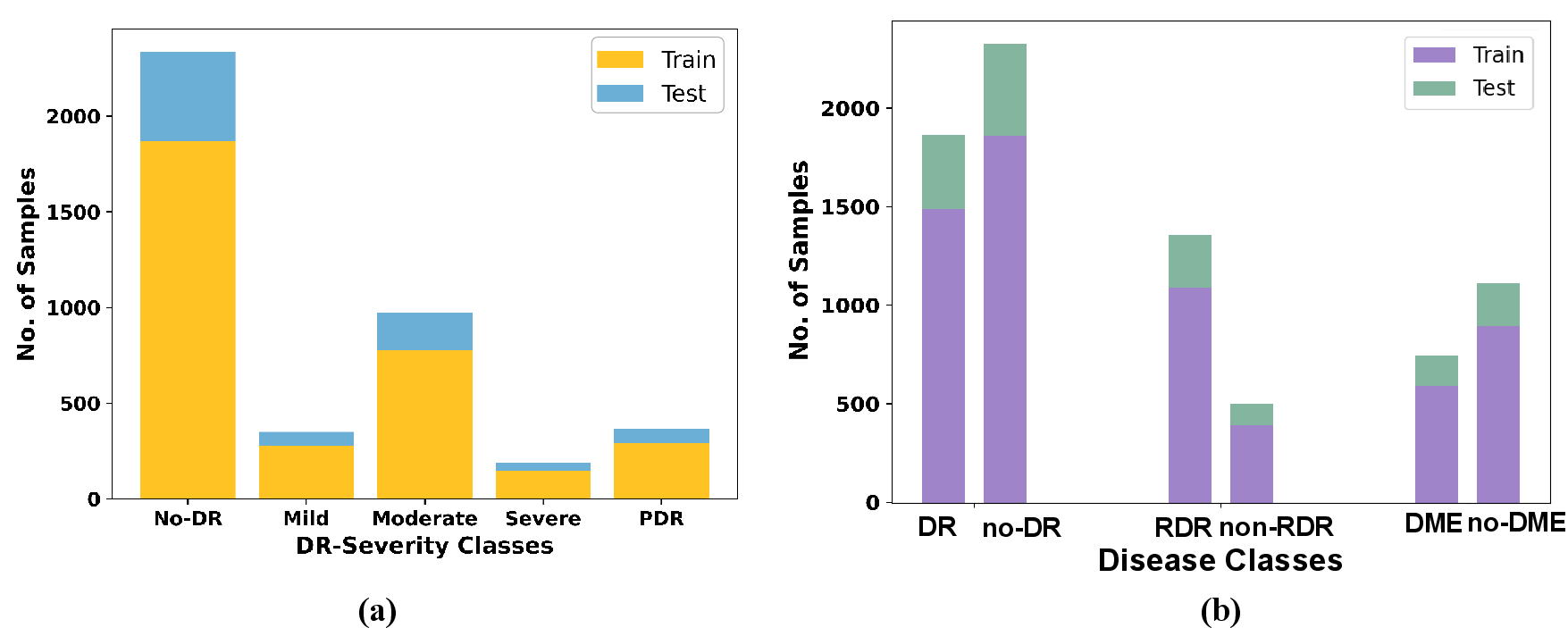

Diabetic Retinopathy (DR) is a leading cause of irreversible vision loss globally, and its rapidly rising prevalence among diabetic populations underscores the urgency for scalable screening solutions. The disease manifests through progressive microvascular damage, with hallmark lesions such as microaneurysms, hemorrhages, hard exudates, soft exudates, intraretinal microvascular abnormalities (IRMA), and neovascularization. Lesions range from subtle changes in mild Non-Proliferative DR (NPDR) to aggressive neovascularization in Proliferative DR (PDR) (Figure 1).

Figure 1: Sample fundus images depicting key DR-associated lesions including microaneurysms, hemorrhages, exudates, IRMA, and neovascularization.

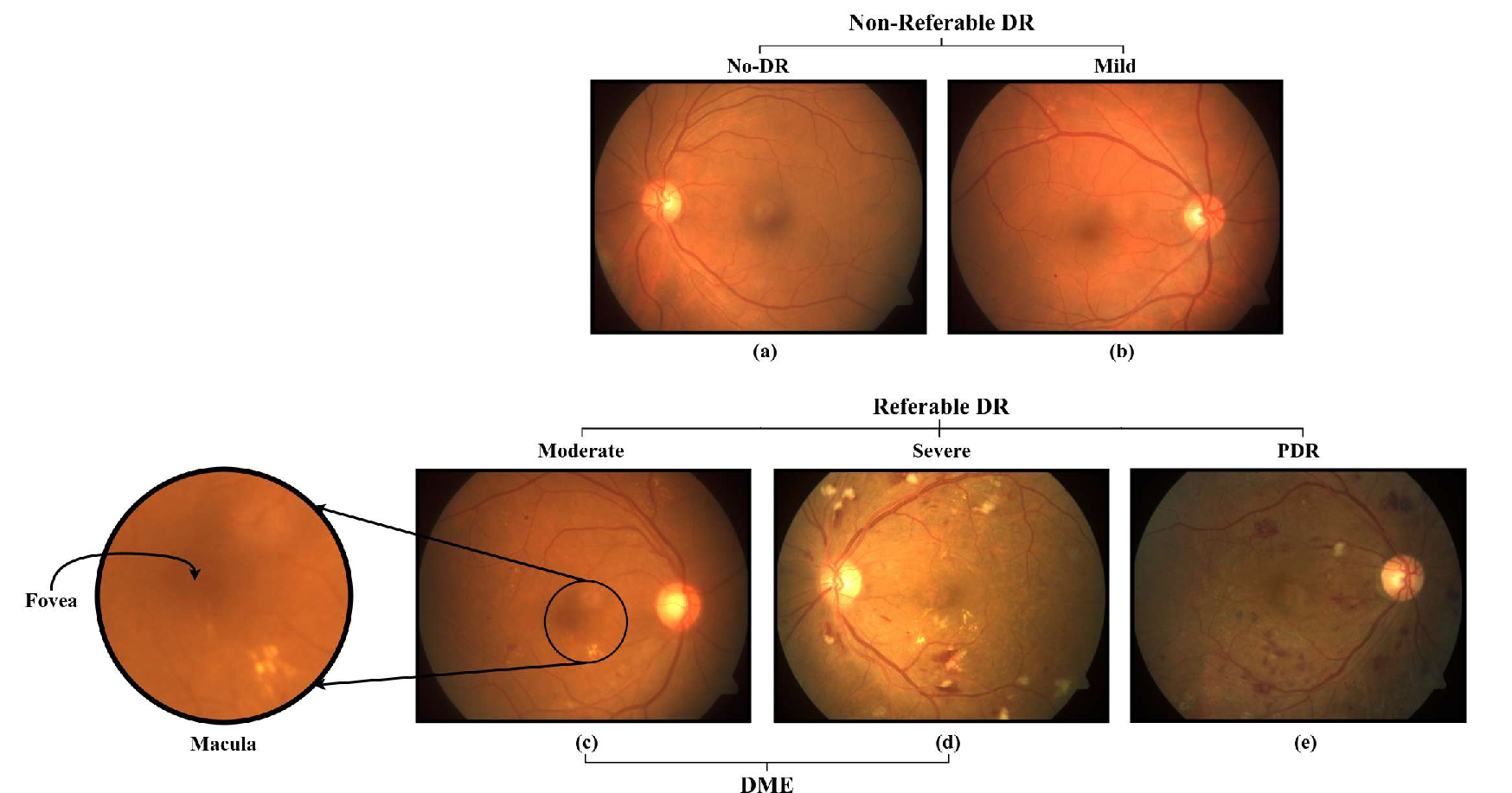

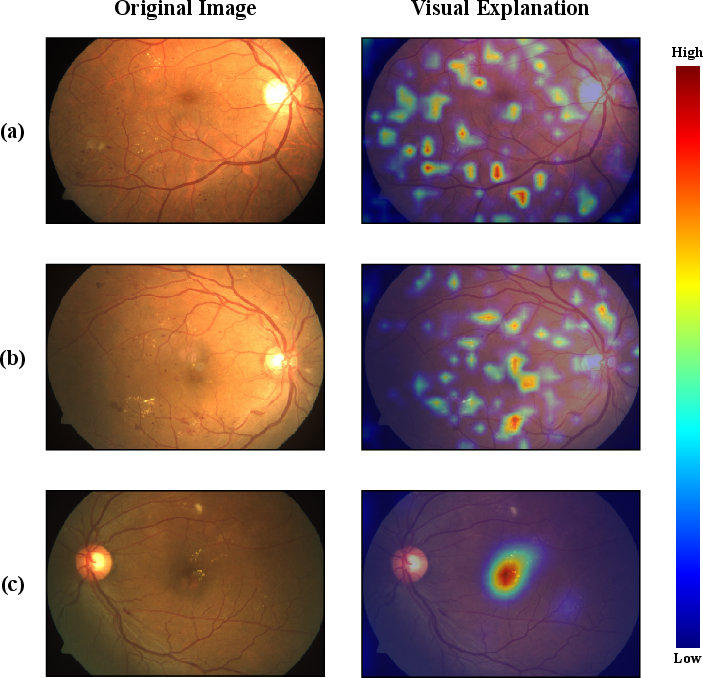

Severity grading is central to DR management, with distinctions between healthy, mild, moderate, severe, and proliferative cases (Figure 2). Early detection of diabetic macular edema (DME) and referable DR (RDR) is critical, as both are major causes of preventable blindness.

Figure 2: Fundus images illustrating the severity spectrum from healthy retina to various grades of DR.

Deep Learning Approaches for DR Screening

Fundus photography is the primary modality for DR screening due to its non-invasiveness, accessibility, and suitability for automation. Classical image processing techniques falter in handling the inconsistency and diversity of retinal imagery. Deep Learning (DL), especially Convolutional Neural Networks (CNNs) and Vision Transformers (ViTs), has demonstrated enhanced diagnostic accuracy and robustness.

CNN architectures such as VGG, ResNet, InceptionNet, EfficientNet, and DenseNet are widely used. VGG's hierarchical feature extraction favors small datasets and localized lesions, while ResNet's skip connections enable gradient propagation in deeper networks. InceptionNet's multi-branch design captures multi-scale features, which matters for lesion size variability, but it demands higher-resolution input. DenseNet encourages feature reuse, making it effective in data-scarce and lesion-diverse settings, though at a higher memory cost. EfficientNet optimizes the accuracy-efficiency trade-off through compound scaling of network depth, width, and input resolution.
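Compound scaling can be made concrete with a small calculation. The sketch below is illustrative only: the base coefficients are the values reported in the original EfficientNet paper, not figures from the reviewed study, and the function names are hypothetical.

```python
# Compound scaling sketch: one coefficient phi scales depth, width, and
# input resolution together. ALPHA, BETA, GAMMA are the base coefficients
# reported in the original EfficientNet paper (an outside assumption here).
ALPHA, BETA, GAMMA = 1.2, 1.1, 1.15  # depth, width, resolution bases


def compound_scale(phi):
    """Return (depth, width, resolution) multipliers for coefficient phi."""
    return ALPHA ** phi, BETA ** phi, GAMMA ** phi


def flops_factor(phi):
    """Approximate FLOPs growth: depth * width^2 * resolution^2."""
    depth, width, resolution = compound_scale(phi)
    return depth * width ** 2 * resolution ** 2


for phi in range(4):
    d, w, r = compound_scale(phi)
    print(f"phi={phi}: depth x{d:.2f}, width x{w:.2f}, "
          f"resolution x{r:.2f}, FLOPs x{flops_factor(phi):.2f}")
```

Because the product of ALPHA, BETA squared, and GAMMA squared is close to 2, each unit increase in phi roughly doubles the compute budget. Released EfficientNet variants use rounded settings, so this calculation should be read as the scaling principle rather than the exact configuration of any named model.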

ViTs model images as sequences of patches processed with self-attention. This captures global dependencies, but ViTs lack built-in spatial priors and therefore require extensive training data. Empirically, transformer-based approaches do not consistently outperform CNNs in DR classification tasks, especially on imbalanced and scarce datasets.
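To illustrate what treating an image as a sequence means, the following minimal sketch splits a 2-D image into flattened, non-overlapping patches. It assumes a single-channel image stored as nested lists; `patchify` and `attention_pairs` are hypothetical helper names, and a real ViT would additionally apply a learned projection and position embeddings.

```python
def patchify(image, patch):
    """Split a 2-D image (list of rows) into flattened square patches."""
    height, width = len(image), len(image[0])
    if height % patch or width % patch:
        raise ValueError("image sides must be divisible by the patch size")
    tokens = []
    for top in range(0, height, patch):
        for left in range(0, width, patch):
            # Flatten one patch row by row into a single token vector.
            tokens.append([image[r][c]
                           for r in range(top, top + patch)
                           for c in range(left, left + patch)])
    return tokens


def attention_pairs(num_tokens):
    """Number of token pairs scored by one full self-attention layer."""
    return num_tokens * num_tokens


toy_image = [[row * 4 + col for col in range(4)] for row in range(4)]
tokens = patchify(toy_image, patch=2)
print(len(tokens), "tokens of length", len(tokens[0]))
```

The quadratic growth in `attention_pairs` is one reason high-resolution fundus images are costly for transformers: halving the patch size quadruples the token count and multiplies the pairwise comparisons by sixteen.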

Automated screening tasks are grouped into:

- Classification: Assigning severity or binary labels (e.g., DR/No-DR, RDR/Non-RDR, DME/No-DME).

- Segmentation: Pixel-level delineation of pathological features, supporting model interpretability but offering limited direct clinical value.

- Detection: Identifying and localizing lesions (e.g., via bounding boxes), bridging the gap between coarse classification and fine-grained segmentation.

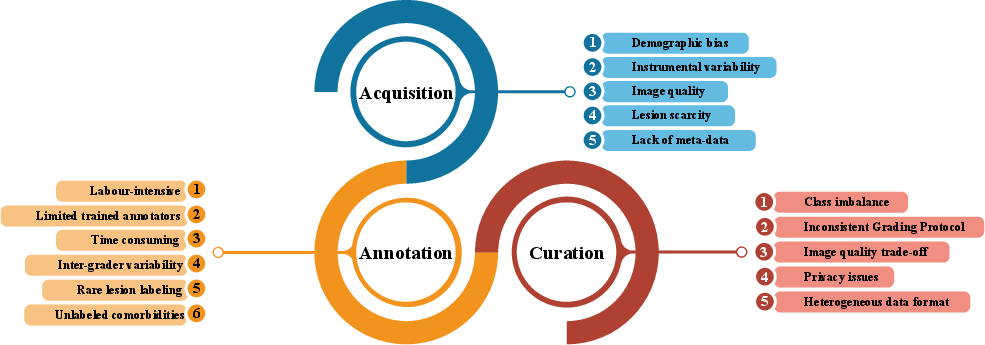

Challenges in DR Dataset Development

The quality, size, diversity, and annotation richness of datasets are pivotal for clinically reliable DL systems. Key challenges include class imbalance, annotation inconsistency, and demographic bias.

Evolution and Classification of DR Fundus Datasets

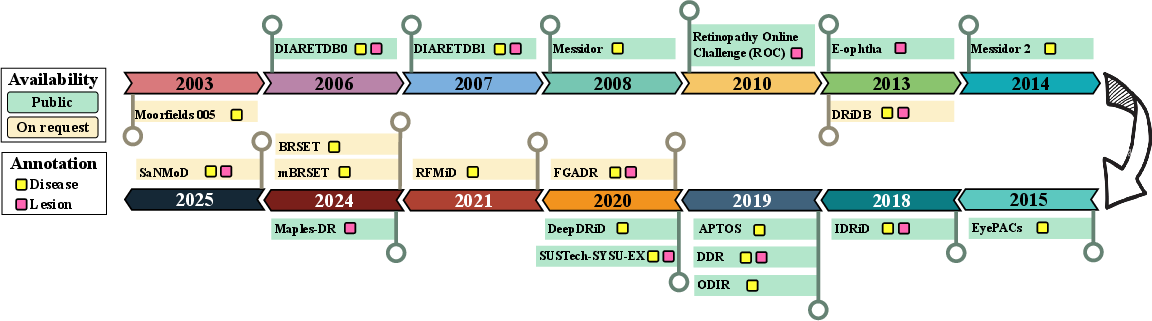

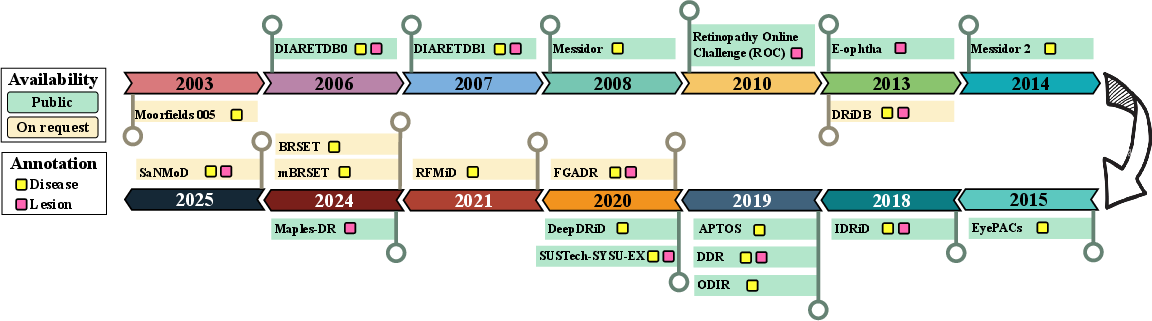

DR image repositories have evolved from small, lesion-centric datasets (2003–2014) to large-scale, clinically oriented collections (2015–2025). Earlier datasets (e.g., DIARETDB0/1, DRiDB, E-ophtha) focused on benchmark tasks with limited diversity and annotation. Recent datasets (e.g., EyePACS, APTOS, DDR, SaNMoD, BRSET) encompass tens of thousands of images, provide richer metadata, and support multi-task learning, including labels for other ocular diseases.

Figure 4: Chronological timeline highlighting the release, annotation type, and availability of major DR fundus image datasets.

Despite progress, most datasets are affected by class imbalance, limited lesion-level annotation, and demographic specificity. For segmentation and lesion localization, fine-grained annotation remains the exception and sample sizes are generally inadequate. The move to population-specific datasets (from India, China, USA, Brazil) addresses some biases, but these datasets still lack standardized protocols and longitudinal tracking.
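One common mitigation for class imbalance is to weight the training loss by inverse class frequency. The sketch below computes such weights with the widely used "balanced" formula, n_samples / (n_classes * class_count). The label counts are made up for illustration and do not reflect any dataset discussed here; the paper does not state which, if any, reweighting scheme was used.

```python
from collections import Counter


def balanced_class_weights(labels):
    """Inverse-frequency weights: n_samples / (n_classes * class_count)."""
    counts = Counter(labels)
    n_samples, n_classes = len(labels), len(counts)
    return {cls: n_samples / (n_classes * count)
            for cls, count in sorted(counts.items())}


# Hypothetical grade distribution (0 = healthy ... 4 = proliferative).
toy_labels = [0] * 600 + [1] * 80 + [2] * 220 + [3] * 60 + [4] * 40
for grade, weight in balanced_class_weights(toy_labels).items():
    print(f"grade {grade}: weight {weight:.2f}")
```

Rare grades receive proportionally larger weights, so errors on them contribute more to the loss. Reweighting does not add information about minority classes, which is why the dataset-level gaps described above still matter.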

SaNMoD: A Case Study in Data-Centric Benchmarking

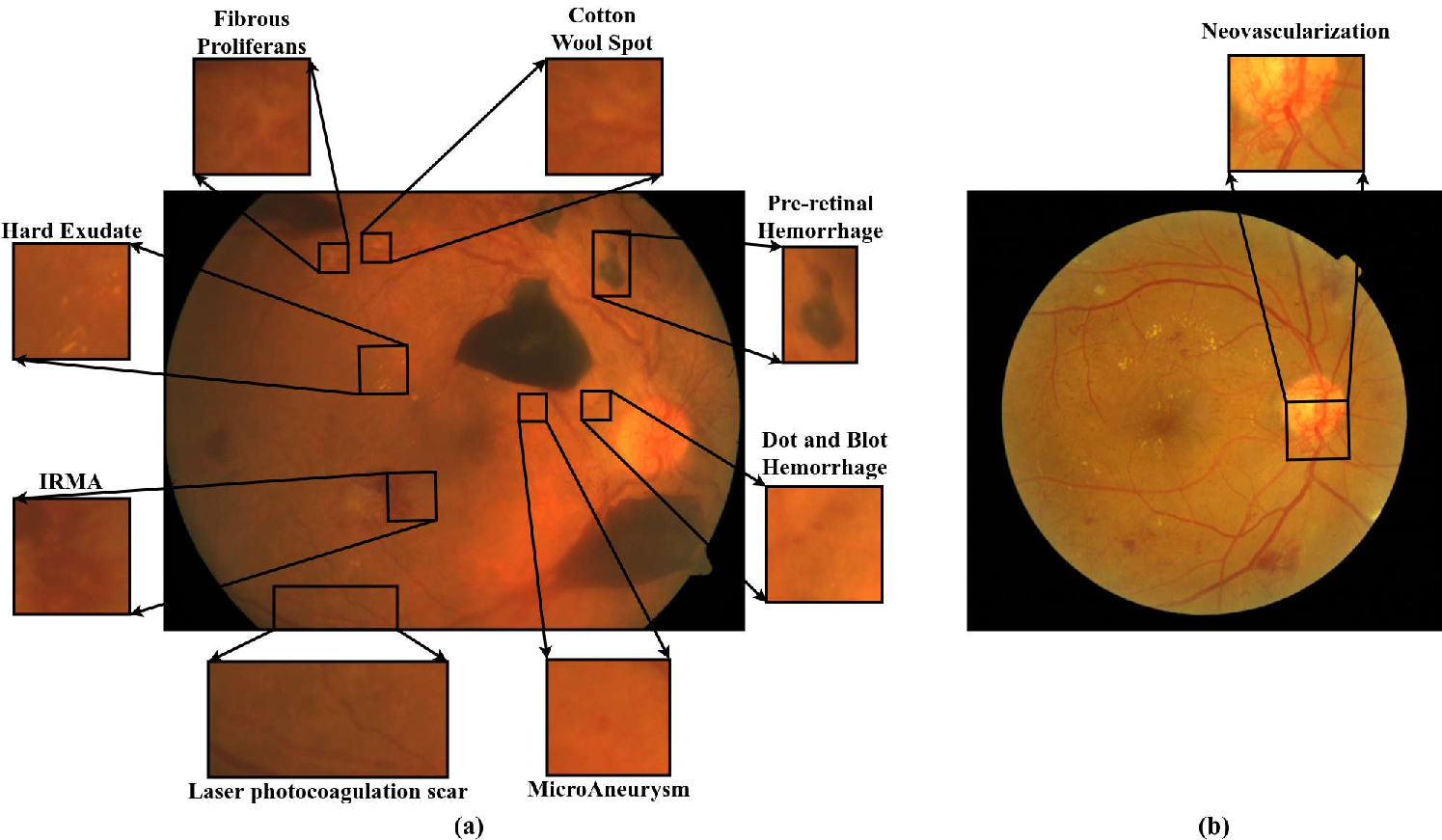

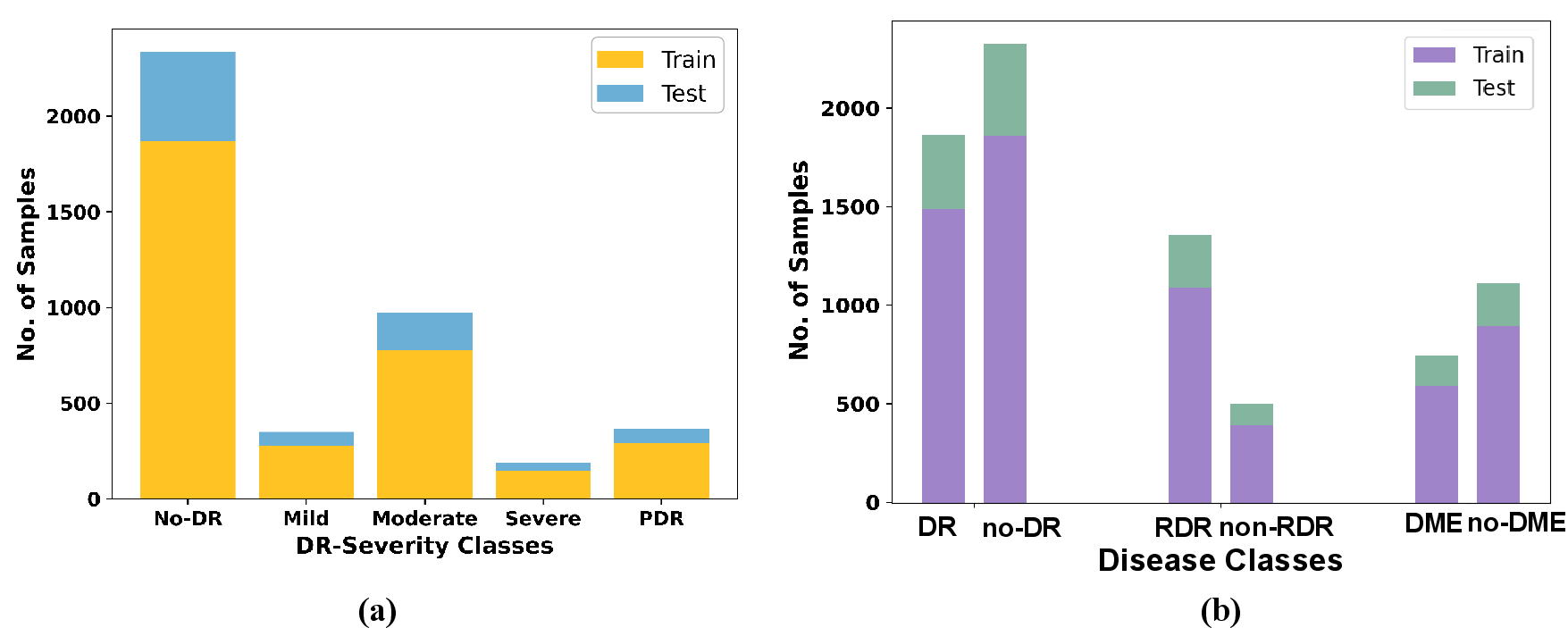

The SaNMoD dataset stands out for its scale, annotation comprehensiveness (DR stages, DME, RDR, 11 lesion types), and high-resolution samples curated by eight ophthalmologists. Its significant class imbalance mirrors real-world screening populations, with most samples being normal or moderately affected.

Figure 5: Distribution of DR severity classes and sample categories in the SaNMoD dataset.

Performance benchmarking on SaNMoD employed CNN and transformer models, with tasks aligned to clinical workflows:

- Binary DR classification: EfficientNetB2 and other CNNs achieved F1-scores close to 0.90 for both classes; ViT notably underperformed.

- RDR classification: EfficientNetB2 and InceptionNetV3 led in sensitivity and specificity; ViT showed unstable boundary detection.

- DME classification: VGG16 excelled, reaffirming the importance of strong local feature bias; ViT was less effective.

- Multi-class grading: ResNet50 yielded the most balanced performance; class overlap and minority representation challenged all architectures.
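The metrics reported in these benchmarks (per-class F1, sensitivity, specificity) all derive from confusion-matrix counts. The following sketch computes them for a binary task; the function name and the toy labels are illustrative and are not results from the paper.

```python
def binary_metrics(y_true, y_pred, positive=1):
    """Sensitivity, specificity, and F1, treating `positive` as the target."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    tn = sum(1 for t, p in pairs if t != positive and p != positive)
    return {
        # Fraction of true positives that were detected.
        "sensitivity": tp / (tp + fn) if tp + fn else 0.0,
        # Fraction of true negatives that were correctly cleared.
        "specificity": tn / (tn + fp) if tn + fp else 0.0,
        # Harmonic mean of precision and recall, in count form.
        "f1": 2 * tp / (2 * tp + fp + fn) if tp + fp + fn else 0.0,
    }


y_true = [1, 1, 1, 0, 0, 0, 0, 1]  # toy ground truth
y_pred = [1, 1, 0, 0, 0, 1, 0, 1]  # toy predictions
print(binary_metrics(y_true, y_pred, positive=1))
print(binary_metrics(y_true, y_pred, positive=0))
```

Calling the function once per class, as in the last two lines, gives the "F1 for both classes" view used for the binary DR task. On an imbalanced set, the minority-class F1 is usually the more informative number.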

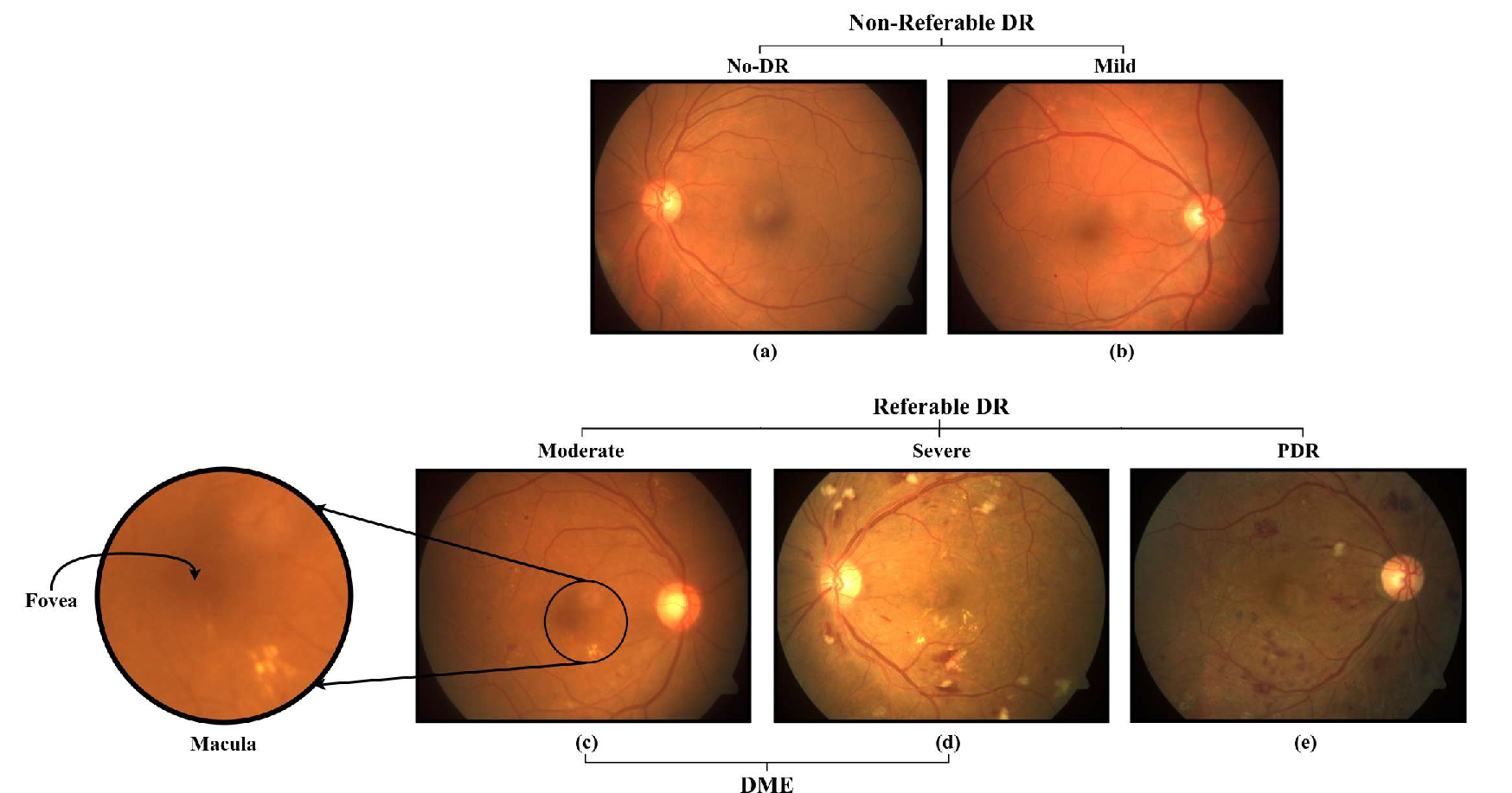

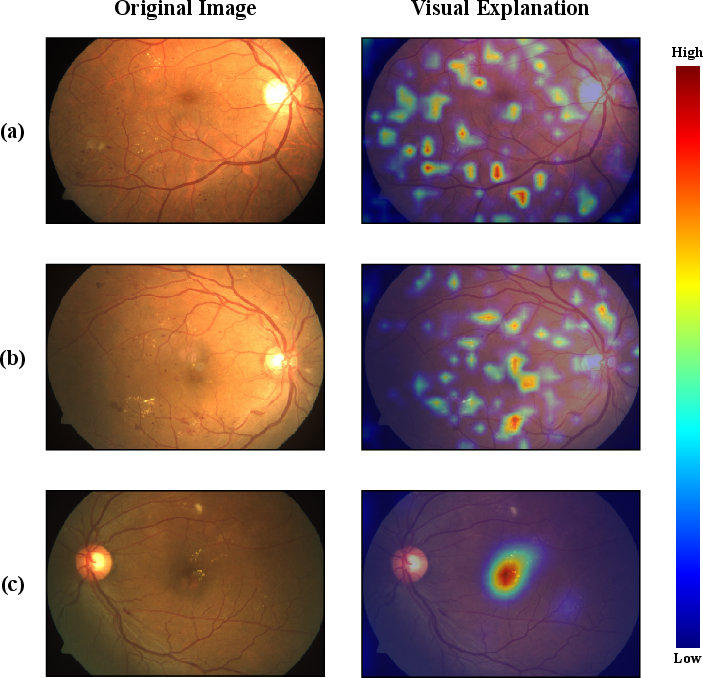

Grad-CAM visualization corroborated lesion-centric activation across tasks, with high attention over clinical markers such as microaneurysms and exudate clusters.

Figure 6: Grad-CAM activations demonstrating lesion-specific model focus during DR, RDR, and DME classification.
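The arithmetic behind a Grad-CAM map is compact: each feature-map channel is weighted by the spatial mean of its gradient with respect to the class score, the weighted channels are summed, and negative values are clipped. The sketch below reproduces only that arithmetic on toy nested lists. Obtaining the activations and gradients from a trained network requires framework-specific hooks, which are outside this example, and the function name is hypothetical.

```python
def grad_cam(activations, gradients):
    """Combine K feature maps (each H x W) into one normalized heatmap.

    activations[k][i][j]: feature map k at position (i, j)
    gradients[k][i][j]:   d(class score) / d(activations[k][i][j])
    """
    height, width = len(activations[0]), len(activations[0][0])
    heatmap = [[0.0] * width for _ in range(height)]
    for fmap, grad in zip(activations, gradients):
        # Channel weight: global average of the gradient map.
        weight = sum(sum(row) for row in grad) / (height * width)
        for i in range(height):
            for j in range(width):
                heatmap[i][j] += weight * fmap[i][j]
    # ReLU keeps only regions that increase the class score.
    heatmap = [[max(0.0, v) for v in row] for row in heatmap]
    peak = max(max(row) for row in heatmap)
    if peak > 0:
        heatmap = [[v / peak for v in row] for row in heatmap]
    return heatmap


toy_acts = [[[1.0, 0.0], [0.0, 0.0]], [[0.0, 0.0], [0.0, 2.0]]]
toy_grads = [[[1.0, 1.0], [1.0, 1.0]], [[-1.0, -1.0], [-1.0, -1.0]]]
print(grad_cam(toy_acts, toy_grads))
```

In the toy input, the second channel has a negative gradient, so its strong activation is suppressed by the ReLU and only the region supported by the first channel remains highlighted.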

Key empirical insights:

- Dataset characteristics drive model selection: Lesion-centric distributions favor CNNs with spatial inductive biases.

- Class imbalance and sparse representation of subtle classes restrict transformer efficacy.

- Multi-scale architectures like InceptionNetV3 stabilize performance amidst diverse lesion sizes.

- High-resolution and fine-grained annotation amplify interpretability and robustness.

- Richly annotated datasets become foundational for clinically actionable and explainable AI systems.

Implications and Future Directions

This review consolidates the impact of dataset curation on DL performance for DR screening. Persistent gaps in annotation quality, demographic diversity, and longitudinal data hinder clinical translation. Empirical benchmarking demonstrates CNN superiority over ViTs in data-constrained, imbalanced settings. The lack of standardized lesion-level annotation limits segmentation and fine-grained detection tasks. Integrating richer metadata and multi-disease labels enhances prognostic modeling.

Future developments should prioritize:

- Standardized annotation frameworks across centers.

- Expansion of longitudinal imaging for disease progression modeling.

- Multi-modal and multi-disease labeling to enable robust, generalizable models.

- Lightweight, resource-efficient architectures for deployment in low-resource settings.

Enhanced dataset quality and diversity remain indispensable for explainable, clinically reliable DL solutions in DR management, with implications for global blindness reduction.

Conclusion

The paper underscores that advances in automated DR screening are contingent upon high-quality, standardized, and richly annotated fundus datasets. Deep learning models, especially CNNs, demonstrate strong performance when trained on such data, but persistent gaps limit clinical translation and generalizability. The evolution of dataset curation practices, exemplified by SaNMoD, reveals the centrality of data-centric strategies for robust, interpretable, and scalable AI systems for DR management.