- The paper introduces a multi-stage CARE framework that separates patient-specific inference from proprietary guidance to tackle evidence discordance while preserving privacy.

- It introduces the MIMIC-DOS benchmark to evaluate performance on discordant cases and demonstrates balanced-accuracy improvements over single-pass, voting, and debate baselines.

- The approach shows that explicit state and transition reasoning is essential for robust, privacy-preserving clinical decision support in high-noise ICU environments.

CARE: Privacy-Compliant Agentic Reasoning under Evidence Discordance

Introduction

The paper "CARE: Privacy-Compliant Agentic Reasoning with Evidence Discordance" (2604.01113) addresses a critical deficiency in current LLM-driven clinical decision-support systems: handling conflicting subjective and objective evidence under strict privacy constraints. Existing approaches, which typically employ either a single-pass proprietary model or a local LLM, are fragile under evidence discordance, for example when patient-reported symptoms contradict physiological measurements. This work introduces the MIMIC-DOS benchmark to isolate such discordant cases and proposes CARE, an agentic, multi-stage reasoning architecture that strictly separates patient-specific inference from global, value-independent guidance.

Problem Setting and Limitations of Existing Approaches

Standard LLM clinical pipelines perform well when evidence is congruent, but real-world ICU scenarios often contain incomplete, noisy, or contradictory data. Conventional ML models (e.g., XGBoost), though competitive on classical benchmarks, do not address evidence discordance: they are black boxes and cannot reason about conflicting signals. This matters most in phenomena such as occult hypoperfusion, where apparent patient stability masks latent risk. Likewise, recent LLM-centered paradigms (single-pass inference, majority voting, or multi-agent debate) are unstable, collapse to one-class predictions, and lack mechanisms for structured reasoning about states and state transitions when confronted with conflicting inputs.

Privacy constraints exacerbate this challenge. The highest-performing models are usually closed-source LLMs, which cannot be given raw patient data, whereas open-source LLMs, though deployable on-premise, are less robust at complex clinical reasoning. Sending patient data directly to a remote proprietary LLM is therefore infeasible, and naive application of local models yields ineffective or degenerate decisions.

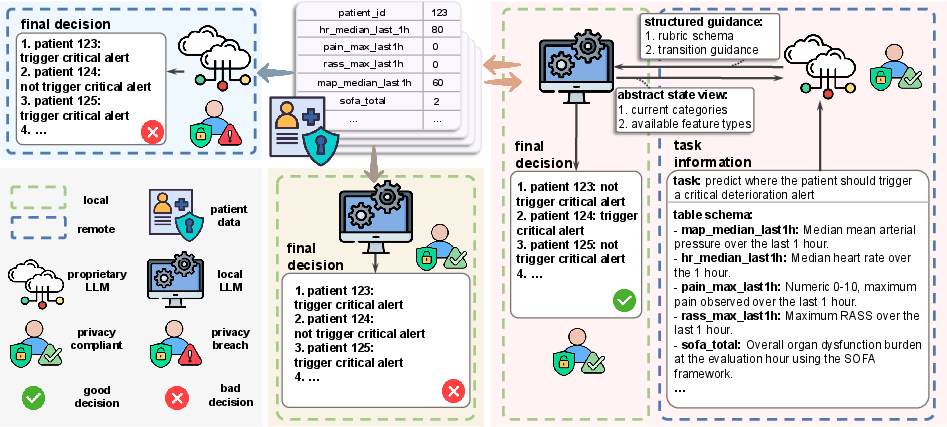

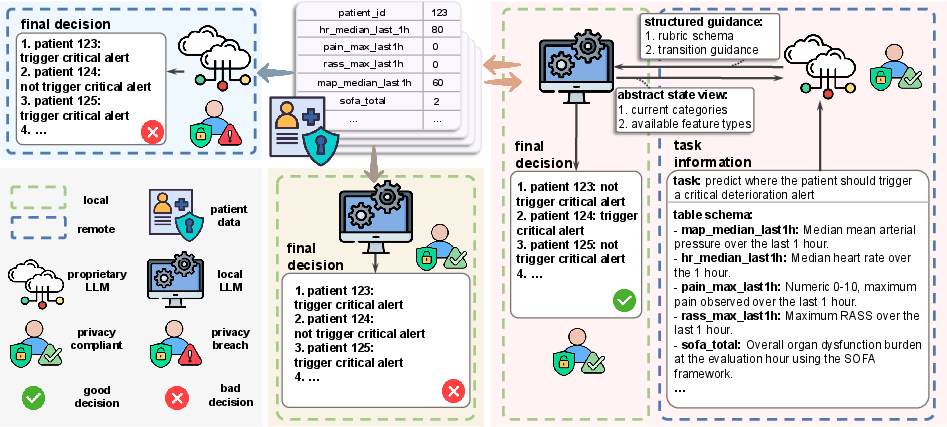

Figure 1: Comparison of decision-making paradigms. Proprietary single-pass inference (left) risks a privacy breach, local single-pass inference (middle) underperforms, and CARE (right) enables privacy-preserving, performant decision making through separation of concerns.

MIMIC-DOS: A Benchmark for Discordant Evidence

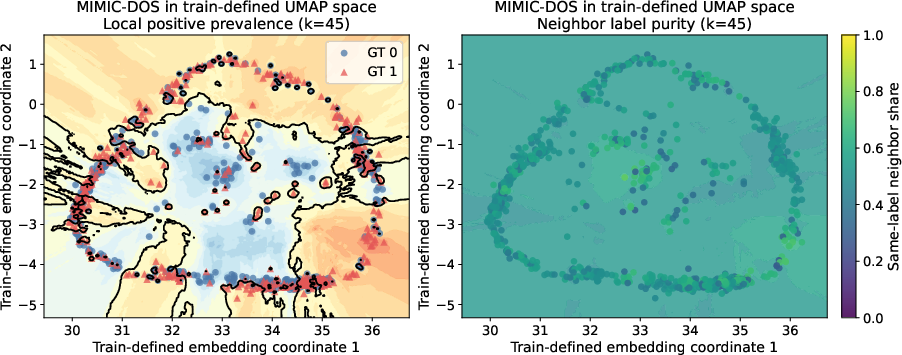

To evaluate model performance on evidence discordance, the authors introduce MIMIC-DOS, a dataset derived from MIMIC-IV and constructed exclusively from ICU stay-horizon intervals in which bedside subjective measures (pain score and RASS, the Richmond Agitation-Sedation Scale) are reassuring but objective hemodynamics (mean arterial pressure, MAP) indicate instability. The prediction task is binary: will the patient's SOFA (Sequential Organ Failure Assessment) score worsen by at least two points over the subsequent 12 hours? The evaluation set is class-balanced and designed to eliminate agreement-driven shortcuts, so it isolates the model’s capacity to reconcile discordant cues.
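The cohort filter and label described above can be sketched in a few lines of Python. This is a minimal sketch, not the authors' extraction code: the cut-offs for a "reassuring" pain score and RASS, the 65 mmHg MAP threshold, the choice to compare against the worst SOFA score within the 12-hour horizon, and balancing by downsampling are all assumptions, and the `Window` record and helper names are hypothetical.

```python
import random
from dataclasses import dataclass

# Hypothetical cut-offs: the exact MIMIC-DOS thresholds are not given in the text.
PAIN_REASSURING_MAX = 3        # pain score on a 0-10 scale
RASS_REASSURING = (-1, 1)      # drowsy-to-restless band on RASS (-5..+4)
MAP_UNSTABLE_BELOW = 65.0      # mmHg; a common hypotension threshold


@dataclass(frozen=True)
class Window:
    """One ICU stay-horizon interval (hypothetical record layout)."""
    pain: int
    rass: int
    map_mmhg: float
    sofa_now: int
    sofa_worst_next_12h: int   # assumption: worst SOFA within the 12 h horizon


def is_discordant(w: Window) -> bool:
    """Reassuring subjective cues but unstable objective hemodynamics."""
    subjective_ok = (w.pain <= PAIN_REASSURING_MAX
                     and RASS_REASSURING[0] <= w.rass <= RASS_REASSURING[1])
    objective_bad = w.map_mmhg < MAP_UNSTABLE_BELOW
    return subjective_ok and objective_bad


def label(w: Window) -> int:
    """1 if SOFA worsens by at least 2 points over the next 12 hours, else 0."""
    return int(w.sofa_worst_next_12h - w.sofa_now >= 2)


def build_balanced_set(windows: list[Window], seed: int = 0) -> list[Window]:
    """Keep only discordant windows, then downsample to equal class sizes."""
    rng = random.Random(seed)
    cohort = [w for w in windows if is_discordant(w)]
    pos = [w for w in cohort if label(w) == 1]
    neg = [w for w in cohort if label(w) == 0]
    n = min(len(pos), len(neg))
    return rng.sample(pos, n) + rng.sample(neg, n)
```

Filtering on discordance before balancing means every evaluated case carries the same surface signal (calm patient, low MAP), so agreement between cues cannot be used as a shortcut.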

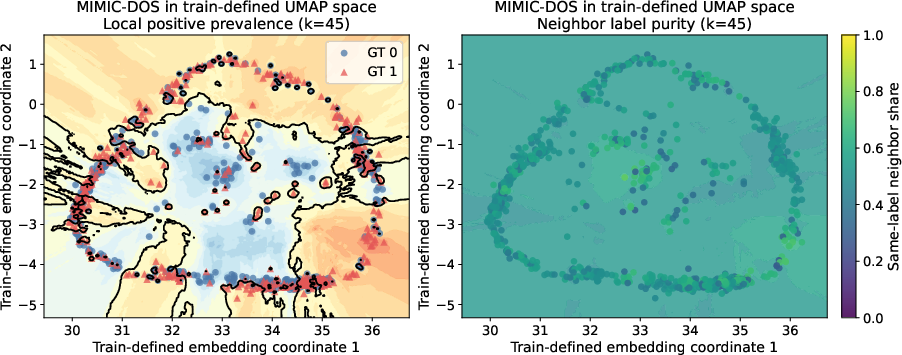

Figure 2: UMAP embedding of MIMIC-DOS reveals substantial overlap between positive and negative classes, emphasizing the intrinsic difficulty of separating cases under sign-symptom discordance.

CARE Framework: Agentic, Staged, and Privacy-Respecting Reasoning

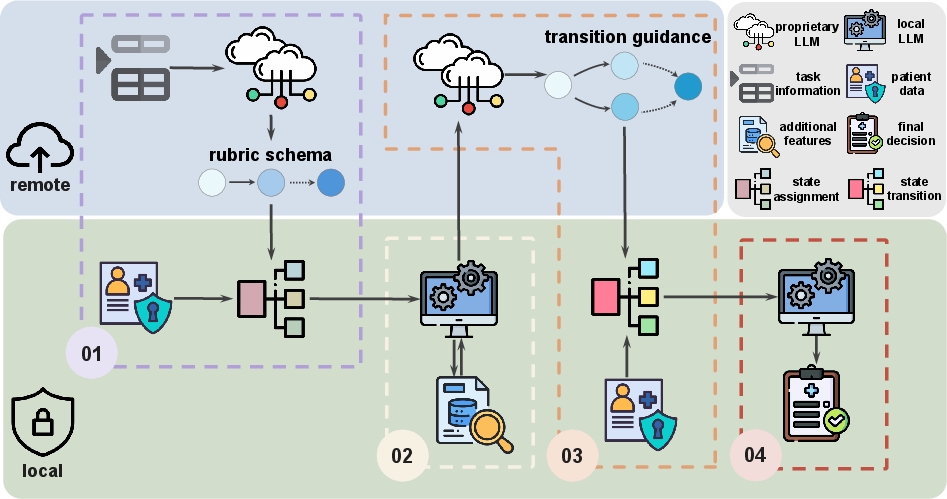

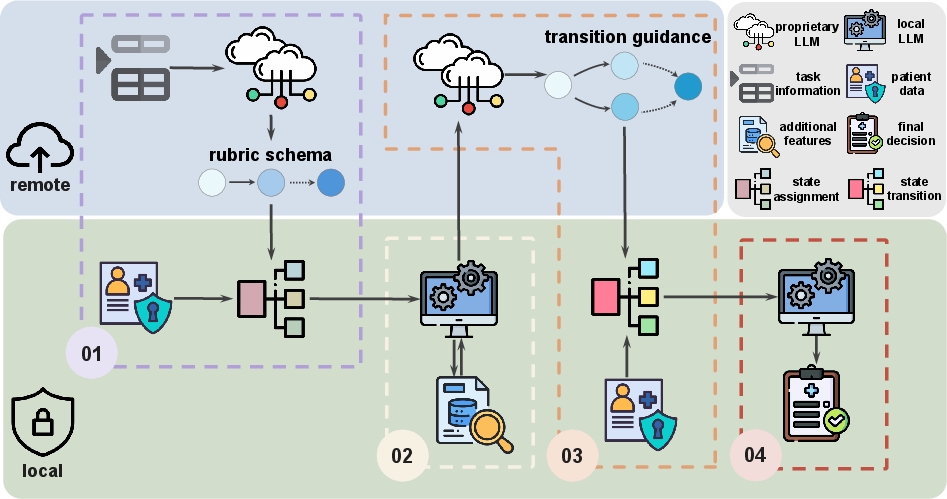

CARE (privacy-Compliant Agentic REasoning) decomposes clinical inference into four stages:

- Rubric generation and initial state assignment: The proprietary LLM generates a task-level rubric schema that defines intermediate patient states and their evidentiary requirements, without seeing any individual patient's data. The local LLM then maps each patient's data onto this rubric to assign an initial state.

- Category-aware data acquisition: The local LLM inspects current state assignments and, leveraging rubric-derived requirements, retrieves only task-relevant additional features in a state-conditioned fashion.

- Transition reasoning: The proprietary LLM, given only abstract state/context and available feature types, produces structured advice regarding plausible state transitions. The local model then concretely updates states and reasoning traces.

- Final decision-making: The local LLM synthesizes the accumulated evidence trace to produce a task-level decision.

Figure 3: The CARE framework interleaves abstract, global guidance from the proprietary LLM (which never sees patient data) with patient-specific data processing by the local LLM, enabling robust, dynamic state reasoning while keeping data local.
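Stages 1 and 2 can be pictured as a small data structure plus a state-conditioned lookup. The sketch below is illustrative only: the state names, the feature types in `RUBRIC`, and the rule-based `assign_initial_state` (standing in for the local LLM) are hypothetical, not the rubric GPT-5 produced in the paper. What it shows is that the rubric carries only feature types, and the local side decides which concrete values to fetch given the current state.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StateSpec:
    """One rubric state: a name plus the feature types (never values) that evidence it."""
    name: str
    required_features: tuple[str, ...]


# Hypothetical rubric of the kind the proprietary LLM might emit in Stage 1.
# It contains no patient values, only state names and feature types.
RUBRIC = {
    "compensated": StateSpec("compensated", ("lactate", "urine_output")),
    "occult_hypoperfusion": StateSpec(
        "occult_hypoperfusion", ("lactate", "vasopressor_dose", "creatinine")),
    "overt_shock": StateSpec("overt_shock", ("vasopressor_dose",)),
}


def assign_initial_state(record: dict[str, float]) -> str:
    """Stage 1, local side: map one patient's values onto a rubric state.
    A rule-based stand-in for the local LLM (hypothetical rules)."""
    if record["map"] >= 65:
        return "compensated"
    if record.get("pain", 10) <= 3:
        return "occult_hypoperfusion"   # low MAP but reassuring symptoms
    return "overt_shock"


def acquire_features(state: str, record: dict[str, float],
                     ehr: dict[str, float]) -> dict[str, float]:
    """Stage 2, local side: fetch only the features the rubric lists for the
    current state that are not already in the record."""
    wanted = [f for f in RUBRIC[state].required_features if f not in record]
    return {f: ehr[f] for f in wanted if f in ehr}
```

For example, a record with `map` 58.0 and `pain` 1 lands in `occult_hypoperfusion`, so `acquire_features` pulls lactate, vasopressor dose, and creatinine from the local EHR while leaving urine output untouched.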

Notably, raw patient values never leave the local environment, achieving privacy compliance. Rubric schemas can be either human-authored or, as in this work, generated by a remote LLM using only descriptive (not value-containing) metadata.
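The privacy boundary and Stage 3 can be sketched as follows, assuming a dictionary-shaped request and a rule-shaped advice format (both hypothetical; the text only says the proprietary LLM receives abstract state, context, and available feature types, and returns structured transition advice). The guard rejects any outbound request carrying a numeric value, and the advice is evaluated against real values only on the local side.

```python
import operator

# Comparators the hypothetical advice format may use.
OPS = {">=": operator.ge, ">": operator.gt, "<=": operator.le, "<": operator.lt}


def contains_numeric_values(obj) -> bool:
    """True if any int/float leaf appears anywhere in a nested payload."""
    if isinstance(obj, bool):
        return False
    if isinstance(obj, (int, float)):
        return True
    if isinstance(obj, dict):
        return any(contains_numeric_values(v) for v in obj.values())
    if isinstance(obj, (list, tuple)):
        return any(contains_numeric_values(v) for v in obj)
    return False


def build_remote_request(task: str, state: str, feature_types: list[str]) -> dict:
    """Stage 3 request: only abstract context may cross the privacy boundary."""
    request = {"task": task, "current_state": state,
               "available_feature_types": sorted(feature_types)}
    if contains_numeric_values(request):
        raise ValueError("numeric value in outbound request")
    return request


def apply_advice(state: str, advice: list[dict],
                 record: dict[str, float]) -> tuple[str, str]:
    """Stage 3, local side: evaluate the remote model's candidate transitions
    against real patient values and return (new_state, reasoning_trace)."""
    for rule in advice:
        feature, op, threshold = rule["if"]
        if (rule["from"] == state and feature in record
                and OPS[op](record[feature], threshold)):
            return rule["to"], f"{state} -> {rule['to']}: {feature} {op} {threshold}"
    return state, f"{state} unchanged: no advised transition fired"
```

The numeric-leaf check is deliberately crude: it would not catch a value embedded inside a string, so a real deployment would need a stricter allowlist of what may cross the boundary.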

Empirical Evaluation and Interpretation

CARE is benchmarked against four competitive workflows: single-pass LLM inference, majority voting of parallel LLMs, round-synchronous multi-agent debate, and confidence-aware sequential debate. Local LLMs used include autoregressive (GPT-OSS, Qwen) and diffusion-based architectures (LLaDA); GPT-5 serves as the proprietary LLM.
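Of the baselines, majority voting is the simplest to state. A minimal sketch, assuming each parallel LLM run has already been reduced to a binary label and that ties go to a configurable default (a hypothetical policy; the text does not say how ties are broken):

```python
from collections import Counter


def majority_vote(labels: list[int], tie_break: int = 1) -> int:
    """Combine binary labels from parallel LLM runs by simple majority.
    `tie_break` is a hypothetical policy for even splits."""
    counts = Counter(labels)
    if counts[1] == counts[0]:
        return tie_break
    return 1 if counts[1] > counts[0] else 0
```

Because the vote only aggregates labels, it cannot correct a bias that every run shares: if all runs lean toward the positive class, so does the vote.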

The core finding is that CARE (GPT-OSS local, GPT-5 proprietary) is the only system with both a true-positive rate (TPR) and a true-negative rate (TNR) above 0.5 on MIMIC-DOS, reaching balanced accuracy (BA) = 0.546, G-mean = 0.5455, and Matthews correlation coefficient (MCC) = 0.0921. All baselines, by contrast, collapse to unbalanced predictions (high recall with low specificity, or vice versa) and show severe label bias in single-pass zero-shot settings. Multi-agent debate and voting redistribute rather than resolve these biases, and they incur significant token overhead without a tangible improvement in balanced accuracy.
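All of the reported metrics follow from the binary confusion matrix. A minimal sketch in plain Python (the function names are hypothetical, and MCC is set to 0 when its denominator vanishes, a common convention). A predictor that always outputs the positive class on a balanced set scores BA = 0.5 but G-mean = 0 and MCC = 0, which is why the TPR > 0.5 and TNR > 0.5 criterion is informative.

```python
import math


def binary_metrics(y_true: list[int], y_pred: list[int]) -> dict[str, float]:
    """Confusion-matrix metrics for a binary task (1 = SOFA worsens)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    tpr = tp / (tp + fn) if tp + fn else 0.0   # sensitivity / recall
    tnr = tn / (tn + fp) if tn + fp else 0.0   # specificity
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    mcc = (tp * tn - fp * fn) / denom if denom else 0.0  # 0 when undefined
    return {
        "TPR": tpr,
        "TNR": tnr,
        "BA": (tpr + tnr) / 2,           # balanced accuracy
        "G-mean": math.sqrt(tpr * tnr),  # geometric mean of TPR and TNR
        "MCC": mcc,
    }


def escapes_collapse(m: dict[str, float]) -> bool:
    """The criterion highlighted in the text: both TPR and TNR above 0.5."""
    return m["TPR"] > 0.5 and m["TNR"] > 0.5


# A one-class predictor on a balanced set: the collapse described above.
collapsed = binary_metrics([1] * 50 + [0] * 50, [1] * 100)
# collapsed["BA"] == 0.5, collapsed["G-mean"] == 0.0, collapsed["MCC"] == 0.0
```

On a class-balanced set, MCC = (TPR + TNR - 1) / sqrt(1 - (TPR - TNR)^2), which is at least 2·BA - 1 whenever BA > 0.5. The reported BA = 0.546 therefore implies MCC of at least 0.092, consistent with the reported 0.0921.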

Ablations show that removing Stage 1 (rubric generation) or Stage 3 (transition reasoning) consistently degrades performance, confirming the need for explicit state modelling and transition reasoning. Gains are largest with a stable local LLM prior (GPT-OSS) and smaller with models prone to intrinsic bias (Qwen, LLaDA). The effect holds even though no system, including standard random-forest (RF) classifiers, attains high discrimination on MIMIC-DOS, owing to the dataset's intrinsic feature overlap.

Practical and Theoretical Implications

CARE’s abstraction and workflow modularization establish a paradigm for privacy-preserving agentic systems in high-stakes domains. Clinically, this enables LLM-driven support for scenarios characterized by evidence discordance while avoiding the privacy breaches and brittle outputs common to end-to-end single-model designs. The approach can generalize to decision support in other regulated domains where the “data observer” (local LLM) and the “reasoning expert” (closed-source model) must be systematically separated.

Theoretically, the work motivates further investigation into staged reasoning over intermediate states and transitions under configuration-level constraints (privacy, access, or regulatory), going beyond black-box function approximation. The explicit interface between proprietary models, which generate schemas or advise on transitions, and local models, which carry out the patient-level execution, may serve as a template for constrained agentic workflows.

Future Directions

The current study limits its analysis to a single discordance subtype and a class-balanced diagnostic benchmark. Extensions include generalization to multiple evidence-conflict regimes, cross-task transferability of rubric and transition logic, dynamic schema adaptation, and formal privacy guarantees for the higher-level metadata that is exposed. Scaling CARE to broader clinical and cross-domain datasets is the natural next step.

Conclusion

CARE establishes a multi-stage framework for privacy-compliant clinical reasoning under evidence discordance, combining abstract, value-free guidance from high-capability proprietary LLMs with local, data-protected inference. Empirically, it outperforms competitive agentic and single-pass LLM paradigms on MIMIC-DOS and is the only method that escapes one-sided prediction collapse. This supports the architectural principle that explicit interface stratification (rubric generation, category-aware acquisition, structured transition reasoning) is essential for robust, privacy-aligned deployment of LLMs in complex, high-noise real-world decision support.