Multimodal Analysis of State-Funded News Coverage of the Israel-Hamas War on YouTube Shorts

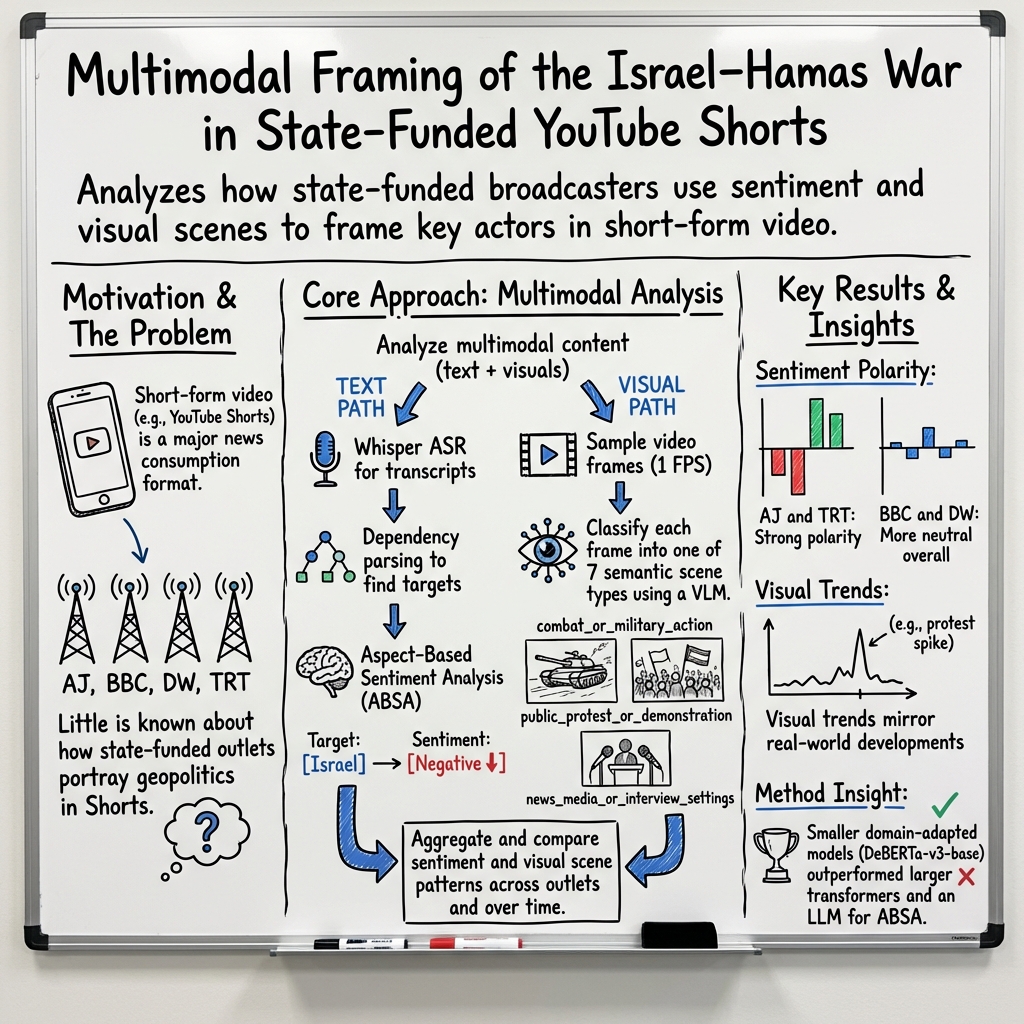

Abstract: YouTube Shorts have become central to news consumption on the platform, yet research on how geopolitical events are represented in this format remains limited. To address this gap, we present a multimodal pipeline that combines automatic transcription, aspect-based sentiment analysis (ABSA), and semantic scene classification. The pipeline is first assessed for feasibility and then applied to analyze short-form coverage of the Israel-Hamas war by state-funded outlets. Using over 2,300 conflict-related Shorts and more than 94,000 visual frames, we systematically examine war reporting across major international broadcasters. Our findings reveal that the sentiment expressed in transcripts regarding specific aspects differs across outlets and over time, whereas scene-type classifications reflect visual cues consistent with real-world events. Notably, smaller domain-adapted models outperform large transformers and even LLMs for sentiment analysis, underscoring the value of resource-efficient approaches for humanities research. The pipeline serves as a template for other short-form platforms, such as TikTok and Instagram, and demonstrates how multimodal methods, combined with qualitative interpretation, can characterize sentiment patterns and visual cues in algorithmically driven video environments.


Explain it Like I'm 14

A simple explanation of the paper

1) What this paper is about

The paper looks at how government-funded news channels used YouTube Shorts to report on the Israel–Hamas war. The authors don’t just read the words in the videos—they also look at the pictures. By combining what’s said (the transcript) and what’s shown (the visuals), they try to understand the feelings and messages these short videos spread.

They studied 2,300+ Shorts from four big international outlets: Al Jazeera, BBC, Deutsche Welle (DW), and TRT World, posted between late 2023 and 2024.

2) The big questions they ask

The researchers mainly ask:

- How do these news outlets differ in the feelings they express about key political groups or people (like “Israel,” “Palestine,” “Hamas,” or specific politicians)?

- How do their visuals (what you see on screen) differ—for example, are they showing protests, interviews, destruction, or battles?

- Do these patterns change over time?

3) How they did the study (in everyday terms)

Think of their approach as a smart sorting system that listens, reads, and watches videos:

- Turning speech into text: They used an automatic tool (like super-powered auto-captions) to turn spoken words into written transcripts.

- Finding who or what is being talked about: They searched each sentence for specific “aspects” (topics), like “Israel,” “Palestine,” “Zionism,” “Hamas,” or named politicians. This is like tagging each sentence with who it’s about.

- Checking the sentiment: For each tagged sentence, they asked, “Is the tone toward this topic positive, negative, or neutral?” This is called aspect-based sentiment analysis (ABSA). It’s like checking the tone of a sentence only about one person or group at a time.

- Looking at the visuals: They grabbed one image per second from each video and sorted each frame into one of seven scene types—like sorting photos into labeled albums.
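The tagging step amounts to looking up known surface forms in each transcript sentence and mapping them to an aspect group. Below is a minimal sketch under simplifying assumptions. The eight lexicon entries are hypothetical placeholders (the study's lexicon has 56 surface forms in ten groups). Plain word-boundary regex matching also stands in for the study's dependency-parser-based aspect anchoring.

```python
import re

# Hypothetical mini-lexicon: lower-cased surface form -> aspect group.
# The study's real lexicon has 56 surface forms in ten groups; these are placeholders.
ASPECT_LEXICON = {
    "israel": "Israel",
    "israeli": "Israel",
    "palestine": "Palestine",
    "palestinian": "Palestine",
    "palestinians": "Palestine",
    "hamas": "Hamas",
    "zionism": "Zionism",
    "zionist": "Zionism",
}

# Word boundaries (\b) stop partial hits such as "israel" inside "israeli".
_PATTERN = re.compile(
    r"\b(" + "|".join(map(re.escape, ASPECT_LEXICON)) + r")\b",
    re.IGNORECASE,
)


def tag_aspects(sentence: str) -> list[tuple[str, str]]:
    """Return (surface form, aspect group) pairs in the order they appear."""
    return [
        (m.group(0), ASPECT_LEXICON[m.group(0).lower()])
        for m in _PATTERN.finditer(sentence)
    ]
```

For example, `tag_aspects("Hamas and Israel agreed to a pause.")` returns `[("Hamas", "Hamas"), ("Israel", "Israel")]`. Each tagged pair would then be passed to the sentiment model together with its sentence.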

To make the picture-sorting clear and consistent, they used seven easy-to-spot categories:

- News studio or interview

- Political or diplomatic events (like a speech at the UN)

- Public protest or demonstration

- Destruction or humanitarian crisis (damaged buildings, aid scenes)

- Combat or military action

- Symbolic or religious ritual (prayers, funerals, memorials)

- Other/unknown (when it’s not clear)
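The frame-sorting step needs two pieces of bookkeeping. First, it must decide which timestamps to grab at one frame per second. Second, it must force each model answer into exactly one of the seven categories. The sketch below assumes the vision model replies with a category name. Four of the snake_case labels appear elsewhere in this summary, while `news_studio_or_interview`, `political_or_diplomatic_event`, and `symbolic_or_religious_ritual` are hypothetical spellings. Falling back to `other_or_unknown` for unparseable answers is likewise an assumed design choice. Frame decoding and the model call itself are omitted.

```python
import math

# The seven scene types as snake_case labels. combat_or_military_action,
# destruction_or_humanitarian_crisis, public_protest and other_or_unknown appear
# in the study's write-up; the other three spellings are hypothetical.
SCENE_LABELS = (
    "news_studio_or_interview",
    "political_or_diplomatic_event",
    "public_protest",
    "destruction_or_humanitarian_crisis",
    "combat_or_military_action",
    "symbolic_or_religious_ritual",
    "other_or_unknown",
)


def sample_timestamps(duration_s: float, fps: float = 1.0) -> list[float]:
    """Uniform-FPS sampling: timestamps (seconds) of the frames to extract."""
    n_frames = math.ceil(duration_s * fps)
    return [i / fps for i in range(n_frames)]


def normalize_label(vlm_answer: str) -> str:
    """Map a free-text model answer onto exactly one taxonomy label."""
    cleaned = vlm_answer.strip().lower().replace(" ", "_").strip(".\"'")
    # Assumed fallback: anything outside the taxonomy counts as other_or_unknown.
    return cleaned if cleaned in SCENE_LABELS else "other_or_unknown"
```

A 2.5-second clip yields frames at 0, 1, and 2 seconds. An answer such as `" Public Protest."` normalizes to `public_protest`.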

They used open-source AI models and showed that smaller, customized models (carefully trained for this topic) can work better than giant general-purpose ones.
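“Work better” is measured with macro-F1. This metric averages the F1 score of each sentiment class equally, so a model cannot score well by getting only the most frequent class right. In the paper's comparison, fine-tuned DeBERTa-v3-base reached 81.9, compared with 72.5 for a fine-tuned Qwen LLM. A from-scratch sketch of the metric follows. Scoring a class that has neither predictions nor gold examples as 0 is an assumed convention.

```python
def macro_f1(
    gold: list[str],
    pred: list[str],
    labels: tuple[str, ...] = ("negative", "neutral", "positive"),
) -> float:
    """Unweighted mean of per-class F1, so rare classes count as much as common ones."""
    scores = []
    for label in labels:
        tp = sum(g == label and p == label for g, p in zip(gold, pred))
        fp = sum(g != label and p == label for g, p in zip(gold, pred))
        fn = sum(g == label and p != label for g, p in zip(gold, pred))
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
        scores.append(f1)
    return sum(scores) / len(scores)
```

Take gold labels `["negative", "negative", "neutral", "positive"]` and predictions `["negative", "neutral", "neutral", "positive"]`. The per-class F1 scores are 2/3, 2/3, and 1, which gives a macro-F1 of 7/9 ≈ 0.78.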

4) What they found and why it matters

Here are the main takeaways:

- Different outlets, different tones:

  - Al Jazeera and TRT World often showed more negative sentiment toward Israel and more positive sentiment toward Palestine.

  - BBC and DW were more neutral overall.

  - Across all outlets, negative tones appeared more often than positive ones.

- What gets more views:

  - For Al Jazeera and TRT, videos with more positive tones (especially toward Palestinians) tended to get higher median views.

  - For the BBC, videos with more negative tones tended to get more views.

- The tone stayed steady over time:

  - The pattern of negative sentiment toward Israel and positive sentiment toward Palestine stayed consistent throughout the year.

  - Mentions of “Zionism” were usually negative; mentions of “Islam” were mostly neutral.

- Where the emotion came from:

  - Strong emotional language often came from protest chants or quotes from interviewees, not from the news anchors themselves. Short videos often amplify these moments because they’re quick and attention-grabbing.

- Visuals mirrored real events:

  - The most common visuals were news/interviews, then destruction/aid scenes, and then political events.

  - Early months showed more destruction/aid scenes; spring had more protests (like university protests); February had more diplomatic events (like the UN and the International Court of Justice); late 2024 showed more combat footage.

  - This works as a sanity check: the scene labels tracked what was happening in the real world, which suggests the classifier picks up meaningful visual cues.

- Small, specialized AI can beat big, general AI:

  - A smaller, fine-tuned model did better at judging sentiment than larger, more general models. That’s important for researchers with limited computing power.
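The view-count comparison can be sketched in two steps. First, group videos by their dominant tone. Then take the median of each group. Medians, like the paper's log-transformed view counts, keep a few viral outliers from dominating. The field names (`outlet`, `views`, `polarities`) are hypothetical. Labeling a video by its most frequent aspect polarity is also an assumed simplification of how the study relates tone to views.

```python
from collections import Counter, defaultdict
from statistics import median


def dominant_polarity(polarities: list[str]) -> str:
    """Most frequent aspect-level polarity in one video (ties go to the first seen)."""
    return Counter(polarities).most_common(1)[0][0]


def median_views_by_polarity(videos: list[dict]) -> dict[tuple[str, str], float]:
    """Median view count per (outlet, dominant polarity) group.

    Each video is a dict with hypothetical keys: "outlet", "views", and
    "polarities" (the ABSA labels of all aspect mentions in its transcript).
    """
    groups: dict[tuple[str, str], list[int]] = defaultdict(list)
    for video in videos:
        key = (video["outlet"], dominant_polarity(video["polarities"]))
        groups[key].append(video["views"])
    return {key: median(views) for key, views in groups.items()}
```

Comparing, for one outlet, the `positive` group's median with the `negative` group's median mirrors the kind of contrast described above. A real analysis would also need to account for the confounders listed under the knowledge gaps.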

Why this matters: Short videos spread fast and are designed to grab attention. If they often highlight emotionally charged quotes and scenes, they can shape how people feel about events—especially when there’s little time for background or context.

5) What this could mean going forward

- The pipeline is a practical tool: It shows how to study TikTok-like videos by combining the words and the visuals. Other researchers can copy this approach for TikTok, Instagram Reels, and more.

- It helps track polarization: Because Shorts can amplify emotional moments, this method can help monitor how news outlets might influence audiences through tone and imagery.

- It’s accessible: You don’t need huge AI systems to get useful results. Small, carefully trained models can be enough.

- Limits to keep in mind:

  - The study looked at English-language videos only.

  - Some categories (like “other/unknown”) are broad and can be confusing for the model.

  - Automatic transcripts can make mistakes, especially with noisy audio or multiple languages.

  - The videos were manually selected and may not represent everything these outlets posted.
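Transcription quality is usually quantified with word error rate (WER). WER is the word-level edit distance between a hand-made reference transcript and the ASR output, divided by the number of reference words. Below is a minimal sketch, assuming lower-casing and whitespace tokenization as the only normalization:

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / number of reference words."""
    ref, hyp = reference.lower().split(), hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")
    # prev holds the edit distances for the previous reference prefix;
    # for the empty prefix, matching j hypothesis words costs j insertions.
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, h in enumerate(hyp, start=1):
            curr[j] = min(
                prev[j] + 1,             # deletion of a reference word
                curr[j - 1] + 1,         # insertion of a hypothesis word
                prev[j - 1] + (r != h),  # substitution (free if the words match)
            )
        prev = curr
    return prev[-1] / len(ref)
```

For instance, if `free free palestine` is transcribed as `free palestine`, one word is deleted, so WER is 1/3.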

Overall, the study shows that short-form news often leans on emotionally strong clips and visuals that match real-world events. The new method gives researchers a way to measure and compare these patterns over time and across different outlets.

Knowledge Gaps

Unresolved Knowledge Gaps, Limitations, and Open Questions

Below is a single, consolidated list of concrete gaps, limitations, and open questions the paper leaves unresolved, intended to guide future research:

- Data selection bias: Shorts were “manually selected and scraped approximately two weeks after publication,” but selection criteria are not specified; replicability and representativeness of the corpus remain unclear.

- Platform lifecycle bias: Scraping two weeks post-publication may miss early engagement dynamics and edits/removals; effects on view counts and exposure are unmeasured.

- Outlet scope and imbalance: Only four “state-funded or state-supported” outlets are included, with extreme imbalance in sample sizes (e.g., TRT and AJ dominate; BBC and DW sparse), limiting cross-outlet comparability.

- Missing comparison groups: No inclusion of non-state-funded mainstream or local outlets; cannot attribute observed patterns to “state-funded” status vs. broader editorial norms.

- English-only focus: Non-English Shorts from the same outlets are excluded; cross-language differences in sentiment and visuals remain unknown.

- ASR validity: No quantitative transcription metrics (e.g., WER, CER, language-ID accuracy) or analysis of how ASR errors propagate to aspect linking and ABSA.

- Speaker attribution: No diarization or role attribution (anchor vs. interviewee vs. chant); the outlet’s stance may be conflated with quoted or ambient speech.

- Quotation detection: No automatic detection of quoted speech or chants; inability to separate reported speech from editorial narration limits interpretability of outlet sentiment.

- Aspect lexicon coverage: The handcrafted lexicon (56 surface forms, 10 groups) may miss key actors (e.g., additional politicians, states, organizations) and evolving terms; recall of aspect detection is unreported.

- Aspect linking evaluation: Dependency-based aspect–head linking is not evaluated for precision/recall; no ablation versus simpler span- or rule-based baselines.

- ABSA label granularity: Sentiment is restricted to {negative, neutral, positive}; no intensity, uncertainty, irony/sarcasm, moral frames, or stance directionality beyond polarity.

- ABSA training data curation: Semi-automatic selection via high-confidence model predictions (p ≥ 0.75) risks confirmation bias; no inter-annotator agreement or detailed annotation protocol is reported.

- Class imbalance handling: Known imbalances (e.g., Arabs, politicians) are acknowledged but not methodologically addressed (no reweighting, resampling, or calibration analysis).

- Generalization of ABSA: No out-of-domain or cross-dataset validation; robustness across outlets, time, and evolving vocabulary is untested.

- Multimodal alignment: Textual sentiment and visual scenes are analyzed in parallel but not temporally aligned; no synchronized co-occurrence analysis at the frame/segment level.

- Frame sampling strategy: Uniform 1 FPS sampling lacks shot boundary detection, scene change sensitivity, deduplication, or sensitivity analysis to FPS choices; may miss salient micro-scenes.

- Visual taxonomy limitations: A 7-category, single-label taxonomy is coarse; multi-label scenes and category overlaps (e.g., combat vs. destruction) are unresolved; “other/unknown” remains broad and error-prone.

- Visual model validation: Scene classification is validated on 799 frames without inter-annotator agreement, per-category confusion analysis, or outlet/time-stratified robustness; generalization to varied overlays, resolutions, and lighting is unassessed.

- VLM choice and bias: Reliance on a single small VLM (Qwen3-VL 4B) without benchmarking against other open VLMs; potential cultural/religious attire bias is noted qualitatively but not audited.

- Missing modalities: No OCR for on-screen text, no prosodic or audio affect features (e.g., chants, music, crowd noise); short-form overlays and audio cues likely carry major framing information.

- Engagement analysis limits: Only view counts are analyzed; likes, comments, shares, watch time, and retention are absent; no control for confounders (upload time, channel size, video length, title/thumbnail, hashtags).

- No causal inference: Relationship between sentiment/scene types and engagement is descriptive; effects of recommendation algorithms or editorial choices on exposure and engagement are not modeled.

- Statistical rigor: No hypothesis testing, uncertainty intervals, or effect sizes for cross-outlet and temporal comparisons; multiple-comparison risks are unaddressed.

- Normalization across outlets/time: Disparate upload volumes and video lengths are not systematically normalized or weighted; comparability of monthly trends across outlets is uncertain.

- Granularity of political actors: Entity groups (e.g., “Israeli politicians,” “Zion,” “Jews”) can conflate distinct targets; sub-actor analysis and disambiguation are missing, risking interpretive errors.

- Geographic context: No location inference for scenes; lack of spatial analysis obscures how coverage varies by place or distance from events.

- Authenticity and generative media: AI-generated or manipulated imagery is a concern but not detected or quantified; prevalence and impact are unknown.

- Data and annotation availability: Code is shared, but release of annotated ABSA data and frame labels is unclear; limited transparency hinders replication and benchmarking.

- Ethical and bias audits: No systematic audit of model bias across demographics, attire, or cultural symbols; potential harms from misclassification or sentiment toward protected groups are not quantified.

- Cross-platform generalizability: Claims of portability to TikTok/Instagram are untested; platform-specific affordances (e.g., music libraries, filters) may affect multimodal signals.

- Open question: To what extent do observed sentiment and scene patterns arise from outlet editorial policy versus platform recommendation dynamics?

- Open question: Do temporally aligned visual–textual co-occurrences (e.g., protest scenes + negative sentiment) predict engagement better than unimodal signals?

- Open question: How do on-screen text overlays and nonverbal audio cues mediate sentiment perception when spoken narration is absent?

- Open question: What differences emerge when analyzing non-English Shorts from the same outlets, and do those differences alter conclusions about polarization?

- Open question: Can a learned multimodal fusion model (with OCR, audio features, and temporal alignment) improve prediction of engagement and outlet classification over the current pipeline?
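Temporal alignment could start from two inputs: timestamped transcript segments (Whisper emits start and end times per segment) and the per-second frame labels. The alignment then counts which scene types co-occur with which segment-level polarities. The segment tuple layout below is a hypothetical simplification. Half-open intervals `[start, end)` are an assumed convention, so that a frame on a boundary is counted once.

```python
from collections import Counter


def cooccurrence(
    segments: list[tuple[float, float, str]],
    frame_labels: list[str],
    fps: float = 1.0,
) -> Counter:
    """Count (scene label, polarity) pairs for frames falling inside a transcript segment.

    segments: hypothetical (start_s, end_s, polarity) tuples from timestamped ASR + ABSA.
    frame_labels: scene label of each uniformly sampled frame, in time order.
    """
    counts: Counter = Counter()
    for i, scene in enumerate(frame_labels):
        t = i / fps  # timestamp of the i-th sampled frame
        for start, end, polarity in segments:
            if start <= t < end:
                counts[(scene, polarity)] += 1
    return counts
```

Frames that fall outside every segment, such as silent stretches with only music or chants, contribute nothing. This mirrors the limitation that spoken narration is absent there.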

Practical Applications

Immediate Applications

Below are specific, deployable use cases that leverage the paper’s models, taxonomy, and pipeline as they currently stand.

Industry (media, platforms, adtech, software)

- Brand-safety and adjacency screening for short-form ads (YouTube Shorts)

- What: Use the 7-class scene taxonomy and transcript-level ABSA to score videos for violence, political sensitivity, and humanitarian-crisis imagery before ad placement.

- Sectors: Advertising, media, software.

- Potential tools/products/workflows: Brand-safety classifier API; pre-bid adapter mapping scene types to IAB categories; dashboards for campaign managers.

- Assumptions/dependencies: Continued YouTube API access; 1 FPS sampling is sufficient for reliable detection; acceptable false-positive rate for “other/unknown” category; English-language transcripts.

- Newsroom coverage analytics and self-auditing

- What: Internal dashboards that chart outlet-level sentiment toward specified aspects (e.g., Israel, Palestine, Zionism) and visualize scene-type distributions over time to identify imbalances or shifts.

- Sectors: Media, software.

- Potential tools/products/workflows: “Shorts Analytics” plugin for newsroom CMS; ABSA + scene-type time-series reports; editorial alerts when polarity exceeds set thresholds.

- Assumptions/dependencies: Editorial buy-in; accurate ASR for noisy field audio; domain lexicon alignment with each outlet’s topics.

- Content moderation triage for violent or distressing visuals

- What: Automatically surface Shorts with “combat_or_military_action” or “destruction_or_humanitarian_crisis” scenes for human review, age-gating, or regional restrictions.

- Sectors: Platforms, trust & safety, software.

- Potential tools/products/workflows: Queue prioritization tool; reviewer UI summarizing top frames and transcript sentiment.

- Assumptions/dependencies: Operational tolerance for ~13% frame-level error; clear policy definitions; appeals and reviewer oversight.

- Competitor and narrative monitoring for media and PR teams

- What: Track cross-outlet polarity and scene strategy (e.g., share of protest footage vs. interviews) to benchmark editorial approaches and plan counter-messaging.

- Sectors: Media, PR/communications, software.

- Potential tools/products/workflows: Competitive intelligence reports; automated weekly snapshots; alerting on sentiment spikes.

- Assumptions/dependencies: Representative sampling; stable aspect lexicon across campaigns.

- Contextual video search and retrieval for archives and rights holders

- What: Index Shorts by ABSA-labeled aspects (e.g., “Netanyahu: negative”) and scene types (e.g., “public protest”) for fast retrieval.

- Sectors: Media libraries, software.

- Potential tools/products/workflows: Retrieval API; librarian-facing interface for rights clearance and compilations.

- Assumptions/dependencies: Storage and metadata governance; English-language focus.

- Resource-efficient NLP/vision deployments

- What: Replace heavy LLMs with finetuned smaller encoders (e.g., DeBERTa-v3-base) for domain ABSA workloads to reduce cost without losing accuracy.

- Sectors: Software, AI/ML Ops.

- Potential tools/products/workflows: “Small-but-tuned” model catalog; inference microservices with autoscaling.

- Assumptions/dependencies: Availability of domain gold data and lexicons; periodic re-finetuning as topics evolve.
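The “editorial alerts when polarity exceeds set thresholds” workflow reduces to two steps. First, compute the share of aspect mentions labeled negative for each outlet, month, and aspect. Then flag the cells above a cut-off. Below is a sketch with a hypothetical row layout and a hypothetical 0.6 threshold:

```python
from collections import defaultdict


def monthly_negative_share(
    rows: list[tuple[str, str, str, str]],
) -> dict[tuple[str, str, str], float]:
    """rows: hypothetical (outlet, month "YYYY-MM", aspect group, polarity), one per mention."""
    totals: dict[tuple[str, str, str], int] = defaultdict(int)
    negatives: dict[tuple[str, str, str], int] = defaultdict(int)
    for outlet, month, aspect, polarity in rows:
        key = (outlet, month, aspect)
        totals[key] += 1
        negatives[key] += polarity == "negative"  # True counts as 1
    return {key: negatives[key] / totals[key] for key in totals}


def alerts(
    shares: dict[tuple[str, str, str], float], threshold: float = 0.6
) -> list[tuple[str, str, str]]:
    """(outlet, month, aspect) cells whose negative share exceeds the threshold."""
    return sorted(key for key, share in shares.items() if share > threshold)
```

In practice, a minimum mention count per cell would be sensible, since a month with two mentions can swing from 0% to 100% negative.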

Academia and Research

- Reproducible multimodal analysis for political communication and digital humanities

- What: Apply the open-source pipeline to study framing and visuals across conflicts, elections, and protests on Shorts-like formats.

- Sectors: Academia, software.

- Potential tools/products/workflows: Course modules; replications; shared datasets of frame labels and aspect sentiments.

- Assumptions/dependencies: Ethical approvals; platform terms compliance; careful reporting on sampling bias.

- Methods training in resource-constrained settings

- What: Use small, finetuned models as practical teaching tools for multimodal research without large GPU budgets.

- Sectors: Academia, education.

- Potential tools/products/workflows: Lab assignments; “starter kits” with pretrained checkpoints.

- Assumptions/dependencies: English-language focus; curated domain lexicon.

Policy and Government

- Transparency reporting on state-funded media narratives

- What: Regular reports quantifying sentiment toward key actors and prevalence of protest/crisis imagery to inform public communication strategies.

- Sectors: Policy, public administration.

- Potential tools/products/workflows: Monthly dashboards; API feeds to oversight bodies; whitepapers with methodological appendices.

- Assumptions/dependencies: Non-partisan framing; safeguards against misuse for censorship; acknowledgment of sampling limitations.

- Early situational awareness for crisis communication

- What: Rapid detection of spikes in “destruction_or_humanitarian_crisis” or “public_protest” scenes to inform briefings and outreach.

- Sectors: Policy, emergency management.

- Potential tools/products/workflows: Alerting systems; cross-validation with ground reports.

- Assumptions/dependencies: Shorts reflect real-world conditions; verification protocols to mitigate synthetic or misleading content.
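For spotting spikes in scene types, one simple option (not something the paper implements) is a trailing-window z-score. It is applied to the weekly share of frames in a category such as `destruction_or_humanitarian_crisis`. The four-week window and the z cut-off of 2 are hypothetical defaults.

```python
from statistics import mean, pstdev


def spikes(weekly_share: list[float], window: int = 4, z: float = 2.0) -> list[int]:
    """Indices of weeks whose share exceeds the trailing mean by more than z std devs."""
    flagged = []
    for i in range(window, len(weekly_share)):
        history = weekly_share[i - window:i]
        mu, sigma = mean(history), pstdev(history)
        # A perfectly flat history (sigma == 0) is skipped rather than divided by zero.
        if sigma > 0 and (weekly_share[i] - mu) / sigma > z:
            flagged.append(i)
    return flagged
```

Because the baseline is computed only from earlier weeks, a flagged week can be reported as soon as its frames are classified. Any alert would still need cross-validation with ground reports.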

Daily Life and Education

- Media literacy aids for short-form video

- What: A browser/mobile companion that summarizes likely sentiment by aspect and dominant scene types to help users contextualize what they watch.

- Sectors: Education, consumer software.

- Potential tools/products/workflows: Lightweight extension/app with on-demand analysis; classroom demos.

- Assumptions/dependencies: Latency constraints; clear disclaimers about model error; English-only at first.

- Fact-checking triage for journalists and civic groups

- What: Flag quotes/chant-heavy Shorts (often driving polarity) to prioritize manual verification.

- Sectors: Civil society, journalism, software.

- Potential tools/products/workflows: Watchlists for high-polarity items; transcript extraction with timestamped segments.

- Assumptions/dependencies: ASR reliability in noisy scenes; access to original source links.

Long-Term Applications

These opportunities require further research, scaling, or development beyond the paper’s current scope.

Industry (media, platforms, adtech, software, finance)

- Cross-platform, multilingual short-form intelligence (YouTube, TikTok, Reels)

- What: Extend pipeline to multiple languages and platforms for comprehensive monitoring of global narratives.

- Sectors: Media intelligence, software.

- Potential tools/products/workflows: Ingestion connectors; multilingual ASR; cross-lingual aspect lexicons.

- Assumptions/dependencies: Platform API/ToS stability; robust language ID and code-switching support; compute scaling.

- Real-time brand-safety and contextual targeting in programmatic ads

- What: Map scene types and ABSA outputs to IAB content categories for real-time bidding decisions on short-form inventory.

- Sectors: Adtech, software.

- Potential tools/products/workflows: Low-latency inference services; safety-score calibration; continuous human-in-the-loop QA.

- Assumptions/dependencies: Sub-second inference; regulatory compliance (e.g., privacy, platform policies).

- Algorithmic auditing of recommendation systems

- What: Correlate content features (sentiment, scenes) with exposure and engagement to study whether algorithms amplify certain framings.

- Sectors: Platforms, academia, policy.

- Potential tools/products/workflows: Controlled audits; instrumentation to capture impressions; causal inference tooling.

- Assumptions/dependencies: Access to exposure data; cooperation from platforms; robust statistical design.

- Geopolitical and market risk monitoring

- What: Use spikes in crisis/protest imagery and negative sentiment toward key actors as soft signals for risk dashboards.

- Sectors: Finance, risk analytics, software.

- Potential tools/products/workflows: Signal fusion with news wires and satellite data; alert scoring models.

- Assumptions/dependencies: Guardrails against overreliance on platform content; validation to reduce false signals.

- Synthetic media and manipulation detection in short-form news

- What: Add detectors for AI-generated imagery, deepfakes, and manipulated overlays; integrate OCR and audio emotion cues.

- Sectors: Platforms, trust & safety, software.

- Potential tools/products/workflows: Multimodal authenticity scoring; provenance metadata integration (C2PA).

- Assumptions/dependencies: Evolving adversarial threats; benchmark datasets for short-form contexts.

Academia and Research

- Joint modeling of text–vision alignment at segment level

- What: Align per-second frames with transcript snippets to detect “affective coupling” (sentiment spikes co-occurring with specific visuals).

- Sectors: Academia, software.

- Potential tools/products/workflows: Multimodal fusion architectures; fine-grained annotated corpora.

- Assumptions/dependencies: Precise ASR timestamps; improved synchronization under rapid edits.

- Public benchmarks for short-form multimodal ABSA and scene classification

- What: Curate open datasets with gold labels across languages and topics to standardize evaluation.

- Sectors: Academia, open science, software.

- Potential tools/products/workflows: Data governance frameworks; shared leaderboards; annotation guidelines.

- Assumptions/dependencies: Ethical safeguards; licensing clarity for video frames and transcripts.

- Causal studies on affect and engagement

- What: Experimentally test how sentiment polarity and scene types influence watch time, sharing, and perception.

- Sectors: Academia, platforms.

- Potential tools/products/workflows: A/B experiments; pre-registered studies; human-subject protocols.

- Assumptions/dependencies: Platform collaboration; strict ethical review.

Policy and Government

- Narrative transparency standards for state-funded media

- What: Develop reporting norms requiring aggregate disclosures of affective framing and visual-content mixes during crises/elections.

- Sectors: Policy, regulators.

- Potential tools/products/workflows: Compliance toolkits; third-party audit frameworks; public dashboards.

- Assumptions/dependencies: Political will; safeguards against misuse; clear definitions of “state-funded.”

- Early warning and crisis informatics for humanitarian response

- What: Combine scene-type spikes (e.g., crisis/destruction) with geospatial enrichment and OSINT to inform relief allocation.

- Sectors: Humanitarian agencies, emergency management.

- Potential tools/products/workflows: Geotagging pipeline; cross-source corroboration protocols.

- Assumptions/dependencies: Reliable geolocation; mitigation of propaganda bias; verification workflows.

Daily Life and Education

- Curriculum-integrated, interactive media analysis platforms

- What: Classroom dashboards where students explore how sentiment and visual framing evolve across outlets and time.

- Sectors: Education, edtech.

- Potential tools/products/workflows: Lesson plans; sandboxed datasets; educator controls for sensitive content.

- Assumptions/dependencies: Age-appropriate filters; institutional approvals.

- Consumer-facing “context cards” for Shorts

- What: Platform-integrated panels that summarize likely scene types and aspect sentiment, plus source context (e.g., state-funded label).

- Sectors: Consumer software, platforms.

- Potential tools/products/workflows: UI widgets; explainability snippets with uncertainty indicators.

- Assumptions/dependencies: Platform UI/UX integration; careful messaging to avoid perceived bias.

Notes on feasibility across applications:

- The pipeline is strongest today for English-language content and structured political topics covered by the provided lexicon; multilingual and broader topical coverage require new training data.

- Whisper ASR degrades with chants, overlapping speech, and noisy environments; accuracy in such cases is a key dependency for ABSA.

- The 7-class visual taxonomy achieves high accuracy but includes ambiguity in “other_or_unknown”; further prompt and label refinement is advisable for high-stakes deployments.

- Ethical safeguards, transparency, and human oversight are necessary to prevent misuse (e.g., censorship, biased labeling).

Glossary

- ABSA (Aspect-Based Sentiment Analysis): A sentiment analysis approach that assigns polarity toward specific targets (aspects) mentioned in text. "To address this gap, we present a multimodal pipeline that combines automatic transcription, aspect-based sentiment analysis (ABSA), and semantic scene classification."

- amod: A dependency relation label indicating an adjectival modifier of a noun. "the dependency relations nsubj and amod link aspect and head."

- aspect lexicon: A curated list of surface forms for entities or concepts used to detect and group aspect mentions for ABSA. "Our aspect lexicon consists of 56 manually selected surface forms grouped into ten substantive categories relevant to the Israel--Hamas war"

- ASR (Automatic Speech Recognition): Technology that converts spoken audio into text automatically. "The MultiTec framework \cite{shang2025multitec} fuses ASR, OCR, visual features, audio sentiment, and metadata to investigate Healthcare misinformation on TikTok."

- biaffine-dep-en: A biaffine-scoring English dependency parsing model (Dozat & Manning 2017) used for syntactic analysis. "the dependency parser by \citet{dozat:manning:17} (model: biaffine-dep-en), implemented in the SuPar library."

- Bimodal representations: Joint feature representations that combine two modalities (e.g., audio and visual) for analysis. "The approach generates bimodal representations of visual and audio content to differentiate mainstream topics such as food and beauty care from niche interests including war and mental health."

- DeBERTa-v3-base: A transformer-based pretrained encoder language model, here fine-tuned for ABSA. "DeBERTa-v3-base achieves the best performance on this structured, transcript-based political ABSA task (macro-F1 = 81.9), outperforming larger encoder variants, the ABSA-specialized Yang model, and the Qwen LLM (macro-F1 = 72.5) (see Appendix~\ref{appendix:configs} for all results)."

- dependency parser: A model that analyzes the grammatical structure of a sentence by identifying head-dependent relations between words. "We converted the Whisper-generated transcripts into structured, syntax-anchored aspect rows using the dependency parser by \citet{dozat:manning:17} (model: biaffine-dep-en), implemented in the SuPar library."

- dependency-based aspect linking: Linking aspect mentions to their syntactic heads using dependency parse structures to enable targeted sentiment analysis. "we introduce a multimodal pipeline that combines automatic transcription, dependency-based aspect linking, aspect-based sentiment analysis (ABSA), and visual scene-type classification"

- Dependency triples: Triples capturing (aspect, syntactic head, dependency relation) from a parsed sentence for structured analysis. "Dependency triples consist of the aspect, its syntactic head and the dependency role in the parse."

- held-out set: A dataset subset reserved for evaluation to assess generalization, not used in training. "We manually evaluated 799 randomly sampled frame-level labels across all outlets in a held-out set to assess the model’s predictions."

- International Court of Justice (ICJ): The principal judicial organ of the United Nations; referenced as a locus of hearings in the analysis. "International Court of Justice (ICJ) hearings addressing Israel’s occupation"

- log-transformed view counts: Applying a logarithmic transformation to highly skewed view counts to stabilize variance in analysis. "we analyzed log-transformed view counts to reduce the influence of a small number of extremely viral videos."

- Macro-F1: An evaluation metric averaging the F1 score equally across classes, regardless of class size. "DeBERTa-v3-base achieves the best performance on this structured, transcript-based political ABSA task (macro-F1 = 81.9)"

- OCR (Optical Character Recognition): Technology that extracts textual content from images or video frames. "The MultiTec framework \cite{shang2025multitec} fuses ASR, OCR, visual features, audio sentiment, and metadata to investigate Healthcare misinformation on TikTok."

- QLoRA: A parameter-efficient fine-tuning method that trains low-rank adapters (LoRA) on top of a frozen, 4-bit-quantized base LLM. "a Qwen2.5-7B-Instruct LLM \cite{qwen2025qwen25technicalreport} finetuned with QLoRA \cite{dettmers2023qlora}."

- Qwen3-VL: An open-source vision-language model used here for image-text reasoning and scene classification. "For visual classification, we employ the open-source Qwen3-VL model (4B) \cite{qwen3vl_2025}, which is the best option for image-text reasoning tasks given our limited computational resources."

- RoBERTa-base: A transformer-based pretrained encoder language model used as a baseline for fine-tuning. "Using the augmented dataset, we finetuned several models under identical splits: RoBERTa-base \cite{liu2019roberta}, DeBERTa-v3-base, DeBERTa-v3-large \cite{he2021debertav3}, DeBERTa-v3-large-absa-v1.1 \cite{YangPyABSA}, and a Qwen2.5-7B-Instruct LLM \cite{qwen2025qwen25technicalreport} finetuned with QLoRA \cite{dettmers2023qlora}."

- Semantic scene classification: Assigning images or frames to predefined semantic categories capturing scene types. "we present a multimodal pipeline that combines automatic transcription, aspect-based sentiment analysis (ABSA), and semantic scene classification."

- Semantic scene taxonomy: A compact, defined set of scene categories used to systematically classify visual content. "The final taxonomy comprises seven distinguishable semantic scene types with refined definitions that minimize overlap while covering dominant visual themes in 2023--2024 Israel--Hamas war coverage."

- SuPar: A library for efficient, state-of-the-art structured parsing, including dependency parsing. "the dependency parser by \citet{dozat:manning:17} (model: biaffine-dep-en), implemented in the SuPar library."

- Uniform-FPS strategy: A frame sampling approach that captures frames at a consistent rate (e.g., one frame per second) across videos. "Our sampling uses a uniform-FPS strategy, which aligns with \citet{brkic2025framesamplingstrategiesmatter}."

- Universal Dependencies (UD): A cross-linguistic, standardized framework for annotating grammatical relations in dependency parsing. "The parser was trained on English Universal Dependencies (UD) treebanks."

- Vision-language model (VLM): A model that jointly processes visual and textual inputs for multimodal reasoning. "While prompt-based VLM can generate rich open-ended image descriptions, these descriptions are difficult to aggregate systematically at scale."

- Whisper large-v3: A large-scale automatic speech recognition model used to transcribe video audio. "We used Whisper large-v3 \cite{whisperopenai} to generate textual transcripts."

- nsubj: A dependency relation label indicating the nominal subject of a clause. "the dependency relations nsubj and amod link aspect and head."
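The dependency-based linking described in this glossary can be illustrated on a hand-annotated parse. An aspect token attached to its head by `nsubj` or `amod` yields an (aspect, head, relation) triple. The `Token` structure and the parses are hypothetical. In the study, the parses come from the biaffine-dep-en model in SuPar, whose API is not reproduced here.

```python
from typing import NamedTuple


class Token(NamedTuple):
    idx: int     # 1-based position in the sentence, as in CoNLL-U
    form: str    # surface word
    head: int    # idx of the syntactic head (0 = root)
    deprel: str  # Universal Dependencies relation label


# Relations that link an aspect (the dependent) to its head, per the study.
LINKING_RELATIONS = {"nsubj", "amod"}


def aspect_triples(tokens: list[Token], aspect_forms: set[str]) -> list[tuple[str, str, str]]:
    """Return (aspect, head word, relation) for aspect tokens attached via nsubj or amod."""
    by_idx = {t.idx: t for t in tokens}
    triples = []
    for t in tokens:
        if t.form.lower() in aspect_forms and t.deprel in LINKING_RELATIONS and t.head in by_idx:
            triples.append((t.form, by_idx[t.head].form, t.deprel))
    return triples
```

Consider “Israeli strikes hit Gaza”, annotated with Israeli → strikes (amod) and strikes → hit (nsubj). With `{"israeli", "hamas"}` as aspect forms, the function returns `[("Israeli", "strikes", "amod")]`. In “Hamas rejected the proposal”, the subject yields `("Hamas", "rejected", "nsubj")`.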
