- The paper introduces a novel differential privacy (DP) framework for manifold denoising, jointly managing measurement, privacy, and intrinsic geometric uncertainties.

- It employs a DP local PCA for tangent space estimation, achieving near-optimal recovery with theoretical error bounds that balance noise and curvature effects.

- Empirical results on biomedical and single-cell datasets demonstrate that the approach preserves local geometry and prediction accuracy under moderate privacy budgets.

Differentially Private Manifold Denoising: Technical Analysis

Introduction and Problem Setting

The manuscript "Differentially Private Manifold Denoising" (2604.00942) addresses the challenge of leveraging latent manifold structure for denoising high-dimensional data in settings where geometric reference datasets are sensitive and protected by differential privacy (DP) constraints. The authors formulate a practical and theoretically grounded framework enabling iterative correction of noisy query points using privatized geometric signals derived from a reference cohort, while rigorously accounting for privacy loss across queries and iterations.

The core technical objective is to jointly manage three sources of uncertainty:

- Measurement noise in both references and queries,

- Privacy-induced noise, added to geometric summaries to achieve $(\varepsilon,\delta)$-DP,

- Intrinsic geometric error owing to manifold curvature and finite sample estimation.

This work occupies a critical intersection between geometry-driven latent structure inference and the formal privacy regime required by HIPAA, GDPR, and other regulations, where existing manifold denoising strategies either fail to protect confidentiality or are rendered ineffective by privacy noise.

Framework: Differentially Private Local Geometry Estimation

The denoising methodology proceeds in two tightly coupled phases: (i) DP estimation and release of local geometric surrogates; (ii) correction of queries using these privatized objects.

The reference data $\{y_i\}_{i=1}^n \subset \mathbb{R}^D$ comprise noisy samples near a $d$-dimensional $C^2$ manifold $M$, with independent query points $z \in \mathbb{R}^D$ for correction.

DP Local PCA

Local tangent space geometry is recovered using a variant of kernelized principal component analysis (kPCA), computed over reference neighborhoods $B_D(z,h)$. The algorithm privatizes:

- The empirical tangent projector (rank d spectral projector of the local covariance),

- The kernel-weighted local mean.

Sensitivity analysis shows that, under a replace-one change to the reference set, the projector changes by $O((nh^d)^{-1})$ in Frobenius norm and the mean by $O((nh^{d-1})^{-1})$, for suitable scale $h$. Each summary is privatized with an independently calibrated Gaussian mechanism. Returning the top-$d$ eigenspace of the noisy projector is post-processing, so the privacy guarantee is preserved under arbitrary further computation.
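The two privatized summaries can be illustrated with a minimal NumPy sketch. It is not the authors' implementation: the function name `dp_local_pca`, the compactly supported kernel, the weight normalization, and the noise scales `sigma_proj` and `sigma_mean` are all assumptions made for illustration. In particular, the noise scales are taken as inputs and are assumed to have been calibrated elsewhere to the sensitivities and the privacy budget.

```python
import numpy as np

def dp_local_pca(Y, z, h, d, sigma_proj, sigma_mean, rng):
    """Sketch: noisy local mean and rank-d tangent projector around query z.

    Y is an (n, D) array of reference points, h the bandwidth, d the
    intrinsic dimension. sigma_proj and sigma_mean are hypothetical noise
    scales; calibrating them to a privacy budget is NOT done here.
    """
    n, D = Y.shape
    dist = np.linalg.norm(Y - z, axis=1)
    # Kernel weights supported on the ball of radius h (an assumed kernel choice).
    w = np.clip(1.0 - (dist / h) ** 2, 0.0, None)
    if w.sum() <= 0:
        raise ValueError("no reference points within bandwidth h of z")
    w = w / w.sum()

    # Kernel-weighted local mean and covariance.
    mean = w @ Y
    centered = Y - mean
    cov = (centered * w[:, None]).T @ centered

    # Rank-d spectral projector of the local covariance (eigh sorts ascending).
    U = np.linalg.eigh(cov)[1][:, -d:]
    P = U @ U.T

    # Gaussian mechanism on the projector; symmetrizing the noisy matrix
    # is post-processing of P + G.
    G = rng.normal(scale=sigma_proj, size=(D, D))
    P_noisy = P + (G + G.T) / 2.0

    # Post-processing: return the projector onto the top-d eigenspace.
    V = np.linalg.eigh(P_noisy)[1][:, -d:]
    P_priv = V @ V.T

    # Gaussian mechanism on the local mean.
    mean_priv = mean + rng.normal(scale=sigma_mean, size=D)
    return P_priv, mean_priv
```

A call such as `dp_local_pca(Y, z, h=0.3, d=1, sigma_proj=0.05, sigma_mean=0.05, rng=np.random.default_rng(0))` returns a symmetric, idempotent matrix of trace `d` together with a noisy mean. The eigenspace step is what keeps the released object a valid projector regardless of the noise level.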

Theoretical results (Theorem 1) establish that the privatized tangent estimator achieves principal angle distance to the true tangent space

{yi}i=1n⊂RD2

where the first two terms reflect geometric and measurement errors, and the last term is the privacy penalty, scaling optimally in the ambient dimension $D$, sample size, and privacy budget.

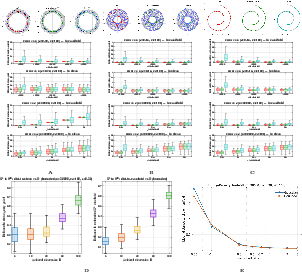

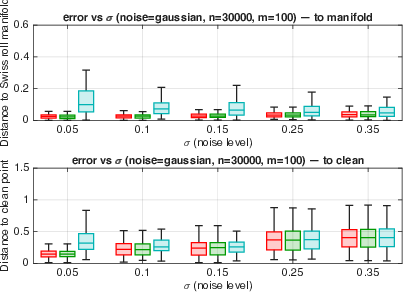

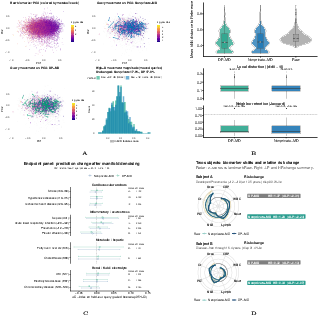

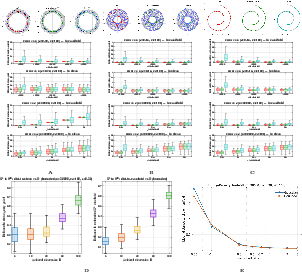

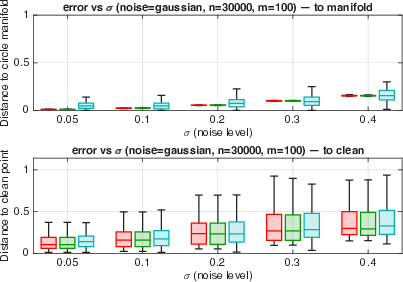

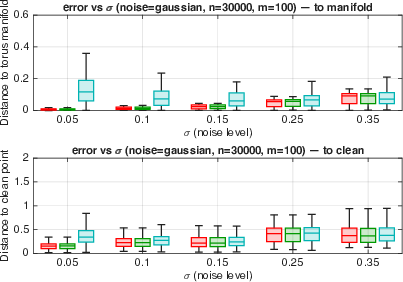

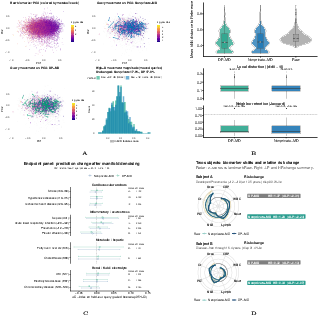

Figure 1: Synthetic manifold denoising results show effective recovery for canonical geometries (circle, torus, Swiss roll, sphere) across multiple error regimes and noise scales.

Iterative Manifold Denoising Under Privacy

The denoising operator, motivated by the normal bias decomposition [yao2025manifold], implements a fixed-point update:

{yi}i=1n⊂RD4

where {yi}i=1n⊂RD5 is the privatized local tangent projector (from the weighted sum of reference projectors), and {yi}i=1n⊂RD6 is the privatized local mean. All privacy noise is introduced in these low-dimensional surrogates.
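To make the iteration concrete, the sketch below assumes one plausible form of the update: the query is moved toward the privatized local mean along the estimated normal directions, `z <- z + (I - P)(mu - z)`. This form is an assumption for illustration and is not taken from the paper. The helper names, the kernel, and the noise scales are hypothetical, and no privacy accounting is performed here even though every iteration releases new noisy summaries.

```python
import numpy as np

def local_summaries(Y, z, h, d):
    """Non-private kernel-weighted local mean and rank-d tangent projector at z."""
    dist = np.linalg.norm(Y - z, axis=1)
    w = np.clip(1.0 - (dist / h) ** 2, 0.0, None)  # assumed kernel choice
    if w.sum() <= 0:
        raise ValueError("no reference points within bandwidth h of z")
    w = w / w.sum()
    mu = w @ Y
    C = ((Y - mu) * w[:, None]).T @ (Y - mu)
    U = np.linalg.eigh(C)[1][:, -d:]  # top-d eigenvectors
    return U @ U.T, mu

def privatize(P, mu, d, sigma_proj, sigma_mean, rng):
    """Add Gaussian noise to both summaries, then re-project to rank d."""
    G = rng.normal(scale=sigma_proj, size=P.shape)
    V = np.linalg.eigh(P + (G + G.T) / 2.0)[1][:, -d:]
    return V @ V.T, mu + rng.normal(scale=sigma_mean, size=mu.shape)

def denoise_query(Y, z0, h, d, n_iter, sigma_proj, sigma_mean, rng):
    """Iterate the assumed normal-direction correction n_iter times."""
    z = np.asarray(z0, dtype=float).copy()
    eye = np.eye(z.size)
    for _ in range(n_iter):
        P, mu = local_summaries(Y, z, h, d)
        P_priv, mu_priv = privatize(P, mu, d, sigma_proj, sigma_mean, rng)
        # Assumed update: keep the tangential part of z, replace the normal
        # part with that of the noisy local mean.
        z = z + (eye - P_priv) @ (mu_priv - z)
    return z
```

On points sampled from a unit circle, a query starting at radius 1.15 is pulled back close to the circle within a few iterations. A small residual offset remains even without privacy noise, which is consistent with the curvature term that the text separates from the measurement and privacy terms.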

Privacy accounting uses zero-concentrated DP (zCDP), under which privacy loss composes additively. This allows the overall budget to be split modularly across iterations and queries, and the cumulative privacy loss to be tracked precisely.
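The accounting can be sketched with three standard zCDP facts: a Gaussian mechanism with L2 sensitivity Δ and noise scale σ satisfies ρ-zCDP with ρ = Δ²/(2σ²); ρ values add under composition; and ρ-zCDP implies (ρ + 2√(ρ ln(1/δ)), δ)-DP. The even split across queries, iterations, and the two summaries in `split_budget` is a hypothetical allocation chosen for simplicity, and the paper's allocation may differ.

```python
import math

def gaussian_zcdp(sensitivity, sigma):
    """zCDP parameter rho of a Gaussian mechanism: Delta^2 / (2 sigma^2)."""
    return sensitivity ** 2 / (2.0 * sigma ** 2)

def sigma_for_rho(sensitivity, rho):
    """Noise scale needed for a Gaussian mechanism to satisfy rho-zCDP."""
    return sensitivity / math.sqrt(2.0 * rho)

def zcdp_to_approx_dp(rho, delta):
    """Epsilon of the (eps, delta)-DP guarantee implied by rho-zCDP."""
    return rho + 2.0 * math.sqrt(rho * math.log(1.0 / delta))

def split_budget(rho_total, n_queries, n_iters, frac_proj=0.5):
    """Hypothetical even split of rho_total over queries and iterations,
    then between the projector and the mean release in each step."""
    rho_step = rho_total / (n_queries * n_iters)
    return frac_proj * rho_step, (1.0 - frac_proj) * rho_step

# Example: total budget rho = 1.0 over 10 queries with 5 iterations each.
rho_proj, rho_mean = split_budget(1.0, n_queries=10, n_iters=5)
# Additive composition recovers the total budget.
rho_spent = 10 * 5 * (rho_proj + rho_mean)
eps = zcdp_to_approx_dp(rho_spent, delta=1e-5)
```

Given a sensitivity bound for each summary, `sigma_for_rho` turns the per-step allocation into the noise scale for that release, which is where the neighborhood size and bandwidth enter through the sensitivity.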

Theoretical guarantees (Theorem 2) show the denoised query converges to the manifold with error bounded by

{yi}i=1n⊂RD7

with {yi}i=1n⊂RD8 the privacy noise scales for projector and mean, respectively.

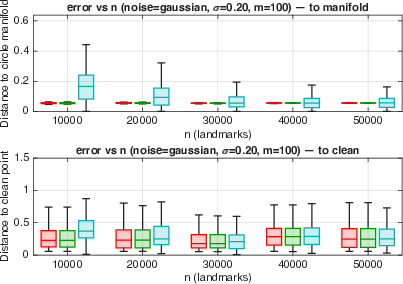

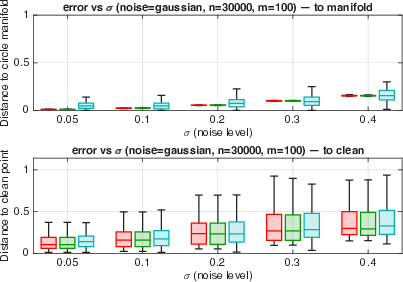

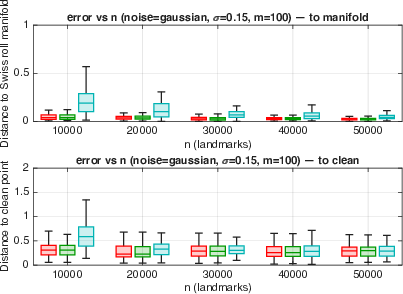

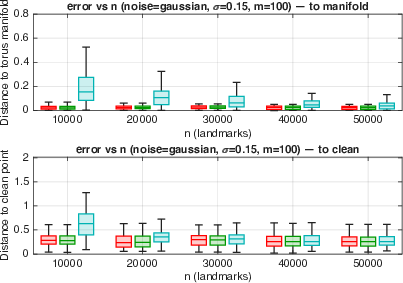

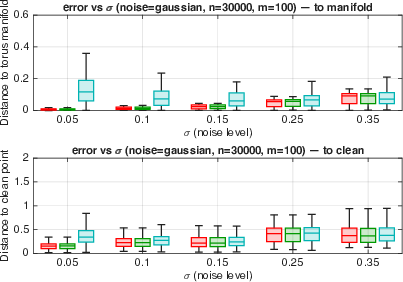

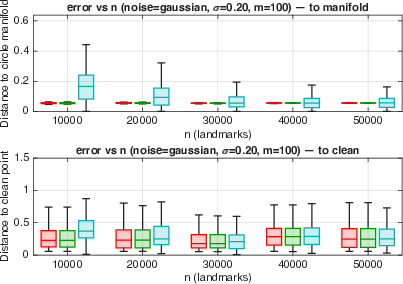

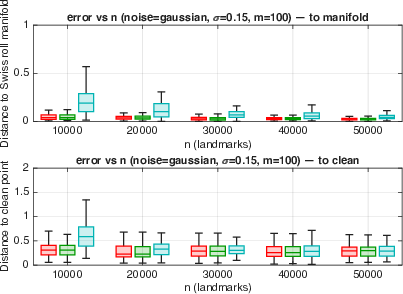

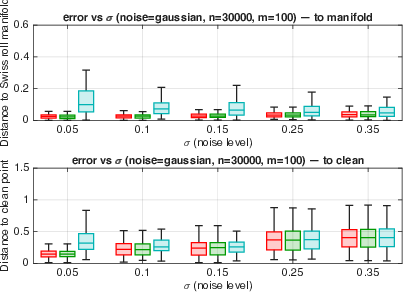

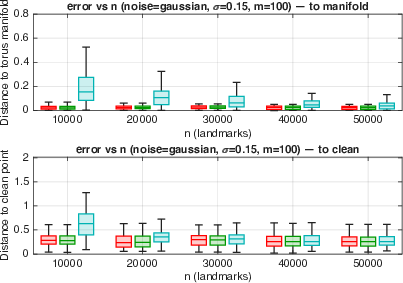

Figure 3: Robustness of denoising performance with respect to noise scale, sample size, and ambient dimension across canonical manifolds under Gaussian noise.

Empirical simulations show that with moderate privacy budgets {yi}i=1n⊂RD9, the DP denoiser achieves utility comparable to non-private analogues, and consistently preserves local and global geometric structure across severe high-curvature regimes and high d0.

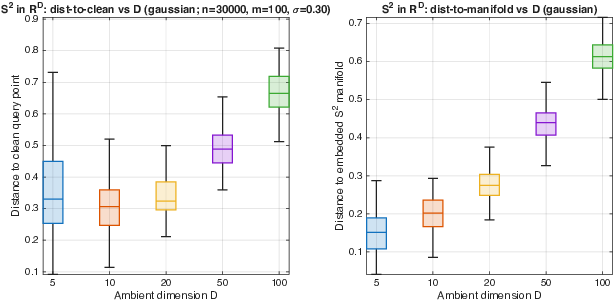

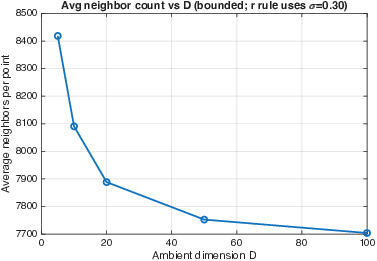

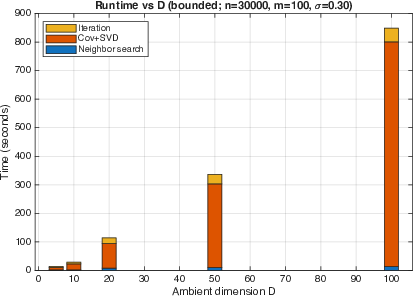

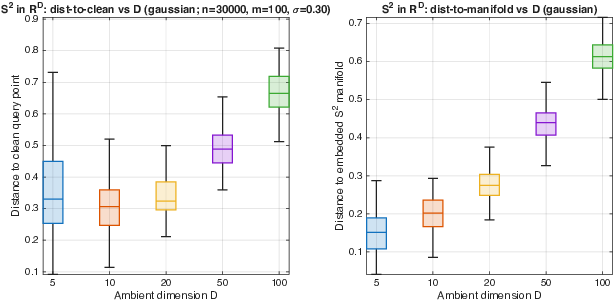

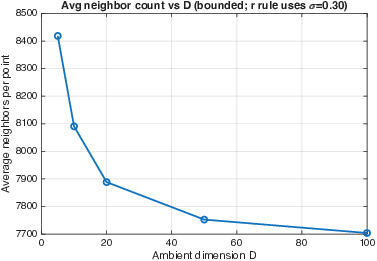

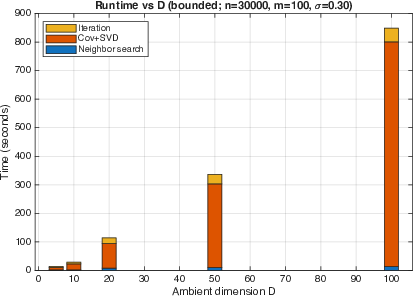

Figure 2: Scalability results on high-dimensional spheres demonstrate that error remains stable as d1 increases, with controlled computation cost and neighborhood size.

Empirical Analyses: Biomedical and Single-Cell Applications

The DP manifold denoising pipeline is validated on two high-dimensional real-world tasks:

UK Biobank Clinical Biomarkers

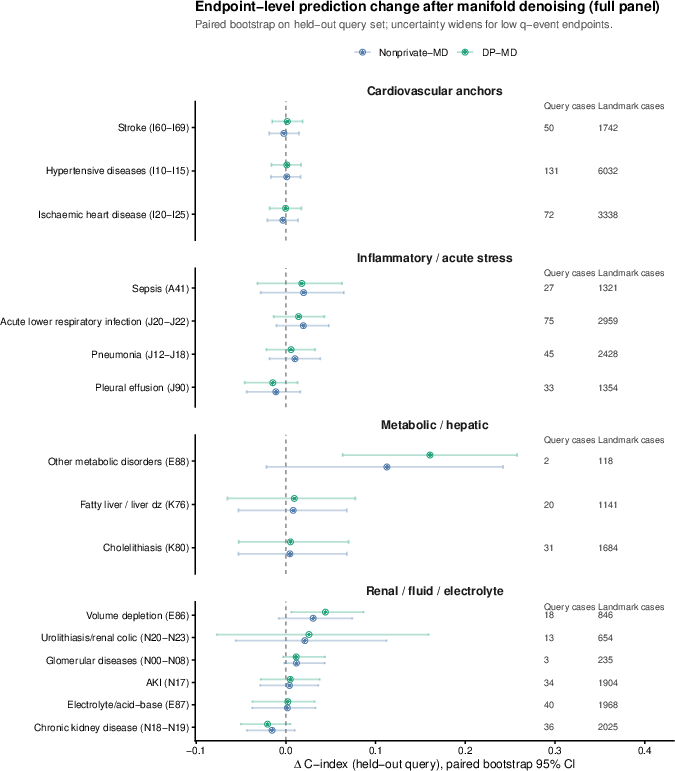

Application to UK Biobank biomarker profiles (60 dimensions, d2) demonstrates that DP-manifold denoising preserves local geometry, maintains subject-level stability, and yields consistent or improved discrimination in downstream Cox models for disease risk prediction, even under privacy constraints. The noise-induced privacy-utility tradeoff is quantified across clinically meaningful endpoints.

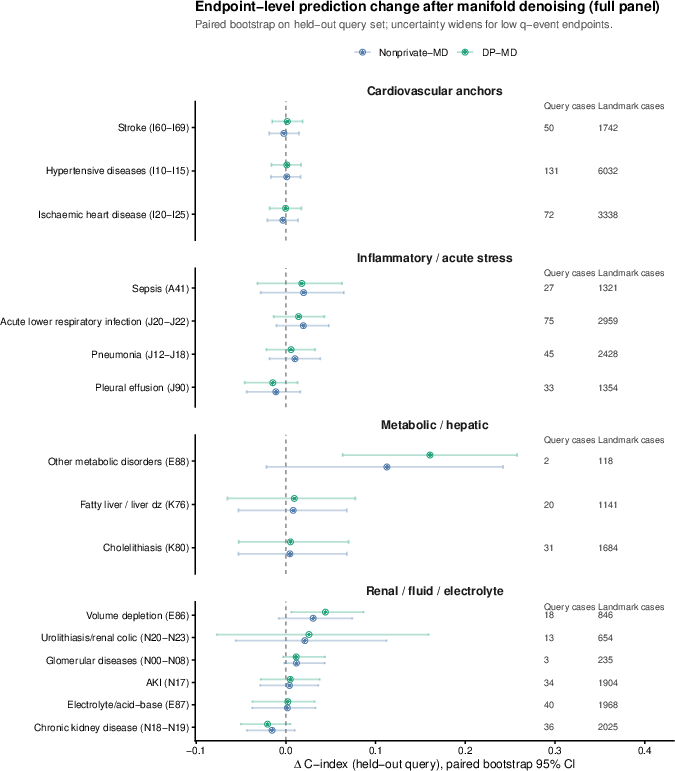

Figure 4: Manifold denoising of UK Biobank data maintains local geometric stability and supports robust downstream risk stratification across numerous disease endpoints.

Figure 6: Full ICD-coded endpoint panel; denoising shifts are consistent across all clinical outcomes of interest, indicating broad compatibility for risk modeling.

Single-Cell RNA-Seq

In single-cell RNA-seq datasets, DP denoising systematically improves clustering accuracy and normalized mutual information relative to input expression matrices, closely tracking non-private denoising in ARI and NMI across both homogeneous and complex tissues. The effect sizes are consistent across datasets with varying sparsity and cell-type composition.

Privacy Versus Utility: Tradeoff and Optimization

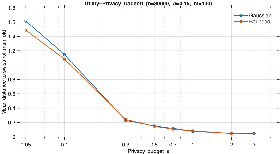

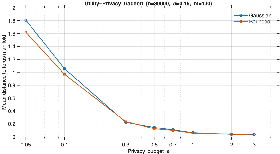

The modular zCDP-conforming budget allocation enables explicit management of the privacy-utility tradeoff. Empirical curves show sharp reductions in error as the privacy budget increases, with saturation observed at practical privacy values d3. The empirical results are stable with respect to underlying geometry complexity and data dimensionality.

Figure 5: Privacy–utility tradeoff curves on Swiss roll and torus demonstrate that utility loss from privacy saturates rapidly, with near-optimal accuracy attained for moderate budgets.

Theoretical and Practical Implications

This framework provides the first rigorous, non-asymptotic utility bounds for geometry-aware denoising on unknown manifolds under formal DP, with explicit separation of curvature, measurement, and privacy terms. Its mechanisms are both computationally efficient (achieving d4 complexity per iteration, sublinear in both d5 and d6 under parallelization) and directly transferable to regulated high-dimensional applications. Notably, geometric errors do not substantially inflate under privacy, provided neighborhood size and noise scales are set according to the theoretical recommendations.

Key theoretical insights:

- The sensitivity of geometric surrogates is governed by local neighborhood cardinality and bandwidth, following the minimax scaling for manifold estimation,

- Privacy noise does not induce structural collapse, as projector noise primarily perturbs only the normal correction rather than intrinsic geometry,

- The modular design allows implementation under general privacy accounting (standard DP, RDP, zCDP, or Gaussian DP).

Open Problems and Future Directions

The study remarks on several open challenges:

- Eliminating the leading-order noise dependence from the utility bound via higher order cancellation or improved geometric surrogates,

- Extending to unbounded (e.g., Gaussian) or heavy-tailed noise models, possibly integrating robust DP statistics,

- Defining and privately releasing global manifold representations (meshes, implicit functions) beyond discrete point corrections,

- Generalizing to privacy of both reference and queries (two-sided or interactive DP).

Conclusion

This work delivers a comprehensive framework for manifold denoising with rigorous differential privacy guarantees, achieving strong empirical and theoretical performance across synthetic, biomedical, and omics datasets. The methods are immediately deployable for privacy-sensitive geometric workflows and establish foundational limits for privacy-preserving inference under the manifold hypothesis.

Reference: "Differentially Private Manifold Denoising" (2604.00942)