- The paper introduces a unified quantitative framework to evaluate and optimize SRL configurations based on workspace partitioning and autonomy selection.

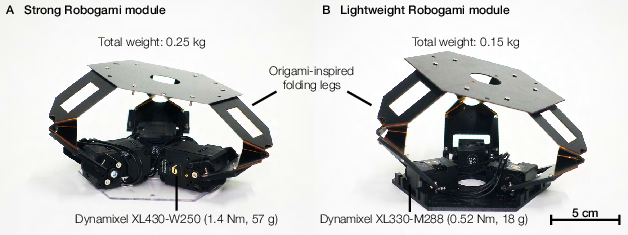

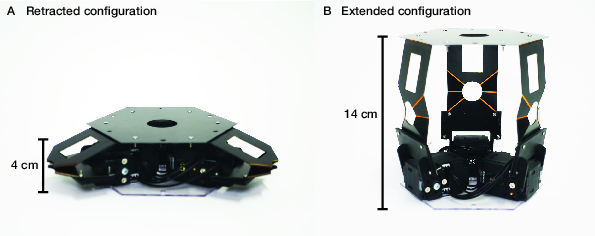

- Utilizing a modular origami-inspired 'Robogami Third Arm,' the study demonstrates significant morphological flexibility and payload adaptability.

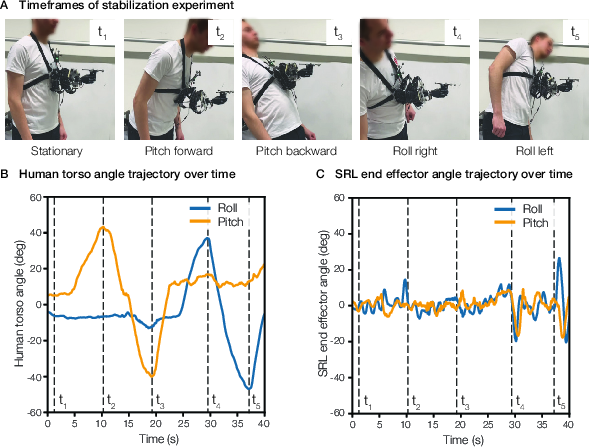

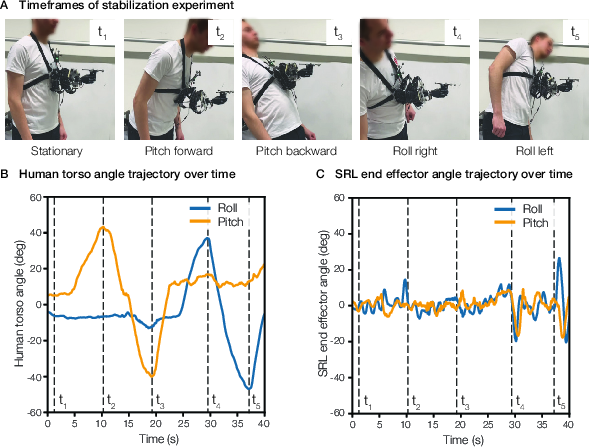

- Closed-loop QP-driven control enables robust human-in-the-loop stabilization with orientation errors below 5° in dynamic scenarios.

Reconfiguration of Supernumerary Robotic Limbs for Human Augmentation

Introduction

The study "Reconfiguration of supernumerary robotic limbs for human augmentation" (2603.29808) presents a formalized approach to maximizing human augmentation through dynamic physical and functional adaptation of supernumerary robotic limbs (SRLs). It systematically addresses the limitations of current SRL systems, particularly their limited configurability and poor adaptability to unstructured settings and diverse user needs. The paper introduces a unified quantitative framework for human augmentation analysis, validates a modular origami-inspired SRL (the "Robogami Third Arm"), and demonstrates scenario-dependent reconfiguration and control. Together these provide a theoretical and experimental foundation for task-adaptive, user-centric wearable augmentation.

Figure 1: SRLs enable user-centric assistance across diverse domains, adapting morphology and autonomy for flexible augmentation.

Human Augmentation Analysis

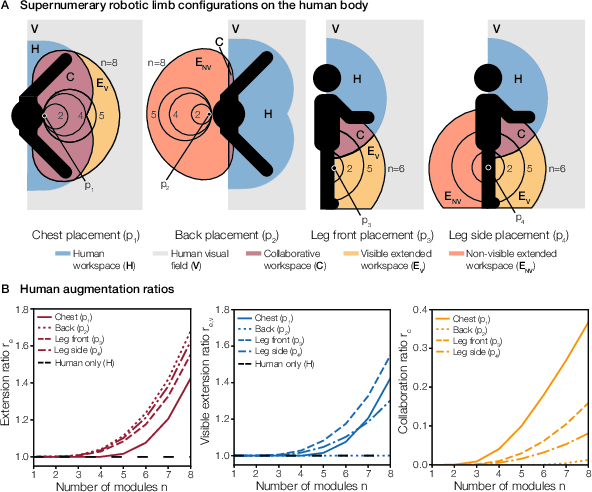

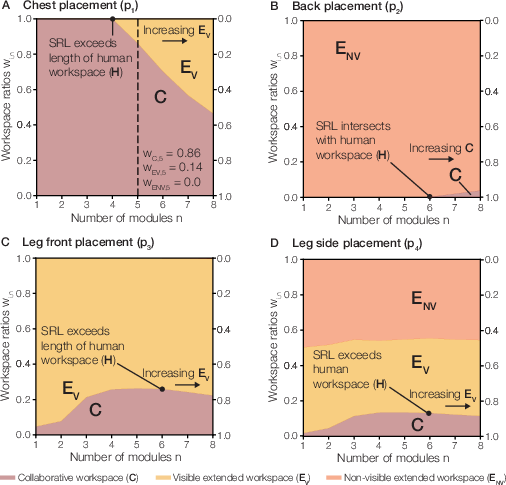

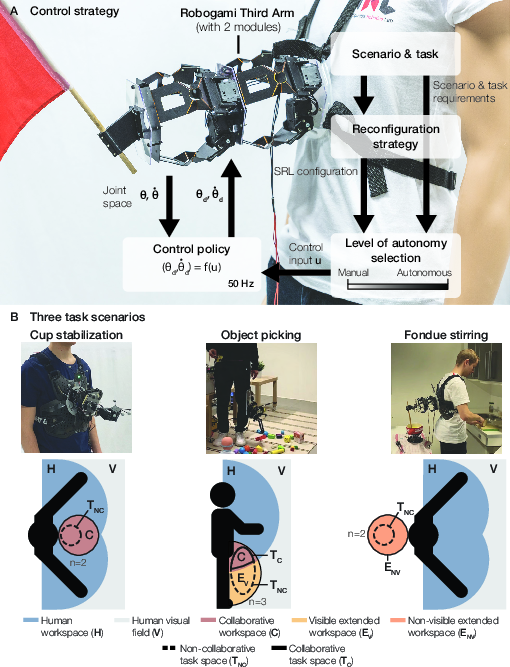

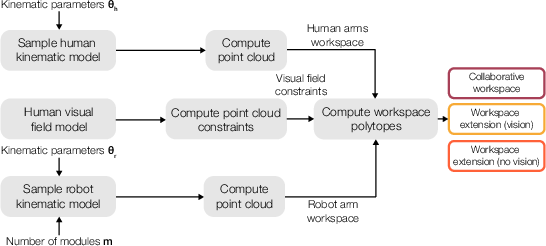

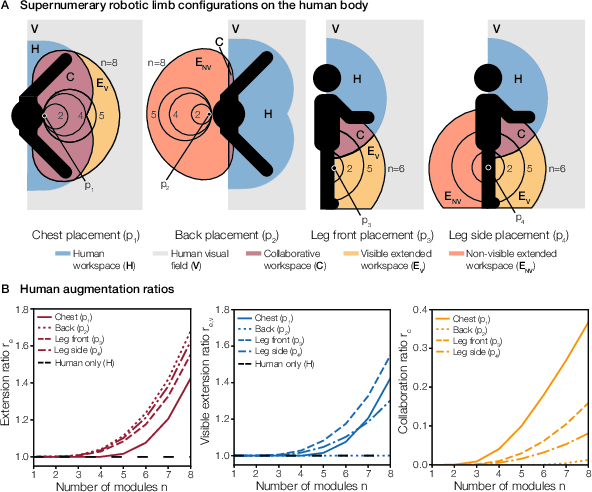

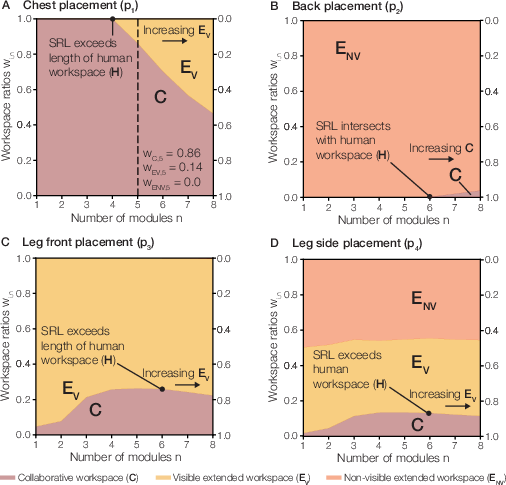

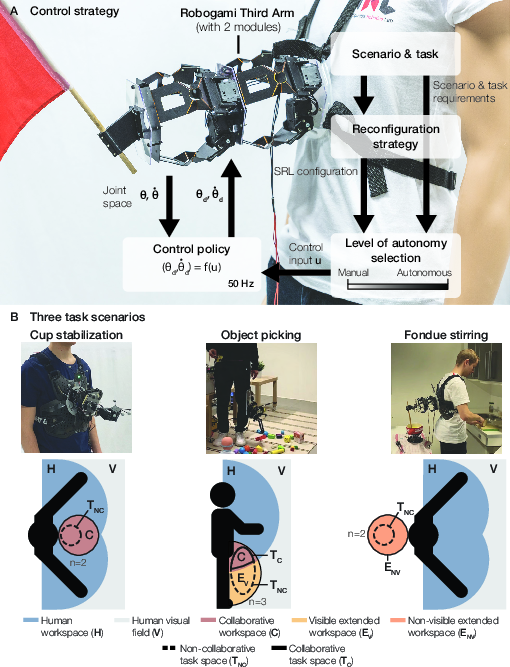

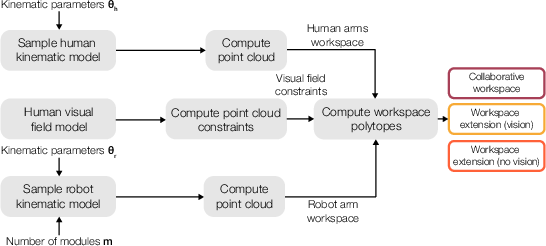

A principled workspace analysis quantifies how SRL configuration variables (number of modules, placement, and available human sensory feedback, primarily vision) affect the utility and controllability of the SRL system. Workspaces are classified as:

- Collaborative Workspace (C): the region where the SRL workspace overlaps the workspace of the user's hands, supporting coordinated manipulation.

- Visible Extended Workspace (EV): SRL workspace beyond the user's reach but inside the user's visual field, suitable for closed-loop manual control.

- Non-Visible Extended Workspace (ENV): SRL workspace beyond the user's reach and outside the user's visual field, requiring autonomous or semi-autonomous control.

Augmentation ratios (extension, collaboration, and visible extension) formalize these relationships and provide an analytic basis for selecting an SRL configuration. The decomposition also maps workspace topology directly onto the choice of autonomy (manual, autonomous, or mixed) and of morphology.

Figure 2: SRL configurations and augmentation ratios as a function of module count and placement, illustrating trade-offs between extension and collaboration.

Figure 3: Workspace ratio diagrams highlight how placement and the number of modules affect collaborative, visible, and non-visible extended workspaces.
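One way to write the partition formally is sketched below, assuming $W_H$ is the workspace of the user's hands, $W_S$ the SRL workspace, $V$ the visual field, and $\operatorname{vol}(\cdot)$ a volume measure; $E_V$ and $E_{NV}$ correspond to EV and ENV above. The symbols and especially the denominators of the ratios are assumptions chosen for illustration; the paper's exact normalization may differ. Note that $C$, $E_V$, and $E_{NV}$ are disjoint and together make up $W_S$.

```latex
\begin{aligned}
C &= W_S \cap W_H, \qquad E = W_S \setminus W_H, \qquad
E_V = E \cap V, \qquad E_{NV} = E \setminus V,\\[4pt]
\rho_{\text{ext}} &= \frac{\operatorname{vol}(E)}{\operatorname{vol}(W_H)}, \qquad
\rho_{\text{col}} = \frac{\operatorname{vol}(C)}{\operatorname{vol}(W_H)}, \qquad
\rho_{\text{vis}} = \frac{\operatorname{vol}(E_V)}{\operatorname{vol}(E)}.
\end{aligned}
```

Under this reading, a placement that raises $\rho_{\text{vis}}$ favors manual control, while a large $E_{NV}$ share pushes the configuration toward autonomy.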

Morphological and Structural Reconfiguration

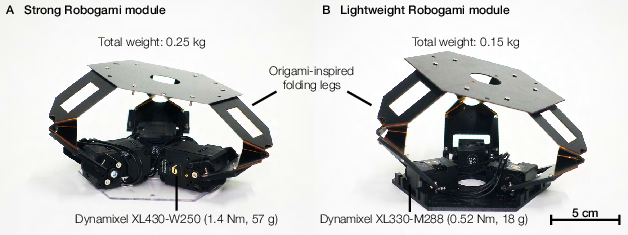

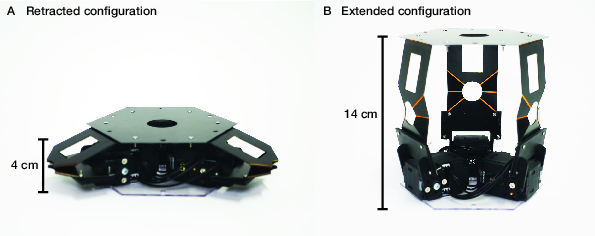

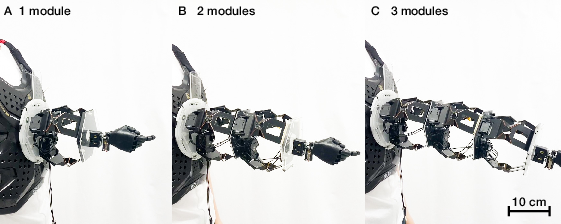

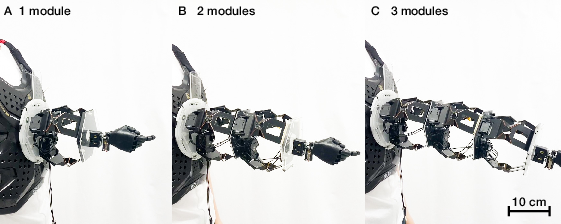

A highly modular, origami-inspired SRL, the Robogami Third Arm, serves as the implementation platform. Its series-parallel origami modular construction provides substantial morphological flexibility: individual modules can change their length (extension ratio up to 3.5), be stacked to tailor the workspace, or be collapsed for storage. Each module weighs 150–250 g, and demonstrated payloads reach up to 3 kg with a single module and 0.3 kg with a three-module stack.

Figure 4: The Robogami module—parallel origami kinematics driven by three individual actuators.

Figure 5: The module morphs between highly compact and extended states, central to rapid reconfiguration and deployment.

Figure 6: Stacked Robogami modules enable construction of SRLs with variable length and custom end-effectors for diverse tasks.

Adaptable Control and Autonomy Selection

The framework couples each configuration with a control strategy that selects a level on the autonomy spectrum (manual, autonomous, or mixed) according to the workspace classification and task requirements. In the collaborative and visible extended workspaces, direct manual control of multiple DoF (via a custom 3-DoF joystick or intention-detection interfaces) is favored. In the non-visible extended workspace, full autonomy is selected, which relieves the operator's cognitive load and reduces the risk of errors caused by missing visual feedback.

Figure 7: The adaptable control strategy integrates configuration, autonomy selection, and task-driven policy execution, demonstrated in everyday scenarios.
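The mapping from workspace class to autonomy level can be sketched as a small decision rule. This is a hypothetical encoding: the region and mode names mirror the text, but the rule for "mixed" (a task whose targets span both visible and non-visible regions) is an assumption, and the paper's policy also weighs task requirements that this sketch ignores.

```python
from enum import Enum
from typing import Iterable


class Region(Enum):
    """Workspace classes from the augmentation analysis."""
    C = "collaborative"
    EV = "visible extended"
    ENV = "non-visible extended"


class Autonomy(Enum):
    MANUAL = "manual"
    AUTONOMOUS = "autonomous"
    MIXED = "mixed"


def select_autonomy(task_regions: Iterable[Region]) -> Autonomy:
    """Pick an autonomy level from the regions a task's targets fall in.

    Hypothetical rule (an assumption, not the paper's exact policy):
    - all targets in C or EV -> MANUAL (user can see and steer the SRL)
    - all targets in ENV     -> AUTONOMOUS (no visual feedback available)
    - targets in both        -> MIXED
    """
    regions = set(task_regions)
    if not regions:
        raise ValueError("task must have at least one target region")
    has_visible = bool(regions & {Region.C, Region.EV})
    has_hidden = Region.ENV in regions
    if has_visible and has_hidden:
        return Autonomy.MIXED
    return Autonomy.AUTONOMOUS if has_hidden else Autonomy.MANUAL
```

For example, `select_autonomy([Region.EV, Region.ENV])` returns `Autonomy.MIXED`: the user steers the visible part of the task while the SRL handles the part behind them.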

Closed-Loop Control Policy and Experimental Validation

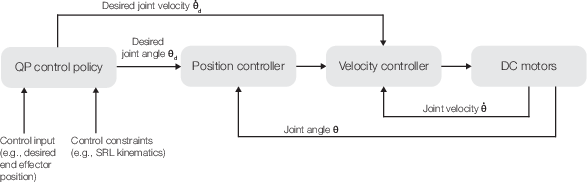

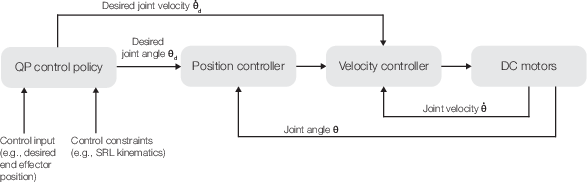

Closed-loop control of dynamic, morphologically flexible SRLs is handled by a quadratic programming (QP) approach. The policy handles redundant kinematics, modular stacking, and physical constraints (joint ranges, self-collision, internal chain closure), and is agnostic to the source of commands (manual input or an autonomous planner). Experimental validation includes human-in-the-loop stabilization tasks such as holding a cup under disturbances, with orientation errors below 5° despite significant wearer motion, supporting the claims of robustness and safety.

Figure 8: The SRL maintains target end-effector orientation under human-applied disturbances, validating effective closed-loop control.

Figure 9: Control policy flowchart for safe QP-driven joint regulation and mode switching.
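To make the QP formulation concrete, here is a minimal sketch of a joint-velocity QP of the kind described above, reduced to a planar toy: three revolute joints whose angles add to the end-effector orientation, a base that rotates with the wearer, a damped least-squares objective, and joint ranges plus a rate limit as box constraints. The toy model, the damping `lam`, the disturbance profile, and the projected-gradient solver are all assumptions for illustration, not the paper's controller, which also enforces self-collision and chain-closure constraints omitted here.

```python
import math
from typing import List, Sequence


def solve_box_qp(J: Sequence[Sequence[float]], e: Sequence[float], lam: float,
                 lo: Sequence[float], hi: Sequence[float],
                 iters: int = 200) -> List[float]:
    """Minimize 0.5*||J dq - e||^2 + 0.5*lam*||dq||^2 subject to lo <= dq <= hi.

    Projected gradient descent with step 1/L, where L (squared Frobenius norm
    of J plus lam) upper-bounds the Hessian's largest eigenvalue, so the
    iteration converges for this convex, box-constrained QP.
    """
    m, n = len(J), len(lo)
    L = sum(J[i][j] ** 2 for i in range(m) for j in range(n)) + lam
    dq = [min(max(0.0, lo[j]), hi[j]) for j in range(n)]
    for _ in range(iters):
        r = [sum(J[i][j] * dq[j] for j in range(n)) - e[i] for i in range(m)]
        grad = [sum(J[i][j] * r[i] for i in range(m)) + lam * dq[j] for j in range(n)]
        dq = [min(max(dq[j] - grad[j] / L, lo[j]), hi[j]) for j in range(n)]
    return dq


def stabilize(steps: int = 400, dq_max: float = 0.2,
              q_min: float = -1.5, q_max: float = 1.5) -> float:
    """Toy cup-holding loop: keep the end-effector orientation at 0 rad while
    the SRL base rotates with the wearer. Returns the worst error in degrees."""
    q = [0.0, 0.0, 0.0]                 # three revolute joints (rad)
    J = [[1.0, 1.0, 1.0]]               # d(phi)/dq for phi = base + q1 + q2 + q3
    worst = 0.0
    for k in range(steps):
        base = 0.3 * math.sin(0.05 * k)          # hypothetical wearer motion (rad)
        err = 0.0 - (base + sum(q))              # measured orientation error
        worst = max(worst, abs(math.degrees(err)))
        lo = [max(-dq_max, q_min - qi) for qi in q]   # rate limit and joint range
        hi = [min(dq_max, q_max - qi) for qi in q]
        dq = solve_box_qp(J, [err], lam=1e-3, lo=lo, hi=hi)
        q = [qi + d for qi, d in zip(q, dq)]
    return worst
```

The dependency-free projected-gradient solver only keeps the sketch self-contained; a real controller would typically call a dedicated QP solver. The error that `stabilize()` reports reflects this toy model only and says nothing about the hardware result.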

Hardware and Implementation Details

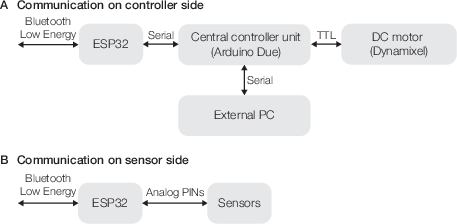

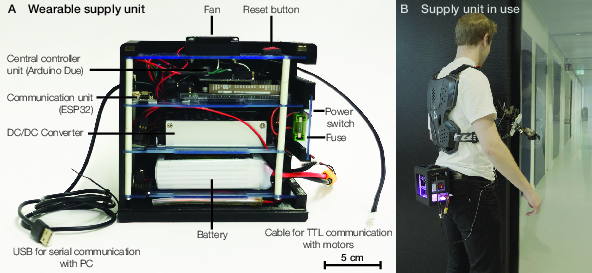

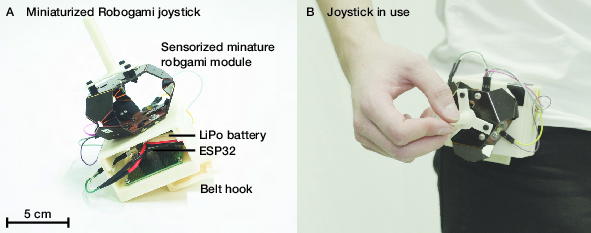

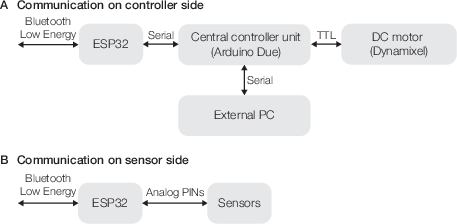

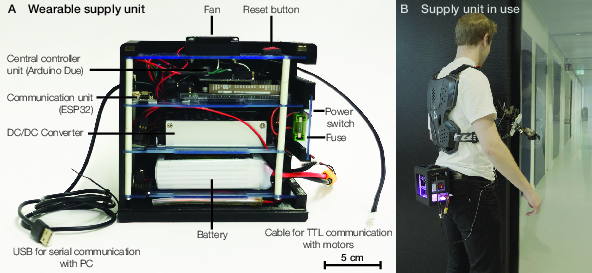

The Robogami Third Arm’s electronic architecture supports untethered operation: wireless BLE-based control interfaces and a wearable battery allow full user mobility. Communication between the high-level QP controller and the low-level joint drivers is synchronized for real-time actuation, ensuring that commands are delivered and executed safely.

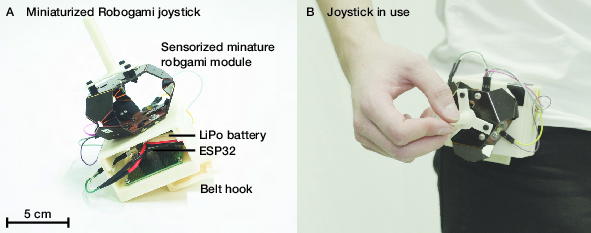

Figure 10: Wearable, sensorized joystick enables intuitive manual control within collaborative/visible extended workspaces.

Figure 11: ESP32-based communication architecture joins user interface inputs and high-level control.

Figure 12: Battery-powered, wearable supply unit supports untethered multi-hour operation in real-world environments.
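The wire format between the controller and the joint drivers is not specified here. As an illustration of how safe command delivery can be enforced at the application level, the sketch below uses a hypothetical packet scheme: a sequence number so stale or reordered commands are dropped, and a CRC-32 so corrupted ones are rejected. The field layout, units (centidegrees), and function names are assumptions, not the Robogami Third Arm's actual protocol.

```python
import struct
import zlib
from typing import List, Optional, Tuple

# Hypothetical layout (little-endian): uint16 sequence number, three int16
# joint targets in centidegrees, then a uint32 CRC-32 of the preceding bytes.
_BODY = struct.Struct("<Hhhh")
_CRC = struct.Struct("<I")


def encode_command(seq: int, joints_deg: List[float]) -> bytes:
    """Pack a sequence number and three joint targets (degrees) into a packet."""
    body = _BODY.pack(seq & 0xFFFF, *(round(a * 100) for a in joints_deg))
    return body + _CRC.pack(zlib.crc32(body))


def decode_command(packet: bytes,
                   last_seq: Optional[int]) -> Optional[Tuple[int, List[float]]]:
    """Return (seq, joint targets in degrees), or None if the packet is
    malformed, corrupted, or not newer than the last accepted command."""
    if len(packet) != _BODY.size + _CRC.size:
        return None
    body = packet[:_BODY.size]
    (crc,) = _CRC.unpack(packet[_BODY.size:])
    if zlib.crc32(body) != crc:
        return None
    seq, *raw = _BODY.unpack(body)
    # Accept only sequence numbers that advance modulo 2**16 (wrap-around safe).
    if last_seq is not None and not 0 < (seq - last_seq) % 0x10000 < 0x8000:
        return None
    return seq, [r / 100 for r in raw]
```

A receiving joint driver would keep the last accepted sequence number and simply hold its previous target whenever `decode_command` returns `None`.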

Systematic payload tests quantify the limits for each module count, with strong modules maintaining performance as the stack grows. These limits are key for practical assistive deployment in everyday and industrial scenarios.

Figure 13: Payload versus module count, mapping mechanical limitations onto reconfiguration decisions for task planning.
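As a sketch of how measured limits could feed a reconfiguration decision, the helper below picks the smallest stack that covers both a required reach and a required payload. It uses only the two payload points quoted above (3 kg for one module, 0.3 kg for three) and the 3.5 extension ratio. Interpreting that ratio as extended over compact length, treating reach as the straight-line length of the stack, and the compact module length itself are assumptions; the function name is hypothetical.

```python
from typing import Mapping, Optional

EXTENSION_RATIO = 3.5                  # max extended/compact length per module (assumed meaning)
PAYLOAD_LIMIT_KG = {1: 3.0, 3: 0.3}    # only the data points quoted in the text


def choose_module_count(reach_m: float, payload_kg: float, compact_len_m: float,
                        limits: Mapping[int, float] = PAYLOAD_LIMIT_KG) -> Optional[int]:
    """Return the smallest tabulated stack whose fully extended length covers
    `reach_m` and whose payload limit covers `payload_kg`, else None.

    `compact_len_m` is a hypothetical per-module compact length.
    """
    for n in sorted(limits):
        max_len = n * compact_len_m * EXTENSION_RATIO
        if max_len >= reach_m and limits[n] >= payload_kg:
            return n
    return None
```

With a hypothetical 0.1 m compact module, a 0.8 m reach with a 0.2 kg object needs three modules, while the same reach with a 1 kg object has no valid tabulated stack; this is the kind of trade-off between extension and payload that the payload measurements make explicit.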

Workspace Computation and Methodology

Workspace computation uses dense kinematic sampling, intersection computation, and convex polytope reconstruction to partition the SRL, human, and visual workspaces. Parametrized anthropometric data allow the partition to be adapted to individual users.

Figure 14: Partitioning of spaces with combined human/robot kinematics and user visual field constraints, producing actionable volumetric data for configuration.
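A minimal 2-D sketch of the sampling-and-intersection step follows. It assumes planar two-link arms with full joint rotation for both the user and the SRL, a wedge-shaped visual field, and a uniform grid of cells; grid occupancy is swapped in for the paper's convex polytope reconstruction to keep the example dependency-free. All geometry (link lengths, mount points, field of view) is hypothetical, and the ratio normalizations (extension and collaboration relative to the hand workspace, visible extension relative to the extended workspace) are assumptions as well.

```python
import math
from typing import Callable, Dict, Set, Tuple

Cell = Tuple[int, int]
Point = Tuple[float, float]


def sample_workspace(base: Point, l1: float, l2: float,
                     res: float, n: int = 200) -> Set[Cell]:
    """Densely sample the joint space of a planar 2-link arm (full rotation
    assumed) and return the grid cells (side `res`) its end point visits."""
    cells: Set[Cell] = set()
    for i in range(n):
        a = 2 * math.pi * i / n
        for j in range(n):
            ab = a + 2 * math.pi * j / n          # absolute angle of link 2
            x = base[0] + l1 * math.cos(a) + l2 * math.cos(ab)
            y = base[1] + l1 * math.sin(a) + l2 * math.sin(ab)
            cells.add((math.floor(x / res), math.floor(y / res)))
    return cells


def view_test(eye: Point, gaze_deg: float, half_fov_deg: float,
              res: float) -> Callable[[Cell], bool]:
    """Return a predicate: does the cell centre lie in the visual wedge?"""
    def visible(cell: Cell) -> bool:
        dx = (cell[0] + 0.5) * res - eye[0]
        dy = (cell[1] + 0.5) * res - eye[1]
        off = (math.degrees(math.atan2(dy, dx)) - gaze_deg + 180) % 360 - 180
        return abs(off) <= half_fov_deg
    return visible


def partition(human: Set[Cell], srl: Set[Cell], visible: Callable[[Cell], bool]
              ) -> Tuple[Set[Cell], Set[Cell], Set[Cell]]:
    """Split the SRL cells into collaborative, visible and non-visible extended."""
    C = srl & human                        # overlaps the user's hand workspace
    E = srl - human                        # extends beyond the user's reach
    EV = {c for c in E if visible(c)}
    return C, EV, E - EV


def ratios(human: Set[Cell], C: Set[Cell], EV: Set[Cell],
           ENV: Set[Cell]) -> Dict[str, float]:
    """Cell counts are proportional to area, so count ratios are area ratios."""
    n_ext = len(EV) + len(ENV)             # EV and ENV are disjoint
    return {
        "extension": n_ext / len(human),
        "collaboration": len(C) / len(human),
        "visible_extension": len(EV) / n_ext if n_ext else 0.0,
    }
```

A usage example with a user facing +y, a right arm mounted at the shoulder, and an SRL mounted behind the user (all values hypothetical):

```python
res = 0.02                                             # 2 cm cells
human = sample_workspace((0.2, 0.0), 0.3, 0.3, res)   # user's right arm
srl = sample_workspace((0.0, -0.1), 0.4, 0.4, res)    # back-mounted SRL
C, EV, ENV = partition(human, srl, view_test((0.0, 0.0), 90.0, 60.0, res))
print(ratios(human, C, EV, ENV))
```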

Discussion and Implications

The framework departs fundamentally from fixed-morphology, single-placement SRL paradigms by integrating analytics, design, and control to achieve versatility. Its task-relevant workspace analysis and adaptability support deployment in unstructured, multipurpose daily environments, including collaborative manipulation, multitasking, and specialized domains such as surgery and industry. A limitation is the focus on visual feedback; future extensions must generalize to multisensory feedback and account for changing user and task postures to achieve more comprehensive augmentation.

From a theoretical perspective, the explicit separation of workspace utility and controllability, mapped onto physical configurations and autonomy strata, provides a generalizable model for wearable augmentation, relevant for embodied AI, human-robot teaming, and collaborative robotics. Practically, the modular hardware and validated control architecture can underpin next-generation deployable assistive systems.

Conclusion

This work delivers a rigorous, quantitative framework for SRL reconfiguration, grounded in workspace analytics and validated with an origami-inspired, modular robotic arm. By jointly optimizing morphology, placement, and control, the approach enables adaptive, user-centric human augmentation suitable for unstructured, real-world tasks. This methodology establishes essential groundwork for future SRLs capable of general-purpose, dynamic assistive operation and provides a template for continued research at the intersection of soft robotics, wearable augmentation, and adaptive autonomy in human-robot interaction.