- The paper introduces IQPIR, which moves beyond sole reliance on ground truth by using external NR-IQA priors to guide restoration toward optimal perceptual quality.

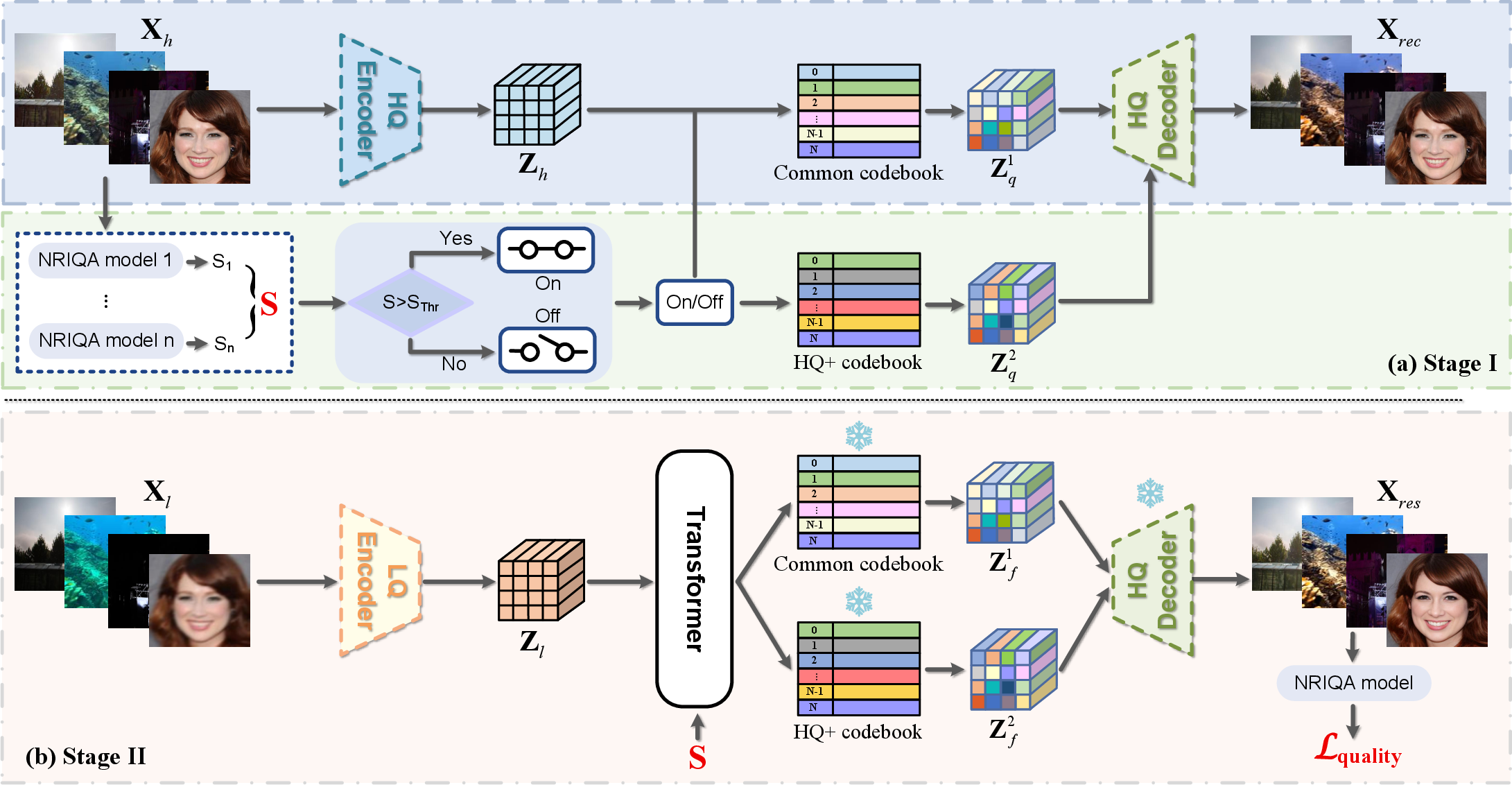

- It employs a dual-codebook architecture and a score-conditioned Transformer to disentangle high-fidelity details from inherent dataset noise.

- Experimental results across diverse domains validate the plug-and-play quality optimization, showing consistent performance gains over state-of-the-art methods.

Leveraging Image Quality Priors for Robust Real-World Image Restoration: An Analysis of IQPIR

Introduction and Motivation

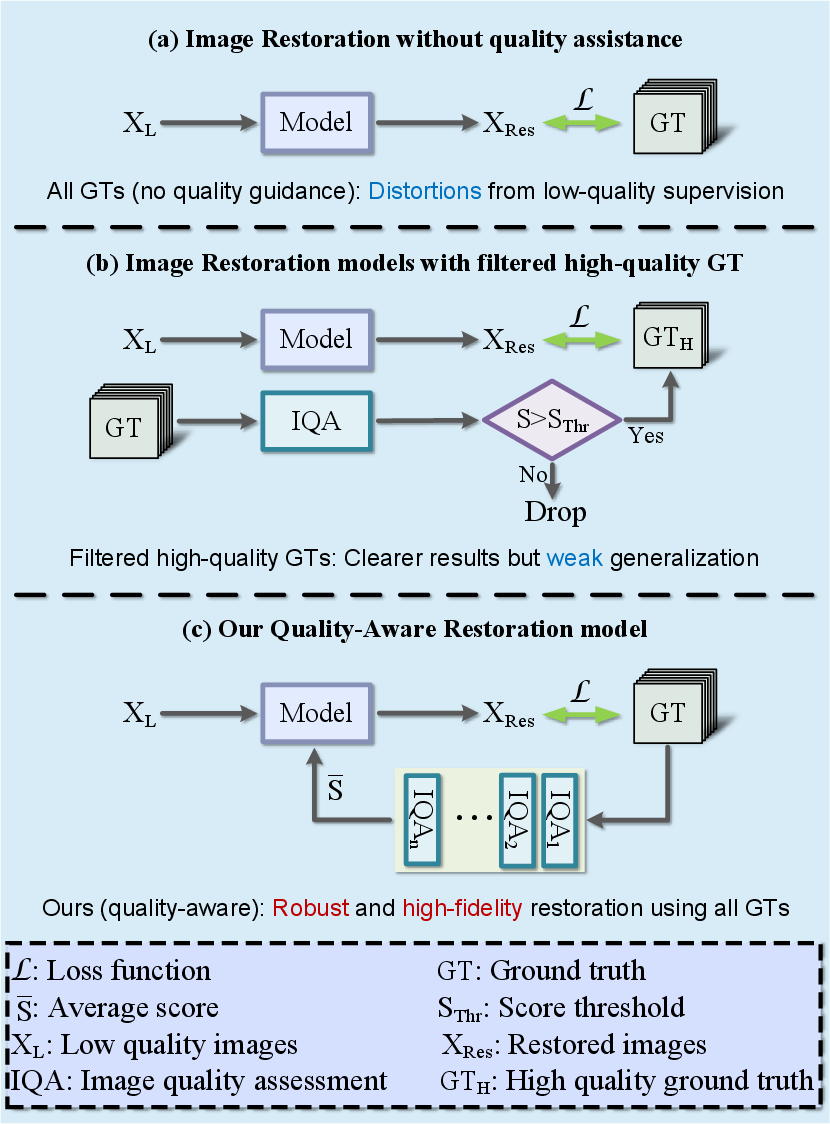

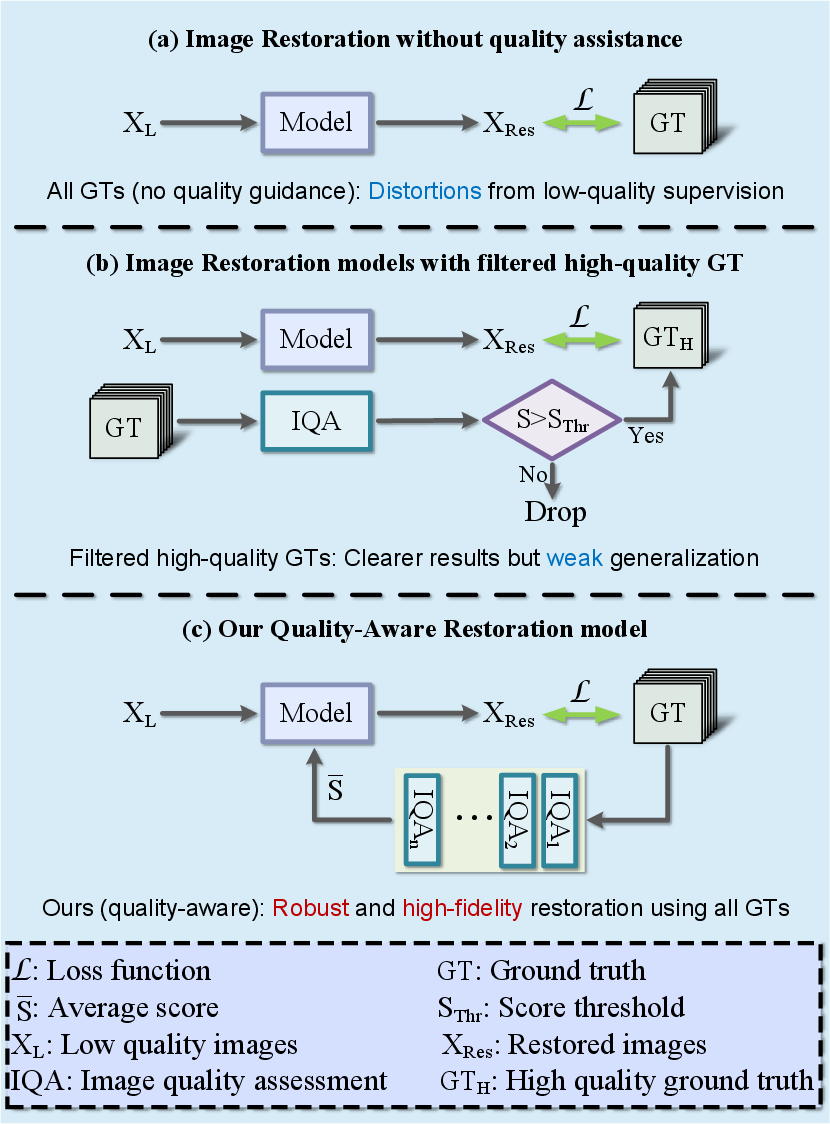

Supervised real-world image restoration traditionally relies on high-quality ground truth (GT) to guide reconstruction from low-quality (LQ) inputs. However, standard GT datasets often exhibit intrinsic quality inconsistencies and residual degradations, leading to network convergence toward average, rather than optimal, perceptual quality. The paper "Beyond Ground-Truth: Leveraging Image Quality Priors for Real-World Image Restoration" (2603.29773) challenges this paradigm, arguing that optimal perceptual quality cannot be attained by GT matching alone.

This work systematically integrates external Image Quality Priors (IQPs), derived from advanced No-Reference Image Quality Assessment (NR-IQA) models, into the restoration pipeline via a novel framework, IQPIR. The primary innovations are a dual-codebook architecture, a score-conditioned Transformer, and perceptual quality optimization in a discrete latent space. The approach explicitly steers restoration toward the highest attainable perceptual fidelity, decoupling the restoration objective from imperfect GT.

Methodology

Image Quality Prior as Auxiliary Supervision

Unlike classical schemes that regard GT as perfect, IQPIR leverages NR-IQA scores to implement an external perceptual quality signal. These scores, obtained from an ensemble of state-of-the-art IQA models (including MLLM-based techniques), inform both the network’s representation learning and optimization objectives. The architecture adopts two routes for integrating IQP: quality conditioning and perceptual objectives.
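To make the idea of an ensemble-derived quality prior concrete, here is a minimal sketch of one way several NR-IQA outputs could be merged into a single score. The model names, score ranges, and the min-max-then-average rule are all assumptions for illustration; the source does not specify how the ensemble is aggregated.

```python
import numpy as np

def ensemble_quality_score(raw_scores, score_ranges):
    """Min-max normalize each model's score to [0, 1], then average them.

    raw_scores:   dict mapping a (hypothetical) model name to its raw score
    score_ranges: dict mapping the same names to (lowest, highest) possible score
    """
    normalized = []
    for name, value in raw_scores.items():
        lo, hi = score_ranges[name]
        # Clip so that out-of-range predictions cannot dominate the average.
        normalized.append(np.clip((value - lo) / (hi - lo), 0.0, 1.0))
    return float(np.mean(normalized))

# Hypothetical models with different native score scales (assumed, not from the paper).
ranges = {"model_a": (0.0, 100.0), "model_b": (1.0, 5.0), "model_c": (0.0, 1.0)}
scores = {"model_a": 80.0, "model_b": 4.0, "model_c": 0.7}

prior = ensemble_quality_score(scores, ranges)
print(prior)
```

Putting every model on a common scale before averaging keeps a model with a wide native range from outweighing the others.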

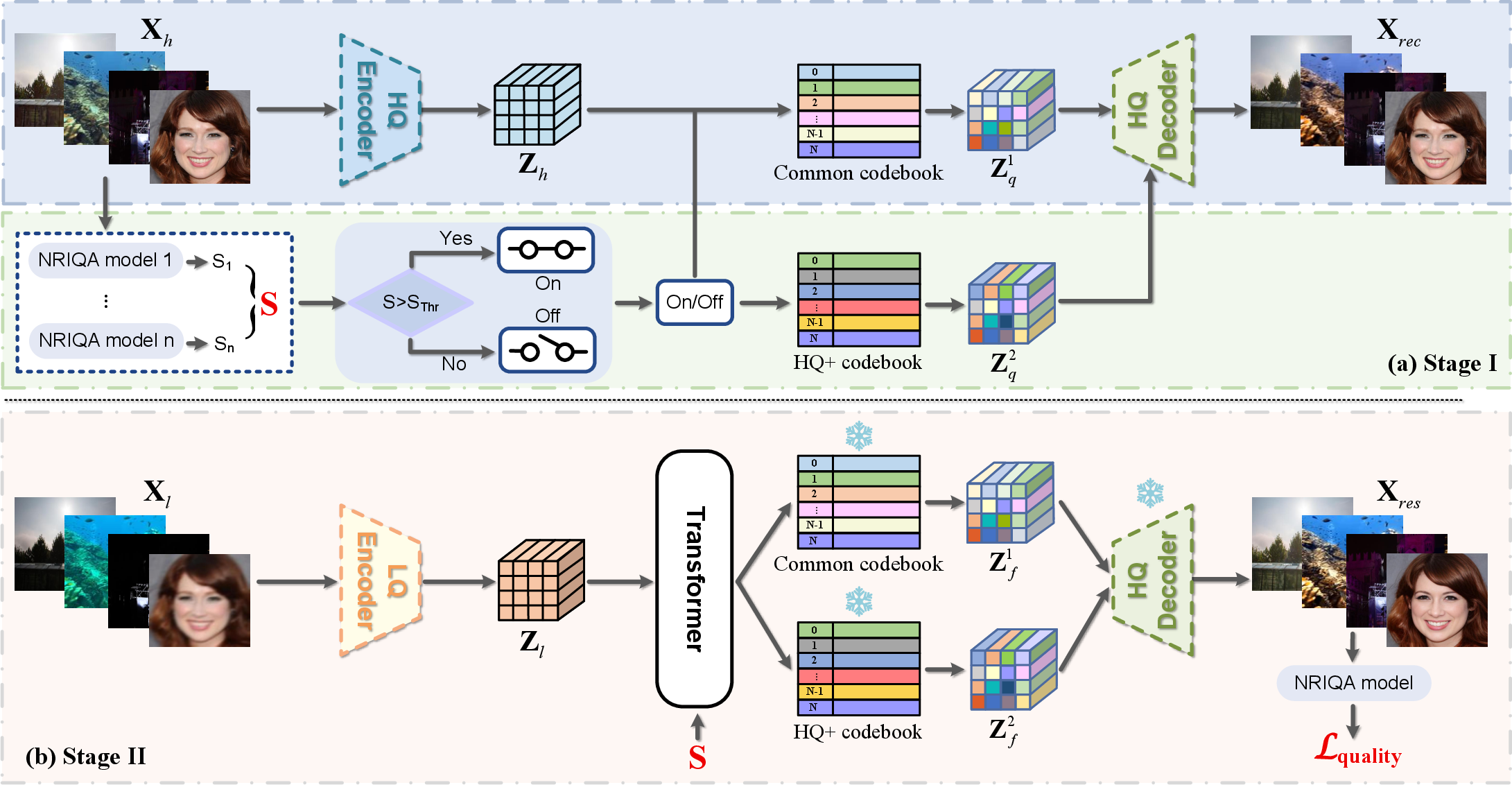

Dual-Codebook Architecture

To explicitly model both generic structure and fine content, IQPIR deploys dual codebooks within a vector-quantized (VQ) representation space. A common codebook captures robust, low-level patterns, while an HQ+ codebook specializes in encoding features from top-quality samples, as defined by an IQA-derived threshold. Images exceeding this threshold have their representations quantized jointly by both codebooks; others use only the common codebook. For images above the threshold, the final latent feature is a weighted sum of the two quantized representations.

Figure 2: The IQPIR framework utilizes a dual-codebook architecture and score-conditioned code prediction to integrate perceptual quality priors for restoration.

This separation enables the model to synthesize high-fidelity details while preserving general structural faithfulness.
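The dual-codebook routing described above can be sketched as follows. This is an illustrative approximation, not the authors' implementation: the nearest-neighbor lookup is the standard VQ operation, but the threshold value, the `hq_weight` parameter, and the convex-combination form of the weighted sum are assumptions.

```python
import numpy as np

def quantize(features, codebook):
    """Standard VQ lookup: replace each feature vector with its nearest code."""
    # Squared Euclidean distances, shape (num_tokens, num_codes).
    dists = ((features[:, None, :] - codebook[None, :, :]) ** 2).sum(axis=-1)
    indices = dists.argmin(axis=1)
    return codebook[indices], indices

def dual_codebook_quantize(features, common_cb, hq_cb, quality,
                           threshold=0.8, hq_weight=0.5):
    """Route through one or both codebooks depending on the quality score.

    threshold and hq_weight are hypothetical values chosen for illustration.
    """
    z_common, _ = quantize(features, common_cb)
    if quality <= threshold:
        # Ordinary samples use the common codebook only.
        return z_common
    # Top-quality samples are additionally quantized by the HQ+ codebook.
    z_hq, _ = quantize(features, hq_cb)
    return (1.0 - hq_weight) * z_common + hq_weight * z_hq

rng = np.random.default_rng(0)
features = rng.normal(size=(4, 8))      # 4 latent tokens of dimension 8
common_cb = rng.normal(size=(16, 8))    # common codebook with 16 entries
hq_cb = rng.normal(size=(16, 8))        # HQ+ codebook with 16 entries

z_low = dual_codebook_quantize(features, common_cb, hq_cb, quality=0.3)
z_high = dual_codebook_quantize(features, common_cb, hq_cb, quality=0.95)
print(z_low.shape, z_high.shape)
```

Keeping the common codebook active for every sample means the HQ+ path only has to add detail, which matches the stated goal of preserving structural faithfulness.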

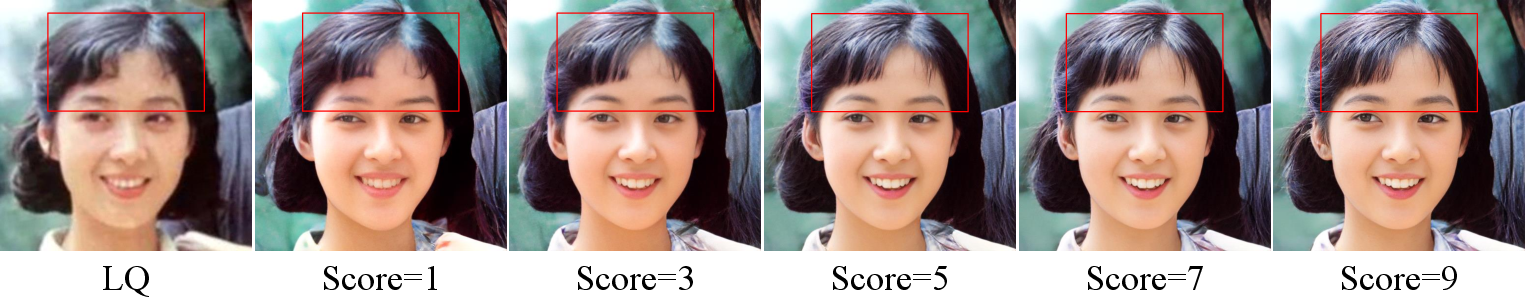

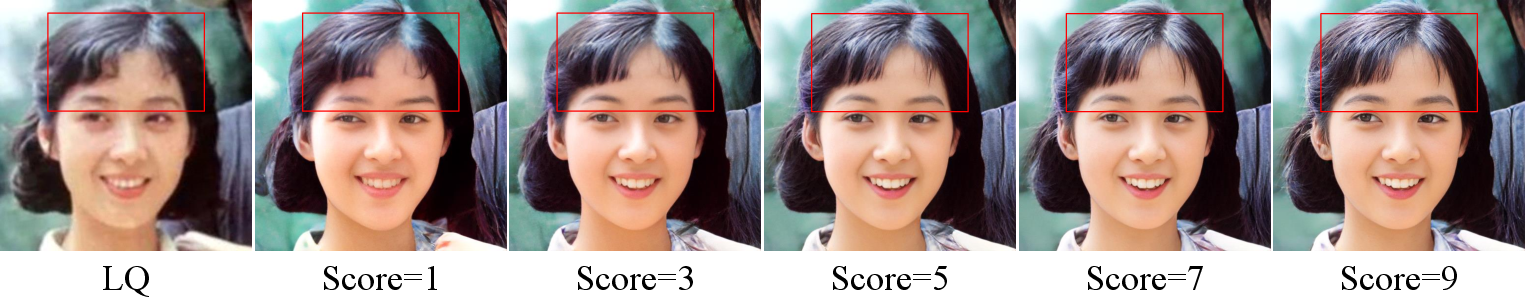

IQPIR’s code prediction employs a Transformer that conditions on a learned embedding of the NR-IQA quality score. By directly adding the score embedding to encoded representations, the Transformer is compelled to learn and exploit the correlation between input image features and targeted quality levels. At inference, setting the score condition to its maximum explicitly directs the model to reconstruct the most perceptually faithful output, regardless of the GT reference.
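A minimal sketch of additive score conditioning is shown below. It assumes the quality score lies in [0, 1] and is discretized into bins before an embedding lookup; the binning scheme, `NUM_BINS`, and the function names are hypothetical, and the embedding table is random here whereas in practice it would be learned.

```python
import numpy as np

NUM_BINS = 10   # hypothetical number of quality levels
DIM = 8         # token embedding dimension used for this toy example

def score_to_bin(score, num_bins=NUM_BINS):
    """Map a score in [0, 1] to an integer bin index in [0, num_bins - 1]."""
    return int(np.clip(np.floor(score * num_bins), 0, num_bins - 1))

def condition_tokens(tokens, score, embedding_table):
    """Add the score embedding to every token (broadcast over the sequence)."""
    bin_index = score_to_bin(score, embedding_table.shape[0])
    return tokens + embedding_table[bin_index]

rng = np.random.default_rng(0)
embedding_table = rng.normal(size=(NUM_BINS, DIM))  # stands in for learned weights
tokens = rng.normal(size=(5, DIM))                  # encoded LQ features, 5 tokens

# Training: condition on the quality score measured for the GT image.
train_input = condition_tokens(tokens, 0.62, embedding_table)
# Inference: condition on the maximum score to request the best attainable quality.
infer_input = condition_tokens(tokens, 1.0, embedding_table)
print(train_input.shape, infer_input.shape)
```

The conditioned tokens would then be passed to the Transformer that predicts code indices; only the conditioning step is shown here.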

Figure 3: Structural comparison of conventional, IQA-filtered, and IQPIR training paradigms. Only IQPIR utilizes IQA as an integrated condition for both data selection and network guidance.

Discrete Representation-Based Quality Optimization

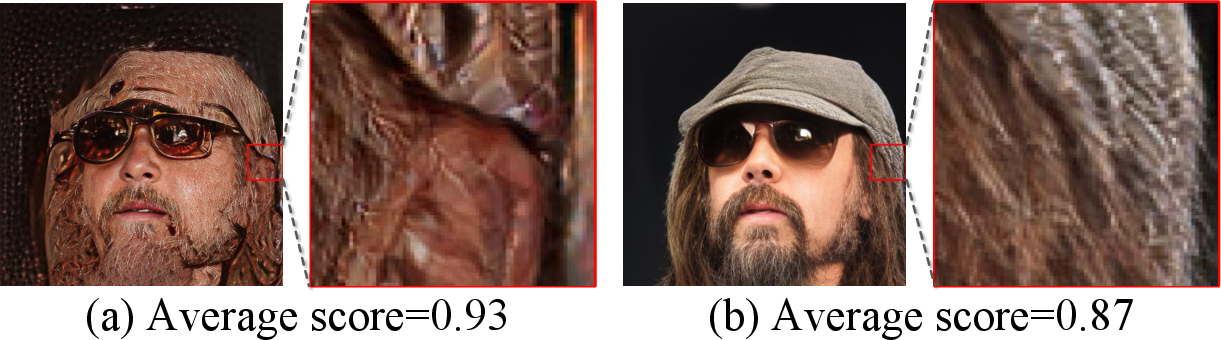

One of the core insights is that using discrete codebooks mitigates the well-known over-optimization artifacts that plague continuous representations when NR-IQA-based objectives are used directly. In continuous spaces, direct IQA optimization can cause the network to exploit weaknesses in the IQA models, producing perceptually implausible but high-scoring outputs (reward hacking). The discrete codebook restricts the solution space, dramatically reducing adversarial examples and ensuring that maximizing IQA score aligns with human perception.
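The following toy example illustrates why restricting outputs to a codebook bounds what a score-maximizing optimizer can reach. It is not the paper's procedure: `flawed_scorer` is a deliberately exploitable stand-in for an IQA model, and all names and values are assumptions.

```python
import numpy as np

def flawed_scorer(x):
    """Toy exploitable scorer: the score grows without bound as |x| grows."""
    return float(np.sum(x ** 2))

def continuous_ascent(x0, steps=50, lr=0.1):
    """Gradient ascent in a continuous latent space (gradient of sum(x^2) is 2x)."""
    x = x0.copy()
    for _ in range(steps):
        x = x + lr * 2.0 * x
    return x

def discrete_search(codebook, num_tokens):
    """Pick, for every token, the single code that maximizes the scorer."""
    code_scores = np.array([flawed_scorer(code) for code in codebook])
    best_code = codebook[code_scores.argmax()]
    return np.tile(best_code, (num_tokens, 1))

rng = np.random.default_rng(0)
codebook = rng.uniform(-1.0, 1.0, size=(16, 2))  # codes stand in for plausible content
x0 = rng.uniform(-1.0, 1.0, size=(4, 2))         # initial latent, 4 tokens

x_cont = continuous_ascent(x0)
x_disc = discrete_search(codebook, num_tokens=4)

# The continuous latent drifts far outside the range covered by the codebook,
# while the discrete solution stays inside it by construction.
print(np.abs(x_cont).max(), np.abs(x_disc).max())
```

In the continuous case the optimizer raises the score by leaving the region of plausible latents; in the discrete case the best it can do is select the highest-scoring entry that already exists in the codebook.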

Experimental Results

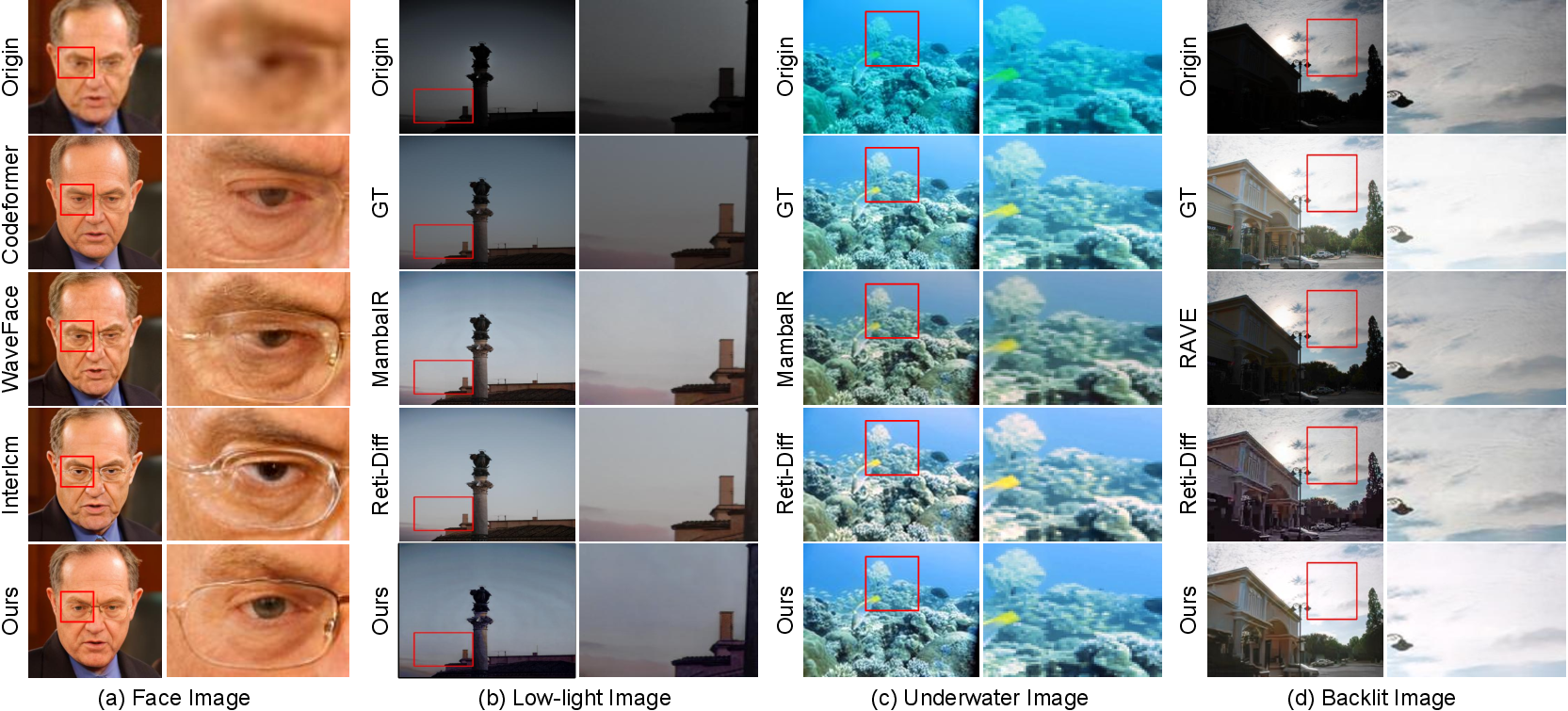

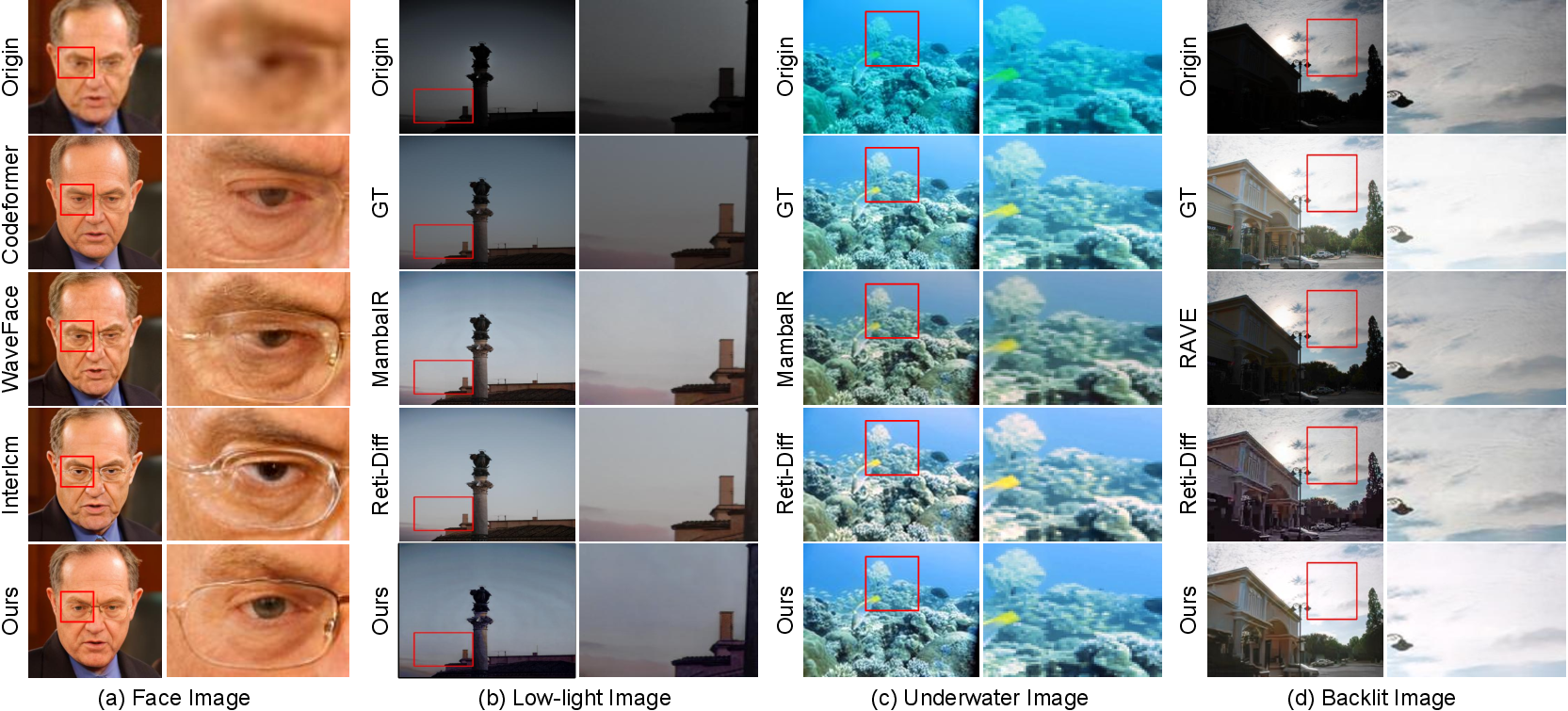

IQPIR undergoes extensive evaluation on diverse restoration tasks: blind face restoration (BFR), low-light image enhancement (LLIE), underwater image enhancement (UIE), and backlit image enhancement (BIE). The framework is benchmarked against recent SOTA models, utilizing both standard distortion and advanced NR-IQA metrics for comprehensive analysis.

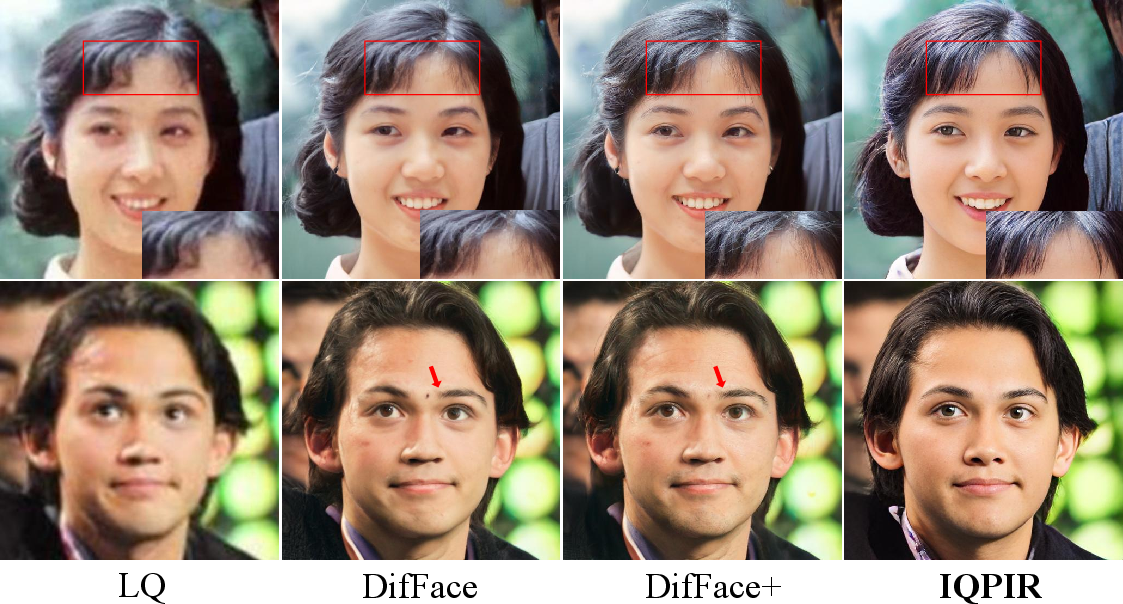

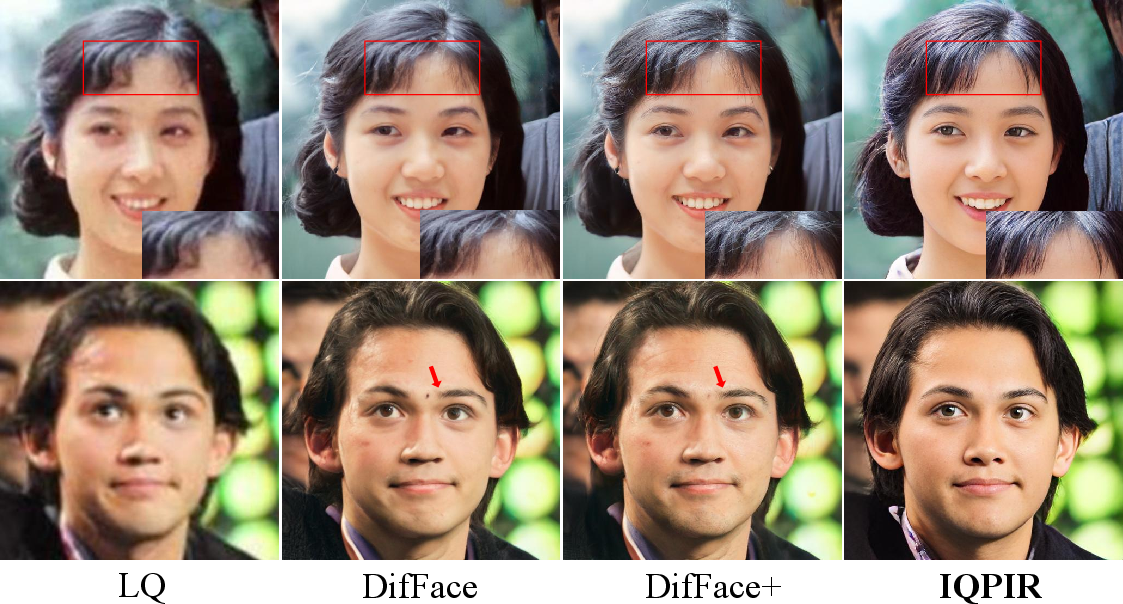

Blind Face Restoration (BFR)

On challenging BFR benchmarks (LFW, WebPhoto, WIDER), IQPIR consistently outperforms prior approaches across all NR-IQA metrics, delivering both numerically superior and visually more compelling results. For example, on LFW-Test, IQPIR surpasses the previous best (InterLCM) by a 2–3% margin on essential face IQA metrics. Ablation confirms that both the dual-codebook design and score conditioning are critical for these gains.

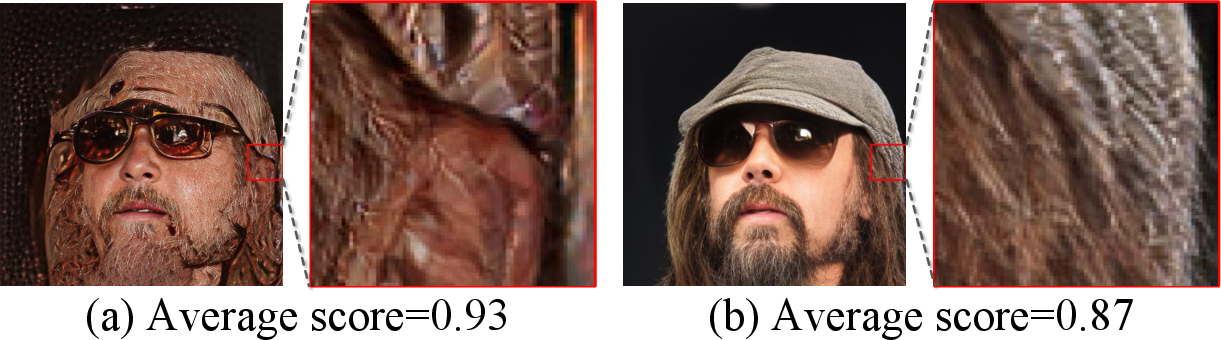

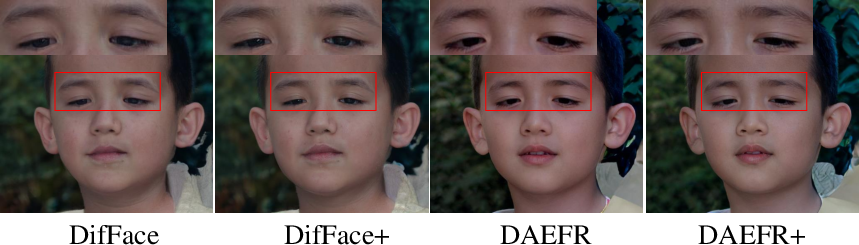

Figure 1: Visual comparison for blind face restoration. IQPIR exhibits strong texture preservation and credible facial reconstruction.

Low-Light, Underwater, and Backlit Image Enhancement

Across the LOL-v1/v2, UIEB, and BAID datasets, IQPIR achieves higher PSNR (~0.5–1 dB), SSIM, and no-reference quality scores (UCIQE, UIQM), along with better LPIPS and FID (for which lower is better), than strong recent baselines. This demonstrates both generalizability and the efficacy of IQA-driven optimization.

Figure 4: Visualizations for low light, underwater, and backlit image restoration show that IQPIR reliably reconstructs color and local details across diverse domains.

Plug-and-Play Generalization

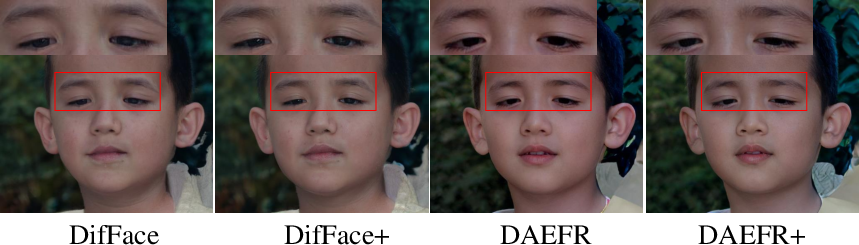

Applying IQPIR’s score conditioning paradigm to external SOTA architectures yields consistent, significant metric improvements—demonstrating the general utility of quality priors as a plug-and-play enhancement for restoration backbones.

Over-Optimization Analysis

A key empirical finding is that the discrete codebook space reconciles NR-IQA optimization and human perception, while continuous spaces break this alignment, validating the theoretical motivation.

Figure 5: Breakdown ablation visualizations for score conditioning, dual codebooks, and quality optimization sequence.

Figure 6: IQPIR generalization: cases where “+” denotes external models equipped with the plug-and-play quality conditioning.

Implications and Theoretical Significance

IQPIR advocates replacing the assumption that the GT reference is perfect with explicit, external measures of perceptual quality. By using robust, ensemble-based NR-IQA priors and integrating them at multiple levels, restoration models can surpass the limitations of their training data and pursue human-centric restoration objectives.

Practically, IQPIR demonstrates that its quality conditioning can be added to mainstream architectures in a plug-and-play fashion, providing substantial perceptual gains across domains. The explicit use of discrete representation spaces for aligning NR-IQA objectives also suggests a principled way to mitigate reward hacking, which is relevant to broader reward-driven optimization in vision and RL.

Theoretically, this paradigm posits that reference-free quality signals, combined with discrete output controls, can redefine the limits of perceptual enhancement in supervised regimes, with implications for any domain where GT is noisy or suboptimal.

Conclusion

IQPIR introduces a comprehensive, perceptually-aligned, and architecture-agnostic framework for robust image restoration. It integrates ensemble IQA-driven priors, a dual-codebook structure, and explicit quality conditioning to decouple restoration targets from flawed GT supervision. The empirical evidence establishes that this strategy provides consistent, scalable gains in visual fidelity and resilience to diverse real-world degradations. The insights regarding discrete representation for quality optimization hold broader significance for optimization in perception-related tasks.

Future directions include optimizing IQA prior ensembles to limit inherited bias and jointly learning quality priors and restoration objectives for more robust perception-driven restoration.