- The paper demonstrates that both human EEG data and artificial neural models exhibit robust construction-specific representations, especially at the object position.

- It employs rigorous EEG preprocessing and SVM-based classification to decode differential activity across argument structure constructions.

- The study finds that construction-sensitive effects are strongest in the alpha band, which it links to the integration stage of language comprehension and to the late emergence of constructional information in computational models.

Convergent Representations of Linguistic Constructions in Human and Artificial Neural Systems

Introduction

This study investigates how closely neural representations of Argument Structure Constructions (ASCs) in the human brain align with those in artificial neural language models during auditory sentence comprehension. ASCs (including transitive, ditransitive, caused-motion, and resultative constructions) are central to both Construction Grammar and modern syntactic theory, serving as form-meaning pairings that guide compositional interpretation.

Recent computational work has shown that both recurrent and transformer-based language models can spontaneously form cluster-like internal representations for ASCs in the absence of explicit syntactic cues. This yields specific, testable predictions about where and how constructional information is encoded during language processing. The present study tests these predictions neurophysiologically, using high-density EEG to probe construction-sensitive neural dynamics during the online comprehension of auditorily presented sentences.

Experimental Paradigm and Data Preprocessing

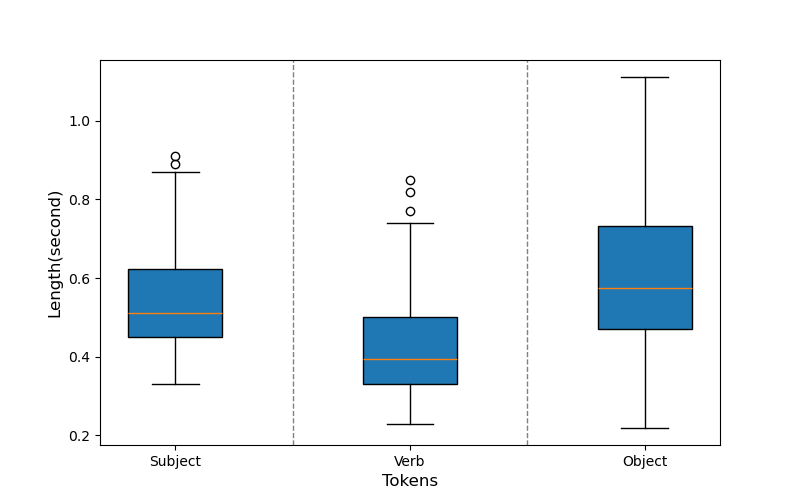

Native English speakers were presented with balanced sets of synthetically generated ASC sentences, each tightly controlled in structure and content. Auditory stimuli were delivered while 64-channel EEG was recorded at 2.5 kHz. The recordings then passed through a standard preprocessing pipeline (high-precision channel interpolation, FIR band-pass filtering, ICA-based artifact correction, epoching, and baseline correction). Epochs were time-locked to syntactic positions (subject, verb, object), enabling syntactic-role-specific analyses despite substantial variability in token duration and sentence length.
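The time-locking and baseline-correction steps can be illustrated with a minimal sketch in plain Python. It is not the study's pipeline: the function names, the single-channel list representation, and the epoch window (`tmin=-0.2`, `tmax=0.8` seconds) are assumptions chosen for illustration, since the summary does not report them.

```python
from statistics import fmean


def extract_epoch(signal, sfreq, onset_s, tmin=-0.2, tmax=0.8):
    """Cut one baseline-corrected epoch around a word onset.

    signal   : list of floats for one channel, sampled at sfreq Hz
    onset_s  : onset of the subject, verb, or object token, in seconds
    tmin/tmax: window relative to onset in seconds (assumed values)
    """
    onset = round(onset_s * sfreq)
    start = onset + round(tmin * sfreq)
    stop = onset + round(tmax * sfreq)
    if start < 0 or stop > len(signal):
        return None  # window falls outside the recording
    epoch = signal[start:stop]
    n_base = onset - start  # number of pre-onset samples
    # Subtract the mean of the pre-onset interval from every sample.
    baseline = fmean(epoch[:n_base]) if n_base > 0 else 0.0
    return [x - baseline for x in epoch]


def epochs_by_role(signal, sfreq, onsets):
    """onsets maps a syntactic role to a list of onset times in seconds."""
    result = {}
    for role, times in onsets.items():
        epochs = []
        for t in times:
            epoch = extract_epoch(signal, sfreq, t)
            if epoch is not None:
                epochs.append(epoch)
        result[role] = epochs
    return result
```

Because every epoch is cut relative to the onset of its own token, epochs for the same role are comparable even when the preceding words differ in duration.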

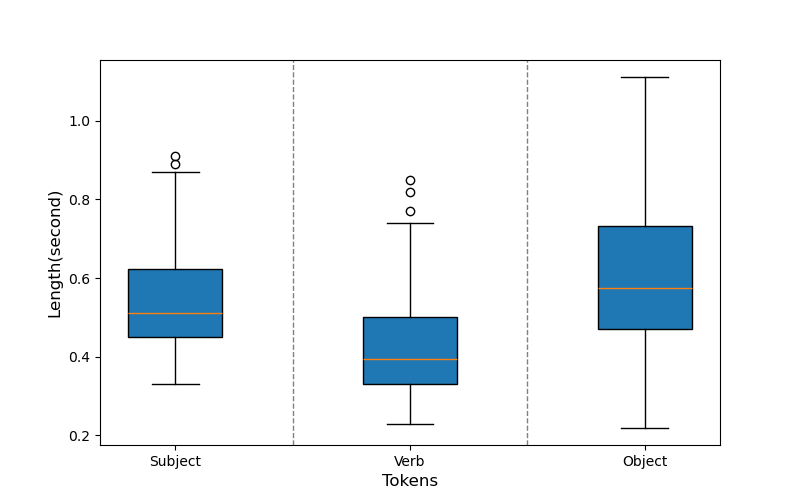

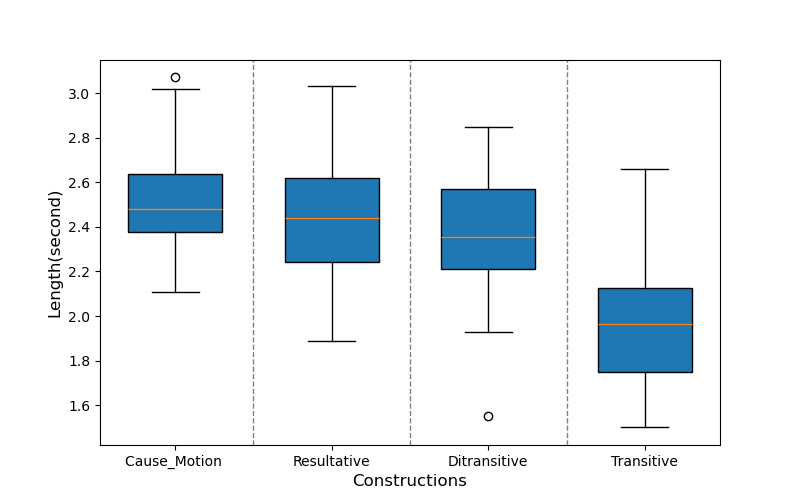

Figure 1: Duration variability across syntactic roles for stimuli tokens, necessitating careful alignment and length-independent feature extraction.

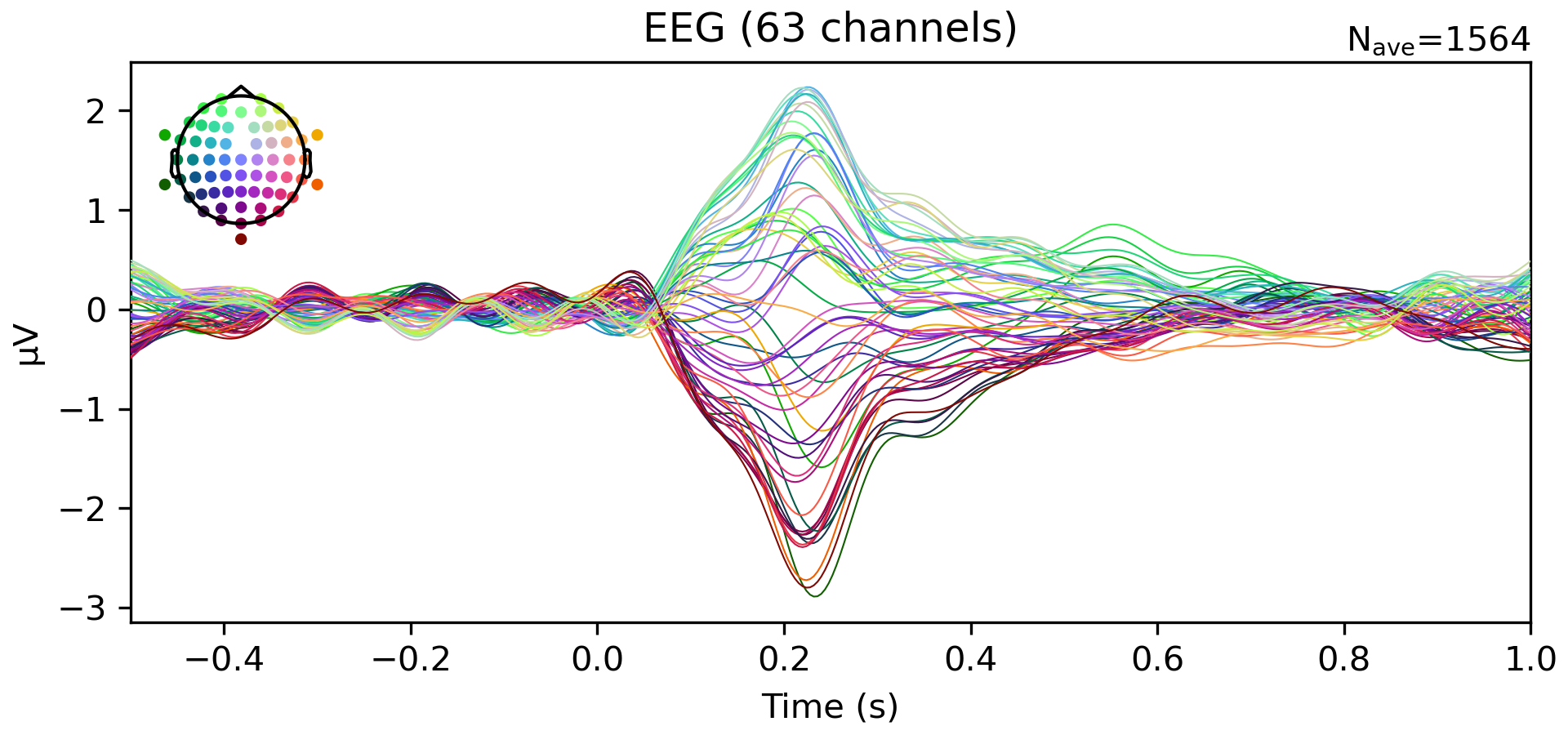

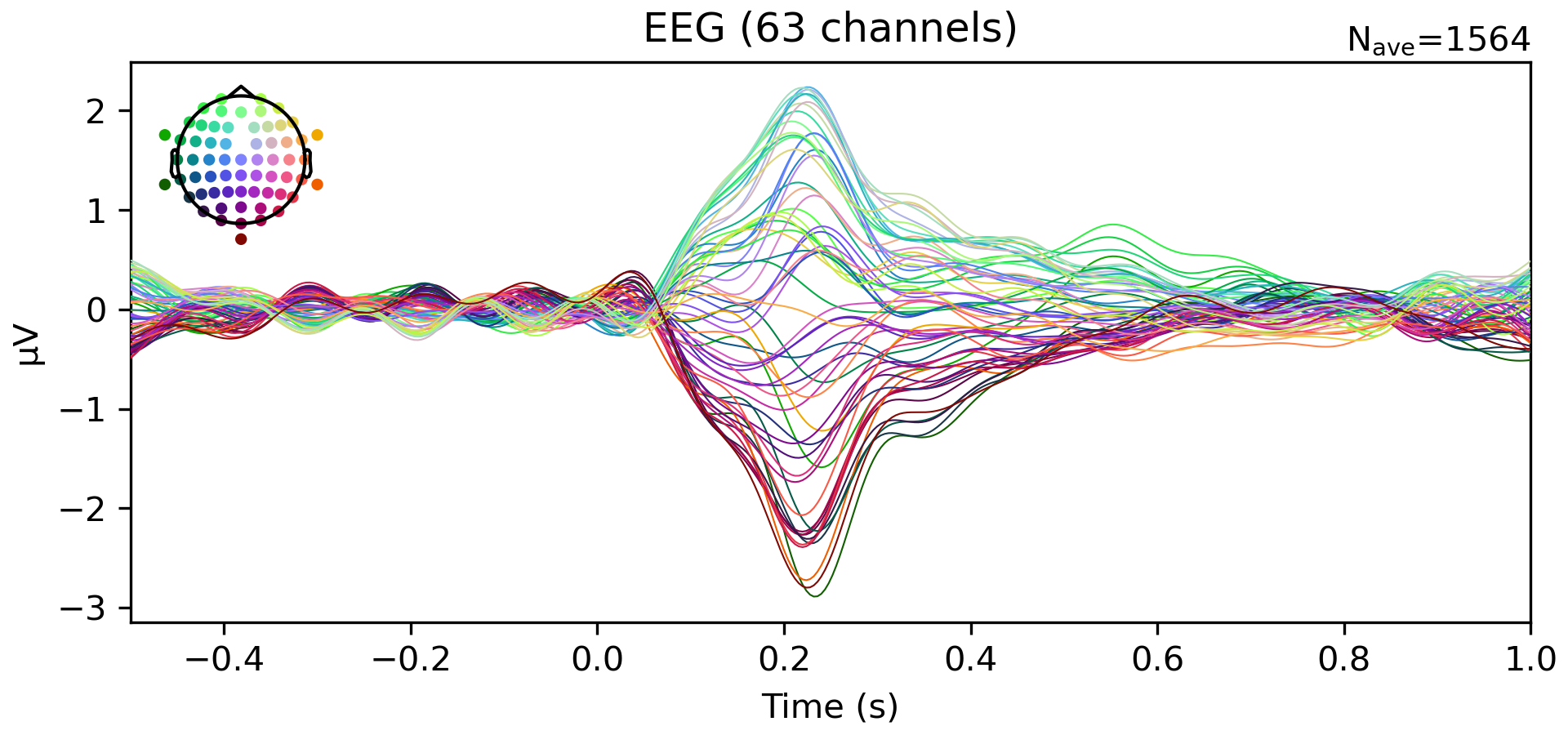

Robust P200 components were observed at sentence onset, validating the quality of the neural data and the effectiveness of the experimental protocol.

Figure 2: Grand-average ERPs across channels, demonstrating prominent P200 responses and reliable neural engagement during language comprehension.

Time-Frequency and Statistical Analyses

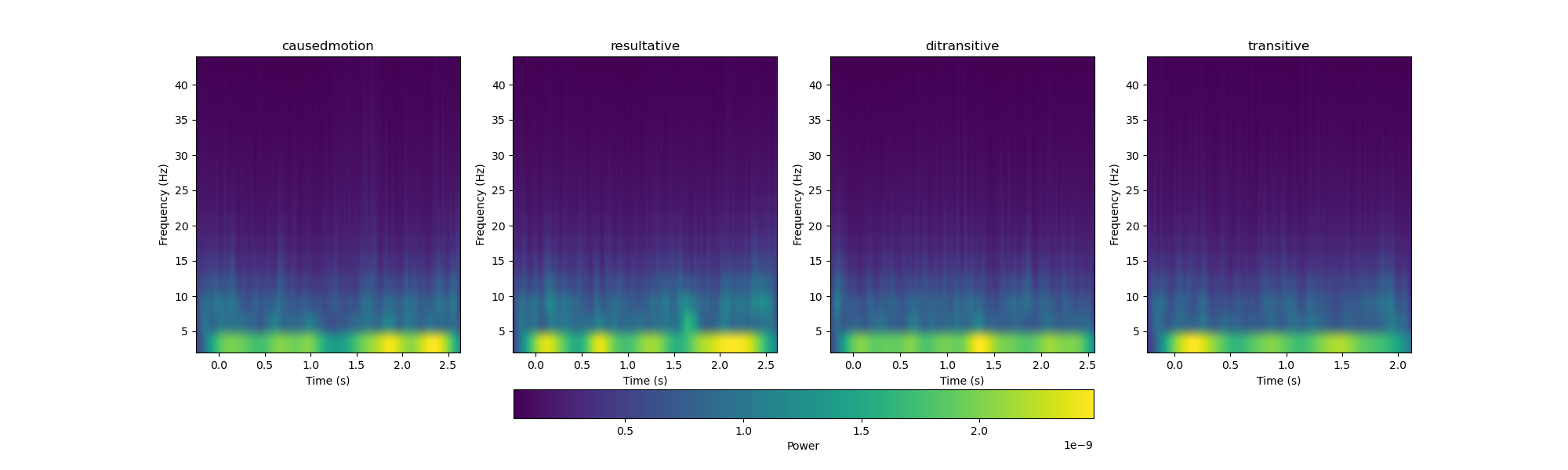

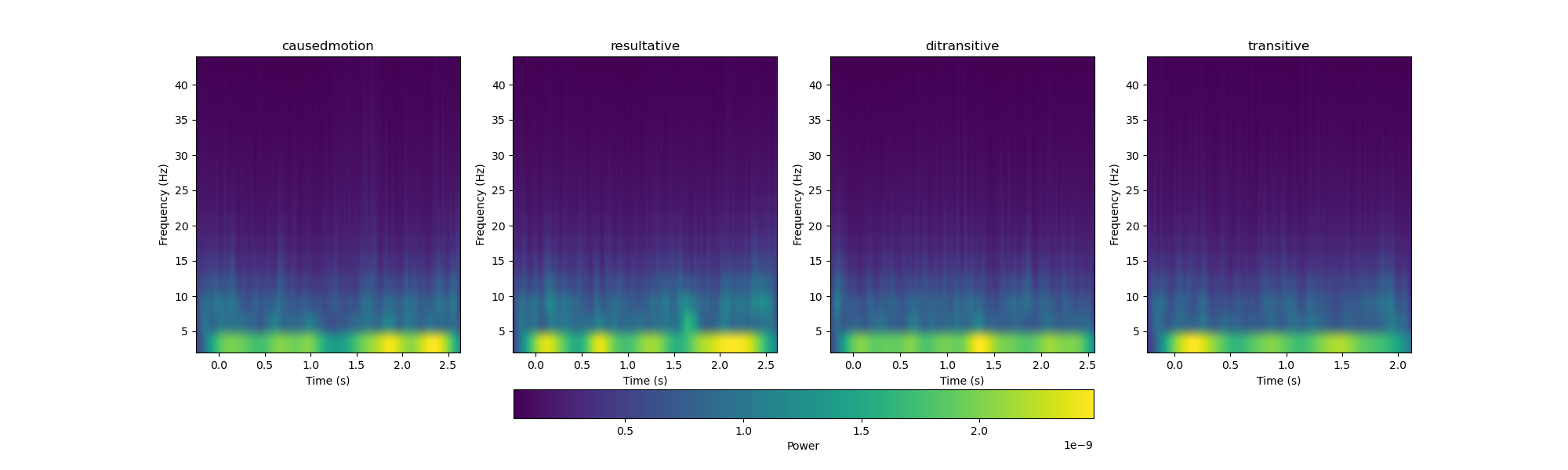

Time-frequency representations (TFRs) were estimated for each construction type using Morlet wavelet decomposition, with the common average across constructions subtracted to isolate construction-specific dynamics.
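The mean-subtraction step can be sketched as follows, assuming the per-construction TFRs have already been computed and share the same frequency and time grid. The function name and the nested-list layout (`[freq][time]`) are illustrative assumptions, not the study's code.

```python
def subtract_common_average(tfrs):
    """Remove the activity shared by all constructions from each TFR.

    tfrs: dict mapping construction name -> 2D list [freq][time] of power
          values; all maps are assumed to have identical shapes.
    Returns a dict of residual maps with the same layout.
    """
    names = list(tfrs)
    n_freq = len(tfrs[names[0]])
    n_time = len(tfrs[names[0]][0])
    # Common average: mean over constructions at each (freq, time) bin.
    mean = [
        [sum(tfrs[n][f][t] for n in names) / len(names) for t in range(n_time)]
        for f in range(n_freq)
    ]
    return {
        n: [[tfrs[n][f][t] - mean[f][t] for t in range(n_time)]
            for f in range(n_freq)]
        for n in names
    }
```

By construction the residual maps sum to zero across constructions at every bin, so whatever remains reflects how each construction deviates from the shared response.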

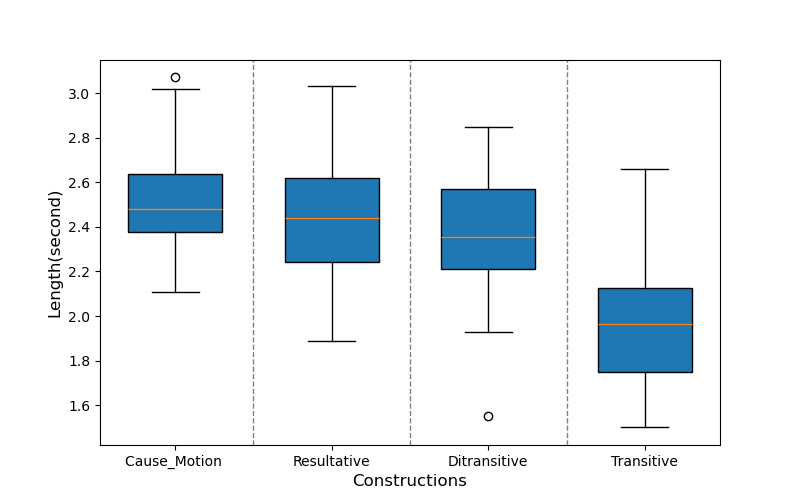

Figure 3: Sentence duration variability across construction types, supporting the need for statistical feature extraction rather than direct waveform comparison.

Figure 4: TFRs post-mean subtraction, highlighting construction-specific oscillatory divergence, especially in the low-frequency (2–5 Hz) range.

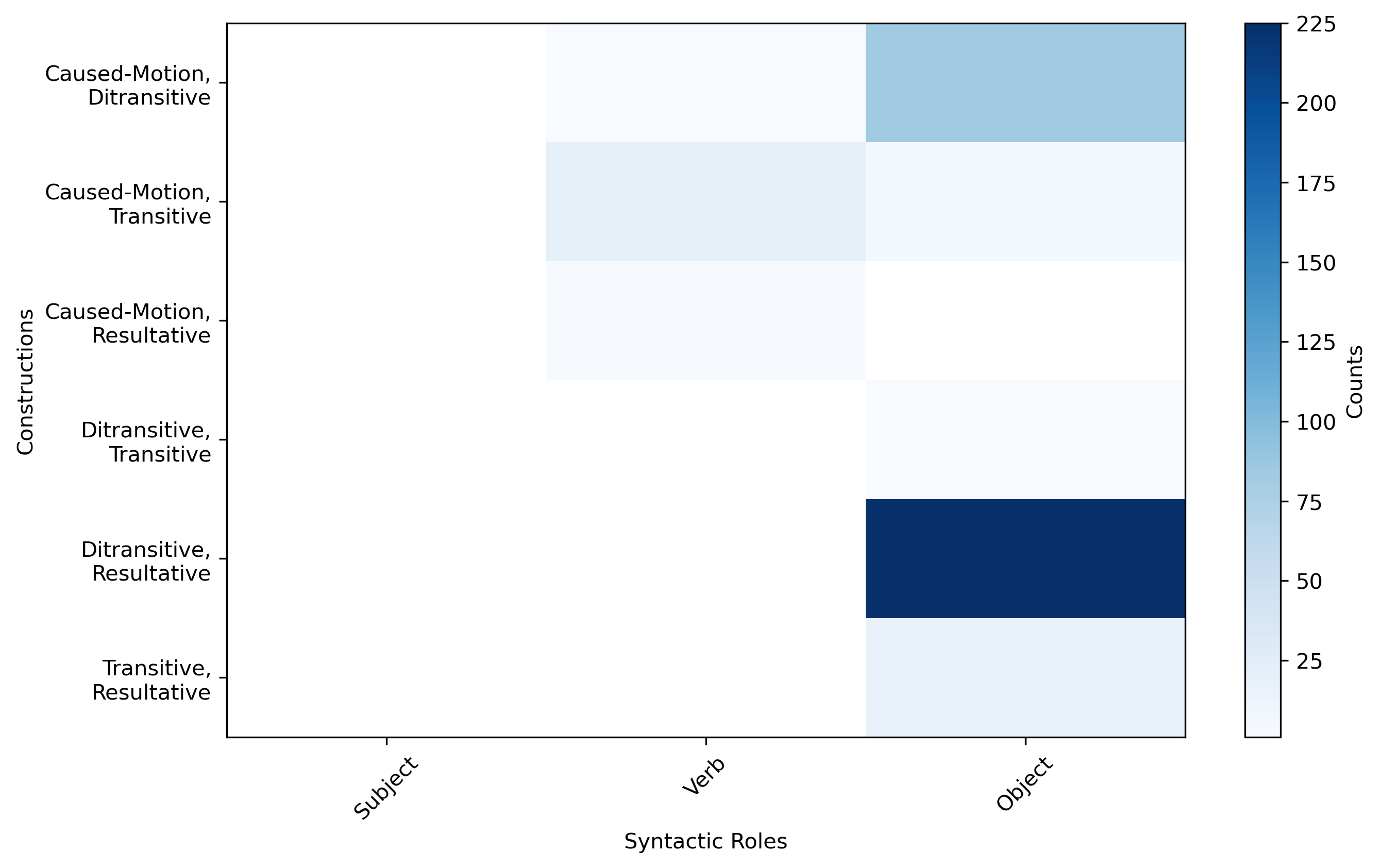

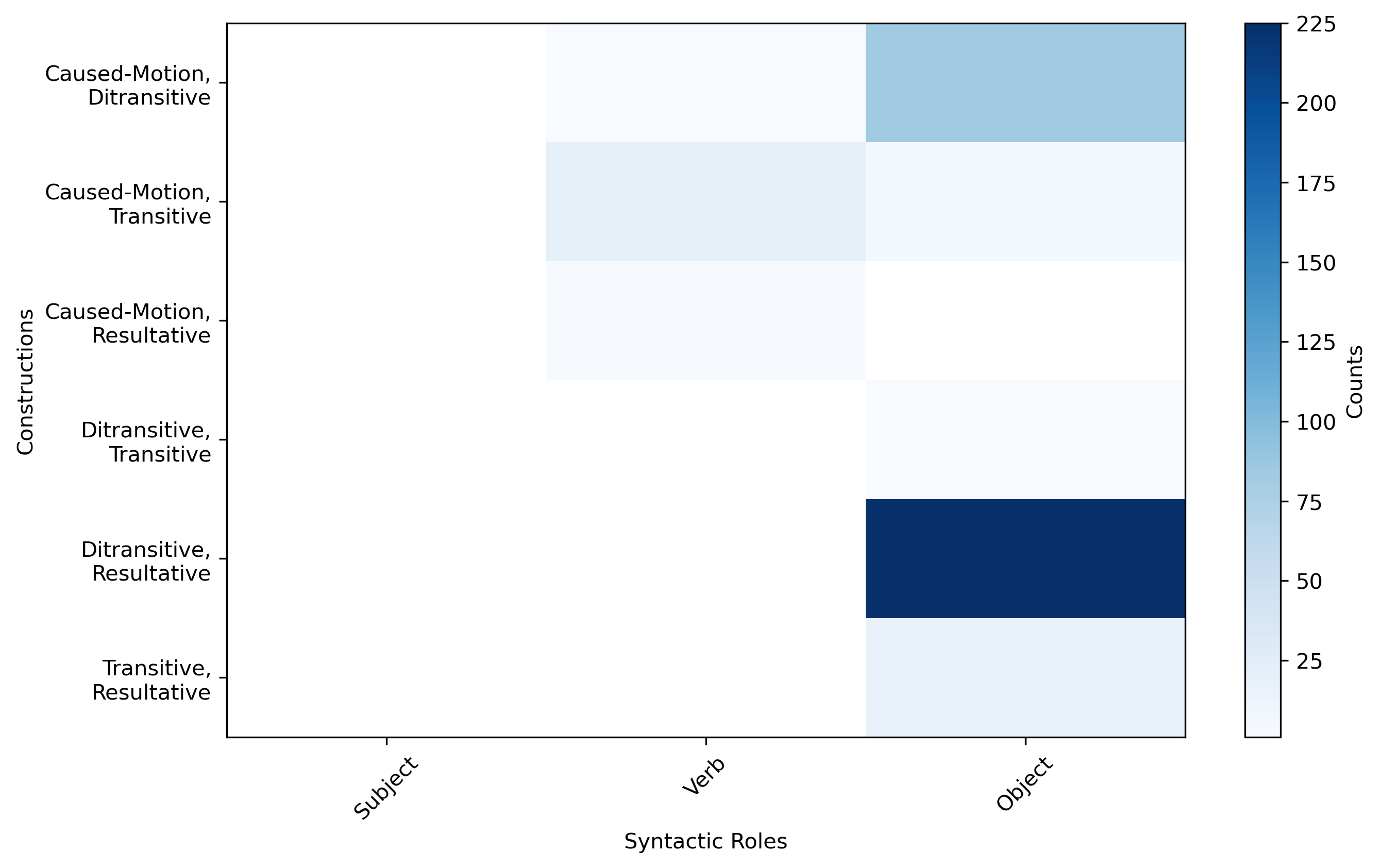

Statistical analyses were conducted across syntactic roles and frequency bands on statistical features extracted from each epoch (peak amplitude, kurtosis, etc.) rather than on raw waveforms. Notably, construction-sensitive neural effects were largely absent at the subject and verb positions but emerged robustly at the object position, the point of maximal event-structure disambiguation within the sentence.
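A minimal sketch of length-independent feature extraction is given below. It covers only the two features named in the text; the exact definitions are assumptions (peak amplitude as the maximum absolute value, kurtosis as excess kurtosis from population moments), since the summary does not specify them.

```python
from statistics import fmean


def peak_amplitude(epoch):
    """Largest absolute deflection in the epoch (assumed definition)."""
    return max(abs(v) for v in epoch)


def excess_kurtosis(epoch):
    """Fourth standardized moment minus 3 (assumed definition)."""
    m = fmean(epoch)
    m2 = fmean([(v - m) ** 2 for v in epoch])
    m4 = fmean([(v - m) ** 4 for v in epoch])
    if m2 == 0:
        return 0.0  # flat signal: kurtosis is undefined, return 0 by convention
    return m4 / m2 ** 2 - 3.0


def epoch_features(epoch):
    """Summarize an epoch of any length with a fixed set of features."""
    return {
        "peak_amplitude": peak_amplitude(epoch),
        "kurtosis": excess_kurtosis(epoch),
    }
```

Each epoch is reduced to the same small set of numbers regardless of its duration, which is what allows tokens and sentences of different lengths to be compared.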

Figure 5: Heatmap of significant EEG feature counts by construction pair and syntactic role, revealing sharply increased construction discriminability at object.

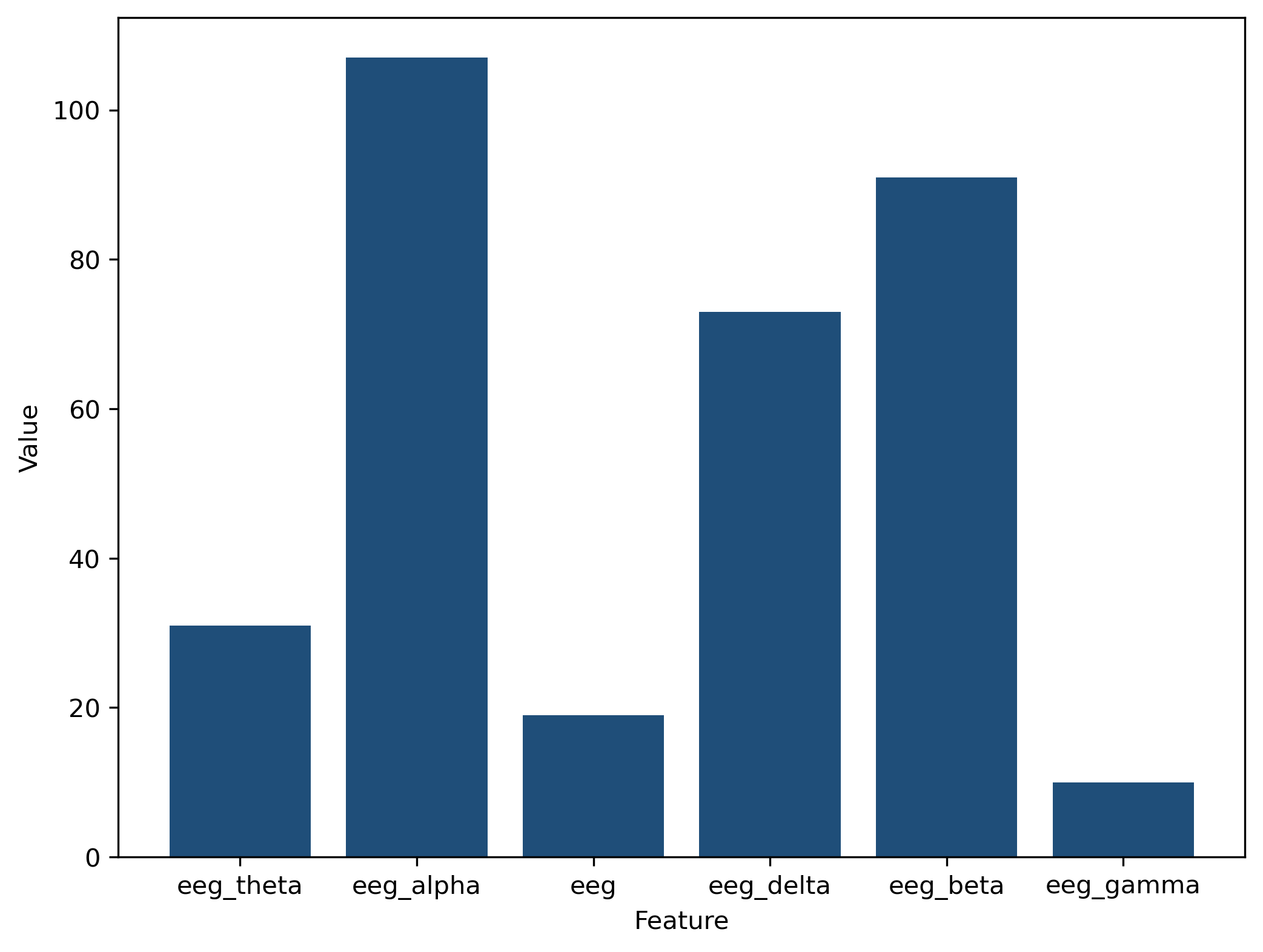

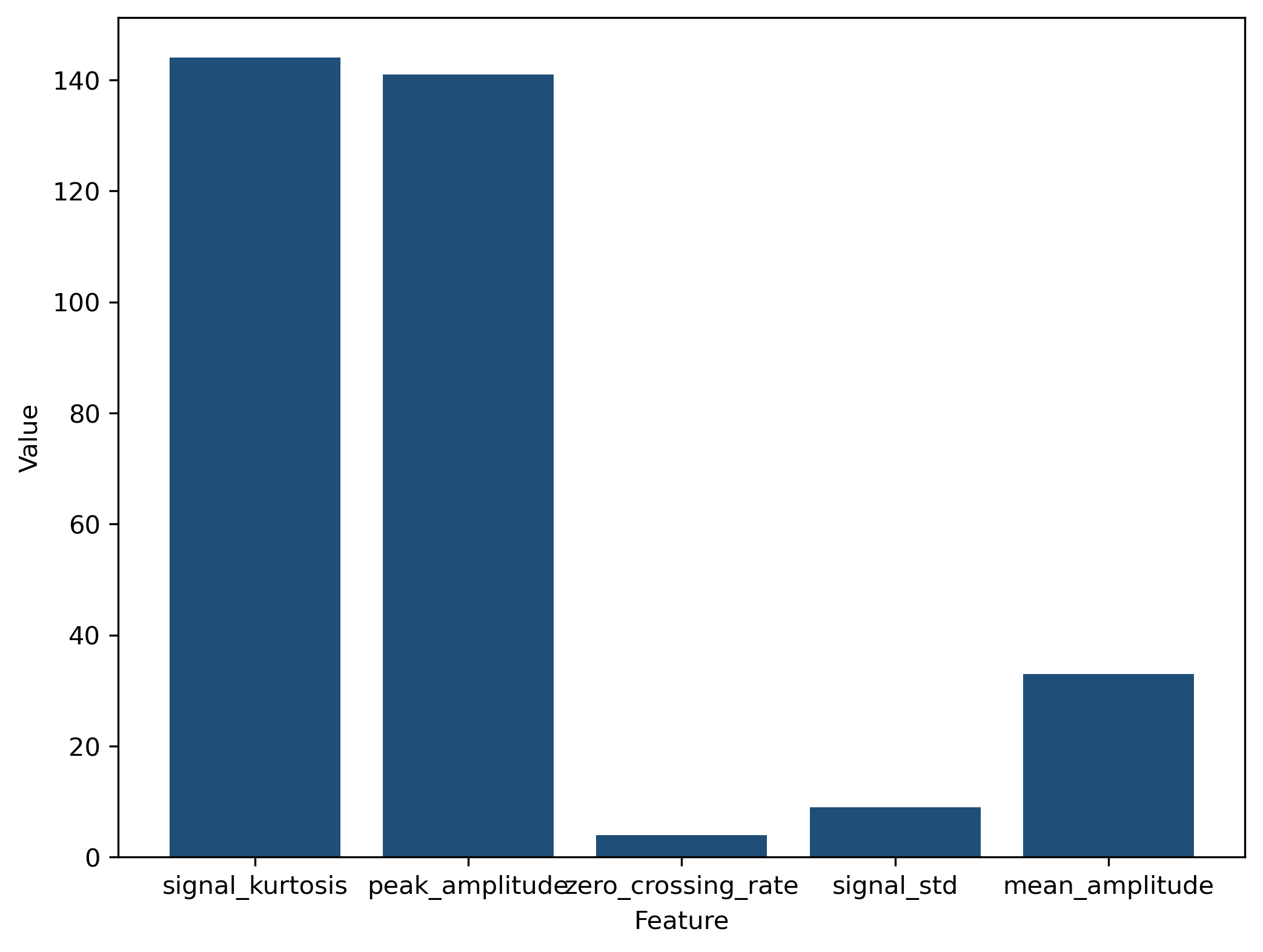

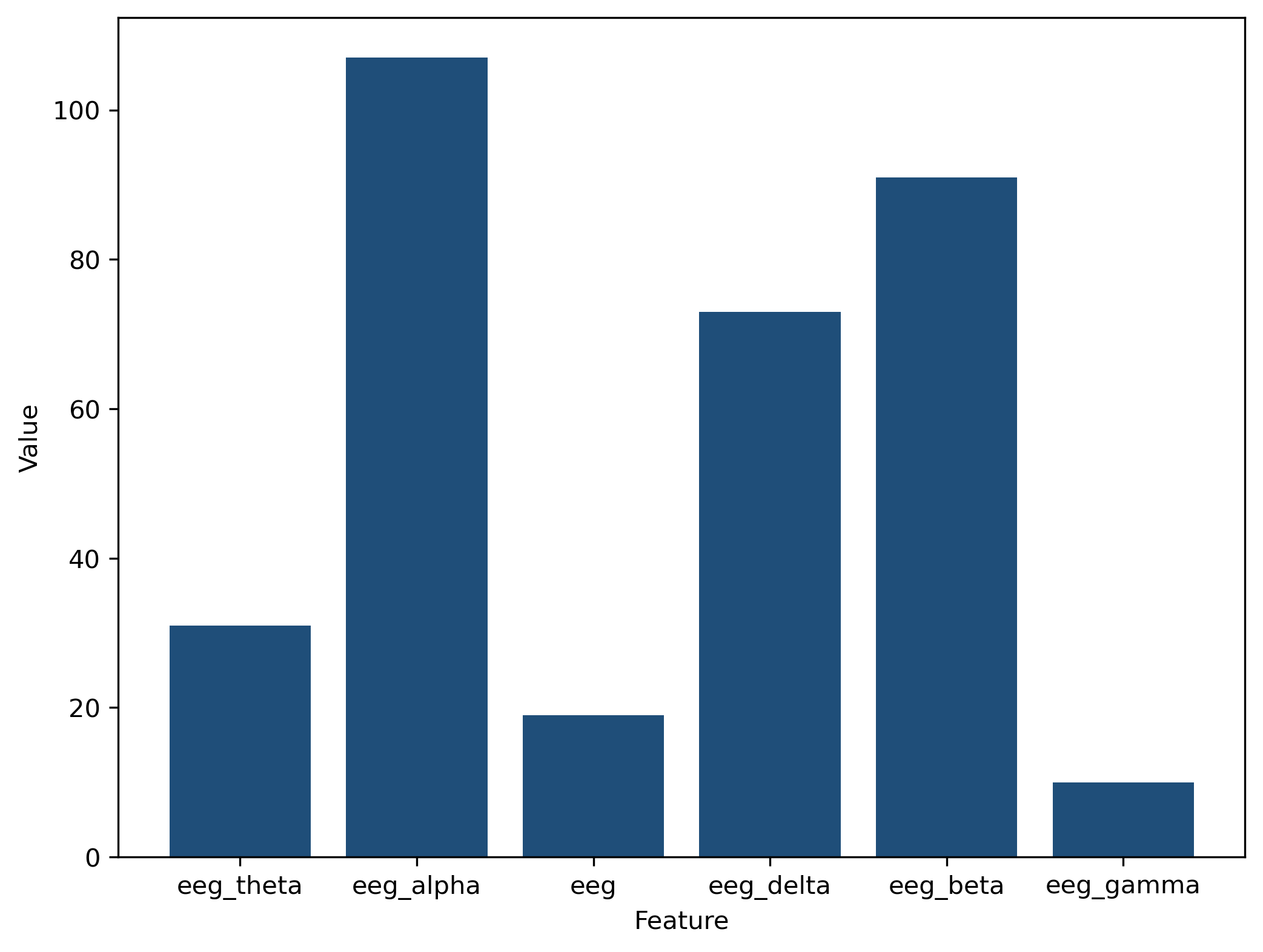

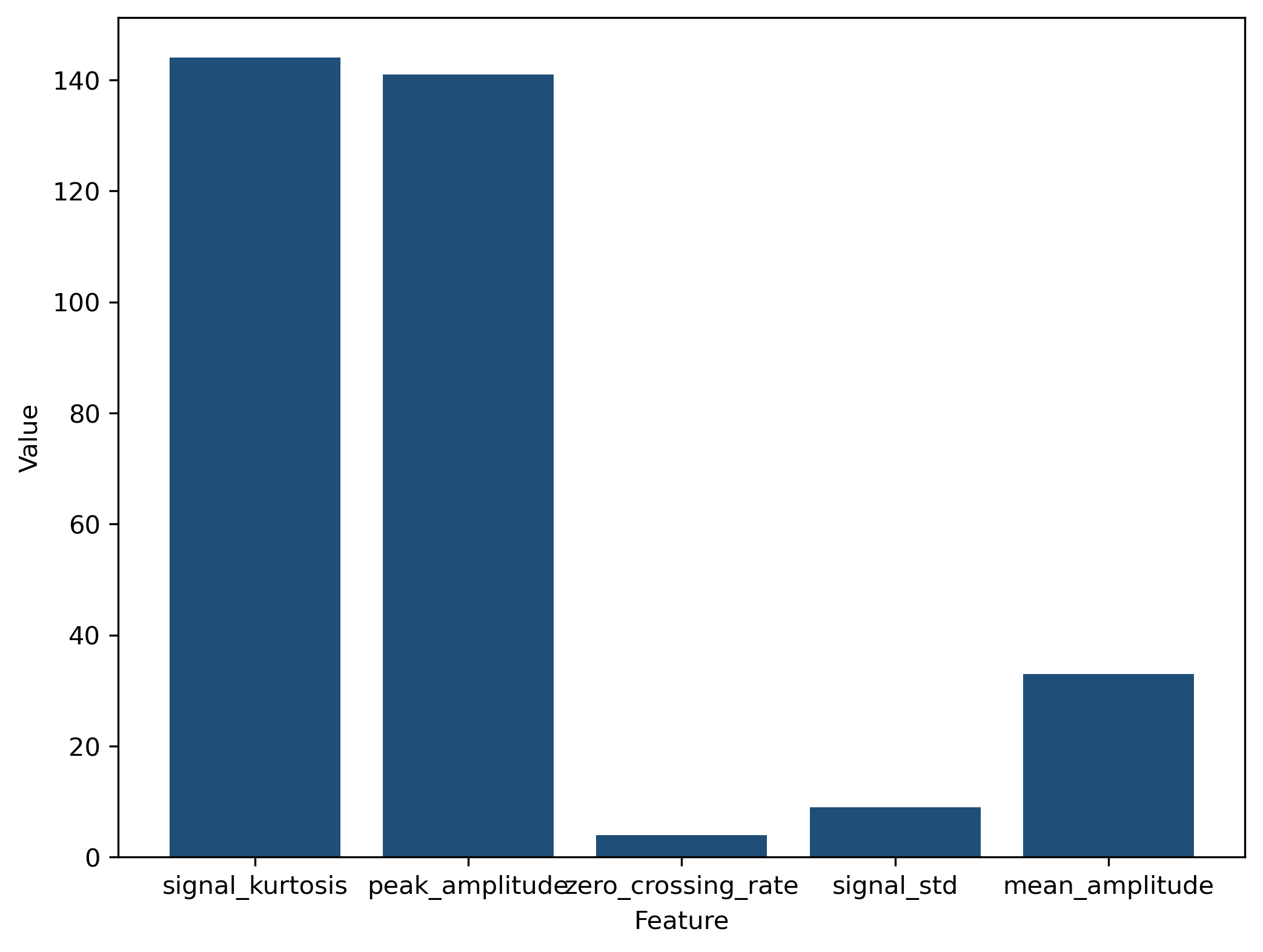

Discriminability was most pronounced in the alpha band, with additional but weaker effects in beta and delta. Kurtosis and peak amplitude outperformed other statistical features in revealing constructional differences.

Figure 6: Frequency band contributions to construction discrimination, with alpha dominating.

Figure 7: Feature-type effectiveness, highlighting kurtosis and peak amplitude as sensitive markers of constructional neural signatures.

Classification and Validation

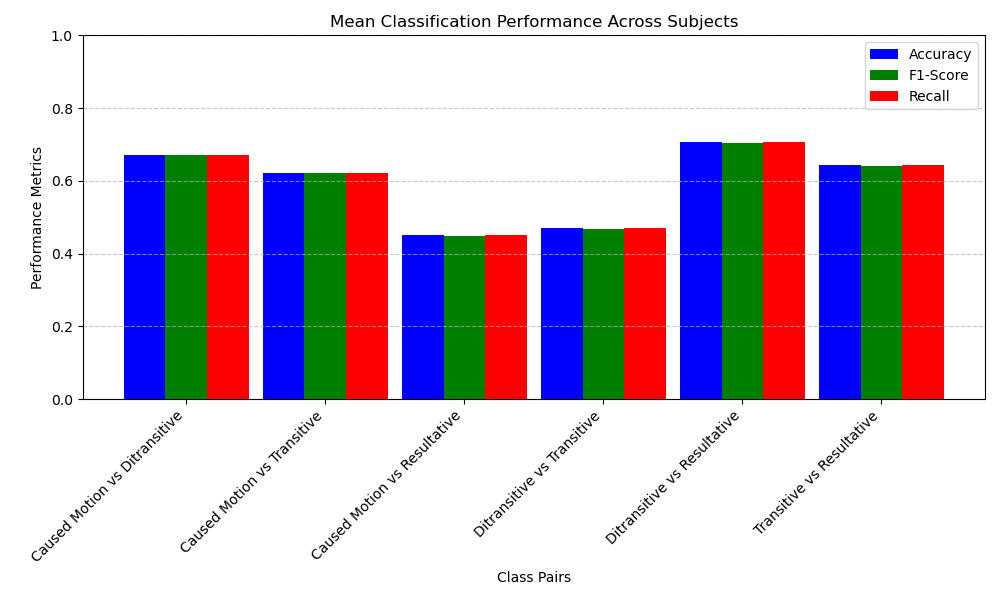

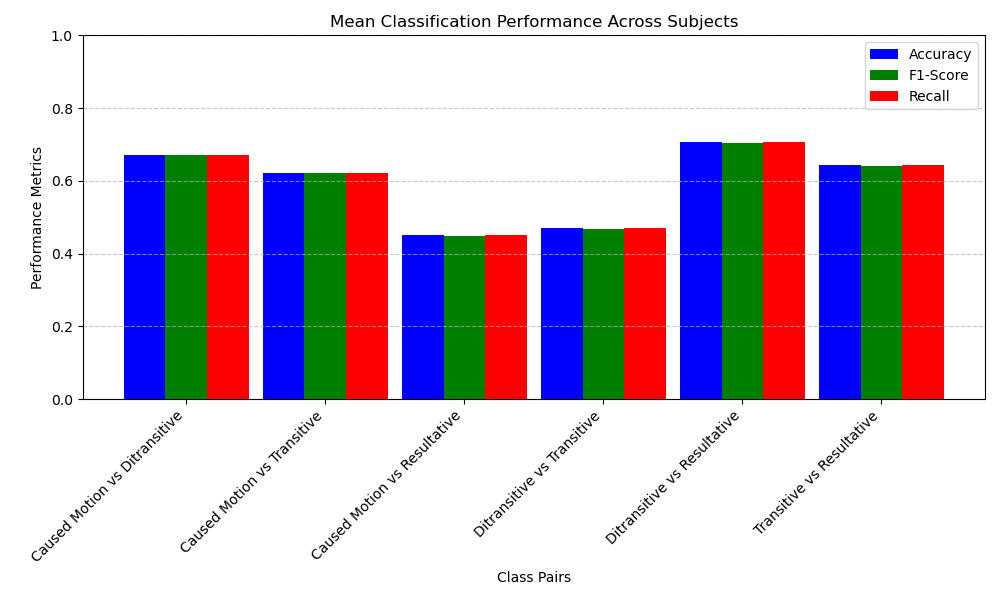

SVM-based pairwise classification was performed on object-aligned epochs. Multi-class (four-way) classification performed near chance, but several construction pairs (especially ditransitive vs. resultative) were decoded reliably above chance.
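The pairwise design can be sketched as follows: the four-way problem is split into six binary problems, one per construction pair, each of which would then be passed to a classifier. The helper name and data layout are hypothetical; the classifier itself is omitted.

```python
from itertools import combinations

CONSTRUCTIONS = ["transitive", "ditransitive", "caused-motion", "resultative"]


def pairwise_problems(X, y):
    """Yield ((a, b), X_pair, y_pair) for each of the 6 construction pairs.

    X: list of feature vectors, one per object-aligned epoch
    y: list of construction labels aligned with X
    """
    for a, b in combinations(CONSTRUCTIONS, 2):
        idx = [i for i, label in enumerate(y) if label in (a, b)]
        yield (a, b), [X[i] for i in idx], [y[i] for i in idx]
```

With balanced classes, chance is 50% for each binary problem and 25% for the four-way problem, so the two settings are not directly comparable in raw accuracy.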

Figure 8: Classification accuracy, F1, and recall for all construction pairs, indicating selective but strong separability—most notably between ditransitive and resultative.

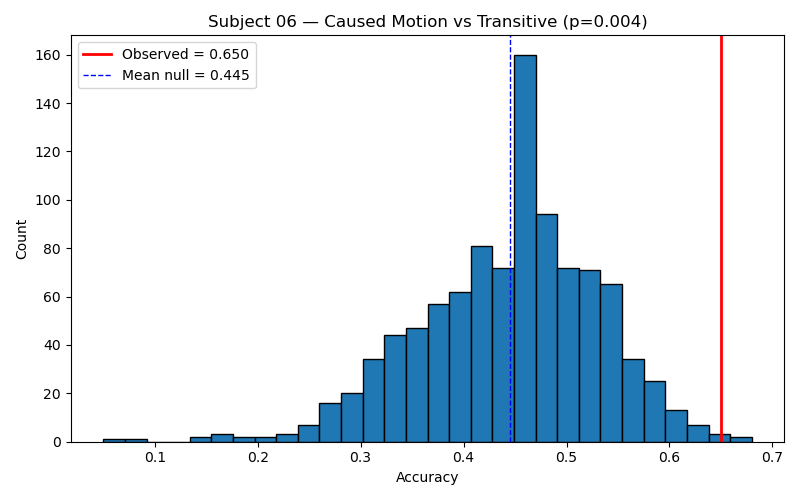

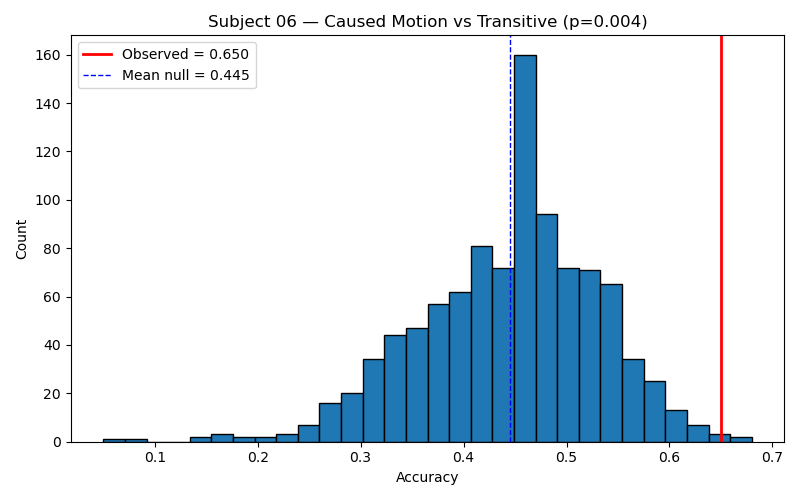

Permutation testing further substantiated statistical validity, with significant deviations from null distributions only for a subset of construction pairs.
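The logic of a label-permutation test can be sketched as below. To keep the example dependency-free, a leave-one-out nearest-centroid classifier stands in for the SVM used in the study; the function names, `n_perm=200`, and the seed are illustrative assumptions.

```python
import random


def loo_nearest_centroid_accuracy(X, y):
    """Leave-one-out accuracy of a nearest-centroid classifier.

    This is a simple stand-in for the SVM, not the study's classifier.
    """
    correct = 0
    for i in range(len(X)):
        sums, counts = {}, {}
        for j, (row, label) in enumerate(zip(X, y)):
            if j == i:
                continue  # hold out sample i
            acc = sums.setdefault(label, [0.0] * len(row))
            for k, v in enumerate(row):
                acc[k] += v
            counts[label] = counts.get(label, 0) + 1

        def dist(label):
            # Squared distance from the held-out sample to a class centroid.
            return sum((X[i][k] - sums[label][k] / counts[label]) ** 2
                       for k in range(len(X[i])))

        pred = min(sums, key=dist)
        correct += pred == y[i]
    return correct / len(X)


def permutation_p_value(X, y, score_fn, n_perm=200, seed=0):
    """Compare the observed score with scores under shuffled labels."""
    rng = random.Random(seed)
    observed = score_fn(X, y)
    labels = list(y)
    null = []
    for _ in range(n_perm):
        rng.shuffle(labels)  # break the link between features and labels
        null.append(score_fn(X, labels))
    # The +1 correction prevents reporting an impossible p = 0.
    p = (1 + sum(s >= observed for s in null)) / (n_perm + 1)
    return observed, null, p
```

A construction pair counts as significantly decodable only when the observed accuracy lies in the upper tail of the null distribution obtained from the shuffled labels.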

Figure 9: Representative permutation test for caused-motion vs. transitive, showing observed accuracy significantly above the null distribution.

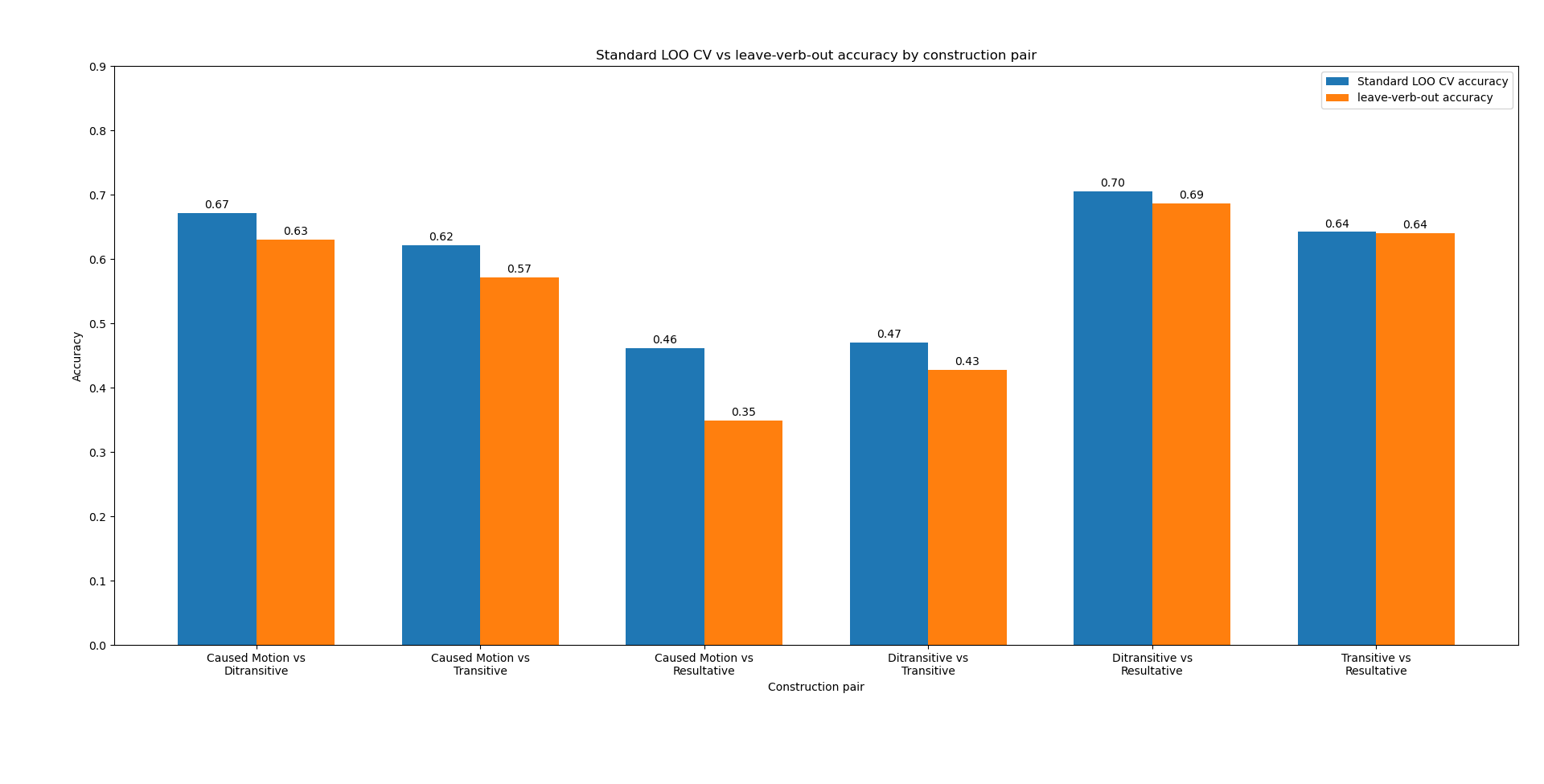

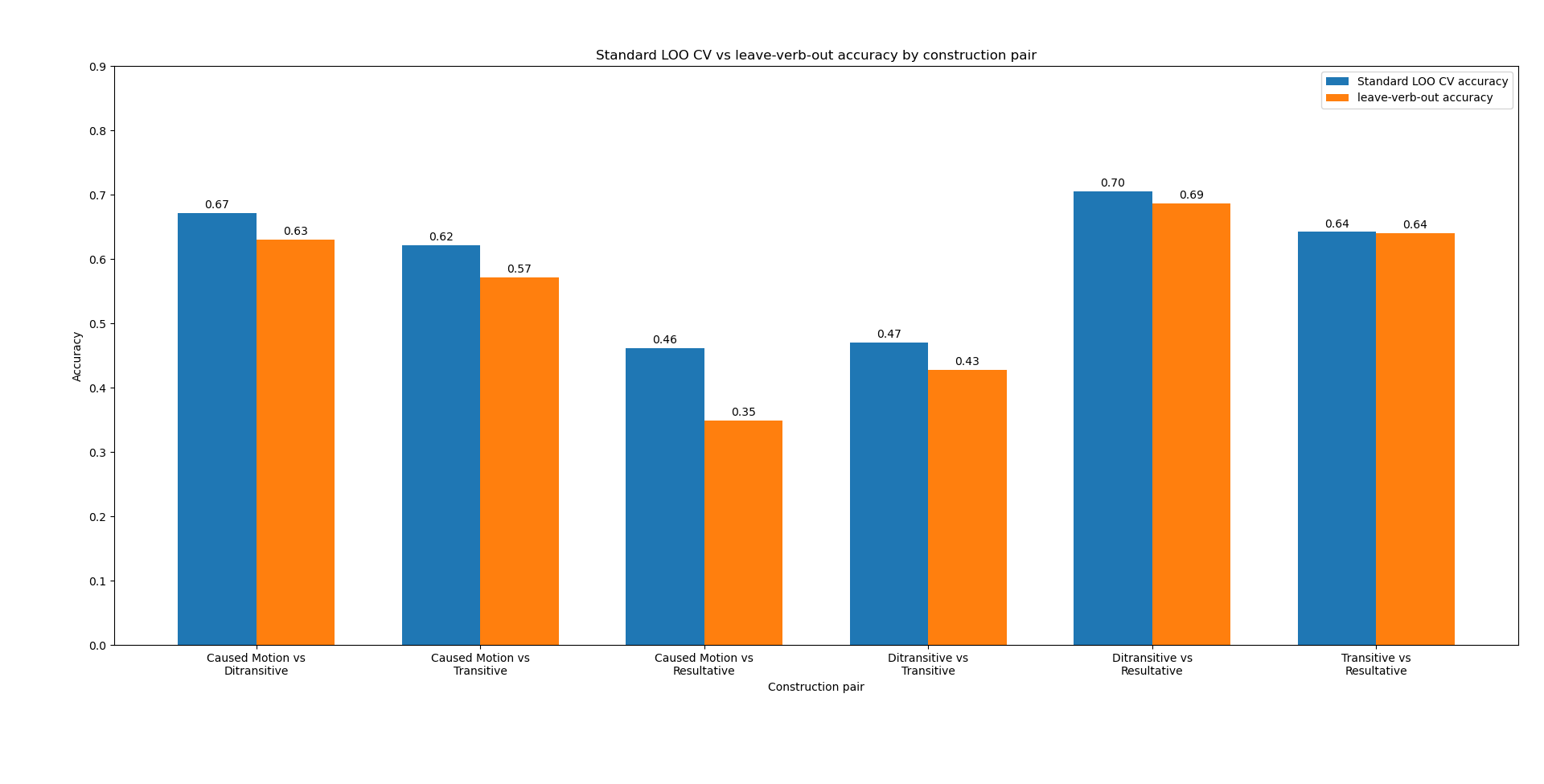

To rule out confounds from verb overlap between training and testing sets ("verb leakage"), a leave-verb-out cross-validation regime was implemented. The qualitative pattern of construction discriminability persisted, confirming that SVM performance reflected constructional—rather than purely lexical—information.
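A leave-verb-out split can be sketched as follows. Whether the study held out one verb per fold or groups of verbs is not stated, so the one-verb-per-fold scheme and the function name here are assumptions.

```python
def leave_verb_out_splits(verbs):
    """Yield (held_out_verb, train_idx, test_idx) for each distinct verb.

    verbs: list with the main verb of each trial, aligned with the epochs.
    No verb ever appears in both the training and the test set of a fold.
    """
    for held_out in sorted(set(verbs)):
        test_idx = [i for i, v in enumerate(verbs) if v == held_out]
        train_idx = [i for i, v in enumerate(verbs) if v != held_out]
        yield held_out, train_idx, test_idx
```

This is the same idea as grouped cross-validation with the verb as the grouping variable: a classifier that succeeds on a held-out verb cannot be relying on having seen that verb during training.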

Figure 10: Comparison of standard versus verb-controlled cross-validation; construction discrimination is robust to lexical variability.

Theoretical Implications: Convergence Across Biological and Artificial Systems

Three critical convergences emerge between human EEG data and artificial neural model behavior:

- Temporal locus of differentiation: In both human EEG and artificial models, construction-specific representations become robust only at integrative processing stages, specifically at the point where the argument structure is complete and the construction is disambiguated (i.e., object position).

- Similarity structure: The discriminative geometry across construction types (with ditransitive vs. resultative most separable, and caused-motion vs. resultative least separable) closely mirrors the internal representational landscape discovered in model analyses.

- Spectral signature of integration: Construction-sensitive effects are strongest in the alpha band. This aligns with the literature relating alpha oscillations to chunk-level integration and unification operations during language comprehension, and it mirrors the late, context-sensitive emergence of constructional information seen in both RNNs and transformer LMs.

These convergences imply a shared computational constraint space—recently conceptualized as a Platonic representational space—that governs the stability and expressivity of constructional abstraction in both human and machine learners.

Methodological Limits and Inter-Individual Variability

While group-level effects are robust, individuals differ notably in which channels, frequency bands, and features are most sensitive, consistent with usage-based theories that accommodate gradient, experience-dependent adaptations of construction inventories. Sentence length and uncontrolled lexical overlap introduce noise, though the permutation and verb-controlled validations mitigate concerns about overfitting or trivial lexical solutions.

Conclusion

The results provide strong evidence that construction-specific form-meaning pairings are reflected in human neural processing and that the topography of these neural representations closely aligns with that of the representations that emerge organically in modern LMs. This convergence supports the view that general learning systems, whether biological or artificial, are subject to shared representational constraints, which can be formalized as stable attractors in a Platonic abstraction space. Future work extending to broader ASC inventories, cross-linguistic samples, and L2 acquisition will further clarify the universality and flexibility of construction-based neural encoding, and the degree to which current model architectures recapitulate or diverge from human representational logic.