- The paper demonstrates that structured weight permutation and global AGC can prevent runaway excitation in reservoir computing.

- Controlled matrix permutation, such as assigning the weakest weights to a subset of rows ("weak rows"), boosts accuracy by more than 50% by mitigating global saturation and preserving input diversity.

- Global automatic gain control dynamically stabilizes reservoir activity across coupling regimes, ensuring near-perfect task performance.

Structural and Dynamical Strategies to Prevent Runaway Excitation in Reservoir Computing

Introduction

This work analyzes the structural and dynamical determinants of stability in recurrent neural reservoirs. It focuses on regimes in which strong recurrent coupling exerts global positive or negative feedback and drives the network into undesirable attractor dynamics such as chaos, global oscillations, or fixed points. In these pathological states the reservoir dynamics decouple from input-driven fluctuations, and information processing capacity collapses. Two complementary methods to avoid runaway excitation are evaluated: non-disruptive structuring of the connection matrix, and global automatic gain control (AGC).

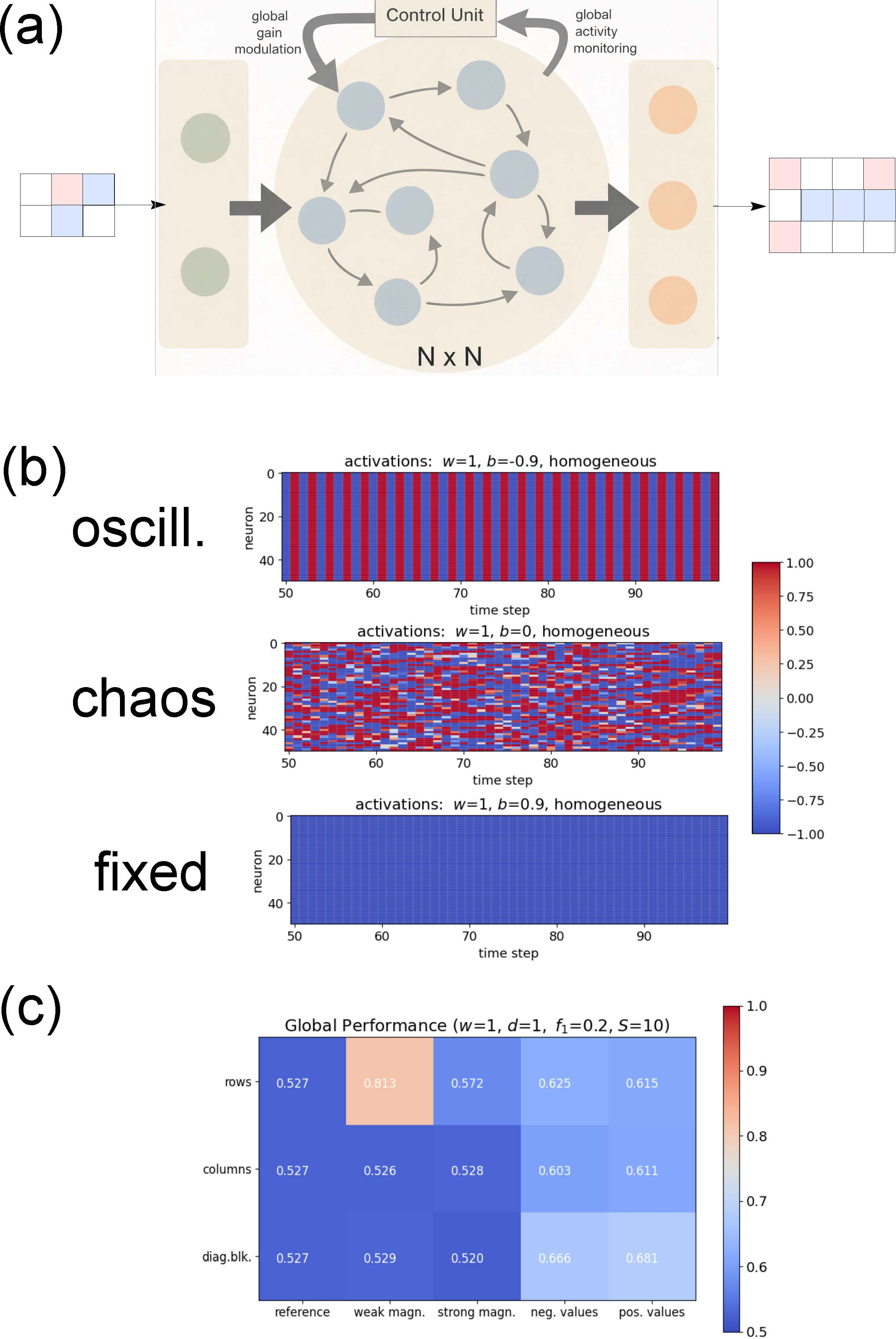

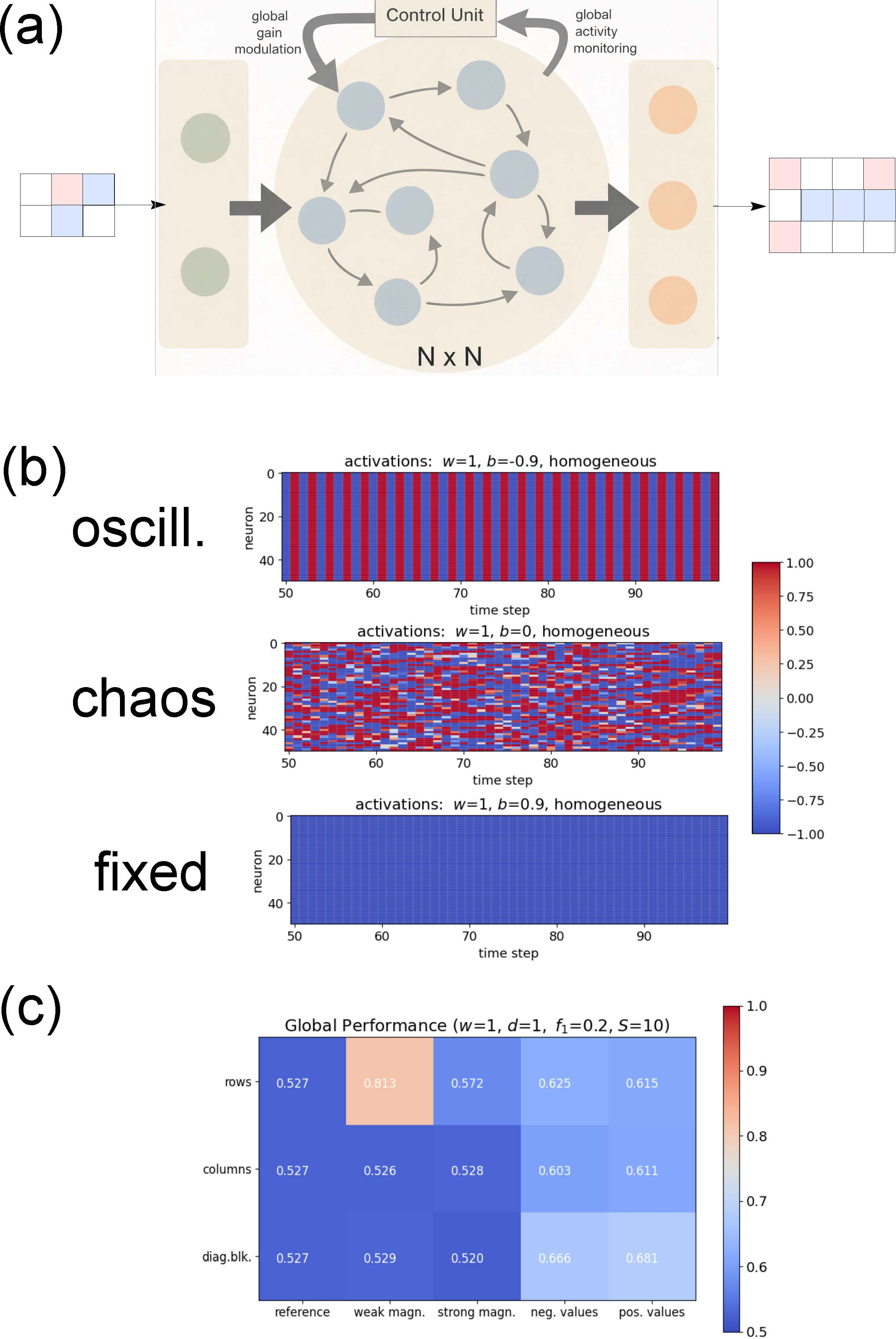

Figure 1: Overview of the reservoir computing system and main findings regarding the impact of permutative weight structuring.

The analysis begins with an N-unit RNN reservoir with tanh activations and full connection density, parameterized by coupling strength w (the width of the connection weight distribution) and balance b (the excitatory/inhibitory ratio). For weak coupling (w≪1), the system exhibits stable, input-driven dynamics across all b, with perfect accuracy in a sequence generation benchmark. For strong coupling (w≫1), however, three dynamical regimes emerge as b is tuned:

- Global oscillations for large negative b,

- Chaos near balanced b≈0,

- Global fixed points for large positive b.

In all three, accuracy collapses except in small parameter ranges ("edge of chaos"), corroborating prior studies indicating optimal information processing near criticality [bertschinger2004real, boedecker2012information].
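The contrast between the weak and strong coupling regimes can be illustrated with a minimal NumPy sketch. The summary does not give the exact parameterization, so the following are assumptions made only for illustration: weight magnitudes are drawn from |Normal(0, w)|, each weight is positive with probability (1 + b)/2, and the helper names `make_weights` and `run_reservoir`, the input weights `w_in`, and the 0.99 saturation threshold are all hypothetical.

```python
import numpy as np

def make_weights(n, w, b, rng):
    """Dense n x n matrix (assumed form): |Normal(0, w)| magnitudes,
    positive sign with probability (1 + b) / 2."""
    magnitudes = np.abs(rng.normal(0.0, w, size=(n, n)))
    signs = np.where(rng.random((n, n)) < 0.5 * (1.0 + b), 1.0, -1.0)
    return magnitudes * signs

def run_reservoir(W, inputs, w_in):
    """Iterate x <- tanh(W @ x + w_in * u) and return the state at every step."""
    n = W.shape[0]
    x = np.zeros(n)
    states = np.empty((len(inputs), n))
    for t, u in enumerate(inputs):
        x = np.tanh(W @ x + w_in * u)
        states[t] = x
    return states

rng = np.random.default_rng(0)
n = 100
inputs = rng.normal(size=500)
w_in = rng.normal(size=n)

# Weak coupling versus strong coupling with a large positive balance.
for w, b in [(0.01, 0.0), (2.0, 0.9)]:
    states = run_reservoir(make_weights(n, w, b, rng), inputs, w_in)
    # Fraction of activations pinned near +/-1 after a short transient.
    saturation = np.mean(np.abs(states[100:]) > 0.99)
    print(f"w={w}, b={b}: fraction of saturated activations = {saturation:.2f}")
```

Under these assumptions, the saturated fraction serves as a crude proxy for how far the reservoir has decoupled from its input. It is not one of the measures reported in the paper.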

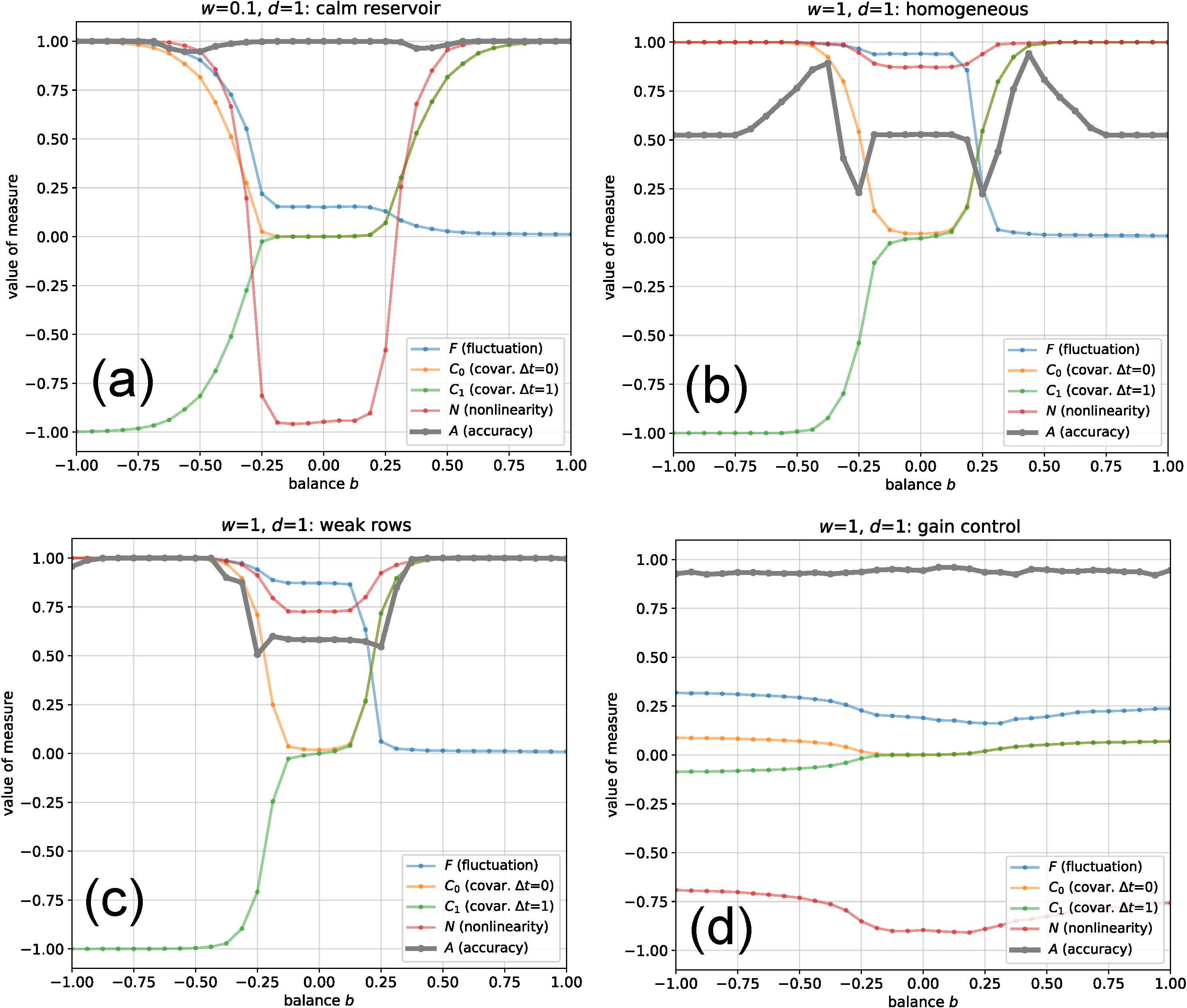

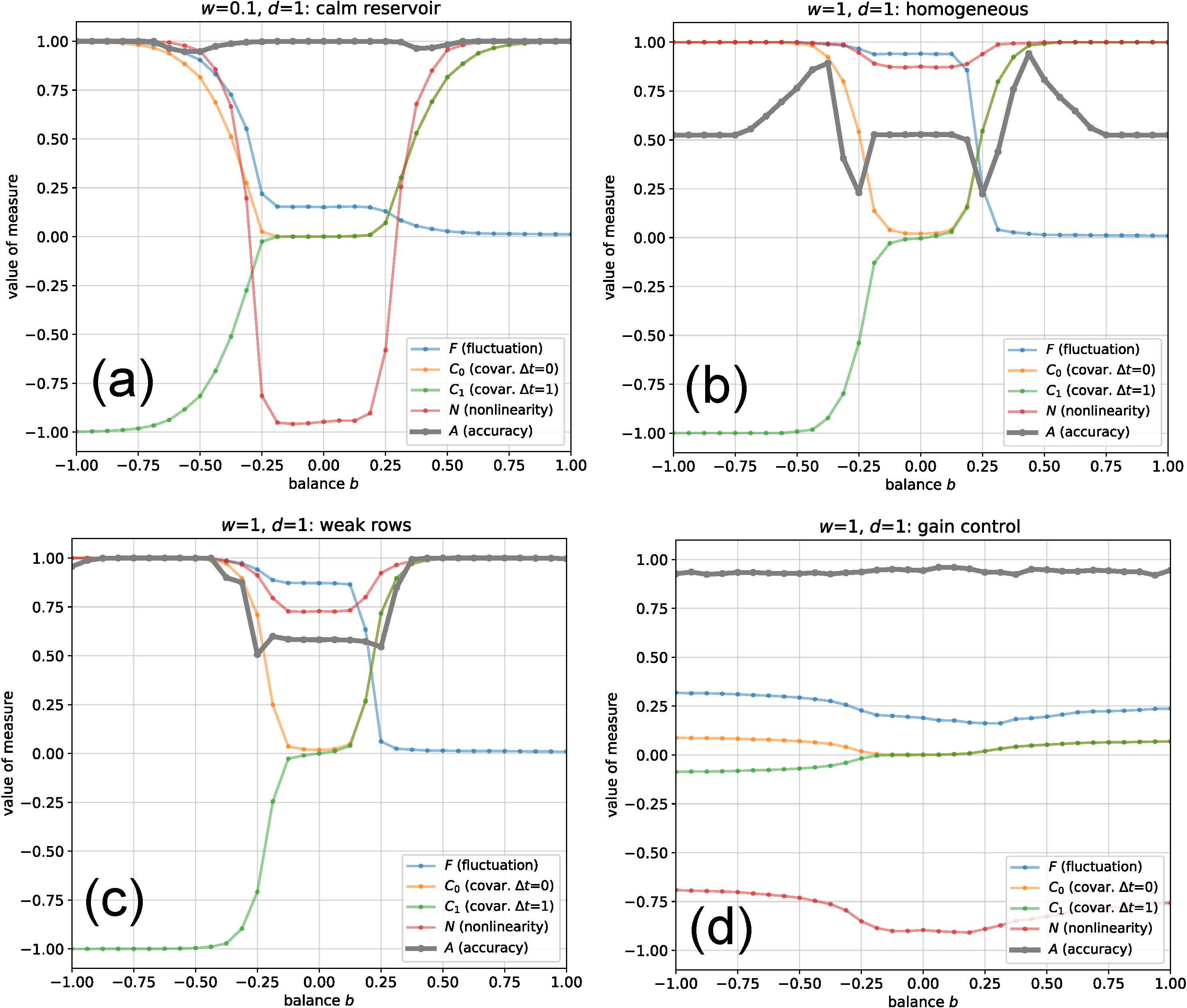

Figure 3: Scan of fluctuation, covariances, nonlinearity, and accuracy across the balance parameter, with different structural and regulatory interventions.

Structural Stabilization by Matrix Permutation

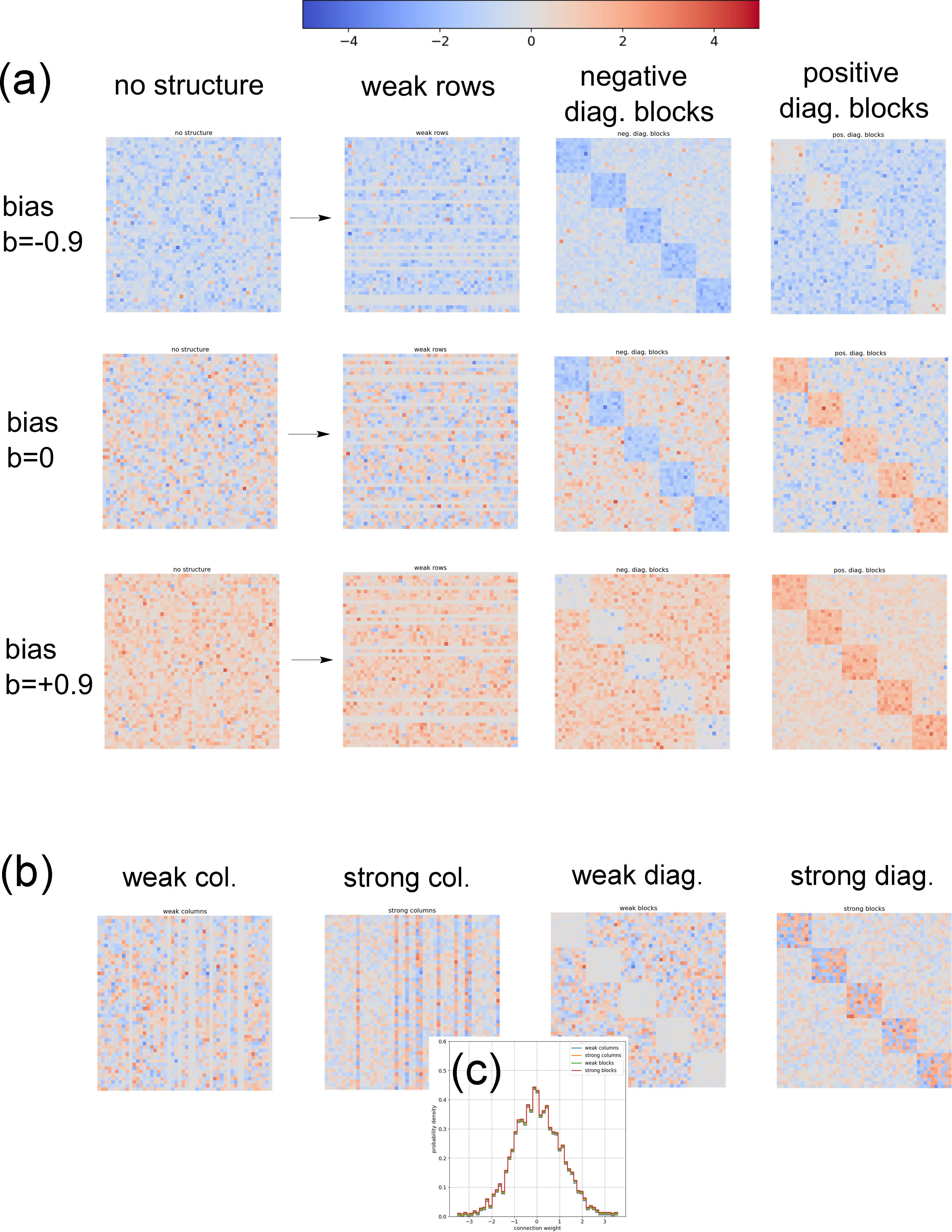

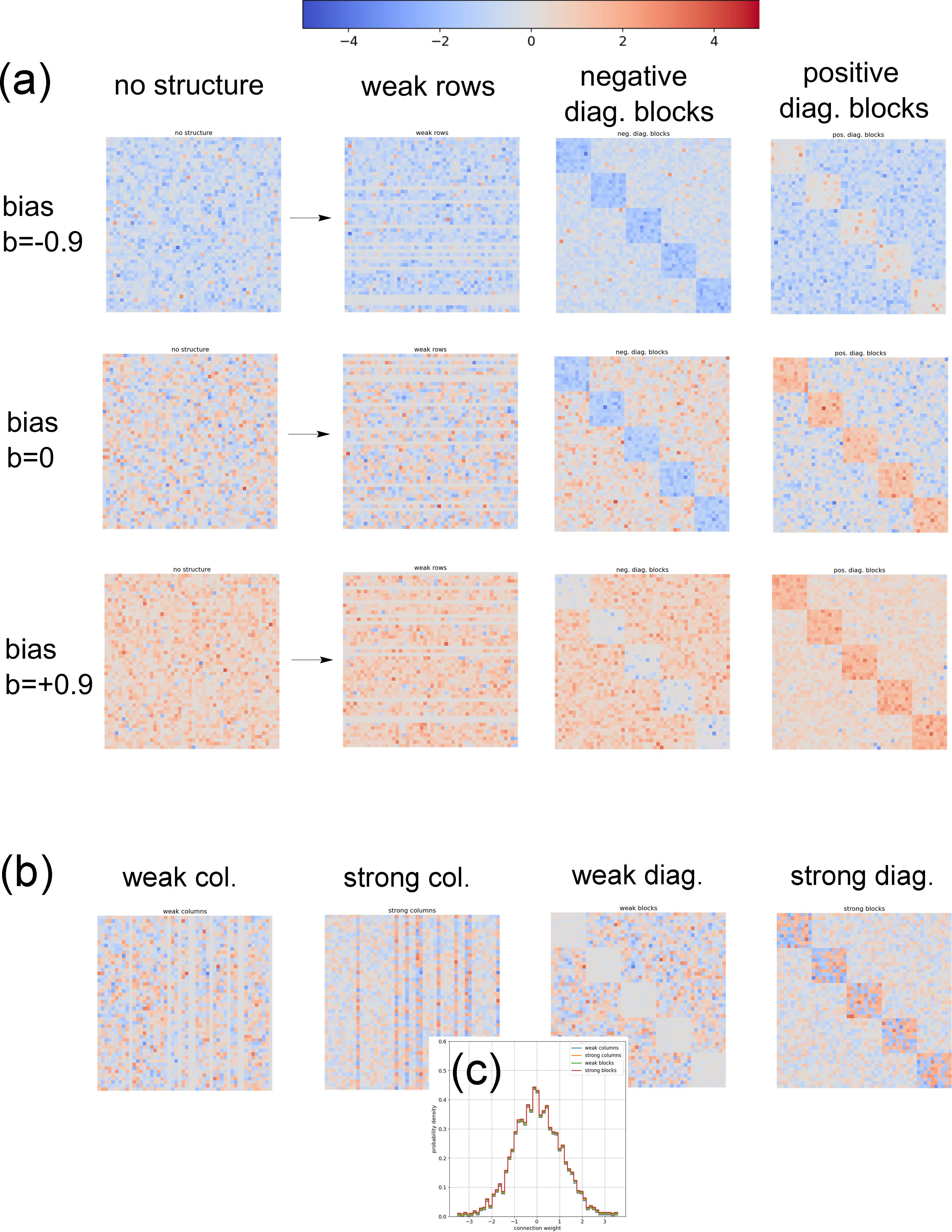

The first strategy introduces controlled heterogeneity to the weight matrix while strictly preserving its global statistical properties; i.e., the marginal weight distribution is invariant. Through a mask-driven permutation method, rows, columns, or diagonal blocks are systematically assigned weaker or stronger-than-average weights, or more positive or more negative values. The configuration with the largest impact assigns the weakest-magnitude entries to a small (e.g., 20%) random subset of rows ("weak rows"). This induces a subpopulation of neurons with reduced recurrent drive, effectively decoupling them from global synchronous attractors and preserving their sensitivity to input structure.
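One way to realize a "weak rows" permutation is sketched below. It is an illustrative reading of the description above, not the authors' procedure: the function name `assign_weak_rows` and the choice to shuffle entries within the masked and unmasked regions are assumptions. Because the entries are only rearranged, the multiset of weights, and hence the marginal distribution, is unchanged.

```python
import numpy as np

def assign_weak_rows(W, fraction, rng):
    """Permute the entries of W so that a random subset of rows
    receives the smallest-magnitude weights."""
    n_rows, n_cols = W.shape
    n_weak = int(round(fraction * n_rows))
    weak_rows = rng.choice(n_rows, size=n_weak, replace=False)
    mask = np.zeros((n_rows, n_cols), dtype=bool)
    mask[weak_rows] = True

    # Sort all entries by magnitude; the first n_weak * n_cols are the weakest.
    flat = W.ravel()
    order = np.argsort(np.abs(flat))
    weakest = flat[order[: n_weak * n_cols]]
    remaining = flat[order[n_weak * n_cols:]]

    # Shuffle within each group so positions inside each region stay random.
    structured = np.empty_like(W)
    structured[mask] = rng.permutation(weakest)
    structured[~mask] = rng.permutation(remaining)
    return structured, weak_rows

rng = np.random.default_rng(1)
W = rng.normal(0.0, 2.0, size=(50, 50))
W_structured, weak_rows = assign_weak_rows(W, fraction=0.2, rng=rng)

print("mean |w| in weak rows:", np.abs(W_structured[weak_rows]).mean())
print("mean |w| overall:     ", np.abs(W_structured).mean())
```

The same mask-based pattern would extend to columns or diagonal blocks by changing which positions the mask marks.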

Global performance (GP), defined as task accuracy averaged over the scanned parameter range, increases by more than 50% relative to random homogeneous configurations (0.813 for the optimal structuring vs. 0.527; see Fig. 1c).
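The size of the improvement follows directly from the two reported GP values; the short calculation below makes the "more than 50%" figure explicit (about 54% in relative terms). The variable names are illustrative.

```python
gp_structured = 0.813  # reported GP for the optimal structuring
gp_random = 0.527      # reported GP for random homogeneous matrices

# Relative improvement of the structured over the random configuration.
relative_gain = gp_structured / gp_random - 1.0
print(f"relative GP improvement: {relative_gain:.1%}")
```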

Figure 4: Examples of matrix structuring and weight distributions, demonstrating how GP-enhancing and non-enhancing permutations manifest in the weight matrix.

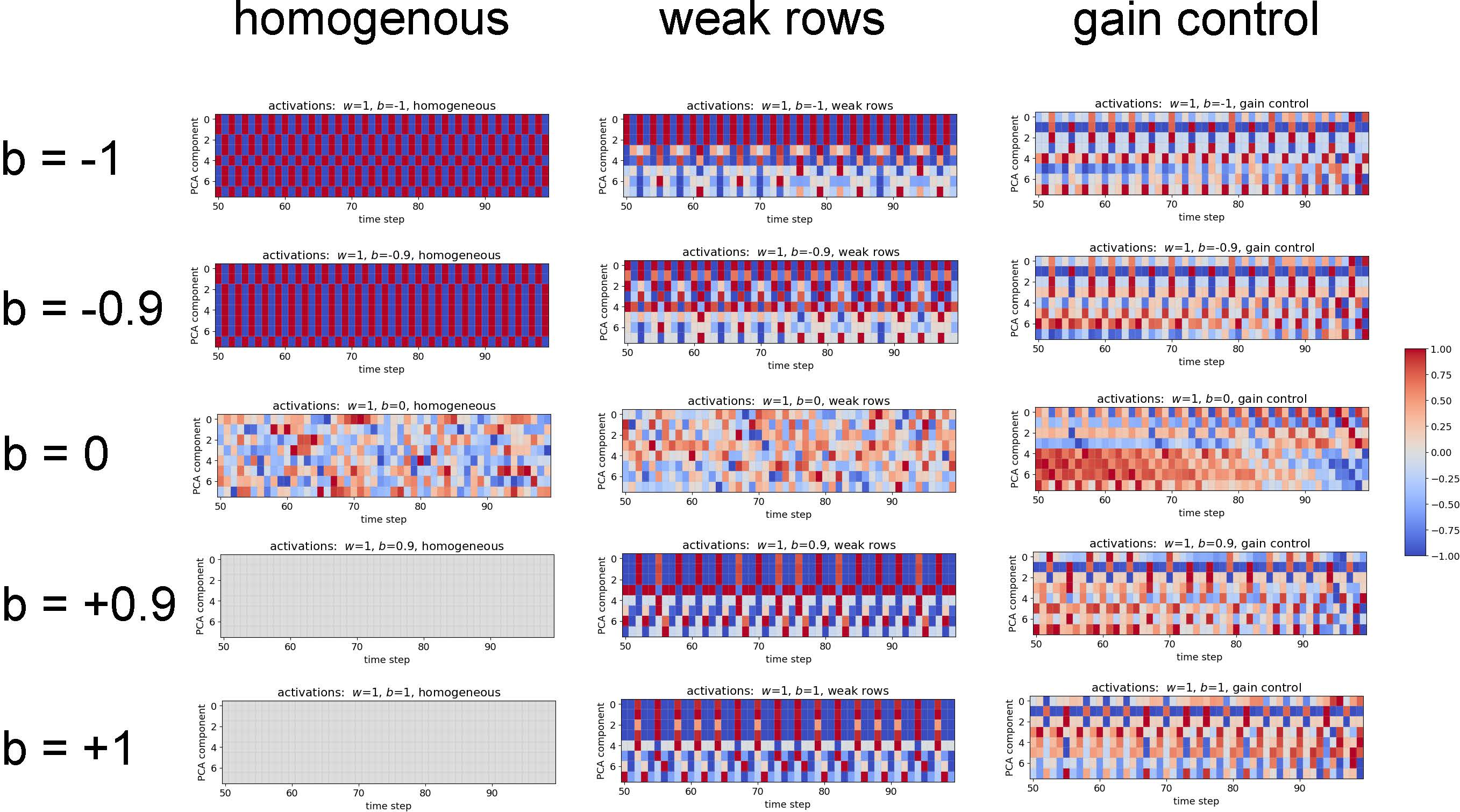

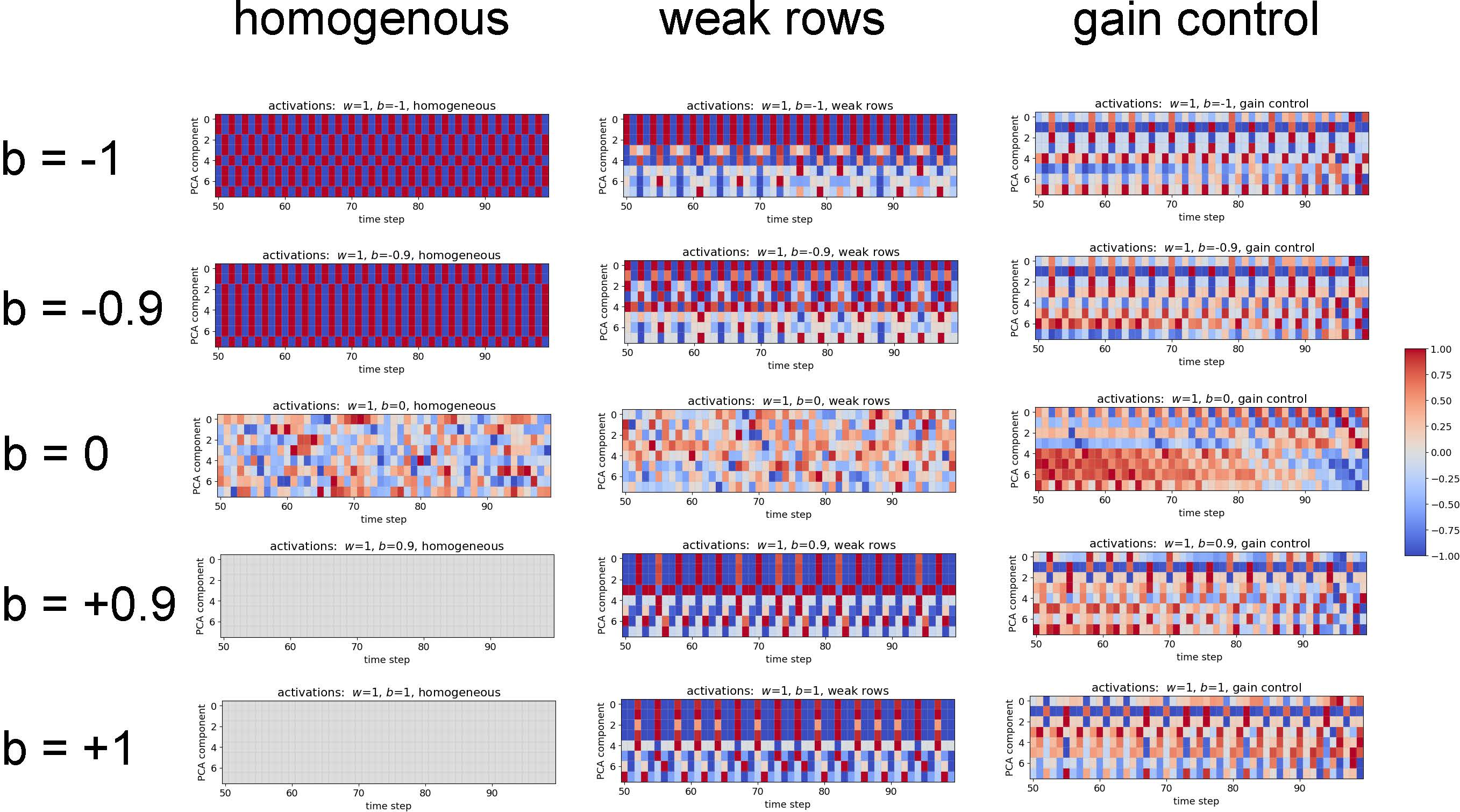

PCA performed on reservoir activations reveals that, in homogeneous reservoirs, all principal components in saturated regimes are near-constant or binary, encoding negligible input-related diversity. In contrast, "weak row" reservoirs exhibit a subset of principal components with rich, input-locked temporal structure even in the presence of global saturation elsewhere, greatly enhancing the informative subspace available to the readout layer.
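The PCA step can be sketched with a singular value decomposition of the centered state matrix. The data below is synthetic and purely illustrative: 40 units pinned at +1 stand in for a saturated population, and 10 units that follow a random input stand in for the weakly coupled subpopulation. The function name `pca` and all sizes are assumptions.

```python
import numpy as np

def pca(states):
    """Return principal-component time courses and explained-variance ratios
    for a (time x units) state matrix."""
    centered = states - states.mean(axis=0)
    # Singular values give the variance along each principal axis.
    u, s, vt = np.linalg.svd(centered, full_matrices=False)
    variance = s**2
    total = variance.sum()
    ratios = variance / total if total > 0 else np.zeros_like(variance)
    return u * s, ratios

rng = np.random.default_rng(2)
u_in = rng.normal(size=300)
saturated = np.ones((300, 40))                              # units pinned at +1
responsive = np.tanh(np.outer(u_in, rng.normal(size=10)))   # input-locked units
components, ratios = pca(np.hstack([saturated, responsive]))

print("components carrying variance:", int((ratios > 1e-9).sum()))
```

In this toy setting the saturated units contribute no variance at all, so every informative component comes from the input-locked subset, which mirrors the qualitative picture described above.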

Figure 2: Principal component analysis demonstrates the emergence of temporally diverse, input-coupled activity in structured systems.

Dynamical Regulation via Automatic Gain Control

The second strategy adds a global feedback unit that adjusts a multiplicative gain in response to the running average of the reservoir's RMS activity, steering that activity toward a setpoint. This implements an online control analogous to neuromodulatory regulation of circuit excitability in biological systems. The effect is the dynamical suppression of both chaotic and saturated attractors, even for arbitrarily strong coupling and arbitrary balance b. All dynamical measures (fluctuation, nonlinearity, covariances) now remain in the optimal range for input representation, and accuracy is near perfect across the entire sweep of network parameters.
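A global gain controller of this kind might look like the sketch below. Several details are assumptions rather than the paper's specification: the gain is applied to the recurrent term only, the running average is an exponential moving average, the gain is updated multiplicatively, and the weight parameterization (|Normal(0, w)| magnitudes, positive with probability (1 + b)/2) is the same illustrative one used for the regime sketch. The names `run_with_agc`, `target_rms`, `rate`, and `smoothing` are hypothetical.

```python
import numpy as np

def run_with_agc(W, inputs, w_in, target_rms=0.5, rate=0.01, smoothing=0.9):
    """Run a tanh reservoir whose recurrent term is scaled by a global gain
    that is nudged so the running-average RMS activity approaches target_rms."""
    n = W.shape[0]
    x = np.zeros(n)
    gain, rms_avg = 1.0, target_rms
    rms_trace = np.empty(len(inputs))
    for t, u in enumerate(inputs):
        x = np.tanh(gain * (W @ x) + w_in * u)
        rms = np.sqrt(np.mean(x**2))
        rms_avg = smoothing * rms_avg + (1.0 - smoothing) * rms
        # Lower the gain when activity exceeds the setpoint, raise it otherwise.
        gain *= np.exp(rate * (target_rms - rms_avg))
        rms_trace[t] = rms
    return rms_trace, gain

rng = np.random.default_rng(3)
n, w, b = 100, 2.0, 0.9  # strong coupling, large positive balance
signs = np.where(rng.random((n, n)) < 0.5 * (1.0 + b), 1.0, -1.0)
W = np.abs(rng.normal(0.0, w, size=(n, n))) * signs
inputs = rng.normal(size=3000)
w_in = 0.3 * rng.normal(size=n)

rms_free, _ = run_with_agc(W, inputs, w_in, rate=0.0)  # gain frozen at 1
rms_agc, final_gain = run_with_agc(W, inputs, w_in)    # gain regulated
print(f"mean RMS without AGC: {rms_free[-500:].mean():.2f}")
print(f"mean RMS with AGC:    {rms_agc[-500:].mean():.2f} (final gain {final_gain:.4f})")
```

Setting `rate=0.0` freezes the gain and recovers the unregulated reservoir, which makes the two runs directly comparable. A small `rate` relative to the averaging window keeps the control loop slow compared with the reservoir dynamics, which helps avoid oscillations of the gain itself.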

Implications

The demonstrated structural and dynamical interventions together broaden the operable dynamical regime of reservoir computers, obviating the need for fine-tuned positioning at the edge of chaos. Practically, this decouples functional performance from microscopic details of network connectivity and statistics, allowing robust deployment in unpredictable or time-varying environments, and enhancing resilience to parameter drift or input outliers. The results are directly relevant to neuromorphic reservoir implementations, where hardware constraints preclude post-hoc retraining or parameter adjustment.

Theoretically, the findings bear on the relationship between heterogeneity, modularity, and computational stability in high-dimensional nonlinear systems. Specifically, even minimal subpopulations with reduced recurrent gain can undermine global attractor pathologies, providing a blueprint for minimal structural interventions, while global homeostatic feedback (AGC) provides a dynamical alternative to architectural fine-tuning. These insights connect closely to the neuroscience of homeostatic and structural plasticity, echo state property stabilization, and biologically-inspired neuromodulatory control [turrigiano2012homeostatic, manjunath2013echo].

Future Directions

Further work should characterize the interplay between local and global stabilization mechanisms, examine scalability to larger networks and more complex tasks, and systematically explore interactions with input-driven transitions into or out of pathological states. The extension to tasks requiring strong nonlinearity or saturation, such as emergent logic or discrete attractor computation, remains open. In addition, integration of local, biologically plausible forms of homeostatic plasticity or inhibitory stabilization may yield further insight into how regulation and architecture jointly shape functional capacity.

Conclusion

This study identifies and characterizes minimal, non-destructive structural and dynamical interventions that suppress runaway excitation and promote robust input-driven computation in strongly coupled recurrent reservoirs. Permutative structuring of weights and automatic gain control each independently restore high performance across wide swaths of parameter space, indicating that fine-tuned network statistics are not strictly necessary for reservoir utility. These principles offer concrete guidance for the design of next-generation adaptive, robust reservoir computing systems.