- The paper presents a full-system simulator that models CXL memory expansion by placing devices at their architecturally correct position on the I/O bus and adhering to the CXL 2.0/3.0 specifications.

- It integrates an unmodified Linux kernel and a complete x86 BIOS to support standard CXL programming models, ensuring realistic OS-level interactions.

- Experimental benchmarks reveal precise cache behavior and memory interleaving impacts, validating the simulator's ability to assess latency and bandwidth under varied configurations.

Comprehensive System-Level Simulation of CXL Memory Expansion with CXLRAMSim v1.0

Motivation and Context

The growing memory footprint of generative AI workloads, particularly during LLM training and inference, is driving rapid adoption of scale-up systems built on CXL-based load/store interconnects. However, accurate architecture-level evaluation of system memory expansion via CXL is hindered by the lack of full-system simulators with precise interface modeling, BIOS support, and protocol fidelity. Prior tools often oversimplify CXL behavior, attach the memory expander to an architecturally invalid bus, or require modifications to the OS or kernel, which limits their usefulness in rigorous micro-architectural or system-level studies.

Simulator Architecture and Implementation

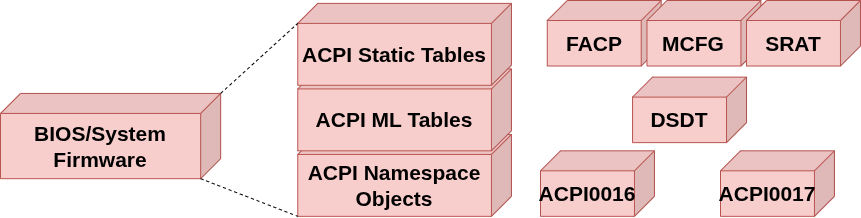

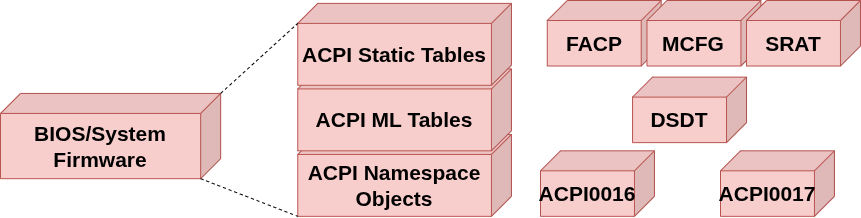

CXLRAMSim v1.0 introduces a gem5-integrated, full-system CXL memory card simulator that places CXL devices at their correct location on the I/O bus, closely adhering to the CXL 2.0/3.0 specifications. By running an unmodified Linux kernel (v6.14+) with its native drivers, the tool transparently supports established OS-level CXL programming models such as zNUMA and Flat mode, as well as vendor toolchains (e.g., CXL-CLI, Intel UMF, Samsung SMDK, SK hynix HMSDK). This integration preserves system-level software fidelity and enables benchmarking under realistic latency and bandwidth conditions, as well as accurate interleaving with system DRAM. Notably, the simulator includes a complete and extensible x86 BIOS implementation supporting the full PCIe/CXL hierarchy, including the ACPI tables MCFG, DSDT, CEDT, and SRAT, which are critical for exposing compute and memory heterogeneity to the OS.

Figure 2: The x86 BIOS model in gem5 is extended to fully support enumeration and configuration of CXL 2.0 devices via appropriate ACPI and PCIe tables.
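To make the role of the SRAT more concrete, the following is a minimal sketch of how firmware could describe a CXL memory range as its own proximity domain. It packs a single ACPI SRAT Memory Affinity Structure (type 1, 40 bytes) following the field order in the ACPI specification. The function name, the example addresses, and the domain numbers are assumptions made for illustration; this is not the simulator's BIOS code.

```python
import struct

# ACPI SRAT Memory Affinity Structure: little-endian, no padding, 40 bytes.
# Fields: type, length, proximity domain, reserved(2), base low, base high,
#         length low, length high, reserved(4), flags, reserved(8).
_MEM_AFFINITY = struct.Struct("<BBIHIIIIIIQ")

FLAG_ENABLED = 1 << 0        # range is usable by the OS
FLAG_HOT_PLUGGABLE = 1 << 1  # range may be hot-added or removed


def srat_memory_affinity(proximity_domain, base, length, flags=FLAG_ENABLED):
    """Pack one Memory Affinity entry (hypothetical helper, illustration only)."""
    return _MEM_AFFINITY.pack(
        1,                       # structure type 1 = Memory Affinity
        _MEM_AFFINITY.size,      # structure length in bytes
        proximity_domain,
        0,                       # reserved
        base & 0xFFFFFFFF, base >> 32,
        length & 0xFFFFFFFF, length >> 32,
        0,                       # reserved
        flags,
        0,                       # reserved
    )


# Assumed example layout: 4 GiB of DRAM in domain 0, and a 2 GiB CXL range
# starting at 4 GiB in domain 1 (a memory-only domain with no CPUs).
dram_entry = srat_memory_affinity(0, 0x0, 4 << 30)
cxl_entry = srat_memory_affinity(1, 4 << 30, 2 << 30)
```

An OS that parses such entries would see the CXL range as a separate NUMA domain, which is the mechanism that lets a memory-only node appear without any kernel changes.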

Protocol Modeling and Hardware Abstractions

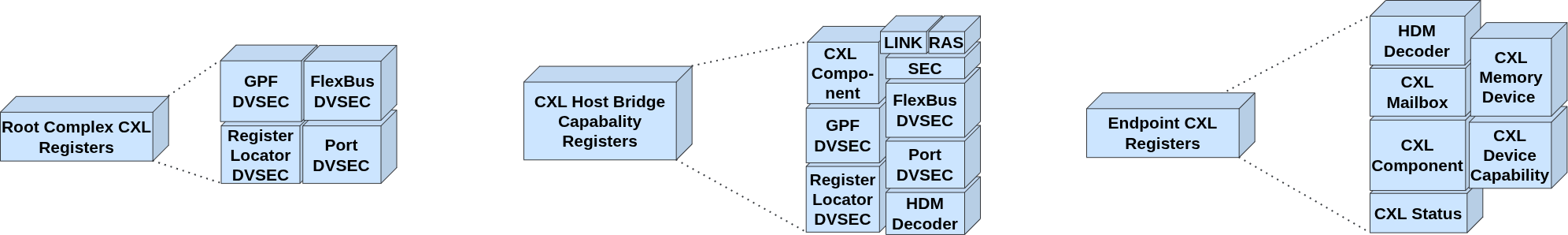

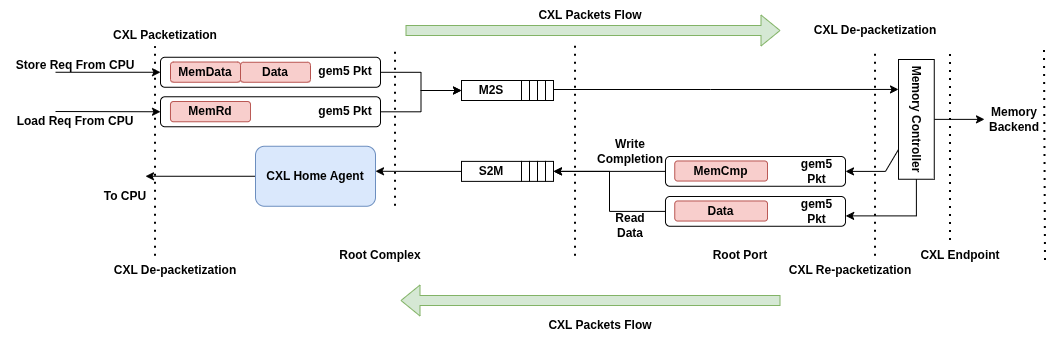

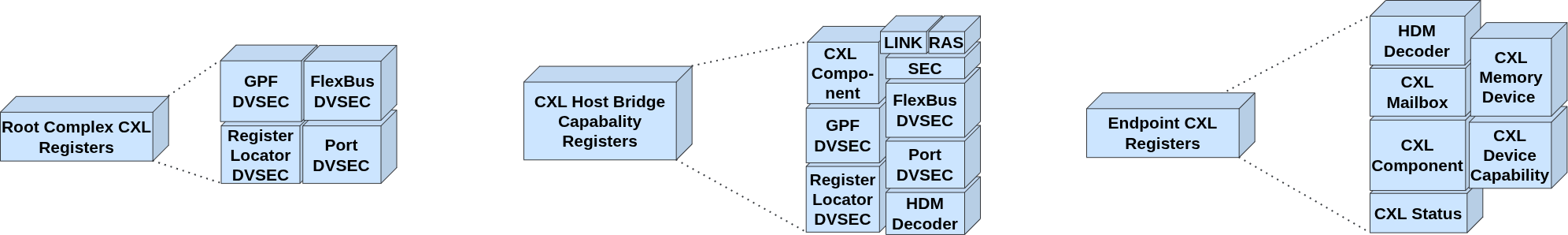

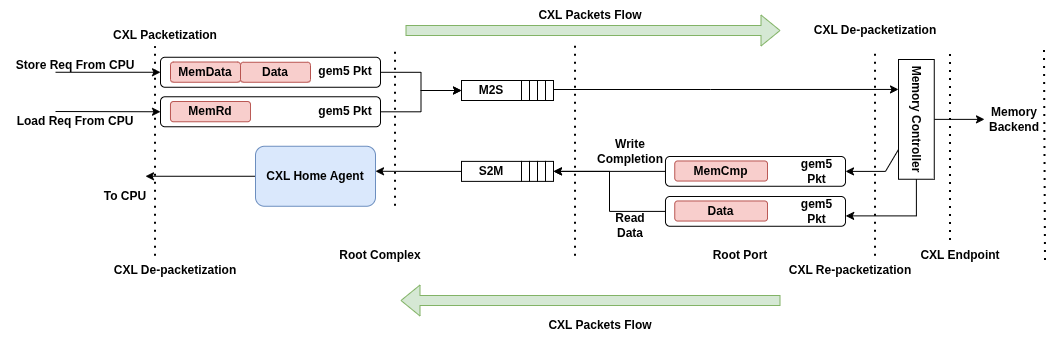

CXLRAMSim provides architecturally correct implementations of both the CXL.io and CXL.mem protocols, including packetization logic in the Root Complex and de-packetization at the Endpoint. Three classes of configuration-space registers are implemented according to the CXL 2.0 specification: Root Complex registers (DVSEC, Flex Bus, Port), Host Bridge registers (Link, RAS, Security, HDM decoders), and device-level Mailbox/Status registers. The transactional semantics, including M2S (master-to-subordinate) and S2M (subordinate-to-master) packet flows and the opcode-level distinction between load/store requests and memory responses, are modeled with explicit control of the associated latencies and bandwidths at the Python layer, so the configuration can be calibrated against real hardware.

Figure 1: The CXL register set implementation in gem5 conforms to all major CXL 2.0 interface and capability requirements for Root Complex, Host Bridge, and Endpoint devices.

Figure 3: The CXL.mem Transaction Layer within gem5 accurately enforces protocol-level packetization, supporting contention, flow control, and precise timing behavior for system-level studies.
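The request/response flow described above can be approximated with a simple additive latency model. The sketch below is only an illustration of how per-stage latencies and link bandwidth might combine for one 64-byte read. The class, its field names, and the example numbers are hypothetical and are not the simulator's actual Python parameters.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CxlLinkParams:
    """Hypothetical per-stage parameters for one CXL.mem path."""
    packetize_ns: float     # packetization at the sending side
    link_ns: float          # one-way link traversal
    depacketize_ns: float   # de-packetization at the receiving side
    device_ns: float        # memory access time inside the endpoint
    bandwidth_gb_s: float   # link bandwidth in GB/s (1 GB = 1e9 bytes)


def read_latency_ns(p: CxlLinkParams, payload_bytes: int = 64) -> float:
    """Unloaded latency of one read: M2S request, device access, S2M response."""
    one_way = p.packetize_ns + p.link_ns + p.depacketize_ns
    # bytes / (GB/s) yields nanoseconds because 1 GB/s equals 1 byte/ns.
    serialization = payload_bytes / p.bandwidth_gb_s
    request = one_way                   # M2S request carries no data payload
    response = one_way + serialization  # S2M response returns the cache line
    return request + p.device_ns + response


# Assumed example values, not measurements from the paper.
params = CxlLinkParams(packetize_ns=5, link_ns=20, depacketize_ns=5,
                       device_ns=80, bandwidth_gb_s=32)
total = read_latency_ns(params)  # 30 + 80 + (30 + 2) = 142.0 ns
```

This model ignores contention and flow control, which the simulator's transaction layer is described as handling. It is meant only to show which knobs a latency/bandwidth calibration would touch.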

A distinguishing feature is the explicit exposure of CXL memory as a CPU-less NUMA node, supporting out-of-the-box zNUMA mode operation. The simulator enables experimentation with page interleaving policies and exposes hardware configuration through both CXL-CLI and standard Linux utilities, without requiring kernel patches or driver modifications.
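On a Linux guest, a CPU-less NUMA node can be recognized from sysfs: each node directory under `/sys/devices/system/node` has a `cpulist` file, and that file is empty for memory-only nodes. The following sketch lists such nodes. The function name and the `sysfs_root` parameter are assumptions introduced here so the logic can also be exercised against a test directory.

```python
from pathlib import Path


def cpuless_numa_nodes(sysfs_root="/sys/devices/system/node"):
    """Return the IDs of NUMA nodes whose cpulist is empty (memory-only nodes)."""
    nodes = []
    for node_dir in Path(sysfs_root).glob("node[0-9]*"):
        cpulist_file = node_dir / "cpulist"
        if not cpulist_file.is_file():
            continue  # skip entries without the expected sysfs attribute
        if cpulist_file.read_text().strip() == "":
            nodes.append(int(node_dir.name[len("node"):]))
    return sorted(nodes)


if __name__ == "__main__":
    print("CPU-less NUMA nodes:", cpuless_numa_nodes())
```

In a zNUMA-style setup one would expect the CXL-backed node to appear in this list, while nodes backed by system DRAM report their local CPUs.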

Experimental Characterization

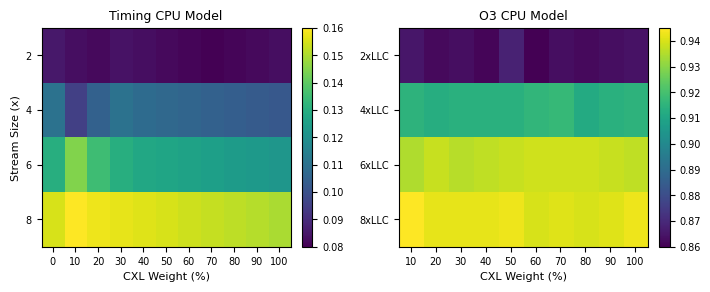

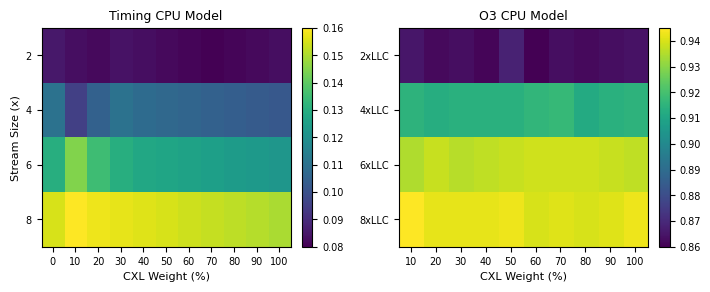

To validate fidelity and provide micro-architectural insights, the authors conducted system-level simulations using the STREAM bandwidth micro-benchmark, parameterized as recommended for stressing memory subsystems (2–8× L2 size). Experiments varied interleaving ratios between DRAM and CXL memory and measured L2 miss rates under Timing and O3 CPU models. The results indicate that LLC miss behavior can be precisely probed as a function of memory expansion configuration, interleaving scheme, and workload footprint.

Figure 4: STREAM micro-benchmark measured L2 miss rate shows the architectural cache effects of CXL memory expansion and explicit page interleaving under various CPU and memory parameters.
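The experimental setup can be sketched in a few lines: size the STREAM arrays relative to the L2 cache, then distribute pages between DRAM and CXL memory in a fixed ratio. Both helpers below are hypothetical. In particular, treating the 2–8× multiplier as the combined footprint of STREAM's three arrays is an assumption, and the repeating-pattern interleave is one simple policy, not necessarily the one used in the paper.

```python
def stream_array_elements(l2_bytes, multiplier, elem_bytes=8, num_arrays=3):
    """Elements per STREAM array so the combined footprint is multiplier x L2.

    Assumes the multiplier applies to all arrays together (three 8-byte
    double arrays by default); adjust if it is meant per array.
    """
    total_bytes = l2_bytes * multiplier
    return total_bytes // (elem_bytes * num_arrays)


def weighted_interleave(num_pages, dram_weight, cxl_weight):
    """Assign pages to 'dram' or 'cxl' in a repeating dram:cxl pattern."""
    if dram_weight < 0 or cxl_weight < 0 or dram_weight + cxl_weight == 0:
        raise ValueError("weights must be non-negative and not both zero")
    period = dram_weight + cxl_weight
    return ["dram" if (page % period) < dram_weight else "cxl"
            for page in range(num_pages)]


# Assumed example: 1 MiB L2, 4x footprint, 3:1 DRAM-to-CXL interleaving.
elements = stream_array_elements(l2_bytes=1 << 20, multiplier=4)
placement = weighted_interleave(num_pages=8, dram_weight=3, cxl_weight=1)
cxl_fraction = placement.count("cxl") / len(placement)  # 0.25
```

Sweeping the multiplier and the weight ratio reproduces the shape of the parameter space described above: workload footprint on one axis and interleaving ratio on the other.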

Practical and Theoretical Implications

CXLRAMSim v1.0 enables rigorous and architecturally faithful evaluation of CXL memory expansion in full-system simulation with an unmodified OS, addressing a methodological gap in current hardware/software co-design research. The simulator's extensible BIOS and firmware stack allows modeling of advanced configuration scenarios, such as multiple logical CXL devices (in preparation for MLD support in future releases), while the protocol-level precision allows micro-architectural investigations into cache pollution, latency hiding, data placement, NUMA-aware scheduling, and resource-sharing policies in heterogeneous memory architectures. Vendor-specific and user-tunable latency/bandwidth controls make CXLRAMSim adaptable for performance studies targeting forthcoming hardware revisions.

On the practical side, the simulator gives developers of device drivers, CXL-aware runtime systems, and memory-centric middleware a platform for evaluating integration and performance before silicon is available. Its broad support for contemporary CXL programming models makes it useful in both industrial and academic settings.

Future Directions

Planned enhancements include CXL switches for more complex topologies (v2.0), support for Processing-Near-Memory (PNM) architectures (v3.0), and full Type-2/Type-1 device modeling (v4.0). Together, these steps would move CXLRAMSim toward a comprehensive toolchain for end-to-end system architecture research in the CXL ecosystem.

Conclusion

CXLRAMSim v1.0 provides a BIOS- and protocol-accurate full-system simulation environment for CXL memory expansion in gem5. By enabling unmodified OS and toolchain integration, supporting vendor programming models, and exposing low-level architectural details, it establishes a baseline for future research on memory disaggregation, NUMA-aware scheduling, and heterogeneous system architectures. Its architectural correctness and extensibility make it a valuable tool for system architects and memory researchers working on CXL-enabled platforms.