- The paper introduces a stochastic bilevel reformulation that extends projection methods to inconsistent convex feasibility problems using adaptive Polyak-like stepsizes.

- The proposed SBBP method utilizes random mini-batches and Bregman geometry to achieve both sublinear ergodic and linear convergence rates under noise.

- It demonstrates robust performance and optimal block size selection for scalability in signal recovery and computational mathematics applications.

Stochastic Block Bregman Projection with Polyak-like Stepsize for Possibly Inconsistent Convex Feasibility Problems

Introduction and Motivation

The paper "Stochastic Block Bregman Projection with Polyak-like Stepsize for Possibly Inconsistent Convex Feasibility Problems" (2603.29348) presents a unified algorithmic and analytical framework for the convex feasibility problem (CFP) in finite-dimensional Hilbert spaces, including the case where the intersection of the underlying convex sets is empty (inconsistent CFPs), a setting for which theoretical tools and scalable algorithms remain limited. The work addresses scalability, inconsistency, and the need for regularized solutions in inverse problems, signal recovery, and computational mathematics. The authors propose a stochastic bilevel reformulation that generalizes prior stochastic and Bregman projection methods, and they establish convergence guarantees with both sublinear and linear rates.

The methodological starting point is the stochastic bilevel reformulation of the CFP. Instead of seeking a point in $C=\bigcap_{i=1}^{m} C_i$, which may be empty because of modeling inaccuracies or noise, the model selects a regularized surrogate solution:

$$\min_{x\in\mathbb{R}^n} \psi(x) \quad \text{s.t.} \quad x \in \arg\min_{x\in\mathbb{R}^n} F(x), \qquad F(x)=\mathbb{E}[f_i(x)]=\sum_{i=1}^{m} P(i)\, f_i(x)$$

where $f_i(x)$ quantifies the deviation from the convex set $C_i$, and $\psi$ imposes structural regularization (e.g., sparsity or low norm). This bilevel structure integrates regularizers such as the $\ell_1$ norm directly and accommodates inconsistent feasibility, generalizing both classical projection and proximity-minimization approaches. Rather than requiring geometric regularity of the sets, the analysis relies on a Bregman distance growth condition on $F$.
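To make the inner problem concrete, here is a minimal sketch assuming each $C_i$ is a hyperplane $\{x : \langle a_i, x\rangle = b_i\}$ and $f_i(x)=\tfrac12\operatorname{dist}(x,C_i)^2$; these choices, and the helper names `f_i`, `grad_f_i`, and `proximity`, are illustrative assumptions rather than the paper's definitions. The toy system $x=0$, $x=2$ is inconsistent, yet $F$ still has a well-defined minimizer ($x=1$) that serves as the surrogate solution.

```python
# Hypothetical instance: each C_i is a hyperplane {x : <a_i, x> = b_i}.
# f_i(x) = 0.5 * dist(x, C_i)^2 measures the deviation from C_i,
# and F(x) = sum_i P(i) f_i(x) is the inner (proximity) objective.

def dot(u, v):
    return sum(ui * vi for ui, vi in zip(u, v))

def f_i(x, a, b):
    """Half squared Euclidean distance from x to the hyperplane <a, x> = b."""
    r = dot(a, x) - b
    return 0.5 * r * r / dot(a, a)

def grad_f_i(x, a, b):
    """Gradient of f_i: ((<a, x> - b) / ||a||^2) * a."""
    scale = (dot(a, x) - b) / dot(a, a)
    return [scale * ai for ai in a]

def proximity(x, rows, rhs, probs):
    """F(x) = E[f_i(x)] under the sampling distribution P."""
    return sum(p * f_i(x, a, b) for a, b, p in zip(rows, rhs, probs))

# Inconsistent toy system: x = 0 and x = 2 cannot both hold.
rows, rhs, probs = [[1.0], [1.0]], [0.0, 2.0], [0.5, 0.5]
print(proximity([1.0], rows, rhs, probs))  # 0.5, the minimum value of F
```

For a consistent system, $F$ vanishes exactly on $C$, so minimizing $F$ recovers the classical feasibility problem.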

SBBP Method: Algorithmic Structure

The primary algorithmic development is the Stochastic Block Bregman Projection (SBBP) method with minimization over random mini-batches and Bregman distances leveraged for projections:

- Stochastic Mini-batch: At iteration $k$, a random block $J_k\subset[m]$ is sampled, and the stochastic gradient $\sum_{i\in J_k} w_{i,k}\nabla f_i(x_k)$ is evaluated.

- Dual Update: $x_{k+1}^* = x_k^* - t_k\sum_{i\in J_k} w_{i,k}\nabla f_i(x_k)$, where $t_k$ is the stepsize.

- Primal Update: $x_{k+1} = \nabla\psi^*(x_{k+1}^*)$, where $\psi^*$ is the convex conjugate of $\psi$.

Block selection enables parallelization and computational efficiency, while the Bregman geometry ties the regularizer directly into the iteration dynamics, so that properties such as sparsity promotion and strong convexity carry over to the iterates.
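A single mini-batch mirror step can be sketched as follows: the dual variable takes a weighted stochastic-gradient step and the primal iterate is recovered through $\nabla\psi^*$. This is a minimal sketch under several assumptions not taken from the paper: hyperplane constraints with $f_i(x)=\tfrac12\operatorname{dist}(x,C_i)^2$, uniform weights $w_{i,k}=1/|J_k|$, a fixed stepsize `t` standing in for DecmSPS or the projective rule, and the hypothetical regularizer $\psi(x)=\lambda\|x\|_1+\tfrac12\|x\|_2^2$, for which $\nabla\psi^*$ is soft thresholding. Function names are illustrative.

```python
import math
import random

def dot(u, v):
    return sum(ui * vi for ui, vi in zip(u, v))

def soft_threshold(z, lam):
    """grad psi*(z) for the assumed psi(x) = lam*||x||_1 + 0.5*||x||_2^2."""
    return [math.copysign(max(abs(zi) - lam, 0.0), zi) for zi in z]

def sbbp_step(x_dual, rows, rhs, block, t, lam):
    """One mirror step on the block J_k = block (uniform weights 1/|J_k|)."""
    x = soft_threshold(x_dual, lam)          # current primal iterate
    w = 1.0 / len(block)
    g = [0.0] * len(x_dual)
    for i in block:                          # weighted mini-batch gradient
        a, b = rows[i], rhs[i]
        scale = w * (dot(a, x) - b) / dot(a, a)
        for j, aj in enumerate(a):
            g[j] += scale * aj
    new_dual = [zj - t * gj for zj, gj in zip(x_dual, g)]   # dual update
    return new_dual, soft_threshold(new_dual, lam)          # primal update

def sbbp(rows, rhs, block_size, t, lam, iters, seed=0):
    """Run the sketch with blocks sampled uniformly without replacement."""
    rng = random.Random(seed)
    x_dual = [0.0] * len(rows[0])
    x = soft_threshold(x_dual, lam)
    for _ in range(iters):
        block = rng.sample(range(len(rows)), block_size)   # random block J_k
        x_dual, x = sbbp_step(x_dual, rows, rhs, block, t, lam)
    return x

print(sbbp([[1.0, 0.0], [0.0, 1.0]], [3.0, 0.5], 2, 1.0, 1.0, 200))
```

With $\psi=\tfrac12\|x\|_2^2$ (that is, `lam = 0`), the primal and dual iterates coincide and the sketch reduces to a Euclidean stochastic block projection step.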

Two categories of stepsizes are analyzed:

- Decreasing Mirror Stochastic Polyak Stepsize (DecmSPS): An adaptive Polyak-like rule that sets the step length from the (noisy) stochastic function suboptimality and decreases it over the iterations, ensuring convergence in expectation to the minimizer set even for inconsistent problems.

- Projective Stepsizes: Stepsizes derived from a cut-and-project interpretation, corresponding to Bregman projections onto separating halfspaces determined by stochastic cuts.
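The two stepsize families can be sketched in the Euclidean special case. The exact DecmSPS formula from the paper is not reproduced here: `decreasing_polyak_stepsize` is a hypothetical rule combining a Polyak ratio with parameter `c`, an upper truncation `cap`, a $1/\sqrt{k+1}$ decay, and monotonicity with respect to the previous step. `projective_stepsize` is the step that projects $x$ onto the halfspace cut $\{z : f(x)+\langle g, z-x\rangle \le 0\}$, which in the Euclidean setting is $f(x)/\|g\|^2$. Using the Euclidean norm in place of a dual norm assumes $\psi$ is 1-strongly convex with respect to $\|\cdot\|_2$.

```python
import math

def projective_stepsize(f_val, grad):
    """Euclidean projection of x onto the cut {z : f(x) + <g, z - x> <= 0}.

    The step x - t*g with t = f(x) / ||g||^2 lands on the cut's boundary;
    returns 0 if x already satisfies the cut.
    """
    g2 = sum(gi * gi for gi in grad)
    if f_val <= 0.0 or g2 == 0.0:
        return 0.0
    return f_val / g2

def decreasing_polyak_stepsize(k, f_val, grad, c=0.5, cap=10.0,
                               prev=None, lower_bound=0.0):
    """Hypothetical decreasing, truncated Polyak-type rule:

    t_k = min((f - l*) / (c * ||g||^2), cap) / sqrt(k + 1), never above prev.
    lower_bound = 0 is a valid l* because each f_i >= 0 in feasibility problems.
    """
    g2 = sum(gi * gi for gi in grad)
    if g2 == 0.0:
        return 0.0
    raw = max(f_val - lower_bound, 0.0) / (c * g2)
    t = min(raw, cap) / math.sqrt(k + 1)
    if prev is not None:
        t = min(t, prev)
    return t

# Toy mini-batch values at an iterate: f_J(x) = 1.0 and g = [1.0, 1.0].
print(projective_stepsize(1.0, [1.0, 1.0]))  # 0.5
t = None
for k in range(4):
    t = decreasing_polyak_stepsize(k, 1.0, [1.0, 1.0], prev=t)
    print(k, t)
```

Here `c` and `cap` loosely play the roles of the Polyak parameter and the upper truncation; the decay is what lets the steps shrink when no point satisfies all constraints.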

Theoretical Results: Convergence and Rates

Sublinear and Linear Convergence

SBBP admits both sublinear and linear convergence rates in expectation under mild assumptions on the proximity function $F$. With DecmSPS, the method attains an ergodic sublinear rate for the expected inner objective and, under a Bregman distance growth condition, linear convergence in Bregman distance to the minimizer set. Critically, exact convergence in expectation to the appropriate minimizer set holds even for inconsistent CFPs.
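The linear rate is measured in Bregman distance, $D_\psi^{x^*}(x,y)=\psi(y)-\psi(x)-\langle x^*, y-x\rangle$ with $x^*\in\partial\psi(x)$. Growth conditions of this kind typically bound $F(x)-\min F$ from below by a multiple of the Bregman distance to the minimizer set; the paper's exact statement may differ. The sketch below computes $D_\psi$ for the hypothetical choice $\psi(x)=\lambda\|x\|_1+\tfrac12\|x\|_2^2$, pairing each primal point with the dual variable that generates it, so the required subgradient is available for free.

```python
import math

# Hypothetical regularizer psi(x) = lam*||x||_1 + 0.5*||x||_2^2 (1-strongly convex).

def soft_threshold(z, lam):
    """grad psi*(z): shrink each entry toward zero by lam."""
    return [math.copysign(max(abs(zi) - lam, 0.0), zi) for zi in z]

def psi(x, lam):
    return lam * sum(abs(xi) for xi in x) + 0.5 * sum(xi * xi for xi in x)

def bregman_distance(x_dual, y, lam):
    """D_psi^{x*}(x, y) = psi(y) - psi(x) - <x*, y - x> with x = grad psi*(x*).

    x* is then a subgradient of psi at x, so D >= 0.5 * ||y - x||^2.
    """
    x = soft_threshold(x_dual, lam)
    linear = sum(zi * (yi - xi) for zi, yi, xi in zip(x_dual, y, x))
    return psi(y, lam) - psi(x, lam) - linear

print(bregman_distance([2.0], [0.0], 1.0))  # 0.5
```

Because $\psi$ is 1-strongly convex, convergence in this Bregman distance also implies convergence in the Euclidean norm.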

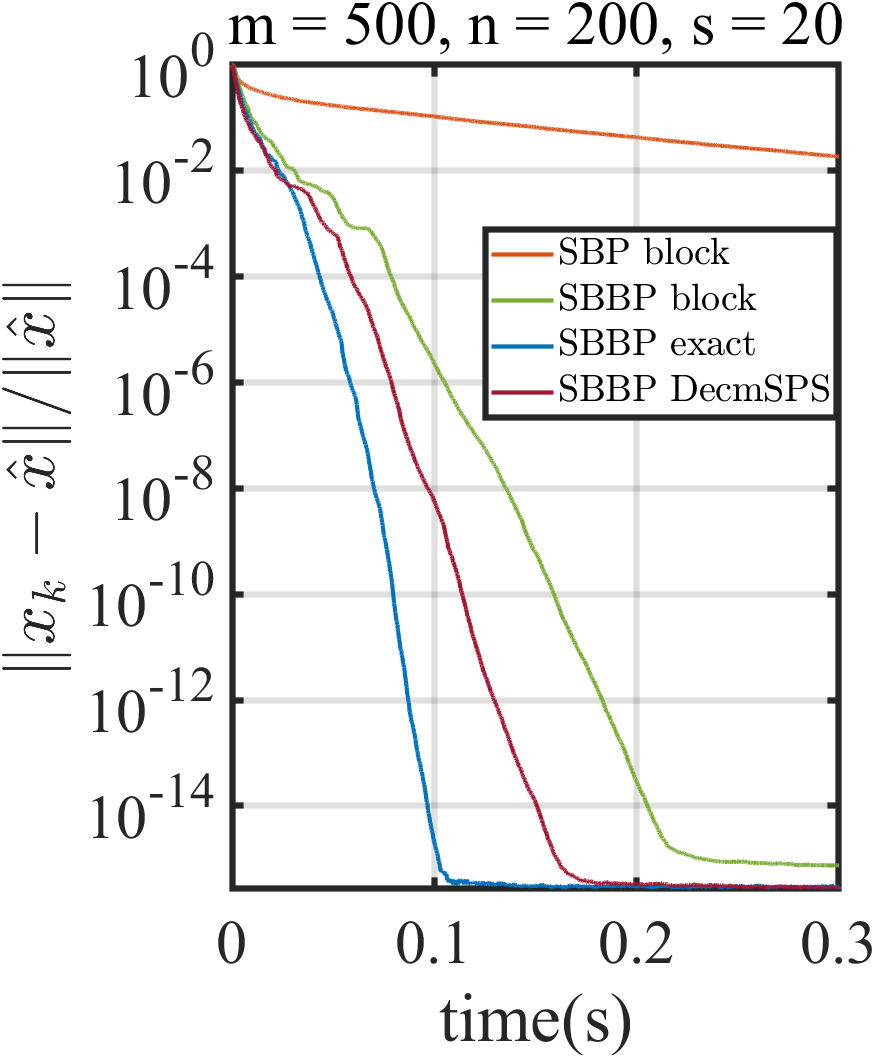

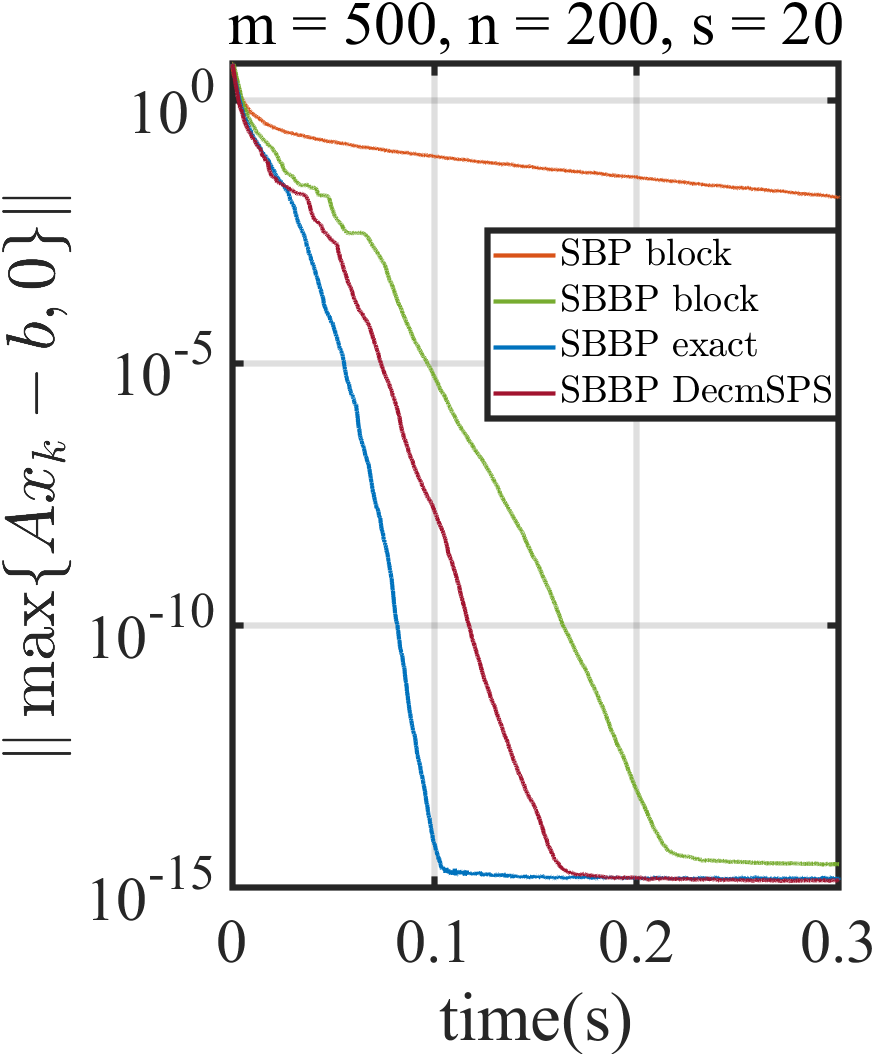

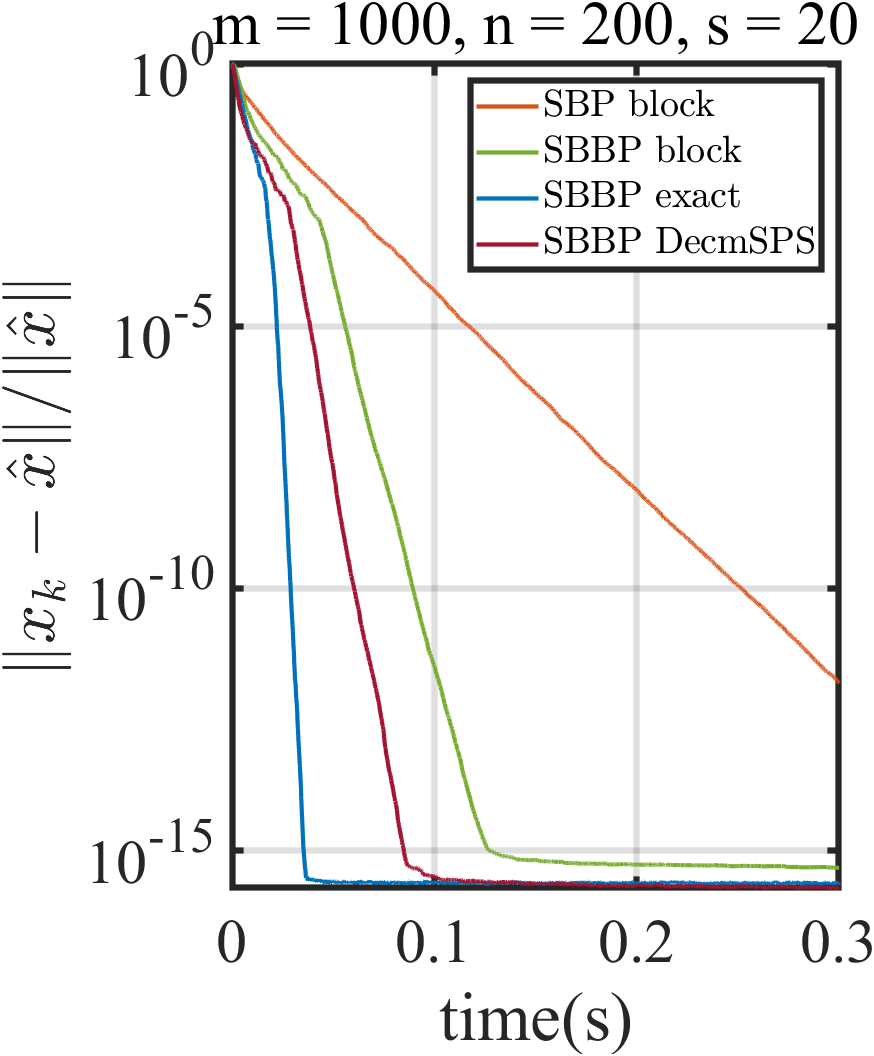

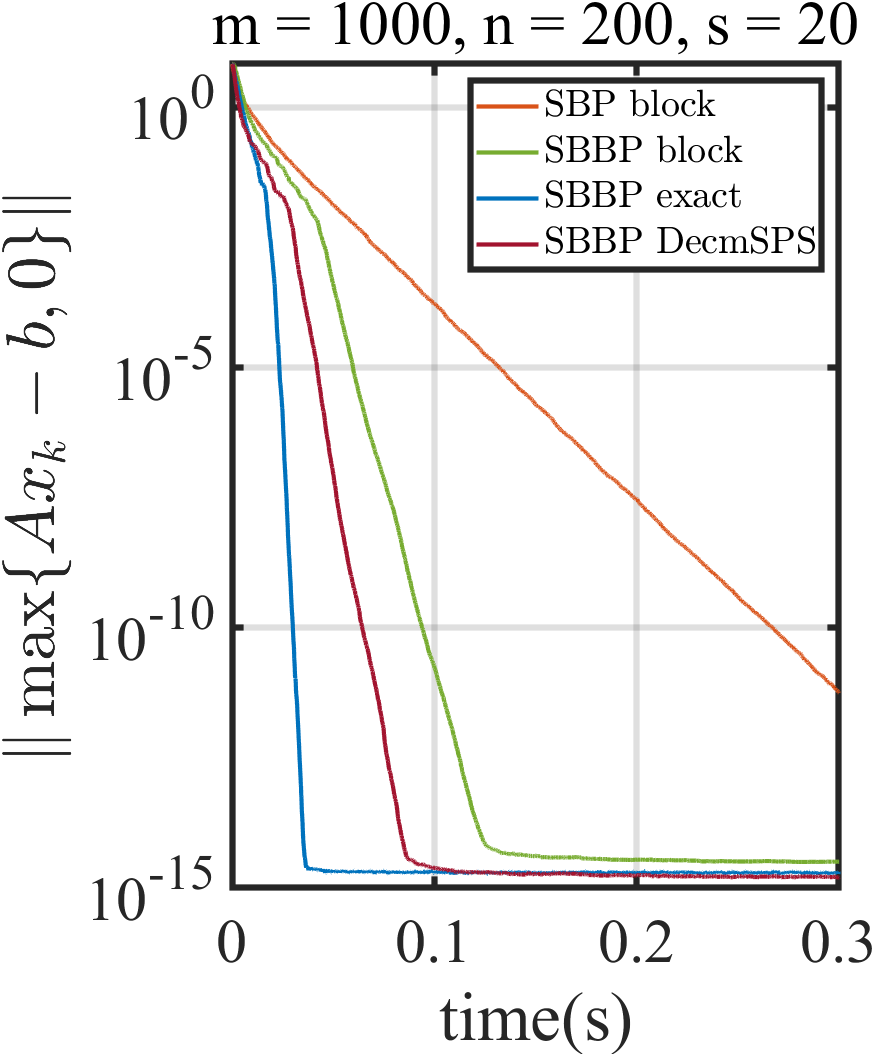

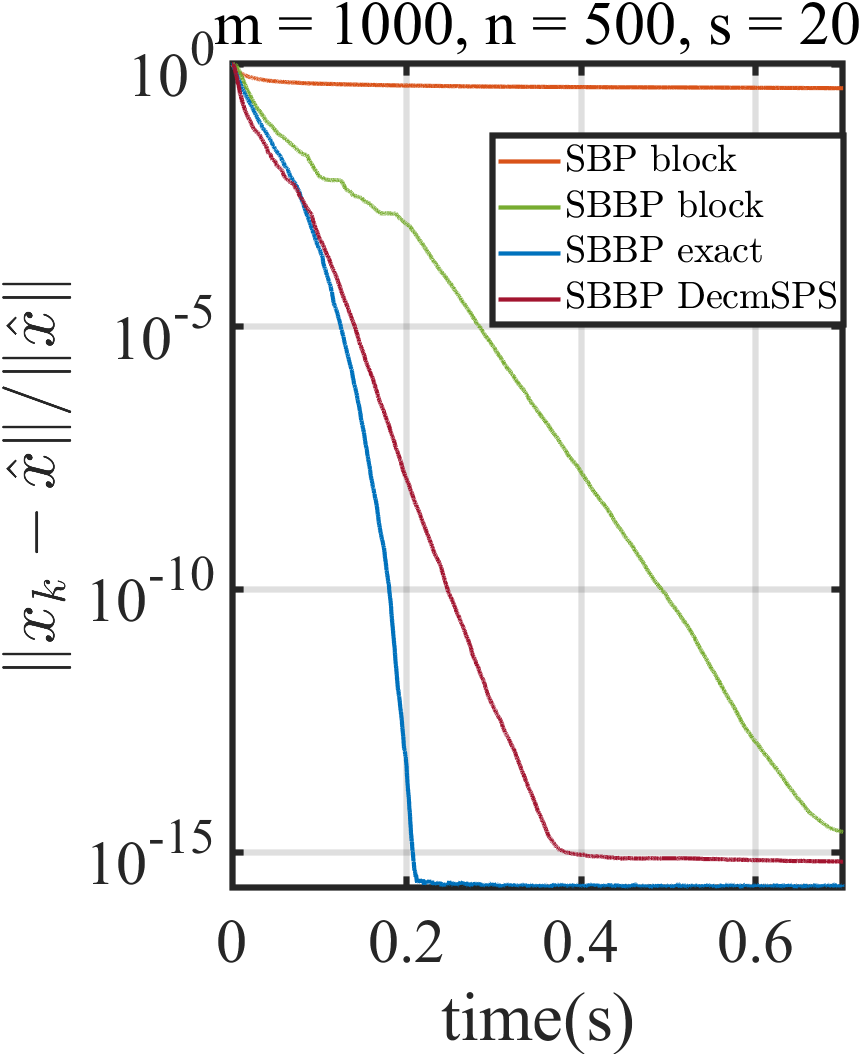

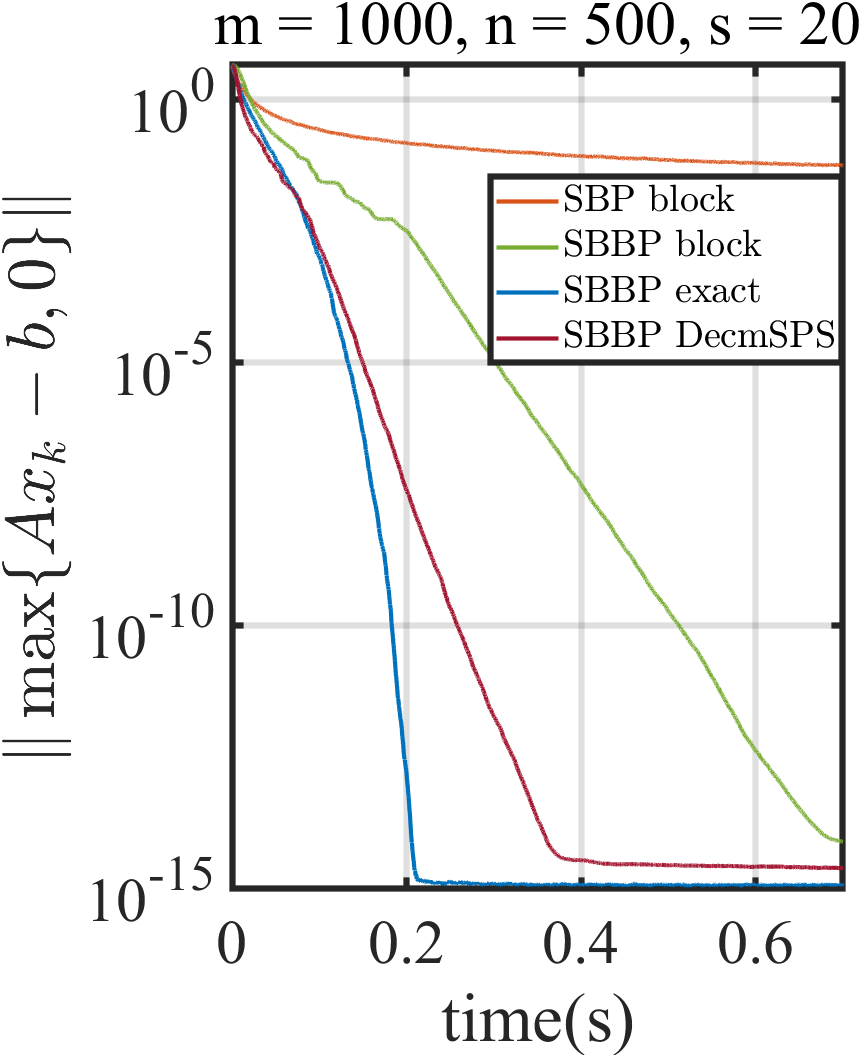

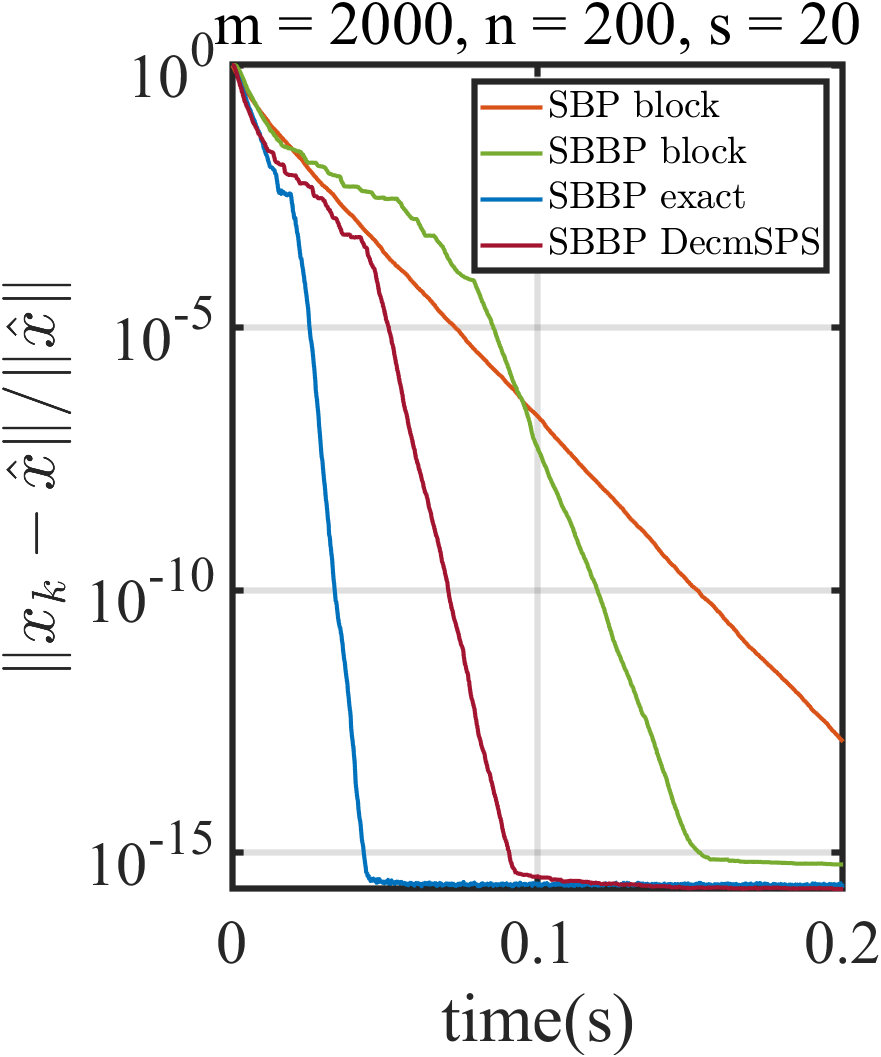

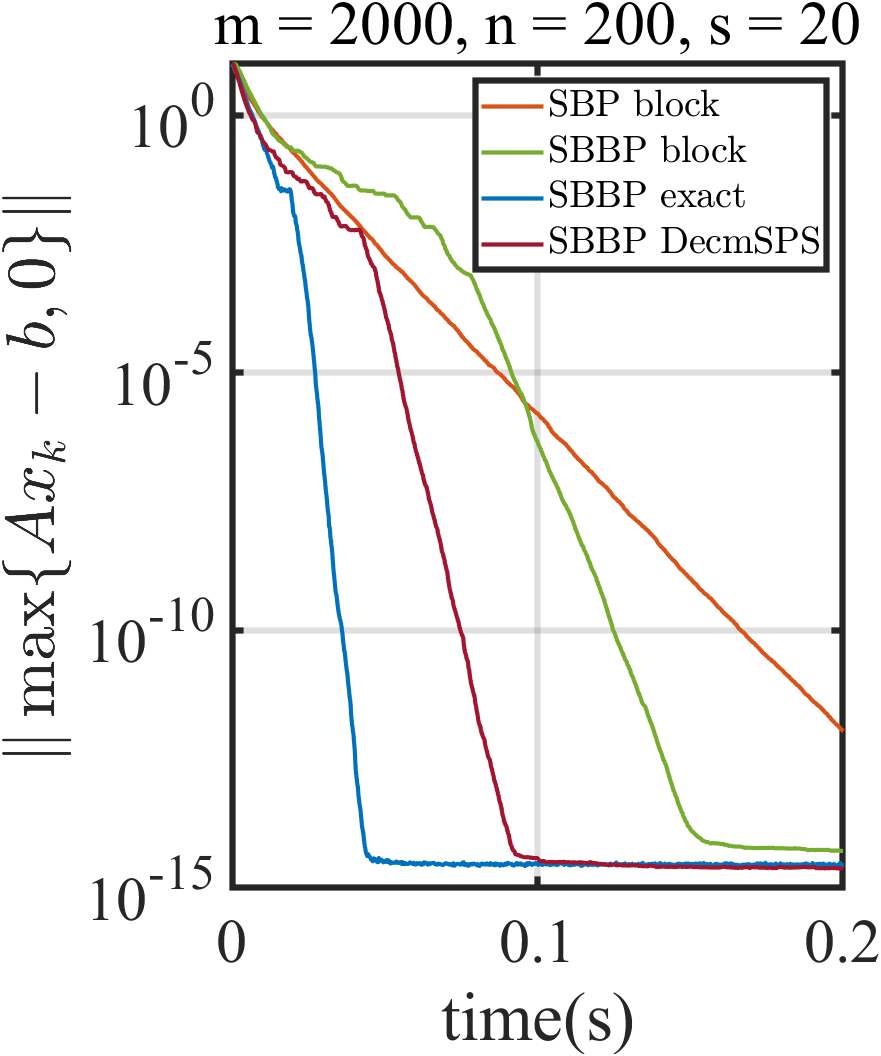

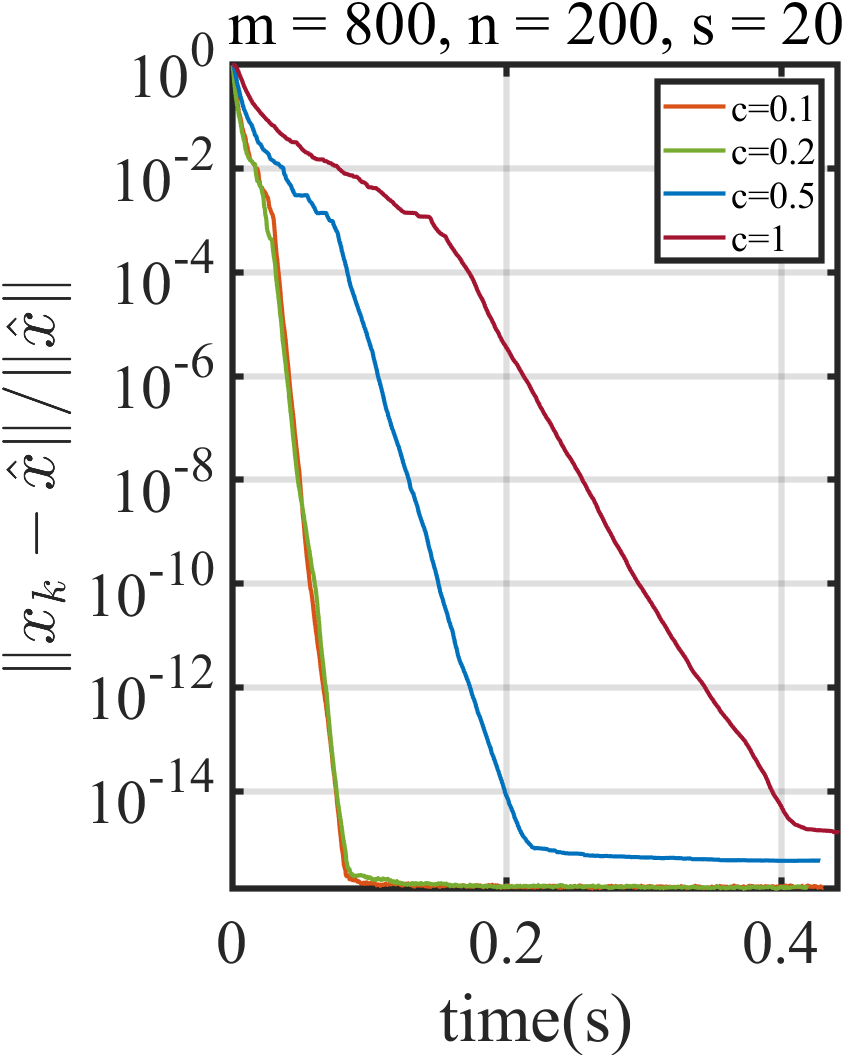

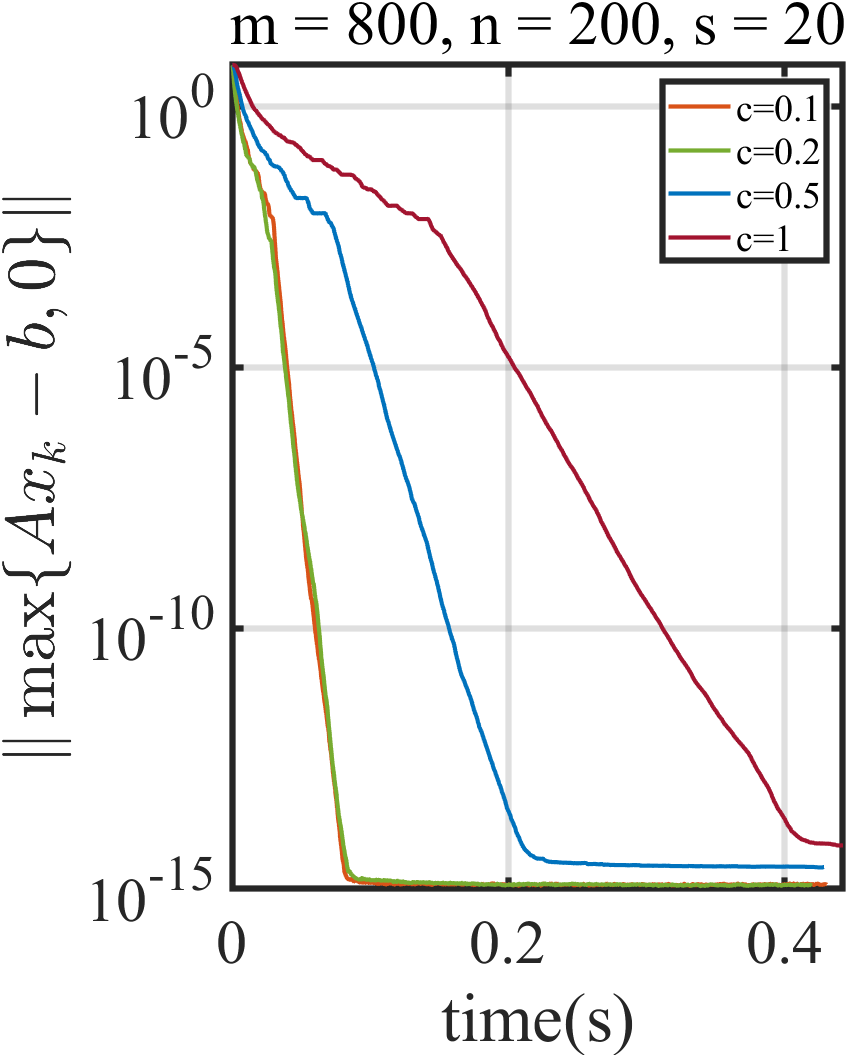

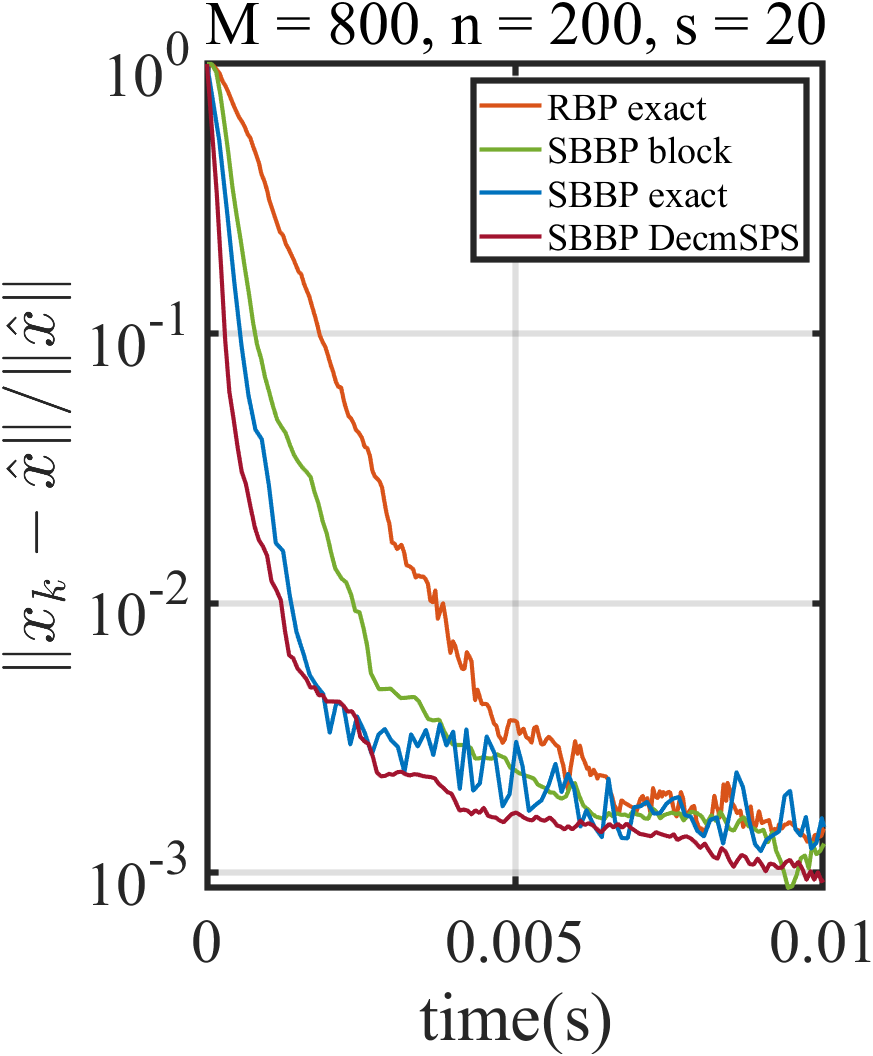

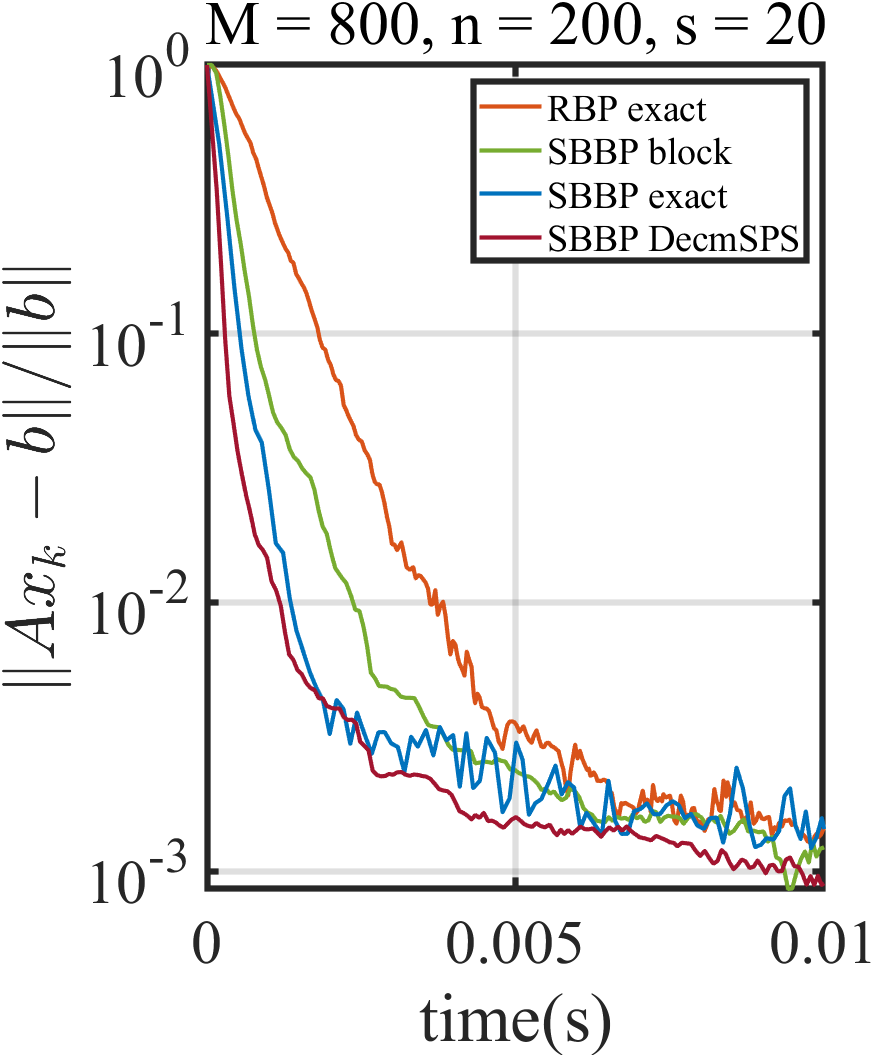

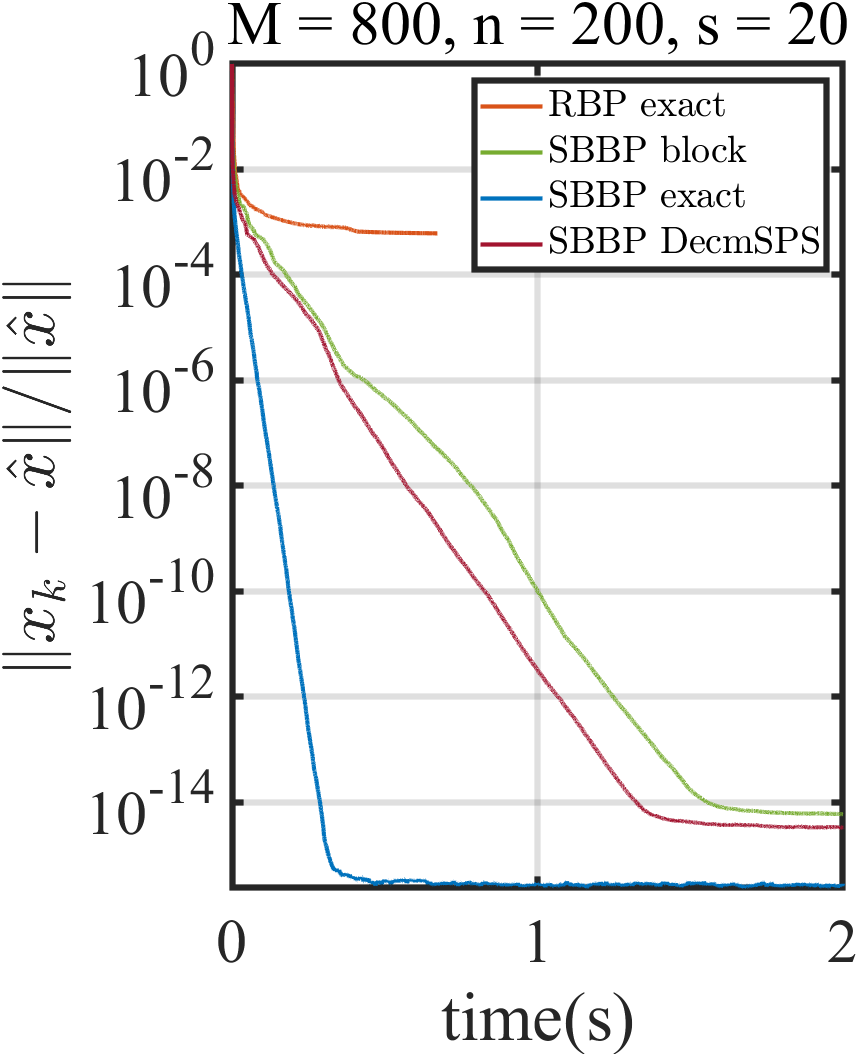

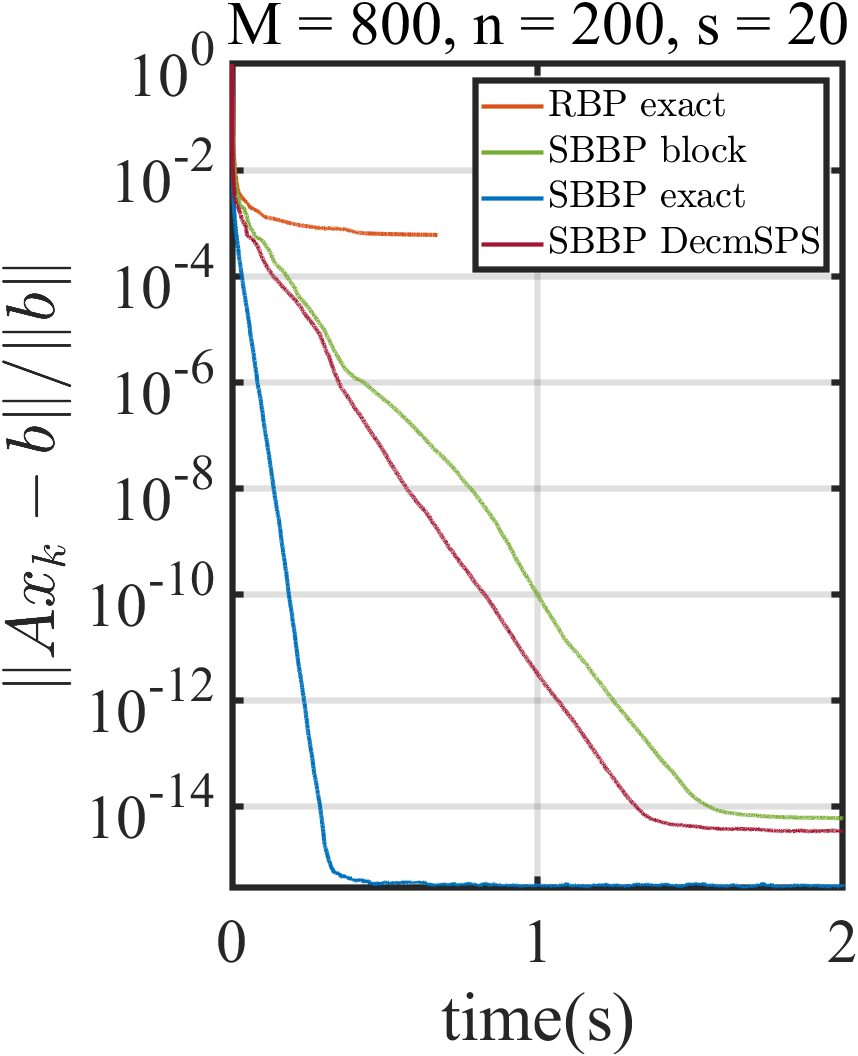

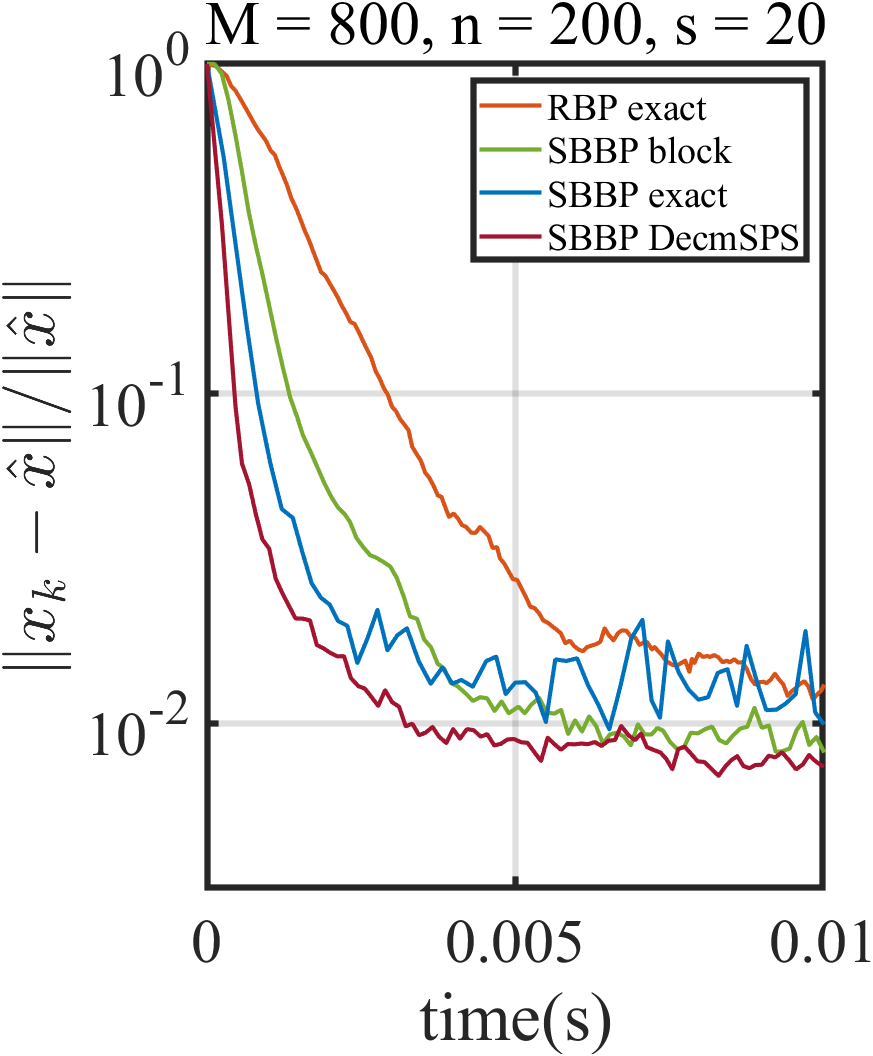

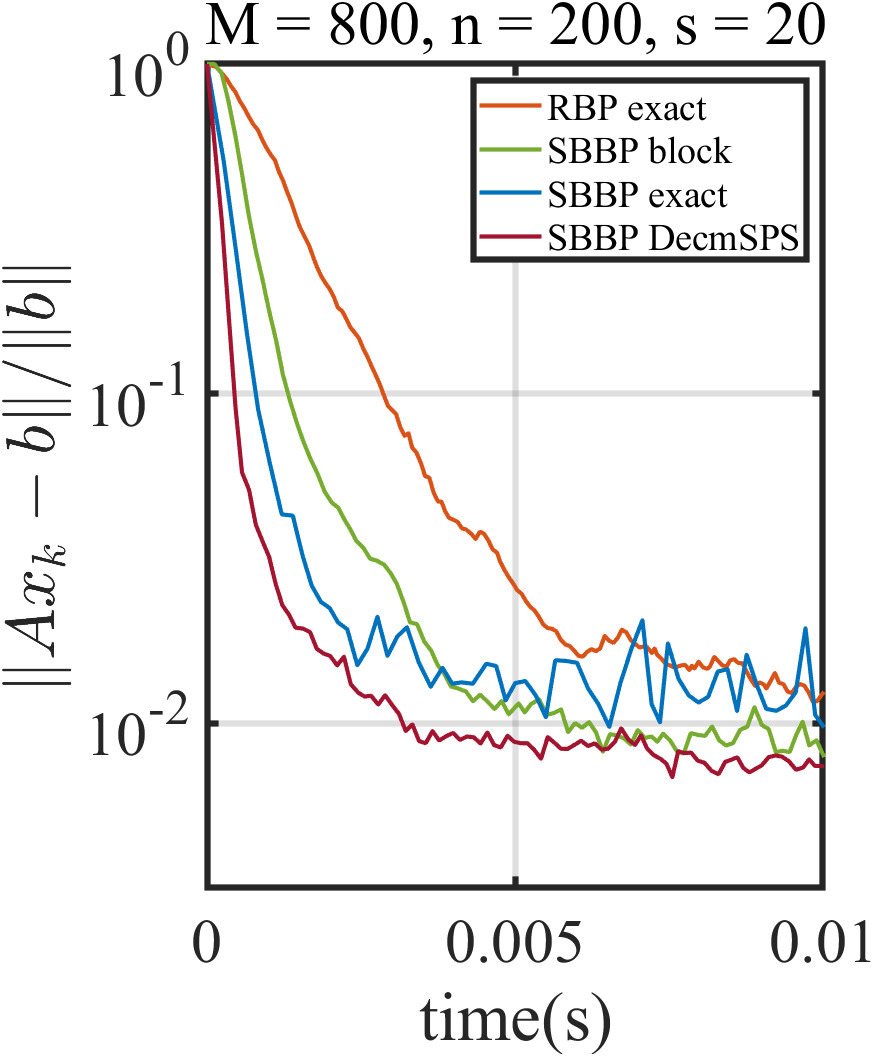

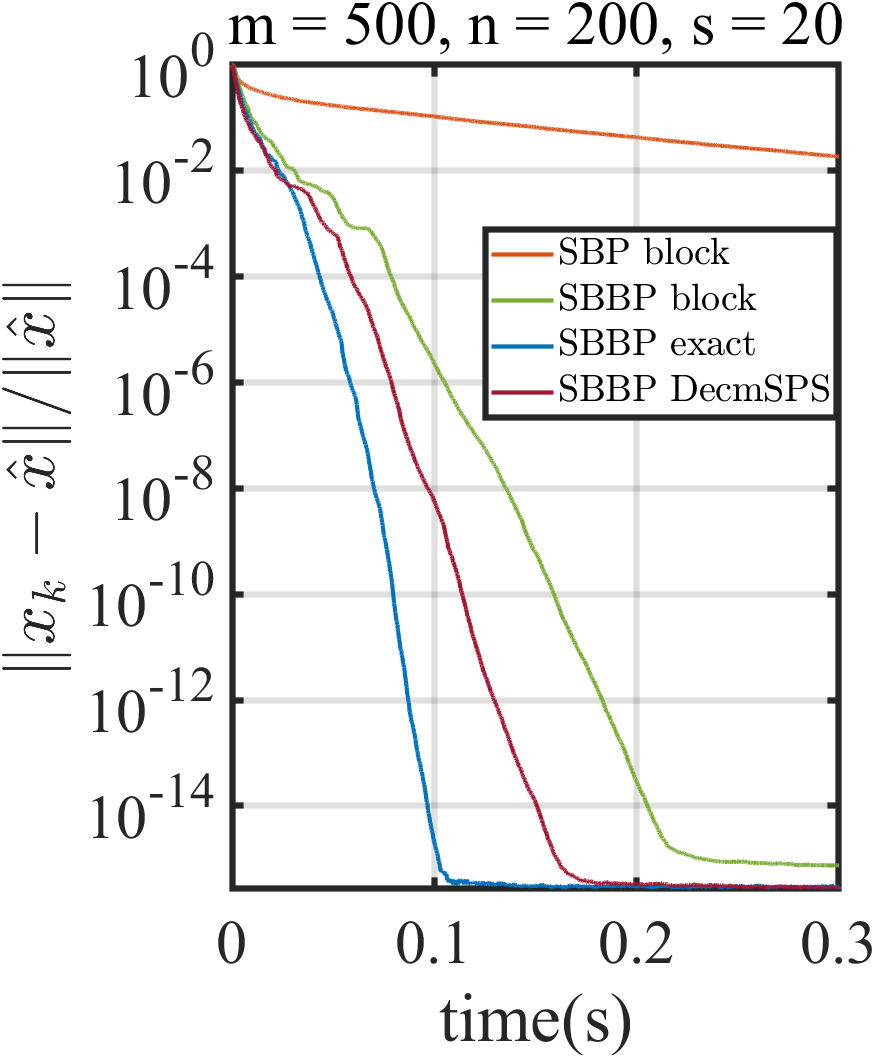

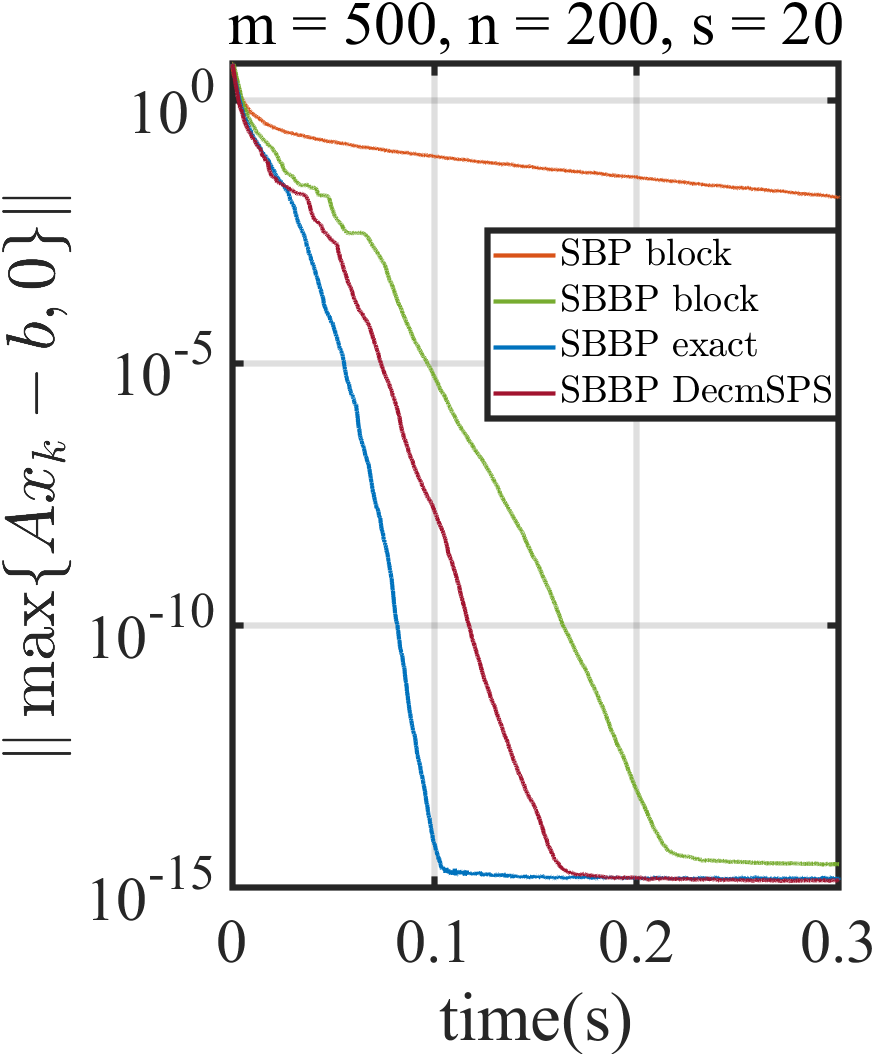

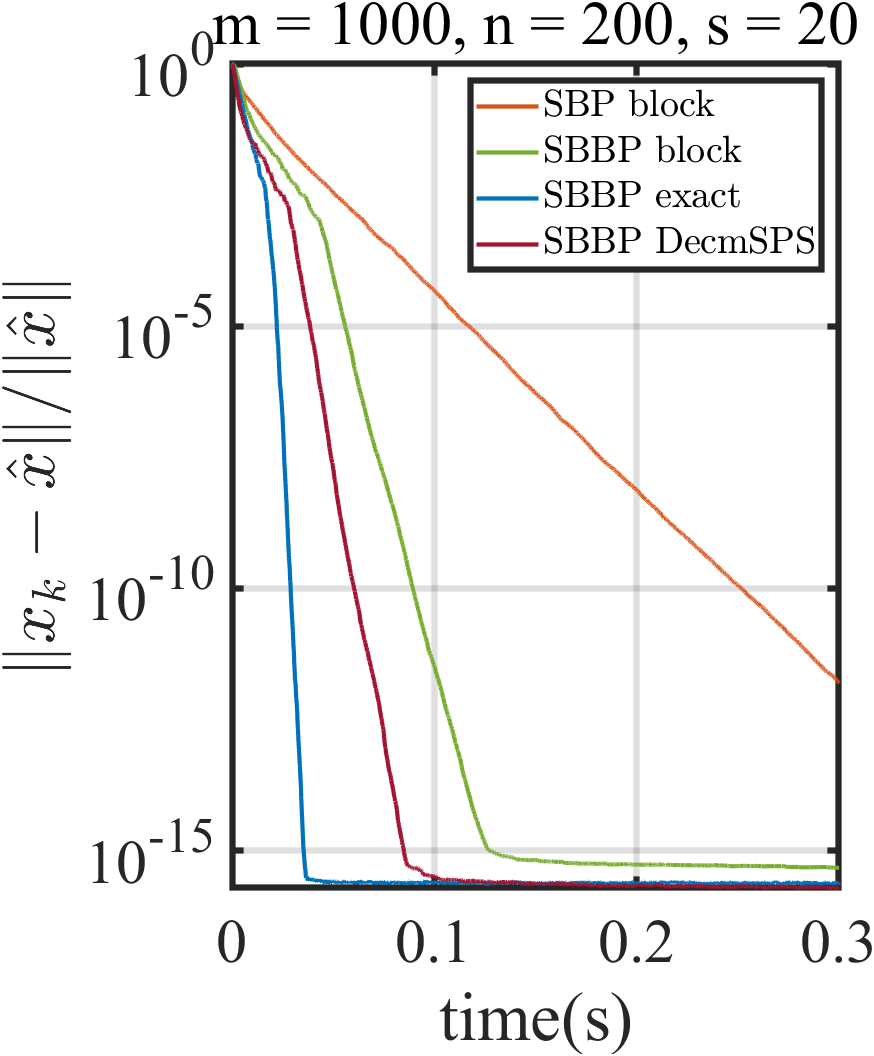

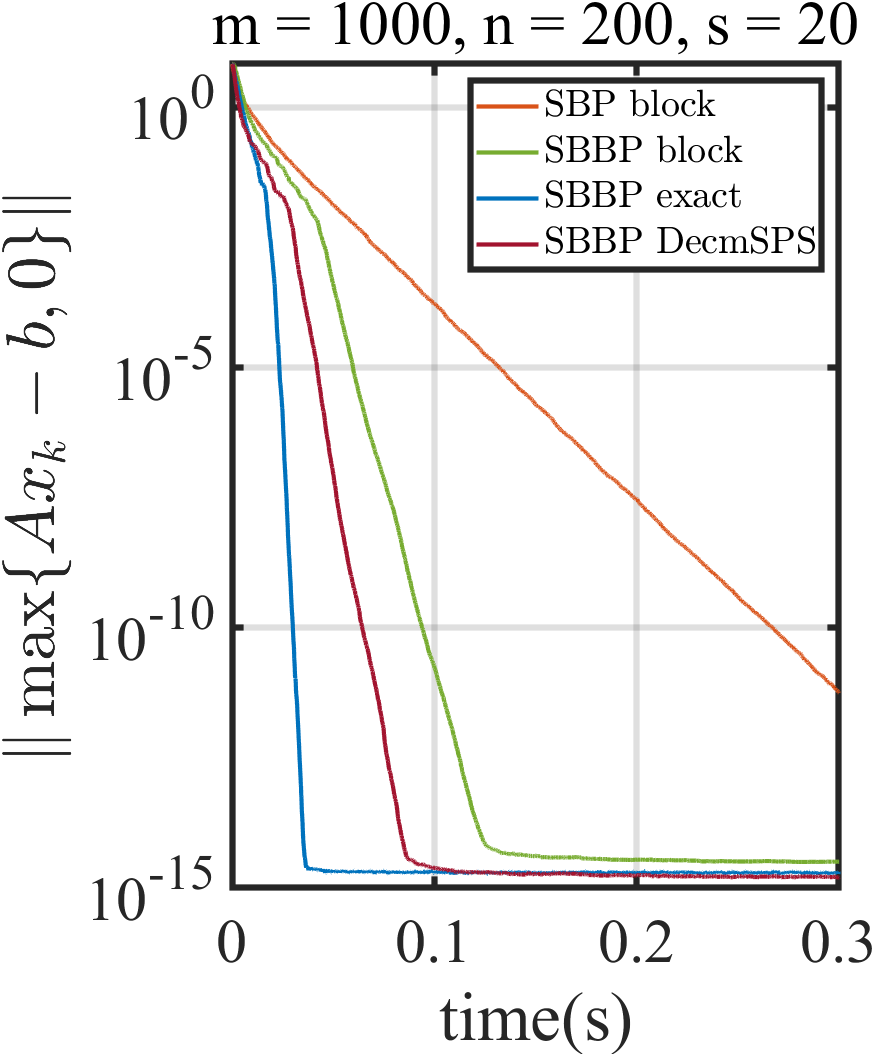

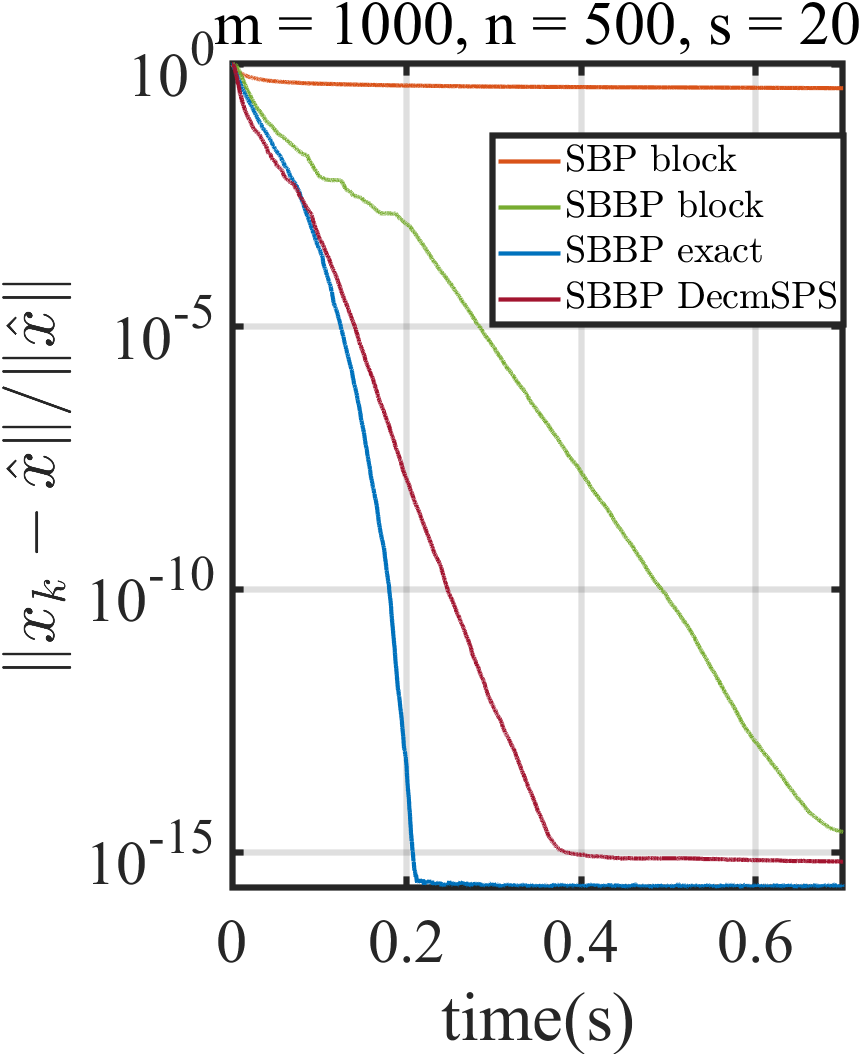

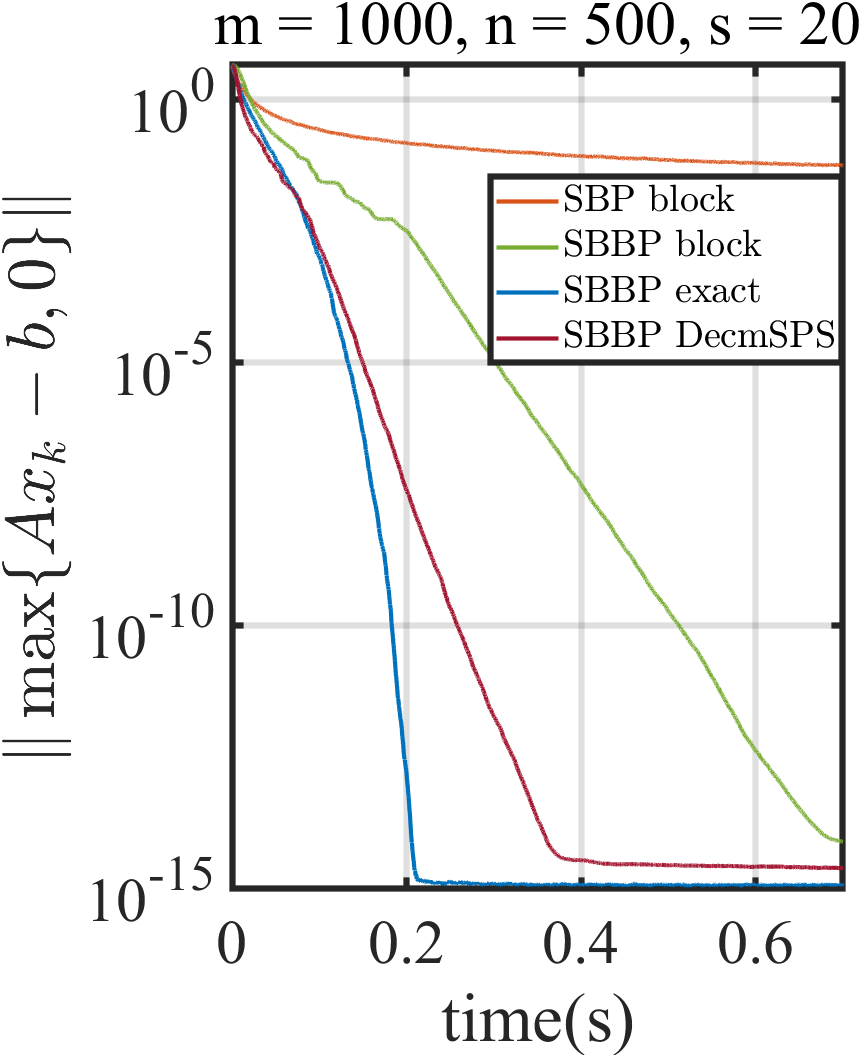

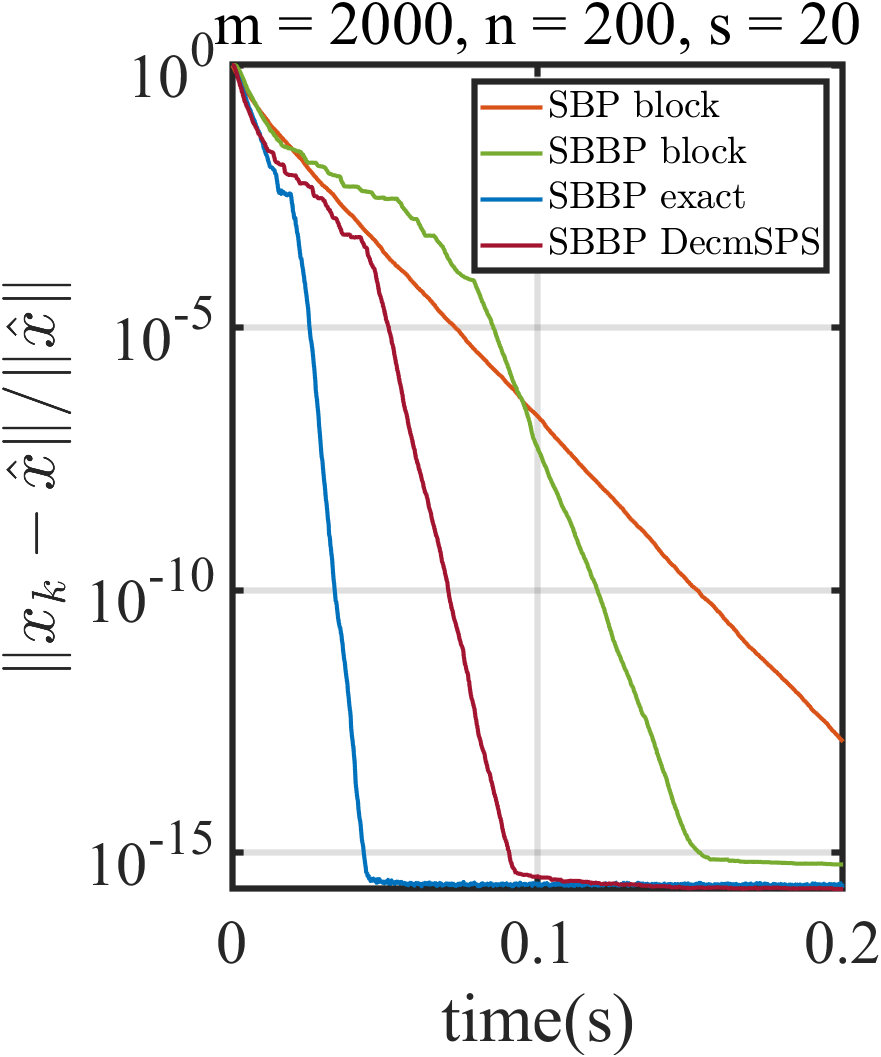

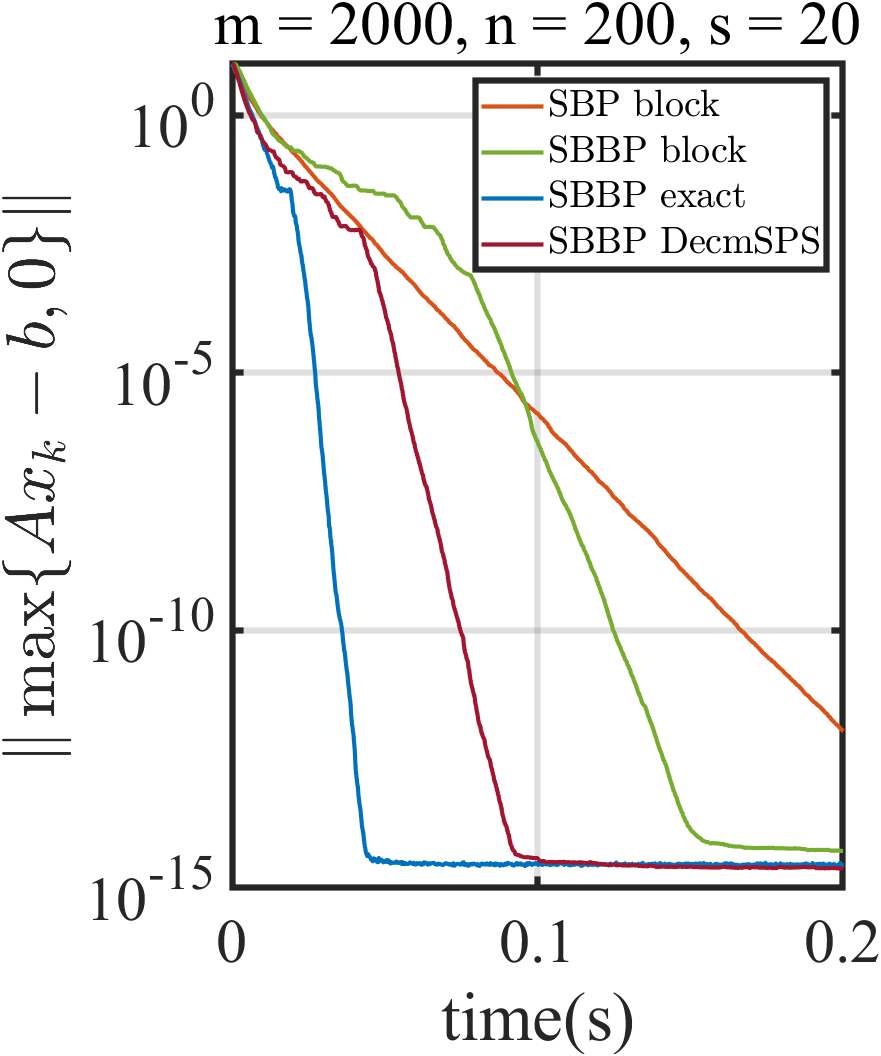

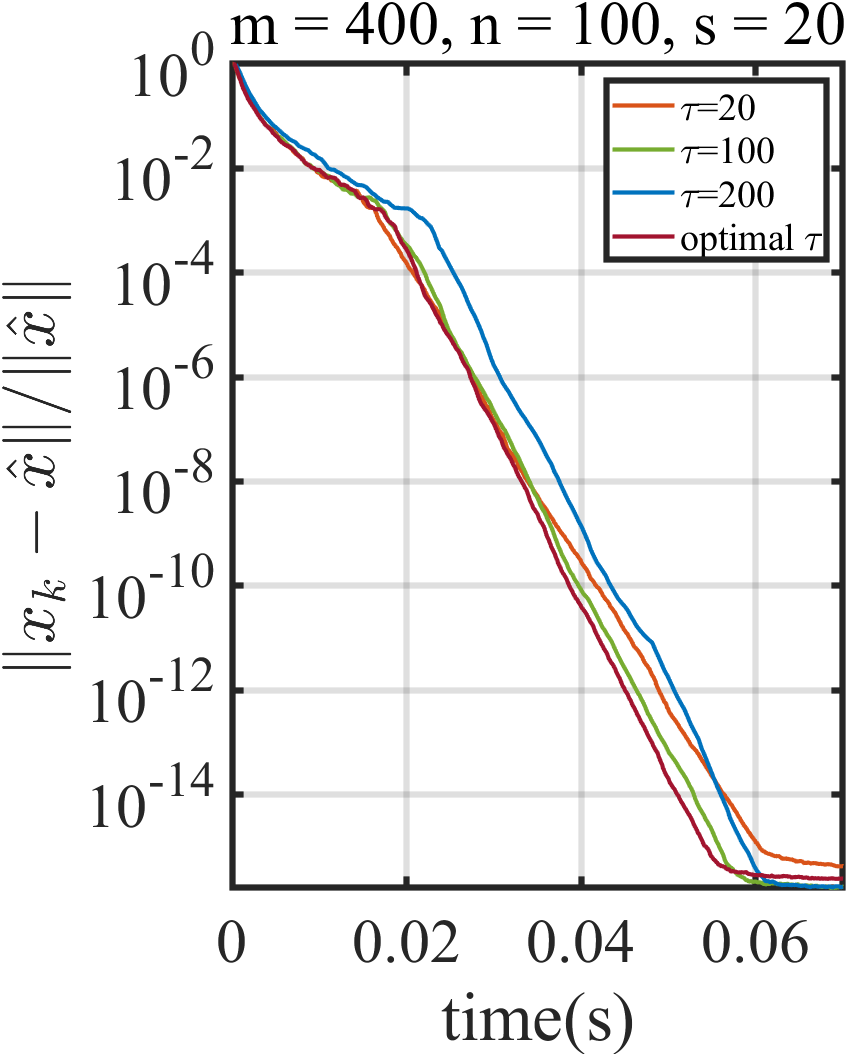

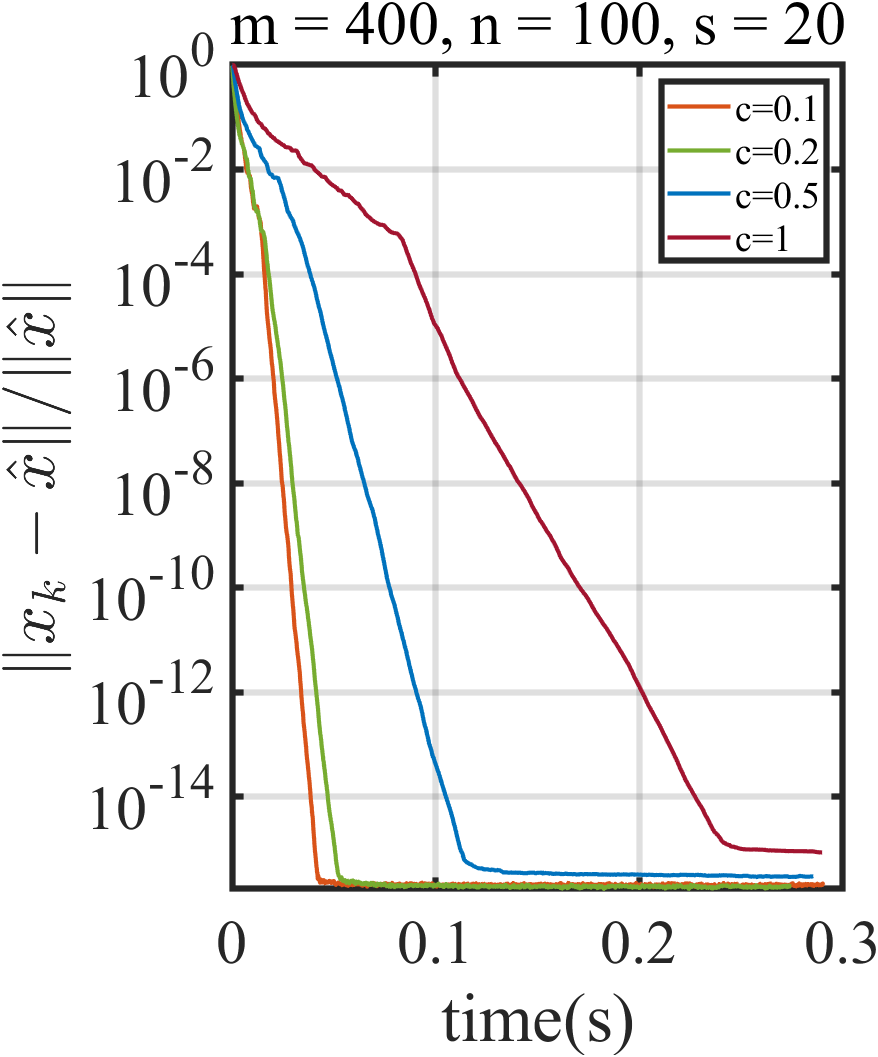

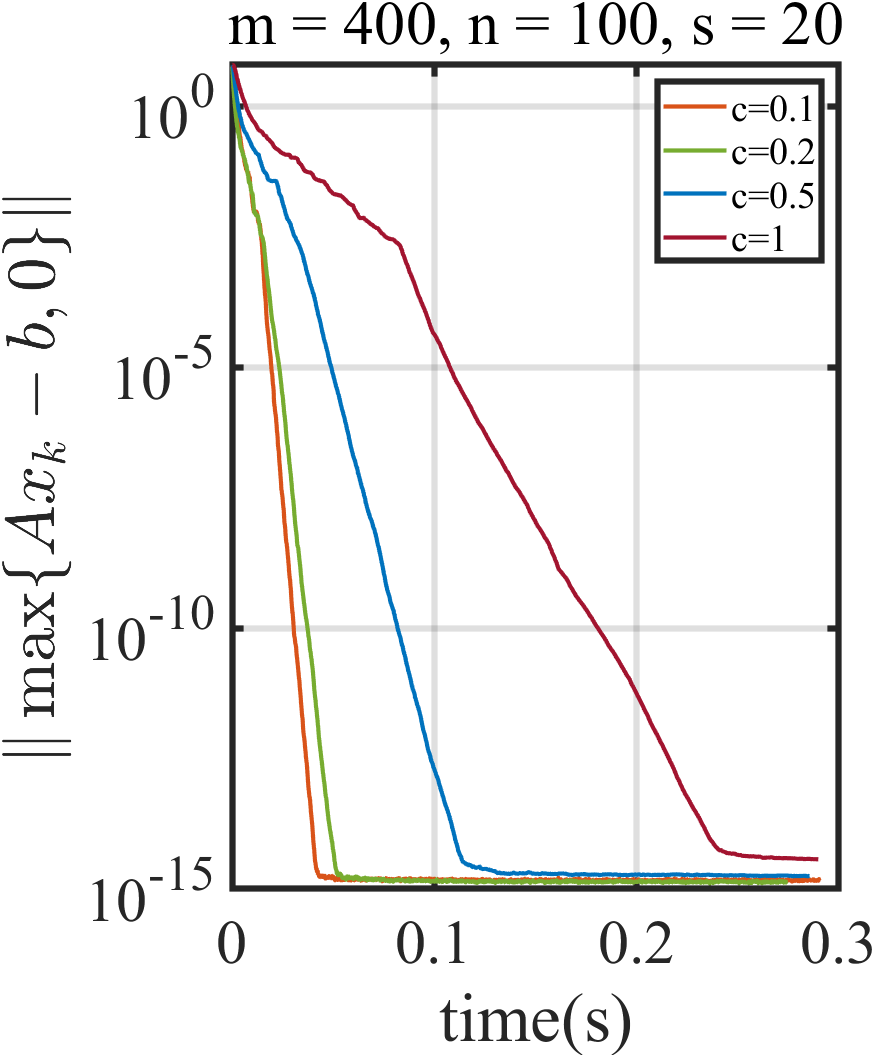

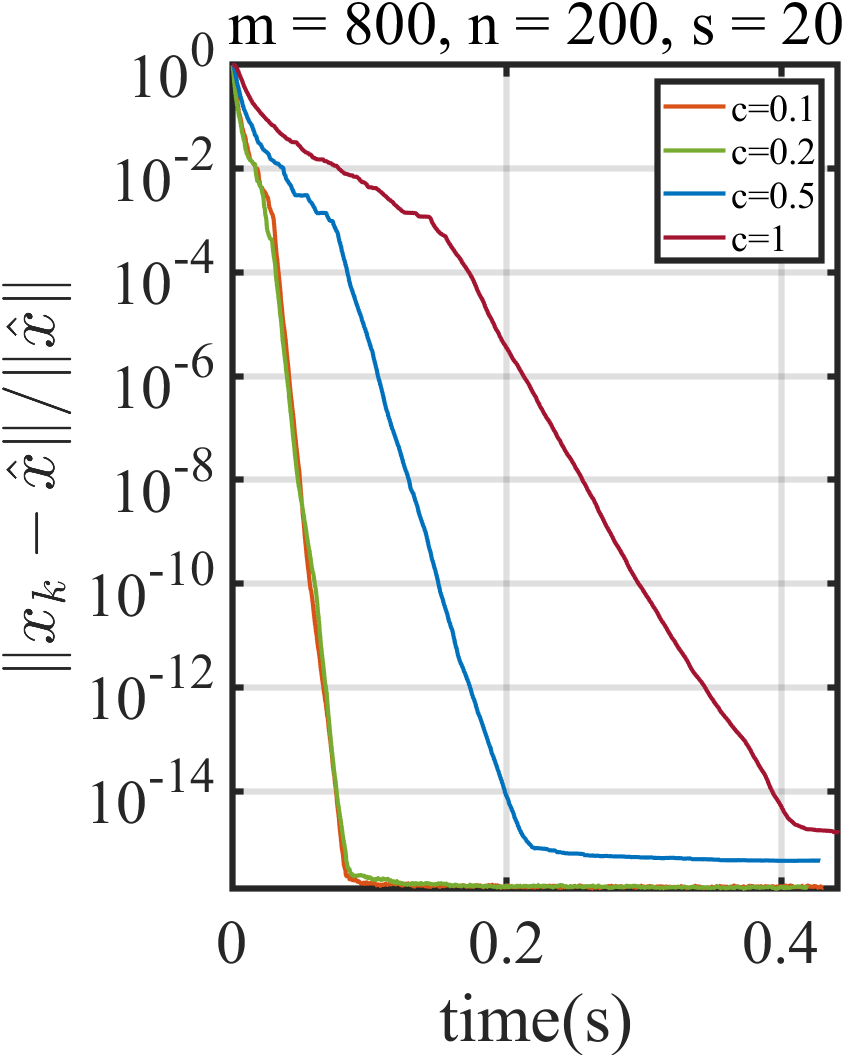

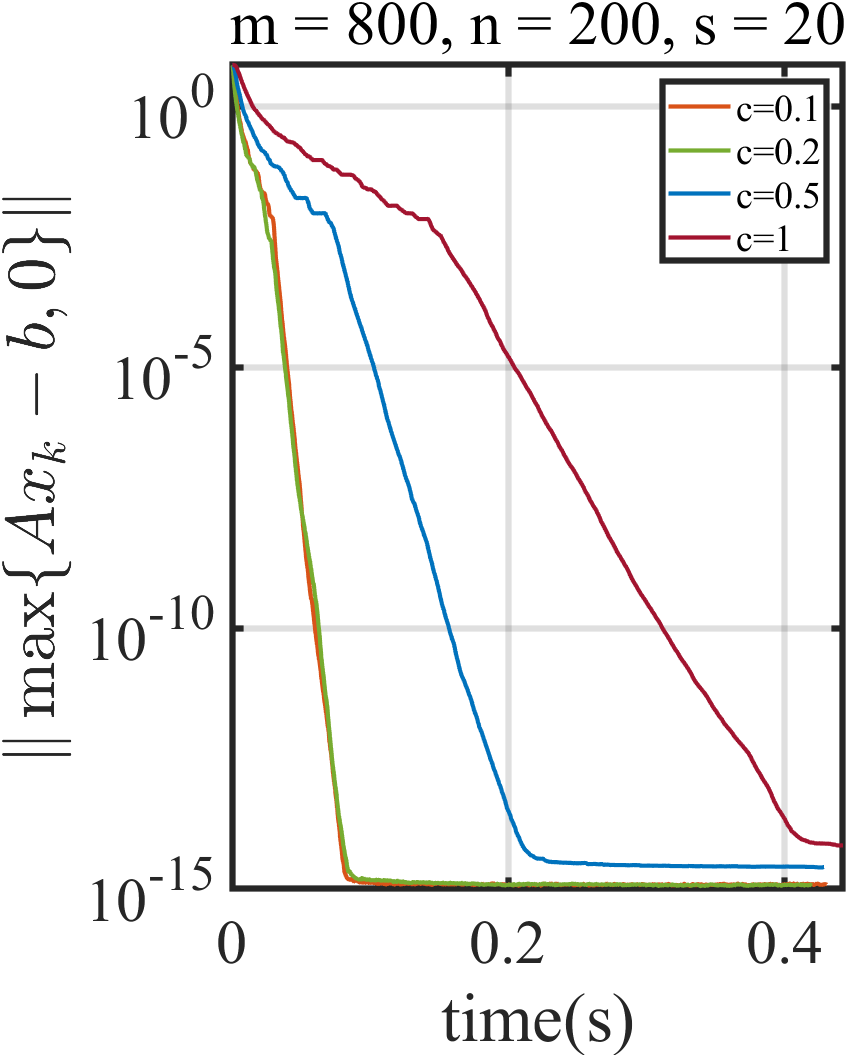

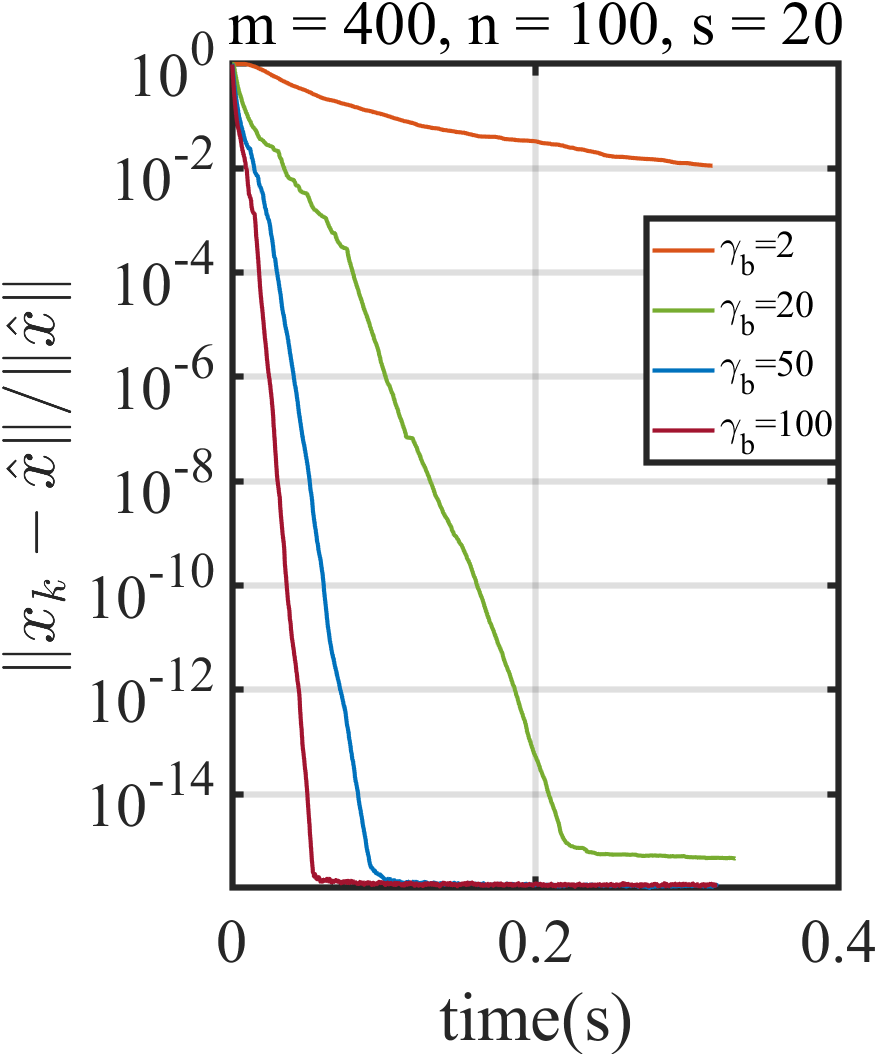

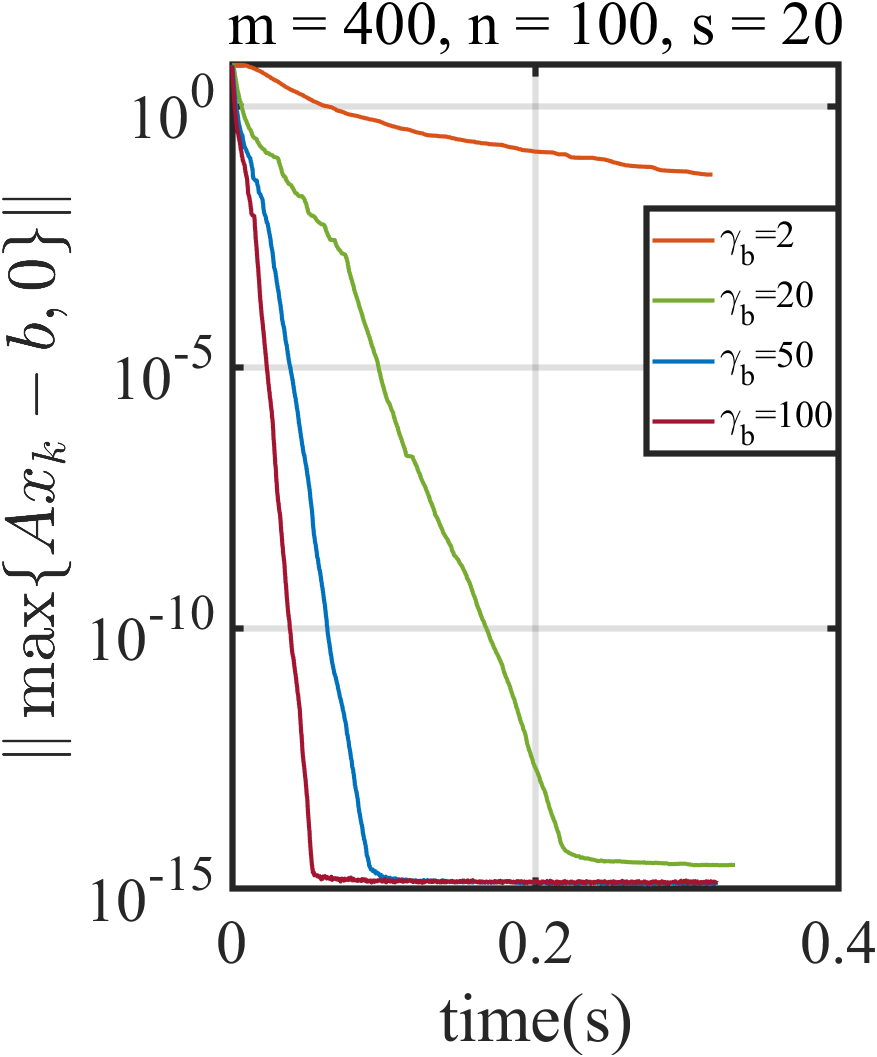

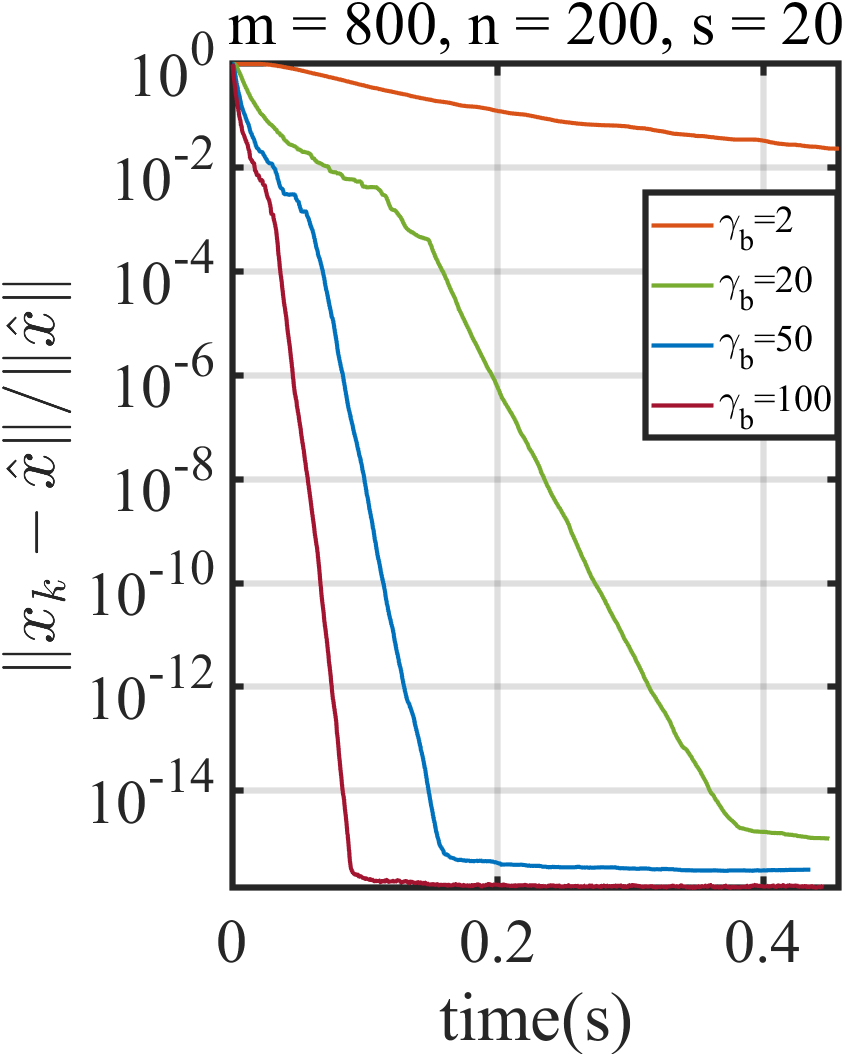

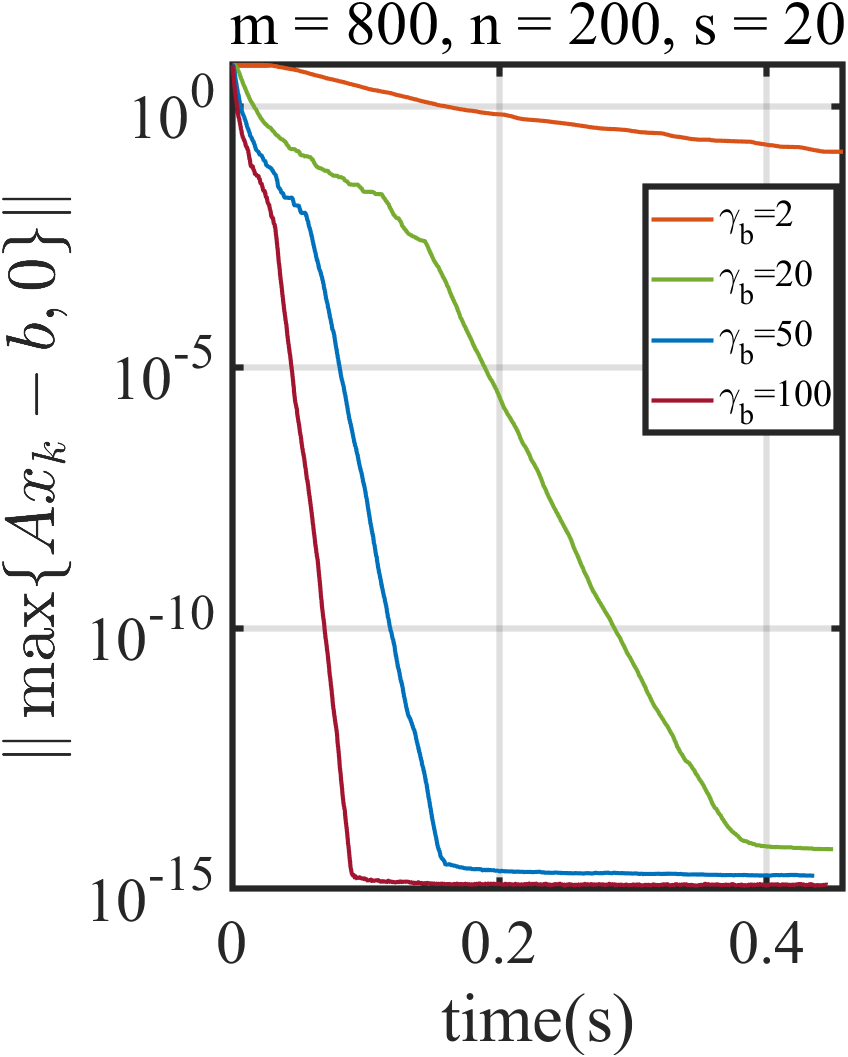

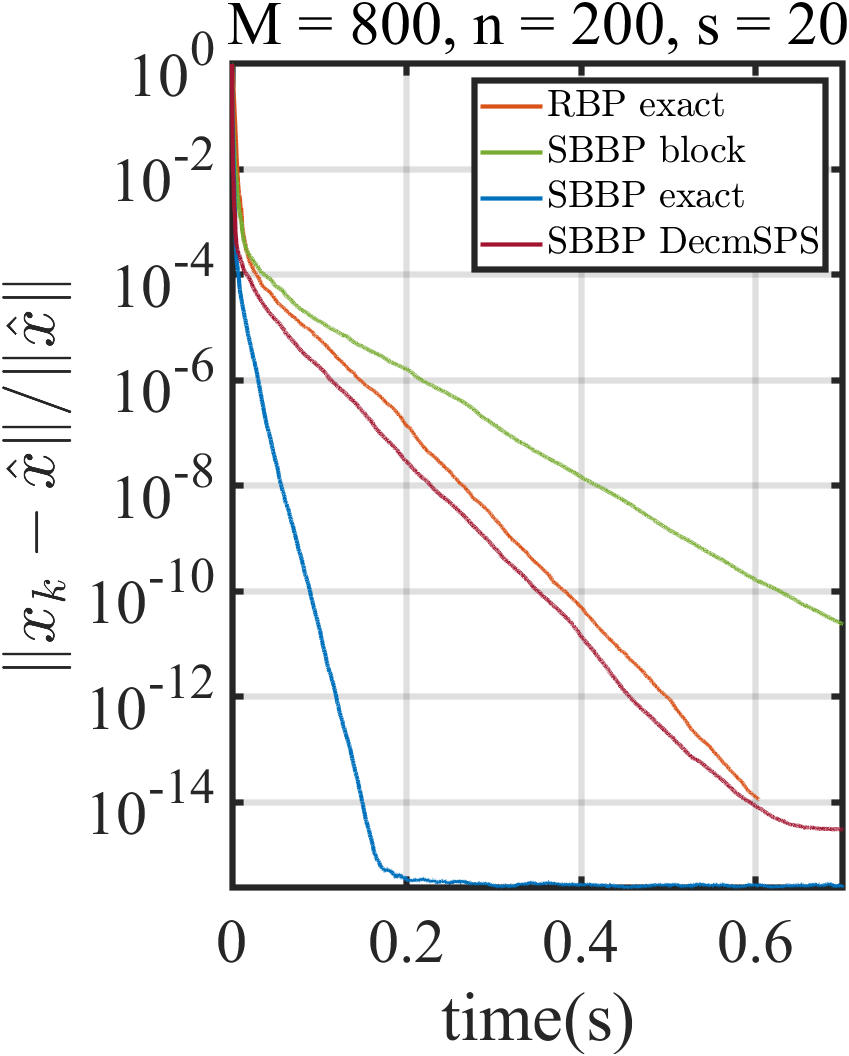

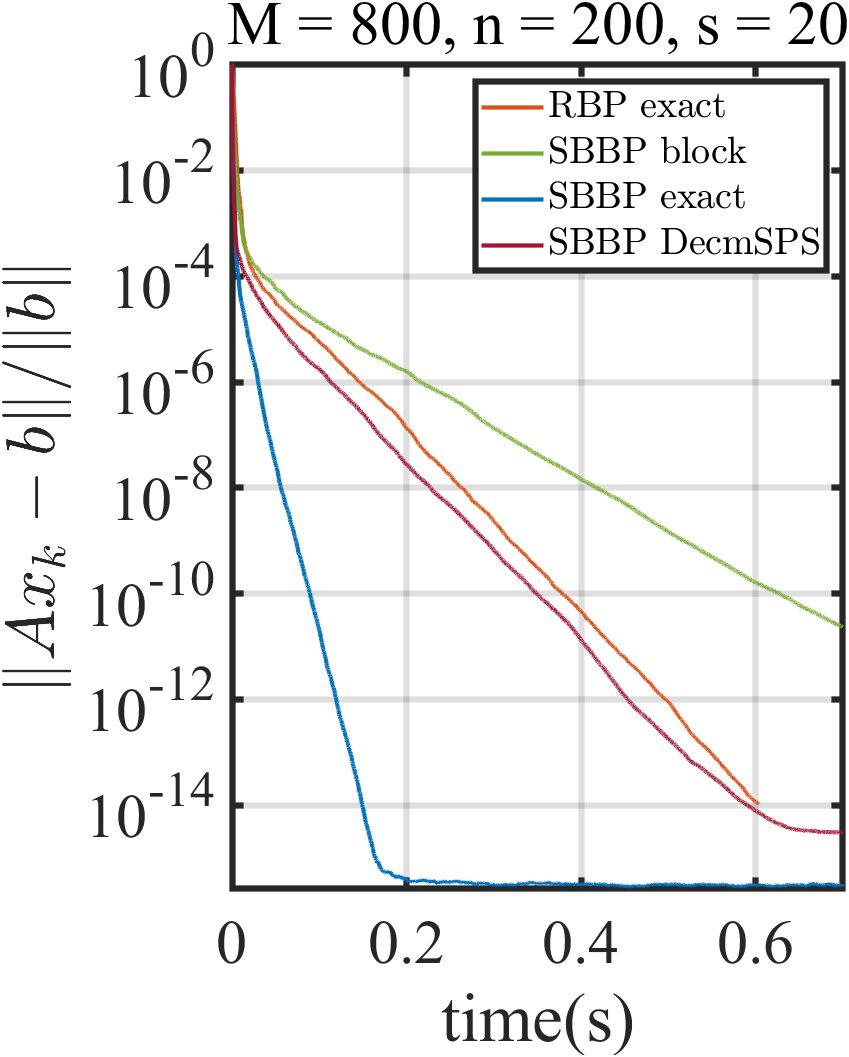

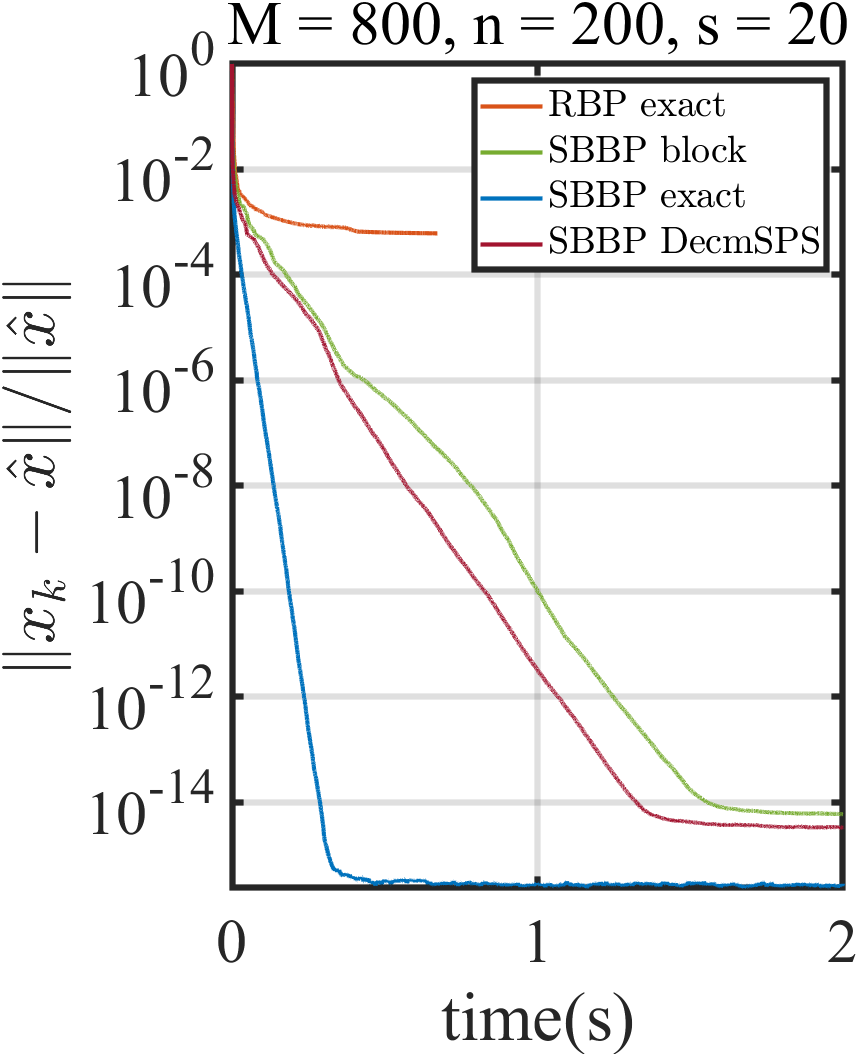

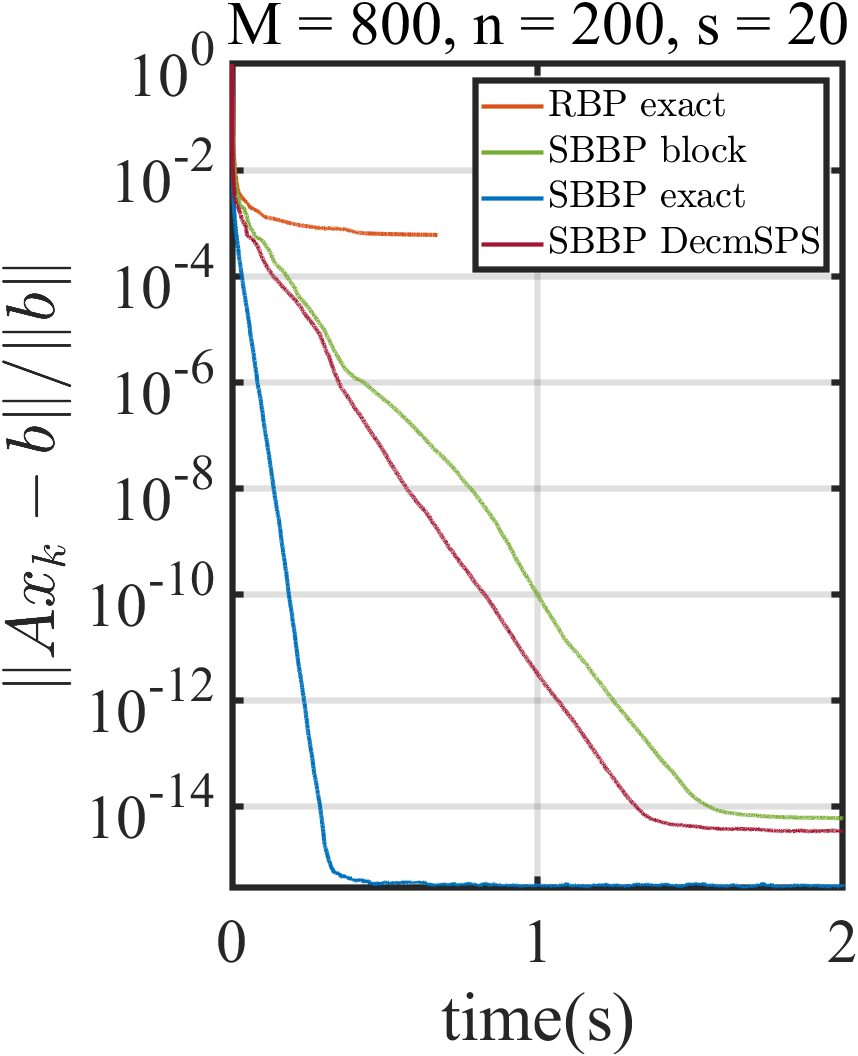

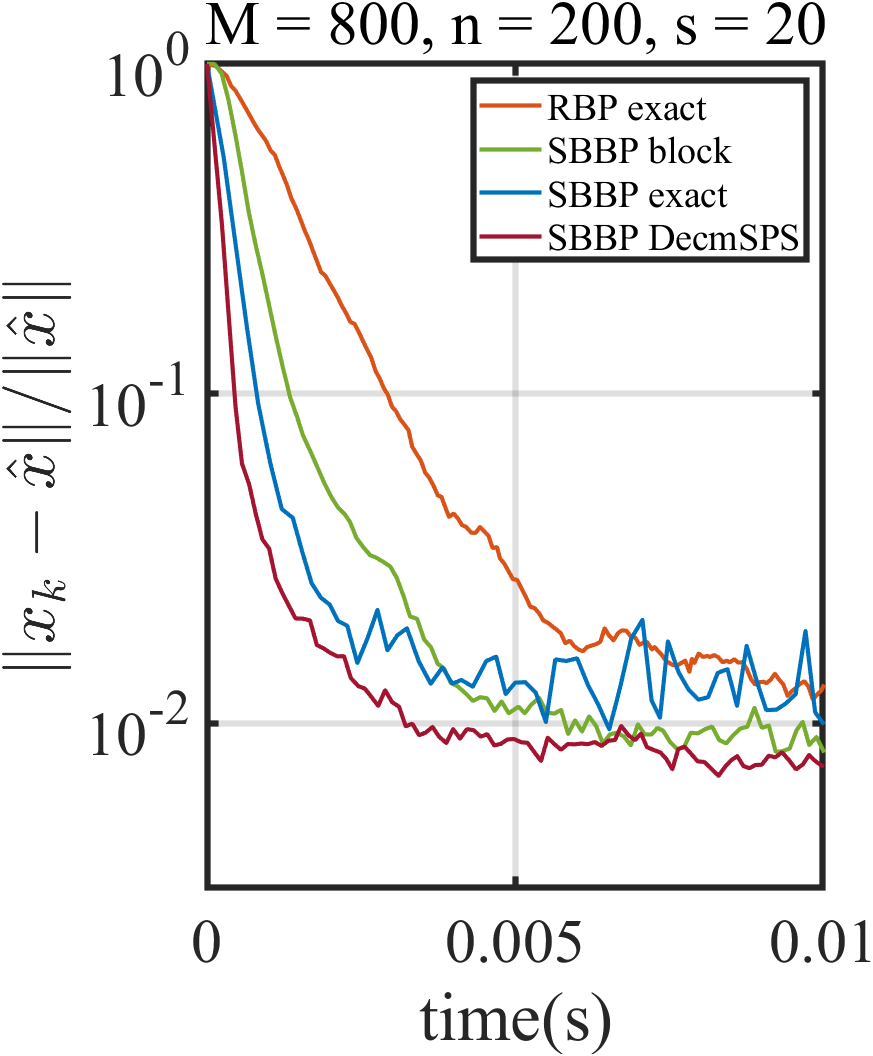

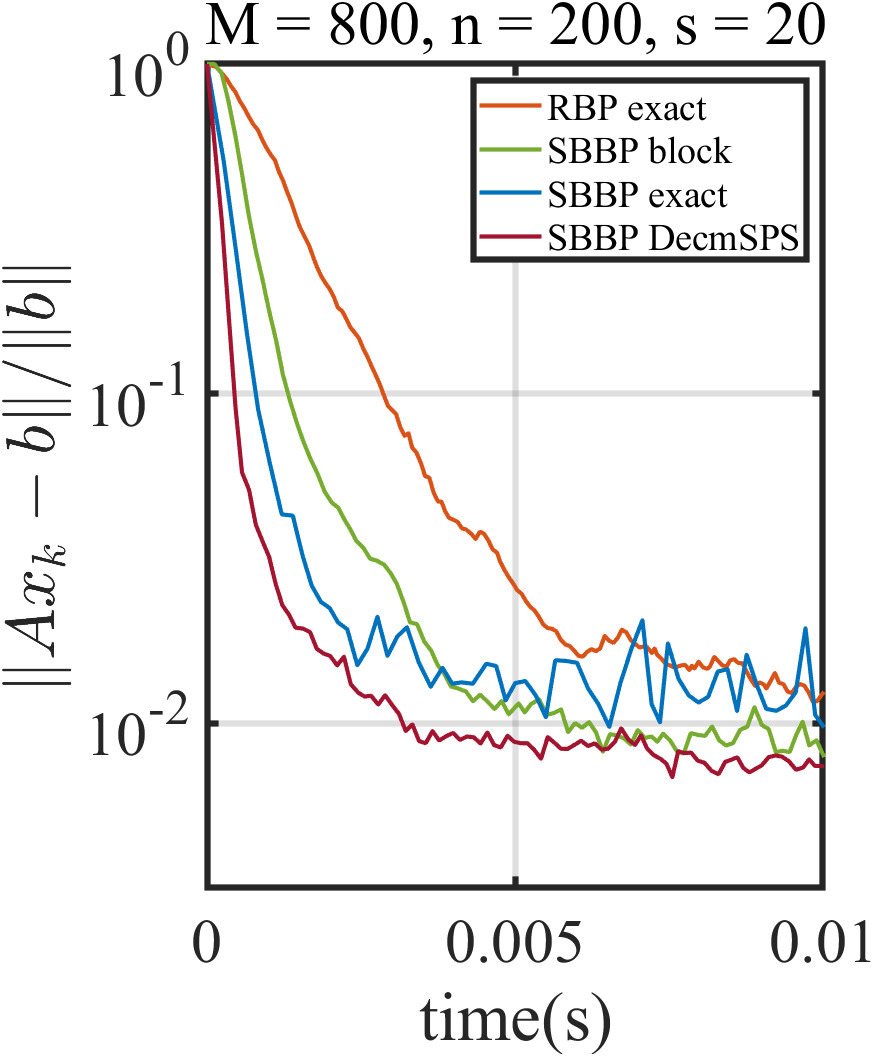

Figure 1: The convergence of SBP and SBBP with different stepsizes for several matrix sizes.

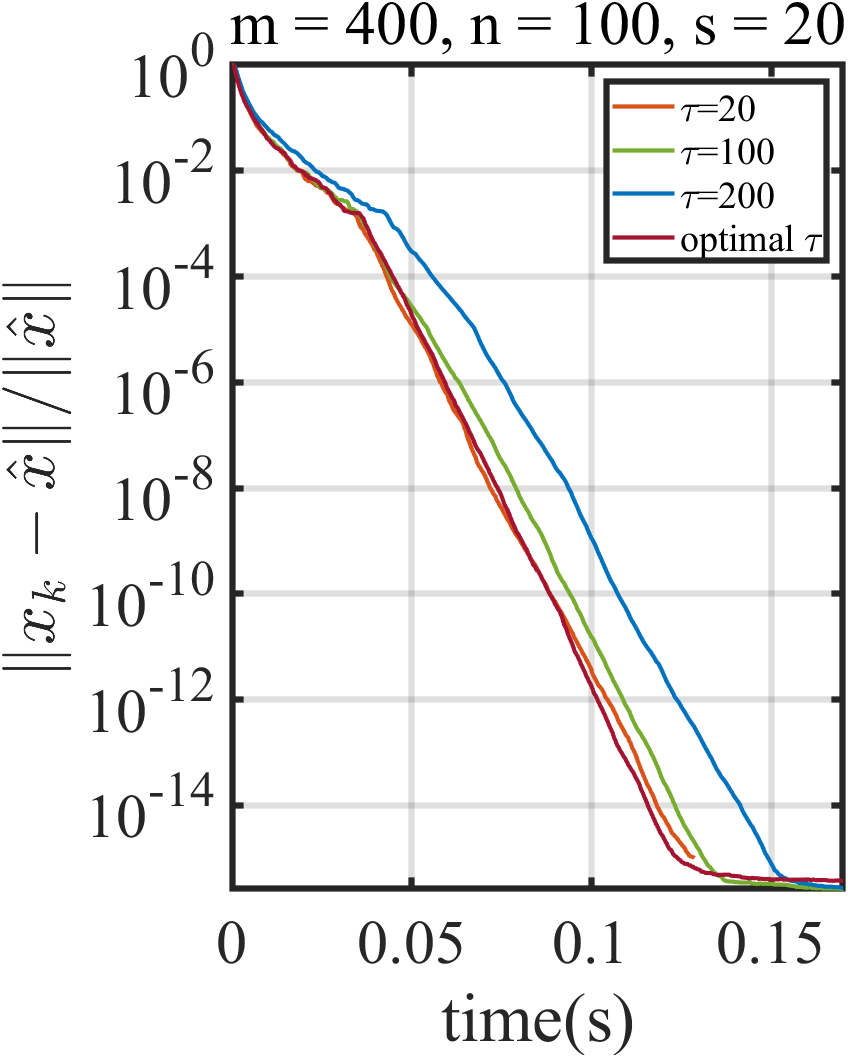

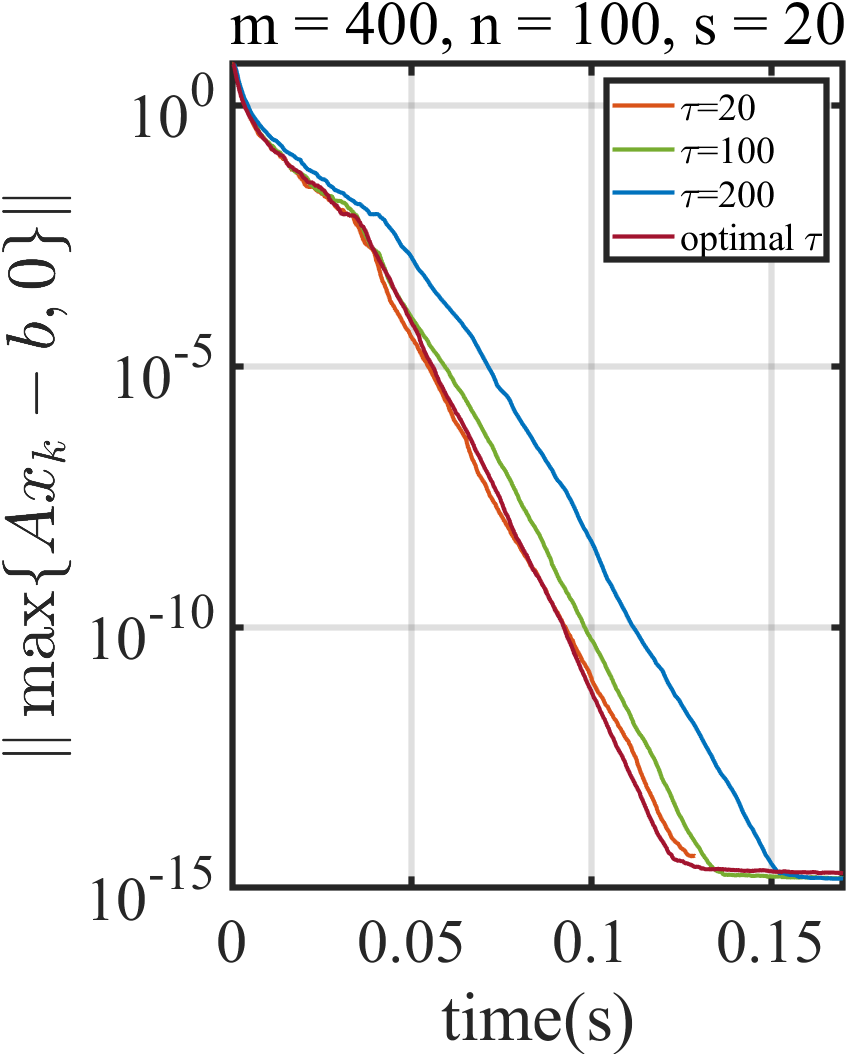

Block Size Selection

Optimal block sizes are characterized explicitly: theory and experiments confirm that there exists a block size, computable from spectral properties of the constraint system, that maximizes the convergence rate. This block-size analysis interpolates between classical single-sample (block size 1) and full-batch (block size $m$) methods.
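The trade-off behind block size selection can be illustrated by exact enumeration. The paper's spectral formula for the optimal block size is not reproduced here; this sketch only shows, for hypothetical scalar gradients, that the uniformly weighted block estimator is unbiased for every block size $\tau$ while its variance shrinks from the single-sample value at $\tau=1$ to zero at $\tau=m$. Each step, however, costs $\tau$ gradient evaluations, which is why an intermediate block size can be optimal.

```python
from itertools import combinations
from statistics import fmean

def block_estimates(grads, tau):
    """All mini-batch estimates sum_{i in J} grads[i] / tau over blocks |J| = tau."""
    m = len(grads)
    return [sum(grads[i] for i in J) / tau for J in combinations(range(m), tau)]

def bias_and_variance(grads, tau):
    """Exact bias and variance when every block of size tau is equally likely."""
    est = block_estimates(grads, tau)
    mean_est = fmean(est)
    bias = mean_est - fmean(grads)
    var = fmean([(e - mean_est) ** 2 for e in est])
    return bias, var

# Hypothetical per-constraint gradients (scalars) at a fixed iterate.
grads = [1.0, 2.0, 3.0, 6.0]
for tau in range(1, len(grads) + 1):
    print(tau, bias_and_variance(grads, tau))
```

The variances follow the finite-population formula $\sigma^2(m-\tau)/(\tau(m-1))$, where $\sigma^2$ is the variance of the individual gradients.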

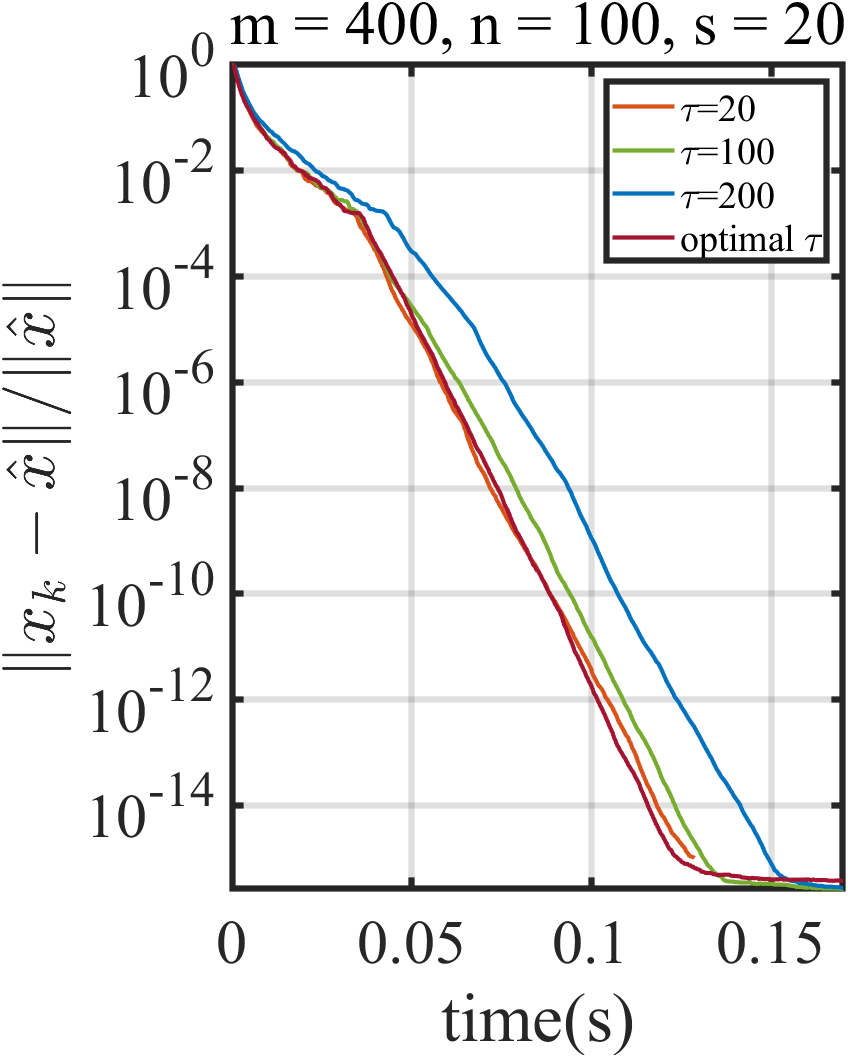

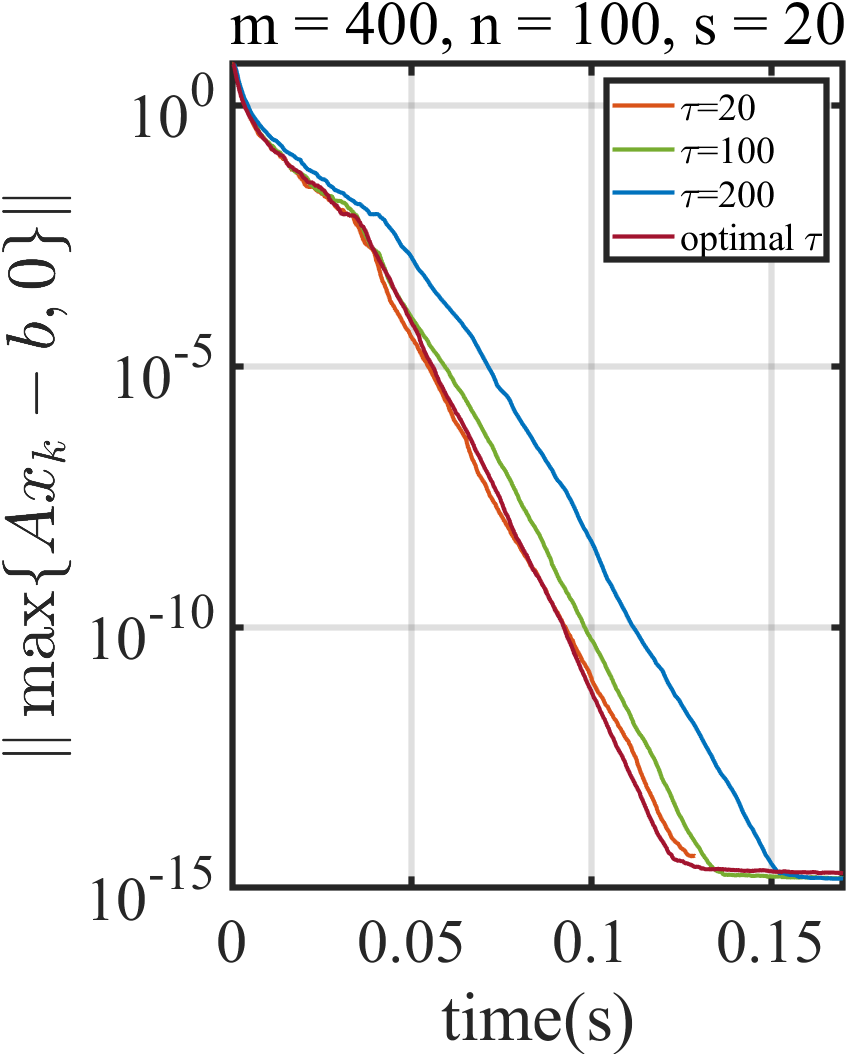

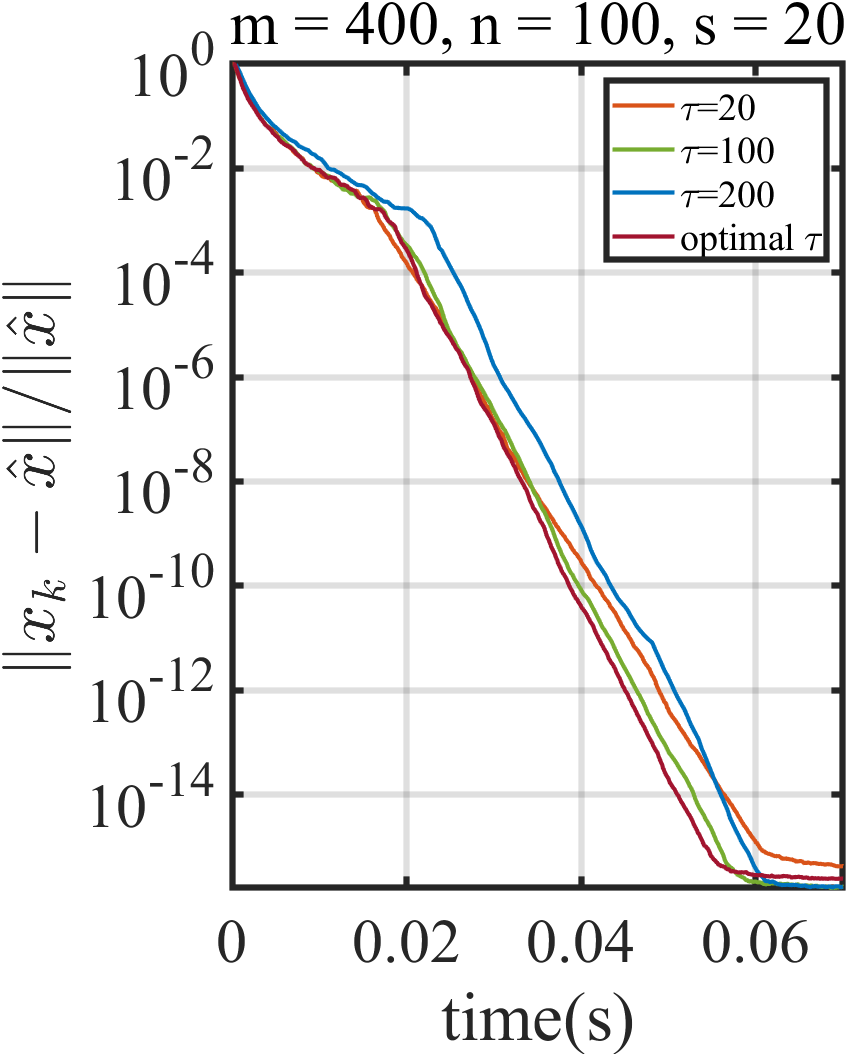

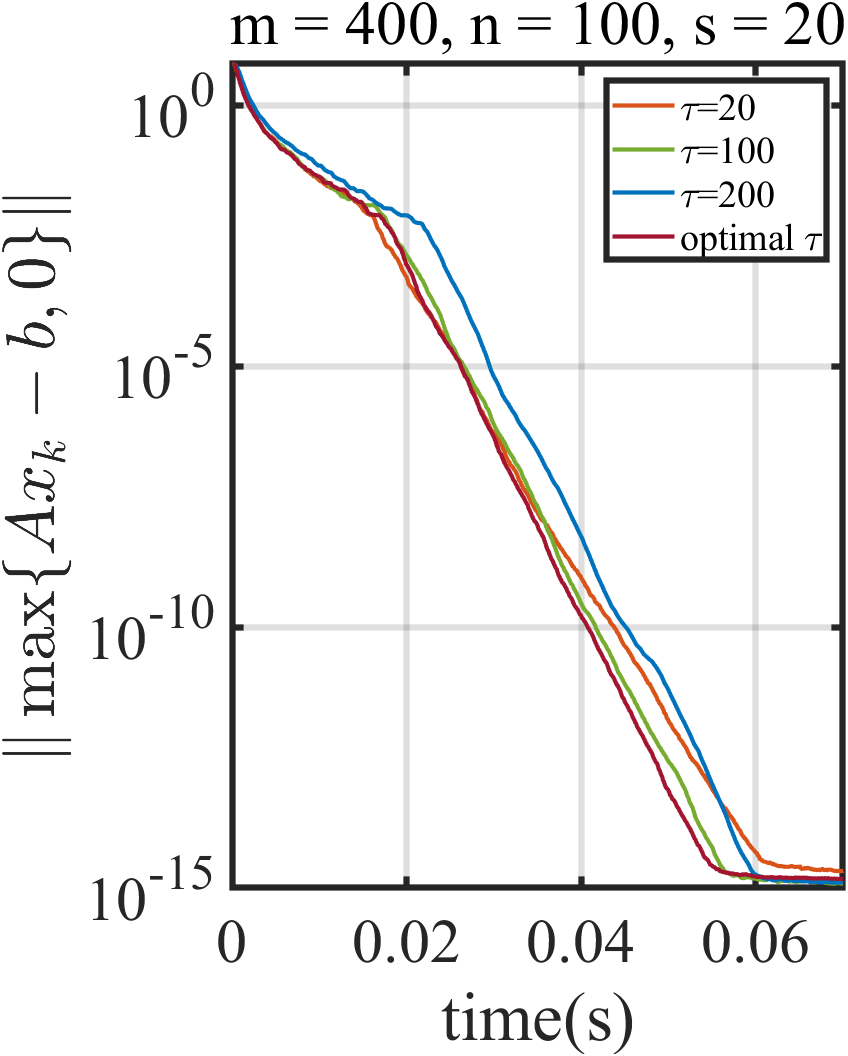

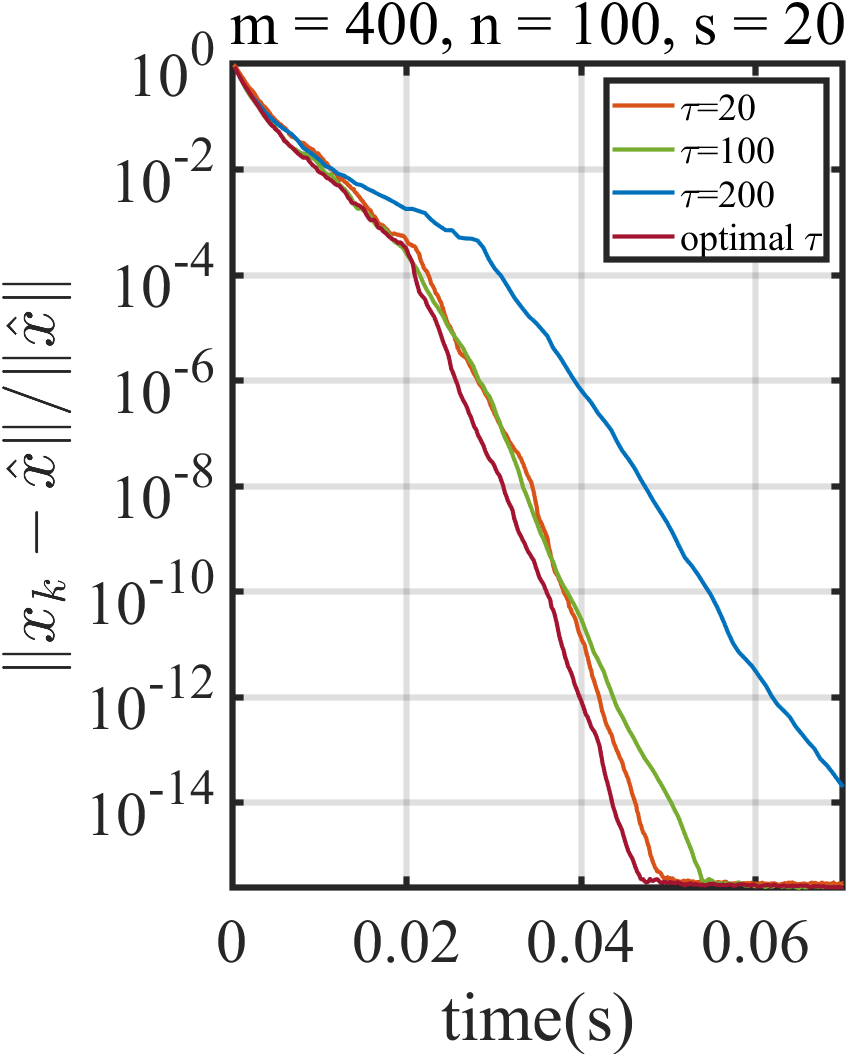

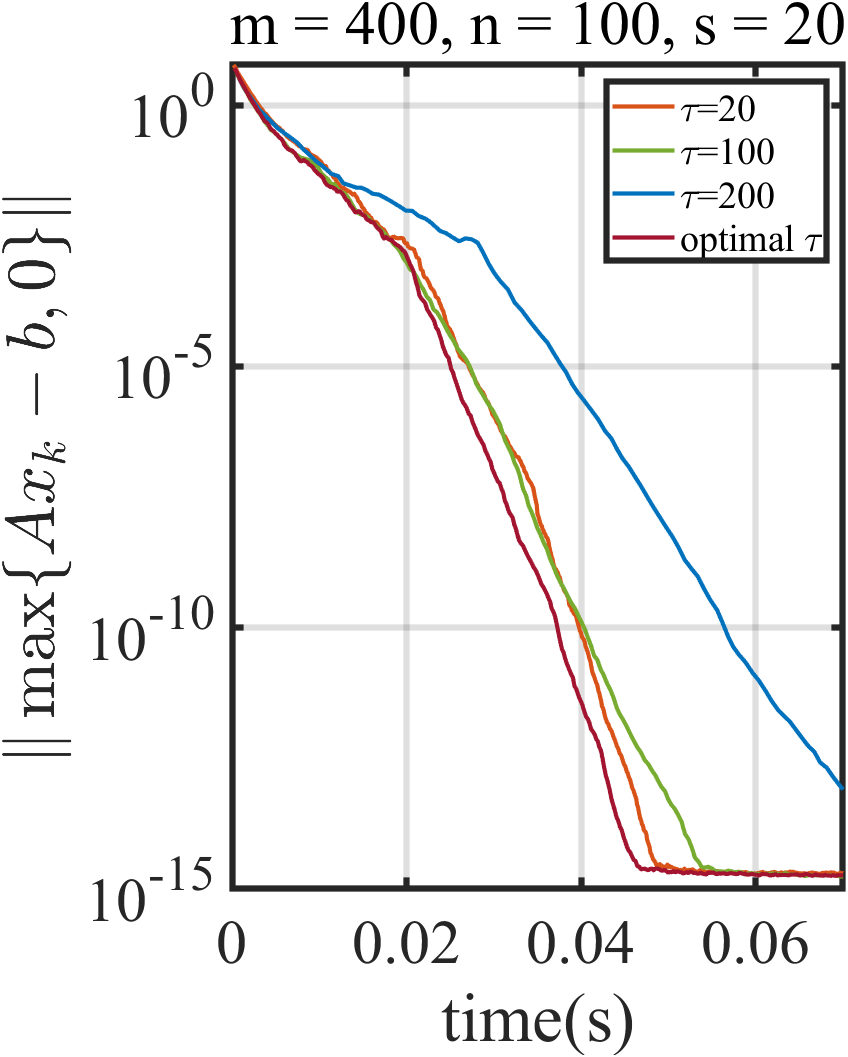

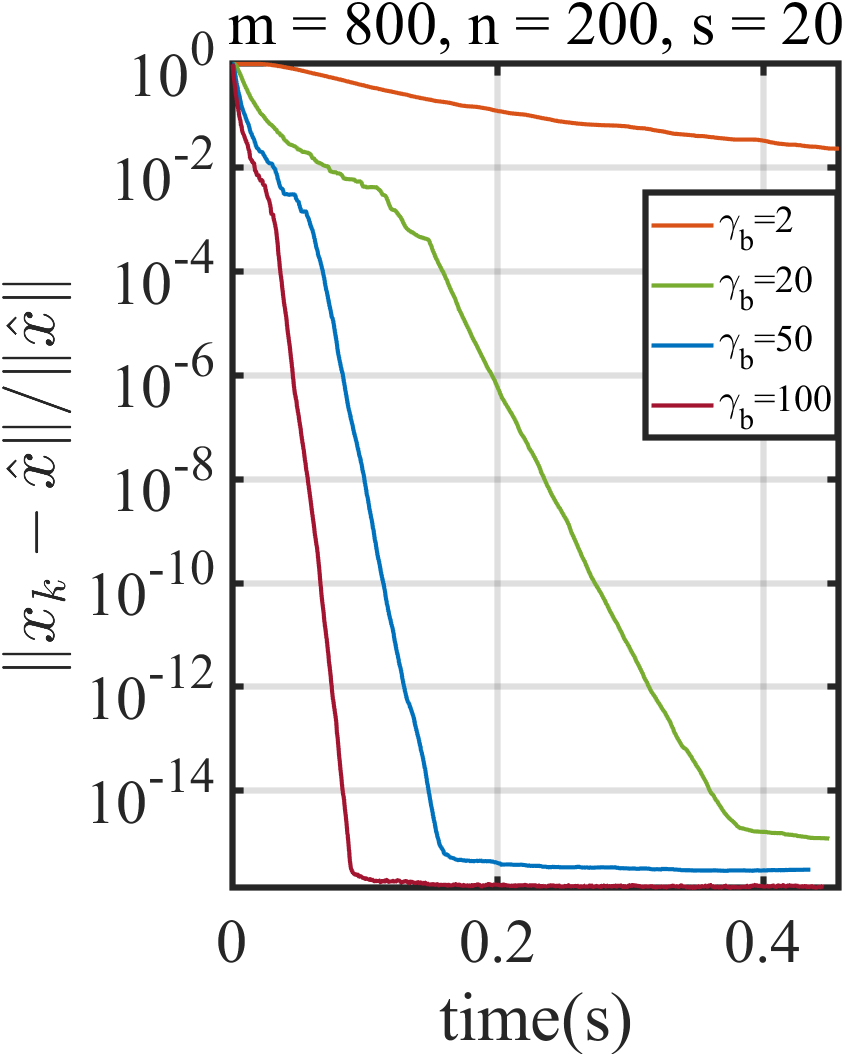

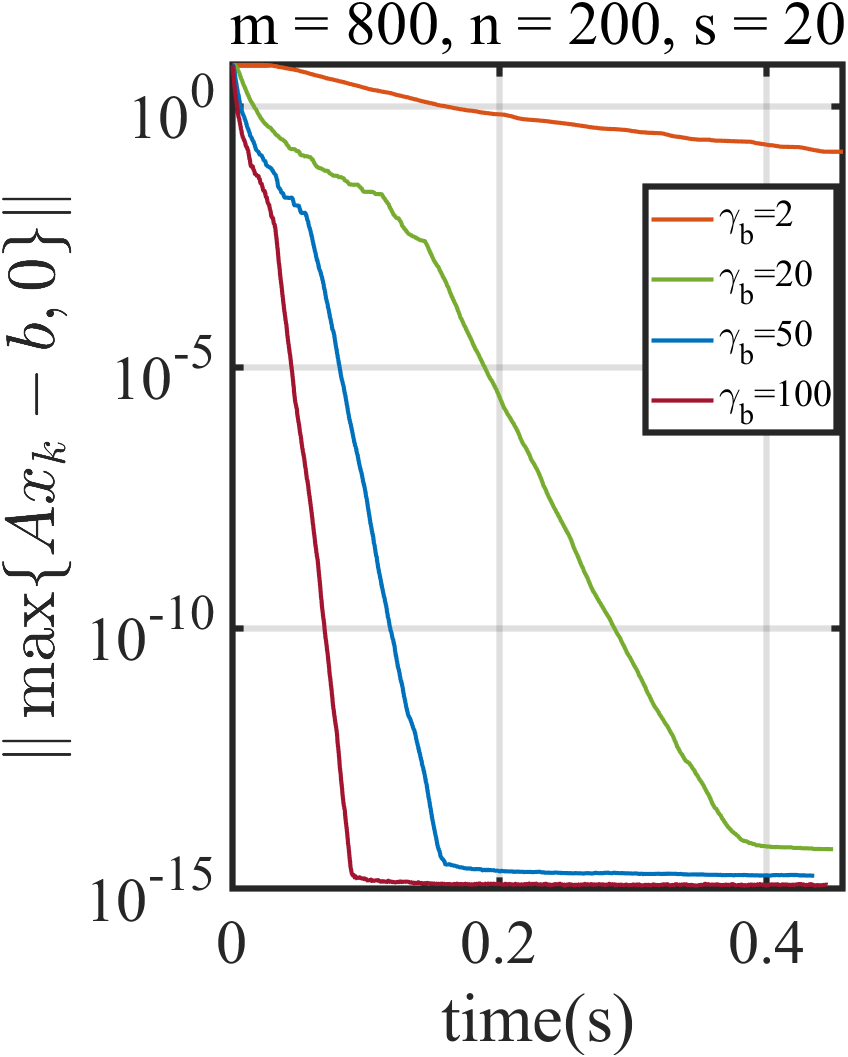

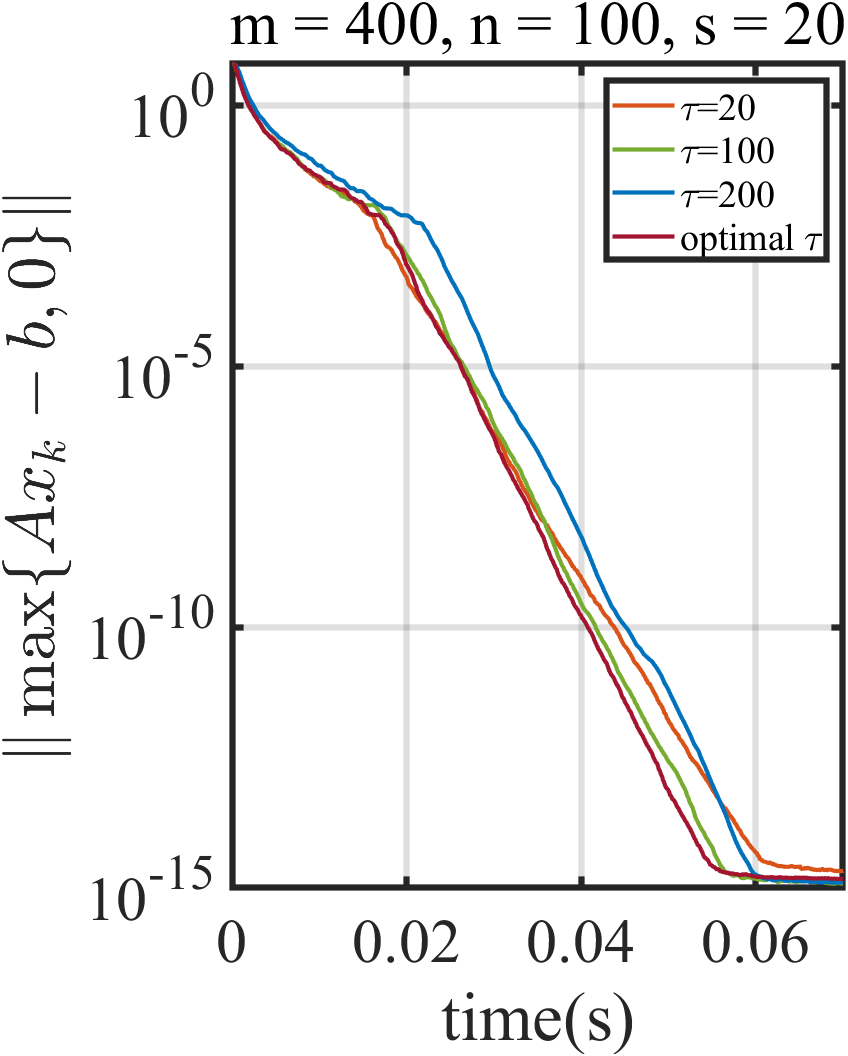

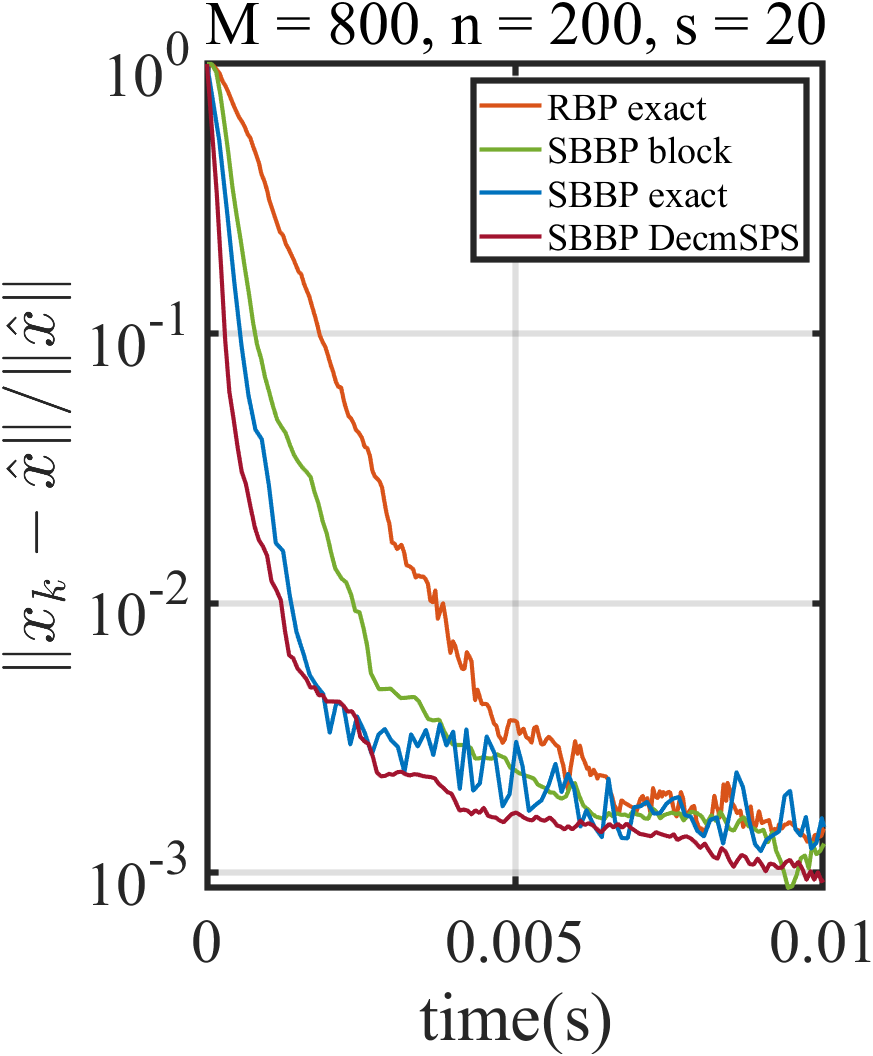

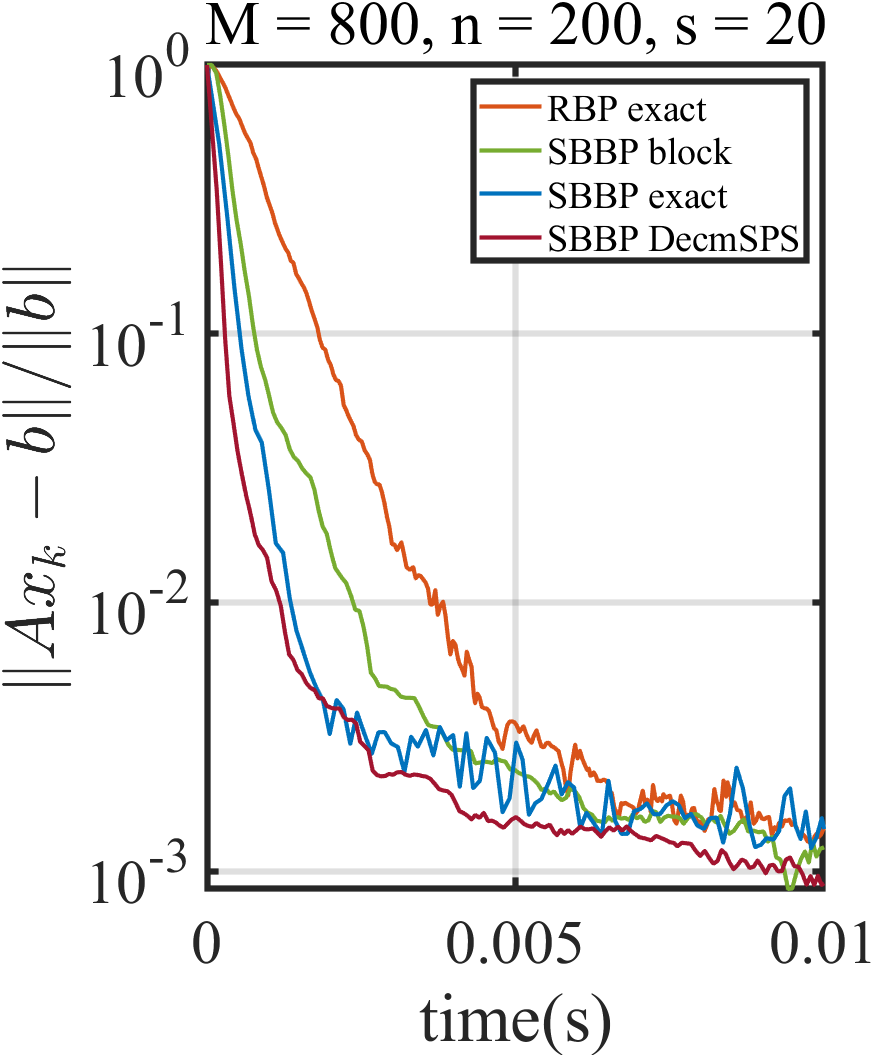

Figure 2: The performance of SBBP with different stepsizes under … and ….

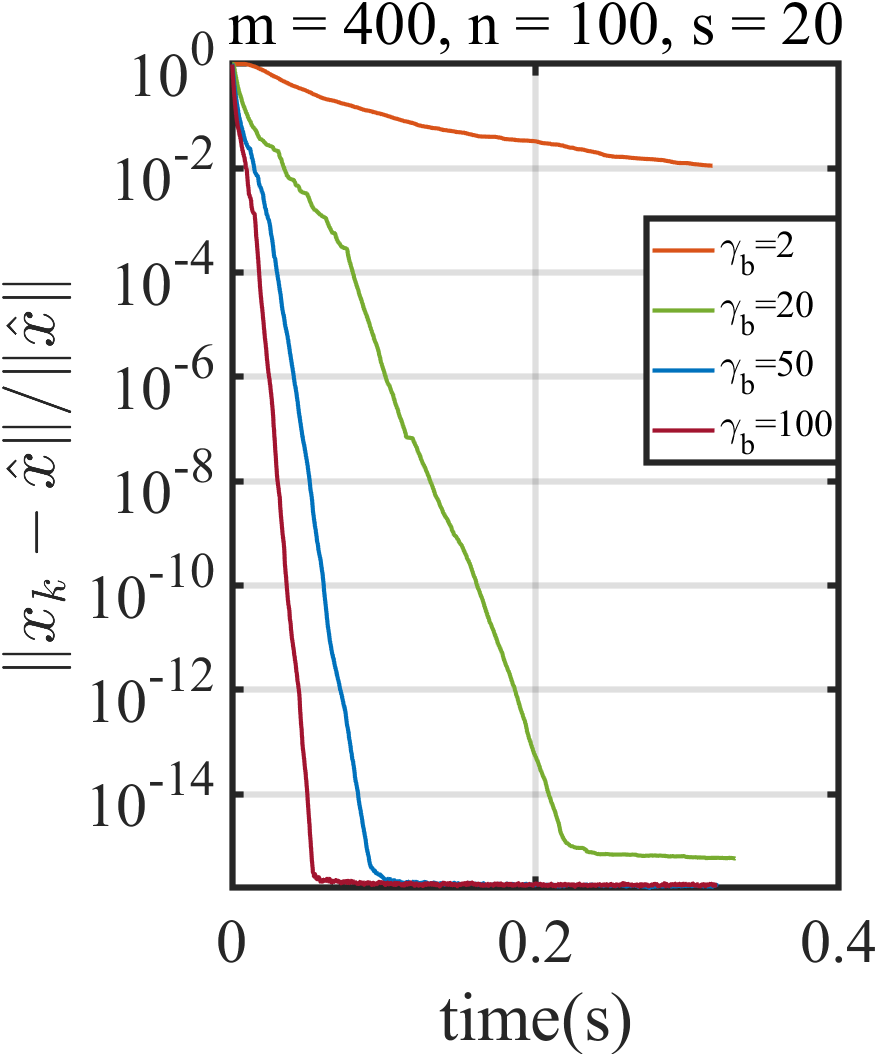

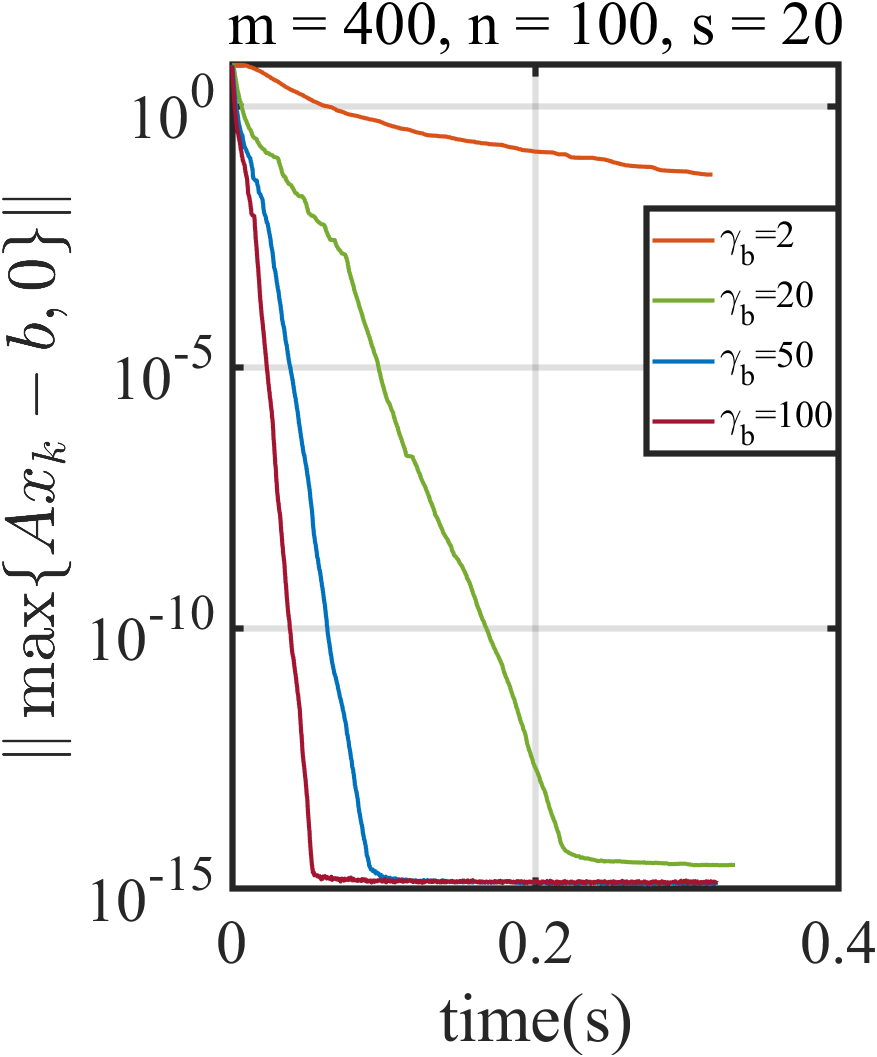

Robustness and Rate Behavior

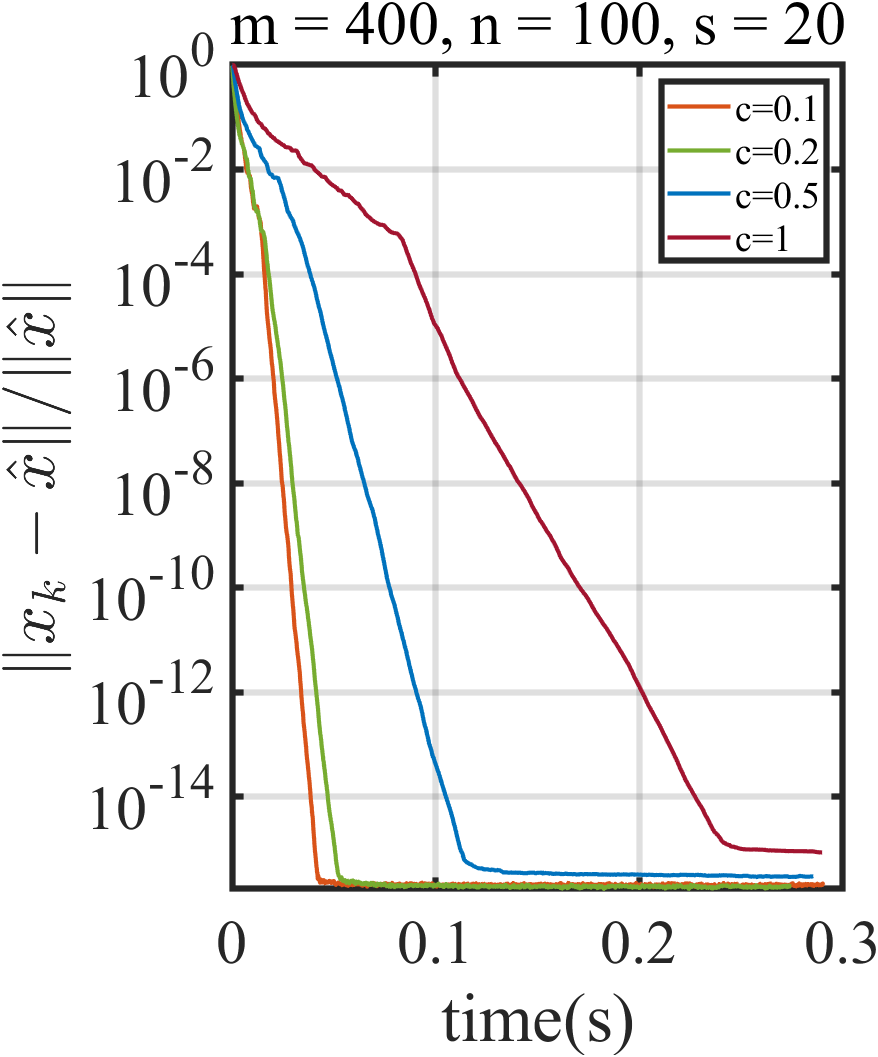

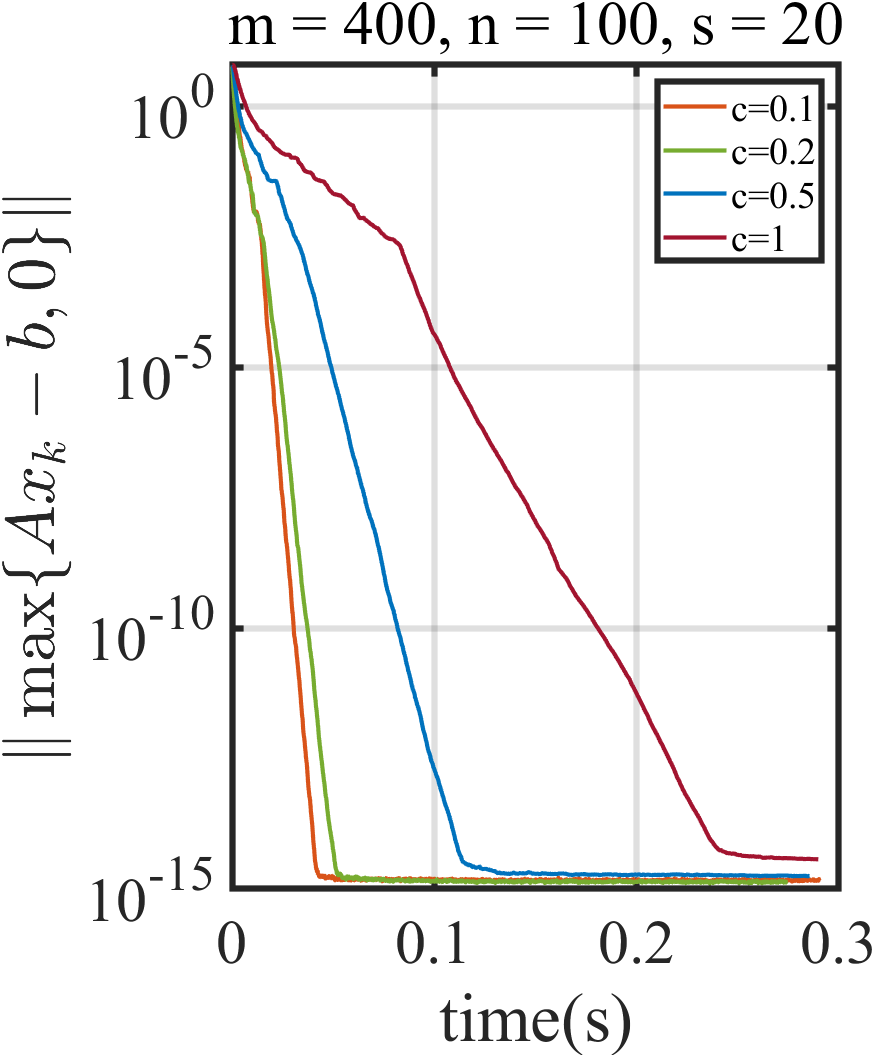

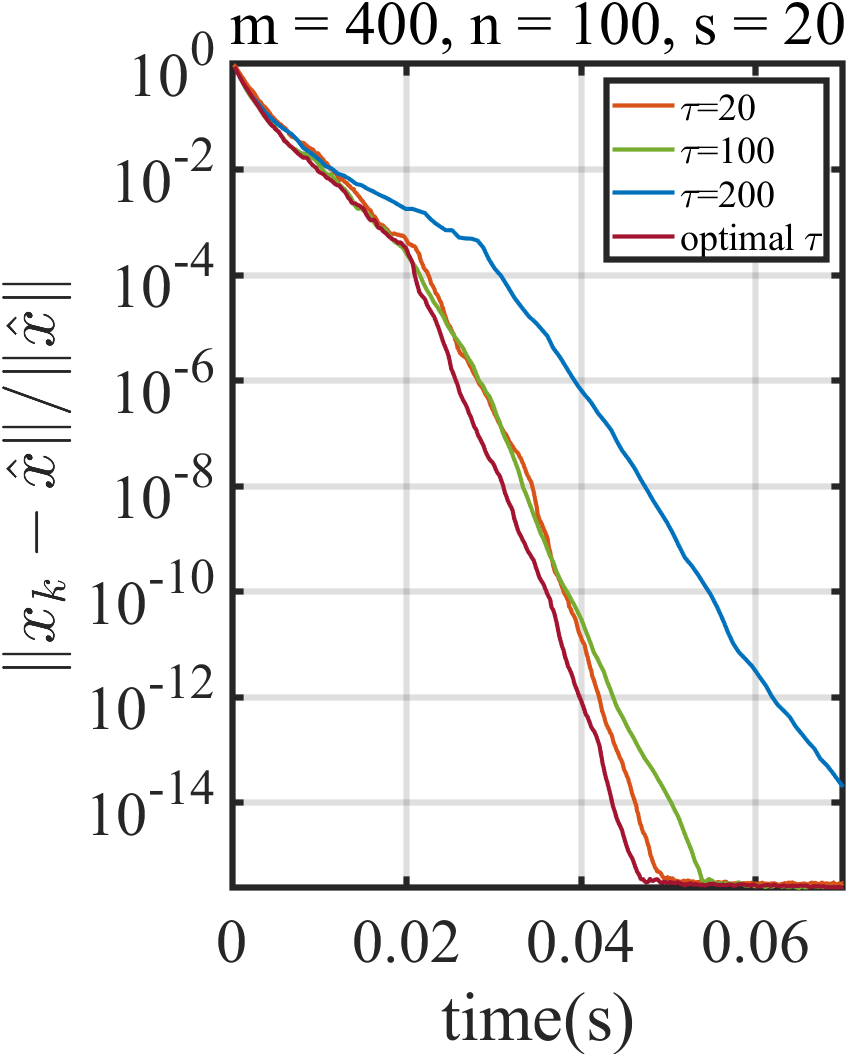

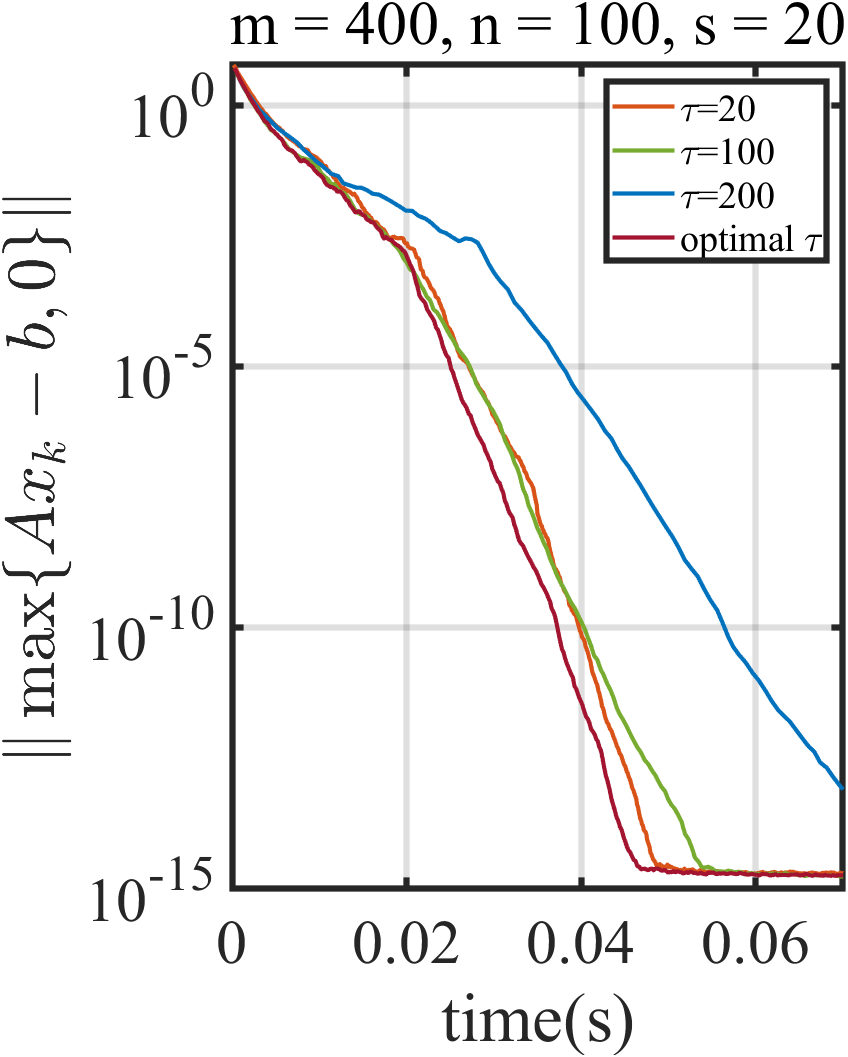

Numerical results corroborate the theory: smaller values of the Polyak parameter yield empirically faster convergence, and the upper truncation of the stepsize must be tuned, although its exact value becomes noncritical beyond a threshold. SBBP consistently outperforms classical Euclidean stochastic block projection (SBP) and deterministic Bregman methods, especially on ill-conditioned and noisy problems.

Figure 3: The performance of SBBP with DecmSPS for … and varying ….

Figure 4: The performance of SBBP with DecmSPS under … and ….

Applications and Numerical Experiments

Linear and Split Feasibility Problems

The framework recovers and extends state-of-the-art stochastic methods for structured feasibility, including randomized (block) Kaczmarz, Kaczmarz for linear inequalities, block sparse Kaczmarz, and block projection with arbitrary Bregman divergences. It can also generate new variants tailored to sparse or structured solutions.
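As an illustration of the simplest special case, with Euclidean geometry ($\psi=\tfrac12\|x\|_2^2$), block size 1, and the projective stepsize, a projection step of this type reduces to randomized Kaczmarz; for linear inequalities, only violated rows trigger a projection. This is a minimal sketch: it samples rows uniformly, whereas the classical Strohmer–Vershynin variant samples proportionally to $\|a_i\|^2$, and the function name is illustrative.

```python
import random

def dot(u, v):
    return sum(ui * vi for ui, vi in zip(u, v))

def randomized_kaczmarz(rows, rhs, iters, inequalities=False, seed=0):
    """Euclidean, block-size-1 projection method.

    Equations <a_i, x> = b_i: project onto the hyperplane of a random row.
    Inequalities <a_i, x> <= b_i: project only when the sampled row is violated.
    """
    rng = random.Random(seed)
    x = [0.0] * len(rows[0])
    for _ in range(iters):
        i = rng.randrange(len(rows))          # uniform row sampling (an assumption)
        a, b = rows[i], rhs[i]
        residual = dot(a, x) - b
        if inequalities and residual <= 0.0:
            continue                          # already inside this halfspace
        step = residual / dot(a, a)           # projective stepsize
        x = [xj - step * aj for xj, aj in zip(x, a)]
    return x

# Consistent 2x2 system with solution (1, 2).
print(randomized_kaczmarz([[1.0, 0.0], [1.0, 1.0]], [1.0, 3.0], 300))
```

Replacing $\tfrac12\|x\|_2^2$ by a sparsity-promoting $\psi$ and sampling several rows per step gives block sparse Kaczmarz-type variants.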

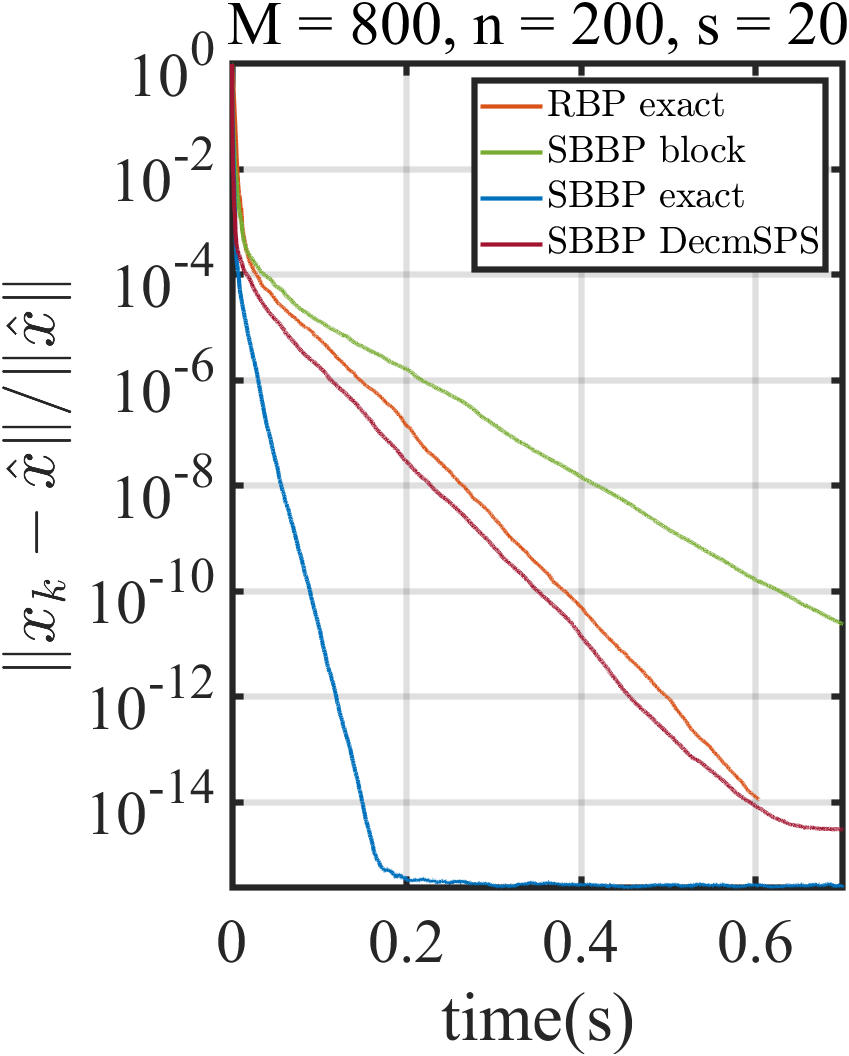

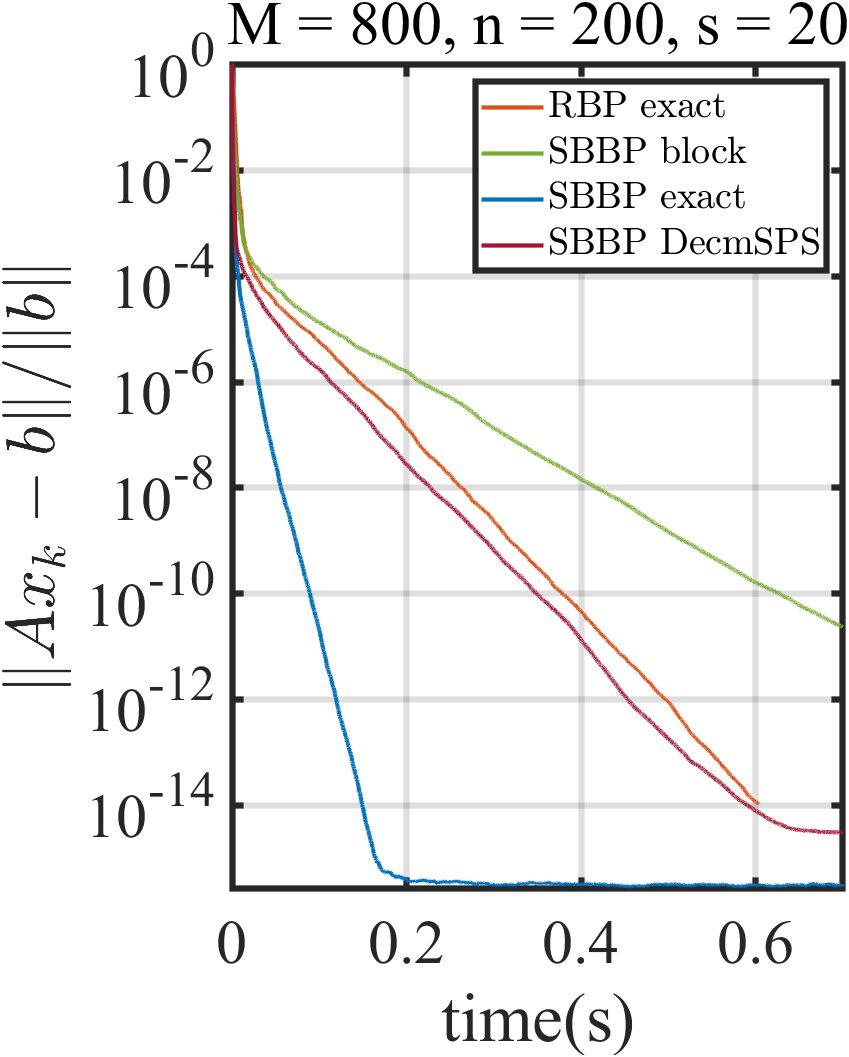

Noise-Robustness and Inconsistent Systems

SBBP is robust to noise: in both consistent and inconsistent split feasibility problems, SBBP with DecmSPS or the projective stepsize outperforms RBP variants, especially under high noise, owing to both mini-batch averaging and adaptive stepsizes.
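Why decreasing stepsizes matter for inconsistent problems can be seen on a one-dimensional toy example, assumed here for illustration: $C_1=\{0\}$ and $C_2=\{2\}$ with $f_i(x)=\tfrac12(x-b_i)^2$, so $\arg\min F=\{1\}$. Exact projections (stepsize 1) keep jumping between the two sets, while the simple schedule $t_k=1/(k+1)$, a stand-in for an adaptive decreasing rule and not DecmSPS itself, turns the iterate into a running average that settles at the minimizer. A cyclic sampling order replaces random sampling so the run is reproducible.

```python
def iterate(targets, stepsize, x0=5.0):
    """Steps x <- x - t_k * (x - b_k), i.e. gradient steps on f_k(x) = 0.5*(x - b_k)^2."""
    x = x0
    history = []
    for k, b in enumerate(targets):
        x = x - stepsize(k) * (x - b)
        history.append(x)
    return history

targets = [0.0, 2.0] * 50                      # alternate between the two sets
exact = iterate(targets, lambda k: 1.0)        # exact projection every step
decreasing = iterate(targets, lambda k: 1.0 / (k + 1))

print(exact[-2:])       # [0.0, 2.0]: still oscillating between the sets
print(decreasing[-1])   # close to 1.0, the minimizer of F
```

With $t_k=1/(k+1)$ the $K$-th iterate equals the mean of the first $K$ sampled targets, which is exactly the averaging effect that suppresses noise in the inconsistent regime.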

Figure 5: The performance of RBP and SBBP with different stepsizes with ….

Figure 6: The performance of RBP and SBBP with different stepsizes with ….

Comprehensive experiments confirm faster and more robust convergence for SBBP under multiple parameter regimes, block sizes, and problem dimensions.

Practical and Theoretical Implications

The SBBP framework provides a principled mechanism for handling inconsistent feasibility in large models, integrates priors such as sparsity directly, and offers convergence guarantees in settings traditionally out of reach for projection methods. Its stepsize rules extend the stochastic Polyak stepsize to mirror-descent and Bregman geometries, improving empirical performance and easing parameter selection. The contraction toward the minimizer set in both the interpolation (consistent) and non-interpolation (noisy or inconsistent) regimes, together with the acceleration obtained from block sampling, is of significant interest for both theory and applications.

Future Directions

The authors highlight ongoing work on Nesterov acceleration, more advanced Polyak-type rules (e.g., AdaSPS), and convergence analysis toward the solution of the regularized bilevel problem itself, not only toward the solution set of the inner problem. Extensions to common fixed point problems, beyond traditional CFPs, are also expected.

Conclusion

This work provides a comprehensive and extensible algorithmic and theoretical framework for stochastic Bregman-based feasibility methods in both consistent and inconsistent settings. The convergence guarantees, block size theory, and empirical robustness position the SBBP method as a compelling tool for structured, large-scale, and noisy convex feasibility problems, unifying and extending the landscape of existing projection and mirror-descent algorithms (2603.29348).