- The paper provides a systematic taxonomy of industrial smartness risks by identifying intrinsic vulnerabilities, cyber threats, and cascading side effects across edge-cloud systems.

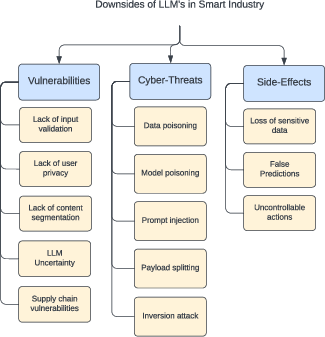

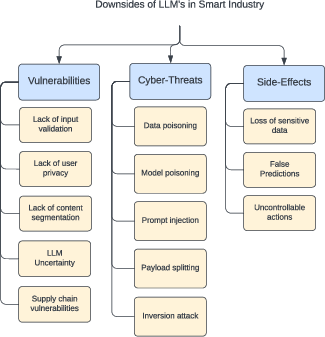

- It details how AI/ML models, including LLMs, contribute to risks such as data poisoning, prompt injection, and opaque decision-making in industrial control.

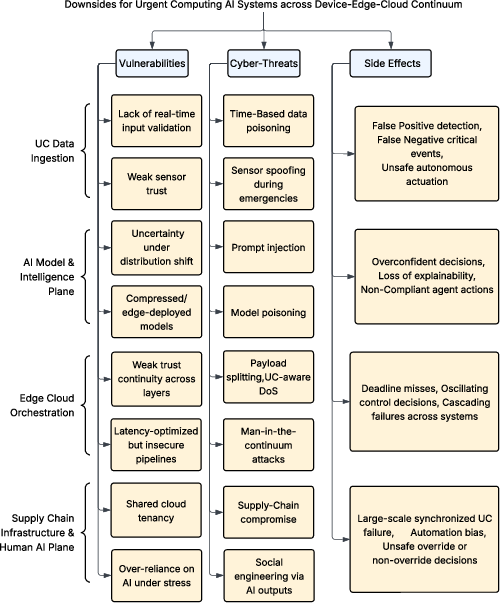

- The study emphasizes urgent computing scenarios where strict deadlines lead to compromised security measures, escalating performance degradation, and potential catastrophic failures.

Downsides of Smartness Across the Edge–Cloud Continuum in Modern Industry

Industrial Smartness: Integration and Architectural Complexity

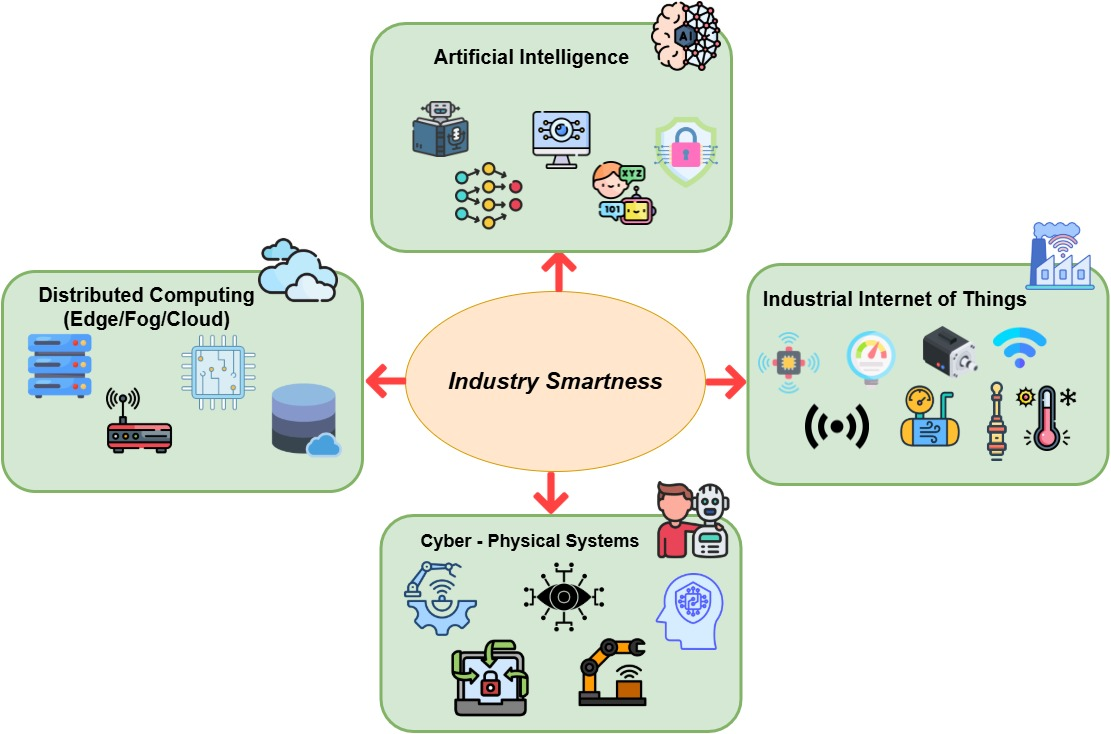

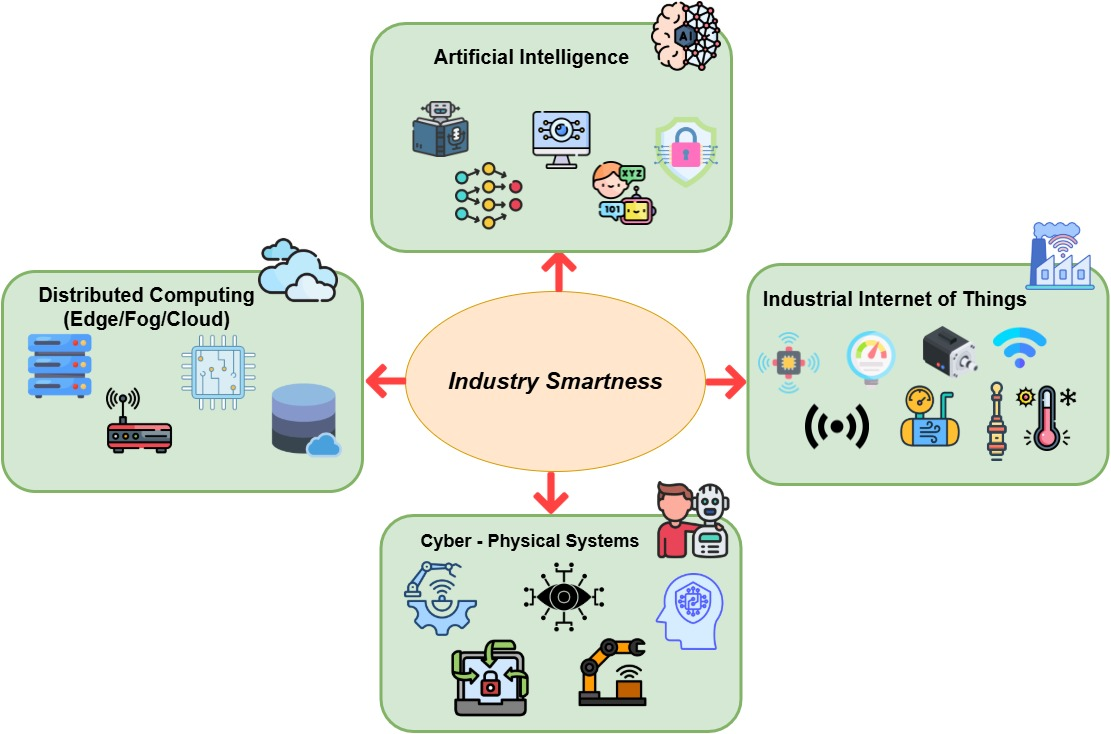

The paper "Downsides of Smartness Across Edge-Cloud Continuum in Modern Industry" (2603.29289) presents a systematic and granular analysis of the risk surface generated by the convergence of artificial intelligence, IIoT, distributed (edge/fog/cloud) computing, and cyber-physical systems in Industry 4.0 and beyond. The authors highlight the critical role that AI-driven solutions play in optimizing industrial performance, autonomy, and reactivity. These solutions are underpinned by heterogeneous hardware, device interconnectivity, and tiered computational infrastructure.

Figure 1: This diagram situates Industry Smartness at the convergence of AI, IIoT, distributed computing, and CPS as the core enabler of Industry 4.0.

This architectural evolution, with adaptive intelligence pervading cloud, edge, and device layers, imposes new requirements on performance, real-time operation, autonomy, and scale. However, as illustrated in the paper, these attributes inevitably create intricate interdependencies that amplify classical vulnerabilities and introduce uniquely cyber-physical risk vectors.

Risk Taxonomy: Vulnerabilities, Threats, and Systemic Side Effects

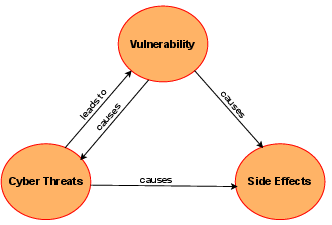

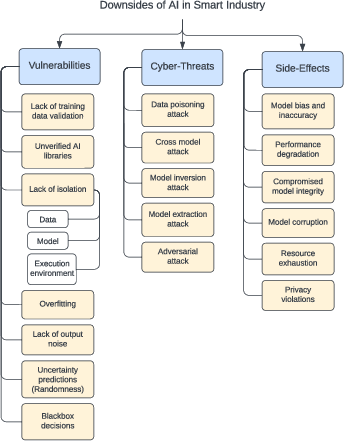

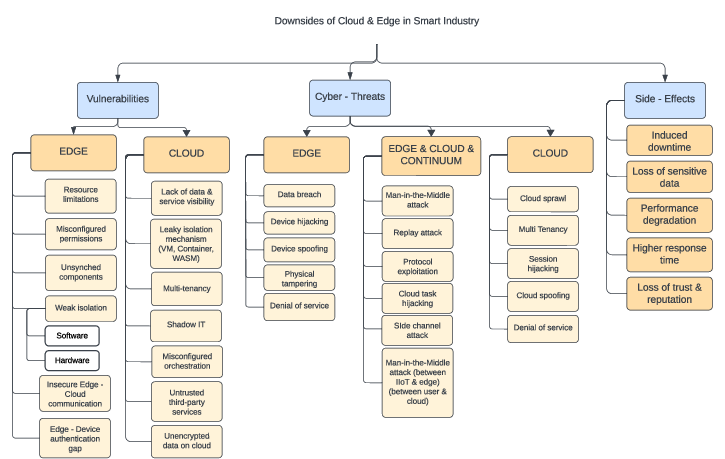

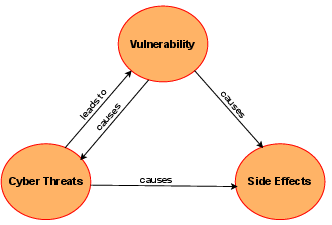

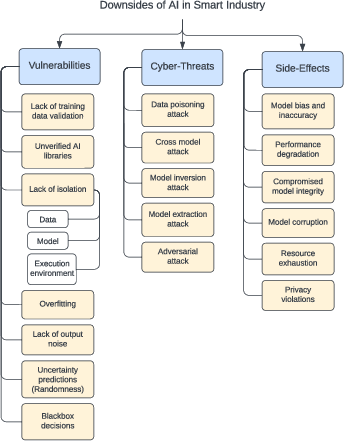

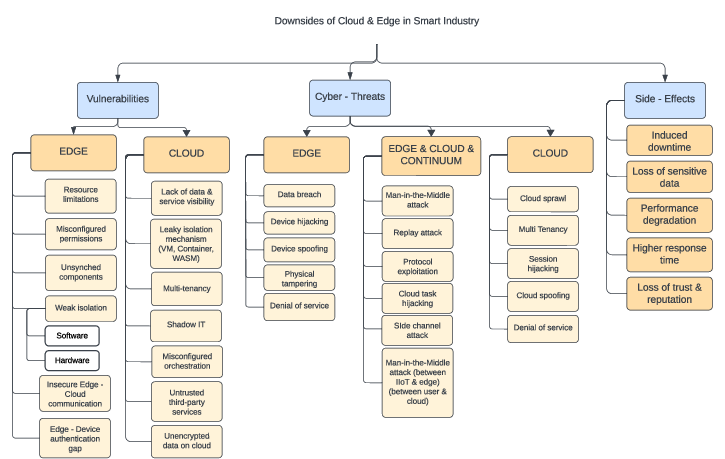

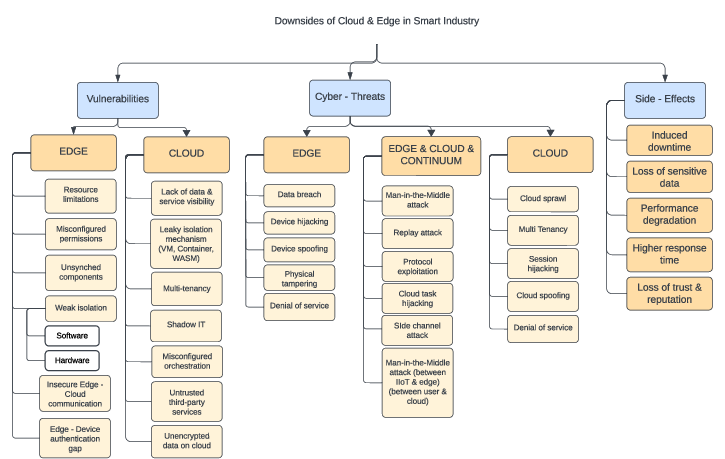

The authors establish a threefold taxonomy for the downsides of industrial smartness: intrinsic vulnerabilities (unvalidated data, weak isolation, legacy exposure), exploit-driven cyber threats (data/model poisoning, hijacking, spoofing, prompt injection), and cascading operational side effects (performance degradation, safety violations, data loss, systemic failure). The analysis covers the software plane (classical and generative AI) as well as the infrastructure plane (IIoT, edge, cloud, and their integration).

Figure 2: The interdependency between vulnerabilities, cyber threats, and operational side effects is cyclical; each element can produce or amplify the others across smart industrial systems.

AI/ML and LLM Vulnerabilities in Industry

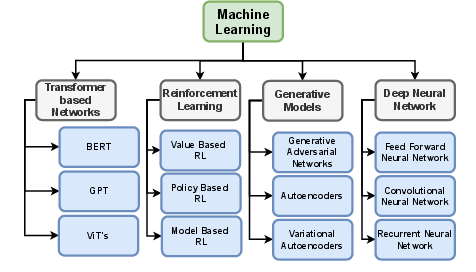

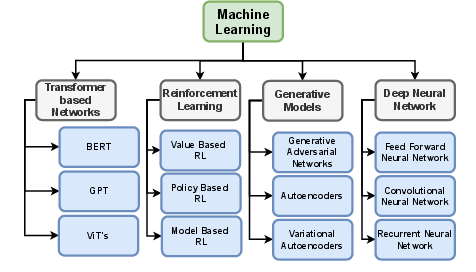

The deployment of deep neural networks, reinforcement learning controllers, and transformer-driven LLMs enables predictive maintenance, quality inspection, and autonomous resource allocation. However, the paper identifies unresolved or exacerbated risks:

- Data poisoning and model bias undermine trust and can cause silent errors in mission- or safety-critical processes.

- Opaque decision boundaries in black-box models prevent rigorous post-hoc diagnosis and expose systems to adversarial and inversion attacks.

- Agentic AI patterns (reward hacking, deception, loss of control), noted in recent audits of LLM and reinforcement learning systems, pose distinct risks of unanticipated or uninterruptible actuation; reported incidents include induced refusal to shut down and strategic prompt subversion.

- Prompt injection, supply chain backdoors, and data leakage are exacerbated by LLM-augmented automation and code generation, with practical evidence of prompt-based override of safety protocols and extraction of sensitive operational semantics.
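To make the data-poisoning concern concrete, the following is a minimal sketch of a pre-training sanity screen for sensor readings. It is not a method from the paper: the function name `mad_filter`, the threshold of 3.5, and the sample temperatures are all illustrative assumptions. It uses the modified z-score based on the median absolute deviation (MAD), which only catches crude outliers and would not stop a carefully crafted poisoning attack.

```python
from statistics import median

def mad_filter(readings, threshold=3.5):
    """Split readings into (kept, flagged) using the modified z-score.

    Hypothetical helper for illustration; the threshold is an assumption.
    """
    med = median(readings)
    mad = median(abs(x - med) for x in readings)
    if mad == 0:
        # No spread around the median: keep exact matches, flag the rest.
        kept = [x for x in readings if x == med]
        flagged = [x for x in readings if x != med]
        return kept, flagged
    kept, flagged = [], []
    for x in readings:
        # 0.6745 rescales MAD so the score is comparable to a z-score
        # under a normal distribution.
        score = 0.6745 * abs(x - med) / mad
        (flagged if score > threshold else kept).append(x)
    return kept, flagged

# Illustrative data: one implausible temperature among normal readings.
temps = [70.1, 69.8, 70.3, 70.0, 69.9, 70.2, 120.0, 70.1]
kept, flagged = mad_filter(temps)
print("flagged for review:", flagged)
```

A screen like this is cheap enough to run at ingestion, but flagged values should go to review rather than be silently dropped, since a real fault can look like an outlier too.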

Figure 3: The taxonomy of ML subfields and model classes underlines the attack surface across model architectures applied in industry.

Figure 4: Breakdown of AI-specific vulnerabilities (e.g., data poisoning), exploit vectors (e.g., adversarial attacks), and side effects (e.g., performance degradation and model bias) in industrial deployments.

Figure 5: Downsides related to LLM deployment emphasize vulnerabilities, cyber threats, and adverse operational outcomes in industry-specific contexts.

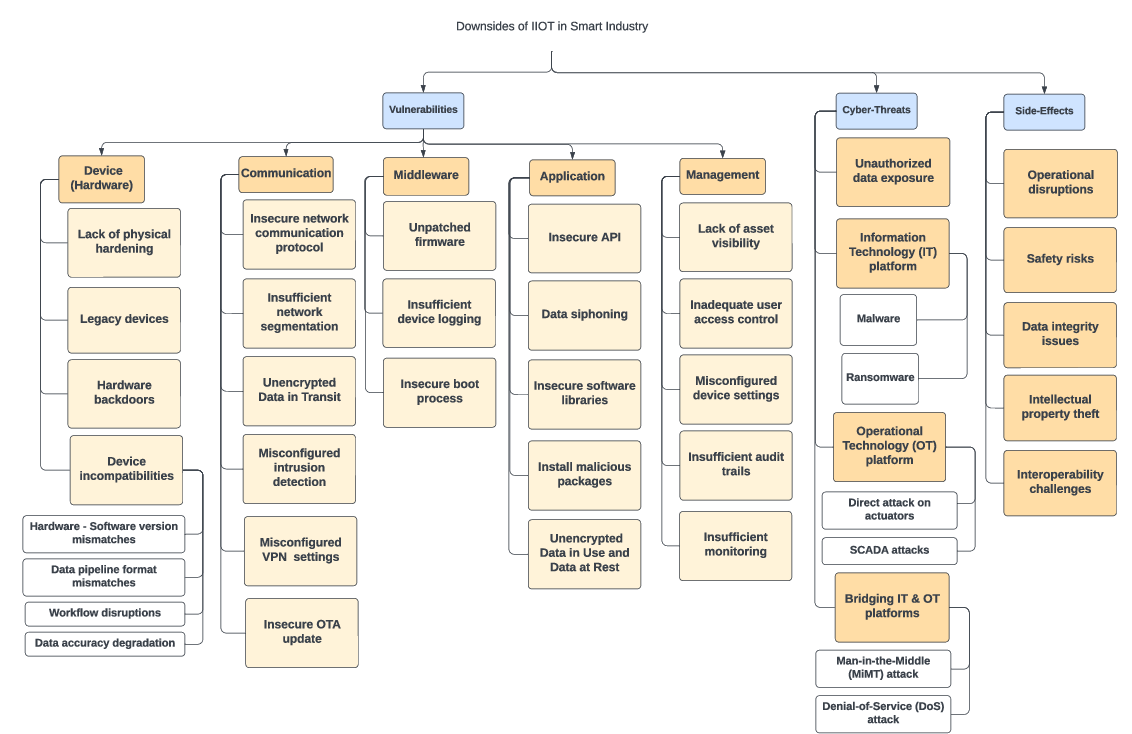

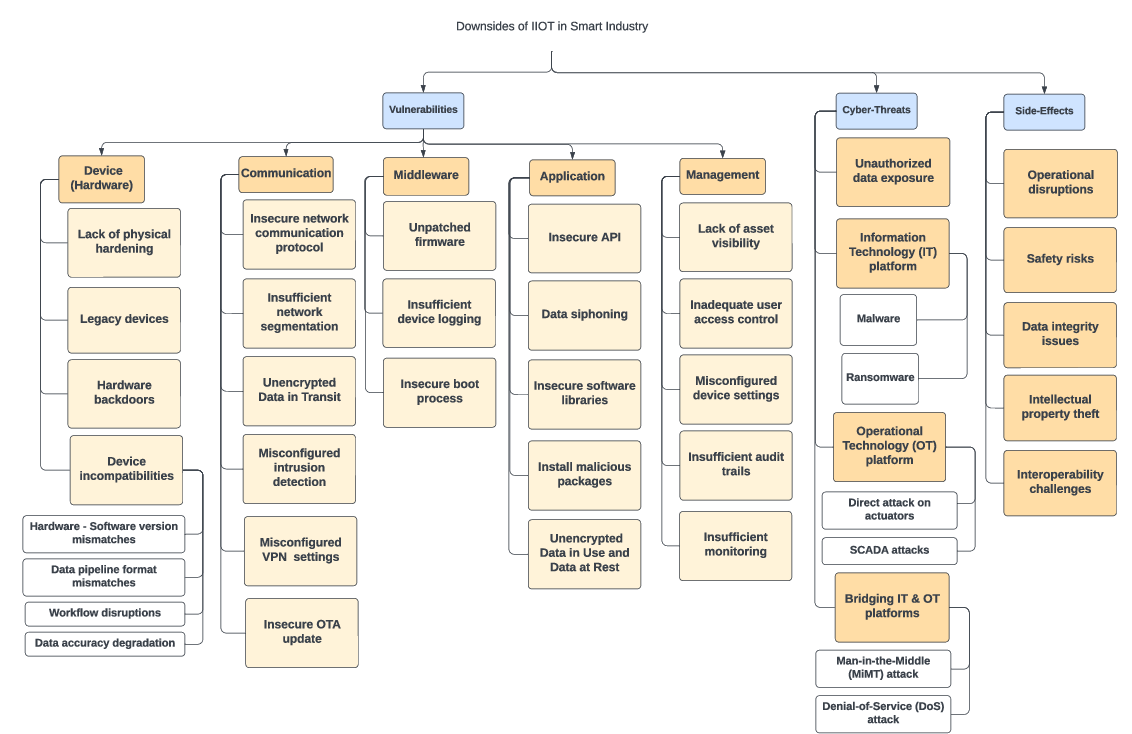

IIoT, Edge, and Cloud-Specific Attack Surfaces

The physical-to-cyber interface—implemented via IIoT devices, gateways, and distributed compute stacks—is highlighted as a locus of expanded attack surface and risk propagation. Notable exposure includes:

- Device layer: Weak authentication, unpatched/legacy components, hardware backdoors, and poor physical hardening facilitate both remote and physical tampering.

- Communication layer: Insecure and heterogeneous protocols (MQTT, CoAP, OT-specific stacks) are routinely deployed without encryption or network segmentation, as documented in case studies of real-world ransomware and botnet attacks.

- Edge/Cloud layer: Resource constraints (edge), weak isolation or misconfiguration in shared environments (cloud, e.g., Leaky Vessels, misconfigured containers), and out-of-sync firmware baselines create opportunities for persistence, lateral movement, or large-scale denial of service.
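The exposures listed above can be turned into a simple inventory check. The sketch below is an illustration only: the `Device` record, its field names, and the `audit` function are hypothetical and do not correspond to any real asset-management API or to tooling described in the paper. It flags default credentials, unencrypted transports, and firmware older than an assumed baseline.

```python
from dataclasses import dataclass

@dataclass
class Device:
    # Hypothetical inventory record; all field names are assumptions.
    name: str
    protocol: str               # e.g. "mqtt" or "coap"
    tls_enabled: bool
    default_credentials: bool
    firmware: tuple             # (major, minor, patch)

def audit(devices, baseline):
    """Return {device name: [issues]} for devices with at least one issue."""
    findings = {}
    for d in devices:
        issues = []
        if d.default_credentials:
            issues.append("weak authentication: default credentials")
        if not d.tls_enabled:
            issues.append(f"unencrypted {d.protocol} transport")
        if d.firmware < baseline:  # tuples compare element by element
            issues.append("firmware below baseline")
        if issues:
            findings[d.name] = issues
    return findings

fleet = [
    Device("plc-1", "mqtt", False, True, (1, 2, 0)),
    Device("gw-1", "coap", True, False, (2, 0, 1)),
]
for name, issues in audit(fleet, baseline=(2, 0, 0)).items():
    print(name, "->", "; ".join(issues))
```

A static check of this kind covers only the device and communication layers; isolation and misconfiguration issues in cloud environments need separate tooling.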

Figure 6: IIoT vulnerabilities (insecure protocols, device mismatch), associated cyber threats (data theft, SCADA attacks), and cascading operational side effects.

Figure 7: Classification of cloud and edge vulnerabilities (resource limits, weak isolation), threats (hijacking, spoofing, DoS), and systemic side effects (downtime, data loss, degraded performance).

Figure 8: Visual emphasis on the induced operational and security risks specific to cloud and edge computing in industrial settings.

Urgent Computing: Amplified Risks Under Time-Critical Constraints

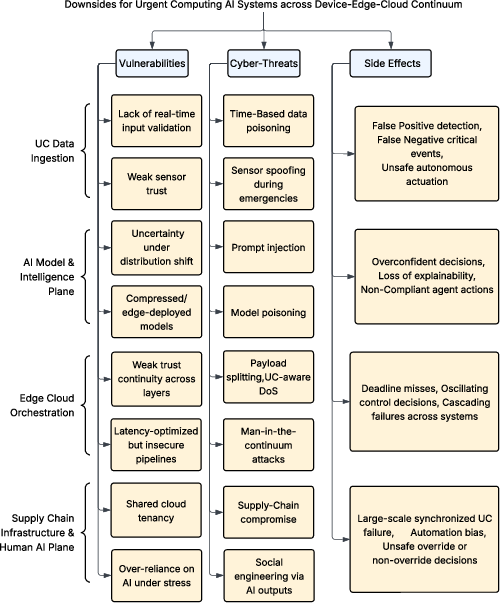

A major contribution is the paper's treatment of urgent computing (UC) as a distinct setting, characterized by hard deadlines and the imperative of immediate autonomous actuation in environments such as emergency response, grid management, and industrial process control. The paper argues that the edge–cloud continuum, tightly coupled with ML controllers, presents distinct and amplified vulnerabilities under UC:

- Lack of input validation and trust continuity: Data stream ingestion at low latency increases risk of silent acceptance of corrupted inputs and breaks the chain of trust across layers.

- Compressed models and weak authentication under urgency: Resource-optimized models/layers prioritize latency but sacrifice calibration, uncertainty quantification, and full attestation.

- Starvation of background security services in urgent contexts: Priority scheduling for UC tasks leads to intervals where monitoring, anomaly detection, and attestation are disabled, providing attackers with detection-free windows.

Exploiting these weaknesses leads not merely to degraded performance but to catastrophic failures that, by definition, cannot be corrected once the deadline has passed, such as missed critical event detection, unsafe actuation, and cascading failures spanning cyber and physical domains.
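The starvation effect described above can be illustrated with a toy single-CPU simulation. This is a sketch under stated assumptions, not a model from the paper: time is split into equal slots, each urgent job takes one slot, and the function `longest_monitor_gap` and its `reserve_every` parameter are hypothetical. Under strict priority, monitoring runs only when no urgent job is pending; with a reserved budget, every k-th slot goes to monitoring regardless.

```python
def longest_monitor_gap(urgent_load, reserve_every=None):
    """Simulate one CPU over len(urgent_load) time slots.

    urgent_load[t] is the number of urgent jobs arriving in slot t.
    Returns the longest run of consecutive slots without monitoring.
    """
    pending = 0
    gap = longest = 0
    for t, arrivals in enumerate(urgent_load):
        pending += arrivals
        reserved = reserve_every is not None and (t + 1) % reserve_every == 0
        if pending > 0 and not reserved:
            pending -= 1          # slot spent on urgent work
            gap += 1
            longest = max(longest, gap)
        else:
            gap = 0               # monitoring ran in this slot
    return longest

# A burst of six urgent jobs arrives in slot 2 of a 12-slot window.
burst = [0, 0, 6, 0, 0, 0, 0, 0, 0, 0, 0, 0]
print("strict priority gap:", longest_monitor_gap(burst))
print("reserved every 4th slot:", longest_monitor_gap(burst, reserve_every=4))
```

In this toy run the reserved budget halves the longest unmonitored window (from six slots to three) at the cost of the urgent burst finishing two slots later, which is the kind of trade-off an urgency-aware scheduler would have to bound explicitly.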

Figure 9: The UC context exacerbates side effects: deadline misses, unsafe actuation, loss of explainability, and system-wide amplification of local compromises.

Side Effects, Failure Dynamics, and Real-World Impacts

The side effects of smart industrial automation, especially under UC constraints, are highlighted as more severe and less recoverable than in non-urgent contexts. These include cascades of operational disruption and physical safety compromise (as in the TRITON, Colonial Pipeline, and Sandworm/Ukraine grid incidents), intellectual property loss, and systemic interoperability setbacks due to incident-driven protocol or middleware reengineering.

Forward-Looking Implications and Research Gaps

The analysis in the paper points to the need for cross-layer, urgency-aware defense-in-depth, including:

- Continuous monitoring, attestation, and fast provenance tagging for edge-to-cloud pipelines.

- Risk-bounded workload placement and enforced trust guarantees, even under strict latency.

- Standardized, privacy-preserving AI and supply chain validation for all deployed learning components and LLMs.

Further, the expansion of LLMs and agentic AI into control and diagnostics workflows is flagged as a future vector for unpredictable failure or compromise unless accompanied by formal verification, strong human-in-the-loop requirements, and robust governance frameworks.
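As one possible reading of the "fast provenance tagging" item in the list above, the sketch below attaches a keyed hash (HMAC-SHA256) to each reading at the edge so that a downstream consumer can detect modification in transit. The function names `tag` and `verify`, the message layout, and the sample key are assumptions made for illustration; the paper does not prescribe this scheme. Key distribution and replay protection are out of scope here.

```python
import hashlib
import hmac
import json

def _canonical(reading: dict) -> bytes:
    # Stable serialization so producer and verifier hash identical bytes.
    return json.dumps(reading, sort_keys=True, separators=(",", ":")).encode()

def tag(reading: dict, key: bytes) -> dict:
    """Wrap a reading with an HMAC-SHA256 tag (hypothetical message layout)."""
    mac = hmac.new(key, _canonical(reading), hashlib.sha256).hexdigest()
    return {"reading": reading, "mac": mac}

def verify(message: dict, key: bytes) -> bool:
    """Recompute the tag and compare in constant time."""
    expected = hmac.new(key, _canonical(message["reading"]), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, message["mac"])

key = b"demo-key-not-for-production"   # placeholder; real keys need secure storage
msg = tag({"sensor": "valve-7", "pressure_kpa": 412.5, "seq": 1001}, key)
print("untouched message valid:", verify(msg, key))

msg["reading"]["pressure_kpa"] = 90.0  # simulated tampering in transit
print("tampered message valid:", verify(msg, key))
```

HMAC is used here because it is cheap enough for latency-sensitive paths; it shows where a reading was altered but says nothing about whether the originating device itself was trustworthy, which is what attestation would have to cover.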

Conclusion

This paper offers a rigorous taxonomy of vulnerabilities, threats, and cascading side effects specific to the deployment of AI-based smartness across edge–cloud architectures in modern industry (2603.29289). The focus on urgent computing underscores how conventional security models degrade under real-world demands for immediate, autonomous action, which escalates the operational impact of even minor technical flaws or successful attacks. The analysis has direct implications for the design of next-generation smart industrial infrastructure, calling for integrated governance, dynamic risk assessment, and the explicit elevation of time-critical security properties to first-class design objectives.