- The paper introduces a novel, calibration-free CV pipeline to derive world-aligned 3D joint angles from standard monocular video.

- It demonstrates that unsupervised embedding of joint angle data distinguishes healthy from post-stroke motor patterns, revealing compensatory strategies.

- The method supports non-intrusive clinical assessments and scalable deployment in both clinical and home-based stroke rehabilitation settings.

Computer Vision-Enhanced Assessment of Upper-Extremity Function via the Box and Block Test

Introduction

The assessment of upper extremity (UE) motor function after stroke traditionally depends on either ordinal scales or time-based quantitative evaluation, each with inherent limitations. Ordinal instruments such as the Fugl-Meyer Assessment for Upper Extremity (FMA-UE) and the Action Research Arm Test (ARAT) offer nuanced, multi-joint evaluation, but their scoring is coarse and they take considerable time to administer. In contrast, time-based assessments like the Box and Block Test (BBT) are quick to administer but say little about movement quality or compensatory strategies. The paper "Enhancing Box and Block Test with Computer Vision for Post-Stroke Upper Extremity Motor Evaluation" (2603.29101) addresses these deficiencies by introducing a computer vision (CV)-based framework that extracts world-aligned 3D UE joint angles from standard monocular RGB video during BBT performance. The framework delivers quantitative analysis of movement quality without requiring changes to existing clinical workflows.

Methodological Advancements

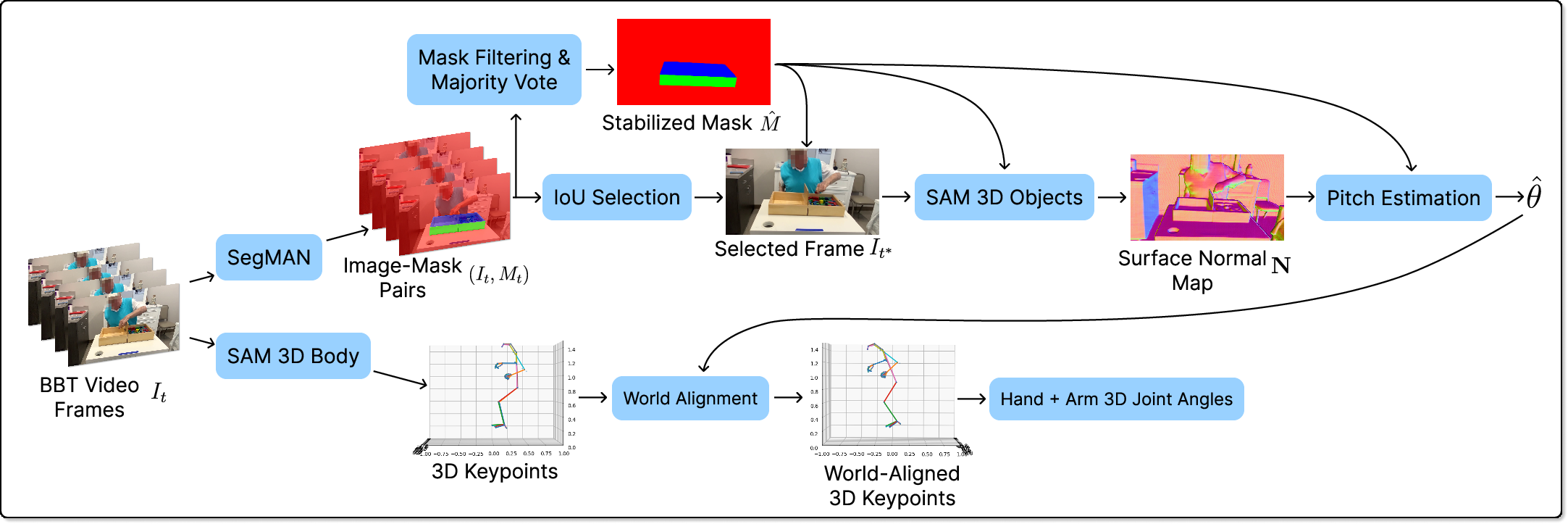

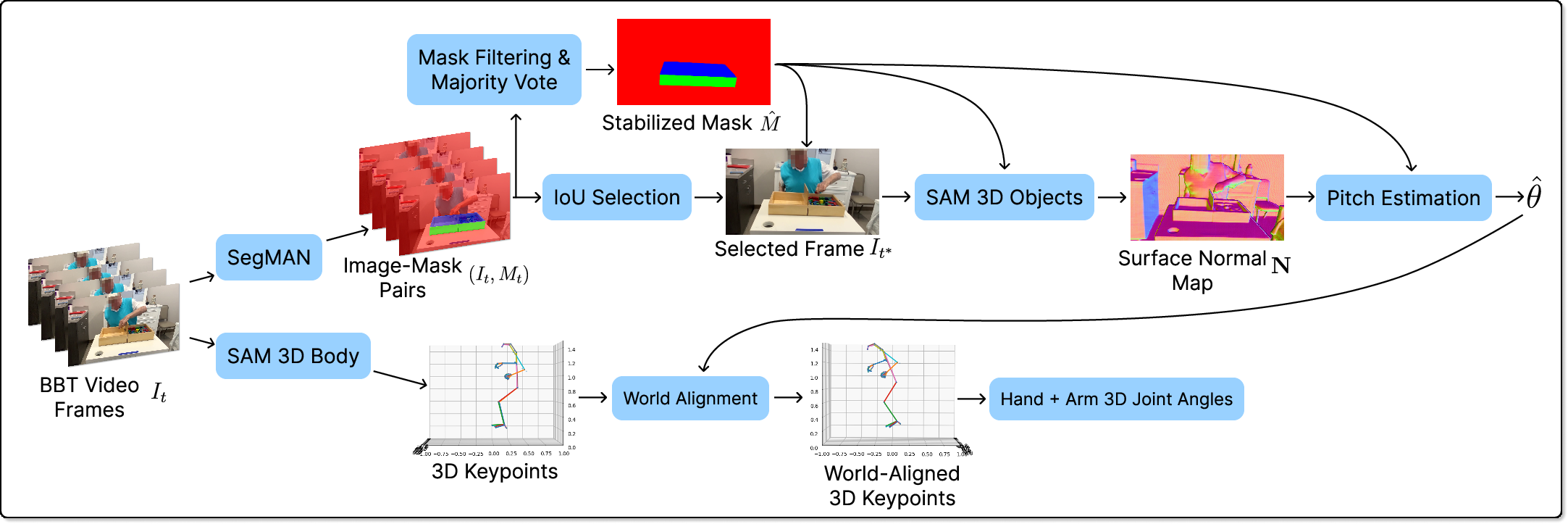

The centerpiece of the proposed method is a calibration-free pipeline for 3D joint angle estimation that is robust to arbitrary camera poses. A fine-tuned SegMAN model semantically segments the BBT box; surface normal estimation on the segmented box then recovers the apparatus orientation and, from it, the camera's extrinsic rotation, without a separate calibration step. The camera pitch with respect to gravity is derived from this rotation, which enables the critical transformation: monocular 3D body and hand poses, predicted by state-of-the-art models such as SAM 3D Body, are rotated to be gravity-aligned, decoupling biomechanical posture estimation from camera-induced artifacts.

Figure 1: Overview of the pipeline, including semantic segmentation, surface normal extraction, extrinsic rotation for alignment with gravity, and joint angle computation.
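
The gravity-alignment step can be illustrated with a minimal sketch. It is not the authors' implementation: it assumes the box rests on a horizontal table, so the normal of its top surface points opposite to gravity, and it assumes OpenCV-style camera axes (x right, y down, z forward). The function names `rotation_aligning`, `rotate`, and `camera_pitch_deg` are hypothetical, and the rotation is built with the standard Rodrigues formula.

```python
import math

# Assumption: OpenCV-style camera axes (x right, y down, z forward),
# so "up" seen by a perfectly level camera is (0, -1, 0).
WORLD_UP_IN_LEVEL_CAMERA = (0.0, -1.0, 0.0)

def normalize(v):
    n = math.sqrt(sum(x * x for x in v))
    if n == 0.0:
        raise ValueError("cannot normalize a zero-length vector")
    return tuple(x / n for x in v)

def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def matmul3(A, B):
    return [[sum(A[i][k] * B[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]

def rotation_aligning(src, dst):
    """3x3 rotation R (row-major lists) such that R applied to src gives dst."""
    a, b = normalize(src), normalize(dst)
    c = sum(x * y for x, y in zip(a, b))  # cosine of the angle between a and b
    eye = [[1.0 if i == j else 0.0 for j in range(3)] for i in range(3)]
    if c < -1.0 + 1e-9:
        # Antiparallel vectors: rotate 180 degrees about any axis perpendicular to a.
        helper = (1.0, 0.0, 0.0) if abs(a[0]) < 0.9 else (0.0, 1.0, 0.0)
        k = normalize(cross(a, helper))
        return [[2.0 * k[i] * k[j] - eye[i][j] for j in range(3)] for i in range(3)]
    v = cross(a, b)
    K = [[0.0, -v[2], v[1]], [v[2], 0.0, -v[0]], [-v[1], v[0], 0.0]]
    K2 = matmul3(K, K)
    # Rodrigues formula written in terms of the cross and dot products.
    return [[eye[i][j] + K[i][j] + K2[i][j] / (1.0 + c) for j in range(3)]
            for i in range(3)]

def rotate(R, p):
    return tuple(sum(R[i][j] * p[j] for j in range(3)) for i in range(3))

def camera_pitch_deg(up_normal_cam):
    """Downward tilt of the optical axis relative to horizontal, in degrees."""
    n = normalize(up_normal_cam)
    return math.degrees(math.asin(max(-1.0, min(1.0, -n[2]))))

# Example: a box-top normal as seen by a camera tilted 30 degrees downward.
theta = math.radians(30.0)
box_normal_cam = (0.0, -math.cos(theta), -math.sin(theta))
R = rotation_aligning(box_normal_cam, WORLD_UP_IN_LEVEL_CAMERA)
aligned_normal = rotate(R, box_normal_cam)
```

Applying the same `R` to every predicted 3D keypoint would express the pose in a gravity-aligned frame under these assumptions, regardless of how the camera was tilted.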

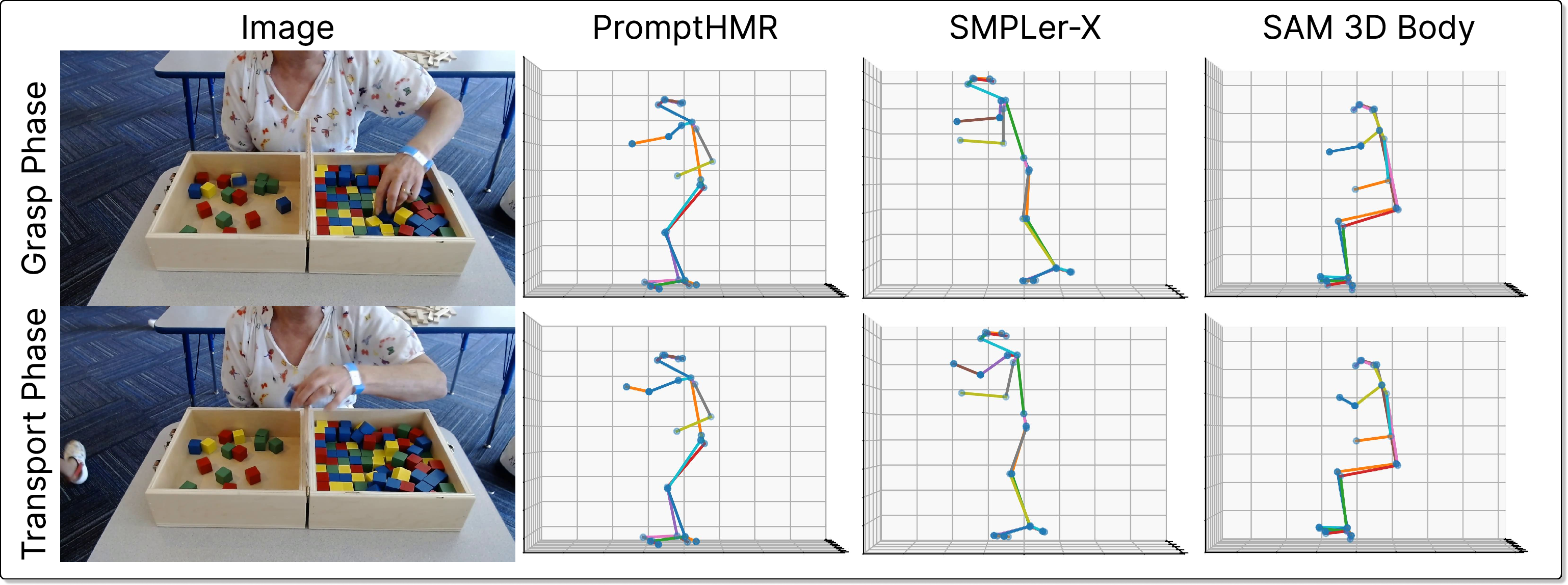

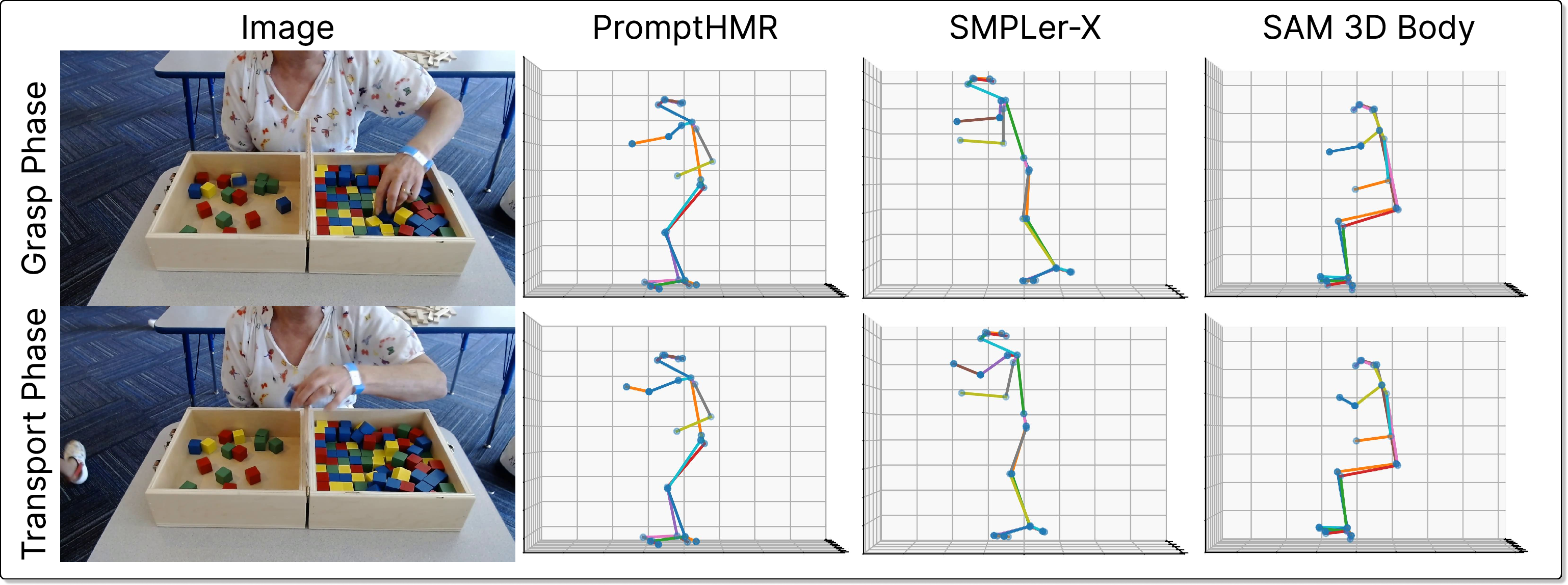

Pose estimation is performed per-frame with no temporal smoothing, employing SAM 3D Body for both hand and body keypoints due to its comparative advantages in anatomical plausibility and occlusion robustness over alternatives such as PromptHMR and SMPLer-X. Finger, wrist, elbow, shoulder, and trunk joint angles are computed via triplet vector analysis, after the keypoints have been explicitly transformed to the world frame.
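
A triplet-based joint angle can be sketched as the angle at a middle keypoint between the two segments that meet there. The code below is an illustrative assumption about what "triplet vector analysis" amounts to, not the paper's code; `joint_angle_deg` and `inclination_from_vertical_deg` are hypothetical names, and the up direction assumes gravity-aligned coordinates with the y axis pointing down.

```python
import math

def joint_angle_deg(a, b, c):
    """Interior angle at keypoint b (degrees) between segments b->a and b->c."""
    u = [p - q for p, q in zip(a, b)]
    v = [p - q for p, q in zip(c, b)]
    nu = math.sqrt(sum(x * x for x in u))
    nv = math.sqrt(sum(x * x for x in v))
    if nu == 0.0 or nv == 0.0:
        raise ValueError("degenerate triplet: coincident keypoints")
    cos_t = sum(x * y for x, y in zip(u, v)) / (nu * nv)
    cos_t = max(-1.0, min(1.0, cos_t))  # guard against rounding outside [-1, 1]
    return math.degrees(math.acos(cos_t))

def inclination_from_vertical_deg(lower, upper, up=(0.0, -1.0, 0.0)):
    """Angle between the segment lower->upper and the vertical `up` direction.

    Only meaningful once the keypoints are in gravity-aligned coordinates;
    the default `up` assumes a y-down axis convention.
    """
    origin = (0.0, 0.0, 0.0)
    segment = tuple(p - q for p, q in zip(upper, lower))
    return joint_angle_deg(segment, origin, up)

# Example: shoulder, elbow, wrist forming a right angle at the elbow.
elbow_flexion = joint_angle_deg((0.0, 0.0, 0.0), (0.3, 0.0, 0.0), (0.3, 0.25, 0.0))
```

An interior angle such as elbow flexion is unchanged by a rigid rotation of all keypoints, whereas a segment-to-vertical angle such as trunk lean depends on the frame, which is where the world alignment matters.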

Evaluation of State-of-the-Art 3D Pose Estimation Methods

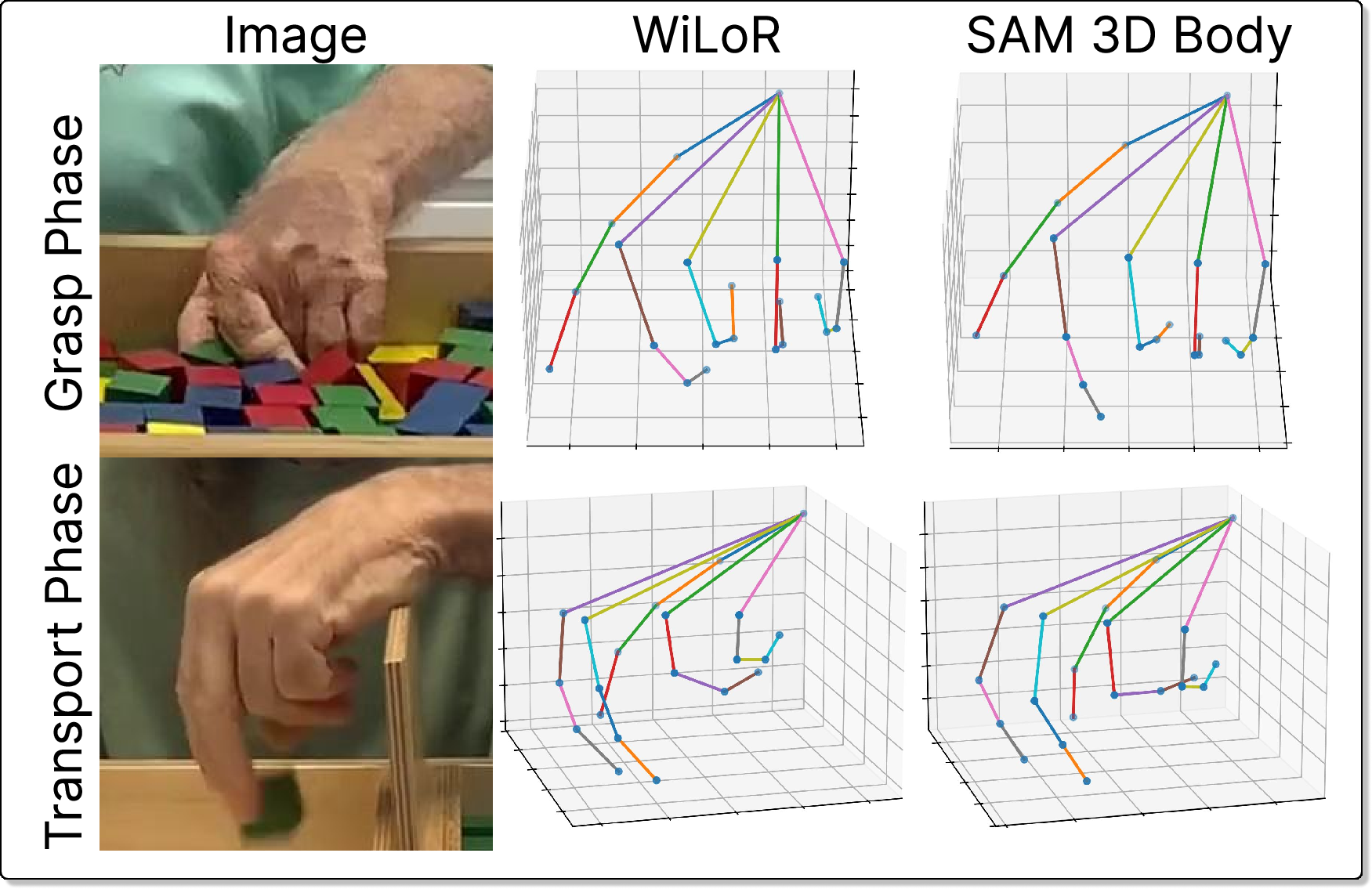

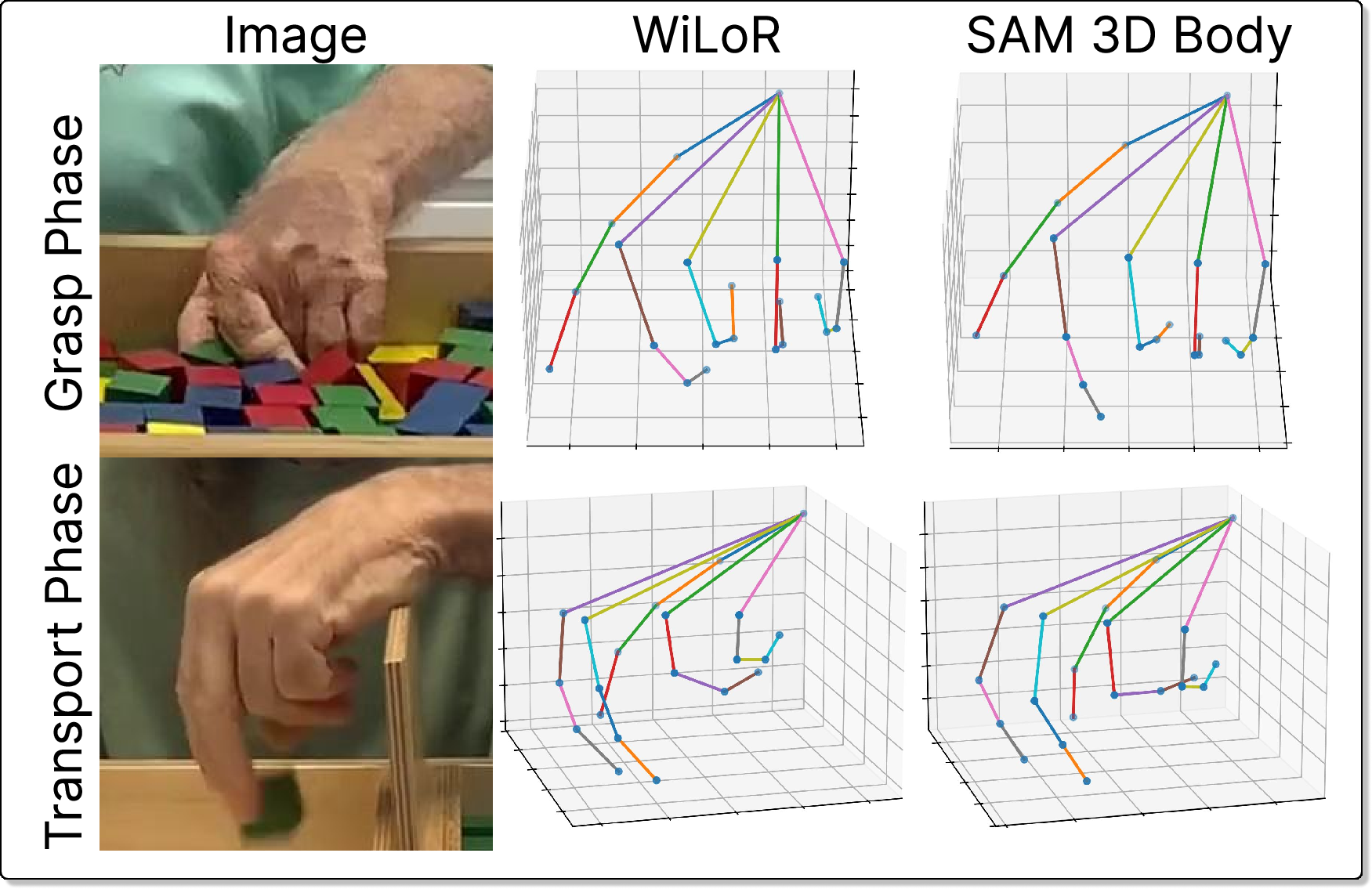

A head-to-head qualitative and benchmark-based comparison was performed among SMPLer-X, PromptHMR, and SAM 3D Body for whole-body pose, and between WiLoR and SAM 3D Body for hand pose. Side-view visualizations across BBT grasp and transport segments reveal significant disparities in depth consistency and joint articulation among these models. PromptHMR often exhibits over-extension due to depth ambiguities, while SMPLer-X and SAM 3D Body stay closer to the visually observed arm configurations, with the latter providing superior temporal stability and articulation. For hands, WiLoR underperforms when reconstructing the complex, flexed poses typical of BBT, while SAM 3D Body, despite the limitations inherent to monocular hand pose estimation, delivers the most anatomically plausible structures under severe occlusion.

Figure 2: Comparative visualizations of 3D body pose outputs from PromptHMR, SMPLer-X, and SAM 3D Body, illustrating depth consistency and joint angle variance in BBT grasp/transport.

Figure 3: 3D hand pose outputs from WiLoR and SAM 3D Body, highlighting underestimation of finger articulation in WiLoR during transport.

The selection of SAM 3D Body is further justified by its superior or competitive performance on established 3D pose benchmarks, which supports its use for robust movement quality quantification.

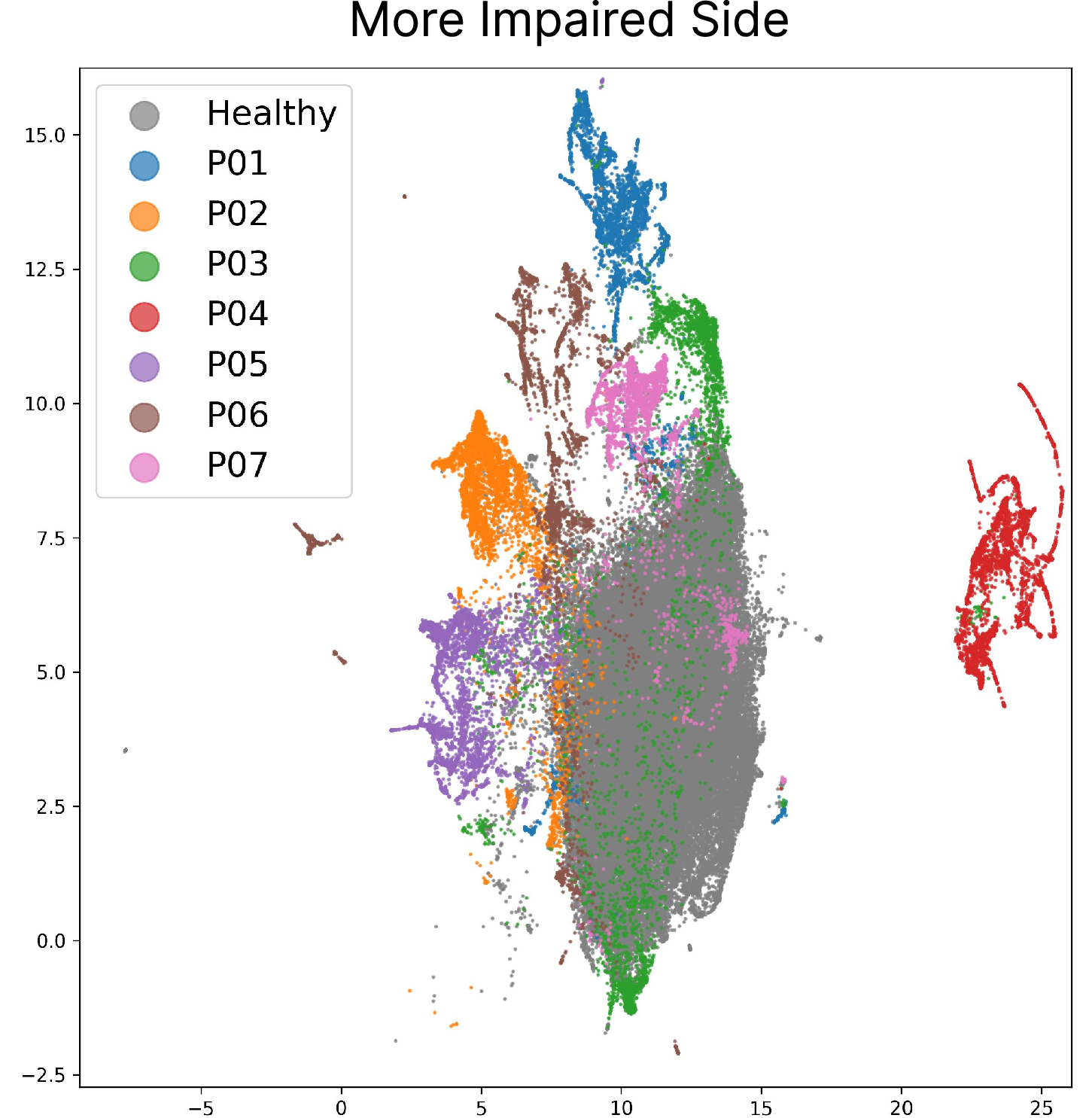

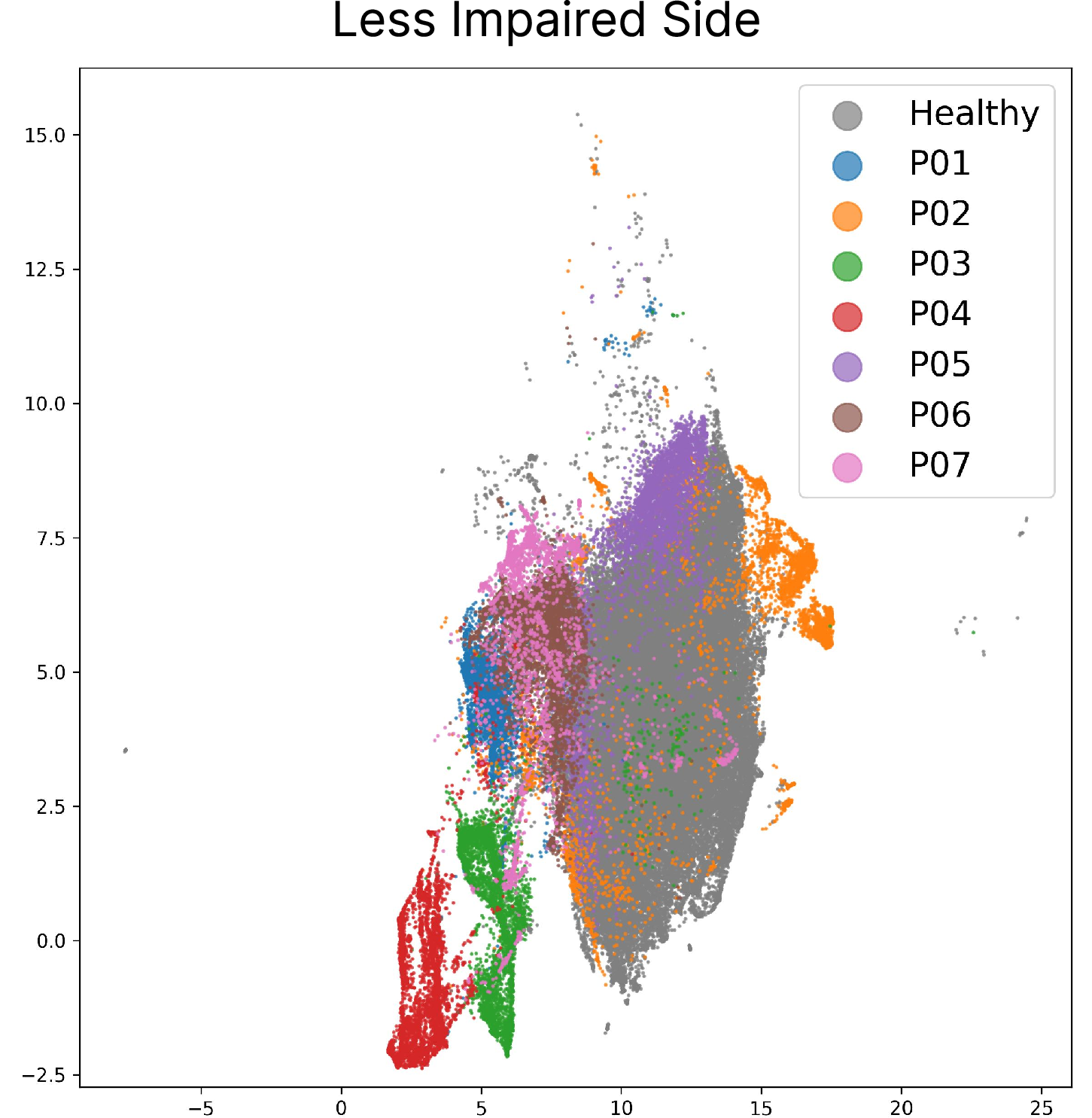

World-Aligned Joint Angle Embedding and Movement Clustering

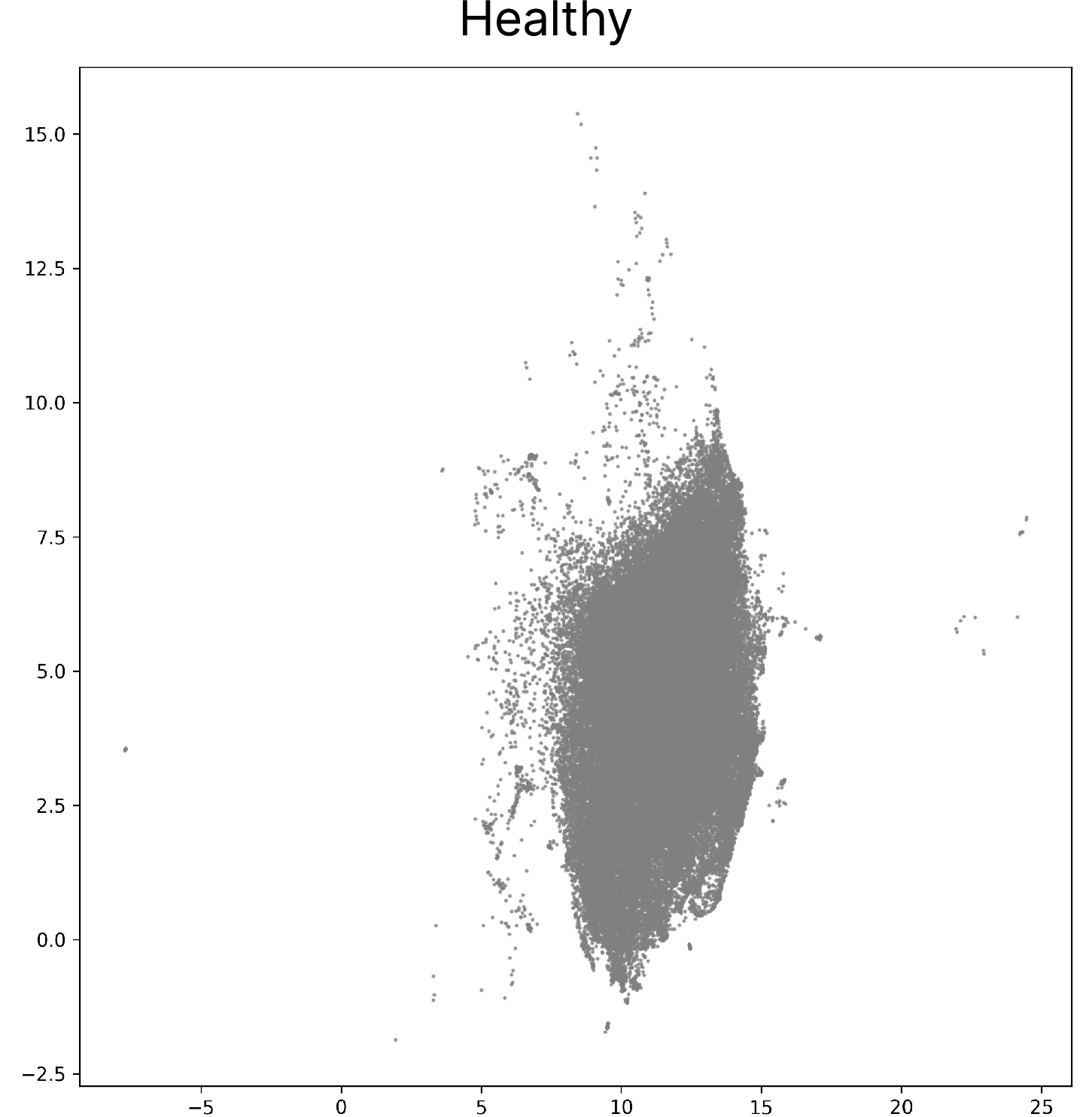

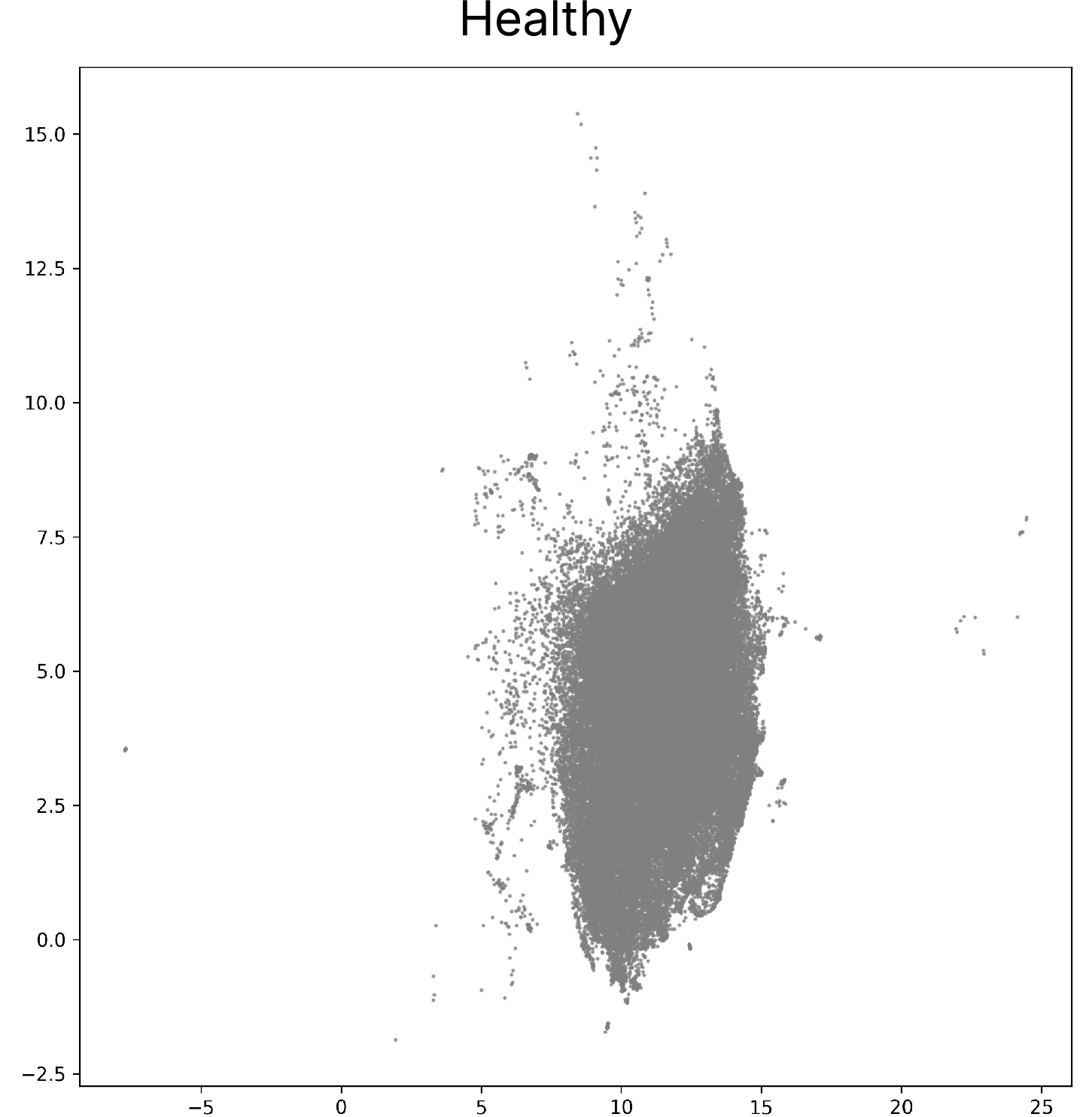

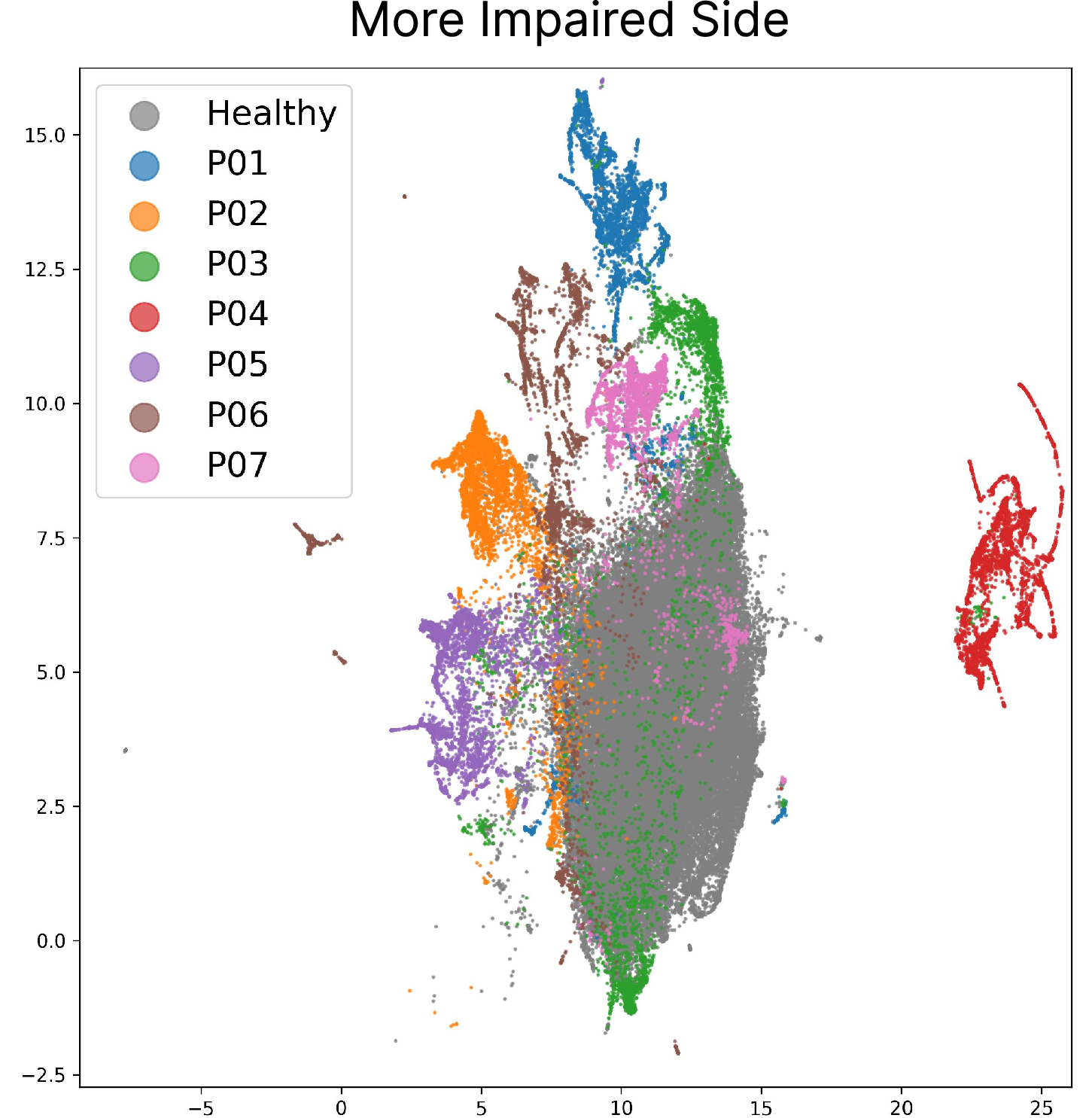

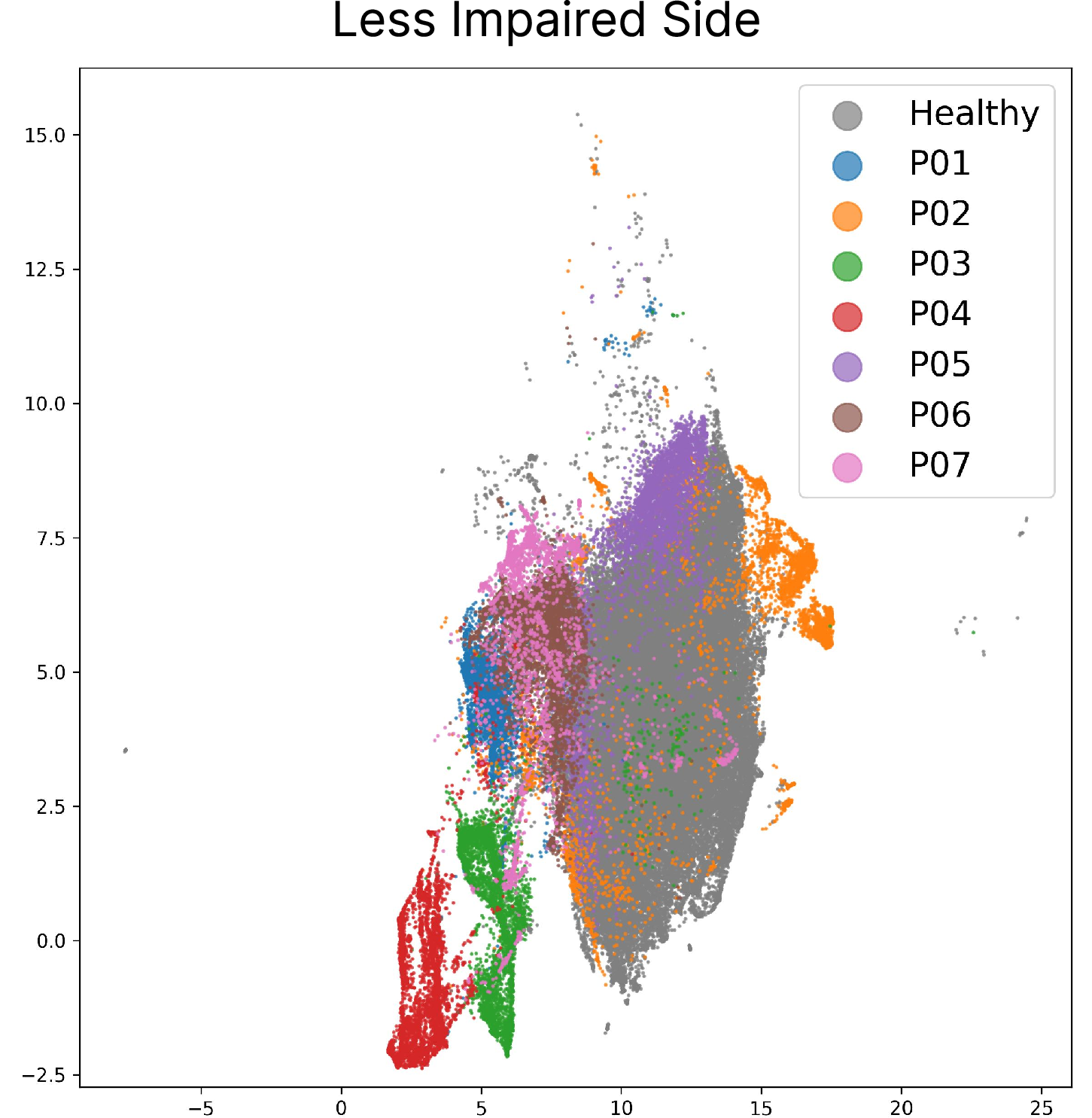

Leveraging the robust, world-aligned joint angle extraction, the authors aggregate 136 BBT recordings (healthy: 48, post-stroke: 7) and encode per-frame finger, arm, and trunk angles. Unsupervised dimensionality reduction via PCA (for finger joints, preserving >90% variance with 9 PCs) and subsequent UMAP projection enable visualization and analysis of the high-dimensional posture space.

Figure 4: UMAP embeddings of joint angles derived from healthy subject frames.
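
The ">90% variance with 9 PCs" criterion can be made concrete with a small sketch that picks the smallest number of principal components whose cumulative explained-variance ratio reaches a threshold. The function name `components_for_variance` and the example eigenvalues are hypothetical; the eigenvalues would come from a PCA of the finger joint angles, which is not reproduced here.

```python
def components_for_variance(eigenvalues, threshold=0.90):
    """Smallest k such that the top-k eigenvalues explain >= threshold of the variance."""
    values = sorted(eigenvalues, reverse=True)
    total = sum(values)
    if total <= 0.0:
        raise ValueError("eigenvalues must sum to a positive value")
    cumulative = 0.0
    for k, value in enumerate(values, start=1):
        cumulative += value
        if cumulative / total >= threshold:
            return k
    return len(values)

# Example with made-up eigenvalues: cumulative ratios are 0.60, 0.80, 0.95, 1.00.
k = components_for_variance([6.0, 2.0, 1.5, 0.5], threshold=0.90)
```

The retained components would then serve as the reduced feature space that is passed on to UMAP for visualization.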

The resultant embeddings separate healthy participants from those post-stroke, and also differentiate postural compensation patterns between the more-impaired and less-impaired sides of the same individual. Notably, individuals with identical BBT block counts, and thus indistinguishable test scores, form distinct clusters in joint angle space, indicating divergent compensatory strategies and residual impairment.

These findings are underscored by quantitative scoring: the mean Euclidean distance in the joint-angle PCA feature space (relative to healthy distribution) is consistently higher for post-stroke participants, with additional stratification by impairment side. This suggests that the proposed pipeline detects subtle, clinically relevant variation in movement quality beyond what is captured in coarse summary scoring metrics.
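
One plausible reading of this score, shown here only as a sketch, is the average Euclidean distance between a recording's per-frame feature vectors and the centroid of the healthy frames in the same PCA space. Using the centroid as the reference for the "healthy distribution" is an assumption, and `centroid` and `mean_distance_to_reference` are hypothetical names.

```python
import math

def centroid(points):
    """Component-wise mean of a non-empty list of equal-length feature vectors."""
    if not points:
        raise ValueError("need at least one point")
    dim = len(points[0])
    return tuple(sum(p[d] for p in points) / len(points) for d in range(dim))

def mean_distance_to_reference(frames, reference):
    """Mean Euclidean distance from each frame's features to a reference point."""
    if not frames:
        raise ValueError("need at least one frame")
    return sum(math.dist(f, reference) for f in frames) / len(frames)

# Toy 2D example: healthy frames define the reference, subject frames are scored.
healthy_frames = [(0.0, 0.0), (2.0, 0.0)]
subject_frames = [(1.0, 3.0), (1.0, 5.0)]
score = mean_distance_to_reference(subject_frames, centroid(healthy_frames))
```

Under this reading, a larger score means the recording's postures lie farther from typical healthy postures; a distance that accounts for the spread of the healthy data would be a natural alternative.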

Practical Implications and Theoretical Insights

By integrating camera-agnostic world-alignment and advanced monocular pose estimation, this method provides a non-intrusive, scalable augmentation to established clinical assessments. The reliance on only consumer-grade video acquisition supports deployment in routine clinical settings, home assessments, or resource-limited environments. Importantly, the extracted biomechanical parameters supply orthogonal information to speed-based metrics, supporting fine-grained monitoring of recovery trajectories, compensatory behavior emergence, and rehabilitation efficacy.

Theoretically, the separation of movement phenotypes in the unsupervised joint-angle embedding space motivates future work on outcome prediction, patient sub-group discovery, and individualized intervention optimization using richer, pose-derived representations. The observed limitations, primarily the small post-stroke sample and the absence of temporal feature analysis, suggest expansion to full-sequence modeling (e.g., with temporal graph networks) and multi-task learning with additional clinical labels.

Limitations

The core limitations include the modest sample size for the pathological cohort, a lack of temporal modeling (all analyses conducted frame-wise), and the open challenge of robust monocular articulated hand pose estimation in occluded, out-of-distribution poses. Furthermore, inherent dataset bias (e.g., healthy training populations for foundation models) constrains generalizability. Efforts to address these by expanding the dataset, integrating temporal dynamics, and refining hand estimation algorithms are warranted.

Conclusion

This work demonstrates that world-aligned, monocular 3D joint angle estimation via computer vision not only provides a camera- and orientation-invariant quantification of UE posture during the Box and Block Test but also exposes movement quality distinctions and compensatory motor strategies overlooked by standard BBT scores. The approach is extensible to other clinical tasks and populations and positions CV-based anatomical analysis as a viable augmentation for objective, fine-grained stroke rehabilitation evaluation and monitoring.