- The paper introduces a large-scale, multimodal dataset that integrates 3D near-field CSI, RGB, LiDAR, and GPS for realistic low-altitude sensing.

- It presents a customizable 3D dataset generation framework simulating UAV trajectories and detailed urban environments with precise ITU-R material parameters.

- Empirical validation demonstrates enhanced user localization and robust beam prediction, underscoring the value of multimodal data fusion in near-field conditions.

Authoritative Summary of Multimodal-NF: A Wireless Dataset for Near-Field Low-Altitude Sensing and Communications

Motivation and Contributions

The transition towards environment-aware 6G wireless networks is held back by a lack of datasets that capture the 3D spatial and near-field characteristics of low-altitude XL-MIMO systems, particularly in the upper midband (7–24 GHz). Conventional datasets primarily target 2D terrestrial and far-field scenarios. Multimodal-NF addresses these limitations with a large-scale, open-source generator and dataset that support 3D spatial modeling, near-field propagation, and multimodal data integration.

Multimodal-NF delivers:

- A customizable 3D near-field dataset generation framework that lets researchers configure UAV trajectories, array geometries, and environments, with detailed ITU-R material parameterization for realistic electromagnetic propagation.

- Synchronization across wireless and sensory modalities, including high-fidelity near-field CSI, precise wireless labels (Top-5 beam indices, LoS/NLoS indicators), RGB imagery, LiDAR point clouds, and GPS coordinates.

- Empirical validation via representative case studies on user localization and multimodal beam prediction, demonstrating the utility of this dataset in enabling high-resolution sensing and environment-aware communications.

System and Dataset Design

The system model in Multimodal-NF features a low-altitude XL-MIMO base station (BS) equipped with a 64×64 uniform planar array (UPA) in the yz-plane. A single-antenna UAV moves along predetermined trajectories, and the RGB cameras and LiDAR share the BS coordinate system. The channel model computes the response per antenna with spherical wavefronts, which captures 3D near-field propagation effects that a planar-wave approximation would miss. For each sample, the wireless data include CSI over all antennas and subcarriers, LoS/NLoS labels, and beam indices, while the sensory modalities provide environmental and spatial priors.
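As a rough illustration of the per-antenna spherical-wavefront computation described above, the sketch below builds a LoS-only channel for a 64×64 UPA in the yz-plane. The 10 GHz carrier, half-wavelength spacing, free-space amplitude term, UE position, and all function names are assumptions made for this example; the dataset's own channels come from its generator and include multipath that is not modeled here.

```python
import numpy as np

C = 299_792_458.0  # speed of light in m/s


def upa_positions_yz(n_y, n_z, spacing):
    """Element coordinates (x=0) of a UPA centred at the origin in the yz-plane."""
    y = (np.arange(n_y) - (n_y - 1) / 2) * spacing
    z = (np.arange(n_z) - (n_z - 1) / 2) * spacing
    yy, zz = np.meshgrid(y, z, indexing="ij")
    return np.stack([np.zeros_like(yy), yy, zz], axis=-1).reshape(-1, 3)


def near_field_los_channel(elements, ue_pos, freq_hz):
    """LoS response per antenna using the exact element-to-UE distance."""
    wavelength = C / freq_hz
    dist = np.linalg.norm(elements - ue_pos, axis=1)
    amplitude = wavelength / (4 * np.pi * dist)  # assumed free-space path gain
    return amplitude * np.exp(-2j * np.pi * dist / wavelength)


freq = 10e9                            # assumed carrier inside the 7-24 GHz band
lam = C / freq
elements = upa_positions_yz(64, 64, lam / 2)
ue_pos = np.array([30.0, 5.0, 20.0])   # hypothetical UAV position in metres
h = near_field_los_channel(elements, ue_pos, freq)
```

Because each element uses its own distance to the UAV, the phase across the array is not linear in the element index, which is the property that distinguishes this model from a far-field steering vector.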

The generation process comprises (i) scene construction with customizable urban layouts and realistic material assignment, (ii) UAV trajectory simulation with 10 modes spanning diverse altitude layers and kinematic complexity, and (iii) multimodal and wireless data synthesis with precise spatial, visual, and geometric context alignment.
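To make step (ii) concrete, here is a minimal sketch of one possible trajectory mode: a helical climb sampled at the 0.1 s interval and 5–80 m altitude range reported in the dataset statistics. The helix itself, the function name, and every parameter value are hypothetical; the summary does not list the 10 modes the generator actually implements.

```python
import numpy as np


def helical_trajectory(center_xy, radius, z_start, z_end, duration_s,
                       dt=0.1, turns=2.0):
    """Return timestamps and (N, 3) positions for a helical climb."""
    t = np.arange(0.0, duration_s + dt / 2, dt)   # include the end point
    phase = 2 * np.pi * turns * t / duration_s
    x = center_xy[0] + radius * np.cos(phase)
    y = center_xy[1] + radius * np.sin(phase)
    z = z_start + (z_end - z_start) * t / duration_s
    return t, np.stack([x, y, z], axis=1)


# Hypothetical example: a 20 s climb from 5 m to 80 m around a point 50 m away.
t, pos = helical_trajectory((50.0, 0.0), radius=10.0,
                            z_start=5.0, z_end=80.0, duration_s=20.0)
```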

Theoretical Support for Multimodal Integration

Through conditional entropy analysis, the paper formally shows that multimodal side information reduces uncertainty about the geometric state, which in turn shrinks the search space for both sensing and communication tasks. Informative multimodal observations (e.g., LiDAR, RGB, GPS) enable more precise inference of the spatial state and communication targets, motivating the fusion-centric data structure in Multimodal-NF.
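The entropy argument above rests on a standard information-theoretic identity, sketched here. The symbols are assumptions chosen for this illustration and are not necessarily the paper's notation: S is the geometric state (for example UAV position and blockage status), Y the wireless observation, and M the multimodal side information.

```latex
\begin{align}
  H(S \mid Y, M) &= H(S \mid Y) - I(S; M \mid Y), \\
  I(S; M \mid Y) &\ge 0
  \quad\Longrightarrow\quad
  H(S \mid Y, M) \le H(S \mid Y).
\end{align}
```

Equality holds only when M is conditionally independent of S given Y, so any side information that is informative about the geometry strictly reduces the remaining uncertainty. For a discrete candidate set such as a beam codebook, a lower conditional entropy corresponds to fewer plausible candidates to search.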

Dataset Statistics and Contents

Multimodal-NF comprises over 215,000 samples across train, validation, and test splits, covering 30 cities and 10,770 UAV trajectories. Each trajectory is sampled at 0.1 s intervals and spans altitudes from 5 m to 80 m. The dataset includes:

- CSI tensor for all antennas/subcarriers

- Wireless labels (LoS/NLoS, Top-5 beam indices, normalized gains)

- GPS coordinates with controllable Gaussian noise

- RGB images (FoV 90°, 512×512 resolution)

- LiDAR point clouds (10,000 points)

- Flight trajectory IDs delineating the UAV's kinematic mode
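The contents listed above could be held in a per-sample record along the following lines. This is only a sketch: the field names, array layouts, the subcarrier count of 4, and the treatment of GPS as local Cartesian metres are all assumptions, and the released files may be organized differently.

```python
from dataclasses import dataclass

import numpy as np


@dataclass
class Sample:
    """Hypothetical container for one synchronized sample."""
    csi: np.ndarray          # (n_antennas, n_subcarriers), complex
    is_los: bool             # LoS/NLoS indicator
    top5_beams: np.ndarray   # (5,) beam indices, best first
    top5_gains: np.ndarray   # (5,) normalized gains
    gps: np.ndarray          # (3,) position, assumed here in local metres
    rgb: np.ndarray          # (512, 512, 3) image
    lidar: np.ndarray        # (10000, 3) point cloud
    trajectory_id: int       # kinematic mode of the flight


def noisy_gps(true_pos, sigma_m, rng):
    """Add zero-mean Gaussian noise with a controllable standard deviation."""
    return true_pos + rng.normal(0.0, sigma_m, size=true_pos.shape)


rng = np.random.default_rng(0)
true_pos = np.array([30.0, 5.0, 20.0])
sample = Sample(
    csi=np.zeros((64 * 64, 4), dtype=np.complex64),  # 4 subcarriers is a placeholder
    is_los=True,
    top5_beams=np.arange(5),
    top5_gains=np.array([1.0, 0.8, 0.6, 0.4, 0.2]),
    gps=noisy_gps(true_pos, sigma_m=1.0, rng=rng),
    rgb=np.zeros((512, 512, 3), dtype=np.uint8),
    lidar=np.zeros((10_000, 3), dtype=np.float32),
    trajectory_id=0,
)
```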

Empirical Validation and Numerical Results

User Localization

High-resolution 3D user localization using orthogonal matching pursuit (OMP) with a polar-domain codebook yields estimated UAV positions that align closely with the ground truth, indicating that the dataset preserves the 3D geometric features essential for near-field sensing.

(Figure 1)

Figure 1: Visualization of estimated and ground-truth UE positions (Cartesian and angular domains) demonstrating accurate spatial inference capabilities of Multimodal-NF.

The angular-domain channel response shows substantial angular spread, confirming the dataset's coverage of spherical wavefront effects and multi-dimensional spatial variation.
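The OMP search over a polar-domain codebook used for localization in this section can be sketched as follows. To keep the example short it assumes a 256-element uniform linear array rather than the 64×64 UPA, a single LoS path, a 10 GHz carrier, and a coarse hand-picked angle/range grid; none of these settings are taken from the paper.

```python
import numpy as np

C = 299_792_458.0  # speed of light in m/s


def near_field_atom(n_ant, spacing, wavelength, theta, r):
    """Unit-norm spherical-wave steering vector for a ULA on the y-axis."""
    y = (np.arange(n_ant) - (n_ant - 1) / 2) * spacing
    # Exact distance from each element to the point (r cos(theta), r sin(theta)).
    dist = np.sqrt(r**2 + y**2 - 2 * r * y * np.sin(theta))
    return np.exp(-2j * np.pi * (dist - r) / wavelength) / np.sqrt(n_ant)


def polar_codebook(n_ant, spacing, wavelength, thetas, ranges):
    """Stack one atom per (angle, range) grid point as dictionary columns."""
    grid = [(t, r) for t in thetas for r in ranges]
    atoms = [near_field_atom(n_ant, spacing, wavelength, t, r) for t, r in grid]
    return np.stack(atoms, axis=1), grid


def omp(y, dictionary, n_paths):
    """Greedy OMP: pick the best-correlated atom, refit, update the residual."""
    residual, support, coeffs = y.copy(), [], np.zeros(0)
    for _ in range(n_paths):
        support.append(int(np.argmax(np.abs(dictionary.conj().T @ residual))))
        sub = dictionary[:, support]
        coeffs, *_ = np.linalg.lstsq(sub, y, rcond=None)
        residual = y - sub @ coeffs
    return support, coeffs


n_ant, lam = 256, C / 10e9
thetas = np.deg2rad(np.linspace(-60.0, 60.0, 61))   # 2 degree angular grid
ranges = np.array([5.0, 10.0, 20.0, 40.0, 80.0])    # range grid in metres
D, grid = polar_codebook(n_ant, lam / 2, lam, thetas, ranges)

true_theta, true_r = np.deg2rad(20.0), 20.0          # hypothetical on-grid UE
rng = np.random.default_rng(0)
noise = 0.005 * (rng.standard_normal(n_ant) + 1j * rng.standard_normal(n_ant))
y_obs = near_field_atom(n_ant, lam / 2, lam, true_theta, true_r) + noise

support, _ = omp(y_obs, D, n_paths=1)
est_theta, est_r = grid[support[0]]
est_xy = est_r * np.array([np.cos(est_theta), np.sin(est_theta)])
```

Each atom is indexed by both angle and range, so the selected atom maps directly to a position estimate. A far-field codebook indexes atoms by angle only and therefore cannot resolve range.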

Multimodal Beam Prediction

Spatial-temporal analysis of beam indices reveals that LoS trajectories yield periodic beam index jumps due to discretization boundaries, while NLoS scenarios exhibit irregular discontinuities driven by scatterers. These patterns reflect the complexity and realism of Multimodal-NF and make it suitable for studying blockage-aware beam alignment and robust communications.

(Figure 2)

Figure 2: Beam index dynamics in LoS and NLoS scenarios showing spatial-temporal discontinuities and the necessity of environmental awareness for beam prediction.
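The LoS jumps at discretization boundaries noted above can be reproduced with a toy example. The sketch below selects the Top-5 beams from a DFT codebook while a user sweeps in angle. It assumes a 64-element far-field ULA and a plain DFT codebook purely for brevity; the dataset's own beam labels are derived from its near-field UPA channels, and the codebook it uses is not specified in this summary.

```python
import numpy as np


def dft_codebook(n_ant):
    """Columns are unit-norm DFT beams for a half-wavelength ULA."""
    n = np.arange(n_ant)
    return np.exp(-2j * np.pi * np.outer(n, n) / n_ant) / np.sqrt(n_ant)


def top_k_beams(h, codebook, k=5):
    """Return the k strongest beam indices and gains normalized to the best."""
    gains = np.abs(codebook.conj().T @ h) ** 2
    order = np.argsort(gains)[::-1][:k]
    return order, gains[order] / gains[order[0]]


n_ant = 64
codebook = dft_codebook(n_ant)
sin_theta = np.linspace(-0.5, 0.5, 201)   # hypothetical LoS sweep in angle

best = []
for s in sin_theta:
    h = np.exp(-1j * np.pi * np.arange(n_ant) * s)   # far-field LoS response
    idx, norm_gains = top_k_beams(h, codebook)
    best.append(idx[0])
best = np.array(best)

# The best index stays constant inside a beam and jumps at beam boundaries.
jumps = np.count_nonzero(np.diff(best))
```

In this idealized LoS case the jumps occur at regular intervals of the sweep. Scatterers and blockage break that regularity, which is why side information about the environment helps predict the next beam.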

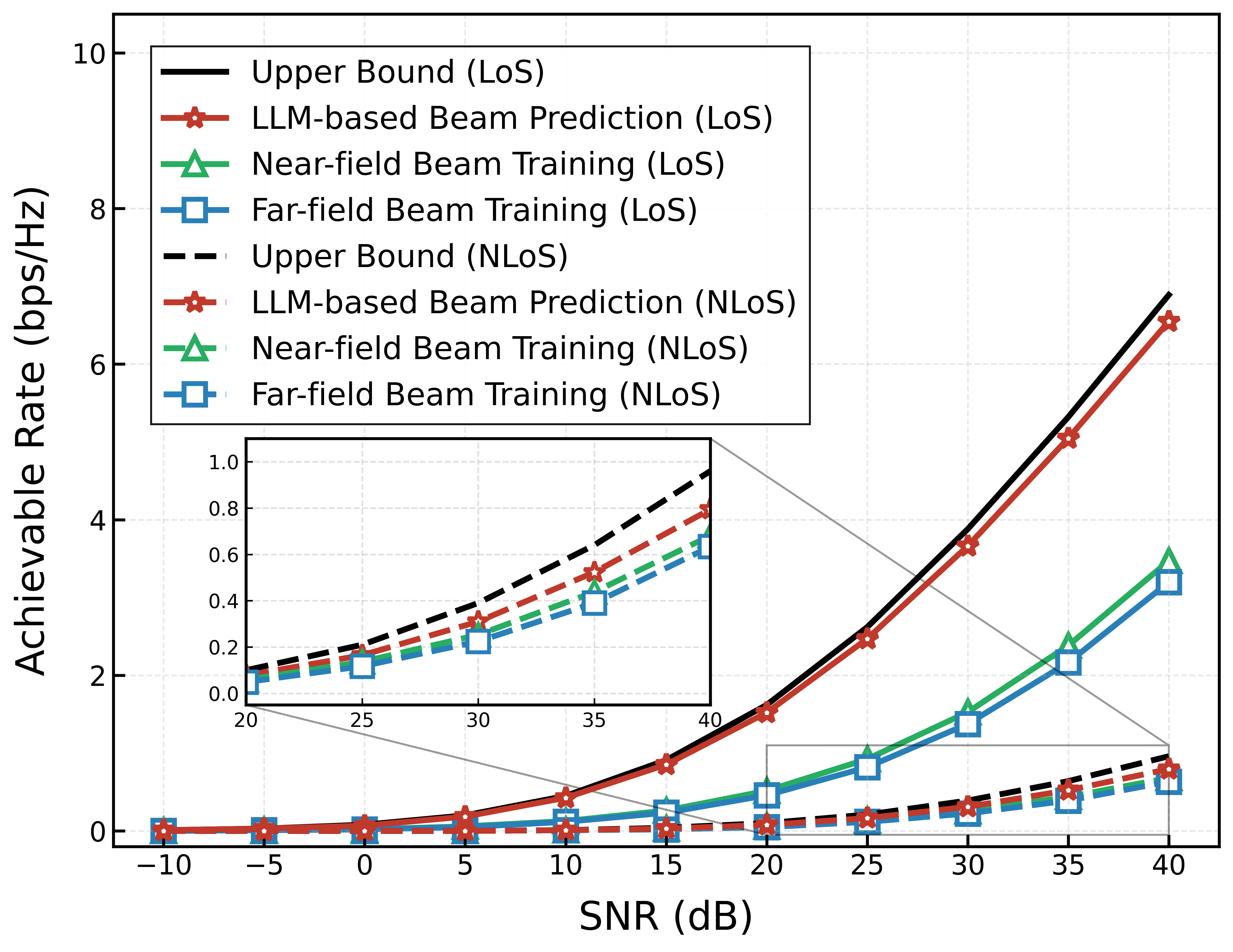

An LLM-based framework built on GPT-2 predicts beam indices for the next Tp = 10 slots from historical multimodal data, and the paper evaluates the predictions through an achievable-rate comparison.

Modality Impact

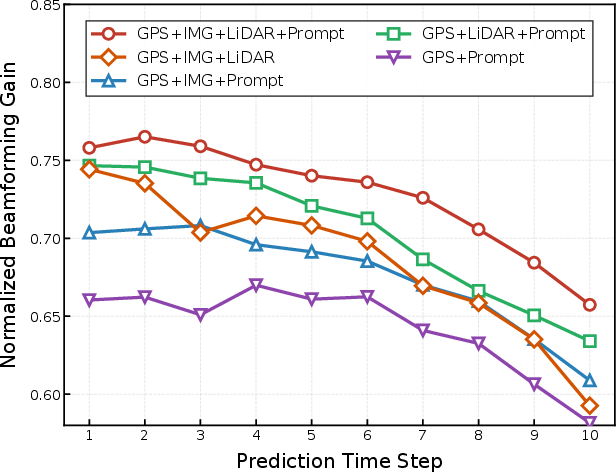

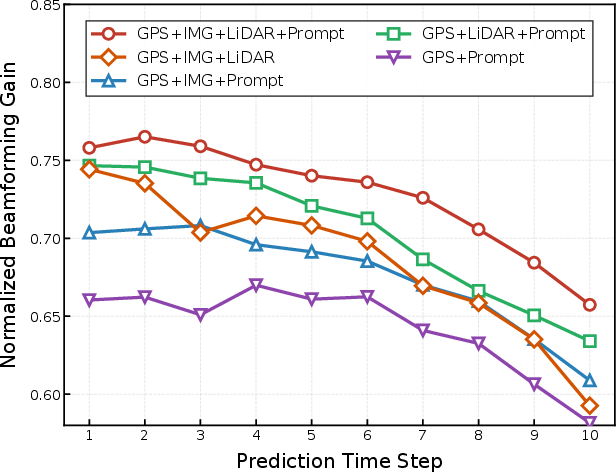

Ablation studies confirm that GPS alone provides baseline spatial awareness. The inclusion of RGB and LiDAR substantially increases normalized beamforming gain, particularly in NLoS cases, highlighting the critical role of visual and geometric modalities in spatial reasoning and blockage mitigation.

Figure 4: Ablation study of input modalities showing beamforming gain improvements with RGB and LiDAR integration in NLoS scenarios.
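For reference, the two metrics named in this section are commonly defined as below. These are standard textbook forms and an assumption here, since the summary does not reproduce the paper's exact definitions. The symbols are introduced for illustration: h is the channel vector, w_b the b-th codebook beam, \hat{b} the predicted beam index, and \rho the transmit signal-to-noise ratio.

```latex
\begin{align}
  G_{\mathrm{norm}} &=
    \frac{\lvert \mathbf{h}^{\mathsf{H}} \mathbf{w}_{\hat{b}} \rvert^{2}}
         {\max_{b} \lvert \mathbf{h}^{\mathsf{H}} \mathbf{w}_{b} \rvert^{2}}, \\
  R &= \log_{2}\!\left( 1 + \rho \, \lvert \mathbf{h}^{\mathsf{H}} \mathbf{w}_{\hat{b}} \rvert^{2} \right).
\end{align}
```

Under these definitions the normalized gain equals 1 when the predicted beam is the best beam in the codebook, so a higher value in the ablation means the added modalities move predictions closer to the optimal beam.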

Implications and Outlook

Multimodal-NF offers a pivotal resource for research on 6G XL-MIMO systems operating in challenging low-altitude, near-field environments. Practically, it enables the objective evaluation of environment-aware algorithms, fusion strategies, and robust beam alignment under realistic propagation and blockage conditions. Theoretically, it facilitates the study of joint sensing-communication inference, spatial uncertainty reduction, and multimodal learning in dynamic 3D contexts.

The open-source generator, with customizable scene, trajectory, array, and frequency parameters, supports rapid extension to terrestrial vehicular networks, adjustable UPA configurations, and alternative spectral bands, accelerating the development of AI-driven wireless systems. Future research may target advanced fusion architectures, uncertainty quantification, and domain-adaptive learning, leveraging the comprehensive multimodal structure of Multimodal-NF for improved generalization in real-world deployments.

Conclusion

Multimodal-NF establishes a benchmark for multimodal sensing and communications in low-altitude near-field XL-MIMO systems, addressing the deficiencies of prior datasets and enabling data-driven research on environment-aware wireless AI. The case studies support its usefulness for high-resolution localization and robust beam prediction, and the ablation results indicate that fusing RGB and LiDAR with GPS improves beamforming gain, particularly in NLoS conditions. The resource is positioned to support future advances in 6G wireless systems and multimodal AI on both theoretical and practical fronts.