- The paper introduces a robust SLAM framework that leverages physics-constrained deblurring and blur-aware tracking to achieve high-fidelity 3D reconstructions.

- Key innovations include adaptive blur classification, multi-scale per-pixel kernel modeling, and 3D Gaussian Splatting with hybrid bundle adjustment for consistent mapping.

- Quantitative results on benchmarks such as ArchViz and TUM-RGBD show improvements in PSNR and trajectory accuracy over state-of-the-art methods, and the system reaches 27.45 dB PSNR on the Deblur-NeRF defocus benchmark.

Introduction and Motivation

Unblur-SLAM addresses a long-standing challenge in dense monocular RGB SLAM: robustly reconstructing geometrically accurate and photorealistic 3D scenes from input sequences with severe image blur. Classical SLAM methods degrade catastrophically on defocused or motion-blurred inputs because they depend on reliable image features. Recent dense neural 3D scene representations, particularly frameworks built on 3D Gaussian Splatting (3DGS), offer more flexibility. However, prior 3DGS SLAM pipelines either ignore blur or treat all frames as equally degraded, which yields a poor trade-off between reconstruction quality and computational cost. Unblur-SLAM combines automatic blur quantification, physics-constrained and 3D-consistent deblurring, and adaptive computation to handle both motion and defocus blur, targeting high-fidelity online scene reconstruction under real-world conditions.

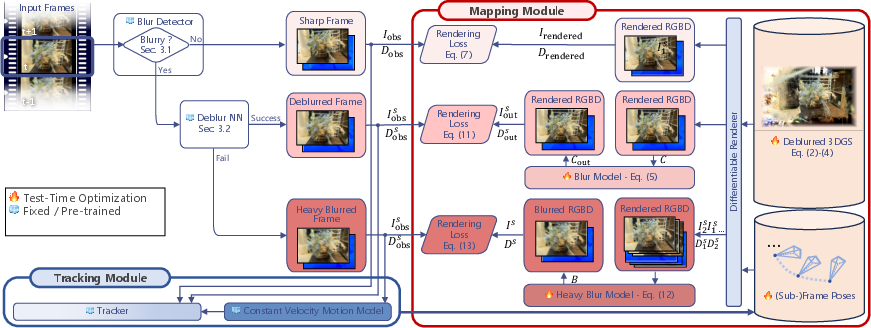

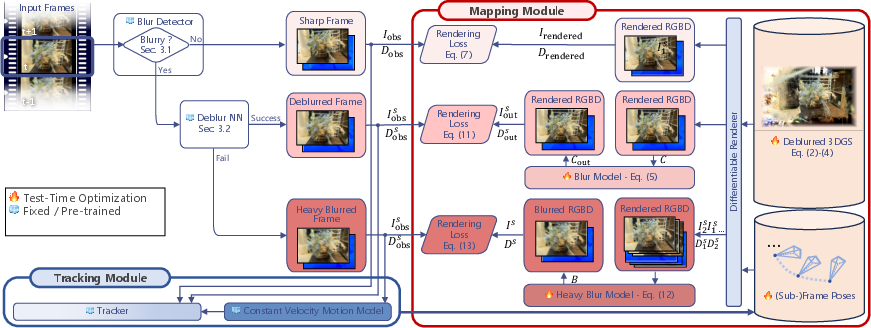

Figure 1: Unblur-SLAM overview; input frames are classified by blur severity, routed to appropriate deblurring and mapping modules, optimizing runtime and reconstruction fidelity.
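The routing idea in Figure 1 can be illustrated with a small sketch. This summary does not describe how the paper's blur classifier works, so the variance-of-Laplacian sharpness score, the two thresholds, and the route names below are all assumptions chosen for illustration, not the authors' method.

```python
import numpy as np

def laplacian_variance(gray: np.ndarray) -> float:
    """Sharpness proxy: variance of a 4-neighbour Laplacian (higher means sharper)."""
    g = gray.astype(np.float64)
    # Discrete Laplacian evaluated on interior pixels only.
    lap = (g[:-2, 1:-1] + g[2:, 1:-1] + g[1:-1, :-2] + g[1:-1, 2:]
           - 4.0 * g[1:-1, 1:-1])
    return float(lap.var())

def route_frame(gray: np.ndarray,
                sharp_thresh: float = 100.0,    # hypothetical threshold
                severe_thresh: float = 10.0) -> str:  # hypothetical threshold
    """Pick a processing path from the sharpness score (route names are illustrative)."""
    score = laplacian_variance(gray)
    if score >= sharp_thresh:
        return "bypass"      # sharp frame: skip deblurring
    if score >= severe_thresh:
        return "deblur"      # moderate blur: run the deblurring module
    return "fallback"        # severe blur: use the heavier fallback path
```

In practice such thresholds would have to be tuned per camera and dataset; the point of the sketch is only that cheap per-frame scoring lets sharp frames skip the expensive path.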

Methodology Overview

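The system architecture combines several complementary components: adaptive blur classification of incoming frames, physics-constrained deblurring, multi-scale per-pixel blur kernel modeling, and 3D Gaussian Splatting mapping with hybrid bundle adjustment.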

Quantitative and Qualitative Results

The effectiveness of Unblur-SLAM is substantiated with consistent improvements across both synthetic (ReplicaBlurry, ArchViz) and real-world (TUM-RGBD, IndoorMCD) datasets. For instance:

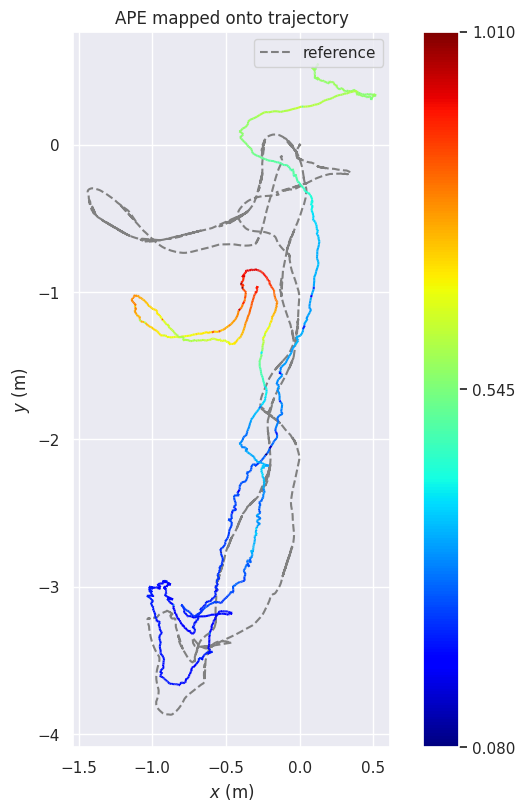

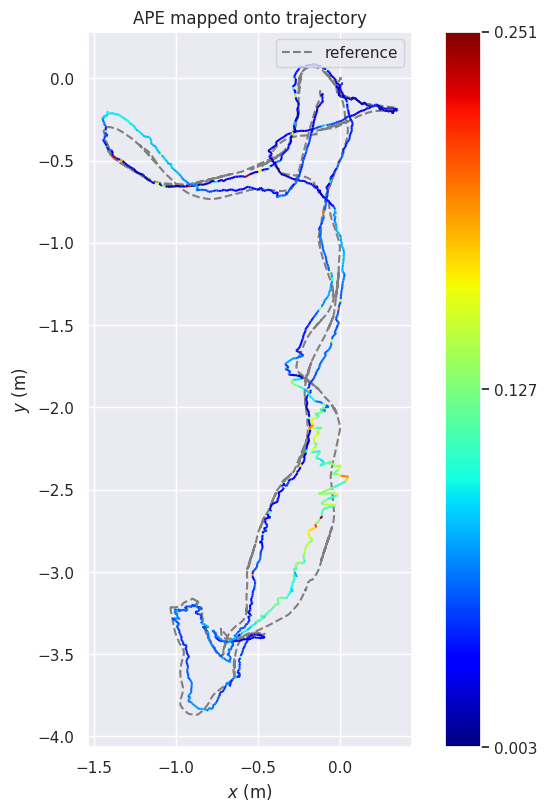

- On extreme-blur, synthetic ArchViz sequences, Unblur-SLAM demonstrates clear improvements in both accuracy and photorealism, as measured by lower Absolute Trajectory Error (ATE) and higher PSNR, over specialized motion-blur-aware baselines (MBA-SLAM).

- On the established Deblur-NeRF defocus blur benchmark, Unblur-SLAM achieves a substantial PSNR improvement over the previous state of the art (27.45 dB vs. 24.21 dB for Deblurring 3DGS), indicating that this online system can outperform even offline deblurring solutions.

- Across the TUM-RGBD and IndoorMCD datasets, both ATE and PSNR improve even in sequences with heterogeneous proportions of sharp and blurred frames, aided by robust blur detection and adaptive processing.
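To put the PSNR figures above in context, PSNR is a logarithmic function of mean squared error, so a gap in dB corresponds to a ratio of MSEs. The sketch below uses the standard PSNR definition and assumes images normalized to a peak value of 1.0; the function names are illustrative.

```python
import math

def psnr(mse: float, peak: float = 1.0) -> float:
    """Peak signal-to-noise ratio in dB for a given mean squared error."""
    return 10.0 * math.log10(peak ** 2 / mse)

def mse_ratio_from_db_gain(gain_db: float) -> float:
    """Factor by which MSE shrinks for a given PSNR gain in dB."""
    return 10.0 ** (gain_db / 10.0)

gain_db = 27.45 - 24.21                  # gap between the two reported PSNR values
ratio = mse_ratio_from_db_gain(gain_db)  # about 2.1, i.e. roughly half the MSE
```

Under this definition, the reported 3.24 dB gap corresponds to roughly a 2.1x reduction in mean squared error.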

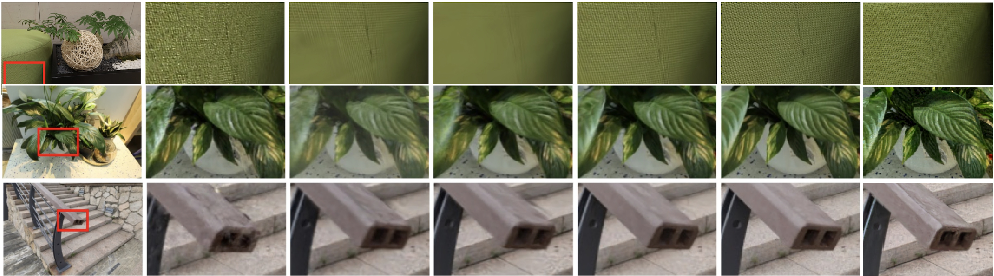

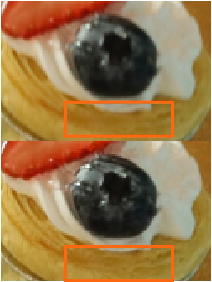

- Qualitative evaluations document sharper detail recovery and texture faithfulness compared to MBA-SLAM and I2-SLAM, especially in sequences with defocus or mixed blur (Figures 3, 4, 5).

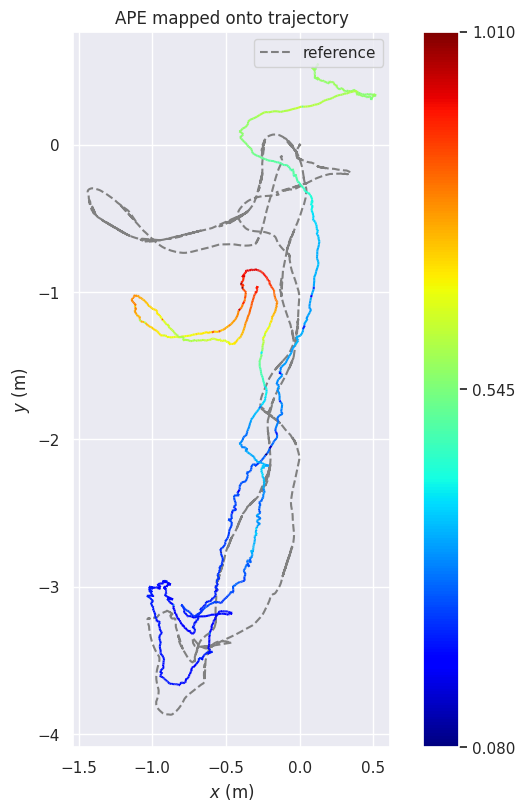

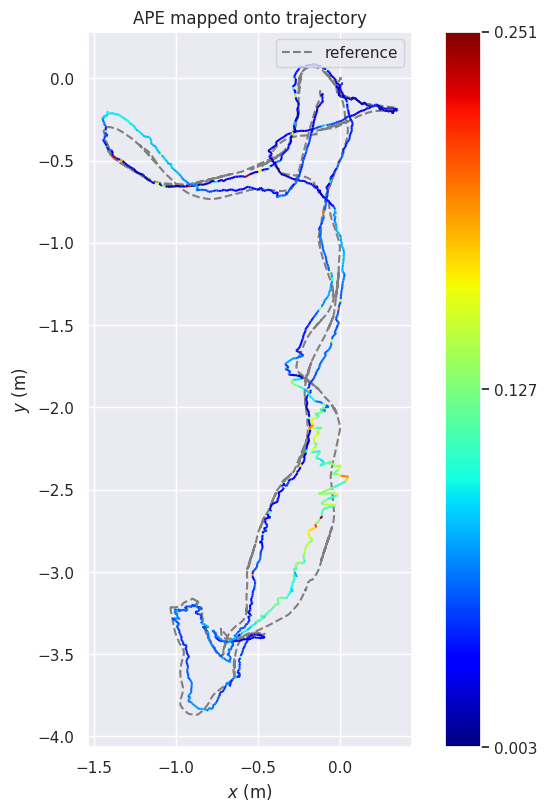

Figure 3: Comparison of camera trajectories on IndoorMCD; Unblur-SLAM achieves lower drift and alignment error than DROID-SLAM.
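Trajectory comparisons of this kind are commonly scored with ATE as the RMSE of camera positions after aligning the estimate to ground truth. The sketch below assumes a similarity (scale, rotation, translation) alignment in the style of Umeyama, which is typical for monocular systems with unknown scale; this summary does not state the exact alignment protocol used in the paper.

```python
import numpy as np

def umeyama_align(est: np.ndarray, gt: np.ndarray):
    """Least-squares similarity transform (s, R, t) mapping est onto gt; inputs are (N, 3)."""
    n = est.shape[0]
    mu_e, mu_g = est.mean(axis=0), gt.mean(axis=0)
    e, g = est - mu_e, gt - mu_g
    cov = g.T @ e / n                      # 3x3 cross-covariance (target x source)
    U, D, Vt = np.linalg.svd(cov)
    S = np.eye(3)
    if np.linalg.det(U) * np.linalg.det(Vt) < 0:
        S[2, 2] = -1.0                     # force a proper rotation (no reflection)
    R = U @ S @ Vt
    var_e = (e ** 2).sum() / n             # variance of the source points
    s = np.trace(np.diag(D) @ S) / var_e
    t = mu_g - s * (R @ mu_e)
    return s, R, t

def ate_rmse(est: np.ndarray, gt: np.ndarray) -> float:
    """RMSE of position error after similarity alignment."""
    s, R, t = umeyama_align(est, gt)
    aligned = s * (est @ R.T) + t
    return float(np.sqrt(((aligned - gt) ** 2).sum(axis=1).mean()))
```

An estimate that differs from ground truth only by a global scale, rotation, and offset yields an ATE near zero; what remains after alignment is drift and local error.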

Figure 4: Qualitative comparison on TUM sequences; Unblur-SLAM preserves geometric structure and fine texture beyond MBA-SLAM and Deblur-SLAM outputs.

Analysis and Discussion

Unblur-SLAM's core claim is that handling real-world blur in SLAM requires two things: explicit, physically motivated regularization of the deblurring stage, and a modular architecture that lets unaffected frames bypass it quickly. The hybrid of learning-based and analytic blur modeling avoids the main pitfalls of 2D deblurring methods (loss of 3D consistency, overfitting to noise) while also avoiding the complexity and inefficiency of fully model-based multi-frame approaches. Ablations indicate that the fallback mechanism for severe blur is essential for robustness: omitting it costs 0.56 dB of PSNR under extreme conditions.

Notably, Unblur-SLAM demonstrates that a well-trained, physics-constrained deblurring module can substantially improve not only visual realism but also metric localization and mapping accuracy in practical online monocular SLAM. Depth-aware, per-pixel kernel modeling is critical for detail preservation. Nevertheless, the system introduces runtime and memory trade-offs: going beyond 3 virtual sub-frames for extremely blurred inputs further increases memory consumption, which points to a need for more scalable 3D blur models.
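The memory cost of virtual sub-frames can be seen in a toy version of the usual physical model of motion blur, in which a blurred frame is the average of sharp images rendered at several poses within the exposure. In the sketch below, `render` is a hypothetical stand-in for a renderer and camera motion is reduced to a horizontal pixel shift; whether the paper's pipeline averages sub-frames in exactly this way is an assumption.

```python
import numpy as np

def render(scene: np.ndarray, shift: int) -> np.ndarray:
    """Toy stand-in for a renderer: camera motion modeled as a horizontal pixel shift."""
    return np.roll(scene, shift, axis=1)

def synthesize_blur(scene: np.ndarray, start_shift: int, end_shift: int,
                    n_subframes: int = 3):
    """Average n_subframes sharp renders along the motion; also report bytes held."""
    shifts = np.linspace(start_shift, end_shift, n_subframes).round().astype(int)
    # All sub-frames are kept at once, so memory grows linearly with n_subframes.
    subframes = np.stack([render(scene, int(s)) for s in shifts])  # (N, H, W)
    return subframes.mean(axis=0), subframes.nbytes

scene = np.zeros((1, 8))
scene[0, 2] = 1.0                          # single bright pixel
blurred, mem3 = synthesize_blur(scene, 0, 2, n_subframes=3)
_, mem6 = synthesize_blur(scene, 0, 2, n_subframes=6)
```

Here the bright pixel is smeared evenly over three columns, and doubling the number of sub-frames doubles the memory held for the renders. In a differentiable pipeline the same scaling applies to stored activations and gradients.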

Figure 5: Comparison with ground truth sharp frames (top), illustrating that the method’s reconstructions nearly recover hidden clean details lost in input blur.

Implications and Future Directions

This framework points toward several theoretical and practical advances:

- Automatic, adaptive computation could enable SLAM systems to operate in real time under unconstrained imaging conditions (e.g., handheld devices or mobile robots in low light or fast motion).

- Blur physics constraints in training and multi-view consistency priors may become standard for neural scene representations targeting deployment outside ideal laboratory settings.

- There is strong evidence for favoring hybrid, modular design: combining physically interpretable models, learned priors, and dynamic control facilitates both accuracy and efficiency.

- To achieve wide applicability, future research must address the remaining bottlenecks, especially the inference cost of the deblurring network and the memory footprint of heavy multi-frame blur scenarios.

Conclusion

Unblur-SLAM delivers a robust, efficient, and high-fidelity dense neural SLAM solution capable of reconstructing sharp, consistent 3D models from image sequences with significant motion and defocus blur. Key advancements include adaptive blur-aware tracking, physics-based deblurring training, and 3D-consistent multi-scale blur kernel modeling. The system reports better quantitative and qualitative performance than the offline and online baselines it is compared against, supporting the claim that online SLAM pipelines can outperform even specialized single-task offline methods under challenging real-world blur conditions. The remaining runtime and memory constraints point to scalable neural SLAM and real-time, physically driven image restoration as the main directions for future work.

Figure 6: Comparison with manually labeled sharp frames from I2-SLAM; Unblur-SLAM approaches the fidelity of ground truth even in heavily blurred scenes.

Figure 7: Side-by-side input (blurred) and deblurred Unblur-SLAM output, indicating effective restoration of detail and color consistency.

Figure 8: Generalization across datasets; reconstructed RGB renderings (right) exhibit fine surface and texture details reconstructed from highly corrupted inputs (left).